Massive benefits of AI come with environmental and human costs. Can AI itself be part of the solution?

Generative AI has a large carbon footprint and other drawbacks. But AI can help mitigate its own harms—by plowing through mountains of data on extreme weather and human displacement.

The recent explosion of generative artificial intelligence tools like ChatGPT and Dall-E enabled anyone with internet access to harness AI’s power for enhanced productivity, creativity, and problem-solving. With their ever-improving capabilities and expanding user base, these tools proved useful across disciplines, from the creative to the scientific.

But beneath the technological wonders of human-like conversation and creative expression lies a dirty secret: an alarming environmental and human cost. AI has an immense carbon footprint. Systems like ChatGPT take months to train in high-powered data centers, which demand huge amounts of electricity, much of it still generated with fossil fuels, as well as water for cooling. “One of the reasons why OpenAI needs investments [to the tune of] $10 billion from Microsoft is because they need to pay for all of that computation,” says Kentaro Toyama, a computer scientist at the University of Michigan. There’s also an ecological toll from mining the rare minerals required for hardware and infrastructure. This environmental exploitation pollutes land, triggers natural disasters and causes large-scale human displacement. Finally, to label the data needed to train and correct AI algorithms, the Big Data industry relies on cheap and exploitative labor, often from the Global South.

Generative AI tools like ChatGPT are based on large language models (LLMs), the most well-known being the various versions of GPT. LLMs can perform natural language processing tasks, including translating, summarizing and answering questions. They are built on artificial neural networks, a machine learning technique; networks with many layers are the basis of what is called deep learning. Inspired by the human brain, neural networks are made of millions of artificial neurons. “The basic principles of neural networks were known even in the 1950s and 1960s,” Toyama says, “but it’s only now, with the tremendous amount of compute power that we have, as well as huge amounts of data, that it’s become possible to train generative AI models.”

In recent months, much attention has gone to the transformative benefits of these technologies. But it’s important to consider that these remarkable advances may come at a price.

AI’s carbon footprint

In its latest annual report, 2023 Landscape: Confronting Tech Power, the AI Now Institute, an independent policy research entity focusing on the concentration of power in the tech industry, says: “The constant push for scale in artificial intelligence has led Big Tech firms to develop hugely energy-intensive computational models that optimize for ‘accuracy’—through increasingly large datasets and computationally intensive model training—over more efficient and sustainable alternatives.”

Though there aren’t any official figures about the power consumption or emissions from data centers, experts estimate that they use one percent of global electricity, more than entire countries. In 2019, Emma Strubell, then a graduate researcher at the University of Massachusetts Amherst, estimated that training a single LLM resulted in over 280,000 kg of CO2 emissions, equivalent to driving almost 1.2 million km in a gas-powered car. A couple of years later, David Patterson, a computer scientist at the University of California, Berkeley, and his colleagues estimated GPT-3’s carbon footprint at over 550,000 kg of CO2. In 2022, the tech company Hugging Face estimated the carbon footprint of its own language model, BLOOM, at 25,000 kg of CO2 emissions. (BLOOM’s footprint is lower because it was trained largely on low-carbon energy, but the estimate doubled when other life-cycle processes, such as hardware manufacturing and use, were added.)

Luckily, despite the growing size and number of data centers, their energy demands and emissions have not grown proportionately, thanks to renewable energy sources and energy-efficient hardware.

But emissions don’t tell the full story.

AI’s hidden human cost

“If historical colonialism annexed territories, their resources, and the bodies that worked on them, data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” So write Nick Couldry and Ulises Mejias, authors of the book The Costs of Connection.

Technologies we use daily inexorably gather our data. “Human experience, potentially every layer and aspect of it, is becoming the target of profitable extraction,” Couldry and Mejias write. This feeds data capitalism, the economic model built on the extraction and commodification of data. While we are dispossessed of our data, Big Tech commodifies it for its own benefit. The result is a consolidation of power structures that reinforces existing race, gender, class and other inequalities.

“The political economy around tech and tech companies, and the development in advances in AI contribute to massive displacement and pollution, and significantly changes the built environment,” says technologist and activist Yeshi Milner, who founded Data for Black Lives (D4BL) to create measurable change in Black people’s lives using data. The energy requirements, hardware manufacture and the cheap human labor behind AI systems disproportionately affect marginalized communities.

AI’s recent explosive growth spiked the demand for manual, behind-the-scenes tasks, creating an industry that Mary Gray and Siddharth Suri describe as “ghost work” in their book of the same name. This invisible workforce behind the “magic” of AI is overworked, underpaid, and very often based in the Global South. For example, workers in Kenya who made less than $2 an hour were behind the process that trained ChatGPT to respond appropriately to violence, hate speech and sexual abuse. And, according to an article in Analytics India Magazine, in some cases these workers may not have been paid at all, which would amount to wage theft. An exposé by the Washington Post describes “digital sweatshops” in the Philippines, where thousands of workers experience low wages, delayed payments, and wage theft by Remotasks, a platform owned by Scale AI, a $7 billion American startup. Rights groups and labor researchers have flagged Scale AI as one company that flouts basic labor standards for workers abroad.

It is possible to draw a parallel with chattel slavery, the most significant economic event that continues to shape the modern world, to see the business structures that allow for the massive exploitation of people, Milner says. Back then, people got chocolate, sugar and cotton; today, they get generative AI tools. “What’s invisible through distance—because [tech companies] also control what we see—is the massive exploitation,” Milner says.

“At Data for Black Lives, we are less concerned with whether AI will become human…[W]e’re more concerned with the growing power of AI to decide who’s human and who’s not,” Milner says. As a decision-making force, AI becomes a “justifying factor for policies, practices, rules that not just reinforce, but are currently turning the clock back generations years on people’s civil and human rights.”

Nuria Oliver, a computer scientist, and co-founder and vice-president of the European Laboratory of Learning and Intelligent Systems (ELLIS), says that instead of focusing on the hypothetical existential risks of today’s AI, we should talk about its real, tangible risks.

“Because AI is a transverse discipline that you can apply to any field [from education, journalism, medicine, to transportation and energy], it has a transformative power…and an exponential impact,” she says.

AI's accountability

“At the core of what we were arguing about data capitalism [is] a call to action to abolish Big Data,” says Milner. “Not to abolish data itself, but the power structures that concentrate [its] power in the hands of very few actors.”

A comprehensive AI Act currently being negotiated in the European Parliament aims to rein in Big Tech. It would rate AI tools according to the harm they could cause to humans, while remaining as technology-neutral as possible. It sets standards for safe, transparent, traceable, non-discriminatory, and environmentally friendly AI systems that are overseen by people rather than by automation. The regulations would also require transparency about the content used to train generative AIs, particularly copyrighted data, as well as disclosure that content is AI-generated. “This European regulation is setting the example for other regions and countries in the world,” Oliver says. But, she adds, such transparency is hard to achieve.

Google, for example, recently updated its privacy policy to say that it may use publicly available information from the internet to train its AI models. “Obviously, technology companies have to respond to their economic interests, so their decisions are not necessarily going to be the best for society and for the environment,” Oliver says. “And that’s why we need strong research institutions and civil society institutions to push for actions.” ELLIS also advocates for data centers to be built in locations where the energy can be produced sustainably.

Ironically, AI plays an important role in mitigating its own harms—by plowing through mountains of data about weather changes, extreme weather events and human displacement. “The only way to make sense of this data is using machine learning methods,” Oliver says.

Milner believes that the best way to expose AI-caused systemic inequalities is through people’s stories. “In these last five years, so much of our work [at D4BL] has been creating new datasets, new data tools, bringing the data to life. To show the harms but also to continue to reclaim it as a tool for social change and for political change.” This change, she adds, will depend on whose hands the data is in.

Thanks to safety precautions from the COVID-19 pandemic, a strain of influenza has been completely eliminated.

If you were one of the millions who masked up, washed your hands thoroughly and socially distanced, pat yourself on the back—you may have helped change the course of human history.

Scientists say that thanks to these safety precautions, which were introduced in early 2020 to stop transmission of the novel coronavirus that causes COVID-19, a strain of influenza has been completely eliminated. This marks the first time in human history that a virus has been wiped out through non-pharmaceutical interventions, such as masking and social distancing.

The flu shot, explained

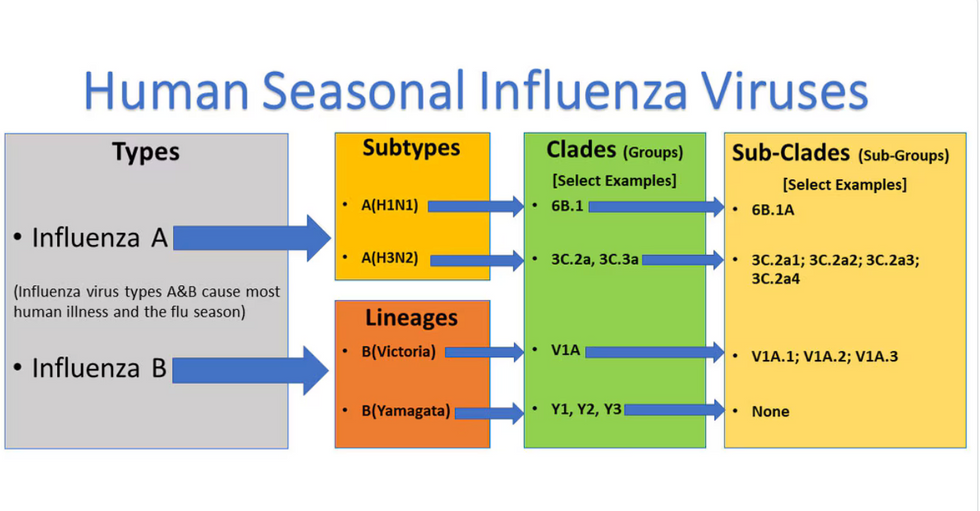

Influenza A and B viruses are responsible for most human flu illnesses and for the annual flu season.

Centers for Disease Control

For more than a decade, flu shots have protected against two types of the influenza virus: type A and type B. While there are four types of influenza in existence (A, B, C, and D), only types A, B, and C are known to infect humans, and only A and B cause the seasonal epidemics we call flu season (of these, only type A is known to cause pandemics). In other words, if you catch the flu during flu season, you’re most likely sick with flu type A or B.

Most flu shots contain inactivated, or killed, influenza virus. These inactivated viruses can’t cause illness in humans, but when administered as part of a vaccine, they teach a person’s immune system to recognize and fight those viruses when they’re encountered in the wild.

Each spring, a panel of experts gives a recommendation to the US Food and Drug Administration on which strains of each flu type to include in that year’s flu vaccine, depending on what surveillance data says is circulating and what they believe is likely to cause the most illness during the upcoming flu season. For the past decade, Americans have had access to vaccines that provide protection against two strains of influenza A and two lineages of influenza B, known as the Victoria lineage and the Yamagata lineage. But this year, the seasonal flu shot won’t include a Yamagata strain, because the Yamagata lineage is no longer circulating among humans.

How Yamagata disappeared

Flu surveillance data from the Global Initiative on Sharing All Influenza Data (GISAID) shows that the Yamagata lineage of flu type B has not been sequenced since April 2020.

Nature

Experts believe that the Yamagata lineage was already in decline before the pandemic, likely because it was naturally less capable of infecting large numbers of people than the other strains. When the COVID-19 pandemic hit, the resulting safety precautions, such as social distancing, isolating, hand-washing, and masking, were enough to drive the lineage to extinction.

Because the strain hasn’t been circulating since 2020, the FDA elected to remove the Yamagata strain from the seasonal flu vaccine. This will mark the first time since 2012 that the annual flu shot will be trivalent (three-component) rather than quadrivalent (four-component).

Should I still get the flu shot?

The flu shot will protect against fewer strains this year—but that doesn’t mean we should skip it. Influenza places a substantial health burden on the United States every year, responsible for hundreds of thousands of hospitalizations and tens of thousands of deaths. The flu shot has been shown to prevent millions of illnesses each year (more than six million during the 2022-2023 season). And while it’s still possible to catch the flu after getting the flu shot, studies show that people are far less likely to be hospitalized or die when they’re vaccinated.

Another unexpected benefit of dropping the Yamagata strain from the seasonal vaccine? It could speed up flu vaccine production and let manufacturers make more doses, helping countries that face flu vaccine shortages and potentially saving many more lives.

After his grandmother’s dementia diagnosis, one man invented a snack to keep her healthy and hydrated.

Founder Lewis Hornby and his grandmother Pat, sampling Jelly Drops—an edible gummy containing water and life-saving electrolytes.

On a visit to his grandmother’s nursing home in 2016, college student Lewis Hornby made a shocking discovery: Dehydration is a common (and dangerous) problem among seniors, especially those who have been diagnosed with dementia.

Hornby’s grandmother, Pat, had increasingly struggled to keep up her water intake as she got older, a common issue among seniors. As we age, our body composition changes, and we naturally hold less water than younger adults or children, so it’s easier to become dehydrated quickly if those fluids aren’t replenished. What’s more, our thirst signals naturally diminish with age, meaning our bodies are not as good as they once were at telling us that we need to rehydrate. This creates a perfect storm that commonly leads to dehydration. In Pat’s case, her dehydration was so severe she nearly died.

When Lewis Hornby visited his grandmother at her nursing home afterward, he learned that dehydration especially affects people with dementia, who often don’t feel thirst cues at all or may not recognize how to use cups correctly. But while dementia patients often forget to drink water, it seemed to Hornby that they had less trouble remembering to eat, particularly candy.

Hornby wanted to create a solution for elderly people who struggled to keep their fluid intake up. He spent the next eighteen months researching and designing a product and securing funding for his project. In 2019, Hornby won a sizable grant from the Alzheimer’s Society, a UK-based care and research charity for people with dementia and their caregivers. Together, through the charity’s Accelerator Program, they created a bite-sized, sugar-free, edible jelly drop that looked and tasted like candy. The candy, called Jelly Drops, contained 95% water plus electrolytes, important minerals that are often lost during dehydration. The final product launched in 2020 and was an immediate success. The drops provided extra hydration to the elderly and helped keep dementia patients safe, since dehydration commonly leads to confusion, hospitalization, and sometimes even death.

Not only did Jelly Drops quickly become a favorite snack among dementia patients in the UK, but they also provided an extra boost of hydration to hospital patients and workers during the pandemic. In NHS coronavirus wards, patients infected with the virus were regularly given Jelly Drops to keep their fluid levels normal, and staff members snacked on them as well, since long shifts in the personal protective equipment (PPE) they were required to wear often left them feeling parched.

In April 2022, Jelly Drops launched in the United States. The company continues to donate 1% of its profits to help fund Alzheimer’s research.