“Deep Fake” Video Technology Is Advancing Faster Than Our Policies Can Keep Up

Artificial avatars for hire and sophisticated video manipulation carry profound implications for society.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Alethea.ai sports a grid of faces smiling, blinking and looking about. Some are beautiful, some are oddly familiar, but all share one thing in common—they are fake.

Alethea creates "synthetic media"— including digital faces customers can license saying anything they choose with any voice they choose. Companies can hire these photorealistic avatars to appear in explainer videos, advertisements, multimedia projects or any other applications they might dream up without running auditions or paying talent agents or actor fees. Licenses begin at a mere $99. Companies may also license digital avatars of real celebrities or hire mashups created from real celebrities including "Don Exotic" (a mashup of Donald Trump and Joe Exotic) or "Baby Obama" (a large-eared toddler that looks remarkably similar to a former U.S. President).

Naturally, in the midst of the COVID pandemic, the appeal is understandable. Rather than flying to a remote location to film a beer commercial, an actor can simply license their avatar to do the work for them. The question is where and when this tech will cross the line from legitimately licensed and authorized synthetic media to deep fakes: synthetic videos designed to deceive the public for financial and political gain.

Deception itself is not new. From written quotes that are manipulated and taken out of context to audio quotes that are spliced together to mean something other than originally intended, misrepresentation has been around for centuries. What is new is the technology that allows this sort of seamless and sophisticated deception to be brought to the world of video.

"At one point, video content was considered more reliable, and had a higher threshold of trust," said Alethea CEO and co-founder, Arif Khan. "We think video is harder to fake and we aren't yet as sensitive to detecting those fakes. But the technology is definitely there."

"In the future, each of us will only trust about 15 people and that's it," said Phil Lelyveld, who serves as Immersive Media Program Lead at the Entertainment Technology Center at the University of Southern California. "It's already very difficult to tell true footage from fake. In the future, I expect this will only become more difficult."

As the 2020 U.S. presidential election nears, the potential moral and ethical implications of this technology are startling. A number of cases of truth tampering have recently been widely publicized. On August 5, President Donald Trump's campaign released an ad featuring several photos of Joe Biden that were altered to make it seem like he was hiding all alone in his basement. In one photo, at least ten people who had been sitting with Biden in the original shot were cut out. In other photos, Biden's image was lifted from shots of him at a nature preserve and praying in church to make it appear he was in that same basement. Recently several videos of Speaker of the House Nancy Pelosi were slowed down by 75 percent to make her sound as if her speech was slurred.

During a campaign event in Florida on September 15 of this year, former Vice President Joe Biden was introduced by Puerto Rican singer-songwriter Luis Fonsi. After he was introduced, Biden paid tribute to the singer-songwriter: he held up his cell phone and played the hit song "Despacito". Shortly afterward, a doctored version of this video appeared on the self-described parody site the United Spot, replacing "Despacito" with N.W.A.'s "F--- Tha Police". By September 16, Donald Trump had retweeted the video twice, first with the line "What is this all about" and second with the line "China is drooling. They can't believe this!" Twitter was quick to mark the video in these tweets as manipulated media.

Twitter had previously addressed several of Donald Trump's tweets, flagging a video shared in June as manipulated media and removing altogether a video shared by Trump in July showing a group promoting hydroxychloroquine as an effective cure for COVID-19. Many of these manipulated videos are ultimately flagged or taken down, but not before they are seen and shared by millions of online viewers.

These faked videos were exposed rather quickly, as they could be compared with the original, publicly available source material. But what happens when there is no original source material? How do we know what's true in a world where original videos created with avatars of celebrities and politicians can be manipulated to say virtually anything?

"This type of fake media is a profound threat to our democracy," said Reid Blackman, the CEO of VIRTUE--an ethics consultancy for AI leaders. "Democracy depends on well-informed citizens. When citizens can't or won't discern between real and fake news, the implications are huge."

In light of the importance of reliable information in the political system, there's a clear and present need to verify that the images and news we consume are authentic. So how can anyone ever know that the content they are viewing is real?

"This will not be a simple technological solution," said Blackman. "There is no 'truth' button to push to verify authenticity. There's plenty of blame and condemnation to go around. Purveyors of information have a responsibility to vet the reliability of their sources. And consumers also have a responsibility to vet their sources."

Yet the process of verifying sources has never been more challenging. More and more citizens are choosing to live in a "media bubble"—gathering and sharing news only from and with people who share their political leanings and opinions. At one time, United States broadcasters were bound by the Fairness Doctrine—requiring them to present controversial issues important to the public in a way that the FCC deemed honest, equitable and balanced. The repeal of this doctrine in 1987 paved the way for new forms of cable news channels such as Fox News and MSNBC that appealed to viewers with a particular point of view. The Internet has only exacerbated these tendencies. Social media algorithms are designed to keep people clicking within their comfort zones by presenting members with only the thoughts and opinions they want to hear.

"I sometimes laugh when I hear people tell me they can back a particular opinion they hold with research," said Blackman. "Having conducted a fair bit of true scientific research, I am aware that clicking on one article on the Internet hardly qualifies. But a surprising number of people believe that finding any source online that states the fact they choose to believe is the same as proving it true."

Back to the fundamental challenge: How do we as a society root out what's false online? Lelyveld suggests that it will begin by verifying things that are known to be true rather than trying to call out everything that is fake. "The EU called me in to talk about how to deal with fake news coming out of Russia," said Lelyveld. "I told them Hollywood has spent 100 years developing special effects technology to make things that are wholly fictional indistinguishable from the truth. I told them that you'll never chase down every source of fake news. You're better off focusing on what can be proved true."

Arif Khan agrees. "There are probably 100 accounts attributed to Elon Musk on Twitter, but only one has the blue checkmark," said Khan. "That means Twitter has verified that an account of public interest is real. That's what we're trying to do with our platform. Allow celebrities to verify that specific videos were licensed and authorized directly by them."

Alethea will use another key technology called blockchain to mark all authentic authorized videos with celebrity avatars. Blockchain uses a distributed ledger technology to make sure that no undetected changes have been made to the content. Think of the difference between editing a document in a traditional word processing program and editing in a distributed online editing system like Google Docs. In a traditional word processing program, you can edit and copy a document without revealing any changes. In a shared editing system like Google Docs, every person who shares the document can see a record of every edit, addition and copy made of any portion of the document. In a similar way, blockchain helps Alethea ensure that approved videos have not been copied or altered inappropriately.
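The idea of a ledger that exposes undetected changes can be illustrated with a minimal sketch. It assumes SHA-256 fingerprints and a single in-memory chain of entries; the class and method names (`VideoLedger`, `register`, `is_authorized`) are hypothetical and are not Alethea's actual system. A real blockchain would also copy the ledger across many independent computers.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 fingerprint of some bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


class VideoLedger:
    """Toy append-only ledger: each entry commits to the hash of the one before it."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _entry_hash(body: dict) -> str:
        # Serialize with sorted keys so the same body always hashes the same way.
        return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))

    def register(self, licensor: str, video_bytes: bytes) -> dict:
        """Record that `licensor` authorized exactly these video bytes."""
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        body = {
            "licensor": licensor,
            "video_hash": sha256_hex(video_bytes),
            "prev_hash": prev_hash,
        }
        entry = dict(body, entry_hash=self._entry_hash(body))
        self.entries.append(entry)
        return entry

    def chain_is_intact(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("licensor", "video_hash", "prev_hash")}
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["entry_hash"] != self._entry_hash(body):
                return False
            prev_hash = entry["entry_hash"]
        return True

    def is_authorized(self, video_bytes: bytes) -> bool:
        """True only if the ledger is untampered and lists this exact video."""
        digest = sha256_hex(video_bytes)
        return self.chain_is_intact() and any(
            e["video_hash"] == digest for e in self.entries
        )
```

In this sketch, changing even one byte of a registered video produces a different fingerprint, so the altered copy no longer matches any ledger entry, and editing a past entry makes the recomputed hashes disagree with the stored ones.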

While AI companies like Alethea are moving to ensure that avatars based on real individuals aren't wrongly identified, the situation becomes a bit murkier when it comes to the question of representing groups, races, creeds, and other forms of identity. Alethea is rightly proud that its completely artificial avatars visually represent a variety of ages, races and sexes. However, companies could conceivably license an avatar to represent a marginalized group without actually hiring a person within that group to decide what the avatar will do or say.

"I don't know if I would call this tokenism, as that is difficult to identify without understanding the hiring company's intent," said Blackman. "Where this becomes deeply troubling is when avatars are used to represent a marginalized group without clearly pointing out the actor is an avatar. It's one thing for an African American woman avatar to say, 'I like ice cream.' It's an entirely different thing for an African American woman avatar to say she supports a particular political candidate. In the second case, the avatar is being used as social proof that real people of a certain type back a certain political idea. And there the deception is far more problematic."

"It always comes down to unintended consequences of technology," said Lelyveld. "Technology is neutral—it's only the implementation that has the power to be good or bad. Without a thoughtful approach to the cultural, moral and political implications of technology, it often drifts towards the bad. We need to make a conscious decision as we release new technology to ensure it moves towards the good."

When presented with the idea that his avatars might be used to misrepresent marginalized groups, Khan was thoughtful. "Yes, I can see that is an unintended consequence of our technology. We would like to encourage people to license the avatars of real people, who would have final approval over what their avatars say or do. As to what people do with our completely artificial avatars, we will have to consider that moving forward."

Lelyveld frankly sees the ability for advertisers to create avatars that are our assistants or even our friends as a greater moral concern. "Once our digital assistant or avatar becomes an integral part of our life, even a friend as it were, what's to stop marketers from having those digital friends make suggestions about what drink we buy, which shirt we wear or even which candidate we elect? The possibilities for bad actors to reach us through our digital circle are mind-boggling."

Ultimately, Blackman suggests, we as a society will need to make decisions about what matters to us. "We will need to build policies and write laws—tackling the biggest problems like political deep fakes first. And then we have to figure out how to make the penalties stiff enough to matter. Fining a multibillion-dollar company a few million for a major offense isn't likely to move the needle. The punishment will need to fit the crime."

Until then, media consumers will need to do their own due diligence—to do the difficult work of uncovering the often messy and deeply uncomfortable news that's the truth.

[Editor's Note: To read other articles in this special magazine issue, visit the beautifully designed e-reader version.]

Have You Heard of the Best Sport for Brain Health?

In this week's Friday Five, research points to the healthiest of sports for the brain. Plus, the natural way to reprogram cells to a younger state, the network that could underlie many different mental illnesses, and a new test that could diagnose autism in newborns. Plus, scientists 3D print an ear and attach it to a woman.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five:

- Reprogram cells to a younger state

- Pick up this sport for brain health

- Do all mental illnesses have the same underlying cause?

- New test could diagnose autism in newborns

- Scientists 3D print an ear and attach it to a woman

Can blockchain help solve the Henrietta Lacks problem?

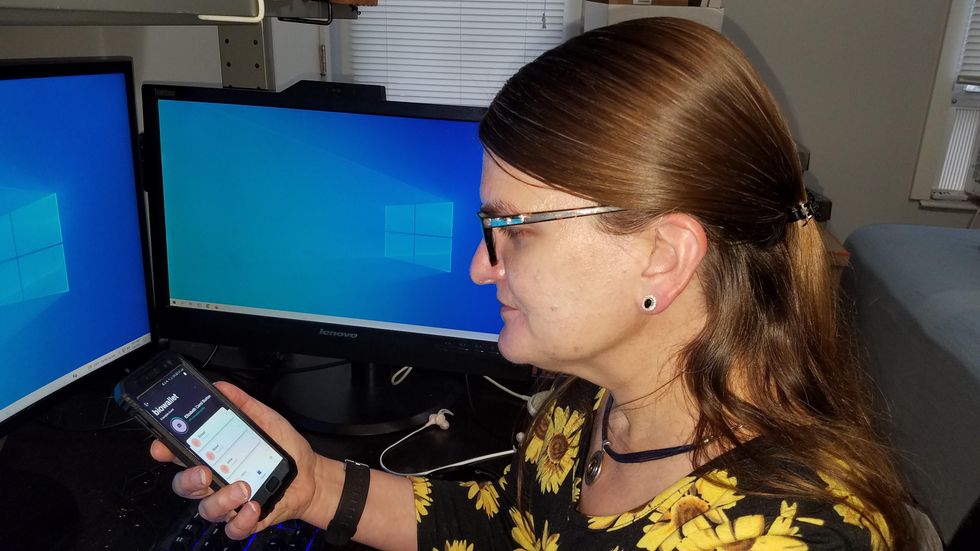

Marielle Gross, a professor at the University of Pittsburgh, shows patients a new app that tracks how their samples are used during biomedical research.

Science has come a long way since Henrietta Lacks, a Black woman from Baltimore, succumbed to cervical cancer at age 31 in 1951 -- only eight months after her diagnosis. Since then, research involving her cancer cells has advanced scientific understanding of the human papillomavirus, polio vaccines, medications for HIV/AIDS and in vitro fertilization.

Today, the World Health Organization reports that those cells are essential in mounting a COVID-19 response. But they were commercialized without the awareness or permission of Lacks or her family, who have filed a lawsuit against a biotech company for profiting from these “HeLa” cells.

While obtaining an individual's informed consent has become standard procedure before the use of tissues in medical research, many patients still don’t know what happens to their samples. Now, a new phone-based app is aiming to change that.

Tissue donors can track what scientists do with their samples while safeguarding privacy, through a pilot program initiated in October by researchers at the Johns Hopkins Berman Institute of Bioethics and the University of Pittsburgh’s Institute for Precision Medicine. The program uses blockchain technology to offer patients this opportunity through the University of Pittsburgh's Breast Disease Research Repository, while assuring that their identities remain anonymous to investigators.

A blockchain is a digital, tamper-proof ledger of transactions that is duplicated and distributed across a network of computers. Whenever a transaction occurs with a patient’s sample, multiple stakeholders can track it while the owner’s identity remains encrypted. Special certificates called “nonfungible tokens,” or NFTs, represent patients’ unique samples on a trusted and widely used blockchain that reinforces transparency.
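How a sample can be tracked without revealing its owner can be sketched in a few lines. This is an illustration under stated assumptions, not the pilot program's design: a hash-derived token ID stands in for an NFT, a plain in-memory registry stands in for the blockchain, and every name here (`SampleRegistry`, `mint`, `record_use`, `history`) is hypothetical.

```python
import hashlib
import secrets


def token_id_for(patient_secret: str) -> str:
    """Derive a public token ID from a secret that only the patient holds."""
    return hashlib.sha256(patient_secret.encode("utf-8")).hexdigest()


class SampleRegistry:
    """Toy registry: researchers see token IDs, never patient identities."""

    def __init__(self):
        self._events = {}  # token ID -> list of recorded uses

    def mint(self) -> str:
        """Register a new sample and return the secret to give to the patient."""
        patient_secret = secrets.token_hex(16)
        self._events[token_id_for(patient_secret)] = []
        return patient_secret

    def record_use(self, token_id: str, description: str) -> None:
        """Log a use of the sample identified only by its token ID."""
        if token_id not in self._events:
            raise KeyError("unknown sample token")
        self._events[token_id].append(description)

    def history(self, patient_secret: str) -> list:
        """Let the patient look up what has happened to their sample."""
        return list(self._events.get(token_id_for(patient_secret), []))
```

In this sketch a researcher who records a use handles only the token ID, while the patient, who holds the secret, can retrieve the full history of that one sample.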

“Healthcare is very data rich, but control of that data often does not lie with the patient,” said Julius Bogdan, vice president of analytics for North America at the Healthcare Information and Management Systems Society (HIMSS), a Chicago-based global technology nonprofit. “NFTs allow for the encapsulation of a patient’s data in a digital asset controlled by the patient.” He added that this technology enables a more secure and informed method of participating in clinical and research trials.

Without this technology, de-identification of patients’ samples during biomedical research had the unintended consequence of preventing them from discovering what researchers find -- even if that data could benefit their health. A solution was urgently needed, said Marielle Gross, assistant professor of obstetrics, gynecology and reproductive science and bioethics at the University of Pittsburgh School of Medicine.

“A researcher can learn something from your bio samples or medical records that could be life-saving information for you, and they have no way to let you or your doctor know,” said Gross, who is also an affiliate assistant professor at the Berman Institute. “There’s no good reason for that to stay the way that it is.”

For instance, blockchain could be used to notify people if cancer researchers discover that they have certain risk factors. Gross estimated that less than half of breast cancer patients are tested for mutations in BRCA1 and BRCA2 — tumor suppressor genes that are important in combating cancer. With normal function, these genes help prevent breast, ovarian and other cells from proliferating in an uncontrolled manner. If researchers find mutations, it’s relevant for a patient’s and family’s follow-up care — and that’s a prime example of how this newly designed app could play a life-saving role, she said.

Liz Burton was one of the first patients at the University of Pittsburgh to opt for the app -- called de-bi, which is short for decentralized biobank -- before undergoing a mastectomy for early-stage breast cancer in November, after it was diagnosed on a routine mammogram. She often takes part in medical research and looks forward to tracking her tissues.

“Anytime there’s a scientific experiment or study, I’m quick to participate -- to advance my own wellness as well as knowledge in general,” said Burton, 49, a life insurance service representative who lives in Carnegie, Pa. “It’s my way of contributing.”

The pilot program raises the issue of what investigators may owe study participants, especially since certain populations, such as Black and indigenous peoples, historically were not treated in an ethical manner for scientific purposes. “It’s a truly laudable effort,” Tamar Schiff, a postdoctoral fellow in medical ethics at New York University’s Grossman School of Medicine, said of the endeavor. “Research participants are beautifully altruistic.”

Lauren Sankary, a bioethicist and associate director of the neuroethics program at Cleveland Clinic, agrees that the pilot program provides increased transparency for study participants regarding how scientists use their tissues while acknowledging individuals’ contributions to research.

However, she added, “it may require researchers to develop a process for ongoing communication to be responsive to additional input from research participants.”

Peter H. Schwartz, professor of medicine and director of Indiana University’s Center for Bioethics in Indianapolis, said the program is promising, but he wonders what will happen if a patient has concerns about a particular research project involving their tissues.

“I can imagine a situation where a patient objects to their sample being used for some disease they’ve never heard about, or which carries some kind of stigma like a mental illness,” Schwartz said, noting that researchers would have to evaluate how to react. “There’s no simple answer to those questions, but the technology has to be assessed with an eye to the problems it could raise.”

As a result, researchers may need to factor in how much information to share with patients and how to explain it, Schiff said. There are also concerns that in tracking their samples, patients could tell others what they learned before researchers are ready to publicly release this information. However, Bogdan, the vice president of the HIMSS nonprofit, believes only a minimal study identifier would be stored in an NFT, not patient data, research results or any type of proprietary trial information.

Some patients may be confused by blockchain and reluctant to embrace it. “The complexity of NFTs may prevent the average citizen from capitalizing on their potential or vendors willing to participate in the blockchain network,” Bogdan said. “Blockchain technology is also quite costly in terms of computational power and energy consumption, contributing to greenhouse gas emissions and climate change.”

In addition, this nascent, groundbreaking technology is immature and vulnerable to data security flaws, disputes over intellectual property rights and privacy issues, though it does offer baseline protections to maintain confidentiality. To truly make a difference, blockchain must enable broad consent from patients, not just de-identification, said Robyn Shapiro, a bioethicist and founding attorney at Health Sciences Law Group near Milwaukee.

The Henrietta Lacks story is a prime example, Shapiro noted. During her treatment for cervical cancer at Johns Hopkins, Lacks’s tissue was de-identified (albeit not entirely, because her cell line, HeLa, bore her initials). After her death, those cells were replicated and distributed for important and lucrative research and product development purposes without her knowledge or consent.

Nonetheless, Shapiro thinks that the initiative by the University of Pittsburgh and Johns Hopkins has potential to solve some ethical challenges involved in research use of biospecimens. “Compared to the system that allowed Lacks’s cells to be used without her permission,” Shapiro said, “blockchain technology using nonfungible tokens that allow patients to follow their samples may enhance transparency, accountability and respect for persons who contribute their tissue and clinical data for research.”

Read more about laws that have denied people the rights to their own cells.