DNA Tests for Intelligence Ignore the Real Reasons Why Kids Succeed or Fail

A child prodigy considers a math equation.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "How should DNA tests for intelligence be used, if at all, by parents and educators?"]

It's 2019. Prenatal genetic tests are being used to help parents select from healthy and diseased eggs. Genetic risk profiles are being created for a range of common diseases. And embryonic gene editing has moved into the clinic. The science community is nearly unanimous on the question of whether we should be consulting our genomes as early as possible to create healthy offspring. If you can predict it, let's prevent it, and the sooner, the better.

When it comes to the care of our babies, kids, and future generations, we are doing things today that we never even dreamed would be possible. But one area that remains murky is the long-fraught question of IQ, and whether to use DNA science to tell us something about it. There are big issues with IQ genetics that should be considered before parents and educators adopt DNA IQ predictions.

IQ tests have been around for over a century. They've been used by doctors, teachers, government officials, and a whole host of institutions as a proxy for intelligence, especially in youth. At times in history, test results have been used to determine whether to allow a person to procreate, remain a part of society, or merely stay alive. These abuses seem to be a distant part of our past, and IQ tests have since garnered their fair share of controversy for exhibiting racial and cultural biases. But they continue to be used across society. Indeed, much of the literature aimed at expectant parents justifies its recommendations (more omegas, less formula, etc.) based on promises of raising a baby's IQ.

This is the power of IQ testing sans DNA science. Until recently, the two were separate entities, with IQ tests indicating a coefficient created from individual responses to written questions and genetic tests indicating some disease susceptibility based on a sequence of one's DNA. Yet in recent years, scientists have begun to unlock the secrets of inherited aspects of intelligence with genetic analyses that scan millions of points of variation in DNA. Both bench scientists and direct-to-consumer companies have used these new technologies to find variants associated with exceptional IQ scores. There are a number of tests on the open market that parents and educators can use at will. These tests purport to reveal whether a child is inherently predisposed to be intelligent, and some suggest ways to track them for success.

I started looking into these tests when I was doing research for my book, "Social by Nature: The Promise and Peril of Sociogenomics." This book investigated the new genetic science of social phenomena, like educational attainment, political persuasion, investment strategies, and health habits. I learned that, while many of the scientists doing the basic research into these things cautioned that the effects of genetic factors were quite small, most saw testing as one data point among many that could somehow help to level the playing field for young people. The rationale went that in certain circumstances, some needed help more than others. Why not put our collective resources together to help them?

Some experts believed so strongly in the power of DNA behavioral prediction that they argued it would be unfair not to use predictors to determine a kid's future, prevent negative outcomes, and promote the possibility for positive ones. The educators out in the wider world that I spoke with agreed. With careful attention, they thought sociogenomic tests could help young people get the push they needed when they possessed DNA sequences that weren't working in their favor. Officials working with troubled youth told me they hoped DNA data could be marshaled early enough that kids would thrive at home and in school, thereby avoiding ending up in their care. While my conversations with folks centered around sociogenomic data in general, genetic IQ prediction was completely entangled in it all.

I present these prevailing views to demonstrate both the widespread appeal of genetic predictors and the well-meaning intentions of those in favor of using them. It's a truly progressive notion to help those who need help the most. But we must question whether genetic predictors are data points worth looking at.

When we examine the way DNA IQ predictors are generated, we see scientists grouping people with similar IQ test results and academic achievements, and then searching for the DNA those people have in common. But there's a lot more to scores and achievements than meets the eye. Good nutrition, support at home, and access to healthcare and education make a huge difference in how people do. Therefore, the first problem with using DNA IQ predictors is that the data points themselves may be compromised by numerous inaccuracies.

We must then ask ourselves where the deep, enduring inequities in our society are really coming from. A deluge of research has shown that poor life outcomes are a product of social inequalities, like toxic living conditions, underfunded schools, and unhealthy jobs. A wealth of research has also shown that race, gender, sexuality, and class heavily influence life outcomes in numerous ways. Parents and caregivers feed, talk to, and play with babies differently depending on the babies' gender. Teachers treat girls and boys, as well as members of different racial and ethnic backgrounds, differently, to the point where these students do better or worse in different subject areas.

Healthcare providers consistently racially profile, using diagnostics and prescribing therapies differently for the same health conditions. Access to good schools and healthcare is strongly mediated by one's race and socioeconomic status. But even youth from privileged backgrounds suffer worse health and life outcomes when they identify or are identified as queer. These are but a few examples of the ways in which social inequities affect our chances in life. Therefore, the second problem with using DNA IQ predictors is that doing so obscures these very real, and frankly lethal, determinants. Instead of attending to the social environment, parents and educators take inborn genetics as the reason for a child's successes or failures.

The third problem with using DNA IQ predictors is that research into the weightiness of DNA evidence has shown time and again that people take DNA evidence more seriously than they do other kinds of evidence. So it's not realistic to say that we can just consider IQ genetics as merely one tiny data point. People will always give more weight to DNA evidence than it deserves. And given how negligible the effect of genetic factors has proven to be, relying on that evidence would be irresponsible.

It is time that we shift our priorities from seeking genetic causes to fixing the social causes we know to be real. Parents and educators need to be wary of solutions aimed at them and their individual children.

Thanks to safety cautions from the COVID-19 pandemic, a strain of influenza has been completely eliminated.

If you were one of the millions who masked up, washed your hands thoroughly and socially distanced, pat yourself on the back—you may have helped change the course of human history.

Scientists say that thanks to these safety precautions, which were introduced in early 2020 as a way to stop transmission of the novel COVID-19 virus, a strain of influenza has been completely eliminated. This marks the first time in human history that a virus has been wiped out through non-pharmaceutical interventions rather than pharmaceutical ones, such as vaccines.

The flu shot, explained

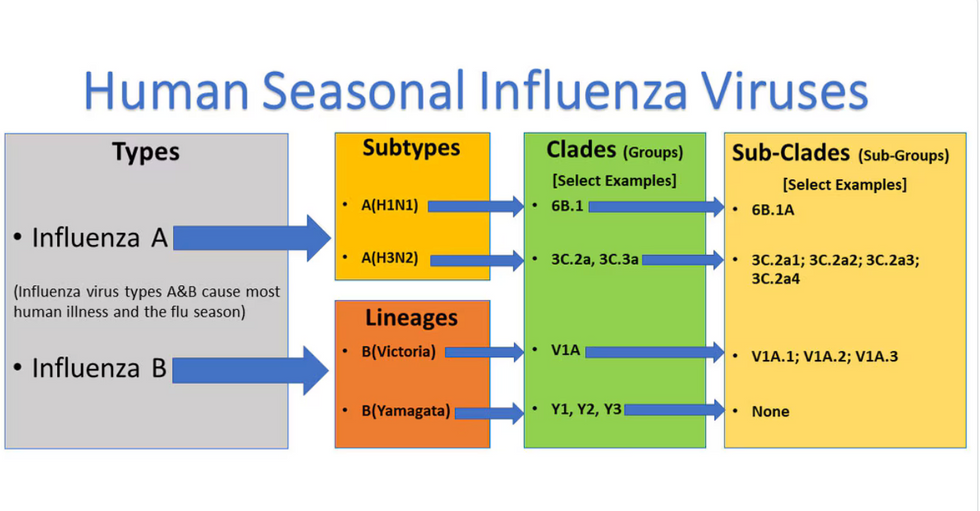

Influenza viruses type A and B are responsible for the majority of human illnesses and the flu season.

Centers for Disease Control

For more than a decade, flu shots have protected against two types of the influenza virus: type A and type B. While there are four different types of influenza in existence (A, B, C, and D), only types A, B, and C are known to infect humans, and only A and B cause the seasonal epidemics known as flu season. In other words, if you catch the flu during flu season, you’re most likely sick with flu type A or B.

Most flu shots contain inactivated (or dead) influenza virus. These inactivated viruses can’t cause sickness in humans, but when administered as part of a vaccine, they teach a person’s immune system to recognize and kill those viruses when they’re encountered in the wild.

Each spring, a panel of experts gives a recommendation to the US Food and Drug Administration on which strains of each flu type to include in that year’s flu vaccine, depending on what surveillance data says is circulating and what they believe is likely to cause the most illness during the upcoming flu season. For the past decade, Americans have had access to vaccines that provide protection against two strains of influenza A and two lineages of influenza B, known as the Victoria lineage and the Yamagata lineage. But this year, the seasonal flu shot won’t include the Yamagata lineage, because it is no longer circulating among humans.

How Yamagata Disappeared

Flu surveillance data from the Global Initiative on Sharing All Influenza Data (GISAID) shows that the Yamagata lineage of flu type B has not been sequenced since April 2020.

Nature

Experts believe that the Yamagata lineage had already been in decline before the pandemic hit, likely because it was naturally less capable of infecting large numbers of people than the other strains. When the COVID-19 pandemic hit, the resulting safety precautions, such as social distancing, isolating, hand-washing, and masking, were enough to drive the virus into extinction completely.

Because the lineage hasn’t been circulating since 2020, the FDA elected to remove it from the seasonal flu vaccine. This will mark the first time since 2012 that the annual flu shot will be trivalent (three-component) rather than quadrivalent (four-component).

Should I still get the flu shot?

The flu shot will protect against fewer strains this year—but that doesn’t mean we should skip it. Influenza places a substantial health burden on the United States every year, responsible for hundreds of thousands of hospitalizations and tens of thousands of deaths. The flu shot has been shown to prevent millions of illnesses each year (more than six million during the 2022-2023 season). And while it’s still possible to catch the flu after getting the flu shot, studies show that people are far less likely to be hospitalized or die when they’re vaccinated.

Another unexpected benefit of dropping the Yamagata lineage from the seasonal vaccine? It could make production of the flu vaccine faster and enable manufacturers to make more doses, helping countries that have a flu vaccine shortage and potentially saving millions more lives.

After his grandmother’s dementia diagnosis, one man invented a snack to keep her healthy and hydrated.

Founder Lewis Hornby and his grandmother Pat, sampling Jelly Drops—an edible gummy containing water and life-saving electrolytes.

On a visit to his grandmother’s nursing home in 2016, college student Lewis Hornby made a shocking discovery: dehydration is a common (and dangerous) problem among seniors, especially those who are diagnosed with dementia.

Hornby’s grandmother, Pat, had found it harder to keep up her water intake as she got older, a common issue with seniors. As we age, our body composition changes, and we naturally hold less water than younger adults or children, so it’s easier to become dehydrated quickly if those fluids aren’t replenished. What’s more, our thirst signals also diminish naturally with age, meaning our bodies are not as good as they once were at letting us know that we need to rehydrate. Together, these changes create a perfect storm that commonly leads to dehydration. In Pat’s case, her dehydration was so severe she nearly died.

When Lewis Hornby visited his grandmother at her nursing home afterward, he learned that dehydration especially affects people with dementia, as they often don’t feel thirst cues at all, or may not recognize how to use cups correctly. But while dementia patients often don’t remember to drink water, it seemed to Hornby that they had less trouble remembering to eat, particularly candy.

Hornby wanted to create a solution for elderly people who struggled to keep their fluid intake up. He spent the next eighteen months researching and designing a solution and securing funding for his project. In 2019, Hornby won a sizable grant from the Alzheimer’s Society, a UK-based care and research charity for people with dementia and their caregivers. Together, through the charity’s Accelerator Program, they created a bite-sized, sugar-free, edible jelly drop that looked and tasted like candy. The candy, called Jelly Drops, contained 95% water and electrolytes, important minerals that are often lost during dehydration. The final product launched in 2020 and was an immediate success. The drops were able to provide extra hydration to the elderly, as well as help keep dementia patients safe, since dehydration commonly leads to confusion, hospitalization, and sometimes even death.

Not only did Jelly Drops quickly become a favorite snack among dementia patients in the UK, but they also provided an additional boost of hydration to hospital workers during the pandemic. In NHS coronavirus hospital wards, patients infected with the virus were regularly given Jelly Drops to keep their fluid levels normal, and staff members snacked on them as well, since long shifts and the personal protective equipment (PPE) they were required to wear often left them feeling parched.

In April 2022, Jelly Drops launched in the United States. The company continues to donate 1% of its profits to help fund Alzheimer’s research.