Move Over, Iron Man. A Real-Life Power Suit Helped This Paralyzed Grandmother Learn to Run.

Puschel Sorensen undergoing physical therapy using Cyberdyne's hybrid assistive limb, left, and with her grandson while still in a wheelchair.

Puschel Sorensen first noticed something was wrong when her fingertips began to tingle. Later that day, she grew weak and fell.

Her family rushed her to the doctor, where she received the devastating diagnosis of Guillain-Barré Syndrome -- a rare and rapidly progressing autoimmune disorder that attacks the myelin sheath covering nerves.

Sorensen, a once-spry grandmother in her late fifties, spent 54 days in intensive care in 2018. When she was finally transferred to a rehab facility near her home in Florida, she was still on a feeding tube and ventilator, and was paralyzed from the neck down. Progress with traditional physical therapy was slow.

Sorensen in the hospital after her diagnosis of Guillain-Barré syndrome.

And then everything changed. Sorensen began using a cutting-edge technology called an exoskeleton to relearn how to walk. In the vein of Iron Man's fictional power suit, it confers strength and mobility that the wearer would not otherwise have. In Sorensen's case, her device, called HAL (for hybrid assistive limb), picked up small electrical impulses on her skin's surface and turned them into full movement in her legs while she attempted to walk on a treadmill.

"It was very difficult, but super awesome," recalls Sorensen, of first using the device. "The robot was having to do all the work for me."

Amazingly, within a year, she was running. She's one of 38 patients who have used HAL to recover from accidents or medical catastrophes.

"How do you thank someone for giving them back the ability to walk, the ability to live your life again?" Sorensen asks effusively.

It's still early days for such exoskeleton devices, which number perhaps a few thousand worldwide, according to data from the handful of manufacturers who produce them at any scale. But the devices' ability to dramatically rehabilitate patients like Sorensen highlights their potential to extract untold numbers of people from wheelchairs, and even to usher in a new paradigm for caregiving – one of the fastest growing segments of the U.S. economy.

"I've been a physical therapist for 16 years, and (these devices) help teach patients the right way to move in rehabilitation," says Robert McIver, director of clinical technology at the Brooks Cybernic Treatment Center, part of the Brooks Rehabilitation Hospital in Jacksonville, Fla., where Sorensen recovered.

Another patient there, a 17-year-old named George with a snowboarding injury that paralyzed his legs, was getting around with a walker within 20 sessions.

As patients progress in their recoveries, so does exoskeleton technology. Jack Peurach, CEO of Ekso, one of the leaders in the space, believes within a decade they could resemble an article of clothing (a "magic pair of pants" is his phrase). They also may become inexpensive and reliable enough to transition from a medical to a consumer device. McIver sees them eventually being used in the home on an ongoing basis as a personal assistive device, much like a walker or cane, to prevent falls in elderly people.

Such a transition "certainly could eventually lessen the need for caregivers," says Sharona Hoffman, a professor of law at Case Western Reserve University in Cleveland who has written extensively on aging and bioethics. "We have a real shortage of caregivers, so that would be a good thing."

Of course, having an aging and disabled population using exoskeletons in much the same way as an Apple Watch raises issues of its own.

Dr. Elizabeth Landsverk, a California-based geriatrician and founder of a company that performs house calls for elderly patients, believes the tech holds some promise in easing the burden on caregivers, who sometimes have to lift or move patients without assistance. But she also believes exoskeletons could become overhyped.

"I don't see robotics as completely replacing the caregiver," she says. And even if exoskeletons became akin to articles of clothing, she is skeptical of how convenient they could become.

"It's hard enough to get into support hose. Would an older person be able to get in and out of it on their own?" she asks, noting that a patient's cognitive levels could pose a huge barrier to donning such a device without assistance.

If personal exoskeletons did wildly succeed, Hoffman wonders whether they would leave the elderly more physically mobile yet also more socially isolated, since caregivers, or even residence in an assisted living facility, might no longer be required. Or, if they were priced in the hundreds or thousands of dollars, she worries that the cost would exacerbate social inequalities among the elderly and disabled.

With any technology that confers superhuman ability, there's also the question of appropriate usage. Even the fictional Power Loader in the movie Aliens required an operator's license. In the real world, such an approach would likely pay dividends.

"We would have to make sure physicians are well-trained in these devices, and patients have a way of getting training to operate them that is thorough and responsible," Hoffman says.

But despite some unresolved questions, it is a remarkable achievement to be able to give people back their lives thanks to new technology.

"It's almost like a bad dream that [my illness] happened," says Sorensen, who managed to walk in her daughter's wedding after her recovery. "Because now everything is pretty much back to normal and it's awesome."

Artificial avatars for hire and sophisticated video manipulation carry profound implications for society.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

Alethea.ai sports a grid of faces smiling, blinking and looking about. Some are beautiful, some are oddly familiar, but all share one thing in common—they are fake.

Alethea creates "synthetic media"— including digital faces customers can license saying anything they choose with any voice they choose. Companies can hire these photorealistic avatars to appear in explainer videos, advertisements, multimedia projects or any other applications they might dream up without running auditions or paying talent agents or actor fees. Licenses begin at a mere $99. Companies may also license digital avatars of real celebrities or hire mashups created from real celebrities including "Don Exotic" (a mashup of Donald Trump and Joe Exotic) or "Baby Obama" (a large-eared toddler that looks remarkably similar to a former U.S. President).

Naturally, in the midst of the COVID pandemic, the appeal is understandable. Rather than flying to a remote location to film a beer commercial, an actor can simply license their avatar to do the work for them. The question is where and when this tech will cross the line from legitimately licensed and authorized synthetic media to deep fakes: synthetic videos designed to deceive the public for financial and political gain.

The deception behind deep fakes is not new. From written quotes that are manipulated and taken out of context to audio quotes that are spliced together to mean something other than originally intended, misrepresentation has been around for centuries. What is new is the technology that allows this sort of seamless and sophisticated deception to be brought to the world of video.

"At one point, video content was considered more reliable, and had a higher threshold of trust," said Alethea CEO and co-founder, Arif Khan. "We think video is harder to fake and we aren't yet as sensitive to detecting those fakes. But the technology is definitely there."

"In the future, each of us will only trust about 15 people and that's it," said Phil Lelyveld, who serves as Immersive Media Program Lead at the Entertainment Technology Center at the University of Southern California. "It's already very difficult to tell true footage from fake. In the future, I expect this will only become more difficult."

As the U.S. 2020 Presidential Election nears, the potential moral and ethical implications of this technology are startling. A number of cases of truth tampering have recently been widely publicized. On August 5, President Donald Trump's campaign released an ad featuring several photos of Joe Biden that were altered to make it seem like he was hiding all alone in his basement. In one photo, at least ten people who had been sitting with Biden in the original shot were cut out. In other photos, Biden's image was lifted from shots of him at a nature preserve and praying in church to make it appear he was in that same basement. Recently several videos of Speaker of the House Nancy Pelosi were slowed down by 75 percent to make her sound as if her speech was slurred.

During a campaign event in Florida on September 15 of this year, former Vice President Joe Biden was introduced by Puerto Rican singer-songwriter Luis Fonsi. After he was introduced, Biden paid tribute to the singer-songwriter by holding up his cell phone and playing the hit song "Despacito". Shortly afterward, a doctored version of this video appeared on the self-described parody site the United Spot, replacing "Despacito" with N.W.A.'s "F--- Tha Police". By September 16, Donald Trump had retweeted the video twice, first with the line "What is this all about" and second with the line "China is drooling. They can't believe this!" Twitter was quick to mark the video in these tweets as manipulated media.

Twitter had previously addressed several of Donald Trump's tweets, flagging a video shared in June as manipulated media and removing altogether a video shared by Trump in July showing a group promoting hydroxychloroquine as an effective cure for COVID-19. Many of these manipulated videos are ultimately flagged or taken down, but not before they are seen and shared by millions of online viewers.

These faked videos were exposed rather quickly, as they could be compared with the original, publicly available source material. But what happens when there is no original source material? How do we know what's true in a world where original videos created with avatars of celebrities and politicians can be manipulated to say virtually anything?

"This type of fake media is a profound threat to our democracy," said Reid Blackman, the CEO of VIRTUE--an ethics consultancy for AI leaders. "Democracy depends on well-informed citizens. When citizens can't or won't discern between real and fake news, the implications are huge."

In light of the importance of reliable information in the political system, there's a clear and present need to verify that the images and news we consume are authentic. So how can anyone ever know that the content they are viewing is real?

"This will not be a simple technological solution," said Blackman. "There is no 'truth' button to push to verify authenticity. There's plenty of blame and condemnation to go around. Purveyors of information have a responsibility to vet the reliability of their sources. And consumers also have a responsibility to vet their sources."

Yet the process of verifying sources has never been more challenging. More and more citizens are choosing to live in a "media bubble"—gathering and sharing news only from and with people who share their political leanings and opinions. At one time, United States broadcasters were bound by the Fairness Doctrine—requiring them to present controversial issues important to the public in a way that the FCC deemed honest, equitable and balanced. The repeal of this doctrine in 1987 paved the way for new forms of cable news channels such as Fox News and MSNBC that appealed to viewers with a particular point of view. The Internet has only exacerbated these tendencies. Social media algorithms are designed to keep people clicking within their comfort zones by presenting members with only the thoughts and opinions they want to hear.

"I sometimes laugh when I hear people tell me they can back a particular opinion they hold with research," said Blackman. "Having conducted a fair bit of true scientific research, I am aware that clicking on one article on the Internet hardly qualifies. But a surprising number of people believe that finding any source online that states the fact they choose to believe is the same as proving it true."

Back to the fundamental challenge: How do we as a society root out what's false online? Lelyveld suggests that it will begin by verifying things that are known to be true rather than trying to call out everything that is fake. "The EU called me in to talk about how to deal with fake news coming out of Russia," said Lelyveld. "I told them Hollywood has spent 100 years developing special effects technology to make things that are wholly fictional indistinguishable from the truth. I told them that you'll never chase down every source of fake news. You're better off focusing on what can be proved true."

Arif Khan agrees. "There are probably 100 accounts attributed to Elon Musk on Twitter, but only one has the blue checkmark," said Khan. "That means Twitter has verified that an account of public interest is real. That's what we're trying to do with our platform. Allow celebrities to verify that specific videos were licensed and authorized directly by them."

Alethea will use another key technology called blockchain to mark all authentic authorized videos with celebrity avatars. Blockchain uses a distributed ledger technology to make sure that no undetected changes have been made to the content. Think of the difference between editing a document in a traditional word processing program and editing in a distributed online editing system like Google Docs. In a traditional word processing program, you can edit and copy a document without revealing any changes. In a shared editing system like Google Docs, every person who shares the document can see a record of every edit, addition and copy made of any portion of the document. In a similar way, blockchain helps Alethea ensure that approved videos have not been copied or altered inappropriately.
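The tamper-evidence idea described above can be illustrated with a minimal sketch. This is not Alethea's implementation, which the article does not detail; it is a single-machine hash chain, without the distributed consensus a real blockchain adds, and every function name here is hypothetical. Each entry stores a fingerprint of an authorized video plus the hash of the previous entry, so altering any recorded entry breaks every link after it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def fingerprint(video_bytes: bytes) -> str:
    """Return the SHA-256 fingerprint of a video's raw bytes."""
    return hashlib.sha256(video_bytes).hexdigest()


def block_hash(index: int, video_hash: str, prev_hash: str) -> str:
    """Hash an entry's contents together with the previous entry's hash."""
    payload = json.dumps(
        {"index": index, "video_hash": video_hash, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain: list, video_bytes: bytes) -> dict:
    """Record an authorized video as a new entry at the end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    video_hash = fingerprint(video_bytes)
    block = {
        "index": index,
        "video_hash": video_hash,
        "prev_hash": prev_hash,
        "hash": block_hash(index, video_hash, prev_hash),
    }
    chain.append(block)
    return block


def chain_is_valid(chain: list) -> bool:
    """Check that no entry was altered and that every link is intact."""
    prev_hash = GENESIS_HASH
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev_hash"] != prev_hash:
            return False
        expected = block_hash(block["index"], block["video_hash"], block["prev_hash"])
        if block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


def video_is_registered(chain: list, video_bytes: bytes) -> bool:
    """Report whether this exact video file was recorded as authorized."""
    target = fingerprint(video_bytes)
    return any(block["video_hash"] == target for block in chain)
```

In this sketch, a video that has been edited by even a single byte produces a different fingerprint and no longer matches any recorded entry, while rewriting a recorded entry causes `chain_is_valid` to fail.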

While AI companies like Alethea are moving to ensure that avatars based on real individuals aren't wrongly identified, the situation becomes a bit murkier when it comes to the question of representing groups, races, creeds, and other forms of identity. Alethea is rightly proud that the completely artificial avatars visually represent a variety of ages, races and sexes. However, companies could conceivably license an avatar to represent a marginalized group without actually hiring a person within that group to decide what the avatar will do or say.

"I don't know if I would call this tokenism, as that is difficult to identify without understanding the hiring company's intent," said Blackman. "Where this becomes deeply troubling is when avatars are used to represent a marginalized group without clearly pointing out the actor is an avatar. It's one thing for an African American woman avatar to say, 'I like ice cream.' It's an entirely different thing for an African American woman avatar to say she supports a particular political candidate. In the second case, the avatar is being used as social proof that real people of a certain type back a certain political idea. And there the deception is far more problematic."

"It always comes down to unintended consequences of technology," said Lelyveld. "Technology is neutral—it's only the implementation that has the power to be good or bad. Without a thoughtful approach to the cultural, moral and political implications of technology, it often drifts towards the bad. We need to make a conscious decision as we release new technology to ensure it moves towards the good."

When presented with the idea that his avatars might be used to misrepresent marginalized groups, Khan was thoughtful. "Yes, I can see that is an unintended consequence of our technology. We would like to encourage people to license the avatars of real people, who would have final approval over what their avatars say or do. As to what people do with our completely artificial avatars, we will have to consider that moving forward."

Lelyveld frankly sees the ability for advertisers to create avatars that are our assistants or even our friends as a greater moral concern. "Once our digital assistant or avatar becomes an integral part of our life—even a friend as it were, what's to stop marketers from having those digital friends make suggestions about what drink we buy, which shirt we wear or even which candidate we elect? The possibilities for bad actors to reach us through our digital circle is mind-boggling."

Ultimately, Blackman suggests, we as a society will need to make decisions about what matters to us. "We will need to build policies and write laws—tackling the biggest problems like political deep fakes first. And then we have to figure out how to make the penalties stiff enough to matter. Fining a multibillion-dollar company a few million for a major offense isn't likely to move the needle. The punishment will need to fit the crime."

Until then, media consumers will need to do their own due diligence—to do the difficult work of uncovering the often messy and deeply uncomfortable news that's the truth.

World’s First “Augmented Reality” Contact Lens Aims to Revolutionize Much More Than Medicine

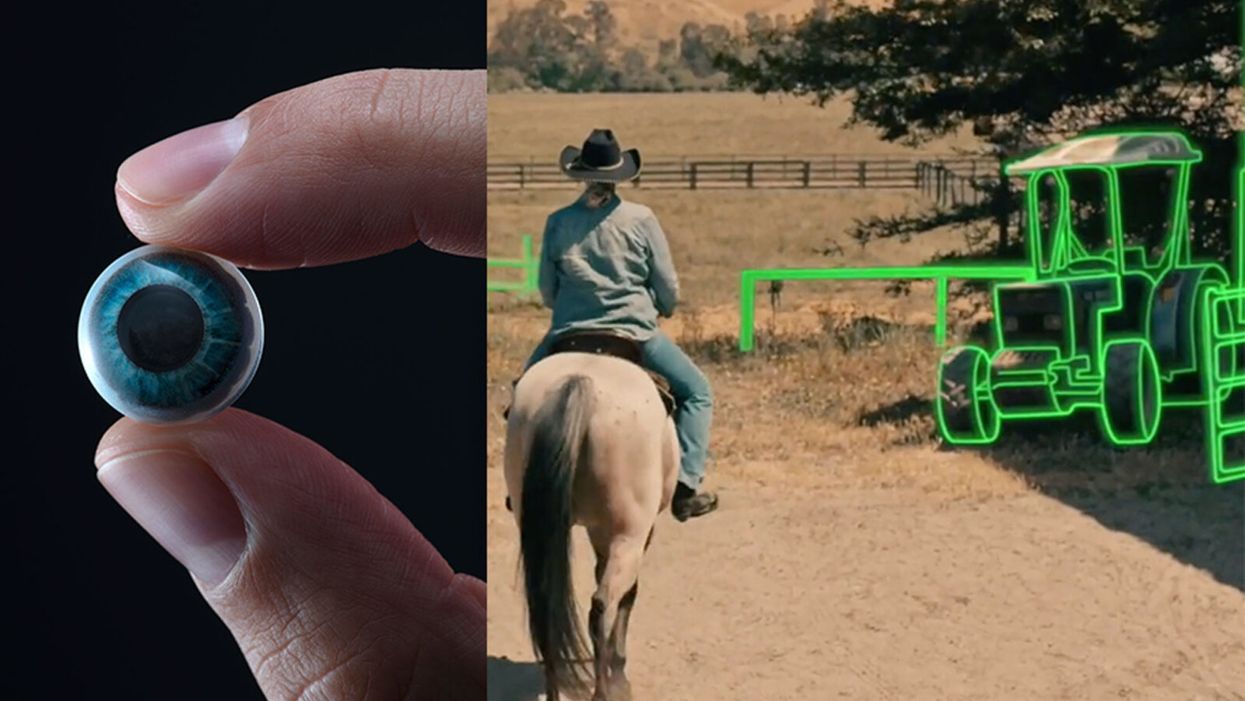

On the left, a picture of the Mojo smart contact lens; on the right, a simulated image of a woman with low vision who is wearing the contact to highlight objects in her field of vision.

Imagine a world without screens. Instead of endlessly staring at your computer or craning your neck down to scroll through social media feeds and emails, information simply appears in front of your eyes when you need it and disappears when you don't.

No more rude interruptions during dinner, no more bumping into people on the street while trying to follow GPS directions — just the information you want, when you need it, projected directly onto your visual field.

While this screenless future sounds like science fiction, it may soon be a reality thanks to the new Silicon Valley startup Mojo Vision, creator of the world's first smart contact lens. Featuring a 14,000 pixel-per-inch display with eye-tracking, image stabilization, and a custom wireless radio, the Mojo smart lens bills itself as the "smallest and densest dynamic display ever made." Unlike current augmented reality wearables such as Google Glass or ThirdEye, which project images onto a glass screen, the Mojo smart lens can project images directly onto the retina.

A current prototype displayed at the Consumer Electronics Show earlier this year in Las Vegas includes a tiny screen positioned right above the most sensitive area of the pupil. "[The Mojo lens] is a contact lens that essentially has wireless power and data transmission for a small micro LED projector that is placed over the center of the eye," explains David Hobbs, Director of Product Management at Mojo Vision. "[It] displays critical heads-up information when you need it and fades into the background when you're ready to continue on with your day."

Eventually, Mojo Vision's technology could replace our beloved smart devices, but the first generation of the Mojo smart lens will be used to help the 2.2 billion people globally who suffer from vision impairment.

"If you think of the eye as a camera [for the visually impaired], the sensors are not working properly," explains Dr. Ashley Tuan, Vice President of Medical Devices at Mojo Vision and fellow of the American Academy of Optometry. "For this population, our lens can process the image so the contrast can be enhanced, we can make the image larger, magnify it so that low-vision people can see it or we can make it smaller so they can check their environment." In January of this year, the FDA granted Breakthrough Device Designation to Mojo, allowing them to have early and frequent discussions with the FDA about technical, safety and efficacy topics before clinical trials can be done and certification granted.

For now, Dr. Tuan is one of the few people who has actually worn the Mojo lens. "I put the contact lens on my eye. It was very comfortable like any contact lenses I've worn before," she describes. "The vision is super clear and then when I put on the accessories, suddenly I see Yoda in front of me and I see my vital signs. And then I have my colleague that prepared a beautiful poem that I loved when I was young [and] I was reading the poem with my eyes closed."

At the moment, there are several electronic glasses on the market like Acesight and Nueyes Pro that provide similar solutions for those suffering from visual impairment, but they are large, cumbersome, and highly visible. Mojo lens would be a discreet, more comfortable alternative that offers users more freedom of movement and independence.

"In the case of augmented-reality contact lenses, there could be an opportunity to improve the lives of people with low vision," says Dr. Thomas Steinemann, spokesperson for the American Academy of Ophthalmology and professor of ophthalmology at MetroHealth Medical Center in Cleveland. "There are existing tools for people currently living with low vision—such as digital apps, magnifiers, etc.— but something wearable could provide more flexibility and significantly more aid in day-to-day tasks."

According to Dr. Tuan, the visually impaired often suffer from depression due to their lack of mobility and 70 percent of them are underemployed. "We hope that they can use this device to gain their mobility so they can get that social aspect back in their lives and then, eventually, employment," she explains. "That is our first and most important goal."

But helping those with low visual capabilities is only Mojo lens' first possible medical application; augmented reality is already being used in medicine and is poised to revolutionize the field in the coming decades. For example, AccuVein, a device that uses lasers to provide real-time images of veins, is widely used by nurses and doctors to help with the insertion of needles for IVs and blood tests.

According to the National Center for Biotechnology Information, augmented reality has been used in surgery for many years, with surgeons using devices such as Google Glass to overlay critical information about their patients onto their visual field. Using software like the Holographic Navigation Platform by Scopis, surgeons can see a mixed-reality overlay that can "show you complicated tumor boundaries, assist with implant placements and guide you along anatomical pathways," its developers say.

However, according to Dr. Tuan, augmented reality headsets have drawbacks in the surgical setting. "The advantage of [Mojo lens] is you don't need to worry about sweating or that the headset or glasses will slide down to your nose," she explains. "Also, our lens is designed so that it will understand your intent, so when you don't want the image overlay it will disappear, it will not block your visual field, and when you need it, it will come back at the right time."

As one of the first examples of "invisible computing," the potential applications of Mojo lens in the medical field are endless. Possibilities include live translation of sign language for deaf people; helping those with autism to read emotions; and improving doctors' bedside manner by allowing them to fully engage with patients without relying on a computer.

Furthermore, the lens could be used to monitor health issues. "We have image sensors in the lens right now that point to the world but we can have a camera pointing inside of your eye to your retina," says Dr. Tuan. "[By] monitoring those blood vessels we can [track] chronic disease progression: high blood pressure, diabetes, and Alzheimer's."

For the moment, the future medical applications of the Mojo lens are still theoretical, but the team is confident they can eventually become a reality after going through the proper regulatory review. The company is still designing, prototyping and testing the lens, so they don't know exactly when it will be available for use, but they anticipate shipping the first available products in the next couple of years. Once it does go to market, it will be available by prescription only for those with visual impairments, but the team's goal is to bring it to broader consumer markets pending regulatory clearance.

"We see that right now there's a unique opportunity here for Mojo lens and invisible computing to help to shape what the next decade of technology development looks like," explains David Hobbs. "We can use [the Mojo lens] to better serve us as opposed to us serving technology better."