6 Biotech Breakthroughs of 2021 That Missed the Attention They Deserved

A string of the code that comprises a DNA molecule, pictured at Miraikan, the National Museum of Emerging Science and Innovation in Japan.

News about COVID-19 continues to dominate headlines as Omicron surges around the globe. Yet somehow, during the pandemic’s exhausting twists and turns, progress in other areas of health and biotech has marched on.

In some cases, these innovations have occurred despite a broad reallocation of resources to address the COVID crisis. For other breakthroughs, COVID served as the forcing function, pushing scientists and medical providers to rethink key aspects of healthcare, including how cancer, Alzheimer’s and other diseases are studied, diagnosed and treated. Regardless of why they happened, many of these advances didn’t make the headlines of major media outlets, even when they represented turning points in overcoming our toughest health challenges.

If it bleeds, it leads—and many disturbing stories, such as COVID surges, deserve top billing. Too often, though, mainstream media’s parallel strategy seems to be: if it innovates, it fades to the background. But our breakthroughs are just as critical to understanding the state of the world as our setbacks. I asked six pragmatic yet forward-thinking experts on health and biotech for their perspectives on the most important, but under-appreciated, breakthrough of 2021.

Their descriptions, below, were lightly edited by Leaps.org for style and format.

New Alzheimer's Therapies

Mary Carrillo, Chief Science Officer at the Alzheimer’s Association


One of the biggest health stories of 2021 was the FDA’s accelerated approval of aducanumab, the first drug that treats the underlying biology of Alzheimer’s, not just the symptoms. But Alzheimer’s is a complex disease and will likely need multiple treatment strategies that target various aspects of the disease. It’s been exciting to see many of these types of therapies advance in 2021.

Following the FDA action in June, we saw renewed excitement in this class of disease-modifying drugs that target beta-amyloid, a protein that accumulates in the brain and leads to brain cell death. This class includes drugs from Eli Lilly (donanemab), Eisai (lecanemab) and Roche (gantenerumab), all of which received Breakthrough Designation by the FDA in 2021, advancing the drugs more quickly through the approval process.

This year, we’ve also seen advances in treatments that target other hallmarks of Alzheimer’s. We heard topline results from a phase 2 trial of semorinemab, a drug that targets tau tangles, a toxic protein that destroys neurons in the Alzheimer’s brain. Plus, strategies targeting neuroinflammation, protecting brain cells, and reducing vascular contributions to dementia, all funded through the Alzheimer's Association Part the Cloud program, advanced into clinical trials.

The future of Alzheimer’s treatment will likely be combination therapy, including drug therapies and healthy lifestyle changes, similar to how we treat heart disease. Washington University announced they will be testing a combination of both anti-amyloid and anti-tau drugs in a first-of-its-kind clinical trial, with funding from the Alzheimer’s Association.

AlphaFold

Olivier Elemento, Director of the Caryl and Israel Englander Institute for Precision Medicine at Cornell University


AlphaFold is an artificial intelligence system designed by Google’s DeepMind that opens the door to understanding the three-dimensional structures and functions of proteins, the building blocks that make up almost half of our bodies' dry weight. In 2021, Google made AlphaFold available for free and since then, researchers have used it to drive greater understanding of how proteins interact. This is a foundational event in the field of biotech.

It’s going to take time for the benefits from AlphaFold to materialize, but once we know the 3-D structures of proteins that cause various diseases, it will be much easier to design new drugs that can bind to these proteins and change their activity. Prior to AlphaFold, scientists had identified the 3-D structure of just 17 percent of about 20,000 proteins in the body, partly because mapping the structures was extremely difficult and expensive. Thanks to AlphaFold, we’ve now jumped to knowing, with at least some degree of certainty, the protein structures of 98.5 percent of the proteome.
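To make the scale of that jump concrete, the percentages can be converted into rough protein counts. This is a back-of-the-envelope sketch that takes the round figure of 20,000 proteins at face value; the helper name `proteins_covered` is made up for illustration and is not part of AlphaFold or any library.

```python
# Rough arithmetic only: 20,000 is the approximate proteome size quoted in the text.
PROTEOME_SIZE = 20_000

def proteins_covered(percent: float, total: int = PROTEOME_SIZE) -> int:
    """Convert a coverage percentage into an approximate protein count."""
    return round(total * percent / 100)

before = proteins_covered(17.0)   # structures known before AlphaFold
after = proteins_covered(98.5)    # structures known, with some certainty, after AlphaFold

print(f"before: {before}, after: {after}, newly covered: {after - before}")
```

Under these assumptions, coverage grows from roughly 3,400 proteins to roughly 19,700, a gain of about 16,300 structures.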

For example, kinases are a class of proteins that modify other proteins and are often aberrantly active in cancer due to DNA mutations. Some of the earliest targeted therapies for cancer were ones that block kinases but, before AlphaFold, we had only a rudimentary understanding of a few hundred kinases. We can now determine the structures of all 1,500 kinases. This opens up a universe of drug targets we didn’t have before.

Additional progress has been made this year toward potentially using AlphaFold to develop blockers of certain protein receptors that contribute to psychiatric illnesses and other neurological diseases. And in July, scientists used AlphaFold to map the dimensions of a bacterial protein that may be key to countering antibiotic resistance. Another discovery in May could be essential to finding treatments for COVID-19. Ongoing research is using AlphaFold principles to create entirely new proteins from scratch that could have therapeutic uses. The AlphaFold revolution is just beginning.

Virtual First Care

Jennifer Goldsack, CEO of Digital Medicine Society


Imagine a new paradigm of healthcare defined by how good we are at keeping people healthy and out of the clinic, not how good we are at offering services to a sick person at the clinic. That is the promise of virtual-first care, or V1C, what I consider to be the greatest, and most underappreciated, advance that occurred in medicine this year.

V1C is defined as medical care accessed through digital interactions where possible, guided by a clinician, and integrated into a person’s everyday life. This type of care includes mailing spit kits for laboratory tests and replacing in-person exams with biometric sensors. It’s built around the patient, not the clinic, and provides us with the opportunity to fundamentally reimagine what good healthcare looks like.

V1C flew under the radar in 2021, eclipsed by the ongoing debate about the value of telehealth more broadly as we emerge from the pandemic. However, the growth in the number of specialty and primary care virtual-first providers has been matched only by the number of national health plans offering virtual-first plans. Our own virtual-first community, IMPACT, has tripled in size, mirroring the rapid growth of the field driven by patient demand for care on their terms.

V1C differs from the ‘bolt on’ approach of video visits as an add-on to traditional visit-based, episodic care. V1C takes a much more holistic approach; it allows individuals to initiate care at any time in any place, recognizing that healthcare needs extend beyond 9-5. It matches the care setting with each individual’s clinical needs and personal preferences, advancing a thorough, evidence-based, safe practice while protecting privacy and recognizing that patients’ expectations have changed following the pandemic. V1C puts the promise of digital health into practice. This is the blueprint for what good healthcare looks like in the digital era.

Digital Clinical Trials

Craig Lipset, Founder of Clinical Innovation Partners and former Head of Clinical Innovation at Pfizer


In 2021, a number of digital- and data-enabled approaches have sustained decentralized clinical trials around the world for many different disease types. Pharma companies and clinical researchers are enthusiastic about this development for good reason. Throughout the pandemic, these decentralized trials have allowed patients to continue in studies with a reduced need for site visits, without compromising their safety or data quality.

Risk-based monitoring was deployed using data and thoughtful algorithms to identify quality and safety issues without relying entirely on human monitors visiting research sites. Some trials used digital measures to ensure high quality data on target health outcomes that could be captured in ways that made the participants’ physical location irrelevant. More than three-quarters of research organizations, such as pharma and biotech, have accelerated their decentralized clinical trial strategies. Before COVID-19, 72 percent of trial sites “rarely or never” used telemedicine for trial participants; during COVID, 64 percent “sometimes, often or always” do.

While the research community does appreciate the tremendous hope and promise brought by these innovations, perhaps what has been under-appreciated is the culture shift toward thoughtful risk-taking and a willingness to embrace and adopt clinical trial innovations. These solutions existed before COVID, but the pandemic shifted the perception of risks versus benefits involved in these trials. If there is one breakthrough that is perhaps under-appreciated in life sciences clinical research today, it’s the power of this new culture of willingness and receptivity to outlast the pandemic. Perhaps the greatest loss to the research ecosystem would be if we lose the momentum with recent trial innovations and must wait for another global pandemic in order to see it again.

Designing Biology

Sudip Parikh, CEO of the American Association for the Advancement of Science and Executive Publisher of the Science family of journals


As our understanding of basic biology has grown, we are fast approaching an era where it will be possible to design and direct biological machinery to create treatments, medicine, and materials. 2021 saw many breakthroughs in this area, three of which are listed below.

The understanding of the human microbiome is growing as is our ability to modify it. One example is the movement toward the notion of the “bug as the drug.” In June, scientists at the Brigham and Women’s Hospital published a paper showing that they had genetically engineered yeast – using CRISPR/Cas9 – to sense and treat inflammation in the body to relieve symptoms of irritable bowel syndrome in mice. This approach could potentially be used to address issues with your microbiome to treat other chronic conditions.

Another way in which we saw the application of basic biology discoveries to real world problems in 2021 is through groundbreaking research on synthetic biology. Several institutions and companies are pursuing this path. Ginkgo Bioworks, valued at $15 billion, already claims to engineer cells with assembly-line efficiency. Imagine the possibilities of programming cells and tissue to perform chemistry for the manufacturing process, inspired by the way your body does chemistry. That could mean cleaner, more controllable, and affordable ways to manufacture food, therapeutics, and other materials in a factory-like setting.

A final example: consider the possibility of leveraging the mechanics of your own body to deliver proteins as treatments, vaccines, and more. In 2021, several scientists accelerated research to apply the mRNA technology underlying COVID-19 vaccines to make and replace proteins that, when they’re missing or don’t work, cause rare conditions such as cystic fibrosis and multiple sclerosis.

These applications of basic biology to solve real world problems are exciting on their own, but their convergence with incredible advances in computing, materials, and drug delivery holds the promise of game-changing progress in health care and beyond.

Brain Biomarkers

David R. Walt, Professor of Biologically Inspired Engineering, Harvard Medical School, Brigham and Women’s Hospital, Wyss Institute at Harvard University


2021 brought the first real hope for identifying biomarkers that can predict neurodegenerative disease. Multiple biomarkers (which are measurable indicators of the presence or severity of disease) were identified that can diagnose disease and that correlate with disease progression. Some of these biomarkers were detected in cerebrospinal fluid (CSF) but others were measured directly in blood by examining precursors of protein fibers.

The blood-brain barrier prevents many biomolecules from both exiting and entering the brain, so it has been a longstanding challenge to detect and identify biomarkers that signal changes in brain chemistry due to neurodegenerative disease. With the advent of omics-based approaches (an emerging field that encompasses genomics, epigenomics, transcriptomics, proteomics, and metabolomics), coupled with new ultrasensitive analytical methods, researchers are beginning to identify informative brain biomarkers. Such biomarkers portend our ability to detect earlier stages of disease when therapeutic intervention could be effective at halting progression.

In addition, these biomarkers should enable drug developers to monitor the efficacy of candidate drugs in the blood of participants enrolled in clinical trials aimed at slowing neurodegeneration. These biomarkers begin to move us away from relying on cognitive performance indicators and imaging—methods that do not directly measure the underlying biology of neurodegenerative disease. The identity of these biomarkers may also provide researchers with clues about the causes of neurodegenerative disease, which can serve as new targets for drug intervention.

Often called the window to the soul, the eyes are more sacred than other body parts, at least for some.

Awash in a fluid finely calibrated to keep it alive, a human eye rests inside a transparent cubic device. This ECaBox, or Eyes in a Care Box, is a one-of-a-kind system built by scientists at Barcelona’s Centre for Genomic Regulation (CRG). Their goal is to preserve human eyes for transplantation and related research.

In recent years, scientists have learned to transplant delicate organs such as the liver, lungs or pancreas, but eyes are another story. Even when preserved at the typical transplant temperature of 4 degrees Celsius, they last 48 hours at most. That's one reason why transplanting the whole eye isn’t possible: only the cornea, the dome-shaped outer layer of the eye, can withstand the procedure. The retina, the layer at the back of the eyeball that turns light into electrical signals, which the brain converts into images, is extremely difficult to transplant because it's packed with nerve tissue and blood vessels.

These challenges also make it tough to research transplantation. “This greatly limits their use for experiments, particularly when it comes to the effectiveness of new drugs and treatments,” said Maria Pia Cosma, a biologist at Barcelona’s Centre for Genomic Regulation (CRG), whose team is working on the ECaBox.

Eye transplants are desperately needed, but they're nowhere in sight. About 12.7 million people worldwide need a corneal transplant, and only one in 70 of those who require one receives it. The gaps are international. Eye banks in the United Kingdom are around 20 percent below the level needed to supply hospitals, while Indian eye banks, which need at least 250,000 corneas per year, collect only around 45,000 to 50,000 donor corneas (of which 60 to 70 percent are successfully transplanted).
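The Indian figures are easier to grasp when worked through. The following is a minimal sketch that uses only the numbers quoted in the paragraph above and assumes they all refer to the same one-year period; the variable and function names are illustrative.

```python
# Figures quoted in the text for Indian eye banks (assumed to be per year).
NEEDED = 250_000                  # corneas needed, at minimum
COLLECTED = (45_000, 50_000)      # low and high estimates of corneas collected
TRANSPLANT_PCT = (60, 70)         # percent of collected corneas successfully transplanted

def transplanted_range() -> tuple[int, int]:
    """Return the low and high estimates of corneas actually transplanted."""
    low = COLLECTED[0] * TRANSPLANT_PCT[0] // 100
    high = COLLECTED[1] * TRANSPLANT_PCT[1] // 100
    return low, high

low, high = transplanted_range()
share_low = COLLECTED[0] * 100 / NEEDED    # percent of the need that is collected
share_high = COLLECTED[1] * 100 / NEEDED

print(f"transplanted: {low} to {high}")
print(f"collected share of need: {share_low:.0f}% to {share_high:.0f}%")
```

On these numbers, collection covers only about a fifth of the stated need, and between 27,000 and 35,000 corneas end up transplanted.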

As for retinas, it is currently impossible to transplant one into another person's eye. Artificial devices can be implanted to restore the sight of patients suffering from severe retinal diseases, but fewer than 600 people around the world have such “bionic eyes,” while in America alone 11 million people have some type of retinal disease leading to severe vision loss. Add to this an increasingly aging population, commonly facing various vision impairments, and you have a recipe for heavy burdens on individuals, the economy and society. In the U.S. alone, the total annual economic impact of vision problems was $51.4 billion in 2017.

Even if you take the petri dish route and grow tissues into organoids that mimic the function of the human eye, you will not get the physiological complexity of the structure and metabolism of the real thing, according to Cosma. She is a member of a scientific consortium that includes researchers from major institutions in Spain, the U.K., Portugal, Italy and Israel. The consortium has received about $3.8 million from the European Union to pursue innovative eye research. Her team’s goal is to give hope to at least 2.2 billion people across the world afflicted with a vision impairment and 33 million who go through life with avoidable blindness.

Their method? Resuscitating cadaveric eyes for at least a month.


“We proposed to resuscitate eyes, that is to restore the global physiology and function of human explanted tissues,” Cosma said, referring to living tissues extracted from the eye and placed in a medium for culture. Their ECaBox is an ex vivo biological system, in which eyes taken from dead donors are placed in an artificial environment, designed to preserve the eye’s temperature and pH levels, deter blood clots, and remove the metabolic waste and toxins that would otherwise spell their demise.

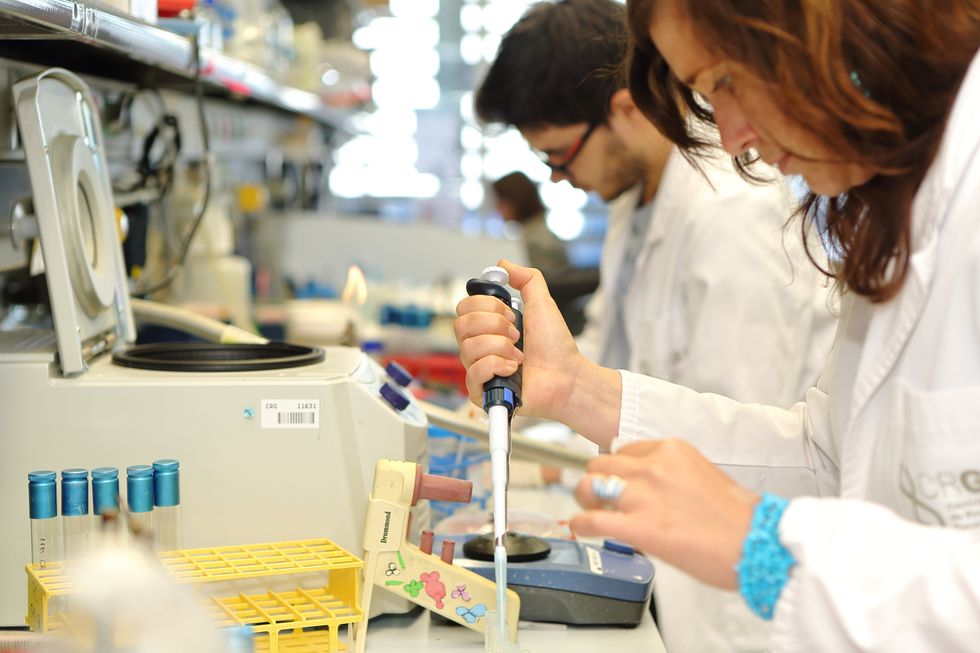

Scientists work on resuscitating eyes in the lab of Maria Pia Cosma.

Courtesy of Maria Pia Cosma.

“One of the great challenges is the passage of the blood in the capillary branches of the eye, what we call long-term perfusion,” Cosma said. Capillaries are an intricate network of very thin blood vessels that transport blood, nutrients and oxygen to cells in the body’s organs and systems. To maintain the garland-shaped structure of this network, sufficient amounts of oxygen and nutrients must be provided through the eye circulation and microcirculation. “Our ambition is to combine perfusion of the vessels with artificial blood,” along with using a synthetic form of vitreous, the gel-like fluid that lets in light and supports the eye's round shape, Cosma said.

The scientists use this novel setup with the eye submersed in its medium to keep the organ viable, so they can test retinal function. “If we succeed, we will ensure full functionality of a human organ ex vivo. It will be the first intact human model of the eye capable of exploring and analyzing regenerative processes ex vivo,” Cosma added.

A rapidly developing field of regenerative medicine aims to stimulate the body's natural healing processes and restore or replace damaged tissues and organs. But for people with retinal diseases, regenerative medicine progress has been painfully slow. “Experiments on rodents show progress, but the risks for humans are unacceptable,” Cosma said.

The ECaBox could speed things up by letting researchers work on human eyes without putting patients at risk. “We will test emerging treatments while reducing animal research, and greatly accelerate the discovery and preclinical research phase of new possible treatments for vision loss at significantly reduced costs,” Cosma explained. Much less time and money would be wasted during the drug discovery process. Their work may even make it possible to transplant the entire eyeball for those who need it.

“It is a very exciting project,” said Sanjay Sharma, a professor of ophthalmology and epidemiology at Queen's University, in Kingston, Canada. “The ability to explore and monitor regenerative interventions will increasingly be of importance as we develop therapies that can regenerate ocular tissues, including the retina.”


But is the world ready for eye transplants? “People are a bit weird or very emotional about donating their eyes as compared to other organs,” Cosma said. And much can be said about the problem of eye donor shortage. Concerns include disfigurement and healthcare professionals’ fear that the conversation about eye donation will upset the departed person’s relatives because of cultural or religious considerations. As just one example, Sharma noted the paucity of eye donations in his home country, Canada.

Yet, experts like Sharma stress the importance of these donations for both the recipients and their family members. “It allows them some psychological benefit in a very difficult time,” he said. So why are global eye banks suffering? Is it because the eyes are the windows to the soul?

Seemingly, there's no sacred religious text or a holy book prohibiting the practice of eye donation. In fact, most major religions of the world permit and support organ transplantation and donation, and by extension eye donation, because they unequivocally see it as an “act of neighborly love and charity.” In Hinduism, the concept of eye donation aligns with the Hindu principle of daan or selfless giving, where individuals donate their organs or body after death to benefit others and contribute to society. In Islam, eye donation is a form of sadaqah jariyah, a perpetual charity, as it can continue to benefit others even after the donor's death.

Meanwhile, Buddhist masters teach that donating an organ gives another person the chance to live longer and practice dharma, the universal law and order, more meaningfully; they also dismiss misunderstandings of the type “if you donate an eye, you’ll be born without an eye in the next birth.” And Christian teachings emphasize the values of love, compassion, and selflessness, all compatible with organ donation, eye donation included; besides, those who will have a house in heaven will get a whole new body without imperfections and limitations.

The explanation for people’s resistance may lie in what Deepak Sarma, a professor of Indian religions and philosophy at Case Western Reserve University in Cleveland, calls “street interpretation” of religious or spiritual dogmas. Consider the mechanism of karma, which is about the causal relation between previous and current actions. “Maybe some Hindus believe there is karma in the eyes and, if the eye gets transplanted into another person, they will have to have that karmic card from now on,” Sarma said. “Even if there is peculiar karma due to an untimely death, which might be interpreted by some as bad karma, then you have the karma of the recipient, which is tremendously good karma, because they have access to these body parts, a tremendous gift,” he continued. The overall accumulation is that of good karma: “It’s a beautiful kind of balance,” he added.


With that said, Sarma believes it is a fallacy to personify or anthropomorphize the eye, which doesn’t have a soul, and stresses that the karma attaches itself to the soul and not the body parts. But for scholars like Omar Sultan Haque—a psychiatrist and social scientist at Harvard Medical School, investigating questions across global health, anthropology, social psychology, and bioethics—the hierarchy of sacredness of body parts is entrenched in human psychology. You cannot equate the pinky toe with the face, he explained.

“The eyes are the window to the soul,” Haque said. “People have a hierarchy of body parts that are considered more sacred or essential to the self or soul, such as the eyes, face, and brain.” In his view, the techno-utopian transhumanist communities (especially those in Silicon Valley) have reduced the totality of a person to a mere material object, a “wet robot” that knows no sacredness or hierarchy of human body parts. “But for the Jews, Christians, and Muslims who believe in the physical resurrection of the body that will be made new in an afterlife, the [already existing] body is sacred since it will be the basis of a new refashioned body in an afterlife,” Haque said. “You cannot treat the body like any old material artifact, or old chair or ragged cloth, just because materialistic, secular ideologies want so,” he continued.

For Cosma and her peers, however, the very definition of what is alive or not is a bit semantic. “As soon as we die, the electrophysiological activity in the eye stops,” she said. “The goal of the project is to restore this activity as soon as possible before the highly complex tissue of the eye starts degrading.” Cosma’s group doesn’t yet know when they will be able to keep the eyes alive and well in the ECaBox, but the consensus is that the sooner the better. Hopefully, the taboos and fears around eye donation will dissipate around the same time.

As Our AI Systems Get Better, So Must We

In order to build the future we want, we must also become ever better humans, explains the futurist Jamie Metzl in this opinion essay.

As the power and capability of our AI systems increase by the day, the essential question we now face is what constitutes peak human. If we stay where we are while the AI systems we are unleashing continually get better, they will meet and then exceed our capabilities in an ever-growing number of domains. But while some technology visionaries like Elon Musk call for us to slow down the development of AI systems to buy time, this approach alone will simply not work in our hyper-competitive world, particularly when the potential benefits of AI are so great and our frameworks for global governance are so weak. In order to build the future we want, we must also become ever better humans.

The list of activities we once saw as uniquely human where AIs have now surpassed us is long and growing. First, AI systems could beat our best chess players, then our best Go players, then our best champions of multi-player poker. They can see patterns far better than we can, generate medical and other hypotheses most human specialists miss, predict and map out new cellular structures, and even generate beautiful, and, yes, creative, art.

A recent paper by Microsoft researchers analyzing the significant leap in capabilities in OpenAI’s latest AI model, GPT-4, asserted that the model can “solve novel and difficult tasks that span mathematics, coding, vision, medicine, law, psychology and more, without needing any special prompting.” Calling this functionality “strikingly close to human-level performance,” the authors conclude it “could reasonably be viewed as an early (yet still incomplete) version of an artificial general intelligence (AGI) system.”

The concept of AGI has been around for decades. In its common use, the term suggests a time when individual machines can do many different things at a human level, not just one thing like playing Go or analyzing radiological images. Debating when AGI might arrive, a favorite pastime of computer scientists for years, now has become outdated.

We already have AI algorithms and chatbots that can do lots of different things. Based on the generalist definition, in other words, AGI is essentially already here.

Unfettered by the evolved capacity and storage constraints of our brains, AI algorithms can access nearly all of the digitized cultural inheritance of humanity since the dawn of recorded history and have increasing access to growing pools of digitized biological data from across the spectrum of life.


With these ever-larger datasets, rapidly increasing computing and memory power, and new and better algorithms, our AI systems will keep getting better faster than most of us can today imagine. These capabilities have the potential to help us radically improve our healthcare, agriculture, and manufacturing, make our economies more productive and our development more sustainable, and do many important things better.

Soon, they will learn how to write their own code. Like human children, in other words, AI systems will grow up. But even that doesn’t mean our human goose is cooked.

Just like dolphins and dogs, these alternate forms of intelligence will be uniquely theirs, not a lesser or greater version of ours. There are lots of things AI systems can't do and will never be able to do because our AI algorithms, for better and for worse, will never be human. Our embodied human intelligence is its own thing.

Our human intelligence is uniquely ours based on the capacities we have developed in our 3.8-billion-year journey from single cell organisms to us. Our brains and bodies represent continuous adaptations on earlier models, which is why our skeletal systems look like those of lizards and our brains like those of most other mammals with some extra cerebral cortex mixed in. Human intelligence isn’t just some type of disembodied function but the inextricable manifestation of our evolved physical reality. It includes our sensory analytical skills and all of our animal instincts, intuitions, drives, and perceptions. Disembodied machine intelligence is something different from what we have evolved and possess.

Because of this, some linguists including Noam Chomsky have recently argued that AI systems will never be intelligent as long as they are just manipulating symbols and mathematical tokens without any inherent understanding. Nothing could be further from the truth. Anyone interacting with even first-generation AI chatbots quickly realizes that while these systems are far from perfect or omniscient and can sometimes be stupendously oblivious, they are surprisingly smart and versatile and will get more so… forever. We have little idea even how our own minds work, so judging AI systems based on their output is relatively close to how we evaluate ourselves.

Anyone not awed by the potential of these AI systems is missing the point. AI’s newfound capacities demand that we work urgently to establish norms, standards, and regulations at all levels from local to global to manage the very real risks. Pausing our development of AI systems now doesn’t make sense, however, even if it were possible, because we have no sufficient ways of uniformly enacting such a pause, no plan for how we would use the time, and no common framework for addressing global collective challenges like this.

But if all we feel is a passive awe for these new capabilities, we will also be missing the point.

Human evolution, biology, and cultural history are not just some kind of accidental legacy, disability, or parlor trick, but our inherent superpower. Our ancestors outcompeted rivals for billions of years to make us so well suited to the world we inhabit and helped build. Our social organization at scale has made it possible for us to forge civilizations of immense complexity, engineer biology and novel intelligence, and extend our reach to the stars. Our messy, embodied, intuitive, social human intelligence is roughly mimicable by AI systems but, by definition, never fully replicable by them.

Once we recognize that both AI systems and humans have unique superpowers, the essential question becomes what each of us can do better than the other and what humans and AIs can best do in active collaboration. We still don't know. The future of our species will depend upon our ability to safely, dynamically, and continually figure that out.

As we do, we'll learn that many of our ideas and actions are made up of parts, some of which will prove essentially human and some of which can be better achieved by AI systems. Those in every walk of work and life who most successfully identify the optimal contributions of humans, AIs, and the two together, and who build systems and workflows empowering humans to do human things, machines to do machine things, and humans and machines to work together in ways maximizing the respective strengths of each, will be the champions of the 21st century across all fields.

The dawn of the age of machine intelligence is upon us. It’s a quantum leap equivalent to the domestication of plants and animals, industrialization, electrification, and computing. Each of these revolutions forced us to rethink what it means to be human, how we live, and how we organize ourselves. The AI revolution will happen more suddenly than these earlier transformations but will follow the same general trajectory. Now is the time to aggressively prepare for what is fast heading our way, including by active public engagement, governance, and regulation.

AI systems will not replace us, but, like these earlier technology-driven revolutions, they will force us to become different humans as we co-evolve with our technology. We will never reach peak human in our ongoing evolutionary journey, but we’ve got to manage this transition wisely to build the type of future we’d like to inhabit.

Alongside our ascending AIs, we humans still have a lot of climbing to do.