Clever Firm Predicts Patients Most at Risk, Then Tries to Intervene Before They Get Sicker

Health firm Populytics tracks and analyzes patient data, and makes care suggestions based on that data.

The diabetic patient hit the danger zone.

Ideally, blood sugar, measured by an A1C test, rests at 5.9 or less. A 7 is elevated, according to the Diabetes Council. Over 10, and you're into the extreme danger zone, at risk of every diabetic crisis from kidney failure to blindness.

This patient's A1C was 10. Let's call her Jen for the sake of this story. (Although the facts of her case are real, the patient's actual name wasn't released due to privacy laws.)

Jen happens to live in Pennsylvania's Lehigh Valley, home of the nonprofit Lehigh Valley Health Network, which has eight hospital campuses and various clinics and other services. This network has invested more than $1 billion in IT infrastructure and founded Populytics, a spin-off firm that tracks and analyzes patient data, and makes care suggestions based on that data.

When Jen left the doctor's office, the Populytics data machine started churning, comparing her data against a wealth of information about likely future hospital visits if she did not comply with recommendations, as well as the potential positive impact of outreach and early intervention.

About a month after Jen received the dangerous blood test results, a community outreach specialist with psychological training called her. Jen was on a Populytics-generated list of patients to contact for follow-up.

"It's a very gentle conversation," says Cathryn Kelly, who manages a care coordination team at Populytics. "The case manager provides them understanding and support and coaching." The goal, in this case, was small behavioral changes that would actually stick, like dietary ones.

In three months of working with a case manager, Jen's blood sugar had dropped to 7.2, a much safer range. The odds of her cycling back to the hospital ER or veering into kidney failure, or worse, had dropped significantly.

While the health network is extremely localized to one area of one state, using data to inform precise medical decision-making appears to be the wave of the future, says Ann Mongovern, the associate director of Health Care Ethics at the Markkula Center for Applied Ethics at Santa Clara University in California.

"Many hospitals and hospital systems don't yet try to do this at all, which is striking given where we're at in terms of our general technical ability in this society," Mongovern says.

How It Happened

While many hospitals make money by filling beds, the Lehigh Valley Health Network, as a nonprofit, accepts many patients on Medicaid and other government insurance that doesn't cover some of the costs of a hospitalization. The area's population is both poorer and older than national averages, according to U.S. Census data, meaning more people with greater medical needs who may not have the support to care for themselves. They end up in the ER, or worse, again and again.

In the early 2000s, LVHN CEO Dr. Brian Nester started wondering if his health network could develop a way to predict who is most likely to land themselves a pricey ICU stay -- and offer support before those people end up needing serious care.

"There was an early understanding, even if you go back to the (federal) balanced budget act of 1997, that we were just kicking the can down the road to having a functional financial model to deliver healthcare to everyone with a reasonable price," Nester says. "We've got a lot of people living longer without more of an investment in the healthcare trust."

Populytics, founded in 2013, was the result of years of planning and agonizing over those population numbers and cost concerns.

"We looked at our own health plan," Nester says. Out of all the employees and dependants on the LVHN's own insurance network, "roughly 1.5 percent of our 25,000 people — under 400 people — drove $30 million of our $130 million on insurance costs -- about 25 percent."

"You don't have to boil the ocean to take cost out of the system," he says. "You just have to focus on that 1.5%."

Take Jen, the diabetic patient. High blood sugar can lead to kidney failure, which can mean expensive weekly dialysis for 20 years. Investing in the data and staff to reach patients, Nester says, is "pennies compared to $100 bills."
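The health plan figures Nester cites can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function and variable names are assumptions, and the inputs are simply the rounded numbers from his quote (25,000 members, 1.5 percent, $30 million of $130 million). On those inputs the high-cost group is 375 people and accounts for about 23 percent of spending, in line with the "about 25 percent" he describes.

```python
def cost_concentration(members, high_cost_share, high_cost_spend, total_spend):
    """Summarize how spending concentrates in a small high-cost group.

    All inputs are the rounded figures quoted in the article; the function
    itself is a hypothetical helper, not anything Populytics has described.
    """
    high_cost_members = round(members * high_cost_share)
    rest_members = members - high_cost_members
    return {
        "high_cost_members": high_cost_members,
        "spend_share": high_cost_spend / total_spend,
        "per_member_high": high_cost_spend / high_cost_members,
        "per_member_rest": (total_spend - high_cost_spend) / rest_members,
    }


summary = cost_concentration(
    members=25_000,
    high_cost_share=0.015,
    high_cost_spend=30e6,
    total_spend=130e6,
)

print(f"High-cost members: {summary['high_cost_members']}")
print(f"Share of total spend: {summary['spend_share']:.1%}")
print(f"Average cost, high-cost member: ${summary['per_member_high']:,.0f}")
print(f"Average cost, everyone else: ${summary['per_member_rest']:,.0f}")
```

On these rounded inputs, the average high-cost member costs roughly twenty times as much as everyone else, which is the gap that makes targeted outreach look inexpensive by comparison.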

For most doctors, "there's no awareness for providers to know who they should be seeing vs. who they are seeing. There's no incentive, because the incentive is to see as many patients as you can," he says.

To change that, first the LVHN invested in the popular medical management system, Epic. Then, they negotiated with the top 18 insurance companies that cover patients in the region to allow access to their patient care data, which means they have reams of patient history to feed the analytics machine in order to make predictions about outcomes. Nester admits not every hospital could do that -- with 52 percent of the market share, LVHN had a very strong negotiating position.

Third-party services take that data and churn out analytics that feed models and care management plans. All identifying information is stripped from the data.

"We can do predictive modeling in patients," says Populytics President and CEO Gregory Kile. "We can identify care gaps. Those care gaps are noted as alerts when the patient presents at the office."

Kile uses himself as a hypothetical patient.

"I pull up Gregory Kile, and boom, I see a flag or an alert. I see he hasn't been in for his last blood test. There is a care gap there we need to complete."

"There's just so much more you can do with that information," he says, envisioning a future where follow-up for, say, knee replacement surgery and outcomes could be tracked, and either validated or changed.

Ethical Issues at the Forefront

Of course, embracing data use in such specific ways also brings up issues of security and patient safety. For example, says medical ethicist Mongovern, there are many touchpoints where breaches could occur. The public has a growing awareness of how data used to personalize their experiences, such as social media analytics, can also be monetized and sold in ways that benefit a company, but not the user. That's not to say data supporting medical decisions is a bad thing, she says, just one with potential for public distrust if not handled thoughtfully.

"You're going to need to do this to stay competitive," she says. "But there's obviously big challenges, not the least of which is patient trust."

Among the ways LVHN uses the data are monthly reports it calls registries, which list patients who have just come in contact with the health network, either through the hospital or through a doctor who works with it. Community outreach team members at Populytics take the names from the list, pull the patients' records, and start calling. So far, a majority of the patients targeted, 62 percent, appear to embrace the effort.

Says Nester: "Most of these are vulnerable people who are thrilled to have someone care about them. So they engage, and when a person engages in their care, they take their insulin shots. It's not rocket science. The rocket science is in identifying who the people are — the delivery of care is easy."

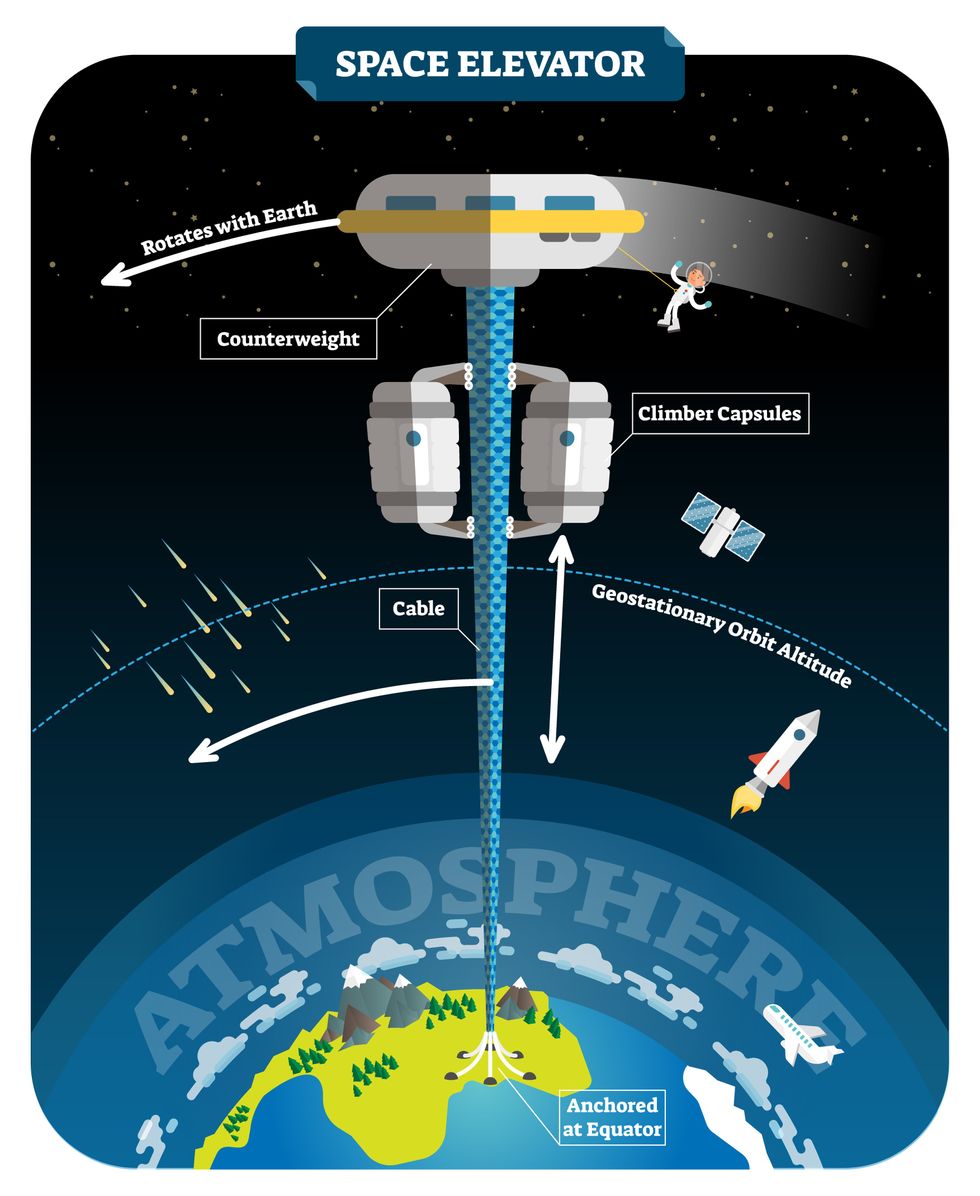

New elevators could lift up our access to space

A space elevator would be cheaper and cleaner than using rockets

Story by Big Think

When people first started exploring space in the 1960s, it cost upwards of $80,000 (adjusted for inflation) to put a single pound of payload into low-Earth orbit.

A major reason for this high cost was the need to build a new, expensive rocket for every launch. That really started to change when SpaceX began making cheap, reusable rockets, and today, the company is ferrying customer payloads to LEO at a price of just $1,300 per pound.

This is making space accessible to scientists, startups, and tourists who never could have afforded it previously, but the cheapest way to reach orbit might not be a rocket at all — it could be an elevator.

The space elevator

The seeds for a space elevator were first planted by Russian scientist Konstantin Tsiolkovsky in 1895, who, after visiting the 1,000-foot (305 m) Eiffel Tower, published a paper theorizing about the construction of a structure 22,000 miles (35,400 km) high.

This would provide access to geostationary orbit, an altitude where objects appear to remain fixed above Earth’s surface, but Tsiolkovsky conceded that no material could support the weight of such a tower.
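The altitude of geostationary orbit follows from requiring that one orbit take exactly one rotation of Earth. A minimal sketch of that calculation is below, assuming a circular orbit and using standard published constants for Earth's gravitational parameter, sidereal day, and equatorial radius; the function name is illustrative. It gives roughly 35,786 km (about 22,236 miles), which the article rounds to 22,000 miles.

```python
import math

GM_EARTH = 3.986004418e14       # m^3/s^2, Earth's standard gravitational parameter
SIDEREAL_DAY_S = 86_164.0905    # s, one rotation of Earth relative to the stars
EARTH_EQ_RADIUS_M = 6_378_137   # m, equatorial radius (WGS 84)
KM_PER_MILE = 1.609344


def geostationary_altitude_km():
    """Altitude above the equator where the orbital period equals one sidereal day.

    Uses Kepler's third law for a circular orbit: T^2 = 4 * pi^2 * r^3 / GM,
    solved for the orbital radius r, then subtracts Earth's equatorial radius.
    """
    orbital_radius_m = (GM_EARTH * SIDEREAL_DAY_S**2 / (4 * math.pi**2)) ** (1 / 3)
    return (orbital_radius_m - EARTH_EQ_RADIUS_M) / 1000


altitude_km = geostationary_altitude_km()
print(f"Geostationary altitude: {altitude_km:,.0f} km "
      f"({altitude_km / KM_PER_MILE:,.0f} miles)")
```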

In 1959, soon after Sputnik, Russian engineer Yuri N. Artsutanov proposed a way around this issue: instead of building a space elevator from the ground up, start at the top. More specifically, he suggested placing a satellite in geostationary orbit and dropping a tether from it down to Earth’s equator. As the tether descended, the satellite would ascend. Once attached to Earth’s surface, the tether would be kept taut, thanks to a combination of gravitational and centrifugal forces.

We could then send electrically powered “climber” vehicles up and down the tether to deliver payloads to any Earth orbit. According to physicist Bradley Edwards, who researched the concept for NASA about 20 years ago, it’d cost $10 billion and take 15 years to build a space elevator, but once operational, the cost of sending a payload to any Earth orbit could be as low as $100 per pound.

“Once you reduce the cost to almost a Fed-Ex kind of level, it opens the doors to lots of people, lots of countries, and lots of companies to get involved in space,” Edwards told Space.com in 2005.

In addition to the economic advantages, a space elevator would also be cleaner than using rockets — there’d be no burning of fuel, no harmful greenhouse emissions — and the new transport system wouldn’t contribute to the problem of space junk to the same degree that expendable rockets do.

So, why don’t we have one yet?

Tether troubles

Edwards wrote in his report for NASA that all of the technology needed to build a space elevator already existed except the material needed to build the tether, which needs to be light but also strong enough to withstand all the huge forces acting upon it.

The good news, according to the report, was that the perfect material — ultra-strong, ultra-tiny “nanotubes” of carbon — would be available in just two years.

“[S]teel is not strong enough, neither is Kevlar, carbon fiber, spider silk, or any other material other than carbon nanotubes,” wrote Edwards. “Fortunately for us, carbon nanotube research is extremely hot right now, and it is progressing quickly to commercial production.”

Unfortunately, he misjudged how hard it would be to synthesize carbon nanotubes: to date, no one has been able to grow one longer than 21 inches (53 cm).

Further research into the material revealed that it tends to fray under extreme stress, too, meaning even if we could manufacture carbon nanotubes at the lengths needed, they’d be at risk of snapping, not only destroying the space elevator, but threatening lives on Earth.

Looking ahead

Carbon nanotubes might have been the early frontrunner as the tether material for space elevators, but there are other options, including graphene, an essentially two-dimensional form of carbon that is already easier to scale up than nanotubes (though still not easy).

Contrary to Edwards’ report, Johns Hopkins University researchers Sean Sun and Dan Popescu say Kevlar fibers could work — we would just need to constantly repair the tether, the same way the human body constantly repairs its tendons.

“Using sensors and artificially intelligent software, it would be possible to model the whole tether mathematically so as to predict when, where, and how the fibers would break,” the researchers wrote in Aeon in 2018.

“When they did, speedy robotic climbers patrolling up and down the tether would replace them, adjusting the rate of maintenance and repair as needed, mimicking the sensitivity of biological processes,” they continued.

Astronomers from the University of Cambridge and Columbia University also think Kevlar could work for a space elevator, if we built it from the moon rather than Earth.

They call their concept the Spaceline, and the idea is that a tether attached to the moon’s surface could extend toward Earth’s geostationary orbit, held taut by the pull of our planet’s gravity. We could then use rockets to deliver payloads — and potentially people — to solar-powered climber robots positioned at the end of this 200,000+ mile long tether. The bots could then travel up the line to the moon’s surface.

This wouldn’t eliminate the need for rockets to get into Earth’s orbit, but it would be a cheaper way to get to the moon. The forces acting on a lunar space elevator wouldn’t be as strong as those on one extending from Earth’s surface, either, according to the researchers, opening up more options for tether materials.

“[T]he necessary strength of the material is much lower than an Earth-based elevator — and thus it could be built from fibers that are already mass-produced … and relatively affordable,” they wrote in a paper shared on the preprint server arXiv.

Electrically powered climber capsules could go up and down the tether to deliver payloads to any Earth orbit.

Adobe Stock

Some Chinese researchers, meanwhile, aren’t giving up on the idea of using carbon nanotubes for a space elevator — in 2018, a team from Tsinghua University revealed that they’d developed nanotubes that they say are strong enough for a tether.

The researchers are still working on the issue of scaling up production, but in 2021, state-owned news outlet Xinhua released a video depicting an in-development concept, called “Sky Ladder,” that would consist of space elevators above Earth and the moon.

After riding up the Earth-based space elevator, a capsule would fly to a space station attached to the tether of the moon-based one. If the project could be pulled off — a huge if — China predicts Sky Ladder could cut the cost of sending people and goods to the moon by 96 percent.

The bottom line

In the 120 years since Tsiolkovsky looked at the Eiffel Tower and thought way bigger, tremendous progress has been made developing materials with the properties needed for a space elevator. At this point, it seems likely we could one day have a material that can be manufactured at the scale needed for a tether — but by the time that happens, the need for a space elevator may have evaporated.

Several aerospace companies are making progress with their own reusable rockets, and as those join the market with SpaceX, competition could cause launch prices to fall further.

California startup SpinLaunch, meanwhile, is developing a massive centrifuge to fling payloads into space, where much smaller rockets can propel them into orbit. If the company succeeds (another one of those big ifs), it says the system would slash the amount of fuel needed to reach orbit by 70 percent.

Even if SpinLaunch doesn’t get off the ground, several groups are developing environmentally friendly rocket fuels that produce far fewer (or no) harmful emissions. More work is needed to efficiently scale up their production, but overcoming that hurdle will likely be far easier than building a 22,000-mile (35,400-km) elevator to space.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

New tech aims to make the ocean healthier for marine life

An overabundance of dissolved carbon dioxide poses a threat to marine life. A new system detects elevated levels of the greenhouse gas and mitigates them on the spot.

A defunct drydock basin arched by a rusting 19th-century steel bridge seems an incongruous place to conduct state-of-the-art climate science. But this placid and protected sliver of water connecting Brooklyn’s Navy Yard to the East River was just right for Garrett Boudinot to float a small dock topped with water carbon-sensing gear. And while his system right now looks like a trio of plastic boxes wired up together, it aims to mitigate the growing ocean acidification problem, caused by an overabundance of dissolved carbon dioxide.

Boudinot, a biogeochemist and founder of a carbon-management startup called Vycarb, is honing his method for measuring CO2 levels in water, as well as (at least temporarily) correcting their negative effects. It’s a challenge that’s been occupying numerous climate scientists as the ocean heats up, and as states like New York recognize that reducing emissions won’t be enough to reach their climate goals; they’ll have to figure out how to remove carbon, too.

To date, though, methods for measuring CO2 in water at scale have been either intensely expensive, requiring fancy sensors that pump CO2 through membranes; or prohibitively complicated, involving a series of lab-based analyses. And that’s led to a bottleneck in efforts to remove carbon as well.

But recently, Boudinot cracked part of the code for measurement and mitigation, at least on a small scale. While the rest of the industry sorts out larger intricacies like getting ocean carbon markets up and running and driving carbon removal at billion-ton scale in centralized infrastructure, his decentralized method could have important, more immediate implications.

Specifically, for shellfish hatcheries, which grow seafood for human consumption and for coastal restoration projects. Some of these incubators for oysters and clams and scallops are already feeling the negative effects of excess carbon in water, and Vycarb’s tech could improve outcomes for the larval- and juvenile-stage mollusks they’re raising. “We’re learning from these folks about what their needs are, so that we’re developing our system as a solution that’s relevant,” Boudinot says.

Ocean waters naturally absorb CO2 gas from the atmosphere. When CO2 accumulates faster than nature can dissipate it, it reacts with H2O molecules, forming carbonic acid, H2CO3, which makes the water column more acidic. On the West Coast, acidification occurs when deep, carbon dioxide-rich waters upwell onto the coast. This can wreak havoc on developing shellfish, inhibiting their shells from growing and leading to mass die-offs; this happened, disastrously, at Pacific Northwest oyster hatcheries in 2007.

This type of acidification will eventually come for the East Coast, too, says Ryan Wallace, assistant professor and graduate director of environmental studies and sciences at Long Island’s Adelphi University, who studies acidification. But at the moment, East Coast acidification has other sources: agricultural runoff, usually in the form of nitrogen, and human and animal waste entering coastal areas. These excess nutrient loads cause algae to grow, which isn’t a problem in and of itself, Wallace says; but when algae die, they’re consumed by bacteria, whose respiration in turn bumps up CO2 levels in water.

“Unfortunately, this is occurring at the bottom [of the water column], where shellfish organisms live and grow,” Wallace says. Acidification on the East Coast is minutely localized, occurring closest to where nutrients are being released, as well as seasonally; at least one local shellfish farm, on Fishers Island in the Long Island Sound, has contended with its effects.

The second Vycarb pilot, ready to be installed at the East Hampton shellfish hatchery.

Courtesy of Vycarb

Besides CO2, ocean water contains two other forms of dissolved carbon, carbonate (CO3²⁻) and bicarbonate (HCO3⁻), at all times, at differing levels. At low pH (acidic), CO2 prevails; at medium pH, HCO3⁻ is the dominant form; at higher pH, CO3²⁻ dominates. Boudinot’s invention is the first real-time measurement for all three, he says. From the dock at the Navy Yard, his pilot system uses carefully calibrated but low-cost sensors to gauge the water’s pH and its corresponding levels of CO2. When it detects elevated levels of the greenhouse gas, the system mitigates it on the spot. It does this by adding a bicarbonate powder that’s a byproduct of agricultural limestone mining in nearby Pennsylvania. Because the bicarbonate powder is alkaline, it increases the water pH and reduces the acidity. “We drive a chemical reaction to increase the pH to convert greenhouse gas- and acid-causing CO2 into bicarbonate, which is HCO3,” Boudinot says. “And HCO3 is what shellfish and fish and lots of marine life prefers over CO2.”
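The way pH shifts the balance among the three forms of dissolved carbon can be sketched with the standard equilibrium formulas for a two-step acid. This is a textbook calculation, not a description of how Vycarb's sensors or software work. The function names are illustrative, and the two dissociation constants (pK1 = 5.9, pK2 = 9.0) are round, assumed values in the range typically cited for seawater; real values shift with temperature and salinity.

```python
def carbonate_fractions(ph, pk1=5.9, pk2=9.0):
    """Return the fraction of dissolved inorganic carbon in each form at a given pH.

    pk1 and pk2 are assumed, approximate seawater dissociation constants;
    they are illustrative defaults, not measured values from the article.
    """
    h = 10.0 ** (-ph)        # hydrogen ion concentration
    k1 = 10.0 ** (-pk1)      # CO2 + H2O <-> H+ + HCO3-
    k2 = 10.0 ** (-pk2)      # HCO3- <-> H+ + CO3^2-
    denom = h * h + k1 * h + k1 * k2
    return {
        "CO2": h * h / denom,
        "HCO3-": k1 * h / denom,
        "CO3^2-": k1 * k2 / denom,
    }


def dominant_species(ph, **constants):
    """Name the carbon form with the largest share at this pH."""
    fractions = carbonate_fractions(ph, **constants)
    return max(fractions, key=fractions.get)


for ph in (4.0, 8.1, 11.0):
    shares = ", ".join(
        f"{name} {share:.1%}" for name, share in carbonate_fractions(ph).items()
    )
    print(f"pH {ph}: {shares} -> dominant: {dominant_species(ph)}")
```

With these assumed constants, CO2 dominates at pH 4, bicarbonate at a typical surface-ocean pH near 8.1, and carbonate at pH 11, matching the low, medium, and high pH pattern described above.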

This de-acidifying “buffering” is something shellfish operations already do to water, usually by adding soda ash (Na2CO3), which is also alkaline. Some hatcheries add soda ash constantly, just in case; some wait till acidification causes significant problems. Generally, for an overly busy shellfish farmer to detect acidification takes time and effort. “We’re out there daily, taking a look at the pH and figuring out how much we need to dose it,” explains John “Barley” Dunne, director of the East Hampton Shellfish Hatchery on Long Island. “If this is an automatic system…that would be much less labor intensive, one less thing to monitor when we have so many other things we need to monitor.”

Across the Sound at the hatchery he runs, Dunne annually produces 30 million hard clams, 6 million oysters, and “if we’re lucky, some years we get a million bay scallops,” he says. These mollusks are destined for restoration projects around the town of East Hampton, where they’ll create habitat, filter water, and protect the coastline from sea level rise and storm surge. So far, Dunne’s hatchery has largely escaped the ill effects of acidification, although his bay scallops are having a finicky year and he’s checking to see if acidification might be part of the problem. But “I think it's important to have these solutions ready-at-hand for when the time comes,” he says. That’s why he’s hosting a second, 70-liter Vycarb pilot starting this summer on a dock adjacent to his East Hampton operation; it will amp up to a 50,000-liter system in a few months.

Boudinot hopes this new pilot will act as a proof of concept for hatcheries up and down the East Coast. The area from Maine to Nova Scotia is experiencing the worst of Atlantic acidification, due in part to increased Arctic meltwater combining with Gulf of St. Lawrence freshwater; that decreases saturation of calcium carbonate, making the water more acidic. Boudinot says his system should work to adjust low pH regardless of the cause or locale. The East Hampton system will eventually test and buffer-as-necessary the water that Dunne pumps from the Sound into 100-gallon land-based tanks where larvae grow for two weeks before being transferred to an in-Sound nursery to plump up.

Dunne says this could have positive effects — not only on his hatchery but on wild shellfish populations, too, reducing at least one stressor their larvae experience (others include increasing water temperatures and decreased oxygen levels). “If it can buffer water over a large area, absolutely this will [benefit] natural spawns,” he says.

No one believes the Vycarb model — even if it proves capable of functioning at much greater scale — is the sole solution to acidification in the ocean. Wallace says new water treatment plants in New York City, which reduce nitrogen released into coastal waters, are an important part of the equation. And “certainly, some green infrastructure would help,” says Boudinot, like restoring coastal and tidal wetlands to help filter nutrient runoff.

In the meantime, Boudinot continues to collect data in advance of amping up his own operations. Still unknown is the effect of releasing huge amounts of alkalinity into the ocean. Boudinot says a pH of 9 or higher can be too harsh for marine life, plus it can also trigger a release of CO2 from the water back into the atmosphere. For a third pilot, on Governors Island in New York Harbor, Vycarb will install yet another system from which Boudinot’s team will frequently sample to analyze some of those and other impacts. “Let's really make sure that we know what the results are,” he says. “Let's have data to show, because in this carbon world, things behave very differently out in the real world versus on paper.”