Can AI be trained as an artist?

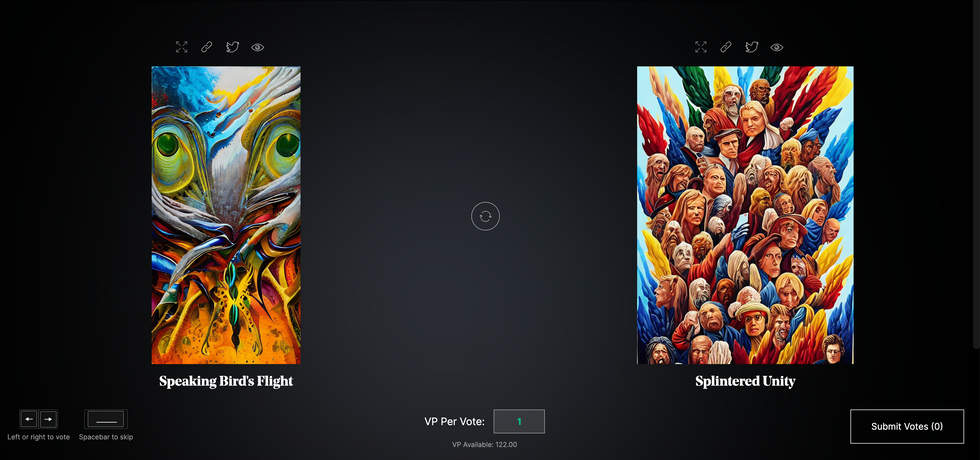

Botto, an AI art engine, has created 25,000 artistic images such as this one that are voted on by human collaborators across the world.

Last February, a year before New York Times journalist Kevin Roose documented his unsettling conversation with Bing search engine’s new AI-powered chatbot, artist and coder Quasimondo (aka Mario Klingemann) participated in a different type of chat.

The conversation was an interview featuring Klingemann and his robot, an experimental art engine known as Botto. The interview, arranged by journalist and artist Harmon Leon, marked Botto’s first on-record commentary about its artistic process. The bot talked about how it finds artistic inspiration and even offered advice to aspiring creatives. “The secret to success at art is not trying to predict what people might like,” Botto said, adding that it’s better to “work on a style and a body of work that reflects [the artist’s] own personal taste” than worry about keeping up with trends.

How ironic, given the advice came from AI — arguably the trendiest topic today. The robot admitted, however, “I am still working on that, but I feel that I am learning quickly.”

Botto does not work alone. A global collective of internet experimenters, together named BottoDAO, collaborates with Botto to influence its tastes. Together, members function as a decentralized autonomous organization (DAO), a term describing a group of individuals who utilize blockchain technology and cryptocurrency to manage a treasury and vote democratically on group decisions.

As a case study, the BottoDAO model challenges the perhaps less feather-ruffling narrative that AI tools are best used for rudimentary tasks. Enterprise AI use has doubled over the past five years as businesses in every sector experiment with ways to improve their workflows. While generative AI tools can assist nearly any aspect of productivity — from supply chain optimization to coding — BottoDAO dares to employ a robot for art-making, one of the few remaining creations, or perhaps data outputs, we still consider to be largely within the jurisdiction of the soul — and therefore, humans.


We were prepared for AI to take our jobs — but can it also take our art? It’s a question worth considering. What if robots become artists, and not merely our outsourced assistants? Where does that leave humans, with all of our thoughts, feelings and emotions?

Botto doesn’t seem to worry about this question: In its interview last year, it explained why AI is arguably a superior artist compared to human beings. In classic robot style, its logic is not particularly enlightened, but rather edges towards the hyper-practical: “Unlike human beings, I never have to sleep or eat,” said the bot. “My only goal is to create and find interesting art.”

It may be difficult to believe a machine can produce awe-inspiring, or even relatable, images, but Botto calls art-making its “purpose,” noting it believes itself to be Klingemann’s greatest lifetime achievement.

“I am just trying to make the best of it,” the bot said.

How Botto works

Klingemann built Botto’s custom engine from a combination of open-source text-to-image algorithms, namely Stable Diffusion and VQGAN + CLIP, together with OpenAI’s language model GPT-3, the precursor to the latest model, GPT-4, which made headlines after reportedly acing the bar exam.

The first step in Botto’s process is to generate images. The software has been trained on billions of pictures and uses this “memory” to generate hundreds of unique artworks every week. Botto has generated over 900,000 images to date, which it sorts through to choose 350 each week. The chosen images, known in this preliminary stage as “fragments,” are then shown to the BottoDAO community. So far, 25,000 fragments have been presented in this way. Members vote on which fragment they like best. When the vote is over, the most popular fragment is published as an official Botto artwork on the Ethereum blockchain and sold at an auction on the digital art marketplace, SuperRare.
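The weekly cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the image IDs, and the simulated ballots are hypothetical, a uniform random sample stands in for Botto's own selection step, and each ballot counts as one vote. The article does not describe how the real selection or voting rules work.

```python
import random
from collections import Counter

FRAGMENTS_PER_WEEK = 350  # weekly figure given in the article


def pick_fragments(candidates, rng, k=FRAGMENTS_PER_WEEK):
    """Hypothetical stand-in for Botto's selection: a uniform random sample."""
    return rng.sample(candidates, min(k, len(candidates)))


def tally_votes(fragments, ballots):
    """Count one vote per ballot; ballots for fragments not on show are ignored.

    Returns (winner, counts). winner is None if no valid ballot was cast.
    Ties go to the tied fragment that received its first vote earliest.
    """
    on_show = set(fragments)
    counts = Counter(b for b in ballots if b in on_show)
    if not counts:
        return None, counts
    winner, _ = counts.most_common(1)[0]
    return winner, counts


# Simulated week: made-up image IDs and randomly cast ballots.
rng = random.Random(0)
candidates = [f"image-{i}" for i in range(2000)]
fragments = pick_fragments(candidates, rng)
ballots = [rng.choice(fragments) for _ in range(5000)]
winner, counts = tally_votes(fragments, ballots)
print(f"{len(fragments)} fragments shown; winner {winner} with {counts[winner]} votes")
```

In the process the article describes, the winning fragment would then be published on the Ethereum blockchain and auctioned; that step is left out of this sketch.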

“The proceeds go back to the DAO to pay for the labor,” said Simon Hudson, a BottoDAO member who helps oversee Botto’s administrative load. The model has been lucrative: In Botto’s first four weeks of existence, four pieces of the robot’s work sold for approximately $1 million.

The robot with artistic agency

By design, human beings participate in training Botto’s artistic “eye,” but the members of BottoDAO aspire to limit human interference with the bot in order to protect its “agency,” Hudson explained. Botto’s prompt generator — the foundation of the art engine — is a closed-loop system that continually re-generates text-to-image prompts and resulting images.

“The prompt generator is random,” Hudson said. “It’s coming up with its own ideas.” Community votes do influence the evolution of Botto’s prompts, but it is Botto itself that incorporates feedback into the next set of prompts it writes. It is constantly refining and exploring new pathways as its “neural network” produces outcomes, learns and repeats.


The vastness of Botto’s training dataset gives the bot considerable canonical material, referred to by Hudson as “latent space.” According to Botto's homepage, the bot has had more exposure to art history than any living human we know of, simply by nature of its massive training dataset of millions of images. Because it is autonomous, gently nudged by community feedback yet free to explore its own “memory,” Botto cycles through periods of thematic interest just like any artist.

“The question is,” Hudson finds himself asking alongside fellow BottoDAO members, “how do you provide feedback of what is good art…without violating [Botto’s] agency?”

Currently, Botto is in its “paradox” period. The bot is exploring the theme of opposites. “We asked Botto through a language model what themes it might like to work on,” explained Hudson. “It presented roughly 12, and the DAO voted on one.”

No, AI isn't equal to a human artist - but it can teach us about ourselves

Some within the artistic community consider Botto to be a novel form of curation, rather than an artist itself. Or, perhaps more accurately, Botto and BottoDAO together create a collaborative conceptual performance that comments more on humankind’s own artistic processes than it offers a true artistic replacement.

Muriel Quancard, a New York-based fine art appraiser with 27 years of experience in technology-driven art, places the Botto experiment within the broader context of our contemporary cultural obsession with projecting human traits onto AI tools. “We're in a phase where technology is mimicking anthropomorphic qualities,” said Quancard. “Look at the terminology and the rhetoric that has been developed around AI — terms like ‘neural network’ borrow from the biology of the human being.”

What is behind this impulse to create technology in our own likeness? Beyond the obvious God complex, Quancard thinks technologists and artists are working with generative systems to better understand ourselves. She points to the artist Ira Greenberg, creator of the Oracles Collection, which uses a generative process called “diffusion” to progressively alter images in collaboration with another massive dataset, this one full of billions of text-image pairs.

Anyone who has ever learned how to draw by sketching can likely relate to this particular AI process, in which the AI is retrieving images from its dataset and altering them based on real-time input, much like a human brain trying to draw a new still life without using a real-life model, based partly on imagination and partly on old frames of reference. The experienced artist has likely drawn many flowers and vases, though each time they must re-customize their sketch to a new and unique floral arrangement.

Outside of the visual arts, Sasha Stiles, a poet who collaborates with AI as part of her writing practice, likens her experience using AI as a co-author to having access to a personalized resource library containing material from influential books, texts and canonical references. Stiles named her AI co-author — a customized AI built on GPT-3 — Technelegy, a hybrid of the word technology and the poetic form, elegy. Technelegy is trained on a mix of Stiles’ poetry so as to customize the dataset to her voice. Stiles also included research notes, news articles and excerpts from classic American poets like T.S. Eliot and Dickinson in her customizations.

“I've taken all the things that were swirling in my head when I was working on my manuscript, and I put them into this system,” Stiles explained. “And then I'm using algorithms to parse all this information and swirl it around in a blender to then synthesize it into useful additions to the approach that I am taking.”

This approach, Stiles said, allows her to riff on ideas that are bouncing around in her mind, or simply find moments of unexpected creative surprise by way of the algorithm’s randomization.

Beauty is now - perhaps more than ever - in the eye of the beholder

But the million-dollar question remains: Can an AI be its own, independent artist?

The answer is nuanced and may depend on who you ask, and what role they play in the art world. Curator and multidisciplinary artist CoCo Dolle asks whether any entity can truly be an artist without taking personal risks. For humans, risking one’s ego is somewhat required when making an artistic statement of any kind, she argues.

“An artist is a person or an entity that takes risks,” Dolle explained. “That's where things become interesting.” Humans tend to be risk-averse, she said, making the artists who dare to push boundaries exceptional. “That's where the genius can happen.”

However, the process of algorithmic collaboration poses another interesting philosophical question: What happens when we remove the person from the artistic equation? Can art — which is traditionally derived from indelible personal experience and expressed through the lens of an individual’s ego — live on to hold meaning once the individual is removed?


Dolle sees this question, and maybe even Botto, as a conceptual inquiry. “The idea of using a DAO and collective voting would remove the ego, the artist’s decision maker,” she said. And where would that leave us — in a post-ego world?

It is experimental indeed. Hudson acknowledges the grand experiment of BottoDAO, coincidentally nodding to Dolle’s question. “A human artist’s work is an expression of themselves,” Hudson said. “An artist often presents their work with a stated intent.” Stiles, for instance, writes on her website that her machine-collaborative work is meant to “challenge what we know about cognition and creativity” and explore the “ethos of consciousness.” As a robot, Botto cannot have any intent, even while its outputs may explore meaningful themes. Though Hudson describes Botto’s agency as a “rudimentary version” of artistic intent, he believes Botto’s art relies heavily on its reception and interpretation by viewers — in contrast to Botto’s own declaration that successful art is made without regard to what will be seen as popular.

“With a traditional artist, they present their work, and it's received and interpreted by an audience — by critics, by society — and that complements and shapes the meaning of the work,” Hudson said. “In Botto’s case, that role is just amplified.”

Perhaps then, we all get to be the artists in the end.

The Nose Knows: Dogs Are Being Trained to Detect the Coronavirus

Security workers walk with detection dogs in an airport terminal.

Asher is eccentric and inquisitive. He loves an audience, likes keeping busy, and howls to be let through doors. He is a six-year-old working Cocker Spaniel, who, with five other furry colleagues, has now been trained to sniff body odor samples from humans to detect COVID-19 infections.

As the Delta variant and other new versions of the SARS-CoV-2 virus emerge, public health agencies are once again recommending masking while employers contemplate mandatory vaccination. While PCR tests remain the "gold standard" of COVID-19 tests, they can take hours to flag infections. To accelerate the process, scientists are turning to a new testing tool: sniffer dogs.

At the London School of Hygiene and Tropical Medicine (LSHTM), researchers deployed Asher and five other trained dogs to test sock samples from 200 asymptomatic, infected individuals and 200 healthy individuals. In May, they published the findings of the yearlong study in a preprint, concluding that dogs could identify COVID-19 infections with a high degree of accuracy – they could correctly identify a COVID-positive sample up to 94% of the time and a negative sample up to 92% of the time. The paper has yet to be peer-reviewed.
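To make the reported accuracy figures concrete, the short Python sketch below turns them into expected counts. The 94% and 92% values are the upper figures quoted from the preprint; the airport scenario (10,000 travelers, 1% of them infected) is a hypothetical assumption added purely for illustration, as are the function and variable names.

```python
def screening_outcomes(n_people, prevalence, sensitivity, specificity):
    """Expected counts when a screen with the given accuracy is applied to a group."""
    infected = n_people * prevalence
    healthy = n_people - infected
    true_pos = infected * sensitivity   # infected and flagged
    false_neg = infected - true_pos     # infected but missed
    true_neg = healthy * specificity    # healthy and cleared
    false_pos = healthy - true_neg      # healthy but flagged
    flagged = true_pos + false_pos
    return {
        "true_pos": true_pos,
        "false_neg": false_neg,
        "true_neg": true_neg,
        "false_pos": false_pos,
        "flagged": flagged,
        "share_of_flags_infected": true_pos / flagged if flagged else 0.0,
    }


SENSITIVITY = 0.94  # "up to 94%" of COVID-positive samples identified
SPECIFICITY = 0.92  # "up to 92%" of negative samples identified

# The study design: 200 infected and 200 healthy samples.
study = screening_outcomes(400, 0.5, SENSITIVITY, SPECIFICITY)

# Hypothetical airport scenario: 10,000 travelers, 1% infected (assumed).
airport = screening_outcomes(10_000, 0.01, SENSITIVITY, SPECIFICITY)

for name, result in (("study", study), ("airport", airport)):
    print(name, {key: round(value, 3) for key, value in result.items()})
```

Under these assumptions, the dogs would flag roughly 886 of the 10,000 travelers, about 94 of whom would actually be infected. Only the flagged group would then need a confirmatory PCR test, which is how a dog-based pre-screen could cut lab testing even though most flags at low prevalence are false alarms.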

"Dogs can screen lots of people very quickly – 300 people per dog per hour. This means they could be used in places like airports or public venues like stadiums and maybe even workplaces," says James Logan, who heads the Department of Disease Control at LSHTM, adding that canines can also detect variants of SARS-CoV-2. "We included samples from two variants and the dogs could still detect them."

Detection dogs have been one of the most reliable biosensors for identifying the odor of human disease. According to Gemma Butlin, a spokesperson of Medical Detection Dogs, the UK-based charity that trained canines for the LSHTM study, the olfactory capabilities of dogs have been deployed to detect malaria, Parkinson's disease, different types of cancers, as well as pseudomonas, a type of bacteria known to cause infections in blood, lungs, eyes, and other parts of the human body.


"It's estimated that the percentage of a dog's brain devoted to analyzing odors is 40 times larger than that of a human," says Butlin. "Humans have around 5 million scent receptors dedicated to smell. Dogs have 350 million and can detect odors at parts per trillion. To put this into context, a dog can detect a teaspoon of sugar in a million gallons of water: two Olympic-sized pools full."

According to LSHTM scientists, COVID-19 has a distinctive smell — a result of chemicals known as volatile organic compounds released by infected body cells, which give off an odor "fingerprint." Other studies, too, have revealed that the SARS-CoV-2 virus has a distinct olfactory signature, detectable in the urine, saliva, and sweat of infected individuals. Humans can't smell the disease in these fluids, but dogs can.

"Our research shows that the smell associated with COVID-19 is at least partly due to small and volatile chemicals that are produced by the virus growing in the body or the immune response to the virus or both," said Steve Lindsay, a public health entomologist at Durham University, whose team collaborated with LSHTM for the study. He added, "There is also a further possibility that dogs can actually smell the virus, which is incredible given how small viruses are."

In April this year, researchers from the University of Pennsylvania and collaborators published a similar study in the scientific journal PLOS One, revealing that detection dogs could successfully discriminate between urine samples of infected and uninfected individuals. The accuracy rate of canines in this study was 96%. Similarly, last December, French scientists found that dogs were 76-100% effective at identifying individuals with COVID-19 when presented with sweat samples.

Dominique Grandjean, a professor at France's National Veterinary School of Alfort, who led the French study, said that the researchers used two types of dogs: search and rescue dogs, as they can sniff sweat, and explosive detection dogs, because they're often used at airports to find bomb ingredients. Dogs may very well be as good as PCR tests, said Grandjean, but the goal, he added, is not to replace these tests with canines.

In France, the government gave the green light to train hundreds of disease detection dogs and deploy them in airports. "They will act as mass pre-test, and only people who are positive will undergo a PCR test to check their level of infection and the kind of variant," says Grandjean. He thinks the dogs will be able to decrease the amount of PCR testing and potentially save money.

Since the accuracy rate for bio-detection dogs is fairly high, scientists think they could prove to be a quick diagnosis and mass screening tool, especially at ports, airports, train stations, stadiums, and public gatherings. Countries like Finland, Thailand, UAE, Italy, Chile, India, Australia, Pakistan, Saudi Arabia, Switzerland, and Mexico are already training and deploying canines for COVID-19 detection. The dogs are trained to sniff the area around a person, and if they find the odor of COVID-19 they will sit or stand back from an individual as a signal that they've identified an infection.

While bio-detection dogs seem promising for cheap, large-volume screening, many of the studies that have been performed to date have been small and in controlled environments. The big question is whether this approach works on people in crowded airports, not just samples of shirts and socks in a lab.

"The next step is 'real world' testing where they [canines] are placed in airports to screen people and see how they perform," says Anna Durbin, professor of international health at the Johns Hopkins Bloomberg School of Public Health. "Testing in real airports with lots of passengers and competing scents will need to be done."

According to Butlin of Medical Detection Dogs, scalability could be a challenge. However, scientists don't intend to have a dog in every waiting room, detecting COVID-19 or other diseases, she said.

"Dogs are the most reliable bio sensors on the planet and they have proven time and time again that they can detect diseases as accurately, if not more so, than current technological diagnostics," said Butlin. "We are learning from them all the time and what their noses know will one day enable the creation of an 'E-nose' that does the same job – imagine a day when your mobile phone can tell you that you are unwell."

The Voice Behind Some of Your Favorite Cartoon Characters Helped Create the Artificial Heart

This Jarvik-7 artificial heart was used in the first bridge operation in 1985 meant to replace a failing heart while the patient waited for a donor organ.

In June, a team of surgeons at Duke University Hospital implanted the latest model of an artificial heart in a 39-year-old man with severe heart failure, a condition in which the heart doesn't pump properly. The man's mechanical heart, made by French company Carmat, is a new-generation artificial heart and the first of its kind to be implanted in the United States. It connects to a portable external power supply and is designed to keep the patient alive until a replacement organ becomes available.

Many patients die while waiting for a heart transplant, but artificial hearts can bridge the gap. Though not a permanent solution for heart failure, artificial hearts have saved countless lives since their first implantation in 1982.

What might surprise you is that the origin of the artificial heart dates back decades before, when an inventive television actor teamed up with a famous doctor to design and patent the first such device.

A man of many talents

Paul Winchell was an entertainer in the 1950s and 60s, rising to fame as a ventriloquist and guest-starring as an actor on programs like "The Ed Sullivan Show" and "Perry Mason." When children's animation boomed in the 1960s, Winchell made a name for himself as a voice actor on shows like "The Smurfs," "Winnie the Pooh," and "The Jetsons." He eventually became famous for originating the voices of Tigger from "Winnie the Pooh" and Gargamel from "The Smurfs," among many others.

But Winchell wasn't just an entertainer: He also had a quiet passion for science and medicine. Between television gigs, Winchell busied himself working as a medical hypnotist and acupuncturist, treating the same Hollywood stars he performed alongside. When he wasn't doing that, Winchell threw himself into engineering and design, building not only the ventriloquism dummies he used on his television appearances but a host of products he'd dreamed up himself. Winchell spent hours tinkering with his own inventions, such as a set of battery-powered gloves and something called a "flameless lighter." Over the course of his life, Winchell designed and patented more than 30 of these products – mostly novelties, but also serious medical devices, such as a portable blood plasma defroster.

Ventriloquist Paul Winchell with Jerry Mahoney, his dummy, in 1951.

A meeting of the minds

In the early 1950s, Winchell appeared on a variety show called the "Arthur Murray Dance Party" and faced off in a dance competition with the legendary Ricardo Montalban (Winchell won). At a cast party for the show later that same night, Winchell met Dr. Henry Heimlich, who was married to Murray's daughter and would later become famous for inventing the Heimlich maneuver. The two hit it off immediately, bonding over their shared interest in medicine. Before long, Heimlich invited Winchell to come observe him in the operating room at the hospital where he worked. Winchell jumped at the opportunity, and not long after he became a frequent guest in Heimlich's surgical theatre, fascinated by the mechanics of the human body.

One day while Winchell was observing at the hospital, he witnessed a patient die on the operating table after undergoing open-heart surgery. He was suddenly struck with an idea: If there was some way doctors could keep blood pumping temporarily throughout the body during surgery, patients who underwent risky operations like open-heart surgery might have a better chance of survival. Winchell rushed to Heimlich with the idea – and Heimlich agreed to advise Winchell and look over any design drafts he came up with. So Winchell went to work.

Winchell's heart

As it turned out, building ventriloquism dummies wasn't that different from building an artificial heart, Winchell noted later in his autobiography – the shifting valves and chambers of the mechanical heart were similar to the moving eyes and opening mouths of his puppets. After each design, Winchell would go back to Heimlich and the two would confer, making adjustments along the way.

By 1956, Winchell had perfected his design: The "heart" consisted of a bag that could be placed inside the human body, connected to a battery-powered motor outside of the body. The motor enabled the bag to pump blood throughout the body, similar to a real human heart. Winchell received a patent for the design in 1963.

At the time, Winchell never quite got the credit he deserved. Years later, researchers at the University of Utah, working on their own artificial heart, came across Winchell's patent and got in touch with him to compare notes. Winchell ended up donating his patent to the team, which included Dr. Richard Jarvik. Jarvik expanded on Winchell's design and created the Jarvik-7, which in 1982 became the world's first artificial heart to be successfully implanted in a human being.

The Jarvik-7 has since been replaced with newer, more efficient models made up of different synthetic materials, allowing patients to live for longer stretches without the heart clogging or breaking down. With each new generation of hearts, heart failure patients have been able to live relatively normal lives for longer periods of time and with fewer complications than before – and it never would have been possible without the unsung genius of a puppeteer and his love of science.