Massive benefits of AI come with environmental and human costs. Can AI itself be part of the solution?

Generative AI has a large carbon footprint and other drawbacks. But AI can help mitigate its own harms—by plowing through mountains of data on extreme weather and human displacement.

The recent explosion of generative artificial intelligence tools like ChatGPT and Dall-E enabled anyone with internet access to harness AI’s power for enhanced productivity, creativity, and problem-solving. With their ever-improving capabilities and expanding user base, these tools proved useful across disciplines, from the creative to the scientific.

But beneath the technological wonders of human-like conversation and creative expression lies a dirty secret: an alarming environmental and human cost. AI has an immense carbon footprint. Systems like ChatGPT take months to train in high-powered data centers, which demand huge amounts of electricity, much of it still generated with fossil fuels, as well as water for cooling. “One of the reasons why OpenAI needs investments [to the tune of] $10 billion from Microsoft is because they need to pay for all of that computation,” says Kentaro Toyama, a computer scientist at the University of Michigan. There’s also an ecological toll from mining rare minerals required for hardware and infrastructure. This environmental exploitation pollutes land, triggers natural disasters and causes large-scale human displacement. Finally, for the data labeling needed to train and correct AI algorithms, the Big Data industry relies on cheap, exploited labor, often from the Global South.

Generative AI tools are based on large language models (LLMs), the most well-known being the various versions of GPT. LLMs can perform natural language processing tasks, including translating, summarizing and answering questions. They rely on artificial neural networks, an approach known as deep learning, which is a branch of machine learning. Inspired by the human brain, neural networks are made of millions of artificial neurons. “The basic principles of neural networks were known even in the 1950s and 1960s,” Toyama says, “but it’s only now, with the tremendous amount of compute power that we have, as well as huge amounts of data, that it’s become possible to train generative AI models.”

In recent months, much attention has gone to the transformative benefits of these technologies. But it’s important to consider that these remarkable advances may come at a price.

AI’s carbon footprint

In their latest annual report, 2023 Landscape: Confronting Tech Power, the AI Now Institute, an independent policy research entity focusing on the concentration of power in the tech industry, says: “The constant push for scale in artificial intelligence has led Big Tech firms to develop hugely energy-intensive computational models that optimize for ‘accuracy’—through increasingly large datasets and computationally intensive model training—over more efficient and sustainable alternatives.”

Though there aren’t any official figures about the power consumption or emissions from data centers, experts estimate that they use one percent of global electricity, more than entire countries. In 2019, Emma Strubell, then a graduate researcher at the University of Massachusetts Amherst, estimated that training a single LLM resulted in over 280,000 kg of CO2 emissions, the equivalent of driving almost 1.2 million km in a gas-powered car. A couple of years later, David Patterson, a computer scientist from the University of California, Berkeley, and colleagues estimated GPT-3’s carbon footprint at over 550,000 kg of CO2. In 2022, the tech company Hugging Face estimated the carbon footprint of its own language model, BLOOM, at 25,000 kg of CO2 emissions. (BLOOM’s footprint is lower because Hugging Face uses renewable energy, but it doubled when other life-cycle processes like hardware manufacturing and use were added.)

Luckily, despite the growing size and numbers of data centers, their increasing energy demands and emissions have not kept pace proportionately—thanks to renewable energy sources and energy-efficient hardware.

But emissions don’t tell the full story.

AI’s hidden human cost

“If historical colonialism annexed territories, their resources, and the bodies that worked on them, data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” So write Nick Couldry and Ulises Mejias, authors of the book The Costs of Connection.

Technologies we use daily inexorably gather our data. “Human experience, potentially every layer and aspect of it, is becoming the target of profitable extraction,” Couldry and Mejias say. This feeds data capitalism, the economic model built on the extraction and commodification of data. While we are being dispossessed of our data, Big Tech commodifies it for its own benefit. This results in a consolidation of power structures that reinforce existing race, gender, class and other inequalities.

“The political economy around tech and tech companies, and the development in advances in AI contribute to massive displacement and pollution, and significantly changes the built environment,” says technologist and activist Yeshi Milner, who founded Data For Black Lives (D4BL) to create measurable change in Black people’s lives using data. The energy requirements, hardware manufacture and the cheap human labor behind AI systems disproportionately affect marginalized communities.

AI’s recent explosive growth spiked the demand for manual, behind-the-scenes tasks, creating an industry described by Mary Gray and Siddharth Suri as “ghost work” in their book of the same name. This invisible human workforce, which lies behind the “magic” of AI, is overworked and underpaid, and very often based in the Global South. For example, workers in Kenya who made less than $2 an hour were behind the mechanism that trained ChatGPT to properly talk about violence, hate speech and sexual abuse. And, according to an article in Analytics India Magazine, in some cases these workers may not have been paid at all, a case of wage theft. An exposé by the Washington Post describes “digital sweatshops” in the Philippines, where thousands of workers experience low wages, delays in payment, and wage theft by Remotasks, a platform owned by Scale AI, a $7 billion American startup. Rights groups and labor researchers have flagged Scale AI as one company that flouts basic labor standards for workers abroad.

It is possible to draw a parallel with chattel slavery—the most significant economic event that continues to shape the modern world—to see the business structures that allow for the massive exploitation of people, Milner says. Back then, people got chocolate, sugar, cotton; today, they get generative AI tools. “What’s invisible through distance—because [tech companies] also control what we see—is the massive exploitation,” Milner says.

“At Data for Black Lives, we are less concerned with whether AI will become human…[W]e’re more concerned with the growing power of AI to decide who’s human and who’s not,” Milner says. As a decision-making force, AI becomes a “justifying factor for policies, practices, rules that not just reinforce, but are currently turning the clock back generations years on people’s civil and human rights.”

Nuria Oliver, a computer scientist, and co-founder and vice-president of the European Laboratory of Learning and Intelligent Systems (ELLIS), says that instead of focusing on the hypothetical existential risks of today’s AI, we should talk about its real, tangible risks.

“Because AI is a transverse discipline that you can apply to any field [from education, journalism, medicine, to transportation and energy], it has a transformative power…and an exponential impact,” she says.

AI’s accountability

“At the core of what we were arguing about data capitalism [is] a call to action to abolish Big Data,” says Milner. “Not to abolish data itself, but the power structures that concentrate [its] power in the hands of very few actors.”

A comprehensive AI Act currently being negotiated in the European Parliament aims to rein Big Tech in. It plans to introduce a rating of AI tools based on the harms caused to humans, while being as technology-neutral as possible. It sets standards for safe, transparent, traceable, non-discriminatory, and environmentally friendly AI systems, overseen by people, not automation. The regulations also ask for transparency about the content used to train generative AIs, particularly copyrighted data, and for disclosure when content is AI-generated. “This European regulation is setting the example for other regions and countries in the world,” Oliver says. But, she adds, such transparency is hard to achieve.

Google, for example, recently updated its privacy policy to say that anything on the public internet will be used as training data. “Obviously, technology companies have to respond to their economic interests, so their decisions are not necessarily going to be the best for society and for the environment,” Oliver says. “And that’s why we need strong research institutions and civil society institutions to push for actions.” ELLIS also advocates for data centers to be built in locations where the energy can be produced sustainably.

Ironically, AI plays an important role in mitigating its own harms—by plowing through mountains of data about weather changes, extreme weather events and human displacement. “The only way to make sense of this data is using machine learning methods,” Oliver says.

Milner believes that the best way to expose AI-caused systemic inequalities is through people's stories. “In these last five years, so much of our work [at D4BL] has been creating new datasets, new data tools, bringing the data to life. To show the harms but also to continue to reclaim it as a tool for social change and for political change.” This change, she adds, will depend on whose hands it is in.

Last month, a paper published in Cell by Harvard biologist David Sinclair explored the root cause of aging and examined whether this process can be controlled. We talked with Dr. Sinclair about this new research.

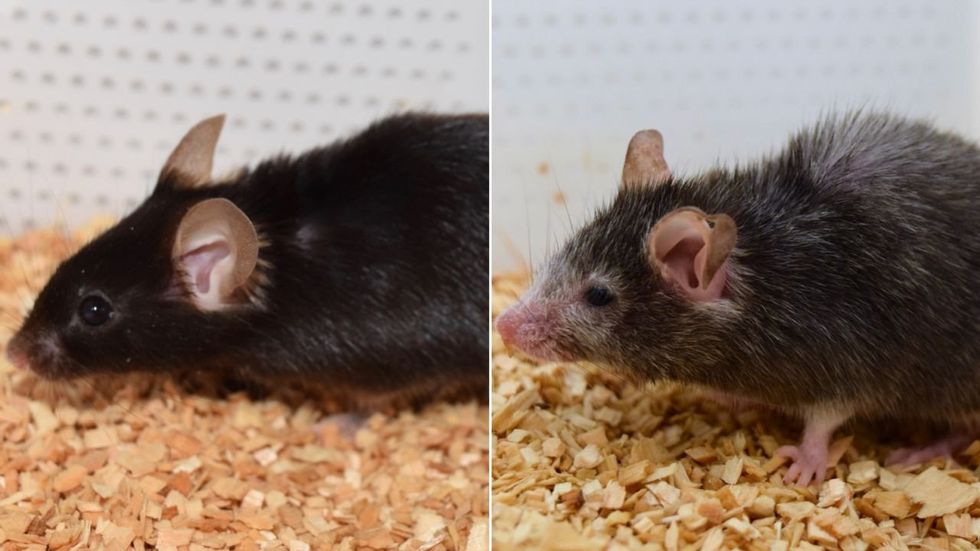

What causes aging? In a paper published last month, Dr. David Sinclair, Professor in the Department of Genetics at Harvard Medical School, reports that he and his co-authors have found the answer. Harnessing this knowledge, Dr. Sinclair was able to reverse this process, making mice younger, according to the study published in the journal Cell.

I talked with Dr. Sinclair about his new study for the latest episode of Making Sense of Science. Turning back the clock on mouse age through what’s called epigenetic reprogramming – and understanding why animals get older in the first place – are key steps toward finding therapies for healthier aging in humans. We also talked about questions that have been raised about the research.

Show links:

Dr. Sinclair's paper, published last month in Cell.

Recent pre-print paper - not yet peer reviewed - showing that mice treated with Yamanaka factors lived longer than the control group.

Dr. Sinclair's podcast.

Previous research on aging and DNA mutations.

Dr. Sinclair's book, Lifespan.

Harvard Medical School

Breakthrough therapies are breaking patients' banks. Key changes could improve access, experts say.

Single-treatment therapies are revolutionizing medicine. But insurers and patients wonder whether they can afford treatment and, if they can, whether the high costs are worthwhile.

CSL Behring’s new gene therapy for hemophilia, Hemgenix, costs $3.5 million for one treatment, but helps the body create substances that allow blood to clot. It appears to be a cure, eliminating the need for other treatments for many years at least.

Likewise, Novartis’s Kymriah mobilizes the body’s immune system to fight B-cell lymphoma, but at a cost of $475,000. For patients who respond, it seems to offer years of life without the cancer progressing.

These single-treatment therapies are at the forefront of a new, bold era of medicine. Unfortunately, they also come with new, bold prices that leave insurers and patients wondering whether they can afford treatment and, if they can, whether the high costs are worthwhile.

“Most pharmaceutical leaders are there to improve and save people’s lives,” says Jeremy Levin, chairman and CEO of Ovid Therapeutics, and immediate past chairman of the Biotechnology Innovation Organization. If the therapeutics they develop are too expensive for payers to authorize, patients aren’t helped.

“The right to receive care and the right of pharmaceuticals developers to profit should never be at odds,” Levin stresses. And yet, sometimes they are.

Leigh Turner, executive director of the bioethics program, University of California, Irvine, notes this same tension between drug developers that are “seeking to maximize profits by charging as much as the market will bear for cell and gene therapy products and other medical interventions, and payers trying to control costs while also attempting to provide access to medical products with promising safety and efficacy profiles.”

Why Payers Balk

Health insurers can become skittish around extremely high prices, yet these therapies often bring significant overall savings. For perspective, the estimated annual treatment cost for hemophilia exceeds $300,000. With Hemgenix, payers would break even after about 12 years.
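The break-even figure follows from simple division. As a rough sketch, using only the two numbers quoted above and assuming (a simplification the article does not make) that the annual cost of conventional treatment stays flat, with no discounting or inflation:

```python
# Rough break-even sketch. The two figures are the ones quoted in the article.
# Assumption: annual treatment cost stays flat; no discounting or inflation.
HEMGENIX_PRICE = 3_500_000        # one-time price, USD
ANNUAL_TREATMENT_COST = 300_000   # quoted floor for yearly hemophilia treatment, USD


def break_even_years(one_time_price: float, annual_cost: float) -> float:
    """Years of avoided annual cost needed to offset a one-time price."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return one_time_price / annual_cost


years = break_even_years(HEMGENIX_PRICE, ANNUAL_TREATMENT_COST)
print(f"Break-even after about {years:.1f} years")  # about 11.7 years
```

Because the quoted annual cost is a floor ("exceeds $300,000"), the true break-even point under these assumptions would arrive somewhat sooner than the roughly 12 years cited.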

But, in 12 years, will the patient still have that insurer? Therein lies the rub. U.S. payers are used to a “pay-as-you-go” model, in which the lifetime costs of therapies typically are shared by multiple payers over many years, as patients change jobs. Single-treatment therapeutics eliminate that cost-sharing ability.

“There is a phenomenally complex, bureaucratic reimbursement system that has grown, layer upon layer, during several decades,” Levin says. As medicine has innovated, payment systems haven’t kept up.

Therefore, early in the drug approval process, biopharma companies begin working with insurance companies and their pharmacy benefit managers (PBMs), which act on an insurer’s behalf to decide which drugs to cover and by how much. Their goal is to make sophisticated new drugs available while still earning a return on their investment.

New Payment Models

Pay-for-performance is one increasingly popular strategy, Turner says. “These models typically link payments to evidence generation and clinically significant outcomes.”

A biotech company called bluebird bio, for example, offers value-based pricing for Zynteglo, a $2.8 million possible cure for the rare blood disorder known as beta thalassaemia. It generally eliminates patients’ need for blood transfusions. The company is so sure it works that it will refund 80 percent of the cost of the therapy if patients need blood transfusions related to that condition within five years of being treated with Zynteglo.
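To make the refund arithmetic concrete, here is a minimal sketch of how such an outcomes-based arrangement changes what a payer ends up spending. The price and the 80 percent refund come from the paragraph above; the function name and the simple yes/no outcome flag are illustrative assumptions, and real contracts will define qualifying outcomes in far more detail.

```python
# Sketch of the outcomes-based refund described above.
# Price and refund share are from the article; the function name and the
# yes/no outcome flag are illustrative assumptions, not contract terms.
ZYNTEGLO_PRICE = 2_800_000  # one-time price, USD
REFUND_SHARE = 0.80         # share refunded if transfusions are still needed


def net_cost(price: float, refund_share: float, needs_transfusions: bool) -> float:
    """Cost left with the payer after any outcomes-based refund."""
    if not 0.0 <= refund_share <= 1.0:
        raise ValueError("refund_share must be between 0 and 1")
    refund = price * refund_share if needs_transfusions else 0.0
    return price - refund


for outcome in (False, True):
    cost = net_cost(ZYNTEGLO_PRICE, REFUND_SHARE, outcome)
    print(f"needs transfusions within five years: {outcome}, net cost ${cost:,.0f}")
```

Under these assumptions, the payer’s exposure falls from $2.8 million to $560,000 if the therapy does not work as intended.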

In his February 2023 State of the Union speech, President Biden proposed three pilot programs to reduce drug costs. One of them, the Cell and Gene Therapy Access Model, calls on the federal Centers for Medicare & Medicaid Services to establish outcomes-based agreements with manufacturers for certain cell and gene therapies.

A mortgage-style payment system is another, albeit rare, approach. Amortized payments spread the cost of treatments over decades, and let people change employers without losing their healthcare benefits.

The new payment models that are being discussed aren’t solutions to high prices, says Bill Kramer, senior advisor for health policy at Purchaser Business Group on Health (PBGH), a nonprofit that seeks to lower health care costs. He points out that innovative pricing models, although well-intended, may distract from the real problem of high prices. They are attempts to “soften the blow. The best thing would be to charge a reasonable price to begin with,” he says.

Instead, he proposes making better use of research on cost and clinical effectiveness. The Institute for Clinical and Economic Review (ICER) conducts such research in the U.S., determining whether the benefits of specific drugs justify their proposed prices. ICER is an independent non-profit research institute. Its reports typically assess the degrees of improvement new therapies offer and suggest prices that would reflect that. “Publicizing that data is very important,” Kramer says. “Their results aren’t used to the extent they could and should be.” Pharmaceutical companies tend to price their therapies higher than ICER’s recommendations.

Drug Development Costs Soar

Drug developers have long pointed to the onerous costs of drug development as a reason for high prices.

A 2020 study found the average cost to bring a drug to market exceeded $1.1 billion, while other studies have estimated overall costs as high as $2.6 billion. The development timeframe is about 10 years. That’s because modern therapeutics target precise mechanisms to create better outcomes, but also have high failure rates. Only about 14 percent of all drugs that enter clinical trials are approved by the FDA. Pharma companies, therefore, have an exigent need to earn a profit.

Skewed Incentives Increase Costs

Pricing isn’t solely at the discretion of pharma companies, though. “What patients end up paying has much more to do with their PBMs than the actual price of the drug,” Patricia Goldsmith, CEO, CancerCare, says. Transparency is vital.

PBMs control patients’ access to therapies at three levels: price negotiations, pricing tiers and pharmacy management.

When negotiating with drug manufacturers, Goldsmith says, “PBMs exchange a preferred spot on a formulary (the insurer’s or healthcare provider’s list of acceptable drugs) for cash-base rebates.” Unfortunately, 25 percent of the time, those rebates are not passed to insurers, according to the PBGH report.

Then, PBMs use pricing tiers to steer patients and physicians to certain drugs. For example, Kramer says, “Sometimes PBMs put a high-cost brand name drug in a preferred tier and a lower-cost competitor in a less preferred, higher-cost tier.” As the PBGH report elaborates, “(PBMs) are incentivized to include the highest-priced drugs…since both manufacturing rebates, as well as the administrative fees they charge…are calculated as a percentage of the drug’s price.”

Finally, by steering patients to certain pharmacies, PBMs coordinate patients’ access to treatments, control patients’ out-of-pocket costs and receive management fees from the pharmacies.

Therefore, Goldsmith says, “As long as formularies are based on profits to middlemen…Americans’ healthcare costs will continue to skyrocket.”

Transparency into drug pricing will help curb costs, as will new payment strategies. What will make the most impact, however, may well be the development of a new reimbursement system designed to handle dramatic, breakthrough drugs. As Kramer says, “We need a better system to identify drugs that offer dramatic improvements in clinical care.”