Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to reckon with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

Scientists Are Working to Develop a Clever Nasal Spray That Tricks the Coronavirus Out of the Body

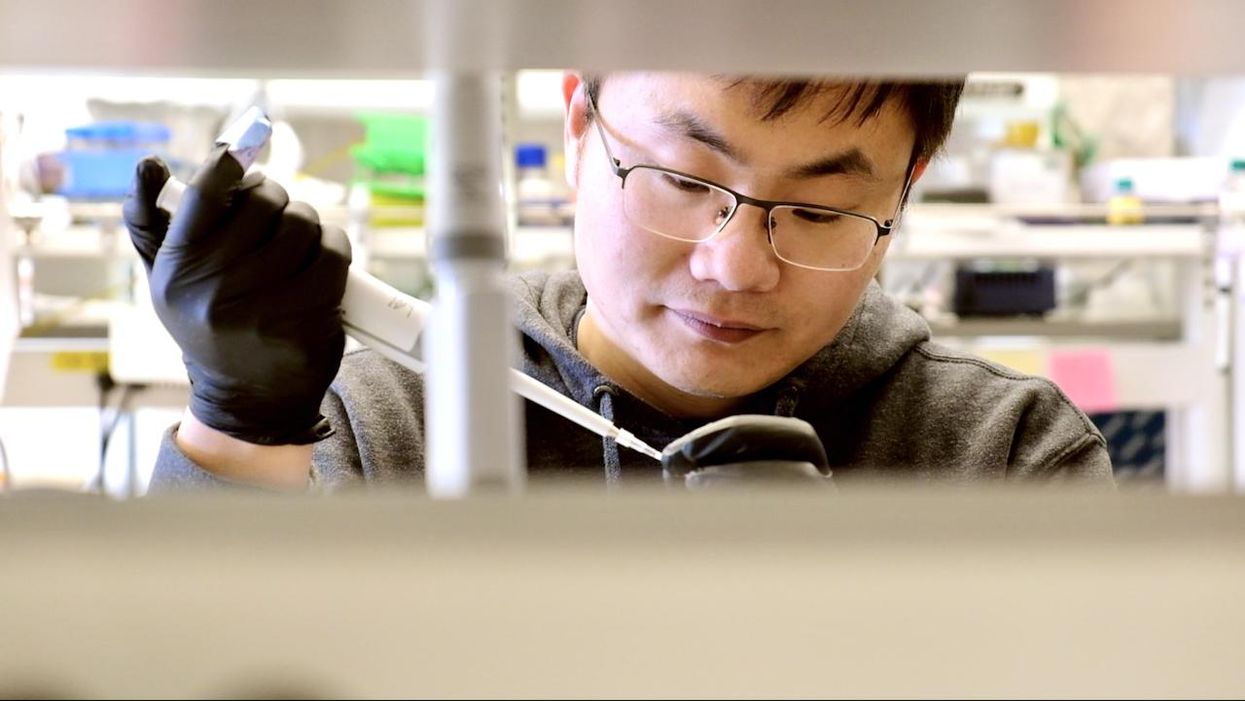

Biochemist Longxing Cao is working with colleagues at the University of Washington on promising research to disable infectious coronavirus in a person's nose.

Imagine this scenario: you get an annoying cough and a bit of a fever. When you wake up the next morning you lose your sense of taste and smell. That sounds familiar, so you head to a doctor's office for a Covid test, which comes back positive.

Your next step? An anti-Covid nasal spray of course, a "trickster drug" that will clear the once-dangerous and deadly virus out of the body. The drug works by tricking the coronavirus with decoy receptors that appear to be just like those on the surface of our own cells. The virus latches onto the drug's molecules "thinking" it is breaking into human cells, but instead it flushes out of your system before it can cause any serious damage.

This may sound like science fiction, but several research groups are already working on such trickster coronavirus drugs, with some candidates close to clinical trials and possibly even becoming available late this year. The teams began working on them when the pandemic arrived, and continued in lockdown.

When the pandemic first hit and the state of California issued a lockdown order on March 16, postdoctoral researchers Anum and Jeff Glasgow found themselves stuck at home with nothing to do. The two scientists, who study bioengineering, felt they were well equipped to research molecular ways of disabling the coronavirus's cell-penetrating spike protein, but they could no longer go to their labs at the University of California San Francisco.

"We were upset that no one put us in the game," says Anum Glasgow. "We have a lot of experience between us doing these types of projects so we wanted to contribute." But they still had computers so they began modeling the potential virus-disabling proteins in silico using Robetta, special software for designing and modeling protein structures, developed and maintained by University of Washington biochemist David Baker and his lab.

The SARS-CoV-2 virus that causes Covid-19 uses its surface spike protein to bind to a specific receptor on human cells called ACE2. Unfortunately for humans, the spike protein's molecular shape fits the ACE2 receptor like a well-cut key, making it very successful at breaking into our cells. But if one could design a molecular ACE2 mimic to "trick" the virus into latching onto it instead, the virus would no longer be able to enter cells. Scientists call such mimics receptor traps, inhibitors, or blockers. "It would block the adhesive part of the virus that binds to human cells," explains Jim Wells, professor of pharmaceutical chemistry at UCSF, whose lab took part in designing the ACE2-receptor mimic, working with the Glasgows and other colleagues.

The idea of disabling infectious or inflammatory agents by tricking them into binding to the targets' molecular look-alikes is something scientists have tried with other diseases. The anti-inflammatory drugs commonly used to treat autoimmune conditions, including rheumatoid arthritis, Crohn's disease and ulcerative colitis, rely on conceptually similar molecular mechanisms. Called TNF blockers, these drugs block the activity of the inflammatory cytokines, molecules that promote inflammation. "One of the biggest selling drugs in the world is a receptor trap," says Jeff Glasgow. "It acts as a receptor decoy. There's a TNF receptor that traps the cytokine."

In the recent past, scientists also pondered a similar look-alike approach to treating urinary tract infections, which are often caused by a pathogenic strain of Escherichia coli. An E. coli bacterium resembles a squid with protruding filaments equipped with proteins that can change their shape to form hooks, used to hang onto specific sugar molecules called ligands, which are present on the surface of the epithelial cells lining the urinary tract.

A recent study found that a sugar-like compound that's structurally similar to that ligand can play a similar trick on E. coli. When administered in sufficient amounts, the compound hooks onto the bacteria, which are then excreted from the body in urine. The "trickster" method has also been tried against HIV, but it wasn't very effective because HIV has a high mutation rate and multiple ways of entering human cells.

But the coronavirus spike protein's shape is more stable. And while it has a strong affinity for the ACE2 receptors, its natural binding to these receptors isn't perfect, which allowed the UCSF researchers to design a mimic with a better grip. "We saw some imperfections in that lock and key and we created something better," says Wells. "We made a 10 times tighter adhesive." The team demonstrated that their traps neutralized SARS-CoV-2 in lab experiments and published their study in the Proceedings of the National Academy of Sciences.

Baker, who is the director of the Institute for Protein Design at the University of Washington, was also devising ACE2 look-alikes with his team. But unlike the UCSF team, they didn't refine the natural virus-receptor lock-and-key combination; instead, they designed their mimics from scratch. Using Robetta, they digitally modeled over two million proteins, zeroed in on over 100,000 potential candidates, and identified a handful with strong promise of blocking SARS-CoV-2, testing them against the virus in human cells. Their design of the miniprotein inhibitors was published in the journal Science.

Biochemist David Baker, pictured in his lab at the University of Washington.

The concept of the ACE2 receptor mimics is somewhat similar to antibody plasma, but better, the teams explain. Antibodies don't always coat all of the virus's spike proteins and sometimes don't bind perfectly. By contrast, the ACE2 mimics directly compete with the virus's entry mechanism. ACE2 mimics are also easier and cheaper to make, researchers say.

Antibodies, which are long protein chains, must be grown inside mammalian cells, which is a slow and costly process. As drugs, antibody cocktails must be kept refrigerated. By contrast, proteins that mimic ACE2 receptors are smaller and can be produced by bacteria easily and inexpensively. Designed to specs, these proteins don't need refrigeration and are easy to store. "We designed them to be very stable," says Baker. "Our computation design tries to come up with the stable proteins that have the desired functions."

That stability may allow the team to create an inhaled drug rather than an intravenous one, which would be another advantage over antibody plasma, which is given via an IV. The team envisions people spraying the miniprotein solution into their nose, creating a protective coating that would disable the inhaled virus. "The infection starts from your respiratory system, from your nose," explains Longxing Cao, the study's co-author, so a nasal spray would be a natural way to administer it. "So that you can have it like a layer, similar to a mask."

As the virus evolves, new variants are arising. But the teams think that their ACE2 protein mimics should work on the new variants too for several reasons. "Since the new SARS-CoV-2 variants still use ACE2 for their cell entry, they will likely still be susceptible to ACE2-based traps," Glasgow says.

Cao explains that their approach should work too because most of the mutations happen outside the ACE2 binding region. Plus, they are building multiple binders that can bind to an array of the coronavirus variants. "Our binder can still bind with most of the variants and we are trying to make one protein that could inhibit all the future escape variants," he says.

Baker and Cao hope that their miniproteins may be available to patients later this year. But besides getting the medicine out to patients, this approach will allow researchers to test the computer-modeled mimics end-to-end with unprecedented speed. That would give humans a leg up in future pandemics or zoonotic disease outbreaks, which remain an increasingly pressing threat due to human activity and climate change.

"That's what we are focused on right now—understanding what we have learned from this pandemic to improve our design methods," says Baker. "So that we should be able to obtain binders like these very quickly when a new pandemic threat is identified."

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.

How Will the New Strains of COVID-19 Affect Our Vaccination Plans?

The mutated strains that first arose in the U.K. and South Africa and have now spread to many countries are prompting urgent studies on the effectiveness of current vaccines to neutralize the new strains.

When the world's first Covid-19 vaccine received regulatory approval in November, it appeared that the end of the pandemic might be near. As the Pfizer/BioNTech, Moderna, AstraZeneca, and Sputnik V vaccines reported successful Phase III results one by one, the prospect of life without lockdowns and restrictions seemed a tantalizing possibility.

But for scientists with many years' worth of experience in studying how viruses adapt over time, it remained clear that the fight against the SARS-CoV-2 virus was far from over. "The more virus circulates, the more it is likely that mutations occur," said Professor Beate Kampmann, director of the Vaccine Centre at the London School of Hygiene & Tropical Medicine. "It is inevitable that new variants will emerge."

Since the start of the pandemic, dozens of new variants of SARS-CoV-2, each containing different mutations in the viral genome sequence, have appeared as the virus copies itself while spreading through the human population. The majority of these mutations are inconsequential, but in recent months, some mutations have emerged in the receptor binding domain of the virus's spike protein, increasing how tightly it binds to human cells. These mutations appear to make some new strains up to 70 percent more transmissible, though estimates vary and more lab experiments are needed. Such new strains include the B.1.1.7 variant, currently the dominant strain in the UK, and the 501Y.V2 variant, which was first found in South Africa.

Because so many more people are becoming infected with the SARS-CoV-2 virus as a result, vaccinologists point out that these new strains will prolong the pandemic.

"It may take longer to reach vaccine-induced herd immunity," says Deborah Fuller, professor of microbiology at the University of Washington School of Medicine. "With a more transmissible variant taking over, an even larger percentage of the population will need to get vaccinated before we can shut this pandemic down."

That is, of course, as long as the vaccinations are still highly protective. The South African variant, in particular, contains a mutation called E484K that is raising alarms among scientists. Emerging evidence indicates that this mutation allows the virus to escape from some people's immune responses, and thus could potentially weaken the effectiveness of current vaccines.
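
The arithmetic behind Fuller's point can be sketched with the textbook herd immunity threshold, 1 - 1/R0, divided by vaccine efficacy. This is only a rough illustration under loud assumptions: the baseline R0 of 2.5 is a placeholder that does not come from the article, the population is treated as mixing uniformly, and efficacy against disease is treated as if it were efficacy against transmission, which it may not be. The function and variable names are hypothetical.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Share of the population that must be immune in a simple model: 1 - 1/R0."""
    if r0 <= 1.0:
        return 0.0  # an outbreak with R0 <= 1 dies out on its own
    return 1.0 - 1.0 / r0


def required_coverage(r0: float, efficacy: float) -> float:
    """Share that must be vaccinated if the vaccine blocks a fraction `efficacy` of transmission."""
    if not 0.0 < efficacy <= 1.0:
        raise ValueError("efficacy must be in (0, 1]")
    return herd_immunity_threshold(r0) / efficacy


BASELINE_R0 = 2.5               # assumed for illustration, not from the article
VARIANT_R0 = BASELINE_R0 * 1.7  # "up to 70 percent more transmissible"

for label, r0, efficacy in [
    ("original strain, 95% efficacy", BASELINE_R0, 0.95),
    ("variant, 95% efficacy", VARIANT_R0, 0.95),
    ("variant, 85% efficacy", VARIANT_R0, 0.85),
]:
    print(f"{label}: {required_coverage(r0, efficacy):.0%} vaccinated")
```

With these assumed inputs, the required coverage rises from roughly 63 percent to roughly 80 percent for the more transmissible variant, and to roughly 90 percent if efficacy also slips to 85 percent. A result above 100 percent would mean that, in this simple model, vaccination alone could not reach the threshold.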

What We Know So Far

Over the past few weeks, manufacturers of the approved Covid-19 vaccines have been racing to conduct experiments, assessing whether their jabs still work well against the new variants. This process involves taking blood samples from people who have already been vaccinated and assessing whether the antibodies generated by those people can neutralize the new strains in a test tube.

Pfizer has just released results from the first of these studies, declaring that their vaccine was found to still be effective at neutralizing strains of the virus containing the N501Y mutation of the spike protein, one of the mutations present within both the UK and South African variants.

However, the study did not look at the full set of mutations contained within either of these variants. Earlier this week, academics at the Fred Hutchinson Cancer Research Center in Seattle suggested that the E484K spike protein mutation could be most problematic, publishing a study which showed that the efficacy of neutralizing antibodies against this region dropped by more than ten-fold because of the mutation.

Thankfully, this development is not expected to make vaccines useless. One of the Fred Hutch researchers, Jesse Bloom, told STAT News that he did not expect this mutation to seriously reduce vaccine efficacy, and that more harmful mutations would need to accrue over time to pose a very significant threat to vaccinations.

"I'm quite optimistic that even with these mutations, immunity is not going to suddenly fail on us," Bloom told STAT. "It might be gradually eroded, but it's not going to fail on us, at least in the short term."

While further vaccine efficacy data will emerge in the coming weeks, other vaccinologists are keen to stress this same point: At most, there will be a marginal drop in efficacy against the new variants.

"Each vaccine induces what we call polyclonal antibodies targeting multiple parts of the spike protein," said Fuller. "So if one antibody target mutates, there are other antibody targets on the spike protein that could still neutralize the virus. The vaccine platforms also induce T-cell responses that could provide a second line of defense. If some virus gets past antibodies, T-cell responses can find and eliminate infected cells before the virus does too much damage."

She estimates that if vaccine efficacy against one of the new variants decreases, for example from 95% to 85%, the main implication will be that some individuals who might otherwise have become severely ill may still experience mild or moderate symptoms from an infection, but crucially, they will not end up in intensive care.

"Plug and Play" Vaccine Platforms

One of the advantages of the technologies pioneered to create the Covid-19 vaccines is that they are relatively straightforward to update with a new viral sequence. The mRNA technology used in the Pfizer/BioNTech and Moderna vaccines, and the adenovirus vectors used in the AstraZeneca and Sputnik V vaccines, are known as "plug and play" platforms, meaning that a new form of the vaccine can be rapidly generated against any emerging variant.

The technology for seasonal influenza vaccines is relatively inefficient: scientists must grow and cultivate the new strain in the lab before vaccines can be produced, a process that takes nine months. By contrast, mRNA and adenovirus-based vaccines can be updated within a matter of weeks. According to BioNTech CEO Uğur Şahin, a new version of their vaccine could be produced in six weeks.

"With a rapid pipeline for manufacture established, these new vaccine technologies could enable production and distribution within 1-3 months of a new variant emerging," says Fuller.

Fuller predicts that more new variants of the virus are almost certain to emerge within the coming months and years, potentially requiring the public to receive booster shots. Here the mRNA-based vaccines have one key advantage over the adenovirus technologies. mRNA vaccines only express the spike protein, while the AstraZeneca and Sputnik V vaccines use adenoviruses (common viruses most of us are exposed to) as a delivery mechanism for genes from the SARS-CoV-2 virus.

"For the adenovirus vaccines, our bodies make immune responses against both SARS-CoV-2 and the adenovirus backbone of the vaccine," says Fuller. "That means if you update the adenovirus-based vaccine with the new variant and then try to boost people, they may respond less well to the new vaccine, because they already have antibodies against the adenovirus that could block the vaccine from working. This makes mRNA vaccines more amenable to repeated use."

Regulatory Unknowns

One of the key questions remains whether regulators would require new versions of the vaccine to go through clinical trials, a hurdle which would slow down the response to emerging strains, or whether the seasonal influenza paradigm will be followed, whereby a new form of the vaccine can be released without further clinical testing.

Regulators are currently remaining tight-lipped on which process they will choose to follow, until there is more information on how vaccines respond against the new variants. "Only when such information becomes available can we start the scientific evaluation of what data would be needed to support such a change and assess what regulatory procedure would be required for that," said Rebecca Harding, communications officer for the European Medicines Agency.

The Food and Drug Administration (FDA) did not respond to requests for comment before press time.

While vaccinologists feel it is unlikely that a new complete Phase III trial would be required, some believe that because these are new technologies, regulators may well demand further safety data before approving an updated version of the vaccine.

"I would hope if we ever have to update the current vaccines, regulatory authorities will treat it like influenza," said Drew Weissman, professor of medicine at the University of Pennsylvania, who was involved in developing the mRNA technology behind the Pfizer/BioNTech and Moderna vaccines. "I would guess, at worst, they may want a new Phase 1 or 1 and 2 clinical trials."

Others suggest that rather than new trials, some bridging experiments may suffice to demonstrate that the levels of neutralizing antibodies induced by the new form of the vaccine are comparable to the previous one. "Vaccines have previously been licensed by this kind of immunogenicity data only, for example meningitis vaccines," said Kampmann.

While further mutations and strains of SARS-CoV-2 are inevitable, some scientists are concerned that the vaccine rollout strategy being employed in some countries (distributing a first shot to as many people as possible, and potentially delaying second shots as a result) could encourage more new variants to emerge. Just today, the Biden administration announced its intention to release nearly all vaccine doses on hand right away, without keeping a reserve for second shots. This plan relies on vaccine manufacturing ramping up quickly enough for people to receive their second shots at the right intervals.

The Biden administration's shift appears to conflict with the FDA's recent position that second doses should be given on a strict schedule, without any departure from the three- and four-week intervals established in clinical trials. Two top FDA officials said in a statement that changing the dosing schedule "is premature and not rooted solidly in the available evidence. Without appropriate data supporting such changes in vaccine administration, we run a significant risk of placing public health at risk, undermining the historic vaccination efforts to protect the population from COVID-19."

"I understand the argument of trying to get at least partial protection to as many people as possible, but I am concerned about the increased interval between the doses that is now being proposed," said Kampmann. "I am not very happy about this change as it could lead to a large number of people out there with partial immunity and this could select new mutations, and escalate the potential problem of vaccine escape."

But it's worth emphasizing that the virus is unlikely for now to accumulate enough harmful mutations to render the current vaccines completely ineffective.

"It will be very hard for the virus to evolve to completely evade the antibody responses the vaccines induce," said Fuller. "The parts of the virus that are targeted by vaccine-induced antibodies are essential for the virus to infect our cells. If the virus tries to mutate these parts to evade antibodies, then it could compromise its own fitness or even abort its ability to infect. To be sure, the virus is developing these mutations, but we just don't see these variants emerge because they die out."