Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s calling was to win the battle against death, to reverse the course of nature. Granted, any cure for an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods: masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to be reckoned with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

Vaccines Without Vaccinations Won’t End the Pandemic

In this 2020 photograph, a bandage is placed on a patient who has just received a vaccine.

COVID-19 vaccine development has advanced at a record-setting pace, thanks to our nation's longstanding support for basic vaccine science coupled with massive public and private sector investments.

Yet, policymakers aren't according anywhere near the same level of priority to investments in the social, behavioral, and data science needed to better understand who and what influences vaccination decision-making. "If we want to be sure vaccines become vaccinations, this is exactly the kind of work that's urgently needed," says Dr. Bruce Gellin, President of Global Immunization at the Sabin Vaccine Institute.

Simply put: it's possible vaccines will remain in refrigerators and never reach the arms beneath rolled-up sleeves if we don't quickly ramp up vaccine confidence research and broadly disseminate the findings.

According to the most recent Gallup poll, the share of U.S. adults who say they would get a COVID-19 vaccine rose to 58 percent this month from 50 percent in September, with non-white Americans and those ages 45-65 even less willing to be vaccinated. While there is still much we don't understand about COVID-19, we do know that without high levels of immunity in the population, a return to some semblance of normalcy is wishful thinking.

Research from prior vaccination campaigns such as H1N1, HPV, and the annual flu points us in the right direction. Successful vaccination efforts require: 1) identifying the concerns of particular segments of the population; 2) tailoring messages and incentives to address those concerns; and 3) reaching out through trusted sources: health care providers, public health departments, and others in the community.

Research conducted during the H1N1 flu pandemic found that preparing people for some uncertainty actually improved trust, according to Dr. Sandra Crouse Quinn, professor and chair, Family Science, University of Maryland. Dr. Crouse Quinn's research during that period also underscored the need to address the specific vaccine concerns of racial and ethnic groups.

Data science has provided crucial insight about the social media universe. Dr. Neil Johnson, a scientist at George Washington University, found that despite having fewer followers, anti-vaccination pages are more numerous and growing faster than pro-vaccination pages. They are also linked to more often in discussions on other Facebook pages, such as those of school parent associations, where people are undecided about vaccination.

We've learned about building vaccine confidence from earlier campaigns. Now, however, we face a unique and challenging set of obstacles to unpack quickly: How do we communicate the importance of eventual COVID-19 vaccines to Americans in light of the muddled-to-poor messaging from political leaders, the weaponizing of relatively simple public health recommendations, the enormous, disproportionate toll on people of color, and the torrent of online misinformation? We urgently need data reflective of today's circumstances, along with the policy to ensure it is quickly and effectively disseminated to the public health and clinical workforce.

Last year, prompted in part by the measles outbreaks, Reps. Michael C. Burgess (R-TX) and Kim Schrier (D-WA), both physicians, introduced the bipartisan Vaccines Act to develop a national surveillance system to monitor vaccination rates and conduct a national campaign to increase awareness of the importance of vaccines. Unfortunately, that legislation wasn't passed. In response to COVID-19, Senate HELP Committee Ranking Member Patty Murray (D-WA) has sought funds to strengthen vaccine confidence and combat misinformation with federally supported communication, research, and outreach efforts. Leading experts outside of Congress have called for this type of research, including the Sabin-Aspen Vaccine Science Policy Institute. Most recently, the National Academy of Sciences, in its report regarding the equitable distribution of the COVID-19 vaccine, included as one of its recommendations the need for "a rapid-response program to advance the science behind vaccine confidence."

Addressing trust in vaccination has never been as challenging nor as consequential. The stunning scientific achievement of COVID-19 vaccines anticipated to be ready in record time needs to be backed up by an equally ambitious and evidence-based effort to build the public's confidence in the vaccines. In its remaining days, the Trump Administration should invest in building vaccine confidence with current resources, targeting efforts to ensure COVID vaccines reduce rather than exacerbate racial and ethnic health disparities. Congress must also act to provide the additional research and outreach resources needed as well as pass the Vaccines Act so we are better prepared in the future.

If we don't succeed, COVID-19 will continue wreaking havoc on our health, our society, and our economy. We will also permanently jeopardize public trust in vaccines – one of the most successful medical interventions in human history.

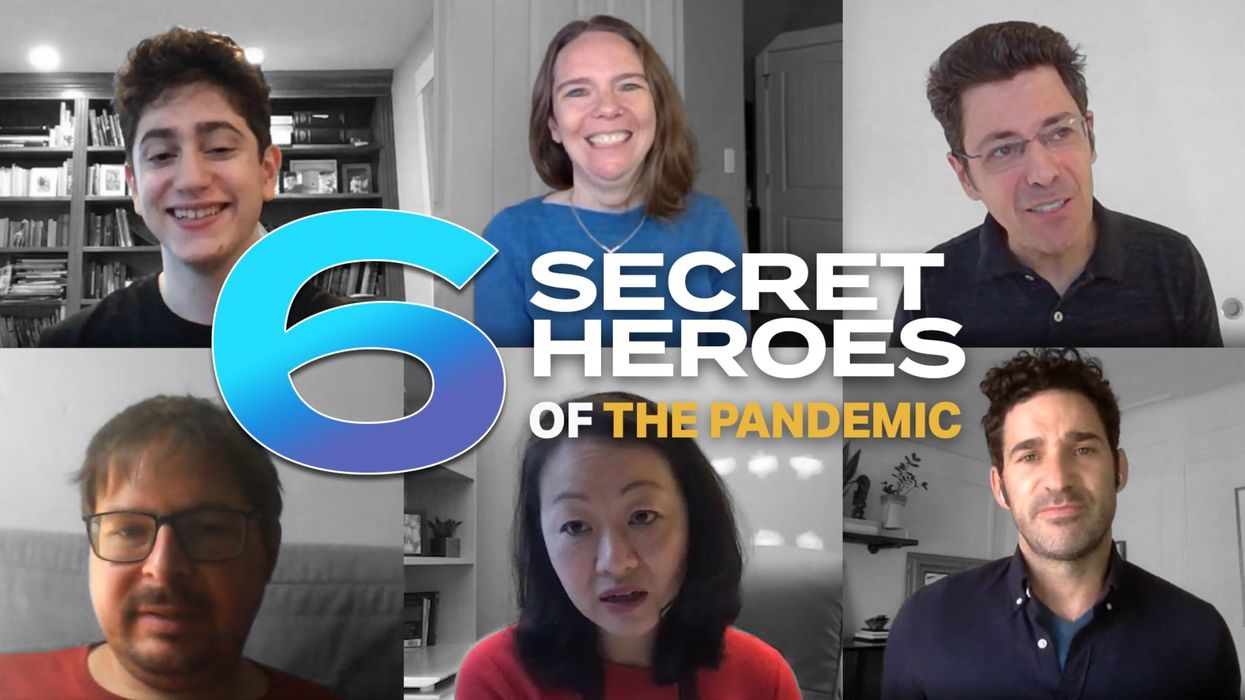

New Video: Secret Heroes of the Pandemic

These 6 people deserve to be widely known and celebrated for their brave and ingenious contributions to battling the pandemic.

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.