Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to reckon with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

Scientists turn pee into power in Uganda

With conventional fuel cells as their model, researchers learned to use similar chemical reactions to make a fuel from microbes in pee.

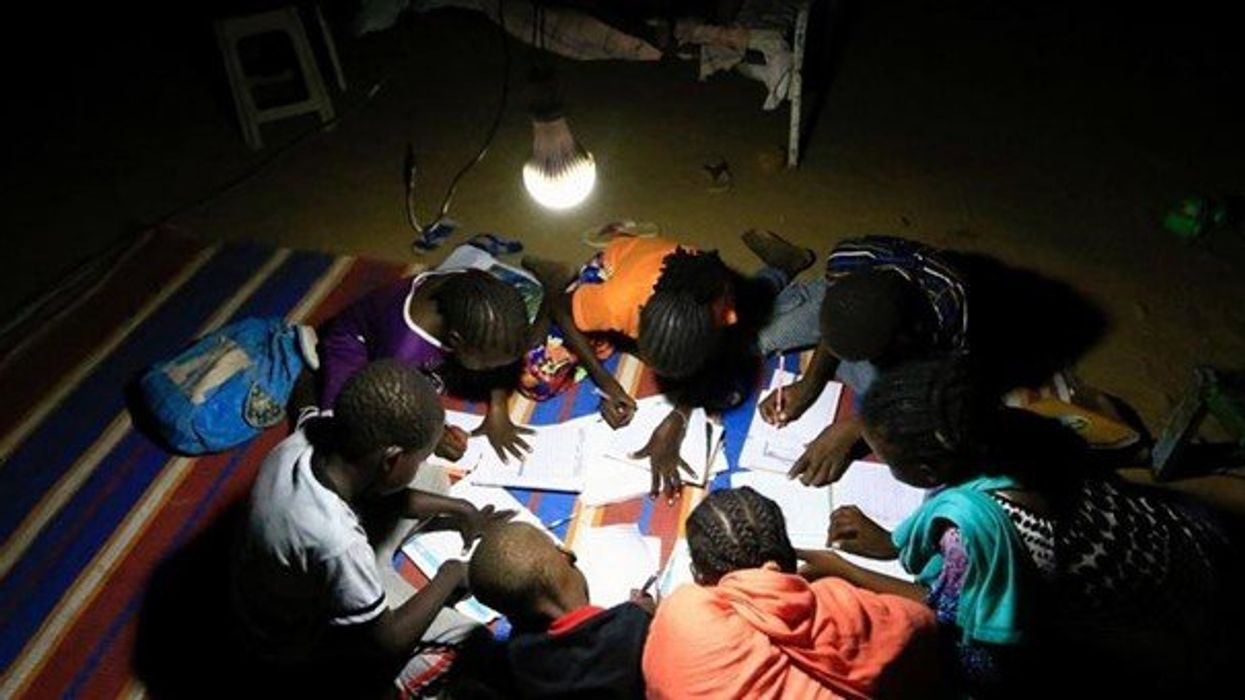

At the edge of a dirt road flanked by trees and green mountains outside the town of Kisoro, Uganda, sits the concrete building that houses Sesame Girls School, where girls aged 11 to 19 can live, learn and, at least for a while, safely use a toilet. In many developing regions, toileting at night is especially dangerous for children. Without electrical power for lighting, kids may fall into the deep pits of the latrines through broken or unsteady floorboards. Girls are sometimes assaulted by men who hide in the dark.

For the Sesame School girls, though, bright LED lights, connected to tiny gadgets, chased the fears away. They got to use new, clean toilets lit by the power of their own pee. Some girls even used the light provided by the latrines to study.

Urine, whether animal or human, is more than waste. It’s a cheap and abundant resource. Each day across the globe, 8.1 billion humans make 4 billion gallons of pee. Cows, pigs, deer, elephants and other animals add more. By spending money to get rid of it, we waste a renewable resource that can serve more than one purpose. Microorganisms that feed on nutrients in urine can be used in a microbial fuel cell that generates electricity – or "pee power," as the Sesame girls called it.

Plus, urine contains water, phosphorus, potassium and nitrogen, the key ingredients plants need to grow and survive. Human urine could replace about 25 percent of current nitrogen and phosphorus fertilizers worldwide and could save water for gardens and crops. The average U.S. resident flushes a toilet bowl containing only pee and paper about six to seven times a day, which adds up to about 3,500 gallons of water down the drain per year. Plus, cows in the U.S. produce 231 gallons of the stuff each year.
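As a rough sanity check on these figures, the arithmetic can be sketched in a few lines of Python. The snippet uses only numbers quoted in the article; the function and constant names are illustrative, and the result is a back-of-the-envelope estimate rather than a measured value.

```python
# Back-of-the-envelope checks using only figures quoted in the article.
# All names here are illustrative; nothing below is measured data.

HUMANS = 8.1e9                    # world population cited in the article
GLOBAL_PEE_GAL_PER_DAY = 4e9      # gallons of human urine per day, as cited
FLUSH_WATER_GAL_PER_YEAR = 3500   # water flushed per U.S. resident per year
FLUSHES_PER_DAY = (6, 7)          # "about six to seven times a day"
DAYS_PER_YEAR = 365


def pee_per_person_per_day() -> float:
    """Average gallons of urine per person per day implied by the global totals."""
    return GLOBAL_PEE_GAL_PER_DAY / HUMANS


def implied_gallons_per_flush(flushes_per_day: float) -> float:
    """Water per flush implied by the yearly total and a daily flush count."""
    return FLUSH_WATER_GAL_PER_YEAR / (flushes_per_day * DAYS_PER_YEAR)


if __name__ == "__main__":
    print(f"Urine per person: {pee_per_person_per_day():.2f} gal/day")
    for n in FLUSHES_PER_DAY:
        print(f"{n} flushes/day implies {implied_gallons_per_flush(n):.2f} gal per flush")
```

Under these figures, each person produces roughly half a gallon of urine a day, and the flushing total works out to somewhere between about 1.4 and 1.6 gallons of water per flush.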

Pee power

A conventional fuel cell uses chemical reactions to produce energy, as electrons move from one electrode to another to power a lightbulb or phone. Ioannis Ieropoulos, a professor and chair of Environmental Engineering at the University of Southampton in England, realized the same type of reaction could be used to make a fuel from microbes in pee.

Bacterial species like Shewanella oneidensis and Pseudomonas aeruginosa can consume carbon and other nutrients in urine and pop out electrons as a result of their digestion. In a microbial fuel cell, one electrode is covered in microbes, immersed in urine and kept away from oxygen. Another electrode is in contact with oxygen. When the microbes feed on nutrients, they produce electrons that flow through the circuit from one electrode to the other, where they combine with oxygen. As long as the microbes have fresh pee to chomp on, electrons keep flowing. And after the microbes are done with the pee, it can be used as fertilizer.

These microbes are easily found in wastewater treatment plants, ponds, lakes, rivers or soil. Keeping them alive is the easy part, says Ieropoulos. Once the cells start producing stable power, his group sequences the microbes and keeps using them.

As with many promising technologies, scaling these devices for mass consumption won’t be easy, says Kevin Orner, a civil engineering professor at West Virginia University. But it’s moving in the right direction. Ieropoulos’s device has shrunk from the size of about three packs of cards to that of a large glue stick. It looks and works much like a AAA battery and produces about the same power. By itself, one device can barely power a light bulb, but stacked together, the devices can do much more, just like photovoltaic cells in solar panels. His lab has produced 1,760 fuel cells stacked together, and with manufacturing support, there’s no theoretical ceiling, he says.

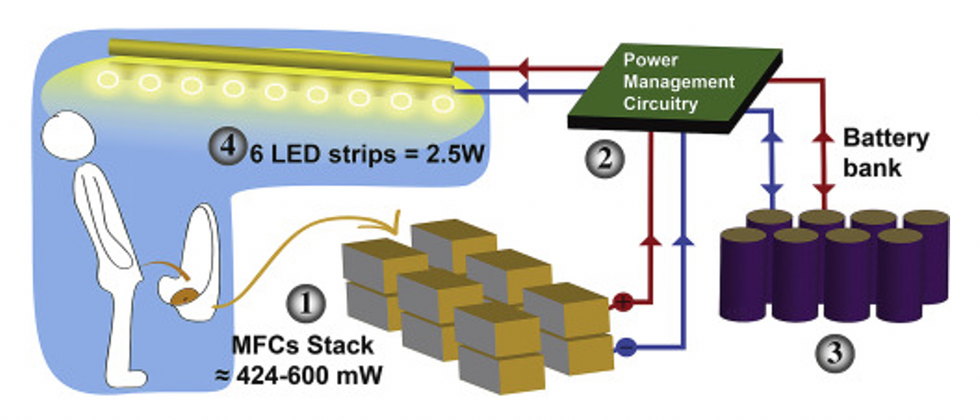

This image shows how the pee-powered system works. Pee feeds bacteria in the stack of fuel cells (1), which give off electrons (2) stored in parallel cylindrical cells (3). These cells are connected to a voltage regulator (4), which smooths out the electrical signal to ensure consistent power to the LED strips lighting the toilet.

Courtesy Ioannis Ieropoulos

Key to the long-term success of any urine reclamation effort, says Orner, is avoiding what he calls “parachute engineering”—when well-meaning scientists solve a problem with novel tech and then abandon it. “The way around that is to have either the need come from the community or to have an organization in a community that is committed to seeing a project operate and maintained,” he says.

Success with urine reclamation also depends on the economy. “If energy prices are low, it may not make sense to recover energy,” says Orner. “But right now, fertilizer prices worldwide are generally pretty high, so it may make sense to recover fertilizer and nutrients.” There are obstacles, too, such as few incentives for builders to incorporate urine recycling into new construction. And any hiccups like leaks or waste seepage will cost builders money and reputation. Right now, Orner says, the risks are just too high.

Despite the challenges, Ieropoulos envisions a future in which urine is passed through microbial fuel cells at wastewater treatment plants, retrofitted septic tanks, and building basements, and is then delivered to businesses to use as agricultural fertilizers. Although pure urine produces the most power, Ieropoulos’s devices also work with the mixed liquids of the wastewater treatment plants, so they can be retrofitted into urban wastewater utilities where they can make electricity from the effluent. And unlike solar cells, which are a common target of theft in some areas, nobody wants to steal a bunch of pee.

When Ieropoulos’s team returned to wrap up their pilot project 18 months later, the school’s director begged them to leave the fuel cells in place because they had made a major difference in students’ lives. “We replaced it with a substantial photovoltaic panel,” says Ieropoulos. They couldn’t leave the units forever, he explained, for intellectual property reasons: their funders worried about theft of both the technology and the idea. But the photovoltaic replacement could be stolen, too, leaving the girls in the dark.

The story repeated itself at another school, in Nairobi, Kenya, as well as in an informal settlement in Durban, South Africa. Each time, Ieropoulos vowed to return. Though the pandemic has delayed his promise, he is resolute about continuing his work—it is a moral and legal obligation. “We've made a commitment to ourselves and to the pupils,” he says. “That's why we need to go back.”

Urine as fertilizer

Modern-day industrial systems perpetuate a broken cycle of nutrients. When plants grow, they use up nutrients in the soil. We eat the plants and excrete some of the nutrients, which pass into rivers and oceans. As a result, farmers must keep fertilizing the fields while our waste keeps fertilizing the waterways, where algae, overfertilized with nitrogen, phosphorus and other nutrients, grow out of control, sucking up oxygen that other marine species need to live. Few communities around the globe remain untouched by the related challenges this broken chain creates: insufficient clean water, food, and energy, and too much human and animal waste.

One solution to this broken cycle is reclaiming urine and returning it back to the land. The Rich Earth Institute in Vermont is one of several organizations around the world working to divert and save urine for agricultural use. “The urine produced by an adult in one day contains enough fertilizer to grow all the wheat in one loaf of bread,” states their website.

Notably, while urine is not entirely sterile, it tends to harbor fewer pathogens than feces. That’s largely because urine has less organic matter and therefore less food for pathogens to feed on, but also because the urinary tract and the bladder have built-in antimicrobial defenses that kill many germs. In fact, the Rich Earth Institute says it’s safe to put your own urine onto crops grown for home consumption. Nonetheless, you’ll want to dilute it first because pee usually has too much nitrogen and can cause “fertilizer burn” if applied straight without dilution. Other projects to turn urine into fertilizer are in progress in Niger, South Africa, Kenya, Ethiopia, Sweden, Switzerland, The Netherlands, Australia, and France.

Eleven years ago, the Institute started a program that collects urine from homes and businesses, transports it for processing, and then supplies it as fertilizer to local farms. By 2021, the program included 180 donors producing over 12,000 gallons of urine each year. This urine is helping to fertilize hay fields at four partnering farms. Orner, the West Virginia professor, sees it as a success story. “They've shown how you can do this right: implementing it at a community-level scale.”

In this week's Friday Five, breathing this way may cut down on anxiety, a fasting regimen that could make you sick, this type of job makes men more virile, 3D printed hearts could save your life, and the role of metformin in preventing dementia.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five, featuring interviews with Dr. David Spiegel, associate chair of psychiatry and behavioral sciences at Stanford, and Dr. Filip Swirski, professor of medicine and cardiology at the Icahn School of Medicine at Mount Sinai.

- Breathing this way cuts down on anxiety*

- Could your fasting regimen make you sick?

- This type of job makes men more virile

- 3D printed hearts could save your life

- Yet another potential benefit of metformin

* This video with Dr. Andrew Huberman of Stanford shows exactly how to do the breathing practice.

This podcast originally aired on March 3, 2023.