Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to be reckoned with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

A Futuristic Suicide Machine Aims to End the Stigma of Assisted Dying

A prototype of the Sarco is currently on display at Venice Design.

Bob Dent ended his life in Perth, Australia in 1996 after multiple surgeries to treat terminal prostate cancer had left him mostly bedridden and in agony.

Although Dent and his immediate family believed it was the right thing to do, the physician who assisted in his suicide – and had pushed for Australia's Northern Territory to legalize the practice the prior year – was deeply shaken.

"When you get to know someone pretty well, and they set a date to have lunch with you and then have them die at 2 p.m., it's hard to forget," recalls Philip Nitschke.

Nitschke remembers being highly anxious that the device he designed – which released a fatal dose of Nembutal into a patient's bloodstream after they answered a series of questions on a laptop computer to confirm consent – wouldn't work. He was so alarmed by the prospect that he recalls his shirt being soaked through with perspiration.

Known as a "Deliverance Machine," it consisted of the computer, attached by a sheet of wiring to an attaché case containing an apparatus for delivering the Nembutal. Although gray, squat and grimly businesslike, it was vastly more sophisticated than Jack Kevorkian's Thanatron – a tangle of tubes, hooks and vials redolent of frontier dentistry.

The Deliverance Machine did work – for Dent and three other patients of Nitschke. However, it remained far from reassuring. "It's not a very comfortable feeling, having a little suitcase and going around to people," he says. "I felt a little like an executioner."

The furor caused in part by Nitschke's work led to Australia's federal government banning physician-assisted suicide in 1997. Nitschke went on to co-found Exit International, one of the foremost assisted suicide advocacy groups, and relocated to the Netherlands.

Exit International recently introduced its most ambitious initiative to date. It's called the Sarco — essentially the Eames lounger of suicide machines. A prototype is currently on display at Venice Design, an adjunct to the Biennale.

Sheathed in a soothing blue coating, the Sarco prototype contains a window and pivots on a pedestal to allow viewing by friends and family. Its close quarters mean the opening of a small canister of liquid nitrogen would cause quick and painless asphyxiation. Patrons with second thoughts can press a button to cancel the process.

"The sleek and colorful death-pod looks like it is about to whisk you away to a new territory, or that it just landed after being launched from a Star Trek federation ship," says Charles C. Camosy, associate professor of theological and social ethics at Fordham University in New York City, in an email. Camosy, who has profound misgivings about such a device, was not being complimentary.

Nitschke's goal is to de-medicalize assisted suicide, as liquid nitrogen is readily available. But he suggests employing a futuristic design will also move debate on the issue forward.

"You pick the time...have the party and people come around. You climb in, you are going somewhere, you are leaving, and you are saying goodbye," he says. "It lends itself to a sense of occasion."

Assisted suicide is spreading in developed countries, but very slowly. It was legalized again in Australia just last June, but only in one of its six states. It is legal throughout Canada and in nine U.S. states.

Although the process is outlawed throughout much of Europe, nations permitting it have taken a liberal approach. Euthanasia — where death may be instigated by an assenting physician at a patient's request — is legal in both Belgium and the Netherlands. A terminal illness is not required; a severe disability or a condition causing profound misery may suffice.

Only Switzerland permits suicide with non-physician assistance regardless of an individual's medical condition. David Goodall, a 104-year-old Australian scientist, traveled 8,000 miles to Basel last year to die with Exit International's assistance. Goodall was in good health for his age and his mind was needle sharp; at a news conference the day before he passed, he thoughtfully answered questions and sang Beethoven's "Ode to Joy" from memory. He simply believed he had lived long enough and wanted to avoid a diminishing quality of life.

"Dying is not a medical process, and if you've decided to do this through rational [decision-making], you should not have to get permission from the medical profession," Nitschke says.

However, the deathstyle aspirations of the Sarco belie the fact that obtaining one will not be as simple as swiping a credit card. To create a legal firewall, anyone wishing to obtain a Sarco would have to purchase the plans, print the device themselves (which requires a high-end industrial printer), then assemble it. As with the Deliverance device, the end user must be able to answer computer-generated questions designed by a Swiss psychiatrist to determine if they are making a rational decision. The process concludes with the transmission of a four-digit code to make the Sarco operational.

As with many cutting-edge designs, the path to a working prototype has been nettlesome. Plans for a printed window have been abandoned. How it will be obtained by end users remains unclear. There have also been complications in creating an AI-based algorithm underlying the user questions to reliably determine if the individual is of sound mind.

While Nitschke believes the Sarco will be deployed in Switzerland for the first time sometime next year, it will almost certainly be a subject of immense controversy. The Hastings Center, one of the world's major bioethics organizations and a leader on end-of-life decision-making, flatly refused to comment on the Sarco.

Camosy strongly condemns it. He notes that since U.S. life expectancy is actually shortening, with despair-driven suicide playing a role, efforts must be marshaled to mitigate the trend. To him, the Sarco sends an utterly wrong message.

"Most people who request help in killing themselves don't do so because they are in intense, unbearable pain," he observes. "They do it because the culture in which they live has made them feel like a burden. This culture has told them they only have value if they are able to be 'productive' and 'contribute to society.'" He adds that the large majority of disability activists have been against assisted suicide and euthanasia because it is imperative to their movement that a stigma remain in place.

"It is diabolical that we would create machines to make it easier for people to kill themselves," Camosy concludes. "And anyone with even a single progressive bone in their body should resist this disturbingly morbid profit-making venture with everything they have."

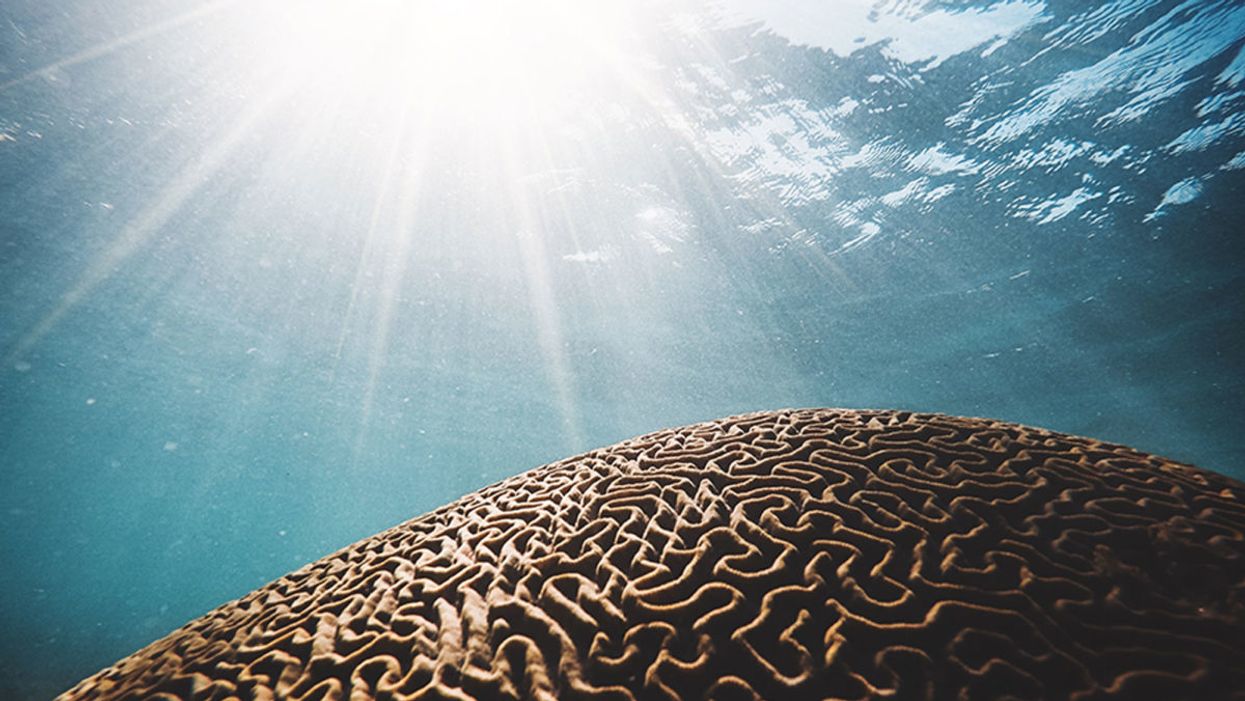

Biologists are Growing Mini-Brains. What If They Become Conscious?

Brain coral metaphorically represents the concept of growing organoids in a dish that may eventually attain consciousness--if scientists can first determine what that means.

Few images are more uncanny than that of a brain without a body, fully sentient but afloat in sterile isolation. Such specters have spooked the speculatively minded since the seventeenth century, when René Descartes declared, "I think, therefore I am."

In Meditations on First Philosophy (1641), the French penseur spins a chilling thought experiment: he imagines "having no hands or eyes, or flesh, or blood or senses," but being tricked by a demon into believing he has all these things, and a world to go with them. A disembodied brain itself becomes a demon in the classic young-adult novel A Wrinkle in Time (1962), using mind control to subjugate a planet called Camazotz. In the sci-fi blockbuster The Matrix (1999), most of humanity endures something like Descartes' nightmare: they are kept in womblike pods by their computer overlords, who fill the captives' brains with a synthesized reality while tapping their metabolic energy as a power source.

Since August 29, 2019, however, the prospect of a bodiless but functional brain has begun to seem far less fantastical. On that date, researchers at the University of California, San Diego published a study in the journal Cell Stem Cell, reporting the detection of brainwaves in cerebral organoids—pea-size "mini-brains" grown in the lab. Such organoids had emitted random electrical impulses in the past, but not these complex, synchronized oscillations. "There are some of my colleagues who say, 'No, these things will never be conscious,'" lead researcher Alysson Muotri, a Brazilian-born biologist, told The New York Times. "Now I'm not so sure."

Alysson Muotri has no qualms about his creations attaining consciousness as a side effect of advancing medical breakthroughs.

(Credit: ZELMAN STUDIOS)

Muotri's findings—and his avowed ambition to push them further—brought new urgency to simmering concerns over the implications of brain organoid research. "The closer we come to his goal," said Christof Koch, chief scientist and president of the Allen Brain Institute in Seattle, "the more likely we will get a brain that is capable of sentience and feeling pain, agony, and distress." At the annual meeting of the Society for Neuroscience, researchers from the Green Neuroscience Laboratory in San Diego called for a partial moratorium, warning that the field was "perilously close to crossing this ethical Rubicon and may have already done so."

Yet experts are far from a consensus on whether brain organoids can become conscious, whether that development would necessarily be dreadful—or even how to tell if it has occurred.

So how worried do we need to be?

***

An organoid is a miniaturized, simplified version of an organ, cultured from various types of stem cells. Scientists first learned to make them in the 1980s, and have since turned out mini-hearts, lungs, kidneys, intestines, thyroids, and retinas, among other wonders. These creations can be used for everything from observation of basic biological processes to testing the effects of gene variants, pathogens, or medications. They enable researchers to run experiments that might be less accurate using animal models and unethical or impractical using actual humans. And because organoids are three-dimensional, they can yield insights into structural, developmental, and other matters that an ordinary cell culture could never provide.

In 2006, Japanese biologist Shinya Yamanaka developed a mix of proteins that turned skin cells into "pluripotent" stem cells, which could subsequently be transformed into neurons, muscle cells, or blood cells. (He later won a Nobel Prize for his efforts.) Developmental biologist Madeline Lancaster, then a post-doctoral student at the Institute of Molecular Biotechnology in Vienna, adapted that technique to grow the first brain organoids in 2013. Other researchers soon followed suit, cultivating specialized mini-brains to study disorders ranging from microcephaly to schizophrenia.

Muotri, now a youthful 45-year-old, was among the boldest of these pioneers. His team revealed the process by which Zika virus causes brain damage, and showed that sofosbuvir, a drug previously approved for hepatitis C, protected organoids from infection. He persuaded NASA to fly his organoids to the International Space Station, where they're being used to trace the impact of microgravity on neurodevelopment. He grew brain organoids using cells implanted with Neanderthal genes, and found that their wiring differed from organoids with modern DNA.

Like the latter experiment, Muotri's brainwave breakthrough emerged from a longtime obsession with neuroarchaeology. "I wanted to figure out how the human brain became unique," he told me in a phone interview. "Compared to other species, we are very social. So I looked for conditions where the social brain doesn't function well, and that led me to autism." He began investigating how gene variants associated with severe forms of the disorder affected neural networks in brain organoids.

Tinkering with chemical cocktails, Muotri and his colleagues were able to keep their organoids alive far longer than earlier versions, and to culture more diverse types of brain cells. One team member, Priscilla Negraes, devised a way to measure the mini-brains' electrical activity, by planting them in a tray lined with electrodes. By four months, the researchers found to their astonishment, normal organoids (but not those with an autism gene) emitted bursts of synchronized firing, separated by 20-second silences. At nine months, the organoids were producing up to 300,000 spikes per minute, across a range of frequencies.

When the team used an artificial intelligence system to compare these patterns with EEGs of gestating fetuses, the program found them to be nearly identical at each stage of development. As many scientists noted when the news broke, that didn't mean the organoids were conscious. (Their chaotic bursts bore little resemblance to the orderly rhythms of waking adult brains.) But to some observers, it suggested that they might be approaching the borderline.

***

Shortly after Muotri's team published their findings, I attended a conference at UCSD on the ethical questions they raised. The scientist, in jeans and a sky-blue shirt, spoke rhapsodically of brain organoids' potential to solve scientific mysteries and lead to new medical treatments. He showed video of a spider-like robot connected to an organoid through a computer interface. The machine responded to different brainwave patterns by walking or stopping—the first stage, Muotri hoped, in teaching organoids to communicate with the outside world. He described his plans to develop organoids with multiple brain regions, and to hook them up to retinal organoids so they could "see." He shared his vision for "brain farms," which would grow organoids en masse for drug development or tissue transplants.

Muotri holds a spider-like robot that can connect to an organoid through a computer interface.

(Credit: ROLAND LIZARONDO/KPBS)

Yet Muotri also stressed the current limitations of the technology. His organoids contain approximately 2 million neurons, compared to about 200 million in a rat's brain and 86 billion in an adult human's. They consist only of a cerebral cortex, and lack many of a real brain's cell types. Because researchers haven't yet found a way to give organoids blood vessels, moreover, nutrients can't penetrate their inner recesses—a severe constraint on their growth.

Another panelist strongly downplayed the imminence of any Rubicon. Patricia Churchland, an eminent philosopher of neuroscience, cited research suggesting that in mammals, networked connections between the cortex and the thalamus are a minimum requirement for consciousness. "It may be a blessing that you don't have the enabling conditions," she said, "because then you don't have the ethical issues."

Christof Koch, for his part, sounded much less apprehensive than the Times had made him seem. He noted that science lacks a definition of consciousness, beyond an organism's sense of its own existence—"the fact that it feels like something to be you or me." As to the competing notions of how the phenomenon arises, he explained, he prefers one known as Integrated Information Theory, developed by neuroscientist Giulio Tononi. IIT considers consciousness to be a quality intrinsic to systems that reach a certain level of complexity, integration, and causal power (the ability for present actions to determine future states). By that standard, Koch doubted that brain organoids had stepped over the threshold.

One way to tell, he said, might be to use the "zap and zip" test invented by Tononi and his colleague Marcello Massimini in the early 2000s to determine whether patients are conscious in the medical sense. This technique zaps the brain with a pulse of magnetic energy, using a coil held to the scalp. As loops of neural impulses cascade through the cerebral circuitry, an EEG records the firing patterns. In a waking brain, the feedback is highly complex—neither totally predictable nor totally random. In other states, such as sleep, coma, or anesthesia, the rhythms are simpler. Applying an algorithm commonly used for computer "zip" files, the researchers devised a scale that allowed them to correctly diagnose most patients who were minimally conscious or in a vegetative state.
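To make the "zip" half of that idea concrete, here is a rough Python sketch of how compressibility can stand in for complexity. It is only an illustration under loud assumptions: the two recordings are made up rather than real EEG data, the helper names `binarize` and `compression_ratio` are hypothetical, and the standard `zlib` module is swapped in for whatever algorithm the researchers actually used.

```python
import random
import zlib


def binarize(samples, threshold):
    """Map each sample to '1' if its magnitude exceeds the threshold, else '0'."""
    return "".join("1" if abs(x) > threshold else "0" for x in samples)


def compression_ratio(bits):
    """Compressed size over raw size: near 0 for repetitive input, larger for irregular input."""
    raw = bits.encode("ascii")
    return len(zlib.compress(raw, 9)) / len(raw)


# Two made-up recordings of 4,000 samples each (assumed data, not real EEG).
rng = random.Random(0)  # fixed seed so the example is repeatable
rhythmic = [1.0 if i % 2 == 0 else 0.0 for i in range(4000)]  # simple, repeating pattern
irregular = [rng.random() for _ in range(4000)]               # no structure at all

simple_score = compression_ratio(binarize(rhythmic, 0.5))
irregular_score = compression_ratio(binarize(irregular, 0.5))

print(f"rhythmic:  {simple_score:.3f}")
print(f"irregular: {irregular_score:.3f}")
```

The repeating signal shrinks to almost nothing, while the irregular one resists compression. Note the limit of this toy version: on its own, a compression ratio would rate pure noise as the most "complex" signal of all, whereas the test described above looks for responses that are neither totally predictable nor totally random. The clinical scale is computed on the brain's response to the magnetic pulse and involves more than this sketch shows.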

If scientists could find a way to apply "zap and zip" to brain organoids, Koch ventured, it should be possible to rank their degree of awareness on a similar scale. And if it turned out that an organoid was conscious, he added, our ethical calculations should strive to minimize suffering, and avoid it where possible—just as we now do, or ought to, with animal subjects. (Muotri, I later learned, was already contemplating sensors that would signal when organoids were likely in distress.)

During the question-and-answer period, an audience member pressed Churchland about how her views might change if the "enabling conditions" for consciousness in brain organoids were to arise. "My feeling is, we'll answer that when we get there," she said. "That's an unsatisfying answer, but it's because I don't know. Maybe they're totally happy hanging out in a dish! Maybe that's the way to be."

***

Muotri himself admits to no qualms about his creations attaining consciousness, whether sooner or later. "I think we should try to replicate the model as close as possible to the human brain," he told me after the conference. "And if that involves having a human consciousness, we should go in that direction." Still, he said, if strong evidence of sentience does arise, "we should pause and discuss among ourselves what to do."

Churchland figures it will be at least a decade before anyone reaches the crossroads. "That's partly because the thalamus has a very complex architecture," she said. It might be possible to mimic that architecture in the lab, she added, "but I tend to think it's not going to be a piece of cake."

If anything worries Churchland about brain organoids, in fact, it's that Muotri's visionary claims for their potential could set off a backlash among those who find them unacceptably spooky. "Alysson has done brilliant work, and he's wonderfully charismatic and charming," she said. "But then there's that guy back there who doesn't think it's exciting; he thinks you're the Devil incarnate. You're playing into the hands of people who are going to shut you down."

Koch, however, is more willing to indulge Muotri's dreams. "Ten years ago," he said, "nobody would have believed you can take a stem cell and get an entire retina out of it. It's absolutely frigging amazing. So who am I to say the same thing can't be true for the thalamus or the cortex? The field is moving so rapidly, you blink your eyes and another advance has occurred."

The point, he went on, is not to build a Cartesian thought experiment—or a Matrix-style dystopia—but to vanquish some of humankind's most terrifying foes. "You know, my dad passed away of Parkinson's. I had a twin daughter; she passed away of sudden death syndrome. One of my best friends killed herself; she was schizophrenic. We want to eliminate all these terrible things, and that requires experimentation. We just have to go into it with open eyes."