Bad Actors Getting Your Health Data Is the FBI’s Latest Worry

In February 2015, the health insurer Anthem revealed that criminal hackers had gained access to the company's servers, exposing the personal information of nearly 79 million patients. It's the largest known healthcare breach in history.

That year, the data of millions more would be compromised in one cyberattack after another on American insurers and other healthcare organizations. In fact, for the past several years, the number of reported data breaches has increased each year, from 199 in 2010 to 344 in 2017, according to a September 2018 analysis in the Journal of the American Medical Association.

The FBI's Edward You sees this as a worrying trend. He says hackers aren't just interested in your Social Security or credit card number. They're increasingly interested in stealing your medical information. Hackers can currently use this information to make fake identities, file fraudulent insurance claims, and order and sell expensive drugs and medical equipment. But beyond that, a new kind of cybersecurity threat is around the corner.

Mr. You and others worry that the vast amounts of healthcare data being generated for precision medicine efforts could leave the U.S. vulnerable to cyber and biological attacks. In the wrong hands, this data could be used to exploit or extort an individual, discriminate against certain groups of people, make targeted bioweapons, or give another country an economic advantage.

Precision medicine, of course, is the idea that medical treatments can be tailored to individuals based on their genetics, environment, lifestyle or other traits. But to do that requires collecting and analyzing huge quantities of health data from diverse populations. One research effort, called All of Us, launched by the U.S. National Institutes of Health last year, aims to collect genomic and other healthcare data from one million participants with the goal of advancing personalized medical care.

Other initiatives are underway by academic institutions and healthcare organizations. Electronic medical records, genetic tests, wearable health trackers, mobile apps, and social media are all sources of valuable healthcare data that a bad actor could potentially use to learn more about an individual or group of people.

"When you aggregate all of that data together, that becomes a very powerful profile of who you are," Mr. You says.

As a supervisory special agent in the biological countermeasures unit within the FBI's weapons of mass destruction directorate, Mr. You is tasked with imagining worst-case bioterror scenarios and figuring out how to prevent and prepare for them.

That used to mean focusing on threats like anthrax, Ebola, and smallpox—pathogens that could be used to intentionally infect people—"basically the dangerous bugs," as he puts it. In recent years, advances in gene editing and synthetic biology have given rise to fears that rogue, or even well-intentioned, scientists could create a virulent virus that's intentionally, or unintentionally, released outside the lab.

While Mr. You is still tracking those threats, he's been traveling around the country talking to scientists, lawyers, software engineers, cyber security professionals, government officials and CEOs about new security threats—those posed by genetic and other biological data.

Emerging threats

Mr. You says one possible situation he can imagine is the potential for nefarious actors to use an individual's sensitive medical information to extort or blackmail that person.

"If a foreign source, especially a criminal one, has your biological information, then they might have some particular insights into what your future medical needs might be and exploit that," he says. For instance, "what happens if you have a singular medical condition and an outside entity says they have a treatment for your condition?" You could get talked into paying a huge sum of money for a treatment that ends up being bogus.

Or what if hackers got hold of the health records of a politician or high-profile CEO? Say that person had a disease-causing genetic mutation that could affect their ability to carry out their job in the future and hackers threatened to expose that information. These scenarios may seem far-fetched, but Mr. You thinks they're becoming increasingly plausible.

On a wider scale, Kavita Berger, a scientist at Gryphon Scientific, a Washington, D.C.-area life sciences consulting firm, worries that data from different populations could be used to discriminate against certain groups of people, like minorities and immigrants.

For instance, the advocacy group Human Rights Watch in 2017 flagged a concerning trend in China's Xinjiang territory, a region with a history of government repression. Police there had purchased 12 DNA sequencers and were collecting and cataloging DNA samples from people to build a national database.

"The concern is that this particular province has a huge population of the Muslim minority in China," Ms. Berger says. "Now they have a really huge database of genetic sequences. You have to ask, why does a police station need 12 next-generation sequencers?"

Also alarming is the potential that large amounts of data from different groups of people could lead to customized bioweapons if that data ends up in the wrong hands.

Eleonore Pauwels, a research fellow on emerging cybertechnologies at United Nations University's Centre for Policy Research, says new insights gained from genomic and other data will give scientists a better understanding of how diseases occur and why certain people are more susceptible to certain diseases.

"As you get more and more knowledge about the genomic picture and how the microbiome and the immune system of different populations function, you could get a much deeper understanding about how you could target different populations for treatment but also how you could eventually target them with different forms of bioagents," Ms. Pauwels says.

Economic competitiveness

Another reason hackers might want to gain access to large genomic and other healthcare datasets is to give their country a leg up economically. Many large cyber-attacks on U.S. healthcare organizations have been tied to Chinese hacking groups.

"It's becoming clear that China is increasingly interested in getting access to massive data sets that come from different countries," Ms. Pauwels says.

A year after U.S. President Barack Obama conceived of the Precision Medicine Initiative in 2015—later renamed All of Us—China followed suit, announcing the launch of a 15-year, $9 billion precision health effort aimed at turning China into a global leader in genomics.

Chinese genomics companies, too, are expanding their reach outside of Asia. One company, WuXi NextCODE, which has offices in Shanghai, Reykjavik, and Cambridge, Massachusetts, has built an extensive library of genomes from the U.S., China and Iceland, and is now setting its sights on Ireland.

Another Chinese company, BGI, has partnered with Children's Hospital of Philadelphia and Sinai Health System in Toronto, and also formed a collaboration with the Smithsonian Institution to sequence all species on the planet. BGI has built its own advanced genomic sequencing machines to compete with U.S.-based Illumina.

Mr. You says having access to all this data could lead to major breakthroughs in healthcare, such as new blockbuster drugs. "Whoever has the largest, most diverse dataset is truly going to win the day and come up with something very profitable," he says.

Some direct-to-consumer genetic testing companies with offices in the U.S., like Dante Labs, also use BGI to process customers' DNA.

Experts worry that China could race ahead of the U.S. in precision medicine because of Chinese laws governing data sharing. Currently, China prohibits the export of genetic data without explicit permission from the government. Mr. You says this creates an asymmetry in data sharing between the U.S. and China.

"This is a biological space race and we just haven't woken up to the fact that we're in this race," he said in January at an American Society for Microbiology conference in Washington, D.C. "We don't have access to their data. There is absolutely no reciprocity."

Protecting your data

While Mr. You has been stressing the importance of data security to anyone who will listen, the National Academies of Sciences, Engineering, and Medicine, which makes scientific and policy recommendations on issues of national importance, has commissioned a study on "safeguarding the bioeconomy."

In the meantime, Ms. Berger says organizations that deal with people's health data should assess their security risks and identify potential vulnerabilities in their systems.

As for what individuals can do to protect themselves, she urges people to think about the different ways they're sharing healthcare data—such as via mobile health apps and wearables.

"Ask yourself, what's the benefit of sharing this? What are the potential consequences of sharing this?" she says.

Mr. You also cautions people to think twice before taking consumer DNA tests. They may seem harmless, he says, but at the end of the day, most people don't know where their genetic information is going. "If your genetic sequence is taken, once it's gone, it's gone. There's nothing you can do about it."

Researchers advance drugs that treat pain without addiction

New therapies are using creative approaches that target the body’s sensory neurons, which send pain signals to the brain.

Opioids are one of the most common ways to treat pain. They can be effective but are also highly addictive, an issue that has fueled the ongoing opioid crisis. In 2020, an estimated 2.3 million Americans were dependent on prescription opioids.

Opioids bind to receptors at the end of nerve cells in the brain and body to prevent pain signals. In the process, they trigger endorphins, so the brain constantly craves more. There is a huge risk of addiction in patients using opioids for chronic long-term pain. Even patients using the drugs for acute short-term pain can become dependent on them.

Scientists have been looking for non-addictive drugs to target pain for over 30 years, but their attempts have been largely ineffective. “We desperately need alternatives for pain management,” says Stephen E. Nadeau, a professor of neurology at the University of Florida.

A “dimmer switch” for pain

Paul Blum is a professor of biological sciences at the University of Nebraska. He and his team at Neurocarrus have created a drug called N-001 for acute short-term pain. N-001 is made up of specially engineered bacterial proteins that target the body’s sensory neurons, which send pain signals to the brain. The proteins in N-001 turn down pain signals, but they’re too large to cross the blood-brain barrier, so they don’t trigger the release of endorphins. There is no chance of addiction.

When sensory neurons detect pain, they become overactive and send pain signals to the brain. “We wanted a way to tone down sensory neurons but not turn them off completely,” Blum reveals. The proteins in N-001 act “like a dimmer switch, and that's key because pain is sensation overstimulated.”

Blum spent six years developing the drug. He finally managed to identify two proteins that form what’s called a C2C complex that changes the structure of a subunit of axons, the parts of neurons that transmit electrical signals of pain. Changing the structure reduces pain signaling.

Blum is currently focusing on pain after knee and ankle surgery. Typically, patients are treated with anesthetics for a short time after surgery. But anesthetics usually only last for 4 to 6 hours, and long-term use is toxic. For some, the pain subsides. Others continue to suffer after the anesthetics have worn off and start taking opioids.

N-001 numbs sensation. It lasts for up to 7 days, much longer than any anesthetic. “Our goal is to prolong the time before patients have to start opioids,” Blum says. “The hope is that they can switch from an anesthetic to our drug and thereby decrease the likelihood they're going to take the opioid in the first place.”

Their latest animal trial showed promising results. In mice, N-001 reduced pain-like behaviour by 90 percent compared to the control group. One dose became effective in two hours and lasted a week. A high dose had pain-relieving effects similar to an opioid.

Professor Stephen P. Cohen, director of pain operations at Johns Hopkins, believes the Neurocarrus approach has potential but highlights the need to go beyond animal testing. “While I think it's promising, it's an uphill battle,” he says. “They have shown some efficacy comparable to opioids, but animal studies don't translate well to people.”

Nadeau, the University of Florida neurologist, agrees. “It will be a long path to get to a successful clinical trial in humans. But it presents a very novel approach to pain reduction.”

Blum is now awaiting approval for phase I clinical trials for acute pain. He also hopes to start testing the drug's effect on chronic pain.

Learning from people who feel no pain

Like Blum, a pharmaceutical company called Vertex is focusing on treating acute pain after surgery. But they’re doing this in a different way, by targeting a sodium channel that plays a critical role in transmitting pain signals.

In 2004, Stephen Waxman, a neurology professor at Yale, led a search for genetic pain anomalies and found that biologically related people who felt no pain despite fractures, burns and even childbirth had mutations in the Nav1.7 sodium channel. Further studies in other families who experienced no pain showed similar mutations in the Nav1.8 sodium channel.

Scientists set out to modify these channels. Many unsuccessful efforts followed, but Vertex has now developed VX-548, a medicine to inhibit Nav1.8. Typically, sodium ions flow through sodium channels to generate rapid changes in voltage which create electrical pulses. When pain is detected, these pulses in the Nav1.8 channel transmit pain signals. VX-548 uses small molecules to inhibit the channel from opening. This blocks the flow of sodium ions and the pain signal. Because Nav1.8 operates only in peripheral nerves, located outside the brain, VX-548 can relieve pain without any risk of addiction.

The team just finished phase II clinical trials for patients following abdominoplasty surgery and bunionectomy surgery.

After abdominoplasty surgery, 76 patients were treated with a high dose of VX-548. Researchers then measured its effectiveness in reducing pain over 48 hours, using the SPID48 scale, in which higher scores are desirable. The score for Vertex’s drug was 110.5 compared to 72.7 in the placebo group, whereas the score for patients taking an opioid was 85.2. The study involving bunionectomy surgery showed positive results as well.

Waxman, who has been at the forefront of studies into Nav1.7 and Nav1.8, believes that Vertex's results are promising, though he highlights the need for further clinical trials.

“Blocking Nav1.8 is an attractive target,” he says. “[Vertex is] studying pain that is relatively simple and uniform, and that's key to having a drug trial that is informative. But the study needs to be replicated and frankly we need drugs for chronic pain more than acute pain. If this is borne out by additional studies, it's one important step in a journey.”

Vertex will be launching phase III trials later this year.

Finding just the right amount of Nerve Growth Factor

Whereas Neurocarrus and Vertex are targeting short-term pain, a company called Levicept is concentrating on relieving chronic osteoarthritis pain. Around 32.5 million Americans suffer from osteoarthritis. Patients commonly take NSAIDs, or non-steroidal anti-inflammatory drugs, but they cannot be taken long-term. Some take opioids but they aren't very effective.

Levicept’s drug, Levi-04, is designed to modify a signaling pathway associated with pain. Nerve Growth Factor (NGF) is a neurotrophin: it’s involved in nerve growth and function. NGF signals by attaching to receptors. In pain there are excess neurotrophins attaching to receptors and activating pain signals.

“What Levi-04 does is it returns the natural equilibrium of neurotrophins,” says Simon Westbrook, the CEO and founder of Levicept. It stabilizes excess neurotrophins so that the NGF pathway does not signal pain. Levi-04 isn't addictive since it works within joints and in nerves outside the brain.

Westbrook was initially involved in creating an anti-NGF molecule for Pfizer called Tanezumab. At first, Tanezumab seemed effective in clinical trials and other companies even started developing their own versions. However, a problem emerged. Tanezumab caused rapidly progressive osteoarthritis, or RPOA, in some patients because it completely removed NGF from the system. NGF is not just involved in pain signalling, it’s also involved in bone growth and maintenance.

Levicept has found a way to modify the NGF pathway without completely removing NGF. They have now finished a small-scale phase I trial mainly designed to test safety rather than efficacy. “We demonstrated that Levi-04 is safe and that it bound to its target, NGF,” says Westbrook. It has not caused RPOA.

Professor Philip Conaghan, director of the Leeds Institute of Rheumatic and Musculoskeletal Medicine, believes that Levi-04 has potential but urges the need for caution. “At this early stage of development, their molecule looks promising for osteoarthritis pain,” he says. “They will have to watch out for RPOA which is a potential problem.”

Westbrook starts phase II trials with 500 patients this summer to check for potential side effects and test the drug’s efficacy.

There is a real push to find an effective alternative to opioids. “We have a lot of work to do,” says Professor Waxman. “But I am confident that we will be able to develop new, much more effective pain therapies.”

Tech-related injuries are becoming more common as many people depend on, and often develop addictions to, smartphones and computers.

In the 1990s, a mysterious virus spread throughout the Massachusetts Institute of Technology Artificial Intelligence Lab—or that’s what the scientists who worked there thought. More of them rubbed their aching forearms and massaged their cricked necks as new computers were introduced to the AI Lab on a floor-by-floor basis. They realized their musculoskeletal issues coincided with the arrival of these new computers—some of which were mounted high up on lab benches in awkward positions—and the hours spent typing on them.

Today, these injuries have become more common in a society awash with smart devices, sleek computers, and other gadgets. And we don’t just get hurt from typing on desktop computers; we’re massaging our sore wrists from hours of texting and FaceTiming on phones, especially as they get bigger.

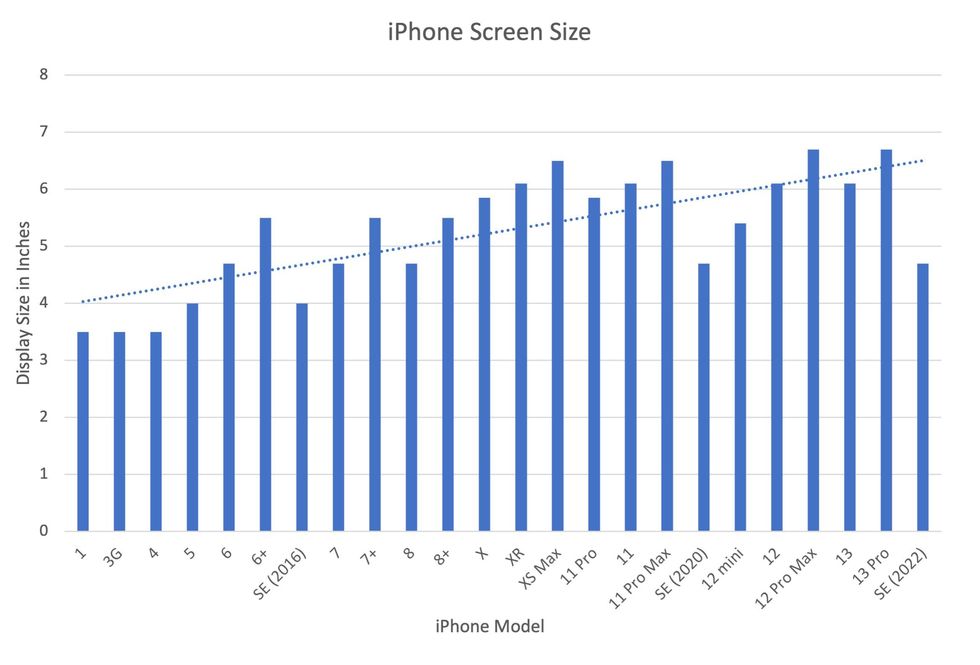

In 2007, the first iPhone measured 3.5 inches diagonally, a measurement known as the display size. That’s been nearly doubled by the newest iPhone 13 Pro Max, which has a 6.7-inch display. Other phones, too, like the Google Pixel 6 and the Samsung Galaxy S22, have bigger screens than their predecessors. Physical therapists and orthopedic surgeons have had to come up with names for a variety of new conditions: selfie elbow, tech neck, texting thumb. Orthopedic surgeon Sonya Sloan says she sees selfie elbow in younger kids and in women more often than men. She hears complaints related to technology once or twice a day.

The addictive quality of smartphones and social media means that people spend more time on their devices, which exacerbates injuries. According to Statista, 68 percent of those surveyed spent over three hours a day on their phone, and almost half spent five to six hours a day. Another report showed that people dedicate a third of their day to checking their phones, while the Media Effects Research Laboratory at Pennsylvania State University has found that bigger screens, ideal for entertainment purposes, immerse their users more than smaller screens. Oversized screens also provide easier navigation and more space for those with bigger hands or trouble seeing.

But others with conditions like arthritis can benefit from smaller phones. In March of 2016, Apple released the iPhone SE with a display size of 4 inches, smaller than the 4.7-inch iPhone 7 released that September. Apple has since come out with two more versions of the diminutive iPhone SE, one in 2020 and another in 2022.

Kavin Senapathy, a freelance science journalist, has the Google Pixel 6. She was drawn to the phone because Google marketed the Pixel 6’s camera as better at capturing different skin tones. But this phone boasts one of the largest display sizes on the market: 6.4 inches.

Senapathy was diagnosed with carpal and cubital tunnel syndromes in 2017 and fibromyalgia in 2019. She has had to create a curated ergonomic workplace setup; otherwise, her wrists and hands get weak and tingly. She has also had to adjust how she holds her phone to prevent pain flares.

Recently, Senapathy underwent electromyography, or EMG, in which doctors insert electrodes into muscles to measure their electrical activity. The electrical response of the muscles tells doctors whether the nerve cells and muscles are successfully communicating. Depending on her results, steroid shots and even surgery might be required. Senapathy wants to stick with her Pixel 6, but the pain she’s experiencing may push her to buy a smaller phone. Unfortunately, options for these modestly sized phones are more limited.

These devices are now an inextricable part of our lives. So where does the burden of responsibility lie? Is it with consumers like Senapathy to adjust body positioning, get ergonomic workstations, and change habits to abate tech-related pain? Or should tech companies be held accountable for creating addictive devices that lead to musculoskeletal injury?

A one-size-fits-all mentality for smartphones will continue to lead to injuries because every user has different wants and needs. S. Shyam Sundar, the founder of Penn State’s lab on media effects and a communications professor, says the needs for mobility and portability conflict with the desire for greater visibility. “The best thing a company can do is offer different sizes,” he says.

Joanna Bryson, an AI ethics expert and professor at The Hertie School of Governance in Berlin, Germany, echoes these sentiments. “A lot of the lack of choice we see comes from the fact that the markets have consolidated so much,” she says. “We want to make sure there’s sufficient diversity [of products].”

Consumers can still maintain some control despite the ubiquity of tech. Sloan, the orthopedic surgeon, has to pester her son to change his body positioning when using his tablet. Our heads effectively get heavier as they bend forward: held upright, a head weighs about 12 pounds, but bent forward 60 degrees, it places about 60 pounds of force on the neck. “I have to tell him, ‘Raise your head, son!’” she says. It’s important, Sloan explains, to consider that growth and development will affect ligaments and bones in the neck, potentially making kids even more vulnerable to injuries from misusing gadgets. She recommends that parents limit their kids’ tech time to alleviate strain. She also suggests that tech companies implement a timer to remind us to change our body positioning.

In 2017, Nan-Wei Gong, a former contractor for Google, founded Figur8, which uses wearable trackers to measure muscle function and joint movement. It’s like physical therapy with biofeedback. “Each unique injury has a different biomarker,” says Gong. “With Figur8, you are comparing yourself to yourself.” This allows an individual to self-monitor for wear and tear and strengthen an injury in a way that’s efficient and designed for their body. Gong noticed that the work-from-home model during the COVID-19 pandemic created a new set of ergonomic problems that resulted in injuries. Figur8 provides real-time data for these injuries because “behavioral change requires feedback.”

Gong worked on a project called Jacquard while at Google. Textile experts weave conductive thread into their fabric, and the result is a patch of the fabric—like the cuff of a Levi’s jacket—that responds to commands on your smartphone. One swipe can call your partner or check the weather. It was designed with cyclists in mind who can’t easily check their phones, and it’s part of a growing movement in the tech industry to deliver creative, hands-free design. Gong thinks that engineers at large corporations like Google have accessibility in mind; it’s part of what drives their decisions for new products.

Display sizes of iPhones have become larger over time.

Sourced from Screenrant https://screenrant.com/iphone-apple-release-chronological-order-smartphone/ and Apple Tech Specs: https://www.apple.com/iphone-se/specs/

Back in Germany, Joanna Bryson reminds us that products like smartphones should adhere to best practices. These rules may be especially important for phones and other products with AI that are addictive. Disclosure, accountability, and regulation are important for AI, she says. “The correct balance will keep changing. But we have responsibilities and obligations to each other.” She was on an AI Ethics Council at Google, but the committee was disbanded after only one week due to issues with one of their members.

Bryson was upset about the Council’s dissolution but has faith that other regulatory bodies will prevail. OECD.AI, an international nonprofit, has drafted policies to regulate AI, which countries can sign and implement. “As of July 2021, 46 governments have adhered to the AI principles,” its website reads.

Sundar, the media effects professor, also directs Penn State’s Center for Socially Responsible AI. He says that inclusivity is a crucial aspect of social responsibility and how devices using AI are designed. “We have to go beyond first designing technologies and then making them accessible,” he says. “Instead, we should be considering the issues potentially faced by all different kinds of users before even designing them.”