Coronavirus Risk Calculators: What You Need to Know

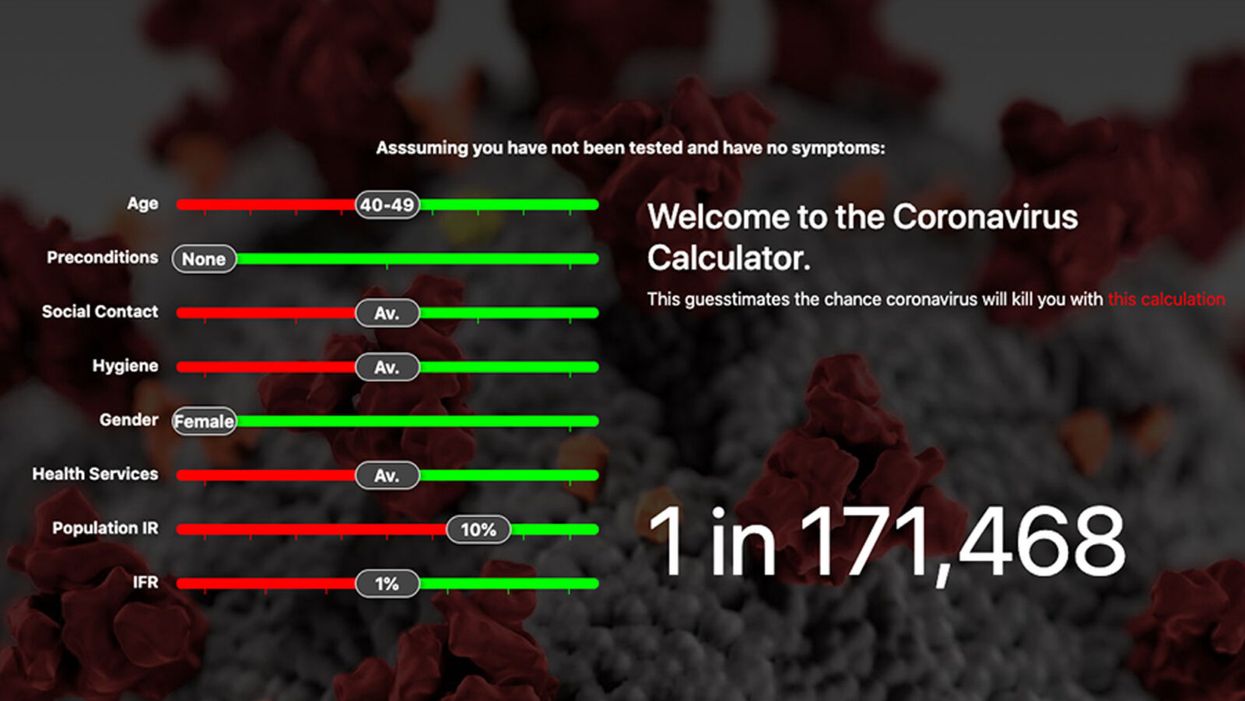

A screenshot of one coronavirus risk calculator.

People in my family seem to develop every ailment in the world, including feline distemper and Dutch elm disease, so I naturally put fingers to keyboard when I discovered that COVID-19 risk calculators now exist.

But the results, based on my answers to questions, are bewildering.

A British risk calculator developed by the Nexoid software company declared I have a 5 percent, or 1 in 20, chance of developing COVID-19 and less than 1 percent risk of dying if I get it. Um, great, I think? Meanwhile, 19 and Me, a risk calculator created by data scientists, says my risk of infection is 0.01 percent per week, or 1 in 10,000, and it gave me a risk score of 44 out of 100.

Confused? Join the club. But it's actually possible to interpret numbers like these and put them to use. Here are five tips about using coronavirus risk calculators:

1. Make Sure the Calculator Is Designed For You

Not every COVID-19 risk calculator is designed to be used by the general public. Cleveland Clinic's risk calculator, for example, is only a tool for medical professionals, not sick people or the "worried well," said Dr. Lara Jehi, Cleveland Clinic's chief research information officer.

Unfortunately, the risk calculator's web page fails to explicitly identify its target audience. But there are hints that it's not for lay people, such as its references to "platelets" and "chlorides."

The 19 and Me and Nexoid risk calculators, in contrast, are both designed for use by everyone, as is a risk calculator developed by Emory University.

2. Take a Look at the Calculator's Privacy Policy

COVID-19 risk calculators ask for a lot of personal information. The Nexoid calculator, for example, wanted to know my age, weight, drug and alcohol history, pre-existing conditions, blood type and more. It even asked me about the prescription drugs I take.

It's wise to check the privacy policy and be cautious about providing an email address or other personal information. Nexoid's policy says it provides the information it gathers to researchers but it doesn't release IP addresses, which can reveal your location in certain circumstances.

John-Arne Skolbekken, a professor and risk specialist at Norwegian University of Science and Technology, entered his own data in the Nexoid calculator after being contacted by LeapsMag for comment. He noted that the calculator, among other things, asks for information about use of recreational drugs that could be illegal in some places. "I have given away some of my personal data to a company that I can hope will not misuse them," he said. "Let's hope they are trustworthy."

The 19 and Me calculator, by contrast, doesn't gather any data from users, said Cindy Hu, data scientist at Mathematica, which created it. "As soon as the window is closed, that data is gone and not captured."

The Emory University risk calculator, meanwhile, has a long privacy policy that states "the information we collect during your assessment will not be correlated with contact information if you provide it." However, it says personal information can be shared with third parties.

3. Keep an Eye on Time Horizons

Let's say a risk calculator says you have a 1 percent risk of infection. That's fairly low if we're talking about this year as a whole, but it's quite worrisome if the risk percentage refers to today and jumps by 1 percent each day going forward. That's why it's helpful to know exactly what the numbers mean in terms of time.
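
To see why the time frame matters, here is a minimal Python sketch. It assumes, purely for illustration, that the risk is the same in every period and that the periods are independent of each other. None of the calculators mentioned here is confirmed to work this way, and the function name `cumulative_risk` is hypothetical.

```python
def cumulative_risk(risk_per_period: float, periods: int) -> float:
    """Chance of at least one infection across `periods` periods.

    Illustrative assumption: the risk is constant and independent
    from one period to the next.
    """
    if not 0.0 <= risk_per_period <= 1.0:
        raise ValueError("risk_per_period must be between 0 and 1")
    if periods < 0:
        raise ValueError("periods must not be negative")
    # Chance of escaping infection in every period, then take the complement.
    return 1.0 - (1.0 - risk_per_period) ** periods


if __name__ == "__main__":
    # A 1 percent risk that covers the whole year.
    print(f"1% per year, 1 year:  {cumulative_risk(0.01, 1):.1%}")
    # The same 1 percent, but incurred every day for a year.
    print(f"1% per day, 365 days: {cumulative_risk(0.01, 365):.1%}")
```

Under these assumptions, a 1 percent annual risk stays at 1 percent, while a 1 percent daily risk compounds to roughly a 97 percent chance of infection over a year.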

Unfortunately, this information isn't always readily available. You may have to dig around for it or contact a risk calculator's developers for more information. The 19 and Me calculator's risk percentages refer to this current week based on your behavior this week, Hu said. The Nexoid calculator, by contrast, has an "infinite timeline" that assumes no vaccine is developed, said Jonathon Grantham, the company's managing director. But your results will vary over time since the calculator's developers adjust it to reflect new data.

4. Focus on the Big Picture

The Nexoid calculator gave me numbers of 5 percent (getting COVID-19) and 99.309 percent (surviving it). It even provided betting odds for gambling types: The odds are in favor of me not getting infected (19-to-1) and not dying if I get infected (144-to-1).
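
The betting odds are just the percentages restated. Here is a minimal sketch of that conversion in Python, using the figures quoted above. The function name `odds_against` is made up for this example and is not taken from Nexoid.

```python
def odds_against(probability: float) -> float:
    """Return the odds against an event; 19.0 means 19-to-1 against."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be greater than 0 and at most 1")
    # Odds against = chance it does not happen / chance it does.
    return (1.0 - probability) / probability


if __name__ == "__main__":
    infection_risk = 0.05        # 5 percent chance of getting COVID-19
    death_risk = 1.0 - 0.99309   # 99.309 percent chance of surviving it
    print(f"Not getting infected: {odds_against(infection_risk):.0f}-to-1")
    print(f"Not dying if infected: {odds_against(death_risk):.0f}-to-1")
```

Rounded to whole numbers, these come out to 19-to-1 and 144-to-1, matching the odds the calculator reported.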

However, Grantham told me that these numbers "are not the whole story." Instead, he said, "it's best to look at your risk band. This will give you a more useful insight into your personal risk." Risk bands refer to a segmentation of people into five categories, from lowest to highest risk, according to how a person's result sits relative to the whole dataset.

The Nexoid calculator says I'm in the "lowest risk band" for getting COVID-19, and a "high risk band" for dying of it if I get it. That suggests I'd better stay in the lowest-risk category because my pre-existing risk factors could spell trouble for my survival if I get infected.

Michael J. Pencina, a professor and biostatistician at Duke University School of Medicine, agreed that focusing on your general risk level is better than focusing on numbers. When you use a risk calculator, he said, focus on this question: "How does your risk compare to the risk of an 'average' person?"

The 19 and Me calculator, meanwhile, put my risk at 44 out of 100. Hu said that a score of 50 represents the typical person's risk of developing serious consequences from another disease – the flu.

5. Remember to Take Action

Hu, who helped develop the 19 and Me risk calculator, said it's best to use it to "understand the relative impact of different behaviors." As she noted, the calculator is designed to allow users to plug in different answers about their behavior and immediately see how their risk levels change.

This information can help us figure out if we should change the way we approach the world by, say, washing our hands more or avoiding more personal encounters.

"Estimation of risk is only one part of prevention," Pencina said. "The other is risk factors and our ability to reduce them." In other words, odds, percentages and risk bands can be revealing, but it's what we do to change them that matters.

On today’s episode of Making Sense of Science, I’m honored to be joined by Dr. Paul Song, a physician, oncologist, progressive activist and biotech chief medical officer. Through his company, NKGen Biotech, Dr. Song is leveraging the power of patients’ own immune systems by supercharging the body’s natural killer cells to make new treatments for Alzheimer’s and cancer.

Whereas other treatments for Alzheimer’s focus directly on reducing the build-up of proteins in the brain such as amyloid and tau in patients with mild cognitive impairment, NKGen is seeking to help patients that much of the rest of the medical community has written off as hopeless cases, those with late-stage Alzheimer’s. And in small studies, NKGen has shown remarkable results, even improvement in the symptoms of people with these very progressed forms of Alzheimer’s, above and beyond slowing down the disease.

In the realm of cancer, Dr. Song is similarly setting his sights on another group of patients for whom treatment options are few and far between: people with solid tumors. Whereas some gradual progress has been made in treating blood cancers such as certain leukemias in the past few decades, solid tumors have been even more of a challenge. But Dr. Song’s approach of using natural killer cells to treat solid tumors is promising. You may have heard of CAR-T, which uses genetic engineering to introduce cells into the body that have a particular function to help treat a disease. NKGen focuses on other means to enhance the 40-plus receptors of natural killer cells, making them more receptive and sensitive to picking out cancer cells.

Paul Y. Song, MD is currently CEO and Vice Chairman of NKGen Biotech. Dr. Song’s last clinical role was Asst. Professor at the Samuel Oschin Cancer Center at Cedars Sinai Medical Center.

Dr. Song served as the very first visiting fellow on healthcare policy in the California Department of Insurance in 2013. He is currently on the advisory board of the Pritzker School of Molecular Engineering at the University of Chicago and a board member of Mercy Corps, The Center for Health and Democracy, and Gideon’s Promise.

Dr. Song graduated with honors from the University of Chicago and received his MD from George Washington University. He completed his residency in radiation oncology at the University of Chicago where he served as Chief Resident and did a brachytherapy fellowship at the Institute Gustave Roussy in Villejuif, France. He was also awarded an ASTRO research fellowship in 1995 for his research in radiation inducible gene therapy.

With Dr. Song’s leadership, NKGen Biotech’s work on natural killer cells represents cutting-edge science leading to key findings and important pieces of the puzzle for treating two of humanity’s most intractable diseases.

Show links

- Paul Song LinkedIn

- NKGen Biotech on Twitter - @NKGenBiotech

- NKGen Website: https://nkgenbiotech.com/

- NKGen appoints Paul Song

- Patient Story: https://pix11.com/news/local-news/long-island/promising-new-treatment-for-advanced-alzheimers-patients/

- FDA Clearance: https://nkgenbiotech.com/nkgen-biotech-receives-ind-clearance-from-fda-for-snk02-allogeneic-natural-killer-cell-therapy-for-solid-tumors/

- Q3 earnings data: https://www.nasdaq.com/press-release/nkgen-biotech-inc.-reports-third-quarter-2023-financial-results-and-business

Is there a robot nanny in your child's future?

Some researchers argue that active, playful engagement with a "robot nanny" for a few hours a day is better than several hours in front of a TV or with an iPad.

From ROBOTS AND THE PEOPLE WHO LOVE THEM: Holding on to Our Humanity in an Age of Social Robots by Eve Herold. Copyright © 2024 by the author and reprinted by permission of St. Martin’s Publishing Group.

Could the use of robots take some of the workload off teachers, add engagement among students, and ultimately invigorate learning by taking it to a new level that is more consonant with the everyday experiences of young people? Do robots have the potential to become full-fledged educators and further push human teachers out of the profession? The preponderance of opinion on this subject is that, just as AI and medical technology are not going to eliminate doctors, robot teachers will never replace human teachers. Rather, they will change the job of teaching.

A 2017 study led by Google executive James Manyika suggested that skills like creativity, emotional intelligence, and communication will always be needed in the classroom and that robots aren’t likely to provide them at the same level that humans naturally do. But robot teachers do bring advantages, such as a depth of subject knowledge that teachers can’t match, and they’re great for student engagement.

The teacher and robot can complement each other in new ways, with the teacher facilitating interactions between robots and students. So far, this is the case with teaching “assistants” being adopted now in China, Japan, the U.S., and Europe. In this scenario, the robot (usually the SoftBank child-size robot NAO) is a tool for teaching mainly science, technology, engineering, and math (the STEM subjects), but the teacher is very involved in planning, overseeing, and evaluating progress. The students get an entertaining and enriched learning experience, and some of the teaching load is taken off the teacher. At least, that’s what researchers have been able to observe so far.

To be sure, there are some powerful arguments for having robots in the classroom. A not-to-be-underestimated one is that robots “speak the language” of today’s children, who have been steeped in technology since birth. These children are adept at navigating a media-rich environment that is highly visual and interactive. They are plugged into the Internet 24-7. They consume music, games, and huge numbers of videos on a weekly basis. They expect to be dazzled because they are used to being dazzled by more and more spectacular displays of digital artistry. Education has to compete with social media and the entertainment vehicles of students’ everyday lives.

Another compelling argument for teaching robots is that they help prepare students for the technological realities they will encounter in the real world when robots will be ubiquitous. From childhood on, they will be interacting and collaborating with robots in every sphere of their lives from the jobs they do to dealing with retail robots and helper robots in the home. Including robots in the classroom is one way of making sure that children of all socioeconomic backgrounds will be better prepared for a highly automated age, when successfully using robots will be as essential as reading and writing. We’ve already crossed this threshold with computers and smartphones.

Students need multimedia entertainment with their teaching. This is something robots can provide through their ability to connect to the Internet and act as a centralized host to videos, music, and games. Children also need interaction, something robots can deliver up to a point, but which humans can surpass. The education of a child is not just intended to make them technologically functional in a wired world; it’s to help them grow in intellectual, creative, social, and emotional ways. Considered from this perspective, the use of robots opens the door to questions concerning just how far robots should go. Robots don’t just teach and engage children; they’re designed to tug at their heartstrings.

It’s no coincidence that many toy makers and manufacturers are designing cute robots that look and behave like real children or animals, says Turkle. “When they make eye contact and gesture toward us, they predispose us to view them as thinking and caring,” she has written in The Washington Post. “They are designed to be cute, to provide a nurturing response” from the child. As mentioned previously, this nurturing experience is a powerful vehicle for drawing children in and promoting strong attachment. But should children really love their robots?

The problem, once again, is that a child can be lulled into thinking that she’s in an actual relationship, when a robot can’t possibly love her back. If adults have these vulnerabilities, what might such asymmetrical relationships do to the emotional development of a small child? Turkle notes that while we tend to ascribe a mind and emotions to a socially interactive robot, “simulated thinking may be thinking, but simulated feeling is never feeling, and simulated love is never love.”

Always a consideration is the fact that in the first few years of life, a child’s brain is undergoing rapid growth and development that will form the foundation of their lifelong emotional health. These formative experiences are literally shaping the child’s brain, their expectations, and their view of the world and their place in it. In Alone Together, Turkle asks: What are we saying to children about their importance to us when we’re willing to outsource their care to a robot? A child might be superficially entertained by the robot while his self-esteem is systematically undermined.

Still, in the case of robot nannies in the home, is active, playful engagement with a robot for a few hours a day any more harmful than several hours in front of a TV or with an iPad? Some, like Xiong, regard interacting with a robot as better than mere passive entertainment. iPal’s manufacturers say that their robot can’t replace parents or teachers and is best used by three- to eight-year-olds after school, while they wait for their parents to get off work. But as robots become ever-more sophisticated, they’re expected to perform more of the tasks of day-to-day care and to be much more emotionally advanced. There is no question children will form deep attachments to some of them. And research has emerged showing that there are clear downsides to child-robot relationships.

Some studies, performed by Turkle and fellow MIT colleague Cynthia Breazeal, have revealed a darker side to the child-robot bond. Turkle has reported extensively on these studies in The Washington Post and in her book Alone Together. Most children love robots, but some act out their inner bully on the hapless machines, hitting and kicking them and otherwise trying to hurt them. The trouble is that the robot can’t fight back, teaching children that they can bully and abuse without consequences. As in any other robot relationship, such harmful behavior could carry over into the child’s human relationships.

And, ironically, it turns out that communicative machines don’t actually teach kids good communication skills. It’s well known that parent-child communication in the first three years of life sets the stage for a very young child’s intellectual and academic success. Verbal back-and-forth with parents and caregivers is like fuel for a child’s growing brain. One article that examined several types of play and their effect on children’s communication skills, published in JAMA Pediatrics in 2015, showed that babies who played with electronic toys, like the popular robot dog Aibo, showed a decrease in both the quantity and quality of their language skills.

Anna V. Sosa of the Child Speech and Language Lab at Northern Arizona University studied twenty-six ten- to sixteen-month-old infants to compare the growth of their language skills after they played with three types of toys: electronic toys like a baby laptop and talking farm; traditional toys like wooden puzzles and building blocks; and books read aloud by their parents. The play that produced the most growth in verbal ability was having books read to them by a caregiver, followed by play with traditional toys. Language gains after playing with electronic toys came dead last. This form of play involved the least use of adult words, the least conversational turn-taking, and the fewest verbalizations from the children. While the study sample was small, it’s not hard to extrapolate that no electronic toy or even more capable robot could supply the intimate responsiveness of a parent reading stories to a child, explaining new words, answering the child’s questions, and modeling the kind of back-and-forth interaction that promotes empathy and reciprocity in relationships.

***

Most experts acknowledge that robots can be valuable educational tools. But they can’t make a child feel truly loved, validated, and valued. That’s the job of parents, and when parents abdicate this responsibility, it’s not only the child who misses out on one of life’s most profound experiences.

We really don’t know how the tech-savvy children of today will ultimately process their attachments to robots and whether they will be excessively predisposed to choosing robot companionship over that of humans. It’s possible their techno literacy will draw for them a bold line between real life and a quasi-imaginary history with a robot. But it will be decades before we see long-term studies culminating in sufficient data to help scientists, and the rest of us, to parse out the effects of a lifetime spent with robots.

This is an excerpt from ROBOTS AND THE PEOPLE WHO LOVE THEM: Holding on to Our Humanity in an Age of Social Robots by Eve Herold. The book will be published on January 9, 2024.