COVID Variants Are Like “a Thief Changing Clothes” – and Our Camera System Barely Exists

Being able to track variants of concern in real time is crucial to our ability to stay ahead of the virus.

Whether it's "natural selection" as Darwin called it, or it's "mutating" as the X-Men called it, living organisms change over time, developing thumbs or more efficient protein spikes, depending on the organism and the demands of its environment. The coronavirus that causes COVID-19, SARS-CoV-2, is not an exception, and now, after the virus has infected millions of people around the globe for more than a year, scientists are beginning to see those changes.

The notorious variants that have popped up include B.1.1.7, sometimes called the UK variant, as well as P.1 and B.1.351, which seem to have emerged in Brazil and South Africa respectively. As vaccinations are picking up pace, officials are warning that now is not the time to become complacent or relax restrictions because the variants aren't well understood.

Some appear to be more transmissible, and deadlier, while others can evade the immune system's defenses better than earlier versions of the virus, potentially undermining the effectiveness of vaccines to some degree. Genomic surveillance, the process of sequencing the genetic code of the virus widely to observe changes and patterns, is a critical way that scientists can keep track of its evolution and work to understand how the variants might affect humans.

"It's like a thief changing clothes"

It's important to note that viruses mutate all the time. If there were funding and personnel to sequence the genome of every sample of the virus, scientists would see thousands of mutations. Not every variant deserves our attention. The vast majority of mutations are not important at all, but recognizing those that are is a crucial tool in getting and staying ahead of the virus. The work of sequencing, analyzing, observing patterns, and using public health tools as necessary is complicated and confusing to those without years of specialized training.

Jeremy Kamil, associate professor of microbiology and immunology at LSU Health Shreveport, in Louisiana, says that the variants developing are like a thief changing clothes. The thief goes in your house, steals your stuff, then leaves and puts on a different shirt and a wig, in the hopes you won't recognize them. Genomic surveillance catches the "thief" even in those different clothes.


Understanding variants, both the uninteresting ones and the potentially concerning ones, gives public health officials and researchers at different levels a useful set of tools. Locally, knowing which variants are circulating in the community helps leaders know whether mask mandates and similar measures should be implemented or discontinued, or whether businesses and schools can open relatively safely.

There's more to it than observing new variants

Analysis is complex, particularly when it comes to understanding which variants are of concern. "So the question is always if a mutation becomes common, is that a random occurrence?" says Phoebe Lostroh, associate professor of molecular biology at Colorado College. "Or is the variant the result of some kind of selection because the mutation changes some property about the virus that makes it reproduce more quickly than variants of the virus that don't have that mutation? For a virus, [mutations can affect outcomes like] how much it replicates inside a person's body, how much somebody breathes it out, whether the particles that somebody might breathe in get smaller and can lead to greater transmission."

Along with all of those factors, accurate and useful genomic surveillance requires an understanding of where variants are occurring, how they are related, and an examination of why they might be prevalent.

For example, if a potentially worrisome variant appears in a community and begins to spread very quickly, it's not time to raise a public health alarm until several important questions have been answered, such as whether the variant is spreading due to specific events, or if it's happening because the mutation has allowed the virus to infect people more efficiently. Kamil offered a hypothetical scenario to explain: Imagine that a member of a community became infected and the virus mutated. That person went to church and three more people were infected, but one of them went to a karaoke bar and while singing infected 100 other people. Examining the conditions under which the virus has spread is, therefore, an essential part of untangling whether a mutation itself made the virus more transmissible or if an infected person's behaviors contributed to a local outbreak.

One of the tricky things about variants is recognizing the point at which they move from interesting, to concerning at a local level, to dangerous in a larger context. Genomic sequencing can help with that, but only when it's coordinated. When the same mutation occurs frequently, but is localized to one region, it's a concern, but when the same mutation happens in different places at the same time, it's much more likely that the "virus is learning that's a good mutation," explains Kamil.

The process is called convergent evolution, and it was a fascinating topic long before COVID. Just as your heritage can be traced through DNA, so can that of viruses, and when separate lineages develop similar traits it's almost like scientists can see evolution happening in real time. A mutation to SARS-CoV-2 that happens in more than one place at once is a mutation that makes it easier in some way for the virus to survive and that is when it may become alarming. The widespread, documented variants P.1 and B.1.351 are examples of convergence because they share some of the same virulent mutations despite having developed thousands of miles apart.

However, even variants that are emerging in different places at the same time don't present the kind of threat SARS-CoV-2 did in 2019. "This is nature," says Kamil. "It just means that this virus will not easily be driven to extinction or complete elimination by vaccines." Although a person who has already had COVID-19 can be reinfected with a variant, "it is almost always much milder disease" than the original infection, Kamil adds. Rather than causing full-fledged disease, variants have the potential to "penetrate herd immunity, spreading relatively quietly among people who have developed natural immunity or been vaccinated, until the virus finds someone who has no immunity yet, and that person would be at risk of hospitalization-grade severe disease or death."

Surveillance and predictions

According to Lostroh, genomic surveillance can help scientists predict what's going to happen. "With the British strain, for instance, that's more transmissible, you can measure how fast it's doubling in the population and you can sort of tell whether we should take more measures against this mutation. Should we shut things down a little longer because that mutation is present in the population? That could be really useful if you did enough sampling in the population that you knew where it was," says Lostroh. If, for example, the more transmissible strain was present in 50 percent of cases in one county or state but barely present in another, it would allow for rolling lockdowns instead of sweeping measures.
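The doubling measurement Lostroh describes comes down to a little arithmetic. The snippet below is a minimal sketch, assuming simple exponential growth between two sampling dates; the function name and the example figures are hypothetical and are not drawn from any real surveillance data.

```python
import math

def doubling_time_days(share_start, share_end, days_between):
    """Estimate the doubling time, in days, of a variant's share of sequenced cases.

    Assumes simple exponential growth between the two measurements,
    which is only a rough approximation while the variant is still rare.
    """
    if share_start <= 0 or share_end <= share_start or days_between <= 0:
        raise ValueError("need a growing, positive share over a positive interval")
    growth_rate = math.log(share_end / share_start) / days_between
    return math.log(2) / growth_rate

# Hypothetical example: a variant rises from 2% to 8% of sequenced samples in 20 days.
print(round(doubling_time_days(0.02, 0.08, 20), 1))  # prints 10.0
```

An estimate like this is only as good as the sampling behind it, which is why Lostroh stresses sequencing enough of the population to know where a variant actually is.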

Variants are also extremely important when it comes to the development, manufacture, and distribution of vaccines. "You're also looking at medical countermeasures, such as whether your vaccine is still effective, or if your antiviral needs to be updated," says Lane Warmbrod, a senior analyst and research associate at Johns Hopkins Center for Health Security.

Properly funded and extensive genomic surveillance could eventually help control endemic diseases, too, like the seasonal flu, or other common respiratory infections. Kamil says he envisions a future in which genomic surveillance allows for prediction of sickness just as the weather is predicted today. "It's a 51 for infection today at the San Francisco Airport. There's been detection of some respiratory viruses," he says, offering an example. He says that if you're a vulnerable person, if you're immune-suppressed for some reason, you may want to wear a mask based on the sickness report.

The U.S. has the ability, but lacks standards

The benefits of widespread genomic surveillance are clear, and the United States certainly has the necessary technology, equipment, and personnel to carry it out. But it's not happening at the speed and scale the country needs to reap those benefits.

"The numbers are improving," said Kamil. "We're probably still at less than half a percent of all the samples that have been taken have been sequenced since the beginning of the pandemic."

Although there's no consensus on how many sequences is ideal for a robust surveillance program, modeling performed by the company Illumina suggests about 5 percent of positive tests should be sequenced. The reasons the U.S. has lagged in implementing a sequencing program are complex and varied, but solvable.

Perhaps the most important element that is currently missing is leadership. In order to conduct an effective genomic surveillance program, there need to be standards. The Johns Hopkins Center for Health Security recently published a paper with recommendations as to what kinds of elements need to be standardized in order to make the best use of sequencing technology and analysis.

"Along with which bioinformatic pipelines you're going to use to do the analyses, which sequencing strategy protocol are you going to use, what's your sampling strategy going to be, how the data is going to be reported, what data gets reported," says Warmbrod. Currently, there's no guidance from the CDC on any of those things. So, while scientists can collect and report information, they may be collecting and reporting different information that isn't comparable, making it less useful for public health measures and vaccine updates.

Globally, one of the most important tools in making the information from genomic surveillance useful is GISAID, a platform designed for scientists to share -- and, importantly, to be credited for -- their data regarding genetic sequences of influenza. Originally, it was launched as a database of bird flu sequences, but has evolved to become an essential tool used by the WHO to make flu vaccine virus recommendations each year. Scientists who share their credentials have free access to the database, and anyone who uses information from the database must credit the scientist who uploaded that information.

Safety, logistics, and funding matter

Scientists at university labs and other small organizations have been uploading sequences to GISAID almost from the beginning of the pandemic, but their funding is generally limited, and there are no standards regarding information collection or reporting. Private, for-profit labs haven't had motivation to set up sequencing programs, although many of them have the logistical capabilities and funding to do so. Public health departments are understaffed, underfunded, and overwhelmed.

University labs may also be limited by safety concerns. The SARS-CoV-2 virus is dangerous, and there's a question of how samples should be transported to labs for sequencing.

Larger, for-profit organizations often have the tools and distribution capabilities to safely collect and sequence samples, but there hasn't been a profit motive. Genomic sequencing is less expensive now than ever before, but even at $100 per sample, the cost adds up -- not to mention the cost of employing a scientist with the proper credentials to analyze the sequence.
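To see how the cost adds up, here is a back-of-the-envelope sketch. It uses the $100-per-sample figure above and the 5 percent sequencing share suggested by Illumina's modeling; the weekly count of positive tests is a made-up input for illustration, and the function name is hypothetical.

```python
def sequencing_cost(positive_tests, share_sequenced=0.05, cost_per_sample=100):
    """Return (samples sequenced, total cost in dollars) for a batch of positive tests."""
    samples = round(positive_tests * share_sequenced)
    return samples, samples * cost_per_sample

# Hypothetical week with 400,000 positive tests.
samples, cost = sequencing_cost(400_000)
print(f"{samples:,} samples, ${cost:,}")  # prints 20,000 samples, $2,000,000
```

The figure covers sequencing alone; it leaves out the cost of the credentialed scientists needed to analyze the results.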

The path forward

The recently passed COVID-19 relief bill does have some funding to address genomic sequencing. Specifically, the American Rescue Plan Act includes $1.75 billion in funding for the Centers for Disease Control and Prevention's Advanced Molecular Detection (AMD) program. In an interview last month, CDC Director Rochelle Walensky said that the additional funding will be "a dial. And we're going to need to dial it up." AMD has already announced a collaboration called the Sequencing for Public Health Emergency Response, Epidemiology, and Surveillance (SPHERES) Initiative that will bring together scientists from public health, academic, clinical, and non-profit laboratories across the country with the goal of accelerating sequencing.

Such a collaboration is a step toward following the recommendations in the paper Warmbrod coauthored. Building capacity now, creating a network of labs, and standardizing procedures will mean improved health in the future. "I want to be optimistic," she says. "The good news is there are a lot of passionate, smart, capable people who are continuing to work with government and work with different stakeholders." She cautions, however, that without a national strategy we won't succeed.

"If we maximize the potential and create that framework now, we can also use it for endemic diseases," she says. "It's a very helpful system for more than COVID if we're smart in how we plan it."

Researchers are looking to engineer chocolate with less oil, which could reduce some of its detriments to health.

Creamy milk with velvety texture. Dark with sprinkles of sea salt. Crunchy hazelnut-studded chunks. Chocolate is a treat that appeals to billions of people worldwide, no matter the age. And it’s not only the taste, but the feel of a chocolate morsel slowly melting in our mouths—the smoothness and slipperiness—that’s part of the overwhelming satisfaction. Why is it so enjoyable?

That’s what an interdisciplinary research team of chocolate lovers from the University of Leeds School of Food Science and Nutrition and School of Mechanical Engineering in the U.K. resolved to study in 2021. They wanted to know, “What is making chocolate that desirable?” says Siavash Soltanahmadi, one of the lead authors of a new study about chocolate’s hedonistic quality.

Besides addressing the researchers’ general curiosity, their answers might help chocolate manufacturers make the delicacy even more enjoyable and potentially healthier. After all, chocolate is a billion-dollar industry. Revenue from chocolate sales, whether milk or dark, is forecasted to grow 13 percent by 2027 in the U.K. In the U.S., chocolate and candy sales increased by 11 percent from 2020 to 2021, on track to reach $44.9 billion by 2026. Figuring out how chocolate affects the human palate could up the ante even more.

Building a 3D tongue

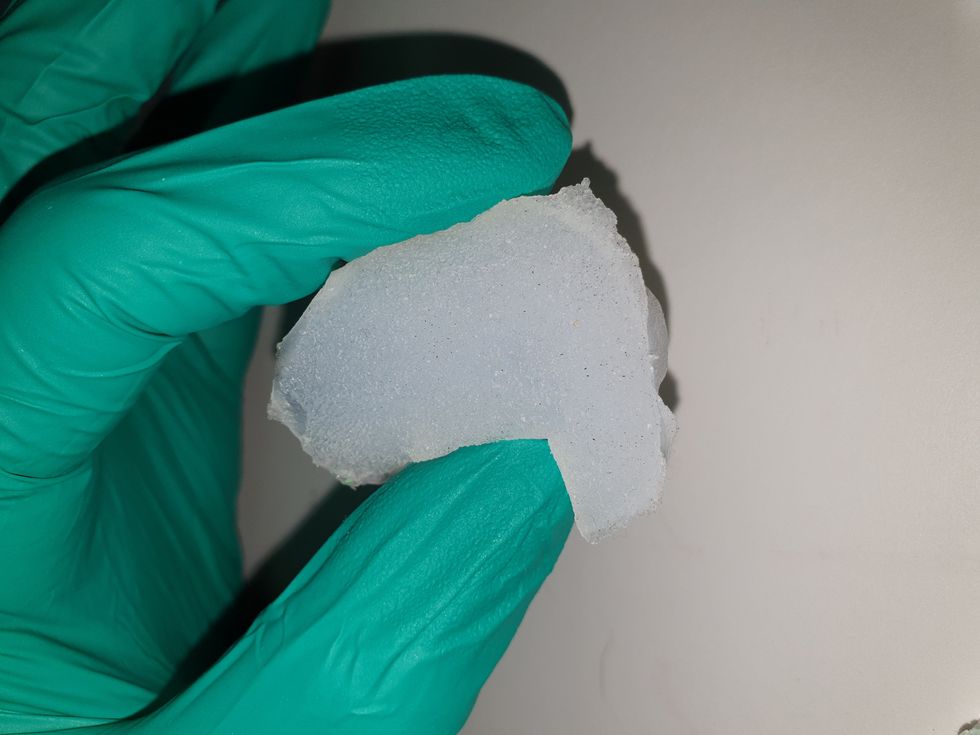

The team began by building a 3D tongue to analyze the physical process by which chocolate breaks down inside the mouth.

As part of the effort, reported earlier this year in the scientific journal ACS Applied Materials and Interfaces, the team studied a large variety of human tongues with the intention to build an “average” 3D model, says Soltanahmadi, a lubrication scientist. When it comes to edible substances, lubrication science looks at how food feels in the mouth and can help design foods that taste better and have more satisfying texture or health benefits.

There are variations in how people enjoy chocolate; some chew it while others “lick it” inside their mouths.

Tongue impressions from human participants studied using optical imaging helped the team build a tongue with key characteristics. “Our tongue is not a smooth muscle, it’s got some texture, it has got some roughness,” Soltanahmadi says. From those images, the team came up with a digital design of an average tongue and, using 3D printed molds, built a “mimic tongue.” They also added elastomers, such as silicone or polyurethane, to mimic the roughness, the texture and the mechanical properties of a real tongue. “Wettability” was another key component of the 3D tongue, Soltanahmadi says, referring to whether a surface mixes with water (hydrophilic) or, in the case of oil, resists it (hydrophobic).

Notably, the resulting artificial 3D-tongues looked nothing like the human version, but they were good mimics. The scientists also created “testing kits” that produced data on various physical parameters. One such parameter was viscosity, the measure of how gooey a food or liquid is — honey is more viscous compared to water, for example. Another was tribology, which defines how slippery something is — high fat yogurt is more slippery than low fat yogurt; milk can be more slippery than water. The researchers then mixed chocolate with artificial saliva and spread it on the 3D tongue to measure the tribology and the viscosity. From there they were able to study what happens inside the mouth when we eat chocolate.

The team focused on the stages of lubrication and the location of the fat in the chocolate, a process that has rarely been researched.

The artificial 3D-tongues look nothing like human tongues, but they function well enough to do the job.

Courtesy Anwesha Sarkar and University of Leeds

The oral processing of chocolate

We process food in our mouths in several stages, Soltanahmadi says. And there is variation in these stages depending on the type of food. So, the oral processing of a piece of meat would be different from, say, the processing of jelly or popcorn.

There are variations with chocolate, in particular; some people chew it while others use their tongues to explore it (within their mouths), Soltanahmadi explains. “Usually, from a consumer perspective, what we find is that if you have a luxury kind of a chocolate, then people tend to start with licking the chocolate rather than chewing it.” The researchers used a luxury brand of dark chocolate and focused on the process of licking rather than chewing.


Understanding the make-up of the chocolate was also an important step in the study. “Chocolate is a composite material. So, it has cocoa butter, which is oil, it has some particles in it, which is cocoa solid, and it has sugars," Soltanahmadi says. "Dark chocolate has less oil, for example, and less sugar in it, most of the time."

The researchers determined that the oral processing of chocolate begins as soon as it enters a person’s mouth; it starts melting upon exposure to one’s body temperature, even before the tongue starts moving, Soltanahmadi says. Then, lubrication begins. “[Saliva] mixes with the oily chocolate and it makes an emulsion.” An emulsion is a fluid with a watery (or aqueous) phase and an oily phase. As chocolate breaks down in the mouth, that solid piece turns into a smooth emulsion with a fatty film. “The oil from the chocolate becomes droplets in a continuous aqueous phase,” says Soltanahmadi. In other words, as solid cocoa particles and fat are released, the emulsion envelops the tongue and coats the palate, creating a smooth feeling of chocolate all over the mouth. That tactile sensation is part of the chocolate’s hedonistic appeal we crave, says Soltanahmadi.

Finding the sweet spot

After determining how chocolate is orally processed, the research team wanted to find the exact sweet spot of the breakdown of solid cocoa particles and fat as they are released into the mouth. They determined that the epicurean pleasure comes only from the chocolate's outer layer of fat; the secondary fatty layers inside the chocolate don’t add to the sensation. It was this final discovery that helped the team determine that it might be possible to produce healthier chocolate that would contain less oil, says Soltanahmadi. And therefore, less fat.

Rongjia Tao, a physicist at Temple University in Philadelphia, thinks the Leeds study and the concept behind it are “very interesting.” Tao himself did a study in 2016 and found he could reduce fat in milk chocolate by 20 percent. He believes that the Leeds researchers’ discovery that the first layer of fat matters more for the sensation than the layers beneath it can inform future chocolate manufacturing. “As a scientist I consider this significant and an important starting point,” he says.


Not everyone thinks it’s a good idea. Chef Michael Antonorsi, founder and owner of Chuao Chocolatier, one of the leading chocolate makers in the U.S., is among the skeptics. First, he says, “cacao fat is definitely a good fat.” Second, he’s not thrilled that science is trying to interfere with nature. “Every time we've tried to intervene and change nature, we get things out of balance,” says Antonorsi. “There’s a reason cacao is botanically known as food of the gods. The botanical name is the Theobroma cacao: Theobroma in ancient Greek, Theo is God and broma is food. So it's a food of the gods,” Antonorsi explains. He’s doubtful that a chocolate made only with a top layer of fat will produce the same epicurean satisfaction. “You're not going to achieve the same sensation because that surface fat is going to dissipate and there is no fat from behind coming to take over,” he says.

Without layers of fat, Antonorsi fears the deeply satisfying experiential part of savoring chocolate will be lost. The University of Leeds team, however, thinks that it may be possible to make chocolate healthier - when consumed in limited amounts - without sacrificing its taste. They believe the concept of less fatty but no less slick chocolate will resonate with at least some chocolate-makers and consumers, too.

Chocolate already contains some healthful compounds. Its cocoa particles have “loads of health benefits,” says Soltanahmadi. Dark chocolate usually has more cocoa than milk chocolate. Some experts recommend that dark chocolate should contain at least 70 percent cocoa in order for it to offer some health benefit. Research has shown that the cocoa in chocolate is rich in polyphenols, naturally occurring compounds also found in fruits and vegetables, such as grapes, apples and berries. Research has shown that consuming plant polyphenols can be protective against cancer, diabetes and osteoporosis as well as cardiovascular and neurodegenerative diseases.

“So keeping the healthy part of it and reducing the oily part of it, which is not healthy, but is giving you that indulgence of it … that was the final aim,” Soltanahmadi says. He adds that the team has been approached by individuals in the chocolate industry about their research. “Everyone wants to have a healthy chocolate, which at the same time tastes brilliant and gives you that self-indulging experience.”

Probiotic bacteria can be engineered to fight antibiotic-resistant superbugs by releasing chemicals that kill them.

In 1945, almost two decades after Alexander Fleming discovered penicillin, he warned that as antibiotic use grows, the drugs may lose their effectiveness. He was prescient: the first case of penicillin resistance was reported two years later. Back then, not many people paid attention to Fleming’s warning. After all, the “golden era” of the antibiotics age had just begun. By the 1950s, three new antibiotics derived from soil bacteria (streptomycin, chloramphenicol, and tetracycline) could cure infectious diseases like tuberculosis, cholera, meningitis and typhoid fever, among others.

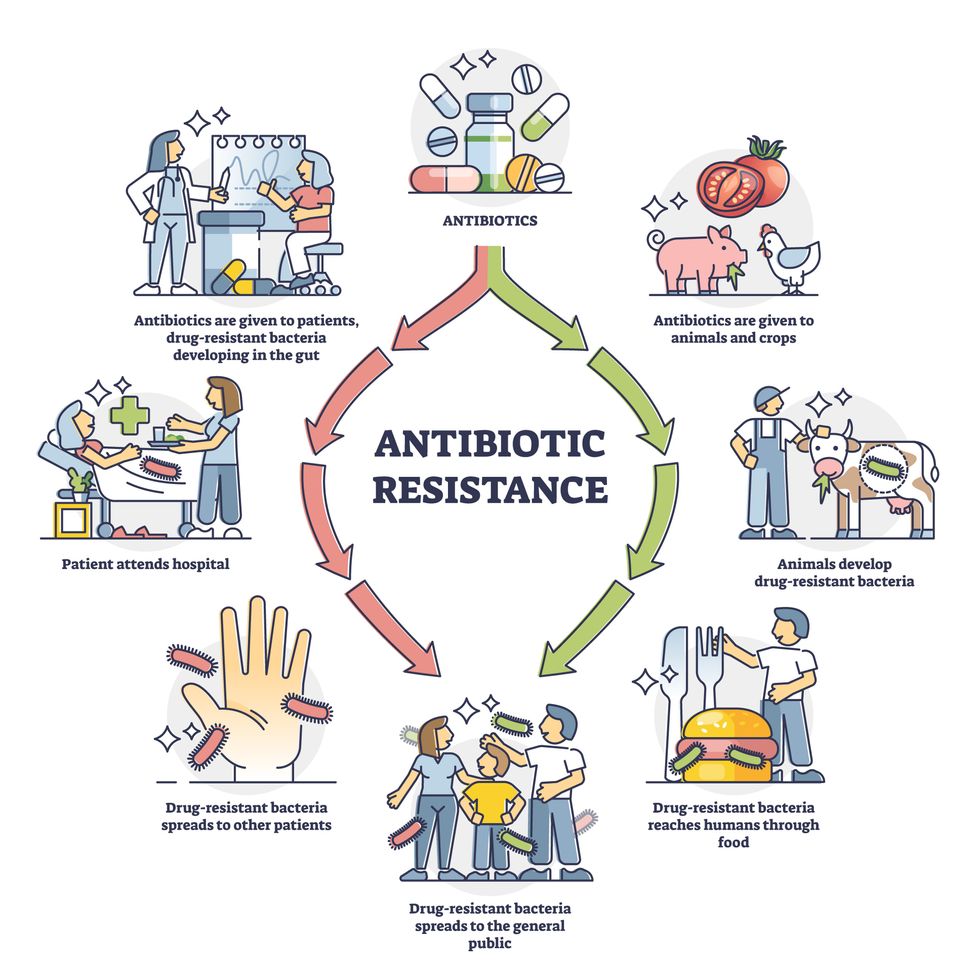

Today, these antibiotics and many of their successors developed through the 1980s are gradually losing their effectiveness. The extensive overuse and misuse of antibiotics has led to the rise of drug resistance. The livestock sector buys around 80 percent of all antibiotics sold in the U.S. every year. Farmers feed cows and chickens low doses of antibiotics to prevent infections and fatten up the animals, which eventually causes resistant bacterial strains to evolve. If manure from cattle is used on fields, the soil and vegetables can get contaminated with antibiotic-resistant bacteria. Another major factor is doctors overprescribing antibiotics to humans, particularly in low-income countries. Between 2000 and 2018, the global rates of human antibiotic consumption shot up by 46 percent.

In recent years, researchers have been exploring a promising avenue: the use of synthetic biology to engineer new bacteria that may work better than antibiotics. The need continues to grow, as a Lancet study linked antibiotic resistance to over 1.27 million deaths worldwide in 2019, surpassing HIV/AIDS and malaria. The western sub-Saharan Africa region had the highest death rate (27.3 people per 100,000).


To make matters worse, our remedy pipelines are drying up. Out of the 18 biggest pharmaceutical companies, 15 abandoned antibiotic development by 2013. According to the AMR Action Fund, venture capital has remained indifferent towards biotech start-ups developing new antibiotics. In 2019, at least two antibiotic start-ups filed for bankruptcy. As of December 2020, there were 43 new antibiotics in clinical development. But because they are based on previously known molecules, scientists say they are inadequate for treating multidrug-resistant bacteria. Researchers warn that if nothing changes, by 2050, antibiotic resistance could kill 10 million people annually.

The rise of synthetic biology

To circumvent this dire future, scientists have been working on alternative solutions using synthetic biology tools, meaning genetically modifying good bacteria to fight the bad ones.

From the time life evolved on earth around 3.8 billion years ago, bacteria have engaged in biological warfare. They constantly strategize new methods to combat each other by synthesizing toxic proteins that kill competition.

For example, Escherichia coli produces bacteriocins, or toxins, to kill other strains of E. coli that attempt to colonize the same habitat. Microbes like E. coli (which are not all pathogenic) are also naturally present in the human microbiome. The human microbiome harbors up to 100 trillion symbiotic microbial cells. The majority of them are beneficial organisms residing in the gut at different compositions.

The chemicals that these “good bacteria” produce do not pose any health risks to us, but can be toxic to other bacteria, particularly to human pathogens. For the last three decades, scientists have been manipulating bacteria’s biological warfare tactics to our collective advantage.

In the late 1990s, researchers drew inspiration from electrical and computing engineering principles that involve constructing digital circuits to control devices. In certain ways, every cell in living organisms works like a tiny computer. The cell receives messages in the form of biochemical molecules that cling on to its surface. Those messages get processed within the cells through a series of complex molecular interactions.

Synthetic biologists can harness these living cells’ information processing skills and use them to construct genetic circuits that perform specific instructions—for example, secrete a toxin that kills pathogenic bacteria. “Any synthetic genetic circuit is merely a piece of information that hangs around in the bacteria’s cytoplasm,” explains José Rubén Morones-Ramírez, a professor at the Autonomous University of Nuevo León, Mexico. Then the ribosome, which synthesizes proteins in the cell, processes that new information, making the compounds scientists want bacteria to make. “The genetic circuit remains separated from the living cell’s DNA,” Morones-Ramírez explains. When the engineered bacteria replicates, the genetic circuit doesn’t become part of its genome.


In 2000, Boston-based researchers constructed an E. coli strain with a genetic switch that toggled between turning genes on and off. Later, they built some safety checks into their bacteria. “To prevent unintentional or deleterious consequences, in 2009, we built a safety switch in the engineered bacteria’s genetic circuit that gets triggered after it gets exposed to a pathogen,” says James Collins, a professor of biological engineering at MIT and faculty member at Harvard University’s Wyss Institute. “After getting rid of the pathogen, the engineered bacteria is designed to switch off and leave the patient's body.”
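For readers curious how a genetic switch can hold an "on" or "off" state, here is a minimal sketch. It assumes a common textbook model in which two genes each repress the other; the equations, parameter values and function name are illustrative assumptions and are not taken from the researchers' work described above.

```python
def simulate_toggle(u, v, steps=5000, dt=0.01, alpha=10.0, n=2.0):
    """Integrate a two-gene mutual-repression model with simple Euler steps.

    u and v are the (dimensionless) levels of two repressor proteins;
    each one suppresses production of the other. alpha and n are
    illustrative values, not measurements.
    """
    for _ in range(steps):
        du = alpha / (1.0 + v ** n) - u  # v represses production of u
        dv = alpha / (1.0 + u ** n) - v  # u represses production of v
        u, v = u + dt * du, v + dt * dv
    return u, v

# Same circuit, two starting points: the system settles into whichever
# state it was pushed toward, which is what makes it behave like a switch.
state_a = simulate_toggle(u=5.0, v=0.1)
state_b = simulate_toggle(u=0.1, v=5.0)
print(state_a[0] > state_a[1], state_b[1] > state_b[0])  # prints True True
```

In this toy model the circuit "remembers" which gene was dominant, so a brief outside signal is enough to flip it from one stable state to the other.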

Overuse and misuse of antibiotics causes resistant strains to evolve

Adobe Stock

Seek and destroy

As the field of synthetic biology developed, scientists began using engineered bacteria to tackle superbugs. They first focused on Vibrio cholerae, which in the 19th and 20th centuries caused cholera pandemics in India, China, the Middle East, Europe, and the Americas. Like many other bacteria, V. cholerae cells communicate with each other via quorum sensing, a process in which the microorganisms release different signaling molecules to convey messages to their brethren. Highly intelligent by bacterial standards, some multidrug resistant V. cholerae strains can also “collaborate” with other intestinal bacterial species to gain advantage and take hold of the gut. When untreated, cholera has a mortality rate of 25 to 50 percent and outbreaks frequently occur in developing countries, especially during floods and droughts.

Sometimes, however, V. cholerae makes mistakes. In 2008, researchers at Cornell University observed that when quorum sensing V. cholerae accidentally released high concentrations of a signaling molecule called CAI-1, it had a counterproductive effect—the pathogen couldn’t colonize the gut.

So the group, led by John March, professor of biological and environmental engineering, developed a novel strategy to combat V. cholerae. They genetically engineered E. coli to eavesdrop on V. cholerae communication networks and equipped it with the ability to release the CAI-1 molecules. That interfered with V. cholerae’s progress. Two years later, the Cornell team showed that V. cholerae-infected mice treated with engineered E. coli had a 92 percent survival rate.

These findings inspired researchers to sic the good bacteria present in foods like yogurt and kimchi onto the drug-resistant ones.

In 2011, Singapore-based scientists engineered E. coli to detect and destroy Pseudomonas aeruginosa, an often drug-resistant pathogen that causes pneumonia, urinary tract infections, and sepsis. Once the genetically engineered E. coli found its target through the pathogen's quorum sensing molecules, it released a peptide that could eradicate 99 percent of P. aeruginosa cells in a test-tube experiment. The team outlined their work in a Molecular Systems Biology study.

“At the time, we knew that we were entering new, uncharted territory,” says Matthew Chang, an associate professor and synthetic biologist at the National University of Singapore and lead author of the study. “To date, we are still in the process of trying to understand how long these microbes stay in our bodies and how they might continue to evolve.”

More teams followed the same path. In a 2013 study, MIT researchers also genetically engineered E. coli to detect P. aeruginosa via the pathogen’s quorum-sensing molecules. It then destroyed the pathogen by secreting a lab-made toxin.

Probiotics that fight

A year later, in 2014, a Nature study found that an abundance of Ruminococcus obeum, a probiotic bacterium naturally occurring in the human microbiome, interrupts and reduces V. cholerae’s colonization by detecting the pathogen’s quorum sensing molecules. The natural accumulation of R. obeum in Bangladeshi adults helped them recover from cholera despite living in an area with frequent outbreaks.

The findings from 2008 to 2014 inspired Collins and his team to delve into how good bacteria present in foods like yogurt and kimchi can attack drug-resistant bacteria. In 2018, Collins and his team developed an engineered probiotic strategy. They tweaked Lactococcus lactis, a bacterium commonly found in yogurt, to treat cholera.

Engineered bacteria can be trained to target pathogens when they are at their most vulnerable metabolic stage in the human gut. --José Rubén Morones-Ramírez.

More scientists followed with more experiments. So far, researchers have engineered various probiotic organisms to fight pathogenic bacteria like Staphylococcus aureus (a leading cause of skin, tissue, bone, joint and blood infections) and Clostridium perfringens (which causes watery diarrhea) in test-tube and animal experiments. In 2020, Russian scientists engineered a probiotic called Pichia pastoris to produce an enzyme called lysostaphin that eradicated S. aureus in vitro. Another 2020 study from China used an engineered probiotic bacterium, Lactobacillus casei, as a vaccine to prevent C. perfringens infection in rabbits.

In a study last year, Ramírez’s group at the Autonomous University of Nuevo León engineered E. coli to detect quorum-sensing molecules from methicillin-resistant Staphylococcus aureus, or MRSA, a notorious superbug. The E. coli then releases a bacteriocin that kills MRSA. “An antibiotic is just a molecule that is not intelligent,” says Ramírez. “On the other hand, engineered bacteria can be trained to target pathogens when they are at their most vulnerable metabolic stage in the human gut.”

Collins and Timothy Lu, an associate professor of biological engineering at MIT, found that engineered E. coli can help treat other conditions, such as phenylketonuria, a rare metabolic disorder that causes the build-up of the amino acid phenylalanine. Their start-up Synlogic aims to commercialize the technology, and has completed a phase 2 clinical trial.

Circumventing the challenges

The bacteria-engineering technique is not without pitfalls. One major challenge is that beneficial gut bacteria produce their own quorum-sensing molecules that can be similar to those that pathogens secrete. If an engineered bacterium’s biosensor is not specific enough, it will be ineffective.

Another concern is whether engineered bacteria might mutate after entering the gut. “As with any technology, there are risks where bad actors could have the capability to engineer a microbe to act quite nastily,” says Collins of MIT. But Collins and Ramírez both insist that the chances of the engineered bacteria mutating on their own are virtually non-existent. “It is extremely unlikely for the engineered bacteria to mutate,” Ramírez says. “Coaxing a living cell to do anything on command is immensely challenging. Usually, the greater risk is that the engineered bacteria entirely lose its functionality.”

However, the biggest challenge is bringing the curative bacteria to consumers. Pharmaceutical companies aren’t interested in antibiotics or their alternatives because they are less profitable than new medicines for non-infectious diseases. Unlike chronic conditions such as diabetes or cancer, which require long-term medication, infectious diseases are usually treated much more quickly. Running clinical trials is expensive, and antibiotic alternatives aren’t lucrative enough.

“Unfortunately, new medications for antibiotic resistant infections have been pushed to the bottom of the field,” says Lu of MIT. “It's not because the technology does not work. This is more of a market issue. Because clinical trials cost hundreds of millions of dollars, the only solution is that governments will need to fund them.” Lu stresses that societies must lobby to change how the modern healthcare industry works. “The whole world needs better treatments for antibiotic resistance.”