Scientists are working on eye transplants for vision loss. Who will sign up?

Often called the window to the soul, the eyes are more sacred than other body parts, at least for some.

Awash in a fluid finely calibrated to keep it alive, a human eye rests inside a transparent cubic device. This ECaBox, or Eyes in a Care Box, is a one-of-a-kind system built by scientists at Barcelona’s Centre for Genomic Regulation (CRG). Their goal is to preserve human eyes for transplantation and related research.

In recent years, scientists have learned to transplant delicate organs such as the liver, lungs or pancreas, but eyes are another story. Even when preserved at the average transplant temperature of 4 degrees Celsius, they last 48 hours at most. That's one reason why transplanting the whole eye isn’t possible: only the cornea, the dome-shaped outer layer of the eye, can withstand the procedure. The retina, the layer at the back of the eyeball that turns light into electrical signals, which the brain converts into images, is extremely difficult to transplant because it's packed with nerve tissue and blood vessels.

These challenges also make it tough to research transplantation. “This greatly limits their use for experiments, particularly when it comes to the effectiveness of new drugs and treatments,” said Maria Pia Cosma, a biologist at the CRG, whose team is working on the ECaBox.

Eye transplants are desperately needed, but they're nowhere in sight. About 12.7 million people worldwide need a corneal transplant, yet only one in 70 of those who require one gets it. The gaps are international. Eye banks in the United Kingdom are around 20 percent below the level needed to supply hospitals, while Indian eye banks, which need at least 250,000 corneas per year, collect only around 45,000 to 50,000 donor corneas (and of those, 60 to 70 percent are successfully transplanted).

As for retinas, it's currently impossible to put one into the eye of another person. Artificial devices can be implanted to restore the sight of patients suffering from severe retinal diseases, but fewer than 600 people around the world have such “bionic eyes,” while in America alone 11 million people have some type of retinal disease leading to severe vision loss. Add to this an increasingly aging population, commonly facing various vision impairments, and you have a recipe for heavy burdens on individuals, the economy and society. In the U.S. alone, the total annual economic impact of vision problems was $51.4 billion in 2017.

Even if you take the petri dish route, growing tissues into organoids that mimic the function of the human eye, you will not get the physiological complexity of the structure and metabolism of the real thing, according to Cosma. She is a member of a scientific consortium that includes researchers from major institutions in Spain, the U.K., Portugal, Italy and Israel. The consortium has received about $3.8 million from the European Union to pursue innovative eye research. Her team’s goal is to give hope to the at least 2.2 billion people across the world afflicted with a vision impairment and the 33 million who go through life with avoidable blindness.

Their method? Resuscitating cadaveric eyes for at least a month.

“We proposed to resuscitate eyes, that is to restore the global physiology and function of human explanted tissues,” Cosma said, referring to living tissues extracted from the eye and placed in a medium for culture. Their ECaBox is an ex vivo biological system, in which eyes taken from dead donors are placed in an artificial environment, designed to preserve the eye’s temperature and pH levels, deter blood clots, and remove the metabolic waste and toxins that would otherwise spell their demise.

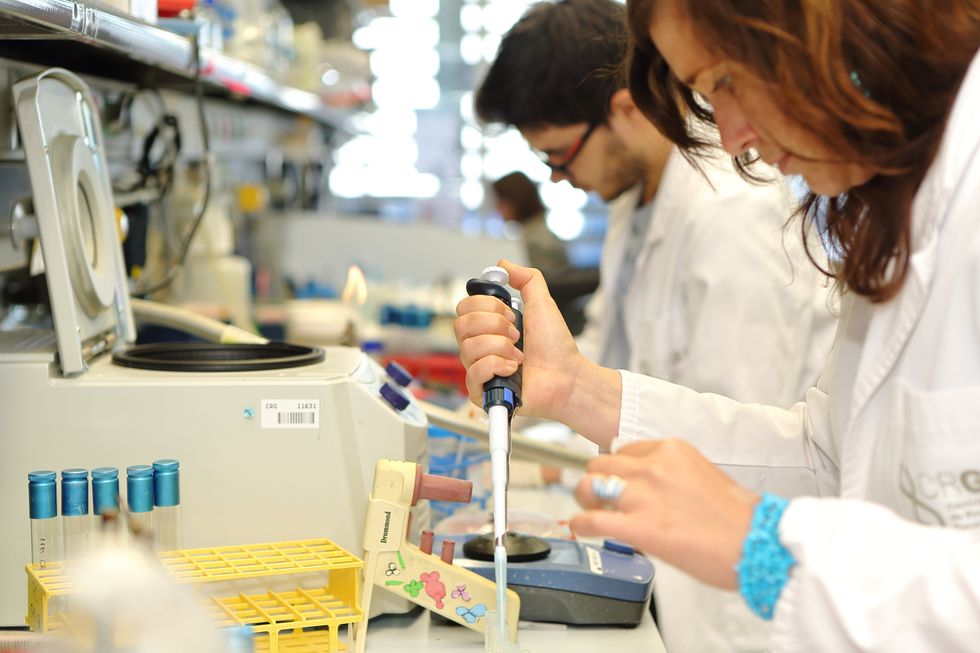

Scientists work on resuscitating eyes in the lab of Maria Pia Cosma.

Courtesy of Maria Pia Cosma.

“One of the great challenges is the passage of the blood in the capillary branches of the eye, what we call long-term perfusion,” Cosma said. Capillaries are an intricate network of very thin blood vessels that transport blood, nutrients and oxygen to cells in the body’s organs and systems. To maintain the garland-shaped structure of this network, sufficient amounts of oxygen and nutrients must be provided through the eye circulation and microcirculation. “Our ambition is to combine perfusion of the vessels with artificial blood,” along with using a synthetic form of vitreous, the gel-like fluid that lets in light and supports the eye's round shape, Cosma said.

The scientists use this novel setup with the eye submersed in its medium to keep the organ viable, so they can test retinal function. “If we succeed, we will ensure full functionality of a human organ ex vivo. It will be the first intact human model of the eye capable of exploring and analyzing regenerative processes ex vivo,” Cosma added.

The rapidly developing field of regenerative medicine aims to stimulate the body's natural healing processes and restore or replace damaged tissues and organs. But for people with retinal diseases, progress has been painfully slow. “Experiments on rodents show progress, but the risks for humans are unacceptable,” Cosma said.

The ECaBox could speed that progress. “We will test emerging treatments while reducing animal research, and greatly accelerate the discovery and preclinical research phase of new possible treatments for vision loss at significantly reduced costs,” Cosma explained. Much less time and money would be wasted during the drug discovery process. Their work may even make it possible to transplant the entire eyeball for those who need it.

“It is a very exciting project,” said Sanjay Sharma, a professor of ophthalmology and epidemiology at Queen's University, in Kingston, Canada. “The ability to explore and monitor regenerative interventions will increasingly be of importance as we develop therapies that can regenerate ocular tissues, including the retina.”

But is the world ready for eye transplants? “People are a bit weird or very emotional about donating their eyes as compared to other organs,” Cosma said. Several factors contribute to the shortage of eye donors. Concerns include disfigurement, as well as healthcare professionals’ fear that the conversation about eye donation will upset the departed person’s relatives because of cultural or religious considerations. As just one example, Sharma noted the paucity of eye donations in his home country, Canada.

Yet, experts like Sharma stress the importance of these donations for both the recipients and their family members. “It allows them some psychological benefit in a very difficult time,” he said. So why are global eye banks suffering? Is it because the eyes are the windows to the soul?

Seemingly, no sacred text or holy book prohibits the practice of eye donation. In fact, most major religions of the world permit and support organ transplantation and donation, and by extension eye donation, because they unequivocally see it as an “act of neighborly love and charity.” In Hinduism, the concept of eye donation aligns with the Hindu principle of daan or selfless giving, where individuals donate their organs or body after death to benefit others and contribute to society. In Islam, eye donation is a form of sadaqah jariyah, a perpetual charity, as it can continue to benefit others even after the donor's death.

Meanwhile, Buddhist masters teach that donating an organ gives another person the chance to live longer and practice dharma, the universal law and order, more meaningfully; they also dismiss misunderstandings of the type “if you donate an eye, you’ll be born without an eye in the next birth.” And Christian teachings emphasize the values of love, compassion, and selflessness, all compatible with organ donation, eye donation included; besides, those who will have a house in heaven will get a whole new body without imperfections and limitations.

The explanation for people’s resistance may lie in what Deepak Sarma, a professor of Indian religions and philosophy at Case Western Reserve University in Cleveland, calls “street interpretation” of religious or spiritual dogmas. Consider the mechanism of karma, which is about the causal relation between previous and current actions. “Maybe some Hindus believe there is karma in the eyes and, if the eye gets transplanted into another person, they will have to have that karmic card from now on,” Sarma said. “Even if there is peculiar karma due to an untimely death, which might be interpreted by some as bad karma, then you have the karma of the recipient, which is tremendously good karma, because they have access to these body parts, a tremendous gift.” The overall accumulation is that of good karma: “It’s a beautiful kind of balance,” Sarma added.

With that said, Sarma believes it is a fallacy to personify or anthropomorphize the eye, which doesn’t have a soul, and stresses that the karma attaches itself to the soul and not the body parts. But for scholars like Omar Sultan Haque—a psychiatrist and social scientist at Harvard Medical School, investigating questions across global health, anthropology, social psychology, and bioethics—the hierarchy of sacredness of body parts is entrenched in human psychology. You cannot equate the pinky toe with the face, he explained.

“The eyes are the window to the soul,” Haque said. “People have a hierarchy of body parts that are considered more sacred or essential to the self or soul, such as the eyes, face, and brain.” In his view, the techno-utopian transhumanist communities (especially those in Silicon Valley) have reduced the totality of a person to a mere material object, a “wet robot” that knows no sacredness or hierarchy of human body parts. “But for the Jews, Christians, and Muslims who believe in the physical resurrection of the body that will be made new in an afterlife, the [already existing] body is sacred since it will be the basis of a new refashioned body in an afterlife,” Haque said. “You cannot treat the body like any old material artifact, or old chair or ragged cloth, just because materialistic, secular ideologies want so,” he continued.

For Cosma and her peers, however, the very definition of what is alive or not is a bit semantic. “As soon as we die, the electrophysiological activity in the eye stops,” she said. “The goal of the project is to restore this activity as soon as possible before the highly complex tissue of the eye starts degrading.” Cosma’s group doesn’t yet know when they will be able to keep the eyes alive and well in the ECaBox, but the consensus is that the sooner the better. Hopefully, the taboos and fears around eye donation will dissipate around the same time.

Should Your Employer Have Access to Your Fitbit Data?

A woman using a wearable device to track her fitness activities.

The modern world has become more dependent on technology than ever. We want to accomplish as many tasks as possible with minimal human effort. And increasingly, we want our technology to go wherever we go.

At work, we are leveraging the immense computing power of tablet computers. To supplement social interaction, we have turned to smartphones and social media. Lately, another novel and exciting technology is on the rise: wearable devices that track our personal data, like the Fitbit and the Apple Watch. Interest in and demand for these devices are soaring. CCS Insight, an organization that studies developments in digital markets, has reported that the market for wearables will be worth $25 billion by next year. By 2020, it is estimated that a staggering 411 million smart wearable devices will be sold.

Although wearables include smartwatches, fitness bands, and VR/AR headsets, devices that monitor and track health data are gaining most of the traction. Apple has announced the release of Apple Health Records, a new feature for its iOS operating system that will allow users to view and store medical records on their smart devices. Hospitals such as NYU Langone have started to use this feature on Apple Watch to send push notifications to ER doctors for vital lab results, so that they can review and respond immediately. Previously, Google partnered with Novartis to develop a smart contact lens that can monitor blood glucose levels in diabetic patients, although the idea has been in limbo.

As these examples illustrate, wearable devices present unique opportunities to address some of the most intractable problems in modern healthcare. At the same time, these devices operate by collecting massive amounts of personal information on unsuspecting users and pose unique ethical challenges regarding informed consent, user privacy, and health data security. If there is a lesson from the recent Facebook debacle, it is that big data applications, even those using anonymized data, are not immune from malicious third-party data-miners.

On consent: do users of wearable devices really know what they are getting into? There is very little evidence to support the claim that consent obtained on signing up can be considered 'informed.' A few months ago, researchers from Australia published an interesting study that surveyed users of wearable devices that monitor and track health data. The survey reported that users were "highly concerned" regarding issues of privacy and considered informed consent "very important" when asked about data sharing with third parties (for advertising or data analysis).

However, users were not aware of how privacy and informed consent were related. In essence, while they seemed to understand the abstract importance of privacy, they were unaware that clicking on the "I agree" dialog box entailed giving up control of their personal health information. This is not surprising, given that most user agreements for online applications or wearable devices are written in lengthy legalese.

Privacy of health data is another unexamined ethical question. Although wearable devices have traditionally been used for promotion of healthy lifestyles (through fitness tracking) and ease of use (such as the call and message features on Apple Watch), increasing interest is coming from corporations. Tractica, a market research firm that studies trends in wearable devices, reports that corporate consumers will account for 17 percent of the market share in wearable devices by 2020 (current market share stands at 1 percent). This is because wearable devices, loaded with several sensors, offer unique insights into workers' physical activity, stress levels, sleep, and health. Companies could theoretically use this information to motivate desired behavior, throwing a modern wrench into the concept of work/life balance.

Since paying for employees' healthcare tends to be one of the largest expenses for employers, using wearable devices is seen as something that can boost the bottom line, while enhancing productivity. Even if one considers it reasonable to devise policies that promote productivity, we have yet to determine ethical frameworks that can prevent discrimination against those who may not be able-bodied, and to determine how much control employers ought to exert over the lifestyle of employees.

To be clear, wearable smart devices can address unique challenges in healthcare and elsewhere, but the focus needs to shift toward the user's needs. Data collection practices should also reflect this shift.

Privacy needs to be incorporated by design and not as an afterthought. If we were to read privacy policies properly, it could take some 180 to 300 hours per year per person. This needs to change. Privacy and consent policies ought to be in clear, simple language. If using your device means ultimately sharing your data with doctors, food manufacturers, insurers, companies, dating apps, or whoever might want access to it, then you should know that loud and clear.

The recent implementation of the European Union's General Data Protection Regulation (GDPR) is also a move in the right direction. Its protections include firm guidelines for consent, and an ability to withdraw consent; a right to access data, and to know what is being done with users' collected data; inherent privacy protections; notifications of security breaches; and strict penalties for companies that do not comply. For wearable devices in healthcare, collaborations with frontline providers would also reveal which areas can benefit from integrating wearable technology for maximum clinical benefit.

If current trends are any indication, wearable devices will play a central role in our future lives. In fact, the next generation of wearables will be implanted under our skin. This future is already visible when looking at the worrying rise in biohacking – or grinding, or cybernetic enhancement – where people attempt to enhance the physical capabilities of their bodies with do-it-yourself cybernetic devices (using hacker ethics to justify the practice).

Already, a company in Wisconsin called Three Square Market has become the first U.S. employer to offer its employees rice-grain-sized radio-frequency identification (RFID) chips, implanted under the skin between the thumb and forefinger. The company stated that these RFID chips (also available as wearable rings or bracelets) can be used to log in to computers, open doors, or use the copy machines.

Humans have always used technology to push the boundaries of what we can do. But in our pursuit of advancement, we must not erode fundamental rights to privacy and security, and not infringe on the rights of the vulnerable and marginalized. The rise of powerful wearables will also necessitate a global discussion on moral questions such as: what are the boundaries for artificially enhancing the human body, and is hacking our bodies ethically acceptable? We should think long and hard before we answer.

A lamb receiving a shot from medical personnel.

More than 114,000 men, women, and children are awaiting organ transplants in the United States. Each day, 22 of them die waiting. To address this shortage, researchers are working hard to grow organs on-demand, using the patient's own cells, to eliminate the need to find a perfectly matched donor.

But creating full-size replacement organs in a lab is still decades away. So some scientists are experimenting with the boundaries of nature and life itself: using other mammals to grow human cells. Earlier this year, this line of investigation took a big step forward when scientists announced they had grown sheep embryos that contained human cells.

Dr. Pablo Ross, an associate professor at the University of California, Davis, along with a team of colleagues, introduced human stem cells into the sheep embryos at a very early stage of their development and found that one in every 10,000 cells in the embryo was human. It was an improvement over their prior experiment, using a pig embryo, in which they found that one in every 100,000 cells in the pig was human. The resulting chimera, as the embryo is called, is only allowed to develop for 28 days. Leapsmag contributor Caren Chesler recently spoke with Ross about his research. Their interview has been edited and condensed for clarity.

Your goal is to one day grow human organs in animals, for organ transplantation. What does your research entail?

We're transplanting stem cells from a person into an animal embryo, at about day three to five of embryo development.

This concept has already been shown to work between mice and rats. You can grow a mouse pancreas inside a rat, or you can grow a rat pancreas inside a mouse.

For this approach to work for humans, the next step is to transplant these cells into a larger animal that will produce an organ that is the right size for a human. That's why we chose to start some of this preliminary work using pigs and sheep. Adult pigs and adult sheep have organs that are of similar size to an adult human. Pigs and sheep also grow really fast, so they can grow from a single cell at the time of fertilization to human adult size -- about 200 pounds -- in only nine to 10 months. That's better than the average waiting time for an organ transplant.

So how do you get the animal to grow the human organ you want?

First, we need to generate the animal without its own organ. We can generate sheep or pigs that will not grow their own pancreases. Those animals can then be used as hosts for human pancreas generation.

For the approach to work, we need the human stem cells to be able to integrate into the embryo and to contribute to its tissues. What we've been doing with pigs, and more recently, in sheep, is testing different types of stem cells, and introducing them into an early embryo at three to five days of development. We then transfer that embryo to a surrogate female and then harvest the embryos at day 28 of development, at which point most of the organs are pre-formed.

The human cells will contribute to every organ. But in trying to do that, they will compete with the host organism. Since this is happening inside a pig embryo, which is inside a pig foster mother, the pig cells will win that competition for every organ.

Because you're not putting in enough human cells?

No, because it's a pig environment. Everything is pig. The host, basically, is in control. That's what we see when we do rat mice, or mouse rat: the host always wins the battle.

But we need human cells in the early development -- a few, but not too few -- so that when an organ needs to form, like a pancreas (which develops at around day 25), the pig cells will not respond to that, but if there are human cells in that location, [those human cells] can respond to pancreas formation.

From the work in mice and rats, we know we need some kind of global contribution across multiple tissues -- even a 1% contribution will be sufficient. But if the cells are not there, then they're not going to contribute to that organ. The way we target the specific organ is by removing the competition for that organ.

So if you want it to grow a pancreas, you use an embryo that is not going to grow a pancreas of its own. But you can't control where the other cells go. For instance, you don't want them going to the animal's brain – or its gonads – right?

You don't want the cells to confer any human characteristics in the animal. But even if cells go to the brain, it's not going to confer on the animal human characteristics. A few human cells, even if they're in the brain, won't make it a human brain. Too many cells, that may be a problem, because we do not know what that threshold is.

The objective of our research right now is to look at just 28 days of embryonic development and evaluate what's going on: Are the human cells there? How many? Do they go to the brain? If so, how many? Is this a problem, or is it not a problem? If we find that too many human cells go to the brain, that will probably mean that we wouldn't continue with this approach. At this point, we're not controlling it; we're analyzing it.

What other ethical concerns have arisen?

Conferring human properties to the organism, that is a major concern. I wouldn't like to be involved in that, and so that's what we're trying to assess. By keeping our research in a very early stage of development, we're not creating a human or a humanoid or anything in between.

What specifically sets off the ethical alarms? An animal developing human traits?

Animals developing human characteristics goes beyond what would be considered acceptable. I share that concern. But so far, what we have observed, primarily in rats and mice, is that the host animal dictates development. When you put mouse cells into a rat -- and they're so closely related, sometimes the mouse cells contribute to about 30 percent of the cells in the animal -- the outcome is still a rat. It's the size of a rat. It's the shape of the rat. It has the organ sizes of a rat. Even when the pancreas is fully made out of mouse cells, the pancreas is rat-sized because it grew inside the rat.

This happens even with an organ that is not shared, like a gallbladder, which mice have but rats do not. If you put cells from a mouse into a rat, it never grows a gallbladder. And if you put rat cells into the mouse, the rat cells can end up in the gallbladder even though those rat cells would never have made a gallbladder in a rat.

That means the cell structure is following the directions of the embryo, in terms of how they're going to form and what they're going to make. Based on those observations, if you put human cells into a sheep, we are going to get a sheep with human cells. The organs, the pancreas, in our case, will be the size and shape of the sheep pancreas, but it will be loaded with human cells identical to those of the patient that provided the cells used to generate the stem cells.

But, yeah, if by doing this, the animal acquires the functional or anatomical characteristics associated with a human, it would not be acceptable for me.

So you think these concerns are justified?

Absolutely. They need to be considered. But sometimes by raising these concerns, we prevent technologies from being developed. We need to consider the concerns, but we must evaluate them fully, to determine if they are scientifically justified. Because while we must consider the ethics of doing this, we also need to consider the ethics of not doing it. Every day, 22 people in the U.S. die because they don't receive the organ they need to survive. This shortage is not going to be solved by donations alone. That's clear. And when people die of old age, their organs are not good anymore.

Since organ transplantation has been so successful, the number of people needing organs has just been growing. The number of organs available has also grown but at a much slower pace. We need to find an alternative, and I think growing the organs in animals is one of those alternatives.

Right now, there's a moratorium on National Institutes of Health funding?

Yes. It's only one agency, but it happens to be the largest biomedical funding source. We have public funding for this work from the California Institute for Regenerative Medicine, and one of my colleagues has funding from the Department of Defense.

Can we put the moratorium in context? How much research in the U.S. is funded by the NIH?

Probably more than 75 percent.

So what kind of impact would lifting that ban have on speeding up possible treatments for those who need a new organ?

Oh, I think it would have a huge impact. The moratorium not only prevents people from seeking funding to advance this area of research, it influences other sources of funding, who think, well, if the NIH isn't doing it, why are we going to do it? It hinders progress.

So with the ban, how long until we can really have organs growing in animals? I've heard five or 10 years.

With or without the ban, I don't think I can give you an accurate estimate.

What we know so far is that human cells don't contribute a lot to the animal embryo. We don't know exactly why. We have a lot of good ideas about things we can test, but we can't move forward right now because we don't have funding -- or we're moving forward but very slowly. We're really just scratching the surface in terms of developing these technologies.

We still need that one major leap in our understanding of how different species interact, and how human cells participate in the development of other species. I cannot predict when we're going to reach that point. I can say, without NIH funding, it's not going to happen here. It may happen in other places, like China, but without NIH funding, it's not going to happen in the U.S.

I think it's important to mention that this is in a very early stage of development and it should not be presented to people who need an organ as something that is possible right now. It's not fair to give false hope to people who are desperate.

So the five to 10 year figure is not realistic.

I think it will take longer than that. If we had a drug right now that we knew could stop heart attacks, it could take five to 10 years just to get it to market. With this, you're talking about a much more complex system. I would say 20 to 25 years. Maybe.