The U.S. must fund more biotech innovation – or other countries will catch up faster than you think

In the coming years, U.S. market share in biotech will decline unless the federal government makes investments to improve the quality and quantity of U.S. research, writes the author.

The U.S. has approximately 58 percent of the market share in the biotech sector, followed by China with 11 percent. However, this market share is the result of several years of previous research and development (R&D) – it is a present picture of what happened in the past. In the future, this market share will decline unless the federal government makes investments to improve the quality and quantity of U.S. research in biotech.

The effectiveness of current R&D can be evaluated in a variety of ways such as monies invested and the number of patents filed. According to the UNESCO Institute for Statistics, the U.S. spends approximately 2.7 percent of GDP on R&D ($476,459.0M), whereas China spends 2 percent ($346,266.3M). However, investment levels do not necessarily translate into goods that end up contributing to innovation.

Patents are a better indication of innovation. The biotech industry relies on patents to protect its investments, making patenting a key tool in the process of translating scientific discoveries into products that can ultimately benefit patients. In 2020, China filed 1,497,159 patent applications, a 6.9 percent increase over the previous year. In contrast, the U.S. filed 597,172, a 3.9 percent decline. When it comes to patents filed, China has approximately 45 percent of the world share compared to 18 percent for the U.S.

So how did we get here? The nature of science in academia allows scientists to specialize by dedicating several years to advance discovery research and develop new inventions that can then be licensed by biotech companies. This makes academic science critical to innovation in the U.S. and abroad.

Academic scientists rely on government and foundation grants to pay for R&D, which includes salaries for faculty, investigators and trainees, as well as monies for infrastructure, support personnel and research supplies. To cover these costs, academic scientists are particularly interested in government support such as Research Project Grants, also known as R01 grants, the oldest grant mechanism from the National Institutes of Health. Unfortunately, this funding mechanism is extremely competitive, with applications succeeding only about 20 percent of the time. To maximize the chances of getting funded, investigators tend to limit the innovation of their applications, since grant reviewers discourage projects that seem overambitious.

Limiting the innovation of grant applications affects the future success of the R&D enterprise in the U.S. Pursuing less innovative work tends to produce scientific results that are more obvious than groundbreaking, and when a discovery is obvious, it cannot be patented, resulting in fewer inventions that go on to benefit patients. Even though there are governmental funding options available for scientists in academia focused on more groundbreaking and translational projects, those options are less coveted by academic scientists who are trying to obtain tenure and long-term funding to cover salaries and other associated laboratory expenses. Therefore, since only a small percentage of projects get funded, the number of scientists interested in pursuing academic science, or even research in general, keeps declining over time.

Efforts to raise the number of individuals who pursue a scientific education are paying off. However, the number of job openings for those trainees to carry out independent scientific research once they graduate has proved harder to increase. These limitations are not just in the number of faculty openings to pursue academic science, which are in part related to grant funding, but also in the low salaries available to those scientists after they obtain their doctoral degree, which range from $53,000 to $65,000, depending on years of experience.

Thus, considering the difficulty in obtaining funding, the limited number of opportunities for scientists to become independent investigators capable of leading their own scientific projects, and the salaries available to pay for scientists with a doctoral degree, it is not surprising that the U.S. is progressively losing its workforce for innovation, which results in fewer patents filed.

Perhaps instead of encouraging scientists to propose less innovative projects in order to increase their chances of getting grants, the U.S. government should give serious consideration to funding investigators for their potential for success -- or the success they have already achieved in contributing to the advancement of science. Such a funding approach should be tiered depending on career stage or years of experience, considering that 42 years old is the median age at which the first R01 is obtained. This suggests that after finishing their training, scientists spend 10 years before they establish themselves as independent academic investigators capable of having the appropriate funds to train the next generation of scientists who will help the U.S. maintain or even expand its market share in the biotech industry for years to come. Patenting should be given more weight as part of the academic endeavor for promotion purposes, or governmental investment in research funding should be increased to support more than just 20 percent of projects.

Remaining at the forefront of biotech innovation will not just generate more jobs; it will also allow us to attract the brightest scientists from all over the world. This talented workforce will go on to train future U.S. scientists and will improve our standard of living by giving us the opportunity to produce the next generation of therapies intended to improve human health.

This problem cannot rely on just one solution, but what is certain is that unless there are more creative changes in funding approaches for scientists in academia, eventually we may be saying “remember when the U.S. was at the forefront of biotech innovation?”

Beyond Henrietta Lacks: How the Law Has Denied Every American Ownership Rights to Their Own Cells

A 2017 portrait of Henrietta Lacks.

The common perception is that Henrietta Lacks was a victim of poverty and racism when in 1951 doctors took samples of her cervical cancer without her knowledge or permission and turned them into the world's first immortalized cell line, which they called HeLa. The cell line became a workhorse of biomedical research and facilitated the creation of medical treatments and cures worth untold billions of dollars. Neither Lacks nor her family ever received a penny of those riches.

But racism and poverty are not to blame for Lacks' exploitation; the reality is even worse. In fact, all patients, then and now, regardless of social or economic status, have absolutely no right to cells that are taken from their bodies. Some have called this biological slavery.

How We Got Here

The case that established this legal precedent is Moore v. Regents of the University of California.

John Moore was diagnosed with hairy-cell leukemia in 1976 and his spleen was removed as part of standard treatment at the UCLA Medical Center. On initial examination his physician, David W. Golde, had discovered some unusual qualities to Moore's cells and made plans prior to the surgery to have the tissue saved for research rather than discarded as waste. That research began almost immediately.

Even after Moore moved to Seattle, Golde kept bringing him back to Los Angeles to collect additional samples of blood and tissue, saying it was part of his treatment. When Moore asked if the work could be done in Seattle, he was told no. Golde's charade even went so far as claiming to have found a low-income subsidy that would pay for Moore's flights and put him up in a ritzy hotel to get him to return to Los Angeles, when in fact Golde was paying for those out of his own pocket.

Moore became suspicious when he was asked to sign new consent forms giving up all rights to his biological samples, and he hired an attorney to look into the matter. It turned out that Golde had been lying to his patient all along; he had been collecting samples unnecessary to Moore's treatment and had turned them into a cell line that he and UCLA had patented and from which they had already collected millions of dollars in compensation. The market for the cell lines was estimated at $3 billion by 1990.

Moore felt he had been taken advantage of and filed suit to claim a share of the money that had been made off of his body. "On both sides of the case, legal experts and cultural observers cautioned that ownership of a human body was the first step on the slippery slope to 'bioslavery,'" wrote Priscilla Wald, a professor at Duke University whose career has focused on issues of medicine and culture. "Moore could be viewed as asking to commodify his own body part or be seen as the victim of the theft of his most private and inalienable information."

The case bounced around different levels of the court system with conflicting verdicts for nearly six years until the California Supreme Court ruled on July 9, 1990 that Moore had no legal rights to cells and tissue once they were removed from his body.

The court made a utilitarian argument that the cells had no value until scientists manipulated them in the lab. It also reasoned that it would be too burdensome for researchers to track individual donations and subsequent cell lines to ensure that they had been ethically gathered and used, and that doing so would impinge on the free sharing of materials between scientists, slow research, and harm the public good that arose from such research.

"In effect, what Moore is asking us to do is impose a tort duty on scientists to investigate the consensual pedigree of each human cell sample used in research," the majority wrote. In other words, researchers don't need to ask any questions about the materials they are using.

One member of the court did not see it that way. In his dissent, Stanley Mosk raised the specter of slavery that "arises wherever scientists or industrialists claim, as defendants have here, the right to appropriate and exploit a patient's tissue for their sole economic benefit—the right, in other words, to freely mine or harvest valuable physical properties of the patient's body. … This is particularly true when, as here, the parties are not in equal bargaining positions."

Mosk also cited the appeals court decision that the majority overturned: "If this science has become for profit, then we fail to see any justification for excluding the patient from participation in those profits."

But the majority bought the arguments that Golde, UCLA, and the nascent biotechnology industry in California had made in amici briefs filed throughout the legal proceedings. The road was now cleared for them to develop products worth billions without having to worry about or share with the persons who provided the raw materials upon which their research was based.

Critical Views

Biomedical research requires a continuous and ever-growing supply of human materials for the foundation of its ongoing work. If an increasing number of patients come to feel as John Moore did, that the system is ripping them off, then they become much less likely to consent to use of their materials in future research.

Some legal and ethical scholars say that donors should be able to limit the types of research allowed for their tissues and that researchers should be monitored to ensure compliance with those agreements. For example, today it is commonplace for companies to certify that their clothing is not made with child labor, that their coffee is grown under fair trade conditions, and that food labeled kosher is properly handled. Should we ask any less of our pharmaceuticals than that the donors whose cells made such products possible have been treated honestly and fairly, and share in the financial bounty that comes from such drugs?

Protection of individual rights is a hallmark of the American legal system, says Lisa Ikemoto, a law professor at the University of California Davis. "Putting the needs of a generalized public over the interests of a few often rests on devaluation of the humanity of the few," she writes in a reimagined version of the Moore decision that upholds Moore's property claims to his excised cells. The commentary is in a chapter of a forthcoming book in the Feminist Judgment series, where authors may only use legal precedent in effect at the time of the original decision.

"Why is the law willing to confer property rights upon some while denying the same rights to others?" asks Radhika Rao, a professor at the University of California, Hastings College of the Law. "The researchers who invest intellectual capital and the companies and universities that invest financial capital are permitted to reap profits from human research, so why not those who provide the human capital in the form of their own bodies?" It might be seen as a kind of sweat equity where cash-strapped patients make a valuable in-kind contribution to the enterprise.

The Moore court also made a big deal about inhibiting the free exchange of samples between scientists. Such free exchange has become much less common in the more than three decades since the decision was handed down. Ironically, this decision, as well as other laws and regulations, has since strengthened the power of patents in biomedicine and by doing so has increased secrecy and limited sharing.

"Although the research community theoretically endorses the sharing of research, in reality sharing is commonly compromised by the aggressive pursuit and defense of patents and by the use of licensing fees that hinder collaboration and development," Harvard Medical School ethicist Robert D. Truog and colleagues wrote in 2012 in the journal Science. "We believe that measures are required to ensure that patients not bear all of the altruistic burden of promoting medical research."

Additionally, the increased complexity of research and the need for exacting standardization of materials has given rise to an industry that supplies certified chemical reagents, cell lines, and whole animals bred to have specific genetic traits to meet research needs. This has been more efficient for research and has helped to ensure that results from one lab can be reproduced in another.

The Court's rationale of fostering collaboration and free exchange of materials between researchers also has been undercut by the changing structure of that research. Big pharma has shrunk the size of its own research labs and over the last decade has worked out cooperative agreements with major research universities where the companies contribute to the research budget and in return have first dibs on any findings (and sometimes a share of patent rights) that come out of those university labs. These arrangements have had a chilling effect on the exchange of materials between universities.

Perhaps tracking cell line donors and use restrictions on those donations might have been burdensome to researchers when Moore was being litigated. Some labs probably still kept their cell line records on 3x5 index cards, computers were primarily expensive room-size behemoths with limited capacity, the internet barely existed, and there was no cloud storage.

But that was the dawn of a new technological age and standards have changed. Now cell lines are kept in state-of-the-art sub-zero storage units, tagged with the source, type of tissue, date gathered and often other information. Adding a few more data fields and contacting the donor if and when appropriate is unlikely to disrupt the research process in the way the court asserted it would.

Forging the Future

"U.S. universities are awarded almost 3,000 patents each year. They earn more than $2 billion each year from patent royalties. Sharing a modest portion of these profits is a novel method for creating a greater sense of fairness in research relationships that we think is worth exploring," wrote Mark Yarborough, a bioethicist at the University of California Davis Medical School, and colleagues. That was penned nearly a decade ago and those numbers have only grown.

The Michigan BioTrust for Health might serve as a useful model in tackling some of these issues. Dried blood spots have been collected from all newborns for half a century to be tested for certain genetic diseases, but controversy arose when the huge archive of dried spots was used for other research projects. As a result, the state created a nonprofit organization to in essence become a biobank and manage access to these spots only for specific purposes, and also to share any revenue that might arise from that research.

"If there can be no property in a whole living person, does it stand to reason that there can be no property in any part of a living person? If there were, can it be said that this could equate to some sort of 'biological slavery'?" Irish ethicist Asim A. Sheikh wrote several years ago. "Any amount of effort spent pondering the issue of 'ownership' in human biological materials with existing law leaves more questions than answers."

Perhaps the biggest question will arise when -- not if but when -- it becomes possible to clone a human being. Would a human clone be a legal person or the property of those who created it? Current legal precedent points to it being the latter.

Today, October 4, is the 70th anniversary of Henrietta Lacks' death from cancer. Over those decades her immortalized cells have helped make possible miraculous advances in medicine and have had a role in generating billions of dollars in profits. Surviving family members have spoken many times about seeking a share of those profits in the name of social justice; they intend to file lawsuits today. Such cases will succeed or fail on their own merits. But regardless of their specific outcomes, one can hope that they spark a larger public discussion of the role of patients in the biomedical research enterprise and lead to establishing a legal and financial claim for their contributions toward the next generation of biomedical research.

Is a Successful HIV Vaccine Finally on the Horizon?

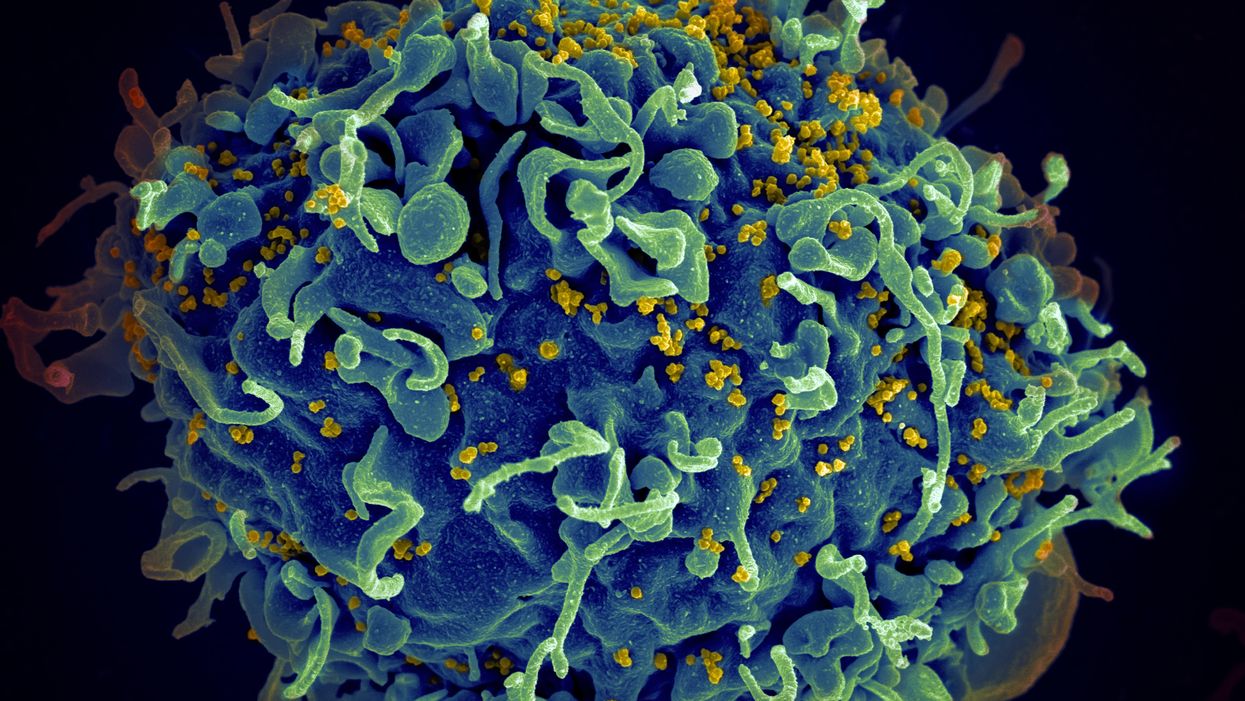

The HIV virus (yellow) infecting a human cell.

Few vaccines have been as complicated—and filled with false starts and crushed hopes—as the development of an HIV vaccine.

While antivirals help HIV-positive patients live longer and reduce viral transmission to virtually nil, these medications must be taken for life, and preventative medications like pre-exposure prophylaxis, known as PrEP, need to be taken every day to be effective. Vaccines, even if they need boosters, would make prevention much easier.

In August, Moderna began human trials for two HIV vaccine candidates based on messenger RNA.

As they have with the Covid-19 pandemic, mRNA vaccines could change the game. The technology could be applied for gene editing therapy, cancer, other infectious diseases—even a universal influenza vaccine.

In the past, three other mRNA vaccines completed phase-2 trials without success. But the easily customizable platforms mean the vaccines can be tweaked to better target HIV as researchers learn more.

Ever since HIV was discovered as the virus causing AIDS, researchers have been searching for a vaccine. But the decades-long journey has so far been fruitless; while some vaccine candidates showed promise in early trials, none of them have worked well in later-stage clinical trials.

There are two main reasons for this: HIV evolves incredibly quickly, and the structure of the virus makes it very difficult to neutralize with antibodies.

"You know the panic that goes on when a new coronavirus variant surfaces?" asked John Moore, professor of microbiology and immunology at Weill Cornell Medicine who has researched HIV vaccines for 25 years. "With HIV, that kind of variation [happens] pretty much every day in everybody who's infected. It's just orders of magnitude more variable a virus."

Vaccines like these usually work by imitating the outer layer of a virus to teach cells how to recognize and fight off the real thing before it enters the cell. "If you can prevent landing, you can essentially keep the virus out of the cell," said Larry Corey, the former president and director of the Fred Hutchinson Cancer Research Center who helped run a recent trial of a Johnson & Johnson HIV vaccine candidate, which failed its first efficacy trial.

Like the coronavirus, HIV also has a spike protein with a receptor-binding domain—what Moore calls "the notorious RBD"—that could be neutralized with antibodies. But while that target sticks out like a sore thumb in a virus like SARS-CoV-2, in HIV it's buried under a dense shield. That's not the only target for neutralizing the virus, but all of the targets evolve rapidly and are difficult to reach.

"We understand these targets. We know where they are. But it's still proving incredibly difficult to raise antibodies against them by vaccination," Moore said.

In fact, mRNA vaccines for HIV have been under development for years. The Covid vaccines were built on decades of that research. But it's not as simple as building on this momentum, because of how much more complicated HIV is than SARS-CoV-2, researchers said.

"They haven't succeeded because they were not designed appropriately and haven't been able to induce what is necessary for them to induce," Moore said. "The mRNA technology will enable you to produce a lot of antibodies to the HIV envelope, but if they're the wrong antibodies that doesn't solve the problem."

Part of the problem is that the HIV vaccines have to perform better than our own immune systems. Many vaccines are created by imitating how our bodies overcome an infection, but that doesn't happen with HIV. Once you have the virus, you can't fight it off on your own.

"The human immune system actually does not know how to innately cure HIV," Corey said. "We needed to improve upon the human immune system to make it quicker… with Covid. But we have to actually be better than the human immune system" with HIV.

But in the past few years, there have been impressive leaps in understanding how an HIV vaccine might work. Scientists have known for decades that neutralizing antibodies are key for a vaccine. But in 2010 or so, they were able to mimic the HIV spike and understand how antibodies need to disable the virus. "It helps us understand the nature of the problem, but doesn't instantly solve the problem," Moore said. "Without neutralizing antibodies, you don't have a chance."

Because the vaccines need to induce broadly neutralizing antibodies, and because it's very difficult to neutralize the highly variable HIV, any vaccine will likely be a series of shots that teach the immune system to be on the lookout for a variety of potential attacks.

"Each dose is going to have to have a different purpose," Corey said. "And we hope by the end of the third or fourth dose, we will achieve the level of neutralization that we want."

That's not ideal, because each individual component has to be made and tested—and four shots make the vaccine harder to administer.

"You wouldn't even be going down that route, if there was a better alternative," Moore said. "But there isn't a better alternative."

The mRNA platform is exciting because it is easily customizable, which is especially important in fighting against a shapeshifting, complicated virus. And the mRNA platform has shown itself, in the Covid pandemic, to be safe and quick to make. Effective Covid vaccines were comparatively easy to develop, since the coronavirus is easier to battle than HIV. But companies like Moderna are capitalizing on their success to launch other mRNA therapeutics and vaccines, including the HIV trial.

"You can make the vaccine in two months, three months, in a research lab, and not a year—and the cost of that is really less," Corey said. "It gives us a chance to try many more options, if we've got a good response."

In a trial on macaque monkeys, the Moderna vaccine reduced the chances of infection by 85 percent. "The mRNA platform represents a very promising approach for the development of an HIV vaccine in the future," said Dr. Peng Zhang, who is helping lead the trial at the National Institute of Allergy and Infectious Diseases.

Moderna's trial in humans represents "a very exciting possibility for the prevention of HIV infection," Dr. Monica Gandhi, director of the UCSF-Gladstone Center for AIDS Research, said in an email. "We in HIV medicine have been desperate to find a vaccine that has effectiveness, but this goal has been elusive so far."

If a successful HIV vaccine is developed, the series of shots could include an mRNA shot that primes the immune system, followed by protein subunits that generate the necessary antibodies, Moore said.

"I think it's the only thing that's worth doing," he said. "Without something complicated like that, you have no chance of inducing broadly neutralizing antibodies."

"I can't guarantee you that's going to work," Moore added. "It may completely fail. But at least it's got some science behind it."