A skin patch to treat peanut allergies teaches the body to tolerate the nuts

Peanut allergies affect about a million children in the U.S., and most never outgrow them. Luckily, some promising remedies are in the works.

Ever since he was a baby, Sharon Wong’s son Brandon had suffered from rashes, prolonged respiratory issues and vomiting. In 2006, as a young child, he was diagnosed with a severe peanut allergy.

“My son had a history of reacting to traces of peanuts in the air or in food,” says Wong, a food allergy advocate who runs a blog focusing on nut-free recipes, cooking techniques and food allergy awareness. “Any participation in school activities, social events, or travel with his peanut allergy required a lot of preparation.”

Peanut allergies affect around a million children in the U.S., and most never outgrow the condition. The problem occurs when the immune system mistakenly views the proteins in peanuts as a threat and releases chemicals to counteract it. This can lead to digestive problems, hives and shortness of breath. For some, like Wong’s son, even exposure to trace amounts of peanuts can be life-threatening: they can go into anaphylactic shock and need a shot of adrenaline as soon as possible.

Typically, people with peanut allergies try to completely avoid them and carry an adrenaline autoinjector like an EpiPen in case of emergencies. This constant vigilance is very stressful, particularly for parents with young children.

“The search for a peanut allergy ‘cure’ has been a vigorous one,” says Claudia Gray, a pediatrician and allergist at Vincent Pallotti Hospital in Cape Town, South Africa. The closest thing to a solution so far, she says, is the process of desensitization, which exposes the patient to gradually increasing doses of peanut allergen to build up a tolerance. The most common type of desensitization is oral immunotherapy, where patients ingest small quantities of peanut powder. It has been effective, but there is a risk of anaphylaxis since it involves swallowing the allergen.

DBV Technologies, a company based in Montrouge, France, has created a skin patch to address this problem. The Viaskin patch contains much less peanut allergen than oral immunotherapy uses and delivers it through the skin to slowly increase tolerance, which decreases the risk of anaphylaxis.

Wong heard about the peanut patch and wanted her son to take part in an early phase 2 trial for 4-to-11-year-olds.

“We felt that participating in DBV’s peanut patch trial would give him the best chance at desensitization, or at least increase his tolerance from a speck of peanut to a peanut,” Wong says. “The daily routine was quite simple: remove the old patch and then apply a new one. By the end of the trial, he tolerated approximately 1.5 peanuts.”

How it works

For DBV Technologies, it all began when pediatric gastroenterologist Pierre-Henri Benhamou teamed up with fellow professor of gastroenterology Christophe Dupont and his brother, engineer Bertrand Dupont. Together they created a more effective skin patch test to detect cow's milk allergy in babies. Then they realized that the patch could also be used to treat allergies by promoting tolerance. They decided to focus on peanut allergies first because they are the more dangerous.

The Viaskin patch takes advantage of the skin’s ability to promote tolerance to external stimuli. As the body’s first line of defense, the skin must keep the extent of its immune responses in check, so it has mechanisms that not only defend against external stimuli but can also promote tolerance to them.

The patch consists of an adhesive foam ring with a plastic film on top. A small amount of peanut protein is placed in the center. The adhesive ring is attached to the patient's back. The peanut protein sits above the skin but does not directly touch it. As the patient sweats, water droplets form on the inside of the film and dissolve the peanut protein, which is then absorbed into the skin.

The peanut protein is then captured by skin cells called Langerhans cells, which play an important role in getting the immune system to tolerate certain external stimuli. The Langerhans cells carry the peanut protein to the lymph nodes, where they activate regulatory T cells, which suppress the allergic response.

A fresh patch is applied to the skin every day to build tolerance, and the routine is both easy and convenient.

“The DBV approach uses much smaller amounts than oral immunotherapy and works through the skin, significantly reducing the risk of allergic reactions,” says Edwin H. Kim, the division chief of Pediatric Allergy and Immunology at the University of North Carolina, U.S., and one of the principal investigators of Viaskin’s clinical trials. “By not going through the mouth, the patch also avoids the taste and texture issues. Finally, the ability to apply a patch and immediately go about your day may be very attractive to very busy patients and families.”

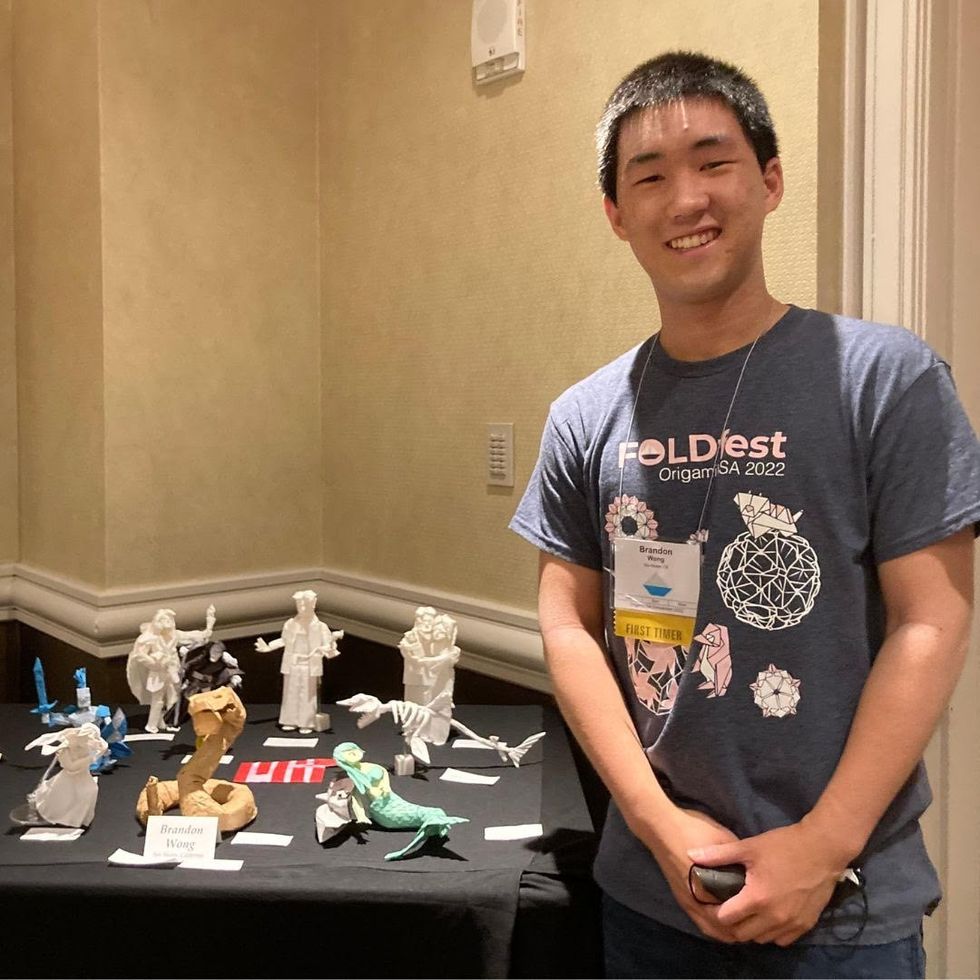

Brandon Wong displaying origami figures he folded at an Origami Convention in 2022

Sharon Wong

Clinical trials

Results from DBV's phase 3 trial in children ages 1 to 3 show its potential. To count as a positive result, toddlers who reacted to 10 milligrams or less of peanut protein at the start of the trial had to be able to tolerate 300 mg or more after 12 months. Toddlers who could already tolerate more than 10 mg needed to be able to manage 1,000 mg or more. In the end, 67 percent of subjects using the Viaskin patch met the target, compared with 33 percent of those using a placebo patch.
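To make the two-tier success criterion concrete, here is a minimal sketch of it as a Python function. It assumes, based on the article's description, that toddlers are split at a 10 mg baseline threshold and that a participant "meets the target" when the dose tolerated after 12 months reaches 300 mg or 1,000 mg respectively. The function names and the example data are hypothetical illustrations, not DBV's protocol or trial results.

```python
def is_responder(baseline_mg: float, month12_mg: float) -> bool:
    """Return True if a participant meets the target described in the article.

    Assumption: a baseline threshold of 10 mg or less puts the child in the
    more-sensitive group (target 300 mg); above 10 mg, the target is 1,000 mg.
    """
    target_mg = 300 if baseline_mg <= 10 else 1000
    return month12_mg >= target_mg


def response_rate(participants: list[tuple[float, float]]) -> float:
    """Fraction of (baseline_mg, month12_mg) pairs that meet the target."""
    if not participants:
        return 0.0
    hits = sum(is_responder(b, m) for b, m in participants)
    return hits / len(participants)


# Hypothetical example data, not trial results
example = [(3, 300), (10, 100), (30, 1000), (100, 300)]
print(response_rate(example))  # 0.5
```

The higher bar for less sensitive children reflects the article's logic: a toddler who already tolerated more than 10 mg has to show a larger absolute gain to count as a success.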

“The Viaskin peanut patch has been studied in several clinical trials to date with promising results,” says Suzanne M. Barshow, assistant professor of medicine in allergy and asthma research at Stanford University School of Medicine in the U.S. “The data shows that it is safe and well-tolerated. Compared to oral immunotherapy, treatment with the patch results in fewer side effects but appears to be less effective in achieving desensitization.”

The main reason the patch is less potent is that oral immunotherapy uses a much larger amount of the allergen. In addition, absorption of the peanut protein through the skin can be erratic.

Gray also highlights that there is some tradeoff between risk and efficacy.

“The peanut patch is an exciting advance but not as effective as the oral route,” Gray says. “For those patients who are very sensitive to orally ingested peanut in oral immunotherapy or have an aversion to oral peanut, it has a use. So, essentially, the form of immunotherapy will have to be tailored to each patient.” Having different options, such as the Viaskin patch applied to the skin or pills that patients can swallow or dissolve under the tongue, helps make that tailoring possible.

The hope is that the patch’s efficacy will increase over time. The team is currently running a follow-up trial, where the same patients continue using the patch.

“It is a very important study to show whether the benefit achieved after 12 months on the patch stays stable or hopefully continues to grow with longer duration,” says Kim, who is an investigator in this follow-up trial.

The team is further ahead with the phase 3 follow-up trial for 4-to-11-year-olds. The initial phase 3 trial in that age group was not as successful as the trial for kids between one and three: the patch enabled patients to tolerate more peanut protein, but the difference compared with the placebo group was not large enough to be conclusive. The follow-up trial showed greater potency, suggesting that the longer patients stay on the patch, the stronger its effects.

In a trial with a new group of 4-to-11-year-olds, the team is also testing whether making the patch bigger, changing its shape and extending the minimum time it’s worn can improve its benefits.

The future

DBV Technologies is also testing the skin patch as a treatment for cow’s milk allergy in children ages 1 to 17; those trials are currently in phase 2.

As for the peanut allergy trials in toddlers, the hope is to see more efficacy soon.

For Wong’s son, who took part in the earlier phase 2 trial for 4-to-11-year-olds, the patch has been life-changing.

“My son continues to maintain his peanut tolerance and is not affected by peanut dust in the air or cross-contact,” Wong says. “He attends university in Massachusetts, lives on-campus, and eats dorm food. He still carries an EpiPen but has so much more freedom than before his clinical trial. We will always be grateful.”

Giving robots self-awareness as they move through space, and maybe even providing them with gene-like methods for storing rules of behavior, could be important steps toward creating more intelligent machines.

One day in the recent past, scientists at Columbia University’s Creative Machines Lab set up a robotic arm inside a circle of five streaming video cameras and let the robot watch itself move, turn and twist. For about three hours the robot did exactly that, looking at itself this way and that, like a toddler exploring itself in a room full of mirrors. By the time the robot stopped, its internal neural network had finished learning the relationship between the robot’s motor actions and the volume it occupied in its environment. In other words, the robot built a sense of spatial self-awareness, much as humans do. “We trained its deep neural network to understand how it moved in space,” says Boyuan Chen, one of the scientists who worked on it.

For decades robots have been doing helpful tasks that are too hard, too dangerous, or physically impossible for humans to carry out themselves. Robots are far superior to humans at complex calculations, following rules to a T and repeating the same steps perfectly. But even the biggest successes in human-robot collaboration, in the manufacturing and automotive industries, still require keeping the two apart for safety reasons. Hardwired for a limited set of tasks, industrial robots don't have the intelligence to know where their robo-parts are in space, how fast they’re moving and when they might endanger a human.

Over the past decade or so, humans have begun to expect more from robots. Engineers have been building smarter versions that can avoid obstacles, follow voice commands, respond to human speech and make simple decisions. Some of them proved invaluable in natural and man-made disasters like earthquakes, forest fires, nuclear accidents and chemical spills. These disaster recovery robots helped clean up dangerous chemicals, looked for survivors in collapsed buildings, and ventured into radioactive areas to assess damage.

Now roboticists are going a step further, training their creations to do even better: understand their own image in space and interact with humans like humans do. Today, there are already robot-teachers like KeeKo, robot-pets like Moffin, robot-babysitters like iPal, and robotic companions for the elderly like Pepper.

But even these reasonably intelligent creations still have huge limitations, some scientists think. “There are niche applications for the current generations of robots,” says Anthony Zador, a professor at Cold Spring Harbor Laboratory. But they are not “generalists” who can do varied tasks on their own, as they mostly lack the ability to improvise, make decisions based on a multitude of facts or emotions, and adjust to rapidly changing circumstances. “We don’t have general purpose robots that can interact with the world. We’re ages away from that.”

Robotic spatial self-awareness, the achievement of the team at Columbia, is an important step toward creating more intelligent machines. Hod Lipson, the professor of mechanical engineering who runs the Columbia lab, says that future robots will need this ability to assist humans better. Knowing how it looks and where its parts are in space decreases a robot’s need for human oversight. It also helps the robot detect and compensate for damage and keep up with its own wear and tear, and it allows robots to realize when something is wrong with them or their parts. “We want our robots to learn and continue to grow their minds and bodies on their own,” Chen says. That’s what Zador wants too, on a much grander level. “I want a robot who can drive my car, take my dog for a walk and have a conversation with me.”

Columbia scientists have trained a robot to become aware of its own "body," so it can map the right path to touch a ball without running into an obstacle, in this case a square.

Jane Nisselson and Yinuo Qin / Columbia Engineering

Today’s technological advances are making some of these leaps possible. One of them is deep learning, a method that trains artificial intelligence systems to learn and use information in a way similar to how humans do. A machine learning method based on neural network architectures with multiple layers of processing units, deep learning has been used to successfully teach machines to recognize images, understand speech and even write text.

One of these language models, Google’s BERT, can fill in missing words to finish sentences. Another, GPT-3, designed by the San Francisco-based company OpenAI, can write short stories. Yet both still make funny mistakes in their linguistic exercises that even a child wouldn’t. According to a paper published by Stanford’s Center for Research on Foundation Models, BERT seems not to understand the word “not.” When asked to fill in the word after “A robin is a __,” it correctly answers “bird.” But insert the word “not” into that sentence (“A robin is not a __”) and BERT still completes it the same way. Similarly, in one of its stories, GPT-3 wrote that if you mix a spoonful of grape juice into your cranberry juice and drink the concoction, you die. It seems that robots, and artificial intelligence systems in general, are still missing some rudimentary facts of life that humans and animals grasp naturally and effortlessly.

It's not exactly the robots’ fault. Compared with humans and all other organisms that have been around for thousands or millions of years, robots are very new. They are missing out on eons of evolutionary data-building. Animals and humans are born with the ability to do certain things because they are pre-wired for them. Flies know how to fly, fish know how to swim, cats know how to meow, and babies know how to cry. They don’t really learn these behaviors; they are born able to execute them because they’re preprogrammed to do so. All that happens thanks to the millions of years of evolution wired into their respective genomes, which give rise to the brain’s neural networks responsible for these behaviors. Robots are the newbies, missing out on that trove of information, Zador argues.

A neuroscience professor who studies how brain circuitry generates various behaviors, Zador has a different approach to developing the robotic mind. Until their creators figure out a way to imbue the bots with that information, he says, robots will remain quite limited in their abilities. Each model will be able to do only what it was programmed to do and will never go beyond its original code. So Zador argues that we have to start giving robots a genome.

How does one do that? Zador has an idea. We can’t really equip machines with real biological nucleotide-based genes, but we can mimic the neuronal blueprint those genes create. Genomes lay out rules for brain development. Specifically, the genome encodes blueprints for wiring up our nervous system—the details of which neurons are connected, the strength of those connections and other specs that will later hold the information learned throughout life. “Our genomes serve as blueprints for building our nervous system and these blueprints give rise to a human brain, which contains about 100 billion neurons,” Zador says.

If you think about what a genome is, he explains, it is essentially a very compact, compressed form of information storage. Conceptually, genomes are similar to CliffsNotes and other study guides. When students read these short summaries, they learn what happened in a book without actually reading it. And that’s how we should be designing the next generation of robots if we ever want them to act like humans, Zador says. “We should give them a set of behavioral CliffsNotes, which they can then unwrap into brain-like structures.” Robots that have such brain-like structures will acquire a set of basic rules to generate basic behaviors and use them to learn more complex ones.

Currently Zador is in the process of developing algorithms that function like simple rules that generate such behaviors. “My algorithms would write these CliffsNotes, outlining how to solve a particular problem,” he explains. “And then, the neural networks will use these CliffsNotes to figure out which ones are useful and use them in their behaviors.” That’s how all living beings operate. They use the pre-programmed info from their genetics to adapt to their changing environments and learn what’s necessary to survive and thrive in these settings.

For example, a robot’s neural network could draw from CliffsNotes with “genetic” instructions for how to be aware of its own body or learn to adjust its movements. And other, different sets of CliffsNotes may imbue it with the basics of physical safety or the fundamentals of speech.

At the moment, Zador is working on algorithms that try to mimic neuronal blueprints for very simple organisms, such as the roundworm C. elegans, which has only 302 neurons and about 7,000 synapses, compared with the roughly 100 billion neurons in the human brain. That’s how evolution worked, too, expanding brains from simple creatures to more complex ones and eventually to Homo sapiens. But if it took millions of years to arrive at modern humans, how long would it take scientists to forge a robot with human intelligence? That’s a billion-dollar question. Yet Zador is optimistic. “My hypothesis is that if you can build simple organisms that can interact with the world, then the higher level functions will not be nearly as challenging as they currently are.”

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.

Podcast: Wellness chatbots and meditation pods with Deepak Chopra

Leaps.org talked with Deepak Chopra about new technologies he's developing for mental health with Jonathan Marcoschamer, CEO of OpenSeed, and others.

Over the last few decades, perhaps no one has impacted healthy lifestyles more than Deepak Chopra. While several of his theories and recommendations have been criticized by prominent members of the scientific community, he has helped bring meditation, yoga and other practices for well-being into the mainstream in ways that benefit the health of vast numbers of people every day. His work has led many to accept new ways of thinking about alternative medicine, the power of mind over body, and the malleability of the aging process.

His impact is such that it's been observed that our culture no longer recognizes him as a human being but as a pervasive symbol of new-agey personal health and spiritual growth. Last week, I had a chance to confirm that Chopra is, in fact, a human being, and deserving of his icon status, when I talked with him for the Leaps.org podcast. He relayed ideas that were wise and ancient, yet highly relevant to our world today, with the fluidity and ease of someone discussing the weather. Showing no signs of slowing down at age 76, he described his prolific work, from the two books he published in the past year to a range of technologies he’s developing: a meditation app, meditation pods for the workplace, and a chatbot for mental health called Piwi.

Take a listen and get inspired to do some meditation and deep thinking on the future of health. As Chopra told me, “If you don’t have time to meditate once per day, you probably need to meditate twice per day.”

Highlights:

2:10: Chopra talks about meditation broadly and meditation pods, including the ones made by OpenSeed for meditation in the workplace.

6:10: The drawbacks of quick fixes like drugs for mental health.

10:30: The benefits of group meditation versus individual meditation.

14:35: What is a "metahuman" and how to become one.

19:40: The difference between the conditioned mind and the mind that's infinitely creative.

22:48: How Chopra's views of free will differ from the views of many neuroscientists.

28:04: Thinking Fast and Slow, and the role of intuition.

31:20: Athletic and creative geniuses.

32:43: The nature of fundamental truth.

34:00: Meditation for kids.

37:12: NeverAlone.Love and how AI chatbots can support mental health.

42:30: Extending lifespan, gene editing and lifestyle.

46:05: Chopra's mentor in living a long good life (and my mentor).

47:45: The power of yoga.

Links:

- OpenSeed meditation pods for people to meditate at work (Chopra is an advisor to OpenSeed).

- Chopra's book from 2021, Metahuman: Unleash Your Infinite Potential

- Chopra's book from 2022, Abundance: The Inner Path to Wealth

- NeverAlone.Love, Chopra's collaboration of businesses, policy makers, mental health professionals and others to raise awareness about mental health, advance scientific research and "create a global technology platform to democratize access to resources."

- The Piwi chatbot for mental health

- The Chopra Meditation & Well-Being App for people of all ages

- Only 1.6 percent of U.S. children meditate, according to the National Center for Complementary and Integrative Health