Forget Farm-to-Table: Lab-to-Table Fresh Fish Is Making Waves

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.

A conventionally sourced sea bass from a fishery.

Ever wonder why you've never heard of wild-caught organic fish? It's because there's no way to certify a food that has a mysterious history. Mike Selden, a 26-year-old biochemist with an animal lover's heart and an entrepreneur's mind, decided there must be a better way to consume one of our planet's primary sources of animal protein. A way that would eliminate the need to kill billions of fish per year while also producing toxin-free, cheap, delicious fish meat for your dinner table. Enter Finless Foods, a young startup with a bold vision. Selden took time out of chauffeuring fish carcasses around San Francisco (no joke!) to share his journey with LeapsMag.

What is the biggest problem with the way fish is consumed today?

There are a lot of problems ranging from metals to animal welfare to human health. Technology is solving those problems at the same time. You've got extreme overfishing, which is collapsing ocean ecosystems and removing populations of fish that are traditionally used as food sources in developing nations.

In terms of animal welfare, fish are killed in massive numbers, billions a year. Even if people don't care too much about that, we want to give them another option.

In terms of health, which I think for most people is the most convincing argument, current fish have mercury and plastic in them. And if you're getting that fish from a farm, you will also have high levels of antibiotics and growth hormones if you're getting it from outside the U.S. What we're doing is producing fish that doesn't have any of those contaminants.

What gave you the idea to start a company around lab-grown fish?

I studied biochemistry and molecular biology at UMass Amherst, traditionally an agricultural school out in the woods of Massachusetts. I have always been an environmental activist and cared about animals. I thought, animal agriculture is so incredibly inefficient, what could be done to change it?

"The worst way you can possibly make a hamburger is with a cow."

Agriculture is a system of inputs and outputs, the inputs being feed and the outputs being meat – so why are we wasting all of this input on outputs we don't care about? Why are we creating these animals that waste all this energy through sitting around, moving around, having a heartbeat, blinking? All of this uses energy and that's valuable input.

The worst way you can possibly make a hamburger is with a cow. It's an awful transfer of energy: you have to feed it many times its own weight in food that could have fed other people or other things.

In February, I got funding from Indie Bio, a startup accelerator for synthetic biology, and moved out to San Francisco with my co-founder Brian Wyrwas. We started working in our lab in March. We're the newest company in the space.

Walk me through the process of creating edible fish in the lab. Do you have to catch a real live fish first and get their cells?

We have a deal with the Aquarium of the Bay, and whenever a fish dies, they call me, I get in a Zipcar, drive over, and bring the fish back to the lab, where Brian cultures it up into a cell culture. We do use real, high-quality fish stock. From there, we get the cells going in a bioreactor in a suspension culture, grow them into large quantities, and then bring them out to differentiate them into the cells people want to eat: the muscle and fat tissue. Then we formulate it and bring it to people's tables.

How long does the whole process take from the phone call about the fish dying to the food on the table?

There are two different processes: One is a research process, getting the initial cells and engineering them to be what we're looking for.

The other is a production process – we have a cell line ready and need to grow it out. That timing depends on how big of a facility we have. Since we're working with cell division: If you have 1 cell, in 24 hours, you'll have two cells. Let's say you have 1 ton of cells, in 24 hours you'll have two tons of cells.
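Selden's doubling arithmetic can be sketched in a few lines of Python. This is an idealized illustration only: it assumes every cell divides once every 24 hours with no limits from nutrients, space, or cell death, and the function names are made up for the example rather than taken from Finless Foods.

```python
import math

# From the interview: one cell becomes two in 24 hours.
DOUBLING_TIME_HOURS = 24


def mass_after(start_mass: float, hours: float) -> float:
    """Mass (or cell count) after `hours` of unchecked doubling."""
    return start_mass * 2 ** (hours / DOUBLING_TIME_HOURS)


def hours_to_reach(start_mass: float, target_mass: float) -> float:
    """Hours of unchecked doubling needed to grow from start to target."""
    return DOUBLING_TIME_HOURS * math.log2(target_mass / start_mass)


if __name__ == "__main__":
    # One ton of cells left to double for a week (hypothetical scenario).
    print(mass_after(1.0, 24 * 7), "tons after 7 days")
    # Time for one ton to become eight tons.
    print(hours_to_reach(1.0, 8.0), "hours to reach 8 tons")
```

The point of the sketch is that the output depends only on the starting quantity and the elapsed time, which is why Selden ties production timing to facility size.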

"We want to give people the wholesome food they are used to in a healthier setting."

How are you looking to scale this process?

We're trying to find a middle ground between efficiency and local distribution. Organic farming is hilariously bad for the environment and horrifyingly inefficient, but on the other hand, industrial agriculture requires lots of transport, which is also bad for the environment. We're looking to create regionally distributed facilities which don't require a lot of transit, so people can have fresh fish even extremely far inland.

What kinds of fish are you "cooking"?

Our first product will be Bluefin tuna. It's a high-quality fish with high demand and it's also a conservation issue. We also currently have a culture going with Branzino, European sea bass, that we're really happy with.

There's a concept in science called a model organism – one that is extremely well studied and understood. Like the fruit fly, for example. For fish, it's the zebrafish, which is used for genetic research, but no one eats it. It's tiny, so we started by thinking: what fish do people eat that is also close evolutionarily to the zebrafish? We came up with carp, even though it's not too widely eaten.

But our process is very species agnostic. We've done work in trout, salmon, goldfish. Any fish with a dorsal fin works with our process. We tried a wolf eel but it didn't work. Eels are pretty far evolutionarily from fish, so we dropped that one.

From left to right, Ron Shigeta (IndieBio), Brian Wyrwas (Finless Foods), Amy Fleming (The Guardian), and Jihyun Kim (Finless Foods) tasting the first ever clean carp croquettes.

(Courtesy Mike Selden)

Why fish as opposed to, say, a cow?

Scientifically, there are a lot of advantages. Fish have a simpler structure than land animals. A fillet from a cow has complex marbling going on between the fat and muscle. When it's fish, like sashimi, it's in layers of muscle and fat. So it's simpler to build, plus fish are cold-blooded, so because they breathe underwater, our equipment needs less complexity. We don't need a CO2 line and we don't need to culture our cells at 37 degrees Celsius. We culture them at room temperature.

It's also easier to get to market since there's much higher value. Chicken in the last year was $3.84 per pound in America, whereas Bluefin tuna is between $100 and $1200 a pound. Because this is about dropping cost, we can get to market faster and give investors a better value proposition.

What's also cool is that something like Bluefin tuna is something many people haven't had the opportunity to eat. We can get these down in cost until there is price parity with any cheap conventional fish. We want to give people a choice between buying something like albacore tuna in a can (with mercury and plastic) or high-quality tuna without any contaminants for the same price.

Do you shape them like fish fillets to help the consumer overcome whatever discomfort they might feel about eating a bunch of lab-grown cells?

Yeah, people want to continue eating food they are eating, and that's fine. We want to give people a better option. We don't want to give them something weird and out there. We want to give them the wholesome food they are used to in a healthier setting that also solves some environmental issues.

How about the taste? Have you done any blind side-by-side tests with the real thing and your version?

Not blind taste tests. But we have been tasting it, and it is firmly fish. I even tried leaving it outside of the fridge – and man, that tasted like spoiled fish.

We want it to have the exact same properties as real fish. We don't want people to have to learn how to cook with it. We want them to just bring it into their homes and eat it exactly like they were doing before, but better.

What you're growing isn't the whole fish, right? It is not an actual organism?

Right, we're only growing muscle cells. It doesn't know where it is. There is no brain, nervous system, or pain receptors.

Are you the only people in this lab-grown food space working on fish?

We're the only ones doing fish so far. Other companies are doing chicken, duck, egg white, milk, gelatin, leather, and beef.

Are people generally weirded out by sci-fi lab food, or intrigued?

It's been very positive. When people sit down and talk to us, they realize it's not some crazed money grab or some weird TED talk, it's real activists using real science trying to solve real problems. Sure, there will be some pushback from people who don't understand it, and that's fine.

When can I expect to see Finless Foods at my local Whole Foods?

We plan on being in restaurants in two years, and grocery stores in four years.

What about people who aren't big fans of fish in the first place? Like those who don't eat sushi, because consuming something raw with an unknown history isn't very appetizing.

There are too many examples of food poisoning because fish are in a less clean environment than they should be, swimming around in their own fecal matter, and being doused in antibiotics so their diseases don't transmit. It's a bit of a mess. That's why as an industry, we're calling this clean meat. Fish is a healthy thing, or at least it should be, with Omega 3 and 6, and DHA. This is a way for people to continue getting those nutrients without any of the questions of where it came from. For people who are skeptical of fish, we invite you to dive in.

Brian Wyrwas, Co-Founder & CSO, and Mike Selden, Co-Founder & CEO

(Courtesy Mike Selden)

Send in the Robots: A Look into the Future of Firefighting

Drones are just one of several new technologies that are rising to the challenge of more frequent wildfires.

April in Paris stood still. Flames engulfed the beloved Notre Dame Cathedral as the world watched, horrified, in 2019. The worst looked inevitable when firefighters were forced to retreat from the out-of-control fire.

But the Paris Fire Brigade had an ace up their sleeve: Colossus, a firefighting robot. The seemingly indestructible tank-like machine ripped through the blaze with its motorized water cannon. It was able to put out flames in places that would have been deadly for firefighters.

Firefighting is entering a new era, driven by necessity. Conventional methods of managing fires have been no match for the fiercer, more expansive fires being triggered by climate change, urban sprawl, and susceptible wooded areas.

Robots have been a game-changer. Inspired by Paris, the Los Angeles Fire Department (LAFD) was the first in the U.S. to deploy a firefighting robot in 2021, the Thermite Robotics System 3 – RS3, for short.

RS3 is a 3,500-pound turbine on a crawler—the size of a Smart car—with a 36.8 horsepower engine that can go for 20 hours without refueling. It can plow through hazardous terrain, move cars from its path, and pull an 8,000-pound object from a fire.

All that while spurting 2,500 gallons of water per minute with a rear exhaust fan clearing the smoke. At a recent trade show, RS3 was billed as equivalent to 10 firefighters. The Los Angeles Times referred to it as “a droid on steroids.”

Robots such as the Thermite RS3 can plow through hazardous terrain and pull an 8,000-pound object from a fire.

Los Angeles Fire Department

The advantage of the robot is obvious. Operated remotely from a distance, it greatly reduces an emergency responder’s exposure to danger, says Wade White, assistant chief of the LAFD. The robot can be sent into airplane fires, nuclear reactors, hazardous areas with carcinogens (think East Palestine, Ohio), or buildings where a roof collapse is imminent.

Advances for firefighters are taking many other forms as well. Fibers have been developed that make the firefighter’s coat lighter and more protective from carcinogens. New wearable devices track firefighters’ biometrics in real time so commanders can monitor their heat stress and exertion levels. A sensor patch is in development which takes readings every four seconds to detect dangerous gases such as methane and carbon dioxide. A sonic fire extinguisher is being explored that uses low-frequency sound waves to push oxygen away from a fire’s fuel, without unhealthy chemical compounds.

The demand for this technology is only increasing, especially with the recent rise in wildfires. In 2021, fires were responsible for 3,800 deaths and 14,700 injuries of civilians in this country. Last year, 68,988 wildfires burned down 7.6 million acres. Whether the next generation of firefighting can address these new challenges could depend on special cameras, robots of the aerial variety, AI and smart systems.

Fighting fire with cameras

Another key innovation for firefighters is a thermal imaging camera (TIC) that improves visibility through smoke. “At a fire, you might not see your hand in front of your face,” says White. “Using the TIC screen, you can find the door to get out safely or see a victim in the corner.” Since these cameras were introduced in the 1990s, the price has come down enough (from $10,000 or more to about $700) that every LAFD firefighter on duty has been carrying one since 2019, says White.

TICs are about the size of a cell phone. The camera can sense movement and body heat so it is ideal as a search tool for people trapped in buildings. If a firefighter has not moved in 30 seconds, the motion detector picks that up, too, and broadcasts a distress signal and directional information to others.

To enable firefighters to operate the camera hands-free, the newest TICs can attach inside a helmet. The firefighter sees the images inside their mask.

TICs also can be mounted on drones to get a bird’s-eye, 360 degree view of a disaster or scout for hot spots through the smoke. In addition, the camera can take photos to aid arson investigations or help determine the cause of a fire.

More help from above

Firefighters prefer the term “unmanned aerial systems” (UAS) to drones to differentiate them from military use.

A UAS carrying a camera can provide aerial scene monitoring and topography maps to help fire captains deploy resources more efficiently. At night, floodlights from the drone can illuminate the landscape for firefighters. They can drop off payloads of blankets, parachutes, life preservers or radio devices for stranded people to communicate, too. And like the robot, the UAS reduces risks for ground crews and helicopter pilots by limiting their contact with toxic fumes, hazardous chemicals, and explosive materials.

“The nice thing about drones is that they perform multiple missions at once,” says Sean Triplett, team lead of fire and aviation management, tools and technology at the Forest Service.

The UAS is especially helpful during wildfires because it can track fires, get ahead of wind currents and warn firefighters of wind shifts in real time. The U.S. Forest Service also uses long endurance, solar-powered drones that can fly for up to 30 days at a time to detect early signs of wildfire. Wildfires are no longer seasonal in California – they are a year-long threat, notes Thanh Nguyen, fire captain at the Orange County Fire Authority.

In March, Nguyen’s crew deployed a drone to scope out a huge landslide following torrential rains in San Clemente, CA. Emergency responders used photos and videos from the drone to survey the evacuated area, enabling them to stay clear of ground on the hillside that was still sliding.

Improvements in drone batteries are enabling them to fly for longer with heavier payloads. Experts predict we’ll see swarms of drones dropping water and fire retardant on burning buildings and forests in the near future.

AI to the rescue

The biggest peril for a firefighter is often what they don’t see coming. Flashovers are a leading cause of firefighter deaths, for example. They occur when flammable materials in an enclosed area ignite almost instantaneously. Or dangerous backdrafts can happen when a firefighter opens a window or door; the air rushing in can ignite a fire without warning.

The Fire Fighting Technology Group at the National Institute of Standards and Technology (NIST) is developing tools and systems to predict these potentially lethal events with computer models and artificial intelligence.

Partnering with other institutions, NIST researchers developed the Flashover Prediction Neural Network (FlashNet) after looking at common house layouts and running sets of scenarios through a machine-learning model. In the lab, FlashNet was able to predict a flashover 30 seconds before it happened with 92.1% success. When ready for release, the technology will be bundled with sensors that are already installed in buildings, says Anthony Putorti, leader of the NIST group.

The NIST team also examined data from hundreds of backdrafts as a basis for a machine-learning model to predict them. In testing chambers the model predicted them correctly 70.8% of the time; accuracy increased to 82.4% when measures of backdrafts were taken in more positions at different heights in the chambers. Developers are working on how to integrate the AI into a small handheld device that can probe the air of a room through cracks around a door or through a created opening, Putorti says. This way, the air can be analyzed with the device to alert firefighters of any significant backdraft risk.

Early wildfire detection technologies based on AI are in the works, too. The Forest Service predicts the acreage burned each year during wildfires will more than triple in the next 80 years. By gathering information on historic fires, weather patterns, and topography, says White, AI can help firefighters manage wildfires before they grow out of control and create effective evacuation plans based on population data and fire patterns.

The future is connectivity

We are in our infancy with “smart firefighting,” says Casey Grant, executive director emeritus of the Fire Protection Research Foundation. Grant foresees a new era of cyber-physical systems for firefighters—a massive integration of wireless networks, advanced sensors, 3D simulations, and cloud services. To enhance teamwork, the system will connect all branches of emergency responders—fire, emergency medical services, law enforcement.

FirstNet (First Responder Network Authority) now provides a nationwide high-speed broadband network with 5G capabilities for first responders through a terrestrial cell network. Battling wildfires, however, the Forest Service needed an alternative because they don’t always have access to a power source. In 2022, they contracted with Aerostar for a high altitude balloon (60,000 feet up) that can extend cell phone power and LTE. “It puts a bubble of connectivity over the fire to hook in the internet,” Triplett explains.

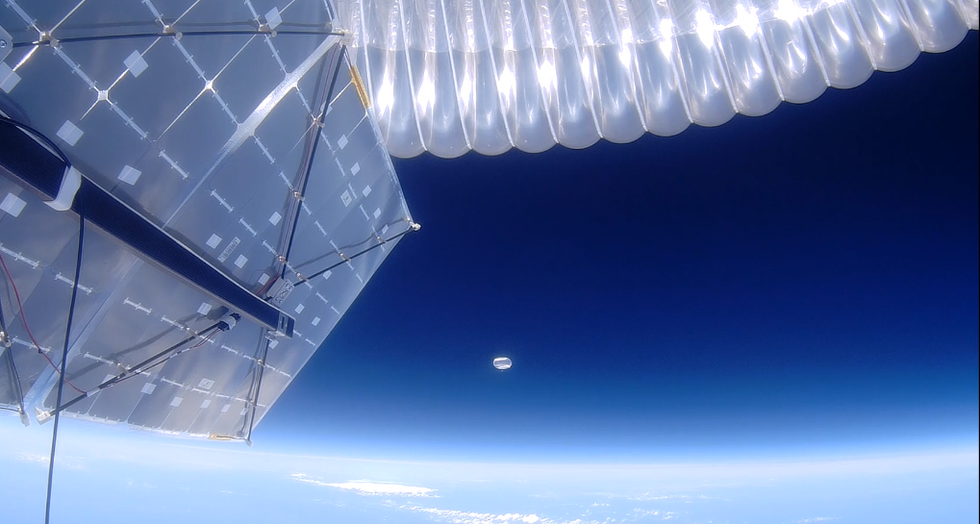

A high altitude balloon, 60,000 feet high, can extend cell phone power and LTE, putting a "bubble" of internet connectivity over fires.

Courtesy of USDA Forest Service

Advances in harvesting, processing and delivering data will improve safety and decision-making for firefighters, Grant sums up. Smart systems may eventually calculate fire flow paths and make recommendations about the best ways to navigate specific fire conditions. NIST’s plan to combine FlashNet with sensors is one example.

The biggest challenge is developing firefighting technology that can work across multiple channels—federal, state, local and tribal systems as well as for fire, police and other emergency services— in any location, says Triplett. “When there’s a wildfire, there are no political boundaries,” he says. “All hands are on deck.”

New device can diagnose concussions using AI

A new test called the EyeBOX could provide a more objective and portable tool to measure whether people have concussions in stadiums and hospitals.

For a long time after Mary Smith hit her head, she was not able to function. Test after test came back normal, so her doctors ruled out a concussion, but she knew something was wrong. Finally, when she took a test with a novel EyeBOX device, recently approved by the FDA, she learned she indeed had been dealing with the aftermath of a concussion.

“I felt like even my husband and doctors thought I was faking it or crazy,” recalls Smith, who preferred not to disclose her real name. “When I took the EyeBOX test it showed that my eyes were not moving together and my BOX score was abnormal.” To her diagnosticians, scientists at the Minneapolis-based company Oculogica who developed the EyeBOX, these markers were concussion signs. “I cried knowing that finally someone could figure out what was wrong with me and help me get better,” she says.

Concussion affects around 42 million people worldwide. While it’s increasingly common in the news because of sports injuries, anything that causes damage to the head, from a fall to a car accident, can result in a concussion. The sudden blow or jolt can disrupt the normal way the brain works. In the immediate aftermath, people may suffer from headaches, lose consciousness and experience dizziness, confusion and vomiting. Some recover but others have side effects that can last for years, particularly affecting memory and concentration.

There is no simple standard-of-care test to confirm a concussion or rule it out, and concussions do not appear on MRI or CT scans. Instead, medical professionals use more indirect approaches that test symptoms of concussions, such as assessments of patients’ learning and memory skills, ability to concentrate, and problem-solving. They also look at balance and coordination. Most tests are in the form of questionnaires or symptom checklists. Consequently, they have limitations, can be biased and may miss a concussion or produce a false positive. Some people suspected of having a concussion may ordinarily have difficulties with literacy and problem-solving tests because of language challenges or education levels.

Another problem with current tests is that patients, particularly soldiers who want to return to combat and athletes who would like to keep competing, could try to hide their symptoms to avoid being diagnosed with a brain injury. Trauma physicians who work with concussion patients need a tool that is more objective and consistent.

“This type of assessment doesn’t rely on the patient's education level, willingness to follow instructions or cooperation. You can’t game this.” -- Uzma Samadani, founder of Oculogica

“The importance of having an objective measurement tool for the diagnosis of concussion is of great importance,” says Douglas Powell, associate professor of biomechanics at the University of Memphis, with research interests in sports injury and concussion. “While there are a number of promising systems or metrics, we have yet to develop a system that is portable, accessible and objective for use on the sideline and in the clinic. The EyeBOX may be able to address these issues, though time will be the ultimate test of performance.”

The EyeBOX as a window inside the brain

Using eye movements to diagnose a concussion has emerged as a promising technique since around 2010. Oculogica combined eye movements with AI to develop the EyeBOX, an unbiased, objective diagnostic tool.

“What’s so great about this type of assessment is it doesn’t rely on the patient's education level, willingness to follow instructions or cooperation,” says Uzma Samadani, a neurosurgeon and brain injury researcher at the University of Minnesota, who founded Oculogica. “You can’t game this. It assesses functions that are prompted by your brain.”

In 2010, Samadani was working on a clinical trial to improve the outcome of brain injuries. The team needed some way to measure if seriously brain injured patients were improving. One thing patients could do was watch TV. So Samadani designed and patented an AI-based algorithm that tracks the relationship between eye movement and concussion.

The EyeBOX test requires patients to watch movie or music clips for 220 seconds. An eye tracking camera records subconscious eye movements, tracking eye positions 500 times per second as patients watch the video. It collects over 100,000 data points. The device then uses AI to assess whether there are any disruptions from the normal way the eyes move.
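The "over 100,000 data points" figure follows from the two numbers quoted above, as this short Python check shows. The clip length and sampling rate come from the article; whether the total is counted per eye or for both eyes together is not stated, so the `eyes` parameter is an assumption added for illustration.

```python
# Figures quoted in the article.
CLIP_SECONDS = 220
SAMPLES_PER_SECOND = 500


def sample_count(seconds: int, rate_hz: int, eyes: int = 1) -> int:
    """Number of eye-position readings recorded over one test.

    `eyes` is a hypothetical parameter: the article does not say whether
    its total covers one eye or both.
    """
    return seconds * rate_hz * eyes


per_eye = sample_count(CLIP_SECONDS, SAMPLES_PER_SECOND)
both_eyes = sample_count(CLIP_SECONDS, SAMPLES_PER_SECOND, eyes=2)

if __name__ == "__main__":
    print(per_eye, "readings if counted per eye")
    print(both_eyes, "readings if both eyes are counted")
```

Either way the total clears 100,000, which is consistent with the article's description.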

Cranial nerves are responsible for transmitting information between the brain and the body. Many are involved in eye movement. Pressure caused by a concussion can affect how these nerves work. So tracking how the eyes move can indicate if there’s anything wrong with the cranial nerves and where the problem lies.

If someone is healthy, their eyes should be able to focus on an object and follow movement, and both eyes should be coordinated with each other. The EyeBOX can detect abnormalities. For example, if a patient’s eyes are coordinated but they are not moving as they should, that indicates issues in the central brain stem, whilst only one eye moving abnormally suggests that a particular nerve section is affected.

Uzma Samadani with the EyeBOX device

Courtesy Oculogica

“The EyeBOX is a monitor for cranial nerves,” says Samadani. “Essentially it’s a form of digital neurological exam.” Several other eye-tracking techniques already exist, but they rely on subjective self-reported symptoms. Many also require a baseline, a measure of how patients reacted when they were healthy, which often isn’t available.

VOMS (Vestibular Ocular Motor Screen) is one of the most accurate diagnostic tests used in clinics in combination with other tests, but it is subjective. It involves a therapist getting patients to move their head or eyes as they focus or follow a particular object. Patients then report their symptoms.

The King-Devick test measures how fast patients can read numbers and compares it to a baseline. Since it is mainly used for athletes, the initial test is completed before the season starts. But participants can manipulate it. It also cannot be used in emergency rooms because the majority of patients wouldn’t have prior baseline tests.

Unlike these tests, EyeBOX doesn’t use a baseline and is objective because it doesn’t rely on patients’ answers. “It shows great promise,” says Thomas Wilcockson, a senior lecturer in psychology at Loughborough University, who is an expert in using eye tracking techniques in neurological disorders. “Baseline testing of eye movements is not always possible. Alternative measures of concussion currently in development, including work with VR headsets, seem to currently require it. Therefore the EyeBOX may have an advantage.”

A technology that’s still evolving

In their last clinical trial, Oculogica used the EyeBOX to test 46 patients who had concussion and 236 patients who did not. The sensitivity of the EyeBOX, or the probability of it correctly identifying the patient’s concussion, was 80.4 percent. Meanwhile, the test accurately ruled out a concussion in 66.1 percent of cases. This is known as its specificity score.
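To make the two percentages concrete, the Python sketch below reconstructs the approximate patient counts behind them. The group sizes and rates come from the article; the individual counts (true positives, false positives and so on) are inferred by rounding and are not figures reported by Oculogica, and the predictive values apply only to a group with this trial's mix of patients.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    true_pos: int   # concussed, flagged by the test
    false_neg: int  # concussed, missed by the test
    true_neg: int   # not concussed, correctly ruled out
    false_pos: int  # not concussed, flagged anyway

    @property
    def sensitivity(self) -> float:
        return self.true_pos / (self.true_pos + self.false_neg)

    @property
    def specificity(self) -> float:
        return self.true_neg / (self.true_neg + self.false_pos)

    @property
    def ppv(self) -> float:
        """Share of positive results that are real concussions."""
        return self.true_pos / (self.true_pos + self.false_pos)

    @property
    def npv(self) -> float:
        """Share of negative results that are truly concussion-free."""
        return self.true_neg / (self.true_neg + self.false_neg)


def counts_from_rates(n_pos: int, n_neg: int,
                      sens: float, spec: float) -> ConfusionCounts:
    """Infer whole-patient counts from group sizes and reported rates."""
    tp = round(n_pos * sens)
    tn = round(n_neg * spec)
    return ConfusionCounts(tp, n_pos - tp, tn, n_neg - tn)


# 46 concussed and 236 non-concussed patients; 80.4% and 66.1% as reported.
trial = counts_from_rates(46, 236, 0.804, 0.661)

if __name__ == "__main__":
    print(trial)
    print(f"PPV {trial.ppv:.1%}, NPV {trial.npv:.1%}")
```

Under these inferred counts, a negative result is far more reliable than a positive one, which is one way to read Powell's preference for at least one value above 90 percent.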

While the team is working on improving the numbers, experts who treat concussion patients find the device promising. “I strongly support their use of eye tracking for diagnostic decision making,” says Douglas Powell. “But for diagnostic tests, we would prefer at least one of the sensitivity or specificity values to be greater than 90 percent.” Powell compares EyeBOX with the Buffalo Concussion Treadmill Test, which has sensitivity and specificity values of 73 and 78 percent, respectively. The VOMS also has shown greater accuracy than the EyeBOX, at least for now. Still, EyeBOX is competitive with the best diagnostic testing available for concussion and Powell hopes that its detection prowess will improve. “I anticipate that the algorithms being used by Oculogica will be under continuous revision and expect the results will improve within the next several years.”

“The color of your skin can have a huge impact in how quickly you are triaged and managed for brain injury. People of color have significantly worse outcomes after traumatic brain injury than people who are white.” -- Uzma Samadani, founder of Oculogica

Powell thinks the EyeBOX could be an important complement to other concussion assessments.

“The Oculogica product is a viable diagnostic tool that supports clinical decision making. However, concussion is an injury that can present with a wide array of symptoms, and the use of technology such as the Oculogica should always be a supplement to patient interaction.”

Ioannis Mavroudis, a consultant neurologist at Leeds Teaching Hospital, agrees that the EyeBOX has promise, but cautions that concussions are too complex to rely on the device alone. For example, not all concussions affect how eyes move. “I believe that it can definitely help, however not all concussions show changes in eye movements. I believe that if this could be combined with a cognitive assessment the results would be impressive.”

The Oculogica team submitted their clinical data for FDA approval and received it in 2018. Now, they’re working to bring the test to the commercial market and using the device clinically to help diagnose concussions for clients. They also want to look at other areas of brain health in the next few years. Samadani believes that the EyeBOX could possibly be used to detect diseases like multiple sclerosis or other neurological conditions. “It’s a completely new way of figuring out what someone’s neurological exam is and we’re only beginning to realize the potential,” says Samadani.

One of Samadani’s biggest aspirations is to help reduce inequalities in healthcare because of skin color and other factors like money or language barriers. From that perspective, the EyeBOX’s greatest potential could be in emergency rooms. It can help diagnose concussions alongside the questionnaires, assessments and symptom checklists currently used in emergency departments. Unlike these more subjective tests, EyeBOX can produce an objective analysis of brain injury through AI when patients are admitted and assessed, unrelated to their socioeconomic status, education, or language abilities. Studies suggest that there are racial disparities in how patients with brain injuries are treated, such as how quickly they're assessed and get a treatment plan.

“The color of your skin can have a huge impact in how quickly you are triaged and managed for brain injury,” says Samadani. “As a result of that, people of color have significantly worse outcomes after traumatic brain injury than people who are white. The EyeBOX has the potential to reduce inequalities,” she explains.

“If you had a digital neurological tool that you could screen and triage patients on admission to the emergency department you would potentially be able to make sure that everybody got the same standard of care,” says Samadani. “My goal is to change the way brain injury is diagnosed and defined.”