AI and you: Is the promise of personalized nutrition apps worth the hype?

Personalized nutrition apps could provide valuable data to people trying to eat healthier, though more research must be done to show effectiveness.

As a type 2 diabetic, Michael Snyder has long been interested in how blood sugar levels vary from one person to another in response to the same food, and whether a more personalized approach to nutrition could help tackle the rapidly rising rates of diabetes and obesity in much of the western world.

Eight years ago, Snyder, who directs the Center for Genomics and Personalized Medicine at Stanford University, decided to put his theories to the test. In the 2000s continuous glucose monitoring, or CGM, had begun to revolutionize the lives of diabetics, both type 1 and type 2. Using spherical sensors which sit on the upper arm or abdomen – with tiny wires that pierce the skin – the technology allowed patients to gain real-time updates on their blood sugar levels, transmitted directly to their phone.

It gave Snyder an idea for his research at Stanford. Applying the same technology to a group of apparently healthy people, and looking for ‘spikes’ or sudden surges in blood sugar known as hyperglycemia, could provide a means of observing how their bodies reacted to an array of foods.

“We discovered that different foods spike people differently,” he says. “Some people spike to pasta, others to bread, others to bananas, and so on. It’s very personalized and our feeling was that building programs around these devices could be extremely powerful for better managing people’s glucose.”

Unbeknown to Snyder at the time, thousands of miles away, a group of Israeli scientists at the Weizmann Institute of Science were doing exactly the same experiments. In 2015, they published a landmark paper which used CGM to track the blood sugar levels of 800 people over several days, showing that the biological response to identical foods can vary wildly. Like Snyder, they theorized that giving people a greater understanding of their own glucose responses, so they spend more time in the normal range, may reduce the prevalence of type 2 diabetes.

“At the moment 33 percent of the U.S. population is pre-diabetic, and 70 percent of those pre-diabetics will become diabetic,” says Snyder. “Those numbers are going up, so it’s pretty clear we need to do something about it.”

Fast forward to 2022, and both teams have converted their ideas into subscription-based dietary apps which use artificial intelligence to offer data-informed nutritional and lifestyle recommendations. Snyder’s spinoff, January AI, combines CGM information with heart rate, sleep, and activity data to advise on foods to avoid and the best times to exercise. DayTwo, a start-up which utilizes the findings of the Weizmann Institute of Science, obtains microbiome information by sequencing stool samples, and combines this with blood glucose data to rate ‘good’ and ‘bad’ foods for a particular person.

“CGMs can be used to devise personalized diets,” says Eran Elinav, an immunology professor and microbiota researcher at the Weizmann Institute of Science in addition to serving as a scientific consultant for DayTwo. “However, this process can be cumbersome. Therefore, in our lab we created an algorithm, based on data acquired from a big cohort of people, which can accurately predict post-meal glucose responses on a personal basis.”

The commercial potential of such apps is clear. DayTwo, which markets its product to corporate employers and health insurers rather than individual consumers, recently raised $37 million in funding. But the underlying science continues to generate intriguing findings.

Last year, Elinav and colleagues published a study on 225 individuals with pre-diabetes which found that they achieved better blood sugar control when they followed a personalized diet based on DayTwo’s recommendations, compared to a Mediterranean diet. The journal Cell just released a new paper from Snyder’s group which shows that different types of fibre benefit people in different ways.

“The idea is you hear different fibres are good for you,” says Snyder. “But if you look at fibres they’re all over the map—it’s like saying all animals are the same. The responses are very individual. For a lot of people [a type of fibre called] arabinoxylan clearly reduced cholesterol while the fibre inulin had no effect. But in some people, it was the complete opposite.”

Eight years ago, Stanford's Michael Snyder began studying how continuous glucose monitors could be used by patients to gain real-time updates on their blood sugar levels, transmitted directly to their phone.

The Snyder Lab, Stanford Medicine

Because of studies like these, interest in precision nutrition approaches has exploded in recent years. In January, the National Institutes of Health announced that it is spending $170 million on a five-year, multi-center initiative which aims to develop algorithms based on a whole range of data sources, from blood sugar to sleep, exercise, stress, microbiome and even genomic information, which can help predict which diets are most suitable for a particular individual.

“There's so many different factors which influence what you put into your mouth but also what happens to different types of nutrients and how that ultimately affects your health, which means you can’t have a one-size-fits-all set of nutritional guidelines for everyone,” says Bruce Y. Lee, professor of health policy and management at the City University of New York Graduate School of Public Health.

With the falling costs of genomic sequencing, other precision nutrition clinical trials are choosing to look at whether our genomes alone can yield key information about what our diets should look like, an emerging field of research known as nutrigenomics.

The ASPIRE-DNA clinical trial at Imperial College London is aiming to see whether particular genetic variants can be used to classify individuals into two groups, those who are more glucose sensitive to fat and those who are more sensitive to carbohydrates. By following a tailored diet based on these sensitivities, the trial aims to see whether it can prevent people with pre-diabetes from developing the disease.

But while much hope is riding on these trials, even precision nutrition advocates caution that the field remains in the very earliest of stages. Lars-Oliver Klotz, professor of nutrigenomics at Friedrich-Schiller-University in Jena, Germany, says that while the overall goal is to identify means of avoiding nutrition-related diseases, genomic data alone is unlikely to be sufficient to prevent obesity and type 2 diabetes.

“Genome data is rather simple to acquire these days as sequencing techniques have dramatically advanced in recent years,” he says. “However, the predictive value of just genome sequencing is too low in the case of obesity and prediabetes.”

Others say that while genomic data can yield useful information in terms of how different people metabolize different types of fat and specific nutrients such as B vitamins, there is a need for more research before it can be utilized in an algorithm for making dietary recommendations.

“I think it’s a little early,” says Eileen Gibney, a professor at University College Dublin. “We’ve identified a limited number of gene-nutrient interactions so far, but we need more randomized control trials of people with different genetic profiles on the same diet, to see whether they respond differently, and if that can be explained by their genetic differences.”

Some start-ups have already come unstuck for promising too much, or pushing recommendations which are not based on scientifically rigorous trials. The world of precision nutrition apps was dubbed a ‘Wild West’ by some commentators after the founders of uBiome – a start-up which offered nutritional recommendations based on information obtained from sequencing stool samples – were charged with fraud last year. The weight-loss app Noom, which was valued at $3.7 billion in May 2021, has been criticized on Twitter by a number of users who claimed that its recommendations led them to develop eating disorders.

With precision nutrition apps marketing their technology to healthy individuals, questions have also been raised about how much non-diabetics gain from monitoring their blood sugar with a CGM. While some small studies have found that wearing a CGM can make overweight or obese individuals more motivated to exercise, there is still a lack of conclusive evidence showing that this translates to improved health.

However, independent researchers remain intrigued by the technology, and say that the wealth of data generated through such apps could be used to help further stratify the different types of people who are at risk of developing type 2 diabetes.

“CGM not only enables a longer sampling time for capturing glucose levels, but will also capture lifestyle factors,” says Robert Wagner, a diabetes researcher at University Hospital Düsseldorf. “It is probable that it can be used to identify many clusters of prediabetic metabolism and predict the risk of diabetes and its complications, but maybe also specific cardiometabolic risk constellations. However, we still don’t know which forms of diabetes can be prevented by such approaches and how feasible and long-lasting such self-feedback dietary modifications are.”

Snyder himself has now been wearing a CGM for eight years, and he credits the insights it provides with helping him to manage his own diabetes. “My CGM still gives me novel insights into what foods and behaviors affect my glucose levels,” he says.

He is now looking to run clinical trials with his group at Stanford to see whether following a precision nutrition approach based on CGM and microbiome data, combined with other health information, can be used to reverse signs of pre-diabetes. If it proves successful, January AI may look to incorporate microbiome data in future.

“Ultimately, what I want to do is be able to take people’s poop samples, maybe a blood draw, and say, ‘Alright, based on these parameters, this is what I think is going to spike you,’ and then have a CGM to test that out,” he says. “Getting very predictive about this, so right from the get go, you can have people better manage their health and then use the glucose monitor to help follow that.”

Researchers Are Experimenting With Magic Mushrooms' Fascinating Ability to Improve Mental Health Disorders

Magic mushrooms, in conjunction with a psychotherapist's treatment, may be helpful in treating addiction, depression, anxiety, and other mental health ailments.

Mental illness is a dark undercurrent in the lives of tens of millions of Americans. According to the World Health Organization, about 450 million people worldwide have mental health disorders, which cut across all demographics, cultures, and socioeconomic classes.

The U.S. National Institute of Mental Health estimates that severely debilitating mental health disorders cost the U.S. more than $300 billion per year, and that's not even counting the human toll of broken lives, devastated families, and a health care system stretched to the limit.

However, one area of research seems to herald the first major breakthrough in decades: hallucinogen-assisted psychotherapy. Drugs like psilocybin (obtained from "magic mushrooms"), LSD, and MDMA (known as the club drug ecstasy) are being tested in combination with talk therapy for a variety of mental illnesses. These drugs, administered by a psychotherapist in a safe and controlled environment, are showing extraordinary results that conventional treatments would take years to achieve.

But the therapy will likely continue to face an uphill legal battle before it achieves FDA approval. It is up against not only current drug laws (all psychedelics remain illegal on the federal level) and strict FDA regulations, but a powerful status quo that has institutionalized fear of any drug used for recreational purposes.

How We Got Here

According to researchers Sean Belouin and Jack Henningfield, the use of psychedelic drugs has a long and winding history. It's believed that hallucinogenic substances have been used in healing ceremonies and religious rituals for thousands of years. Indigenous people in the U.S., Mexico, and Central and South America still use distillations from the peyote cactus and other hallucinogens in their religious ceremonies. And psilocybin mushrooms, also capable of causing hallucinations, grow throughout the world and are thought to have been used for millennia.

But psychedelic drugs didn't receive much research attention until 1943, when LSD's psychoactive effects were discovered by chemist Albert Hofmann. Hofmann tested the compound he had discovered years earlier on himself and found that the drug had profound mind-altering effects. He made the drug available to psychiatrists who were interested in testing it out as an adjunct to talk therapy. There were no truly effective drugs at the time for mental illnesses, and psychiatrists early on saw the possibility of psychedelics providing a kind of emotional catharsis that might represent therapeutic breakthroughs for many mental conditions.

During the 1950s and early 1960s, psychedelic drugs saw an increase in use within psychology, according to a 2018 article in Neuropharmacology. During this time, research on LSD and other hallucinogens was the subject of over 1,000 scientific papers, six international conferences, and several dozen books. LSD was widely prescribed to psychiatric patients, and by 1958, Hofmann had identified psilocybin as the hallucinogen in "magic mushrooms," which was also administered. By 1965 some type of hallucinogen had been given to more than 40,000 patients.

Then came a sea change. Psychedelic drugs caught the public's attention and there was widespread experimentation. The association with hippie counterculture alarmed many and led to a legal and cultural backlash that stigmatized psychedelics for decades to come. In 1970, psychedelics were designated Schedule 1 drugs in the U.S., meaning they were seen as having "no accepted medical use and a high potential of abuse." Schedule 1 also implied that the drugs were more dangerous than cocaine, methamphetamine, Vicodin, and oxycodone, a perception that was far from proven but became an institutionalized part of drug enforcement. Medical use ceased and research dwindled to close to zero.

For years, research into hallucinogen-assisted therapy was basically dormant, until the 1990s when interest started to revive. In the 2000s, the first modern clinical trials of psilocybin were done by Francisco Moreno at the University of Arizona and Matthew Johnson at Johns Hopkins. Scientists in the 2010s, including Robin Carhart-Harris, started studying the use of psychedelics in the treatment of major depressive disorder (MDD).

In small trials with these patients, results showed significant and long-term improvement (for at least six months) after only two episodes of psilocybin-assisted therapy. In several studies, the guided experience of administering one of the psychedelic drugs along with psychotherapy seemed to result in marked improvement in a variety of disorders, including depression, anxiety, PTSD, and addiction.

The drugs allowed patients to experience a radical reframing of reality, helping them to become "unstuck" from the anxious and negative tape loops that played in their heads. According to Michael Pollan, an American author and professor of journalism who wrote the book, "How to Change Your Mind: What the New Science of Psychedelics Teaches Us About Consciousness, Dying, Addiction, Depression and Transcendence," psychedelics allow patients to see their lives through a kind of wide angle, where boundaries vanish and they're able to experience "consciousness without self." This perspective is usually accompanied by profound feelings of oneness with the universe.

Pollan likens the effect to a fresh blanketing of snow over the deep ruts of unproductive thinking, which characterize depression and other mental disorders. Once the new snow has fallen, the ruts disappear and a new path can be chosen. Relief from symptoms comes immediately, and in numerous studies, is sustained for months.

Some of the most influential studies have focused on testing the use of psilocybin to treat end-of-life anxiety in patients diagnosed with a terminal illness. In 2016, Stephen Ross and colleagues tested a single dose of psilocybin on 29 subjects with end-of-life anxiety due to a terminal cancer diagnosis. A control group received a niacin pill. The researchers reported that of the 29 receiving psilocybin, all of the patients had "immediate, substantial, and sustained clinical benefits," even after six months.

In spite of growing evidence for the safety and efficacy of psychedelic-assisted psychotherapy, the practice has major hurdles to cross on its quest for FDA approval. The National Institutes of Health is not currently supporting any clinical trials and the research relies on private sources of funding, often with small research organizations that cannot afford the high cost of clinical trials.

Given the controversial nature of the drugs, researchers in psychedelic-assisted therapies may be cautious about publicity. Leapsmag reached out to several leaders in the field but none agreed to an interview.

Looking Ahead

Still, interest is building in the combination of psychedelic drugs and psychotherapy for treatment-resistant mental illnesses. Two months ago, Johns Hopkins University launched a new psychedelic research center with an infusion of $17 million from private investors. The center will focus on psychedelic-assisted therapies for opioid addiction, Alzheimer's disease, PTSD and major depression, to name just a few. Currently, of 51 cancer patients enrolled in a Hopkins study, more than half reported a decrease in depression and anxiety after receiving therapy with psilocybin. Two thirds even claimed that the experience was one of the most meaningful of their lives.

It is not unheard of for Schedule 1 drugs to make their way into medical use if they're shown to provide a bona fide improvement in a medical condition through well-designed clinical trials. MDMA, for example, has been designated a Breakthrough Therapy by the FDA as part of an Investigational New Drug Application. The FDA has agreed to a special protocol assessment that could speed up phase three clinical trials. The next step is for the data to be submitted to the FDA for an in-depth regulatory review. If the FDA agrees, MDMA-assisted therapy could be legalized.

Robin Carhart-Harris believes the first drug that will receive FDA clearance is psilocybin, which he speculates could become legal in the next five to ten years. However, the field of psychedelic-assisted therapy needs more and larger clinical trials, preferably with the support of the NIH.

As Rucker and colleagues noted, the scientific literature bends toward the theme that the drugs are not necessarily therapeutic in and of themselves. It's the use of hallucinogens within a "psychologically supportive context" with a trained expert that's helpful. It's currently unknown how many users of recreational drugs are self-medicating for depression, anxiety, or other mental illnesses. But without the guidance of a knowledgeable psychotherapist, those who are self-medicating may not be helping themselves at all.

Will the positive buzz around psychedelics persuade the NIH to provide the millions of dollars needed to push the field forward? Given the changing climate in public opinion around these drugs and the need for breakthroughs in mental health therapies, it's possible that in the foreseeable future, this bold new therapy will become part of the mental health arsenal.

How 30 Years of Heart Surgeries Taught My Dad How to Live

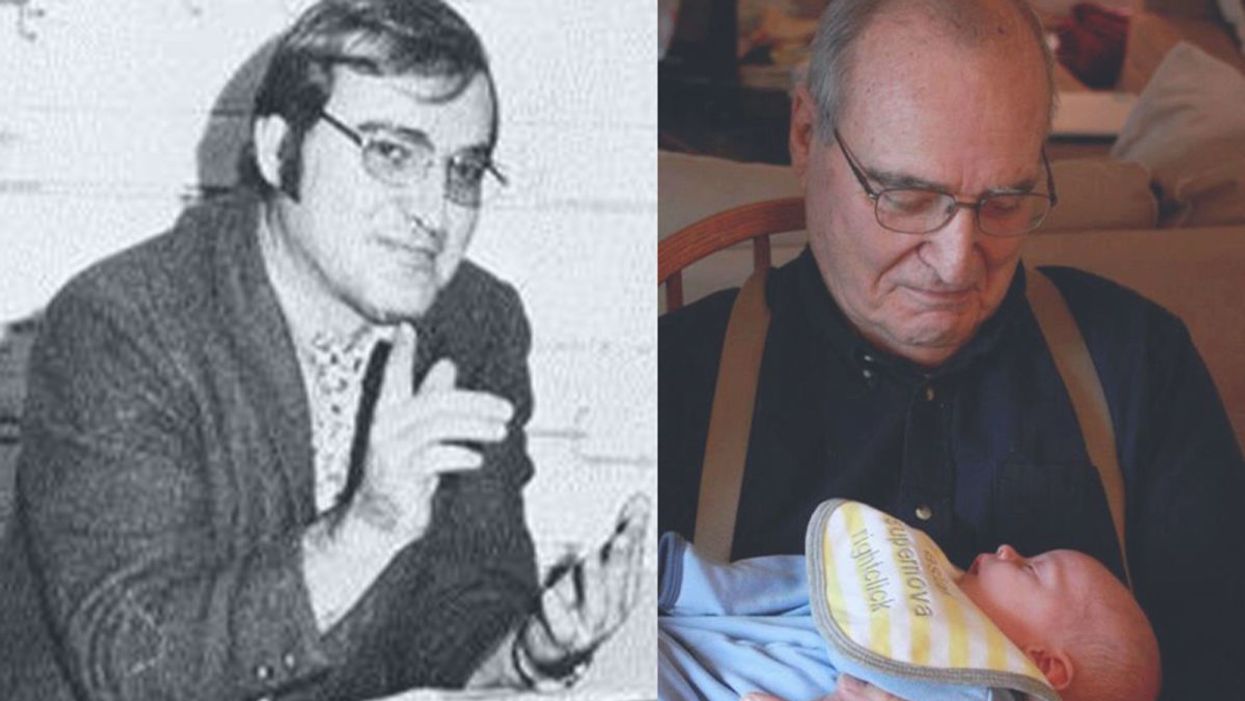

A mid-1970s photo of the author's father, and him holding a grandchild in 2012.

[Editor's Note: This piece is the winner of our 2019 essay contest, which prompted readers to reflect on the question: "How has an advance in science or medicine changed your life?"]

My father did not expect to live past the age of 50. Neither of his parents had done so. And he also knew how he would die: by heart attack, just as his father did.

My dad lived the first 40 years of his life with this knowledge buried in his bones. He started smoking at the age of 12, and was drinking before he was old enough to enlist in the Navy. He had a sarcastic, often cruel, sense of humor that could drive my mother, my sister and me into tears. He was not an easy man to live with, but that was okay by him - he didn't expect to live long.

In July of 1976, he had his first heart attack, days before his 40th birthday. I was 13, and my sister was 11. He needed quadruple bypass surgery. Our small town hospital was not equipped to do this type of surgery; he would have to be transported 40 miles away to a heart center. I understood this journey to mean that my father was seriously ill, and might die in the hospital, away from anyone he knew. And my father knew a lot of people - he was a popular high school English teacher, in a town with only three high schools. He knew generations of students and their parents. Our high school football team did a blood drive in his honor.

During a trip to Disney World in 1974, Dad was suffering from angina the entire time but refused to tell me (left) and my sister, Kris.

Quadruple bypass surgery in 1976 meant that my father's breastbone was cut open by a sternal saw. His ribcage was spread wide. After the bypass surgery, his bones would be pulled back together, and tied in place with wire. The wire would later be pulled out of his body when the bones knitted back together. It would take months before he was fully healed.

Dad was in the hospital for the rest of the summer and into the start of the new school year. Going to visit him was farther than I could ride my bicycle; it meant planning a trip in the car and going onto the interstate. The first time I was allowed to visit him in the ICU, he was lying in bed, and then pushed himself to sit up. The heart monitor he was attached to spiked up and down, and I fainted. I didn't know that heartbeats change when you move; television medical dramas never showed that - I honestly thought that I had driven my father into another heart attack.

Only a few short years after that, my father returned to the big hospital to have his heart checked with a new advance in heart treatment: a CT scan. This would allow doctors to check for clogged arteries and treat them before a fatal heart attack. The procedure identified a dangerous blockage, and my father was admitted immediately. This time, however, there was no need to break bones to get to the problem; my father was home within a month.

During the late 1970's, my father changed none of his habits. He was still smoking, and he continued to drink. But now, he was also taking pills - pills to manage the pain. He would pop a nitroglycerin tablet under his tongue whenever he was experiencing angina (I have a vivid memory of him doing this during my driving lessons), but he never mentioned that he was in pain. Instead, he would snap at one of us, or joke that we were killing him.

Being the kind of guy he was, my father never wanted to talk about his health. Any admission of pain implied that he couldn't handle pain. He would try to "muscle through" his angina, as if his willpower would be stronger than his heart muscle. His efforts would inevitably fail, leaving him angry and ready to lash out at anyone or anything. He would blame one of us as a reason he "had" to take valium or pop a nitro tablet. Dinners often ended in shouts and tears, and my father stalking to the television room with a bottle of red wine.

In the 1980's while I was in college, my father had another heart attack. But now, less than 10 years after his first, medicine had changed: our hometown hospital had the technology to run dye through my father's blood stream, identify the blockages, and do preventative care that involved statins and blood thinners. In one case, the doctors would take blood vessels from my father's legs, and suture them to replace damaged arteries around his heart. New advances in cholesterol medication and treatments for angina could extend my father's life by many years.

My father decided it was time to quit smoking. It was the first significant health step I had ever seen him take. Until then, he treated his heart issues as if they were inevitable, and there was nothing that he could do to change what was happening to him. Quitting smoking was the first sign that my father was beginning to move out of his fatalistic mindset - and the accompanying fatal behaviors that all pointed to an early death.

In 1986, my father turned 50. He had now lived longer than either of his parents. The habits he had learned from them could be changed. He had stopped smoking - what else could he do?

It was a painful decade for all of us. My parents divorced. My sister quit college. I moved to the other side of the country and stopped speaking to my father for almost 10 years. My father remarried, and divorced a second time. I stopped counting the number of times he was in and out of the hospital with heart-related issues.

In the early 1990's, my father reached out to me. I think he finally determined that, if he was going to have these extra decades of life, he wanted to make them count. He traveled across the country to spend a week with me, to meet my friends, and to rebuild his relationship with me. He did the same with my sister. He stopped drinking. He was more forthcoming about his health, and admitted that he was taking an antidepressant. His humor became less cruel and sadistic. He took an active interest in the world. He became part of my life again.

The 1990's was also the decade of angioplasty. My father explained it to me like this: during his next surgery, the doctors would place balloons in his arteries, and inflate them. The balloons would then be removed (or dissolve), leaving the artery open again for blood. He had several of these surgeries over the next decade.

When my father was in his 60's, he danced with me at my wedding. It was now 10 years past the time he had expected to live, and his life was transformed. He was living with a woman I had known since I was a child, and my wife and I would make regular visits to their home. My father retired from teaching, became an avid gardener, and always had a home project underway. He was a happy man.

Dancing with my father at my wedding in 1998.

Then, in the mid 2000's, my father faced another serious surgery. Years of arterial surgery, angioplasty, and damaged heart muscle were taking their toll. He opted to undergo a life-saving surgery at Cleveland Clinic. By this time, I was living in New York and my sister was living in Arizona. We both traveled to the Midwest to be with him. Dad was unconscious most of the time. We took turns holding his hand in the ICU, encouraging him to regain his will to live, and making outrageous threats if he didn't listen to us.

The nursing staff were wonderful. I remember telling them that my father had never expected to live this long. One of the nurses pointed out that most of the patients in their ward were in their 70's and 80's, and a few were in their 90's. She reminded me that just a decade earlier, most hospitals were unwilling to do the kind of surgery my father had received on patients his age. In the first decade of the 21st century, however, things were different: 90-year-olds could now undergo heart surgery and live another decade. My father was on the "young" side of their patients.

The Cleveland Clinic visit would be the last major heart surgery my father would have. Not that he didn't return to his local hospital a few times after that: he broke his neck -- not once, but twice! -- slipping on ice. And in the 2010's, he began to show signs of dementia, and needed more home care. His partner, who had her own health issues, was not able to provide the level of care my father needed. My sister invited him to move in with her, and in 2015, I traveled with him to Arizona to get him settled in.

After a few months, he accepted home hospice. We turned off his pacemaker when the hospice nurse explained to us that the job of a pacemaker is to literally jolt a patient's heart back into beating. The jolts were happening more and more frequently, causing my Dad additional, unwanted pain.

My father in 2015, a few months before his death.

My father died in February 2016. His body carried the scars and implants of 30 years of cardiac surgeries, from the ugly breastbone scar from the 1970's to scars on his arms and legs from borrowed blood vessels, to the tiny red circles of robotic incisions from the 21st century. The arteries and veins feeding his heart were a patchwork of transplanted leg veins and fragile arterial walls pressed thinner by balloons.

And my father died with no regrets or unfinished business. He died in my sister's home, with his long-time partner by his side. Medical advancements had given him the opportunity to live 30 years longer than he expected. But he was the one who decided how to live those extra years. He was the one who made the years matter.