AI and you: Is the promise of personalized nutrition apps worth the hype?

Personalized nutrition apps could provide valuable data to people trying to eat healthier, though more research must be done to show effectiveness.

As a type 2 diabetic, Michael Snyder has long been interested in how blood sugar levels vary from one person to another in response to the same food, and whether a more personalized approach to nutrition could help tackle the rapidly rising levels of diabetes and obesity in much of the western world.

Eight years ago, Snyder, who directs the Center for Genomics and Personalized Medicine at Stanford University, decided to put his theories to the test. In the 2000s, continuous glucose monitoring, or CGM, had begun to revolutionize the lives of diabetics, both type 1 and type 2. Using spherical sensors which sit on the upper arm or abdomen – with tiny wires that pierce the skin – the technology allowed patients to gain real-time updates on their blood sugar levels, transmitted directly to their phone.

It gave Snyder an idea for his research at Stanford. Applying the same technology to a group of apparently healthy people, and looking for ‘spikes’ or sudden surges in blood sugar known as hyperglycemia, could provide a means of observing how their bodies reacted to an array of foods.

“We discovered that different foods spike people differently,” he says. “Some people spike to pasta, others to bread, others to bananas, and so on. It’s very personalized and our feeling was that building programs around these devices could be extremely powerful for better managing people’s glucose.”

Unbeknown to Snyder at the time, thousands of miles away, a group of Israeli scientists at the Weizmann Institute of Science were doing exactly the same experiments. In 2015, they published a landmark paper which used CGM to track the blood sugar levels of 800 people over several days, showing that the biological response to identical foods can vary wildly. Like Snyder, they theorized that giving people a greater understanding of their own glucose responses, so they spend more time in the normal range, may reduce the prevalence of type 2 diabetes.

“At the moment 33 percent of the U.S. population is pre-diabetic, and 70 percent of those pre-diabetics will become diabetic,” says Snyder. “Those numbers are going up, so it’s pretty clear we need to do something about it.”

Fast forward to 2022, and both teams have converted their ideas into subscription-based dietary apps which use artificial intelligence to offer data-informed nutritional and lifestyle recommendations. Snyder’s spinoff, January AI, combines CGM information with heart rate, sleep, and activity data to advise on foods to avoid and the best times to exercise. DayTwo, a start-up which utilizes the findings of the Weizmann Institute of Science, obtains microbiome information by sequencing stool samples, and combines this with blood glucose data to rate ‘good’ and ‘bad’ foods for a particular person.

“CGMs can be used to devise personalized diets,” says Eran Elinav, an immunology professor and microbiota researcher at the Weizmann Institute of Science in addition to serving as a scientific consultant for DayTwo. “However, this process can be cumbersome. Therefore, in our lab we created an algorithm, based on data acquired from a big cohort of people, which can accurately predict post-meal glucose responses on a personal basis.”

The commercial potential of such apps is clear. DayTwo, which markets its product to corporate employers and health insurers rather than individual consumers, recently raised $37 million in funding. But the underlying science continues to generate intriguing findings.

Last year, Elinav and colleagues published a study on 225 individuals with pre-diabetes which found that they achieved better blood sugar control when they followed a personalized diet based on DayTwo’s recommendations, compared to a Mediterranean diet. The journal Cell just released a new paper from Snyder’s group which shows that different types of fibre benefit people in different ways.

“The idea is you hear different fibres are good for you,” says Snyder. “But if you look at fibres they’re all over the map—it’s like saying all animals are the same. The responses are very individual. For a lot of people [a type of fibre called] arabinoxylan clearly reduced cholesterol while the fibre inulin had no effect. But in some people, it was the complete opposite.”

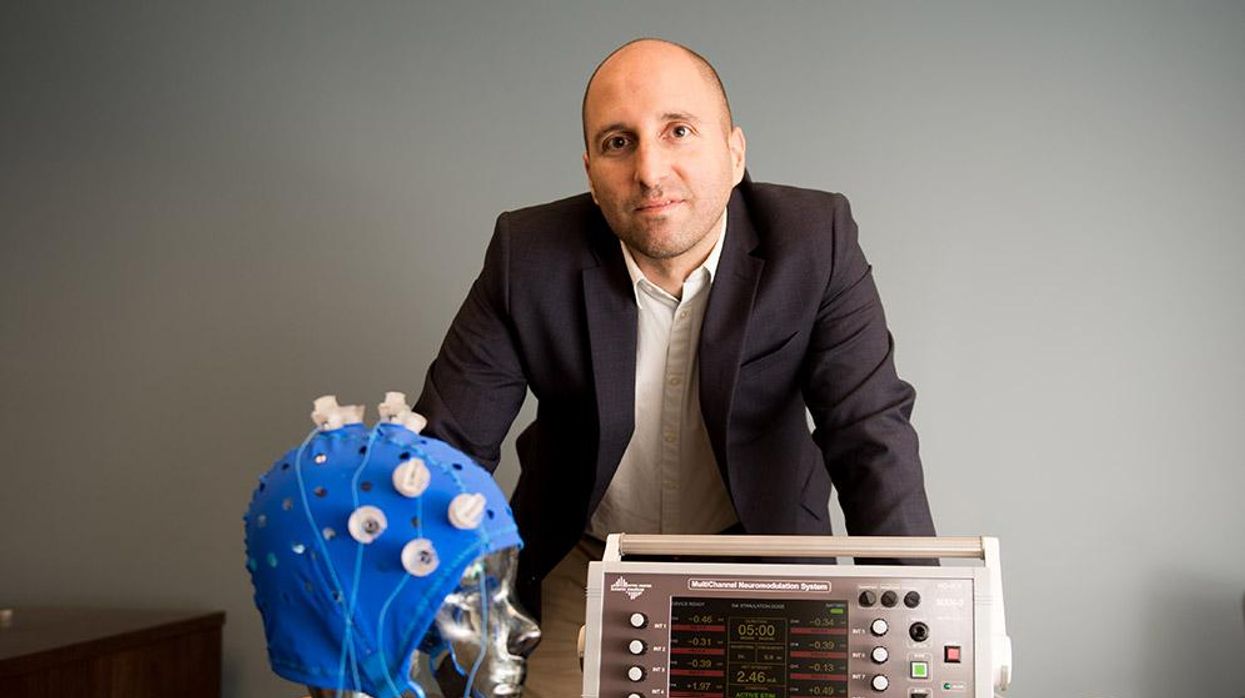

Eight years ago, Stanford's Michael Snyder began studying how continuous glucose monitors could be used by patients to gain real-time updates on their blood sugar levels, transmitted directly to their phone.

The Snyder Lab, Stanford Medicine

Because of studies like these, interest in precision nutrition approaches has exploded in recent years. In January, the National Institutes of Health announced that it is spending $170 million on a five-year, multi-center initiative which aims to develop algorithms based on a whole range of data sources, from blood sugar to sleep, exercise, stress, microbiome and even genomic information, which can help predict which diets are most suitable for a particular individual.

“There's so many different factors which influence what you put into your mouth but also what happens to different types of nutrients and how that ultimately affects your health, which means you can’t have a one-size-fits-all set of nutritional guidelines for everyone,” says Bruce Y. Lee, professor of health policy and management at the City University of New York Graduate School of Public Health.

With the falling costs of genomic sequencing, other precision nutrition clinical trials are choosing to look at whether our genomes alone can yield key information about what our diets should look like, an emerging field of research known as nutrigenomics.

The ASPIRE-DNA clinical trial at Imperial College London is aiming to see whether particular genetic variants can be used to classify individuals into two groups, those who are more glucose sensitive to fat and those who are more sensitive to carbohydrates. By following a tailored diet based on these sensitivities, the trial aims to see whether it can prevent people with pre-diabetes from developing the disease.

But while much hope is riding on these trials, even precision nutrition advocates caution that the field remains in the very earliest of stages. Lars-Oliver Klotz, professor of nutrigenomics at Friedrich-Schiller-University in Jena, Germany, says that while the overall goal is to identify means of avoiding nutrition-related diseases, genomic data alone is unlikely to be sufficient to prevent obesity and type 2 diabetes.

“Genome data is rather simple to acquire these days as sequencing techniques have dramatically advanced in recent years,” he says. “However, the predictive value of just genome sequencing is too low in the case of obesity and prediabetes.”

Others say that while genomic data can yield useful information in terms of how different people metabolize different types of fat and specific nutrients such as B vitamins, there is a need for more research before it can be utilized in an algorithm for making dietary recommendations.

“I think it’s a little early,” says Eileen Gibney, a professor at University College Dublin. “We’ve identified a limited number of gene-nutrient interactions so far, but we need more randomized control trials of people with different genetic profiles on the same diet, to see whether they respond differently, and if that can be explained by their genetic differences.”

Some start-ups have already come unstuck for promising too much, or pushing recommendations which are not based on scientifically rigorous trials. The world of precision nutrition apps was dubbed a ‘Wild West’ by some commentators after the founders of uBiome – a start-up which offered nutritional recommendations based on information obtained from sequencing stool samples – were charged with fraud last year. The weight-loss app Noom, which was valued at $3.7 billion in May 2021, has been criticized on Twitter by a number of users who claimed that its recommendations led them to develop eating disorders.

With precision nutrition apps marketing their technology at healthy individuals, question marks have also been raised about the value which can be gained through non-diabetics monitoring their blood sugar through CGM. While some small studies have found that wearing a CGM can make overweight or obese individuals more motivated to exercise, there is still a lack of conclusive evidence showing that this translates to improved health.

However, independent researchers remain intrigued by the technology, and say that the wealth of data generated through such apps could be used to help further stratify the different types of people who become at risk of developing type 2 diabetes.

“CGM not only enables a longer sampling time for capturing glucose levels, but will also capture lifestyle factors,” says Robert Wagner, a diabetes researcher at University Hospital Düsseldorf. “It is probable that it can be used to identify many clusters of prediabetic metabolism and predict the risk of diabetes and its complications, but maybe also specific cardiometabolic risk constellations. However, we still don’t know which forms of diabetes can be prevented by such approaches and how feasible and long-lasting such self-feedback dietary modifications are.”

Snyder himself has now been wearing a CGM for eight years, and he credits the insights it provides with helping him to manage his own diabetes. “My CGM still gives me novel insights into what foods and behaviors affect my glucose levels,” he says.

He is now looking to run clinical trials with his group at Stanford to see whether following a precision nutrition approach based on CGM and microbiome data, combined with other health information, can be used to reverse signs of pre-diabetes. If it proves successful, January AI may look to incorporate microbiome data in future.

“Ultimately, what I want to do is be able to take people’s poop samples, maybe a blood draw, and say, ‘Alright, based on these parameters, this is what I think is going to spike you,’ and then have a CGM to test that out,” he says. “Getting very predictive about this, so right from the get go, you can have people better manage their health and then use the glucose monitor to help follow that.”

The Friday Five: How to exercise for cancer prevention

How to exercise for cancer prevention. Plus, a device that brings relief to back pain, ingredients for reducing Alzheimer's risk, the world's oldest disease could make you young again, and more.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five:

- How to exercise for cancer prevention

- A device that brings relief to back pain

- Ingredients for reducing Alzheimer's risk

- Is the world's oldest disease the fountain of youth?

- Scared of crossing bridges? Your phone can help

New approach to brain health is sparking memories

This fall, Robert Reinhart of Boston University published a study finding that electrical stimulation can boost memory, and Reinhart was surprised to discover the effects lasted a full month.

What if a few painless electrical zaps to your brain could help you recall names, perform better on Wordle or even ward off dementia?

This is where neuroscientists are going in efforts to stave off age-related memory loss as well as Alzheimer’s disease. Medications have shown limited effectiveness in reversing or managing loss of brain function so far. But new studies suggest that firing up an aging neural network with electrical or magnetic current might keep brains spry as we age.

Welcome to non-invasive brain stimulation (NIBS). No surgery or anesthesia is required. One day, a jolt in the morning with your own battery-operated kit could replace your wake-up coffee.

Scientists believe brain circuits tend to uncouple as we age. Since brain neurons communicate by exchanging electrical impulses with each other, the breakdown of these links and associations could be what causes the “senior moment”—when you can’t remember the name of the movie you just watched.

In 2019, Boston University researchers led by Robert Reinhart, director of the Cognitive and Clinical Neuroscience Laboratory, showed that memory loss in healthy older adults is likely caused by these disconnected brain networks. When Reinhart and his team stimulated two key areas of the brain with mild electrical current, they were able to bring the brains of older adult subjects back into sync — enough so that their ability to remember small differences between two images matched that of much younger subjects for at least 50 minutes after the testing stopped.

Reinhart wowed the neuroscience community once again this fall. His newer study in Nature Neuroscience presented 150 healthy participants, ages 65 to 88, who were able to recall more words on a given list after 20 minutes of low-intensity electrical stimulation sessions over four consecutive days. This amounted to a 50 to 65 percent boost in their recall.

Even Reinhart was surprised to discover the enhanced performance of his subjects lasted a full month when they were tested again later. Those who benefited most were the participants who were the most forgetful at the start.

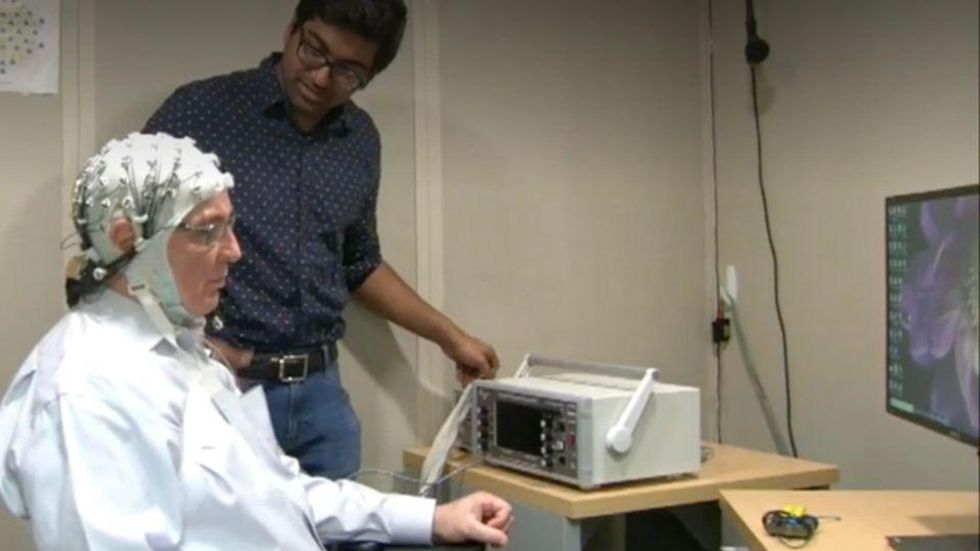

An older person participates in Robert Reinhart's research on brain stimulation.

Robert Reinhart

Reinhart’s subjects only suffered normal age-related memory deficits, but NIBS has great potential to help people with cognitive impairment and dementia, too, says Krista Lanctôt, the Bernick Chair of Geriatric Psychopharmacology at Sunnybrook Health Sciences Center in Toronto. Plus, “it is remarkably safe,” she says.

Lanctôt was the senior author on a meta-analysis of brain stimulation studies published last year on people with mild cognitive impairment or later stages of Alzheimer’s disease. The review concluded that magnetic stimulation to the brain significantly improved the research participants’ neuropsychiatric symptoms, such as apathy and depression. The stimulation also enhanced global cognition, which includes memory, attention, executive function and more.

This is the frontier of neuroscience.

The two main forms of NIBS – and many questions surrounding them

There are two types of NIBS. They differ based on whether electrical or magnetic stimulation is used to create the electric field, the type of device that delivers the electrical current and the strength of the current.

Transcranial Electrical Stimulation (tES) is an umbrella term for a group of techniques using low-intensity electrical currents to manipulate activity in the brain. The current is delivered to the scalp or forehead via electrodes attached to a nylon elastic cap or rubber headband.

Variations include how the current is delivered—in an alternating pattern or in a constant, direct mode, for instance. Tweaking frequency, potency or target brain area can produce different effects as well. Reinhart’s 2022 study demonstrated that low or high frequencies and alternating currents were uniquely tied to either short-term or long-term memory improvements.

Sessions may be 20 minutes per day over the course of several days or two weeks. “[The subject] may feel a tingling, warming, poking or itching sensation,” says Reinhart, which typically goes away within a minute.

The other main approach to NIBS is Transcranial Magnetic Stimulation (TMS). It involves the use of an electromagnetic coil that is held or placed against the forehead or scalp to activate nerve cells in the brain through short pulses. The stimulation is stronger than tES but similar to a magnetic resonance imaging (MRI) scan.

The subject may feel a slight knocking or tapping on the head during a 20-to-60-minute session. Scalp discomfort and headaches are reported by some; in very rare cases, a seizure can occur.

No head-to-head trials have been conducted yet to evaluate the differences and effectiveness between electrical and magnetic current stimulation, notes Lanctôt, who is also a professor of psychiatry and pharmacology at the University of Toronto. Although TMS was approved by the FDA in 2008 to treat major depression, both techniques are considered experimental for the purpose of cognitive enhancement.

“One attractive feature of tES is that it’s inexpensive—one-fifth the price of magnetic stimulation,” Reinhart notes.

Don’t confuse either of these procedures with the horrors of electroconvulsive therapy (ECT) in the 1950s and ‘60s. ECT is a more powerful, riskier procedure used only as a last resort in treating severe mental illness today.

Clinical studies on NIBS remain scarce. Standardized parameters and measures for testing have not been developed. The high heterogeneity among the many existing small NIBS studies makes it difficult to draw general conclusions. Few of the studies have been replicated and inconsistencies abound.

Scientists are still lacking so much fundamental knowledge about the brain and how it works, says Reinhart. “We don’t know how information is represented in the brain or how it’s carried forward in time. It’s more complex than physics.”

Lanctôt’s meta-analysis showed improvements in global cognition from delivering the magnetic form of the stimulation to people with Alzheimer’s, and this finding was replicated in an analysis in the Journal of Prevention of Alzheimer’s Disease this fall. Neither meta-analysis found clear evidence that applying the electrical currents was helpful for Alzheimer’s subjects, but Lanctôt suggests this might be merely because the sample size for tES was smaller compared to the groups that received TMS.

At the same time, London neuroscientist Marco Sandrini, senior lecturer in psychology at the University of Roehampton, critically reviewed a series of studies on the effects of tES on episodic memory. Often declining with age, episodic memory relates to recalling a person’s own experiences from the past. Sandrini’s review concluded that delivering tES to the prefrontal or temporoparietal cortices of the brain might enhance episodic memory in older adults with Alzheimer’s disease and amnestic mild cognitive impairment (the predementia phase of Alzheimer’s when people start to have symptoms).

Researchers readily tick off studies needed to explore, clarify and validate existing NIBS data. What is the optimal stimulus session frequency, spacing and duration? How intense should the stimulus be and where should it be targeted for what effect? How might genetics or degree of brain impairment affect responsiveness? Would adjunct medication or cognitive training boost positive results? Could administering the stimulus while someone sleeps expedite memory consolidation?

While Sandrini’s review reported improvements induced by tES in the recall or recognition of words and images, there is no evidence it will translate into improvements in daily activities. This is another question that will require more research and testing, Sandrini notes.

Where the science is headed

Learning how to apply precision medicine to NIBS is the next focus in advancing this technology, says Shankar Tumati, a post-doctoral fellow working with Lanctôt.

There is great variability in each person’s brain anatomy—the thickness of the skull, the brain’s unique folds, the amount of cerebrospinal fluid. All of these structural differences impact how electrical or magnetic stimulation is distributed in the brain and ultimately the effects.

Using MRI or another brain scan along with computational modeling of the current flow, a clinician could create a treatment that is customized to each person’s brain, from where to put the electrodes to determining the exact dose and duration of stimulation needed to achieve lasting results, Sandrini says.

Above all, most neuroscientists say that large-scale research studies over long periods of time are necessary to confirm the safety and durability of this therapy for the purpose of boosting memory. Short of that, there can be no FDA approval or medical regulation for this clinical use.

Lanctôt urges people to seek out clinical NIBS trials in their area if they want to see the science advance. “That is how we’ll find the answers,” she says, predicting it will be 5 to 10 years to develop each additional clinical application of NIBS. Ultimately, she predicts that reining in Alzheimer’s disease and mild cognitive impairment will require a multi-pronged approach that includes lifestyle and medications, too.

Sandrini believes that scientific efforts should focus on preventing or delaying Alzheimer’s. “We need to start intervention earlier—as soon as people start to complain about forgetting things,” he says. “Changes in the brain start 10 years before [there is a problem]. Once Alzheimer’s develops, it is too late.”