If New Metal Legs Let You Run 20 Miles/Hour, Would You Amputate Your Own?

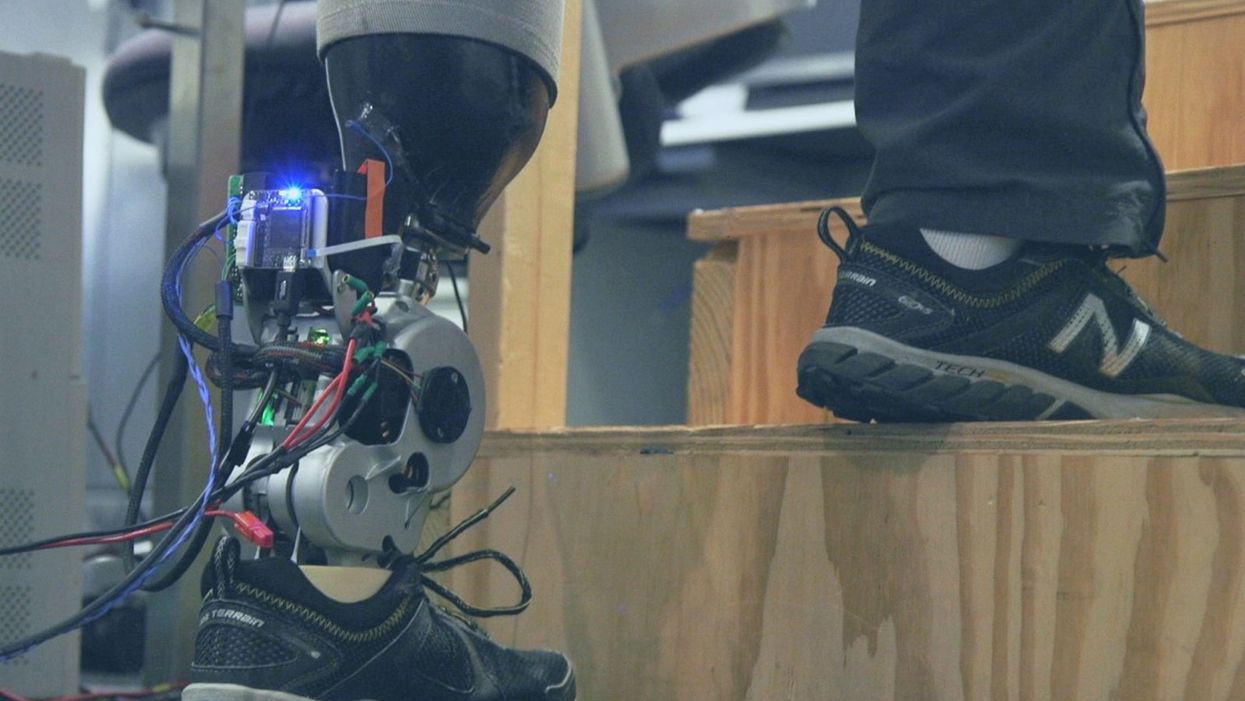

A patient with below-knee AMI amputation walks up the stairs.

"Here's a question for you," I say to our dinner guests, dodging a knowing glance from my wife. "Imagine a future in which you could surgically replace your legs with robotic substitutes that had all the functionality and sensation of their biological counterparts. Let's say these new legs would allow you to run all day at 20 miles per hour without getting tired. Would you have the surgery?"

Like most people I pose this question to, our guests respond with some variation on the theme of "no way"; the idea of undergoing a surgical procedure with the sole purpose of augmenting performance beyond traditional human limits borders on the unthinkable.

"Would your answer change if you had arthritis in your knees?" This is where things get interesting. People think differently about intervention when injury or illness is involved. The idea of a major surgery becomes more tractable to us in the setting of rehabilitation.

Consider the simplistic example of human walking speed. The average human walks at a baseline three miles per hour. If someone is only able to walk at one mile per hour, we do everything we can to increase their walking ability. However, to take a person who is already able to walk at three miles per hour and surgically alter their body so that they can walk twice as fast seems, to us, unreasonable.

What fascinates me about this is that the three-mile-per-hour baseline is set by arbitrary limitations of the healthy human body. If we ignore this reference point altogether, and consider that each case simply offers an improvement in walking ability, the line between augmentation and rehabilitation all but disappears. Why, then, are we so married to this arbitrary distinction between rehabilitating and augmenting? What makes us hold so tightly to baseline human function?

Where We Stand Now

As the functionality of advanced prosthetic devices continues to increase at an astounding rate, questions like these are becoming more relevant. Experimental prostheses, intended for the rehabilitation of people with amputation, are now able to replicate the motions of biological limbs with high fidelity. Neural interfacing technologies enable a person with amputation to control these devices with their brain and nervous system. Before long, synthetic body parts will outperform biological ones.

Against this backdrop, my colleagues and I developed a methodology to improve the connection between the biological body and a synthetic limb. Our approach, known as the agonist-antagonist myoneural interface ("AMI" for short), enables us to reflect joint movement sensations from a prosthetic limb onto the human nervous system. In other words, the AMI allows people to not only control a prosthesis with their brain, but also to feel its movements as if it were their own limb. The AMI involves a reimagining of the amputation surgery, so that the resultant residual limb is better suited to interact with a neurally-controlled prosthesis. In addition to increasing functionality, the AMI was designed with the primary goal of enabling adoption of a prosthetic limb as part of a patient's physical identity (known as "embodiment").

Early results have been remarkable. Patients with below-knee AMI amputation are better able to control an experimental prosthetic leg, compared to people who had their legs amputated in the traditional way. In addition, the AMI patients show increased evidence of embodiment. They identify with the device, and describe feeling as though it is part of them, part of self.

Where We're Going

True embodiment of robotic devices has the potential to fundamentally alter humankind's relationship with the built world. Throughout history, humans have excelled as tool builders. We innovate in ways that allow us to design and augment the world around us. However, tools for augmentation are typically external to our body identity; there is a clean line drawn between smartphone and self. As we advance our ability to integrate synthetic systems with physical identity, humanity will have the capacity to sculpt that very identity, rather than just the world in which it exists.

For this potential to be realized, we will need to let go of our reservations about surgery for augmentation. In reality, this shift has already begun. Consider the approximately 17.5 million surgical and minimally invasive cosmetic procedures performed in the United States in 2017 alone. Many of these involved patients with no demonstrated medical need, who opted to undergo a procedure for the sole purpose of synthetically enhancing their body. The ethical basis for such a procedure is built on the individual perception that the benefits of that procedure outweigh its costs.

At present, it seems absurd that amputation would ever reach this point. However, as robotic technology improves and becomes more integrated with self, the balance of cost and benefit will shift, lending a new perspective on what now seems like an unfathomable decision to electively amputate a healthy limb. When this barrier is crossed, we will collide head-on with the question of whether it is acceptable for a person to "upgrade" such an essential part of their body.

At a societal level, the potential benefits of physical augmentation are far-reaching. The world of robotic limb augmentation will be a world of experienced surgeons whose hands are perfectly steady, firefighters whose legs allow them to kick through walls, and athletes who never again have to worry about injury. It will be a world in which a teenage boy and his grandmother embark together on a four-hour sprint through the woods, for the sheer joy of it. It will be a world in which the human experience is fundamentally enriched, because our bodies, which play such a defining role in that experience, are truly malleable.

This is not to say that such societal benefits stand without potential costs. One justifiable concern is the misuse of augmentative technologies. We are all quite familiar with the proverbial supervillain whose nervous system has been fused to that of an all-powerful robot.

In reality, misuse is likely to be both subtler and more insidious than this. As with all new technology, careful legislation will be necessary to work against those who would hijack physical augmentations for violent or oppressive purposes. It will also be important to ensure broad access to these technologies, to protect against further socioeconomic stratification. This particular issue is helped by the tendency of the cost of a technology to scale inversely with market size. It is my hope that when robotic augmentations are as ubiquitous as cell phones, the technology will serve to equalize, rather than to stratify.

In our future bodies, when we as a society decide that the benefits of augmentation outweigh the costs, it will no longer matter whether the base materials that make us up are biological or synthetic. When our AMI patients are connected to their experimental prosthesis, it is irrelevant to them that the leg is made of metal and carbon fiber; to them, it is simply their leg. After our first patient wore the experimental prosthesis for the first time, he sent me an email that provides a look at the immense possibility the future holds:

What transpired is still slowly sinking in. I keep trying to describe the sensation to people. Then this morning my daughter asked me if I felt like a cyborg. The answer was, "No, I felt like I had a foot."

Thanks to safety cautions from the COVID-19 pandemic, a strain of influenza has been completely eliminated.

If you were one of the millions who masked up, washed your hands thoroughly and socially distanced, pat yourself on the back—you may have helped change the course of human history.

Scientists say that thanks to these safety precautions, which were introduced in early 2020 to stop transmission of the virus that causes COVID-19, a strain of influenza has been completely eliminated. This marks the first time in human history that a virus has been wiped out through non-pharmaceutical interventions rather than through pharmaceutical ones, such as vaccines.

The flu shot, explained

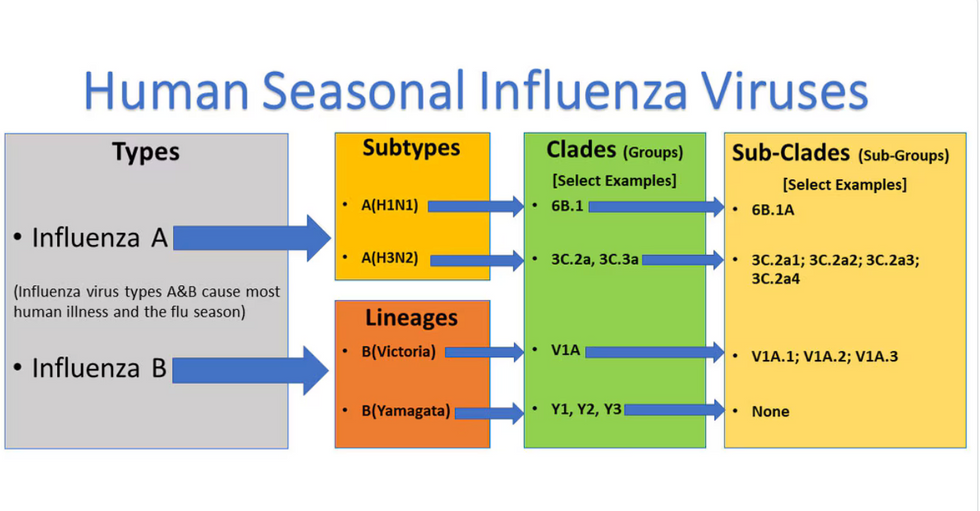

Influenza viruses type A and B are responsible for the majority of human illnesses and the flu season.

Centers for Disease Control

For more than a decade, flu shots have protected against two types of the influenza virus: type A and type B. While there are four different types of influenza in existence (A, B, C, and D), only types A, B, and C are capable of infecting humans, and only A and B cause seasonal epidemics. In other words, if you catch the flu during flu season, you’re most likely sick with flu type A or B.

Flu vaccines contain inactivated—or dead—influenza virus. These inactivated viruses can’t cause sickness in humans, but when administered as part of a vaccine, they teach a person’s immune system to recognize and kill those viruses when they’re encountered in the wild.

Each spring, a panel of experts gives a recommendation to the US Food and Drug Administration on which strains of each flu type to include in that year’s flu vaccine, depending on what surveillance data says is circulating and what they believe is likely to cause the most illness during the upcoming flu season. For the past decade, Americans have had access to vaccines that provide protection against two strains of influenza A and two lineages of influenza B, known as the Victoria lineage and the Yamagata lineage. But this year, the seasonal flu shot won’t include the Yamagata lineage, because it is no longer circulating among humans.

How Yamagata Disappeared

Flu surveillance data from the Global Initiative on Sharing All Influenza Data (GISAID) shows that the Yamagata lineage of flu type B has not been sequenced since April 2020.

Nature

Experts believe that the Yamagata lineage had already been in decline before the pandemic hit, likely because the strain was naturally less capable of infecting large numbers of people compared to the other strains. When the COVID-19 pandemic hit, the resulting safety precautions such as social distancing, isolating, hand-washing, and masking were enough to drive the virus into extinction completely.

Because the strain hasn’t been circulating since 2020, the FDA elected to remove the Yamagata strain from the seasonal flu vaccine. This will mark the first time since 2012 that the annual flu shot will be trivalent (three-component) rather than quadrivalent (four-component).

Should I still get the flu shot?

The flu shot will protect against fewer strains this year—but that doesn’t mean we should skip it. Influenza places a substantial health burden on the United States every year, responsible for hundreds of thousands of hospitalizations and tens of thousands of deaths. The flu shot has been shown to prevent millions of illnesses each year (more than six million during the 2022-2023 season). And while it’s still possible to catch the flu after getting the flu shot, studies show that people are far less likely to be hospitalized or die when they’re vaccinated.

Another unexpected benefit of dropping the Yamagata strain from the seasonal vaccine? It may speed up production of the flu vaccine and enable manufacturers to make more doses, helping countries that have a flu vaccine shortage and potentially saving millions more lives.

After his grandmother’s dementia diagnosis, one man invented a snack to keep her healthy and hydrated.

Founder Lewis Hornby and his grandmother Pat, sampling Jelly Drops—an edible gummy containing water and life-saving electrolytes.

On a visit to his grandmother’s nursing home in 2016, college student Lewis Hornby made a shocking discovery: dehydration is a common (and dangerous) problem among seniors, especially those who are diagnosed with dementia.

Hornby’s grandmother, Pat, had found it difficult to keep up her water intake as she got older, a common issue among seniors. As we age, our body composition changes, and we naturally hold less water than younger adults or children, so it’s easier to become dehydrated quickly if those fluids aren’t replenished. What’s more, our thirst signals naturally diminish with age, meaning our body is not as good as it once was at letting us know that we need to rehydrate. Together, these changes create a perfect storm that commonly leads to dehydration. In Pat’s case, her dehydration was so severe she nearly died.

When Lewis Hornby visited his grandmother at her nursing home afterward, he learned that dehydration especially affects people with dementia, as they often don’t feel thirst cues at all, or may not recognize how to use cups correctly. But while dementia patients often don’t remember to drink water, it seemed to Hornby that they had less trouble remembering to eat, particularly candy.

Hornby wanted to create a solution for elderly people who struggled to keep their fluid intake up. He spent the next eighteen months researching and designing one and securing funding for his project. In 2019, Hornby won a sizable grant from the Alzheimer’s Society, a UK-based care and research charity for people with dementia and their caregivers. Together, through the charity’s Accelerator Program, they created a bite-sized, sugar-free, edible jelly drop that looked and tasted like candy. The candy, called Jelly Drops, contained 95% water along with electrolytes, important minerals that are often lost during dehydration. The final product launched in 2020 and was an immediate success. The drops were able to provide extra hydration to the elderly, as well as help keep dementia patients safe, since dehydration commonly leads to confusion, hospitalization, and sometimes even death.

Not only did Jelly Drops quickly become a favorite snack among dementia patients in the UK, but they also provided an additional boost of hydration to hospital workers during the pandemic. In NHS coronavirus hospital wards, patients infected with the virus were regularly given Jelly Drops to keep their fluid levels normal, and staff members snacked on them as well, since long shifts and the personal protective equipment (PPE) they were required to wear often left them feeling parched.

In April 2022, Jelly Drops launched in the United States. The company continues to donate 1% of its profits to help fund Alzheimer’s research.