Is Finding Out Your Baby’s Genetics A New Responsibility of Parenting?

A doctor pricks the heel of a newborn for a blood test.

Hours after a baby is born, its heel is pricked with a lancet. Drops of the infant's blood are collected on a porous card, which is then mailed to a state laboratory. The dried blood spots are screened for around thirty conditions, including phenylketonuria (PKU), the metabolic disorder that kick-started this kind of newborn screening over 60 years ago. In the U.S., parents are not asked for permission to screen their child. Newborn screening programs are public health programs, and the assumption is that no good parent would refuse a screening test that could identify a serious yet treatable condition in their baby.

Today, with the introduction of genome sequencing into clinical medicine, some are asking whether newborn screening goes far enough. As the cost of sequencing falls, should parents take a more expansive look at their children's health, learning not just whether they have a rare but treatable childhood condition, but also whether they are at risk for untreatable conditions or for diseases that, if they occur at all, will strike only in adulthood? Should genome sequencing be a part of every newborn's care?

It's an idea that appeals to Anne Wojcicki, the founder and CEO of the direct-to-consumer genetic testing company 23andMe, who in a 2016 interview with The Guardian newspaper predicted that having newborns tested would soon be considered standard practice—"as critical as testing your cholesterol"—and a new responsibility of parenting. Wojcicki isn't the only one excited to see everyone's genes examined at birth. Francis Collins, director of the National Institutes of Health and perhaps the most prominent advocate of genomics in the United States, has written that he is "almost certain … that whole-genome sequencing will become part of new-born screening in the next few years." Whether that would happen through state-mandated screening programs, or as part of routine pediatric care—or perhaps as a direct-to-consumer service that parents purchase at birth or receive as a baby-shower gift—is not clear.

Learning as much as you can about your child's health might seem like a natural obligation of parenting. But it's an assumption that I think needs to be much more closely examined, both because the results that genome sequencing can return are more complex and more uncertain than one might expect, and because parents are not actually responsible for their child's lifelong health and well-being.

Existing newborn screening tests look for the presence of rare conditions that, if identified early in life, before the child shows any symptoms, can be effectively treated. Sequencing could identify many of these same kinds of conditions (and it might be a good tool if it could be targeted to those conditions alone), but it would also identify gene variants that confer an increased risk rather than a certainty of disease. Occasionally that increased risk will be significant. About 12 percent of women in the general population will develop breast cancer during their lives, while those who have a harmful BRCA1 or BRCA2 gene variant have around a 70 percent chance of developing the disease. But for many—perhaps most—conditions, the increased risk associated with a particular gene variant will be very small. Researchers have identified over 600 genes that appear to be associated with schizophrenia, for example, but any one of those confers only a tiny increase in risk for the disorder. What is a parent supposed to do about such a risk except worry?

Sequencing results are uncertain in other important ways as well. While we now have the ability to map the genome (to create a read-out of the pairs of genetic letters that make up a person's DNA), we are still learning what most of it means for a person's health and well-being. Researchers even have a name for gene variants they think might be associated with a disease or disorder, but for which they don't have enough evidence to be sure. They are called "variants of unknown (or uncertain) significance" (VUS), and they pop up in most people's sequencing results. In cancer genetics, where much research has been done, about 1 in 5 gene variants are reclassified over time. Most are downgraded, which means that a good number of VUS are eventually designated benign.

Then there's the puzzle of what to do about results that show increased risk or even certainty for a condition that we have no idea how to prevent. Some genomics advocates argue that even if a result is not "medically actionable," it might have "personal utility" because it allows parents to plan for their child's future needs, to enroll them in research, or to connect with other families whose children carry the same genetic marker.

Finding a certain gene variant in one child might inform parents' decisions about whether to have another—and if they do, about whether to use reproductive technologies or prenatal testing to select against that variant in a future child. I have no doubt that for some parents these personal utility arguments are persuasive, but notice how far we've now strayed from the serious yet treatable conditions that motivated governments to set up newborn screening programs, and to mandate such testing for all.

Which brings me to the other problem with the call for sequencing newborn babies: the idea that even if it's not what the law requires, it's what good parents should do. That idea is very compelling when we're talking about sequencing results that show a serious threat to the child's health, especially when interventions are available to prevent or treat that condition. But as I have shown, many sequencing results are not of this type.

While one parent might reasonably decide to learn about their child's risk for a condition about which nothing can be done medically, a different, yet still thoroughly reasonable, parent might prefer to remain ignorant so that they can enjoy the time before their child is afflicted. This parent might decide that the worry (and the hypervigilance it could inspire in them) is not in their child's best interest, or indeed in their own. This parent might also think that it should be up to the child, when he or she is older, to decide whether to learn about his or her risk for adult-onset conditions, especially given that many adults at high familial risk for conditions like Alzheimer's or Huntington's disease choose never to be tested. This parent will value the child's future autonomy and right not to know more than they value the chance to prepare for a health risk that won't strike the child until 40 or 50 years in the future.

Contemporary understandings of parenting are famously demanding. We are asked to do everything within our power to advance our children's health and well-being—to act always in our children's best interests. Against that backdrop, the need to sequence every newborn baby's genome might seem obvious. But we should be skeptical. Many sequencing results are complex and uncertain. Parents are not obligated to learn about their children's risk for a condition that cannot be prevented, has a small risk of occurring, or that would appear only in adulthood. To suggest otherwise is to stretch parental responsibilities beyond the realm of childhood and beyond factors that parents can control.

Doctors worry that fungal pathogens may cause the next pandemic.

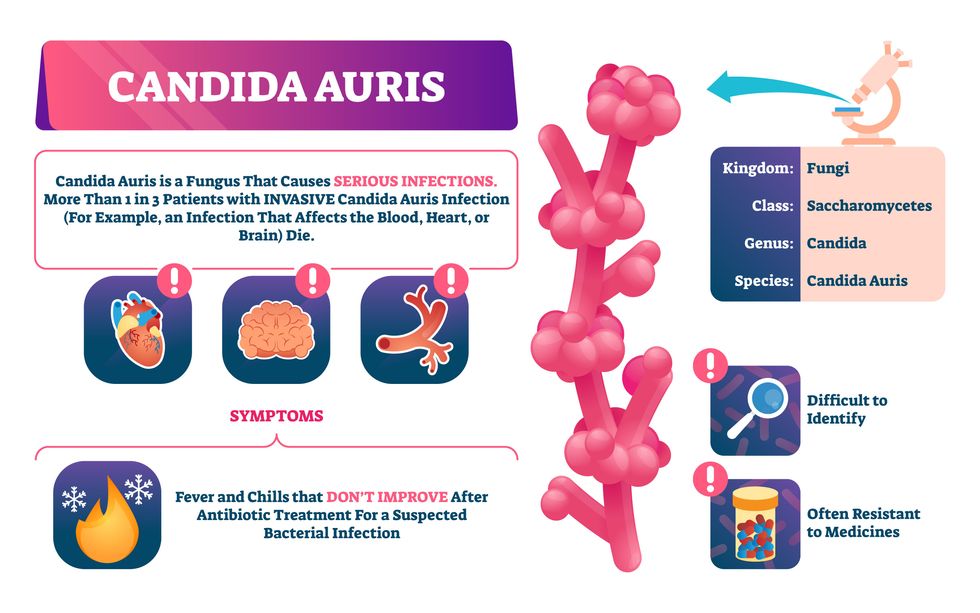

Bacterial antibiotic resistance has been a concern in the medical field for several years. Now a new, similar threat is arising: drug-resistant fungal infections. The Centers for Disease Control and Prevention considers antifungal and antimicrobial resistance to be among the world’s greatest public health challenges.

One particular type of fungal infection caused by Candida auris is escalating rapidly throughout the world. And to make matters worse, C. auris is becoming increasingly resistant to current antifungal medications, which means that if you develop a C. auris infection, the drugs your doctor prescribes may not work. “We’re effectively out of medicines,” says Thomas Walsh, founding director of the Center for Innovative Therapeutics and Diagnostics, a translational research center dedicated to solving the antimicrobial resistance problem. Walsh spoke about the challenges at a Demy-Colton Virtual Salon, one in a series of interactive discussions among life science thought leaders.

Although C. auris typically doesn’t sicken healthy people, it afflicts immunocompromised hospital patients and may cause severe infections that can lead to sepsis, a life-threatening condition in which the overwhelmed immune system begins to attack the body’s own organs. Between 30 and 60 percent of patients who contract a C. auris infection die from it, according to the CDC. People who are undergoing stem cell transplants, have catheters or have taken antifungal or antibiotic medicines are at highest risk. “We’re coming to a perfect storm of increasing resistance rates, increasing numbers of immunosuppressed patients worldwide and a bug that is adapting to higher temperatures as the climate changes,” says Prabhavathi Fernandes, chair of the National BioDefense Science Board.

Although medical professionals aren’t concerned at this point about C. auris evolving to affect healthy people, they worry that its presence in hospitals can turn routine surgeries into life-threatening calamities. “It’s coming,” says Fernandes. “It’s just a matter of time.”

An emerging global threat

“Fungi are found in the environment,” explains Fernandes, so Candida spores can easily wind up on people’s skin. In hospitals, they can be transferred from contact with healthcare workers or contaminated surfaces. Most Candida species aren’t well-adapted to our body temperatures so they aren’t a threat. C. auris, however, thrives at human body temperatures. It can enter the body during medical treatments that break the skin—and cause an infection. Overall, fungal infections cost some $48 billion in the U.S. each year. And infection rates are increasing because, in an ironic twist, advanced medical therapies are enabling severely ill patients to live longer and, therefore, be exposed to this pathogen.

The first-ever case of a C. auris infection was reported in Japan in 2009, although an analysis of Candida samples dated the earliest strain to a 1996 sample from South Korea. Since then, five separate varieties – called clades, which are similar to strains among bacteria – developed independently in different geographies: South Asia, East Asia, South Africa, South America and, recently, Iran. So far, C. auris infections have been reported in 35 countries.

In the U.S., the first infection was reported in 2016, and the CDC started tracking it nationally two years later. Since then, 5,654 cases have been reported to the CDC, which only tracks U.S. data.

What’s more notable than the number of cases is their rate of increase. In 2016, new cases increased by 175 percent and, on average, they have approximately doubled every year. From 2016 through 2022, the number of infections jumped from 63 to 2,377, a roughly 37-fold increase.
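
The growth figures above can be checked with a few lines of arithmetic. The sketch below uses only the two case counts quoted in the text (63 in 2016 and 2,377 in 2022) and assumes smooth compound growth between them, which is a simplification. The variable names are illustrative.

```python
# Case counts quoted in the text (U.S. figures attributed to the CDC).
cases_2016 = 63
cases_2022 = 2377
years_elapsed = 2022 - 2016  # six year-over-year steps

# Overall fold increase between the two endpoints.
fold_increase = cases_2022 / cases_2016

# Average annual growth factor, assuming smooth compound growth
# (a simplification: real year-to-year changes vary).
annual_growth_factor = fold_increase ** (1 / years_elapsed)

print(f"Fold increase: {fold_increase:.1f}x")
print(f"Average annual growth factor: {annual_growth_factor:.2f}x")
```

Under that assumption the average yearly factor comes out a little under 2, which is in line with the description of cases approximately doubling every year.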

“This reminds me of what we saw with epidemics from 2013 through 2020… with Ebola, Zika and the COVID-19 pandemic,” says Robin Robinson, CEO of Spriovas and founding director of the Biomedical Advanced Research and Development Authority (BARDA), which is part of the U.S. Department of Health and Human Services. These epidemics started with a hockey stick trajectory, Robinson says—a gradual growth leading to a sharp spike, just like the shape of a hockey stick.

Another challenge is that medics don’t yet have rapid diagnostic tests for fungal infections. Patients are often misdiagnosed because C. auris resembles several other easily treated fungi. Or they are diagnosed long after the infection begins, when it is harder to treat.

The problem is that existing diagnostic tests can only identify C. auris once it reaches the bloodstream. Yet, because this pathogen infects bodily tissues first, it should be possible to catch it much earlier, before it becomes life-threatening. “We have to diagnose it before it reaches the bloodstream,” Walsh says.

“We need to focus on rapid diagnostic tests that do not rely on a positive blood culture,” says John Sperzel, president and CEO of T2 Biosystems, a company specializing in diagnostics solutions. Blood cultures typically take two to three days for the concentration of Candida to become large enough to detect. The company’s novel test detects about 90 percent of Candida species within three to five hours—thanks to its ability to spot minute quantities of the pathogen in blood samples instead of waiting for them to incubate and proliferate.

Tackling the resistance challenge

The most alarming fact is that some Candida infections no longer respond to standard therapeutics. The number of cases that stopped responding to echinocandin, the first-line therapy for most Candida infections, tripled in 2020, according to a study by the CDC.

Now, each of the first four clades shows varying levels of resistance to all three commonly prescribed classes of antifungal medications: azoles, echinocandins and polyenes. For example, 97 percent of infections from C. auris Clade I are resistant to fluconazole, 54 percent to voriconazole and 30 percent to amphotericin. Nearly half are resistant to multiple antifungal drugs. Even among Clade II fungi, which have the least resistance of all the clades, 11 to 14 percent have become resistant to fluconazole.

Antifungal therapies typically target specific chemical compounds that are present on fungi’s cell membranes but not on human cells; otherwise the medicine would damage our own tissues. Fluconazole and other azole antifungals target a compound called ergosterol, preventing the fungal cells from replicating. Over the years, however, C. auris has evolved to resist these drugs, so existing antifungal medications don’t work as well anymore.

A newer class of drugs called echinocandins targets a different part of the fungal cell. “The echinocandins – like caspofungin – inhibit (a part of the fungi) involved in making glucan, which is an essential component of the fungal cell wall and is not found in human cells,” Fernandes says. New antifungal treatments are needed, she adds, but there are only a few magic bullets that will hit just the fungus and not the human cells.

Research to fight infections also has been challenged by a lack of government support. That is changing now that BARDA is requesting proposals to develop novel antifungals. “The scope includes C. auris, as well as antifungals following a radiological/nuclear emergency,” says BARDA spokesperson Elleen Kane.

The remaining challenge is the number of patients available to participate in clinical trials. Large numbers are needed, but the available patients are quite sick and often die before trials can be completed. Consequently, few biopharmaceutical companies are developing new treatments for C. auris.

ClinicalTrials.gov reports only two drugs in development for invasive C. auris infections (those that can spread throughout the body rather than localize in one particular area, like throat or vaginal infections): ibrexafungerp, by Scynexis, Inc., and fosmanogepix, by Pfizer.

Scynexis’ ibrexafungerp appears active against C. auris and other emerging, drug-resistant pathogens. The FDA recently approved it as a therapy for vaginal yeast infections and it is undergoing Phase III clinical trials against invasive candidiasis in an attempt to keep the infection from spreading.

“Ibrexafungerp is structurally different from other echinocandins,” Fernandes says, because it targets a different part of the fungus. “We’re lucky it has activity against C. auris.”

Pfizer’s fosmanogepix is in Phase II clinical trials for patients with invasive fungal infections caused by multiple Candida species. Results are showing significantly better survival rates for people taking fosmanogepix.

Although C. auris does pose a serious threat to healthcare worldwide, scientists try to stay optimistic—because they recognized the problem early enough, they might have solutions in place before the perfect storm hits. “There is a bit of hope,” says Robinson. “BARDA has finally been able to fund the development of new antifungal agents and, hopefully, this year we can get several new classes of antifungals into development.”

New elevators could lift up our access to space

A space elevator would be cheaper and cleaner than using rockets

Story by Big Think

When people first started exploring space in the 1960s, it cost upwards of $80,000 (adjusted for inflation) to put a single pound of payload into low-Earth orbit.

A major reason for this high cost was the need to build a new, expensive rocket for every launch. That really started to change when SpaceX began making cheap, reusable rockets, and today, the company is ferrying customer payloads to LEO at a price of just $1,300 per pound.

This is making space accessible to scientists, startups, and tourists who never could have afforded it previously, but the cheapest way to reach orbit might not be a rocket at all — it could be an elevator.

The space elevator

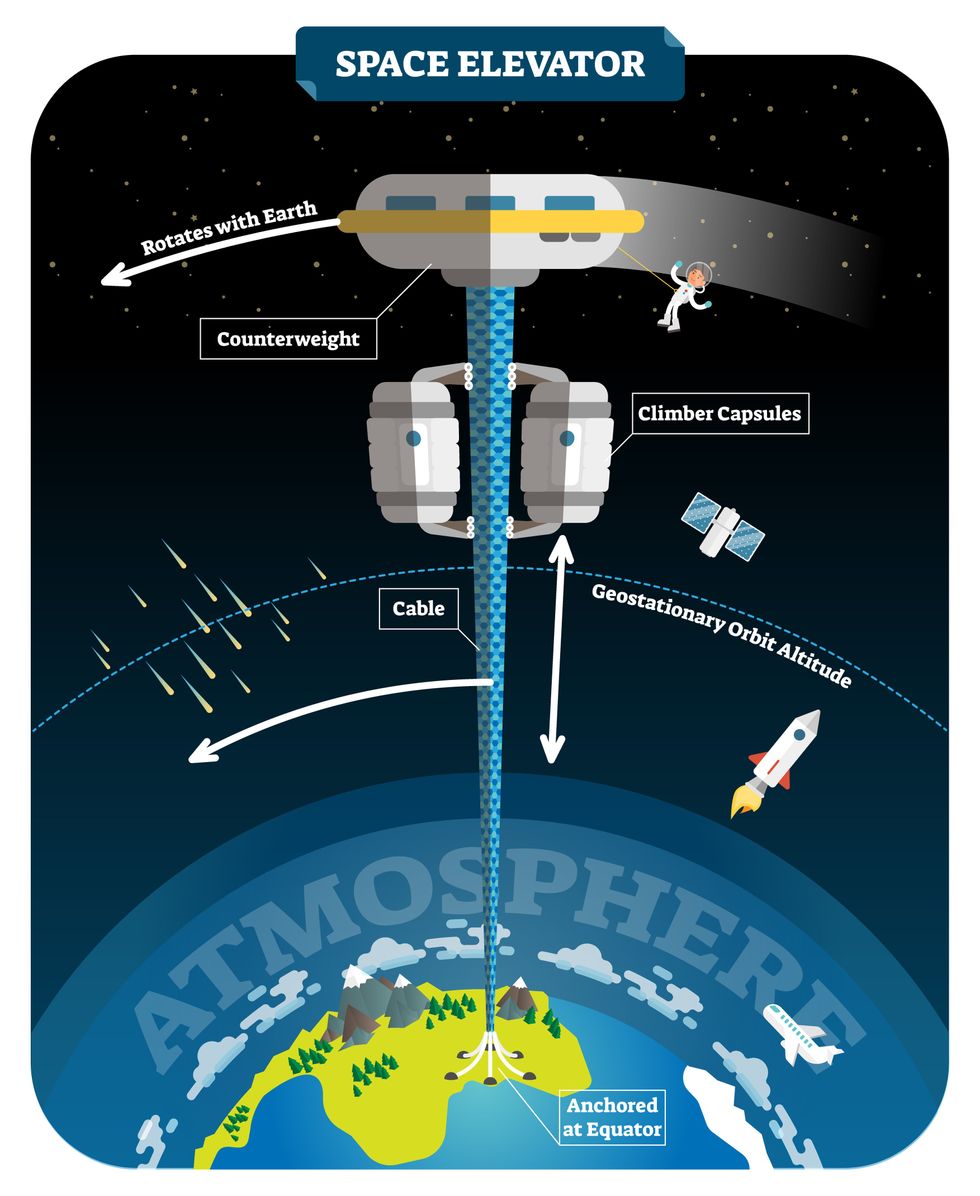

The seeds for a space elevator were first planted by Russian scientist Konstantin Tsiolkovsky in 1895, who, after visiting the 1,000-foot (305 m) Eiffel Tower, published a paper theorizing about the construction of a structure 22,000 miles (35,400 km) high.

This would provide access to geostationary orbit, an altitude where objects appear to remain fixed above Earth’s surface, but Tsiolkovsky conceded that no material could support the weight of such a tower.
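
The height such a tower would need follows from Kepler’s third law: an object appears fixed above one point on the equator when its orbital period matches one rotation of Earth (a sidereal day). Here is a minimal sketch of that calculation, using standard reference constants that are assumptions brought in for illustration and are not taken from the article:

```python
import math

# Standard reference values (assumptions, not from the article).
GM_EARTH = 3.986004418e14      # Earth's gravitational parameter, m^3/s^2
SIDEREAL_DAY_S = 86164.0905    # one rotation of Earth, in seconds
EARTH_RADIUS_KM = 6378.137     # equatorial radius, in km
KM_PER_MILE = 1.609344

# Kepler's third law for a circular orbit: T^2 = 4 * pi^2 * r^3 / GM,
# solved for the orbital radius r measured from Earth's center.
orbit_radius_m = (GM_EARTH * SIDEREAL_DAY_S**2 / (4 * math.pi**2)) ** (1 / 3)

# Subtract Earth's radius to get height above the surface.
altitude_km = orbit_radius_m / 1000 - EARTH_RADIUS_KM
altitude_miles = altitude_km / KM_PER_MILE

print(f"Geostationary altitude: {altitude_km:,.0f} km ({altitude_miles:,.0f} miles)")
```

The result lands at roughly 35,800 km, or a little over 22,000 miles, consistent with the rounded figure quoted above.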

In 1959, soon after Sputnik, Russian engineer Yuri N. Artsutanov proposed a way around this issue: instead of building a space elevator from the ground up, start at the top. More specifically, he suggested placing a satellite in geostationary orbit and dropping a tether from it down to Earth’s equator. As the tether descended, the satellite would ascend. Once attached to Earth’s surface, the tether would be kept taut, thanks to a combination of gravitational and centrifugal forces.

We could then send electrically powered “climber” vehicles up and down the tether to deliver payloads to any Earth orbit. According to physicist Bradley Edwards, who researched the concept for NASA about 20 years ago, it’d cost $10 billion and take 15 years to build a space elevator, but once operational, the cost of sending a payload to any Earth orbit could be as low as $100 per pound.
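
To put those prices side by side, the sketch below compares the per-pound figures quoted in this article and estimates how much payload it would take for the per-pound saving to add up to Edwards’ $10 billion construction estimate. The break-even figure is a rough illustration only: it ignores operating costs, financing and future changes in rocket prices, and the 1,000-pound payload is a hypothetical example.

```python
# Per-pound launch costs quoted in the article (U.S. dollars).
COST_1960S = 80_000          # early rockets, adjusted for inflation
COST_SPACEX = 1_300          # reusable rockets to low-Earth orbit
COST_ELEVATOR = 100          # Edwards' estimate for a space elevator
BUILD_COST = 10_000_000_000  # Edwards' construction estimate

payload_lb = 1_000  # hypothetical payload, for illustration only

rocket_cost = payload_lb * COST_SPACEX
elevator_cost = payload_lb * COST_ELEVATOR

# How far prices have already fallen since the 1960s.
drop_since_1960s = COST_1960S / COST_SPACEX

# Pounds of payload needed before cumulative savings equal the build cost
# (ignores operating costs, financing and price changes).
saving_per_lb = COST_SPACEX - COST_ELEVATOR
break_even_lb = BUILD_COST / saving_per_lb

print(f"Rocket: ${rocket_cost:,}  Elevator: ${elevator_cost:,}")
print(f"Price drop since the 1960s: about {drop_since_1960s:.0f}x")
print(f"Break-even payload: {break_even_lb:,.0f} lb")
```

On these simplified assumptions, the elevator would have to carry several million pounds of payload before the savings matched the construction cost.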

“Once you reduce the cost to almost a Fed-Ex kind of level, it opens the doors to lots of people, lots of countries, and lots of companies to get involved in space,” Edwards told Space.com in 2005.

In addition to the economic advantages, a space elevator would also be cleaner than using rockets — there’d be no burning of fuel, no harmful greenhouse emissions — and the new transport system wouldn’t contribute to the problem of space junk to the same degree that expendable rockets do.

So, why don’t we have one yet?

Tether troubles

Edwards wrote in his report for NASA that all of the technology needed to build a space elevator already existed except the material needed to build the tether, which needs to be light but also strong enough to withstand all the huge forces acting upon it.

The good news, according to the report, was that the perfect material — ultra-strong, ultra-tiny “nanotubes” of carbon — would be available in just two years.

“[S]teel is not strong enough, neither is Kevlar, carbon fiber, spider silk, or any other material other than carbon nanotubes,” wrote Edwards. “Fortunately for us, carbon nanotube research is extremely hot right now, and it is progressing quickly to commercial production.”

Unfortunately, he misjudged how hard it would be to synthesize carbon nanotubes: to date, no one has been able to grow one longer than 21 inches (53 cm).

Further research into the material revealed that it tends to fray under extreme stress, too, meaning even if we could manufacture carbon nanotubes at the lengths needed, they’d be at risk of snapping, not only destroying the space elevator, but threatening lives on Earth.

Looking ahead

Carbon nanotubes might have been the early frontrunner as the tether material for space elevators, but there are other options, including graphene, an essentially two-dimensional form of carbon that is already easier to scale up than nanotubes (though still not easy).

Contrary to Edwards’ report, Johns Hopkins University researchers Sean Sun and Dan Popescu say Kevlar fibers could work — we would just need to constantly repair the tether, the same way the human body constantly repairs its tendons.

“Using sensors and artificially intelligent software, it would be possible to model the whole tether mathematically so as to predict when, where, and how the fibers would break,” the researchers wrote in Aeon in 2018.

“When they did, speedy robotic climbers patrolling up and down the tether would replace them, adjusting the rate of maintenance and repair as needed, mimicking the sensitivity of biological processes,” they continued.

Astronomers from the University of Cambridge and Columbia University also think Kevlar could work for a space elevator, if we built it from the moon rather than Earth.

They call their concept the Spaceline, and the idea is that a tether attached to the moon’s surface could extend toward Earth’s geostationary orbit, held taut by the pull of our planet’s gravity. We could then use rockets to deliver payloads — and potentially people — to solar-powered climber robots positioned at the end of this 200,000+ mile long tether. The bots could then travel up the line to the moon’s surface.

This wouldn’t eliminate the need for rockets to get into Earth’s orbit, but it would be a cheaper way to get to the moon. The forces acting on a lunar space elevator wouldn’t be as strong as those acting on one extending from Earth’s surface, either, according to the researchers, opening up more options for tether materials.

“[T]he necessary strength of the material is much lower than an Earth-based elevator — and thus it could be built from fibers that are already mass-produced … and relatively affordable,” they wrote in a paper shared on the preprint server arXiv.

Some Chinese researchers, meanwhile, aren’t giving up on the idea of using carbon nanotubes for a space elevator — in 2018, a team from Tsinghua University revealed that they’d developed nanotubes that they say are strong enough for a tether.

The researchers are still working on the issue of scaling up production, but in 2021, state-owned news outlet Xinhua released a video depicting an in-development concept, called “Sky Ladder,” that would consist of space elevators above Earth and the moon.

After riding up the Earth-based space elevator, a capsule would fly to a space station attached to the tether of the moon-based one. If the project could be pulled off — a huge if — China predicts Sky Ladder could cut the cost of sending people and goods to the moon by 96 percent.

The bottom line

In the more than 120 years since Tsiolkovsky looked at the Eiffel Tower and thought way bigger, tremendous progress has been made developing materials with the properties needed for a space elevator. At this point, it seems likely we could one day have a material that can be manufactured at the scale needed for a tether, but by the time that happens, the need for a space elevator may have evaporated.

Several aerospace companies are making progress with their own reusable rockets, and as those join the market with SpaceX, competition could cause launch prices to fall further.

California startup SpinLaunch, meanwhile, is developing a massive centrifuge to fling payloads into space, where much smaller rockets can propel them into orbit. If the company succeeds (another one of those big ifs), it says the system would slash the amount of fuel needed to reach orbit by 70 percent.

Even if SpinLaunch doesn’t get off the ground, several groups are developing environmentally friendly rocket fuels that produce far fewer (or no) harmful emissions. More work is needed to efficiently scale up their production, but overcoming that hurdle will likely be far easier than building a 22,000-mile (35,400-km) elevator to space.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.