The New Prospective Parenthood: When Does More Info Become Too Much?

Obstetric ultrasound of a fourth-month fetus.

Peggy Clark was 12 weeks pregnant when she went in for a nuchal translucency (NT) scan to see whether her unborn son had Down syndrome. The sonographic scan measures how much fluid has accumulated at the back of the baby's neck: the more fluid, the higher the likelihood of an abnormality. The technician said the baby was in such an odd position, the test couldn't be done. Clark, whose name has been changed to protect her privacy, was told to come back in a week and a half to see if the baby had moved.

"It was like the baby was saying, 'I don't want you to know,'" she recently recalled.

When they went back, they found the baby had a thickened neck. It's just one factor in identifying Down's, but it's a strong indication. At that point, she was 13 weeks and four days pregnant. She went to the doctor the next day for a blood test. It took another two weeks for the results, which again came back positive, though there was still a 0.3% margin of error. Clark said she knew she wanted to terminate the pregnancy if the baby had Down's, but she didn't want the guilt of knowing there was a small chance the tests were wrong. At that point, it was too late for chorionic villus sampling (CVS), in which chorionic villus cells are removed from the placenta and tested. And it was too early for an amniocentesis, which isn't done until between 14 and 20 weeks of the pregnancy. So she says she had to sit and wait, calling those few weeks "brutal."

By the time they did the amnio, she was already nearly 18 weeks pregnant and was getting really big. When that test also came back positive, she made the anguished decision to end the pregnancy.

Now, three years after Clark's painful experience, a newer form of prenatal testing routinely gives would-be parents, especially women over 35, more information much earlier on. As early as nine weeks into their pregnancies, women can have a simple blood test to determine if there are abnormalities in the DNA of chromosome 21, which indicate Down syndrome, as well as in chromosomes 13 and 18. Using next-generation sequencing technologies, the test examines fragments of fetal DNA circulating in the mother's blood, which eliminates the risks of drawing fluid or tissue directly from around the fetus or from the placenta.

"Finding out your baby has Down syndrome at 11 or 12 weeks is much easier for parents to make any decision they may want to make, as opposed to 16 or 17 weeks," said Dr. Leena Nathan, an obstetrician-gynecologist in UCLA's healthcare system. "People are much more willing or able to perhaps make a decision to terminate the pregnancy."

But with the growing sophistication of prenatal tests, it seems that the more questions are answered, the more new ones arise--questions that previous generations have never had to face. And as genomic sequencing improves in its predictive accuracy at the earliest stages of life, the challenges only stand to increase. Imagine, for example, learning your child's lifetime risk of breast cancer when you are ten weeks pregnant. Would you terminate if you knew she had a 70 percent risk? What about 40 percent? Lots of hard questions. Few easy answers. Once the cost of whole genome sequencing drops low enough, probably within the next five to ten years according to experts, such comprehensive testing may become the new standard of care. Welcome to the future of prospective parenthood.

How Did We Get Here?

Prenatal testing is not new. In 1979, amniocentesis was used to detect whether certain inherited diseases had been passed on to the fetus. Through the 1980s, parents could be tested to see if they carried diseases such as Tay-Sachs, sickle cell anemia, cystic fibrosis and Duchenne muscular dystrophy. By the early 1990s, doctors could test for even more genetic diseases and the CVS test was beginning to become available.

A few years later, a technique called preimplantation genetic diagnosis (PGD) emerged, in which embryos created in a lab with sperm and harvested eggs would be allowed to grow for several days and then cells would be removed and tested to see if any carried genetic diseases. Those that weren't affected could be transferred back to the mother. Once in vitro fertilization (IVF) took off, so did genetic testing. The labs test the embryonic cells and get them back to the IVF facilities within 24 hours so that embryo selection can occur. In the case of IVF, genetic tests are done so early, parents don't even have to decide whether to terminate a pregnancy. Embryos with issues often aren't even used.

"It was a very expensive endeavor but exciting to see our ability to avoid disorders, especially for families that don't want to terminate a pregnancy," said Sara Katsanis, an expert in genetic testing who teaches at Duke University. "In one way, it's a blessing to have this information (about genetic disorders). On the other hand, it's very difficult to deal with. To make that decision about whether to terminate a pregnancy is very hard."

Just Because We Can, Does It Mean We Should?

Parents in the future may not only find out whether their child has a genetic disease but will be able to potentially fix the problem through a highly controversial process called gene editing. But because we can, does it mean we should? So far, genes have been edited in other species, but to date, the procedure has not been used on an unborn child for reproductive purposes apart from research.

"There's a lot of bioethics debate and convening of groups to try to figure out where genetic manipulation is going to be useful and necessary, and where it is going to need some restrictions," said Katsanis. She notes that it's very useful in areas like cancer research, so one wouldn't want to over-regulate it.

There are already some criteria as to which genes can be manipulated and which should be left alone, said Evan Snyder, professor and director of the Center for Stem Cells and Regenerative Medicine at Sanford Children's Health Research Center in La Jolla, Calif. He noted that genes don't stand in isolation. That is, if you modify one that causes disease, will it disrupt others? There may be unintended consequences, he added.

But gene editing of embryos may take years to become an acceptable practice, if ever, so a more pressing issue concerns the rationale behind embryo selection during IVF. Prospective parents can end up with anywhere from zero to thirty embryos from the procedure and must choose only one (rarely two) to implant. Since embryos are routinely tested now for certain diseases, and selected or discarded based on that information, should it be ethical, and legal, to make selections based on particular traits, too? To date, parents can select for gender, but no other traits. Whether trait selection becomes routine is a matter of time and business opportunity, Katsanis said. So far, the old-fashioned way of making a baby combined with the luck of the draw seems to be the preferred method for the marketplace. But that could change.

"You can easily see a family deciding not to implant a lethal gene for Tay-Sachs or Duchenne or cystic fibrosis. It becomes more ethically challenging when you make a decision to implant girls and not any of the boys," said Snyder. "And then as we get better and better, we can start assigning genes to certain skills and this starts to become science fiction."

Once a pregnancy occurs, prospective parents of all stripes will face decisions about whether to keep the fetus based on the information that increasingly robust prenatal testing will provide. What influences their decision is the crux of another ethical knot, said Snyder. A clear-cut rationale would be if the baby is anencephalic, meaning it has no brain. A harder one might be, "It's a girl, and I wanted a boy," or "The child will only be 5' 2" tall in adulthood."

"Those are the extremes, but the ultimate question is: At what point is it a legitimate response to say, 'I don't want to keep this baby?'" he said. Of course, people's responses will vary, so the bigger conundrum for society is: Where should a line be drawn, if at all? Should a woman who is within the legal scope of termination (up to around 24 weeks, though it varies by state) be allowed to terminate her pregnancy for any reason whatsoever? Or must she have a so-called "legitimate" rationale?

"As the technical dilemmas get fixed, some of the ethical dilemmas get fixed. But others arise. It's kind of like ethical whack-a-mole," Snyder said.

One of the newer moles to emerge is, if one can fix a damaged gene, for how long should it be fixed? In one child? In the family's whole line, going forward? If the editing is done in the embryo right after the egg and sperm have united and before the cells begin dividing and becoming specialized, when, say, there are just two or four cells, it will likely affect that child's entire reproductive system and thus all of that child's progeny going forward.

"This notion of changing things forever is a major debate," Snyder said. "It literally gets into metaphysics. On the one hand, you could say, well, wouldn't it be great to get rid of Cystic fibrosis forever? What bad could come of getting rid of a mutant gene forever? But we're not smart enough to know what other things the gene might be doing, and how disrupting one thing could affect this network."

As with any tool, there are risks and benefits, said Michael Kalichman, Director of the Research Ethics Program at the University of California San Diego. While we can envision diverse benefits from a better understanding of human biology and medicine, it is clear that our species can also misuse those tools – from stigmatizing children with certain genetic traits as being "less than," as in dystopian sci-fi movies like Gattaca, to judging parents for making sure their child carries or doesn't carry a particular trait.

"The best chance to ensure that the benefits of this technology will outweigh the risks," Kalichman said, "is for all stakeholders to engage in thoughtful conversations, strive for understanding of diverse viewpoints, and then develop strategies and policies to protect against those uses that are considered to be problematic."

Send in the Robots: A Look into the Future of Firefighting

Drones are just one of several new technologies that are rising to the challenge of more frequent wildfires.

April in Paris stood still. Flames engulfed the beloved Notre Dame Cathedral as the world watched, horrified, in 2019. The worst looked inevitable when firefighters were forced to retreat from the out-of-control fire.

But the Paris Fire Brigade had an ace up their sleeve: Colossus, a firefighting robot. The seemingly indestructible tank-like machine ripped through the blaze with its motorized water cannon. It was able to put out flames in places that would have been deadly for firefighters.

Firefighting is entering a new era, driven by necessity. Conventional methods of managing fires have been no match for the fiercer, more expansive fires being triggered by climate change, urban sprawl, and susceptible wooded areas.

Robots have been a game-changer. Inspired by Paris, the Los Angeles Fire Department (LAFD) was the first in the U.S. to deploy a firefighting robot in 2021, the Thermite Robotics System 3 – RS3, for short.

RS3 is a 3,500-pound turbine on a crawler—the size of a Smart car—with a 36.8 horsepower engine that can go for 20 hours without refueling. It can plow through hazardous terrain, move cars from its path, and pull an 8,000-pound object from a fire.

It can do all that while spraying 2,500 gallons of water per minute, with a rear exhaust fan clearing the smoke. At a recent trade show, RS3 was billed as equivalent to 10 firefighters. The Los Angeles Times referred to it as “a droid on steroids.”

Robots such as the Thermite RS3 can plow through hazardous terrain and pull an 8,000-pound object from a fire.

Los Angeles Fire Department

The advantage of the robot is obvious. Operated remotely, it greatly reduces an emergency responder’s exposure to danger, says Wade White, assistant chief of the LAFD. The robot can be sent into airplane fires, nuclear reactors, hazardous areas with carcinogens (think East Palestine, Ohio), or buildings where a roof collapse is imminent.

Advances for firefighters are taking many other forms as well. Fibers have been developed that make the firefighter’s coat lighter and more protective against carcinogens. New wearable devices track firefighters’ biometrics in real time so commanders can monitor their heat stress and exertion levels. A sensor patch is in development that takes readings every four seconds to detect dangerous gases such as methane and carbon dioxide. A sonic fire extinguisher is being explored that uses low-frequency sound waves to separate oxygen from the burning fuel, without unhealthy chemical compounds.

The demand for this technology is only increasing, especially with the recent rise in wildfires. In 2021, fires were responsible for 3,800 deaths and 14,700 injuries of civilians in this country. Last year, 68,988 wildfires burned 7.6 million acres. Whether the next generation of firefighting can address these new challenges could depend on special cameras, robots of the aerial variety, AI and smart systems.

Fighting fire with cameras

Another key innovation for firefighters is a thermal imaging camera (TIC) that improves visibility through smoke. “At a fire, you might not see your hand in front of your face,” says White. “Using the TIC screen, you can find the door to get out safely or see a victim in the corner.” Since these cameras were introduced in the 1990s, the price has come down enough (from $10,000 or more to about $700) that every LAFD firefighter on duty has been carrying one since 2019, says White.

TICs are about the size of a cell phone. The camera can sense movement and body heat so it is ideal as a search tool for people trapped in buildings. If a firefighter has not moved in 30 seconds, the motion detector picks that up, too, and broadcasts a distress signal and directional information to others.
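The 30-second inactivity check described above can be sketched as a simple timer. This is a minimal illustration only, not the logic of any actual TIC: the class name `InactivityAlarm`, its `update` method, and the idea of feeding it one motion reading at a time are all assumptions made for the example.

```python
class InactivityAlarm:
    """Hypothetical sketch: raise a distress flag after a period of no motion."""

    def __init__(self, timeout_s=30.0):
        # 30 seconds is the threshold mentioned in the article.
        self.timeout_s = timeout_s
        self.last_motion_s = None

    def update(self, now_s, moved):
        """Feed one reading; return True if a distress signal should be sent."""
        if moved or self.last_motion_s is None:
            # Any motion (or the very first reading) resets the timer.
            self.last_motion_s = now_s
            return False
        return now_s - self.last_motion_s >= self.timeout_s


alarm = InactivityAlarm()
readings = [(0.0, True), (10.0, False), (29.9, False), (30.0, False), (31.0, True)]
signals = [alarm.update(t, moved) for t, moved in readings]
print(signals)
```

A real device would also attach the directional information the article mentions; that part is left out here because the source does not describe how it is computed.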

To enable firefighters to operate the camera hands-free, the newest TICs can attach inside a helmet. The firefighter sees the images inside their mask.

TICs also can be mounted on drones to get a bird’s-eye, 360-degree view of a disaster or scout for hot spots through the smoke. In addition, the camera can take photos to aid arson investigations or help determine the cause of a fire.

More help from above

Firefighters prefer the term “unmanned aerial systems” (UAS) to drones to differentiate them from military use.

A UAS carrying a camera can provide aerial scene monitoring and topography maps to help fire captains deploy resources more efficiently. At night, floodlights from the drone can illuminate the landscape for firefighters. They can drop off payloads of blankets, parachutes, life preservers or radio devices for stranded people to communicate, too. And like the robot, the UAS reduces risks for ground crews and helicopter pilots by limiting their contact with toxic fumes, hazardous chemicals, and explosive materials.

“The nice thing about drones is that they perform multiple missions at once,” says Sean Triplett, team lead of fire and aviation management, tools and technology at the Forest Service.

The UAS is especially helpful during wildfires because it can track fires, get ahead of wind currents and warn firefighters of wind shifts in real time. The U.S. Forest Service also uses long endurance, solar-powered drones that can fly for up to 30 days at a time to detect early signs of wildfire. Wildfires are no longer seasonal in California – they are a year-long threat, notes Thanh Nguyen, fire captain at the Orange County Fire Authority.

In March, Nguyen’s crew deployed a drone to scope out a huge landslide following torrential rains in San Clemente, CA. Emergency responders used photos and videos from the drone to survey the evacuated area, enabling them to stay clear of ground on the hillside that was still sliding.

Improvements in drone batteries are enabling them to fly for longer with heavier payloads. Experts predict we’ll see swarms of drones dropping water and fire retardant on burning buildings and forests in the near future.

AI to the rescue

The biggest peril for a firefighter is often what they don’t see coming. Flashovers are a leading cause of firefighter deaths, for example. They occur when flammable materials in an enclosed area ignite almost instantaneously. Or dangerous backdrafts can happen when a firefighter opens a window or door; the air rushing in can ignite a fire without warning.

The Fire Fighting Technology Group at the National Institute of Standards and Technology (NIST) is developing tools and systems to predict these potentially lethal events with computer models and artificial intelligence.

Partnering with other institutions, NIST researchers developed the Flashover Prediction Neural Network (FlashNet) after looking at common house layouts and running sets of scenarios through a machine-learning model. In the lab, FlashNet was able to predict a flashover 30 seconds before it happened with 92.1% success. When ready for release, the technology will be bundled with sensors that are already installed in buildings, says Anthony Putorti, leader of the NIST group.

The NIST team also examined data from hundreds of backdrafts as a basis for a machine-learning model to predict them. In testing chambers the model predicted them correctly 70.8% of the time; accuracy increased to 82.4% when measures of backdrafts were taken in more positions at different heights in the chambers. Developers are working on how to integrate the AI into a small handheld device that can probe the air of a room through cracks around a door or through a created opening, Putorti says. This way, the air can be analyzed with the device to alert firefighters of any significant backdraft risk.

Early wildfire detection technologies based on AI are in the works, too. The Forest Service predicts the acreage burned each year during wildfires will more than triple in the next 80 years. By gathering information on historic fires, weather patterns, and topography, says White, AI can help firefighters manage wildfires before they grow out of control and create effective evacuation plans based on population data and fire patterns.

The future is connectivity

We are in our infancy with “smart firefighting,” says Casey Grant, executive director emeritus of the Fire Protection Research Foundation. Grant foresees a new era of cyber-physical systems for firefighters—a massive integration of wireless networks, advanced sensors, 3D simulations, and cloud services. To enhance teamwork, the system will connect all branches of emergency responders—fire, emergency medical services, law enforcement.

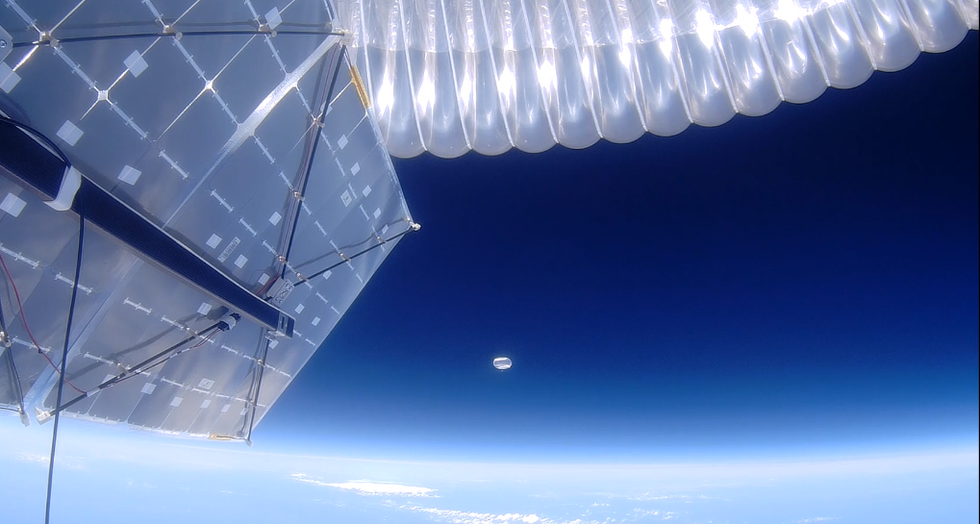

FirstNet (First Responder Network Authority) now provides a nationwide high-speed broadband network with 5G capabilities for first responders through a terrestrial cell network. For battling wildfires, however, the Forest Service needed an alternative because crews don’t always have access to a power source. In 2022, the agency contracted with Aerostar for a high altitude balloon (60,000 feet up) that can extend cell phone power and LTE. “It puts a bubble of connectivity over the fire to hook in the internet,” Triplett explains.

A high altitude balloon, 60,000 feet high, can extend cell phone power and LTE, putting a "bubble" of internet connectivity over fires.

Courtesy of USDA Forest Service

Advances in harvesting, processing and delivering data will improve safety and decision-making for firefighters, Grant sums up. Smart systems may eventually calculate fire flow paths and make recommendations about the best ways to navigate specific fire conditions. NIST’s plan to combine FlashNet with sensors is one example.

The biggest challenge is developing firefighting technology that can work across multiple channels (federal, state, local and tribal systems, as well as fire, police and other emergency services) in any location, says Triplett. “When there’s a wildfire, there are no political boundaries,” he says. “All hands are on deck.”

New device can diagnose concussions using AI

A new test called the EyeBOX could provide a more objective, and portable, tool to measure whether people have concussions in stadiums and hospitals.

For a long time after Mary Smith hit her head, she was not able to function. Test after test came back normal, so her doctors ruled out a concussion, but she knew something was wrong. Finally, when she took a test with a novel EyeBOX device, recently approved by the FDA, she learned she indeed had been dealing with the aftermath of a concussion.

“I felt like even my husband and doctors thought I was faking it or crazy,” recalls Smith, who preferred not to disclose her real name. “When I took the EyeBOX test it showed that my eyes were not moving together and my BOX score was abnormal.” To her diagnosticians, scientists at the Minneapolis-based company Oculogica who developed the EyeBOX, these markers were concussion signs. “I cried knowing that finally someone could figure out what was wrong with me and help me get better,” she says.

Concussion affects around 42 million people worldwide. While it’s increasingly common in the news because of sports injuries, anything that causes damage to the head, from a fall to a car accident, can result in a concussion. The sudden blow or jolt can disrupt the normal way the brain works. In the immediate aftermath, people may suffer from headaches, lose consciousness and experience dizziness, confusion and vomiting. Some recover but others have side effects that can last for years, particularly affecting memory and concentration.

There is no simple standard-of-care test to confirm a concussion or rule it out. Nor do concussions appear on MRI and CT scans. Instead, medical professionals use more indirect approaches that test symptoms of concussions, such as assessments of patients’ learning and memory skills, ability to concentrate and problem-solving. They also look at balance and coordination. Most tests are in the form of questionnaires or symptom checklists. Consequently, they have limitations, can be biased and may miss a concussion or produce a false positive. Some people suspected of having a concussion may ordinarily have difficulties with literacy and problem-solving tests because of language challenges or education levels.

Another problem with current tests is that patients, particularly soldiers who want to return to combat and athletes who would like to keep competing, could try to hide their symptoms to avoid being diagnosed with a brain injury. Trauma physicians who work with concussion patients need a tool that is more objective and consistent.

“The importance of having an objective measurement tool for the diagnosis of concussion is of great importance,” says Douglas Powell, associate professor of biomechanics at the University of Memphis, with research interests in sports injury and concussion. “While there are a number of promising systems or metrics, we have yet to develop a system that is portable, accessible and objective for use on the sideline and in the clinic. The EyeBOX may be able to address these issues, though time will be the ultimate test of performance.”

The EyeBOX as a window inside the brain

Using eye movements to diagnose a concussion has emerged as a promising technique since around 2010. Oculogica combined eye movements with AI to create the EyeBOX, an unbiased, objective diagnostic tool.

“What’s so great about this type of assessment is it doesn’t rely on the patient's education level, willingness to follow instructions or cooperation,” says Uzma Samadani, a neurosurgeon and brain injury researcher at the University of Minnesota, who founded Oculogica. “You can’t game this. It assesses functions that are prompted by your brain.”

In 2010, Samadani was working on a clinical trial to improve the outcome of brain injuries. The team needed some way to measure if seriously brain injured patients were improving. One thing patients could do was watch TV. So Samadani designed and patented an AI-based algorithm that tracks the relationship between eye movement and concussion.

The EyeBOX test requires patients to watch movie or music clips for 220 seconds. An eye tracking camera records subconscious eye movements, tracking eye positions 500 times per second as patients watch the video. It collects over 100,000 data points. The device then uses AI to assess whether there are any disruptions to the normal way the eyes move.
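The figures above are easy to check: 220 seconds at 500 samples per second gives 110,000 position samples, consistent with "over 100,000 data points." The short Python sketch below does that arithmetic and then shows, on synthetic data, one simple way to quantify whether two eyes move together. The `disconjugacy` function is a toy metric invented for this illustration (the variance of the left-minus-right position difference); it is not Oculogica's algorithm, which the article does not describe in detail.

```python
import math

DURATION_S = 220       # clip length from the article
SAMPLE_RATE_HZ = 500   # eye-position samples per second from the article


def total_samples(duration_s, rate_hz):
    """Number of position samples collected over the whole clip."""
    return duration_s * rate_hz


def disconjugacy(left, right):
    """Toy metric (an assumption): variance of the left-minus-right difference.

    Zero means the two traces move in lockstep; larger means less coordinated.
    """
    diffs = [l - r for l, r in zip(left, right)]
    mean = sum(diffs) / len(diffs)
    return sum((d - mean) ** 2 for d in diffs) / len(diffs)


n = total_samples(DURATION_S, SAMPLE_RATE_HZ)
times = [i / SAMPLE_RATE_HZ for i in range(n)]

# Synthetic horizontal positions: one eye pair in lockstep, one pair drifting apart.
left = [math.sin(t) for t in times]
right_coordinated = list(left)
right_uncoordinated = [math.sin(t) + 0.2 * math.sin(3 * t) for t in times]

print(n)
print(disconjugacy(left, right_coordinated))
print(disconjugacy(left, right_uncoordinated))
```

The coordinated pair scores exactly zero and the uncoordinated pair scores above zero, which is the kind of contrast a classifier could be trained on.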

Cranial nerves are responsible for transmitting information between the brain and the body. Many are involved in eye movement. Pressure caused by a concussion can affect how these nerves work. So tracking how the eyes move can indicate if there’s anything wrong with the cranial nerves and where the problem lies.

If someone is healthy, their eyes should be able to focus on an object and follow movement, and both eyes should be coordinated with each other. The EyeBOX can detect abnormalities. For example, if a patient’s eyes are coordinated but they are not moving as they should, that indicates issues in the central brain stem, while only one eye moving abnormally suggests that a particular nerve section is affected.

Uzma Samadani with the EyeBOX device

Courtesy Oculogica

“The EyeBOX is a monitor for cranial nerves,” says Samadani. “Essentially it’s a form of digital neurological exam.” Several other eye-tracking techniques already exist, but they rely on subjective self-reported symptoms. Many also require a baseline, a measure of how patients reacted when they were healthy, which often isn’t available.

VOMS (Vestibular Ocular Motor Screen) is one of the most accurate diagnostic tests used in clinics in combination with other tests, but it is subjective. It involves a therapist getting patients to move their head or eyes as they focus or follow a particular object. Patients then report their symptoms.

The King-Devick test measures how fast patients can read numbers and compares it to a baseline. Since it is mainly used for athletes, the initial test is completed before the season starts. But participants can manipulate it. It also cannot be used in emergency rooms because the majority of patients wouldn’t have prior baseline tests.

Unlike these tests, EyeBOX doesn’t use a baseline and is objective because it doesn’t rely on patients’ answers. “It shows great promise,” says Thomas Wilcockson, a senior lecturer in psychology at Loughborough University, who is an expert in using eye tracking techniques in neurological disorders. “Baseline testing of eye movements is not always possible. Alternative measures of concussion currently in development, including work with VR headsets, seem to currently require it. Therefore the EyeBOX may have an advantage.”

A technology that’s still evolving

In their last clinical trial, Oculogica used the EyeBOX to test 46 patients who had concussion and 236 patients who did not. The sensitivity of the EyeBOX, or the probability of it correctly identifying the patient’s concussion, was 80.4 percent. Meanwhile, the test accurately ruled out a concussion in 66.1 percent of cases. This is known as its specificity score.
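To make those two percentages concrete, the sketch below back-calculates the patient counts they imply and recomputes sensitivity and specificity from them. The counts are derived by rounding and are an inference, not figures reported in the article. The predictive values at the end are likewise only illustrative, since they depend on the mix of concussed and non-concussed patients in this particular trial.

```python
N_CONCUSSED = 46        # patients with concussion (from the article)
N_NOT_CONCUSSED = 236   # patients without concussion (from the article)
SENSITIVITY = 0.804     # reported: 80.4 percent
SPECIFICITY = 0.661     # reported: 66.1 percent


def implied_counts(n_pos, n_neg, sensitivity, specificity):
    """Round the reported rates back to whole patients (an inference)."""
    tp = round(n_pos * sensitivity)   # concussions the test caught
    tn = round(n_neg * specificity)   # non-concussions correctly ruled out
    return {"tp": tp, "fn": n_pos - tp, "tn": tn, "fp": n_neg - tn}


counts = implied_counts(N_CONCUSSED, N_NOT_CONCUSSED, SENSITIVITY, SPECIFICITY)

sens = counts["tp"] / (counts["tp"] + counts["fn"])
spec = counts["tn"] / (counts["tn"] + counts["fp"])
# Share of positive results that were real concussions, in this sample only.
ppv = counts["tp"] / (counts["tp"] + counts["fp"])
# Share of negative results that were truly concussion-free, in this sample only.
npv = counts["tn"] / (counts["tn"] + counts["fn"])

print(counts)
print(round(sens * 100, 1), round(spec * 100, 1))
print(round(ppv * 100, 1), round(npv * 100, 1))
```

Under these assumptions, about 37 of 46 concussions were detected and about 80 of 236 patients without a concussion were flagged anyway.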

While the team is working on improving the numbers, experts who treat concussion patients find the device promising. “I strongly support their use of eye tracking for diagnostic decision making,” says Douglas Powell. “But for diagnostic tests, we would prefer at least one of the sensitivity or specificity values to be greater than 90 percent.” Powell compares EyeBOX with the Buffalo Concussion Treadmill Test, which has sensitivity and specificity values of 73 and 78 percent, respectively. The VOMS also has shown greater accuracy than the EyeBOX, at least for now. Still, EyeBOX is competitive with the best diagnostic testing available for concussion and Powell hopes that its detection prowess will improve. “I anticipate that the algorithms being used by Oculogica will be under continuous revision and expect the results will improve within the next several years.”

Powell thinks the EyeBOX could be an important complement to other concussion assessments.

“The Oculogica product is a viable diagnostic tool that supports clinical decision making. However, concussion is an injury that can present with a wide array of symptoms, and the use of technology such as the Oculogica should always be a supplement to patient interaction.”

Ioannis Mavroudis, a consultant neurologist at Leeds Teaching Hospital, agrees that the EyeBOX has promise, but cautions that concussions are too complex to rely on the device alone. For example, not all concussions affect how eyes move. “I believe that it can definitely help, however not all concussions show changes in eye movements. I believe that if this could be combined with a cognitive assessment the results would be impressive.”

The Oculogica team submitted their clinical data for FDA approval and received it in 2018. Now, they’re working to bring the test to the commercial market and using the device clinically to help diagnose concussions for clients. They also want to look at other areas of brain health in the next few years. Samadani believes that the EyeBOX could possibly be used to detect diseases like multiple sclerosis or other neurological conditions. “It’s a completely new way of figuring out what someone’s neurological exam is and we’re only beginning to realize the potential,” says Samadani.

One of Samadani’s biggest aspirations is to help reduce inequalities in healthcare because of skin color and other factors like money or language barriers. From that perspective, the EyeBOX’s greatest potential could be in emergency rooms. It can help diagnose concussions alongside the questionnaires, assessments and symptom checklists currently used in emergency departments. Unlike these more subjective tests, EyeBOX can produce an objective analysis of brain injury through AI when patients are admitted and assessed, unrelated to their socioeconomic status, education, or language abilities. Studies suggest that there are racial disparities in how patients with brain injuries are treated, such as how quickly they're assessed and get a treatment plan.

“The color of your skin can have a huge impact in how quickly you are triaged and managed for brain injury,” says Samadani. “As a result of that, people of color have significantly worse outcomes after traumatic brain injury than people who are white. The EyeBOX has the potential to reduce inequalities,” she explains.

“If you had a digital neurological tool that you could screen and triage patients on admission to the emergency department you would potentially be able to make sure that everybody got the same standard of care,” says Samadani. “My goal is to change the way brain injury is diagnosed and defined.”