We Pioneered a Technology to Save Millions of Poor Children, But a Worldwide Smear Campaign Has Blocked It

On left, a picture of white rice next to Golden Rice, and on right, a girl who lost one eye due to vitamin A deficiency.

In a few weeks, the three of us will have been working together for 20 years. Our project has been independently praised as one of the most influential projects of the last 50 years.

The project's objectives have been admired by some and vilified by others. It has directly involved teams of highly motivated people from a handful of nations, in both the private and public sectors. A book, dedicated to the three of us, has been written about our work. Nevertheless, success has so far eluded us all. The story of our thwarted efforts is a tragedy that we hope will soon, finally, reach a milestone of potentially profound significance for humanity.

So, what have we been working on, and why haven't we succeeded yet?

Food: everybody needs it, and many are fortunate enough to have enough, even too much of it. Food is a highly emotional subject on every continent and in every culture. For a healthy life our food has to provide energy, as well as, in very small amounts, minerals and vitamins. A varied diet, easily achieved and common in industrialised countries, provides everything.

But poor people in countries where rice is grown often eat little else. White rice only provides energy: no minerals or vitamins. And the lack of one of the vitamins, vitamin A, is responsible for killing around 4,500 poor children every day. Lack of vitamin A is the biggest killer of children, and also the main cause of irreversible childhood blindness.

Our project is about fixing this one dietary deficiency – vitamin A – in this one crop – rice – for this one group of people. It is a huge group though: half of the world's population live by eating a lot of rice every day. Two of us (PB & IP) figured out how to make rice produce a source of vitamin A, and the rice becomes a golden color instead of white. The source is beta-carotene, which the human body converts to vitamin A. Beta-carotene is what makes carrots orange. Our rice is called "Golden Rice."

The technology has been donated to assist rice eaters who suffer from vitamin A deficiency ('VAD'). As a result, Golden Rice will cost no more than white rice, there will be no restrictions on the small farmers who grow it, and there will be nothing extra to pay for the additional nutrition. Very small amounts of beta-carotene help alleviate VAD, and even the earliest version of Golden Rice, which contained less beta-carotene than today's Golden Rice, would have helped. So far, though, no small farmer has been allowed to grow it. What happened?

To create Golden Rice, it was necessary to precisely add two genes to the 30,000 genes normally present in rice plants. One of the genes is from maize, also known as corn, and the other from a commonly eaten soil bacterium. The only difference from white rice is that Golden Rice contains beta-carotene.

It has been proven to be safe for people and the environment, and eating only small quantities of Golden Rice will combat VAD, with no chance of overdosing. All current Golden Rice results from a single introduction of these two genes in 2004. But because that method was used, once, 15 years ago, Golden Rice is a 'GMO' ('genetically modified organism'). The enzymes used to make bread, cheese, beer and wine, and the insulin that keeps many diabetics alive, are all produced using GMOs too.

The first GMO crops were created by agribusiness companies. Suspicion of the technology merged with suspicion of commercial motives, but only for crop applications of GMO technology, not for enzymes or pharmaceuticals. Activists motivated by these suspicions succeeded in getting the 'precautionary principle' incorporated into an international treaty, the Cartagena Protocol, which has been ratified by 166 countries and the European Union.

This protocol is the basis of national rules governing the introduction of GMO crops in every signatory country. Government regulators in, and for, each country must agree before a GMO crop can be 'registered' for public use in that country. Regulators in Bangladesh and the Philippines are currently considering whether to allow the release of Golden Rice.

The Cartagena Protocol obliges the regulators in each country to consider all possible risks, and to take no account of any possible benefits. Anti-GMO activists' initial concerns were principally about the environment. For that reason, responsibility for regulating GMO crops usually rests with the Ministry of the Environment, not the Ministry of Health or the Ministry of Agriculture. This is true even for Golden Rice, a public health project delivered through agriculture.

Before Golden Rice was created, activists discovered that stoking fear of GMO food crops from 'multinational agribusinesses' was very effective at generating donations from a public that knew little about food technology and production. That source of emotionally charged donations would dry up if Golden Rice were proven to save sight and lives, because Golden Rice represented the opposite of all the tropes used in anti-GMO campaigns.

Golden Rice was created to deliver a consumer benefit, not a profit for multinational agribusiness or anyone else. The technology originated in the public sector and is being delivered through the public sector. It is entirely altruistic in its motivations, which activists find impossible to accept. So, the activists believed, suspicion of Golden Rice had to be amplified, and Golden Rice had to be stopped: "If we lose the Golden Rice battle, we lose the GMO war."

Activism continues to this day. And any Environment Ministry, with no responsibility for public health or agriculture, and of course an interest in avoiding controversy about its regulatory decisions, is vulnerable to such activism.

The anti-GMO crop campaigns, and especially the anti-Golden Rice campaigns, have been extraordinarily effective. If so much government regulation is required, surely there must be something to be suspicious about: 'There is no smoke without fire.' The suspicion pervades research institutions and universities, the publishers of scientific journals, the World Health Organization and UNICEF. Even the most scientifically literate fear entanglement in activist-stoked public controversy.

The equivalent of 13 jumbo jets full of children crashes into the ground every day and kills them all, because of VAD. Yet Golden Rice, a solution developed by national scientists in the countries where VAD is endemic, is ignored. It is ignored because people fear controversy, and because poor children's deaths can be ignored without controversy.

The tide is turning, however. A total of 151 Nobel Laureates, a very significant proportion of all Nobel Laureates, have called on the UN, the governments of the world, and Greenpeace to stop their unfounded vilification of GMO crops in general and Golden Rice in particular. A recent article about Golden Rice commented, "What shocks me is that some activists continue to misrepresent the truth about the rice. The cynic in me expects profit-driven multinationals to behave unethically, but I want to think that those voluntarily campaigning on issues they care about have higher standards."

The recently published book has laid out the frustrating saga in plain detail. All the publicity described above may be starting to change the balance of where controversy lies. Perhaps more controversy lies in not taking scientifically based regulatory decisions than in taking them.

But until those decisions are taken, and while there remains a chance of frustrating the objectives of the Golden Rice project, the antagonism will continue. And despite a solution so close at hand, VAD-induced death and blindness, and the misery of affected families, will also continue.

© The Authors 2019. This article is distributed under the terms of the Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver applies to the data made available in this article, unless otherwise stated.

Facial Recognition Can Reduce Racial Profiling and False Arrests

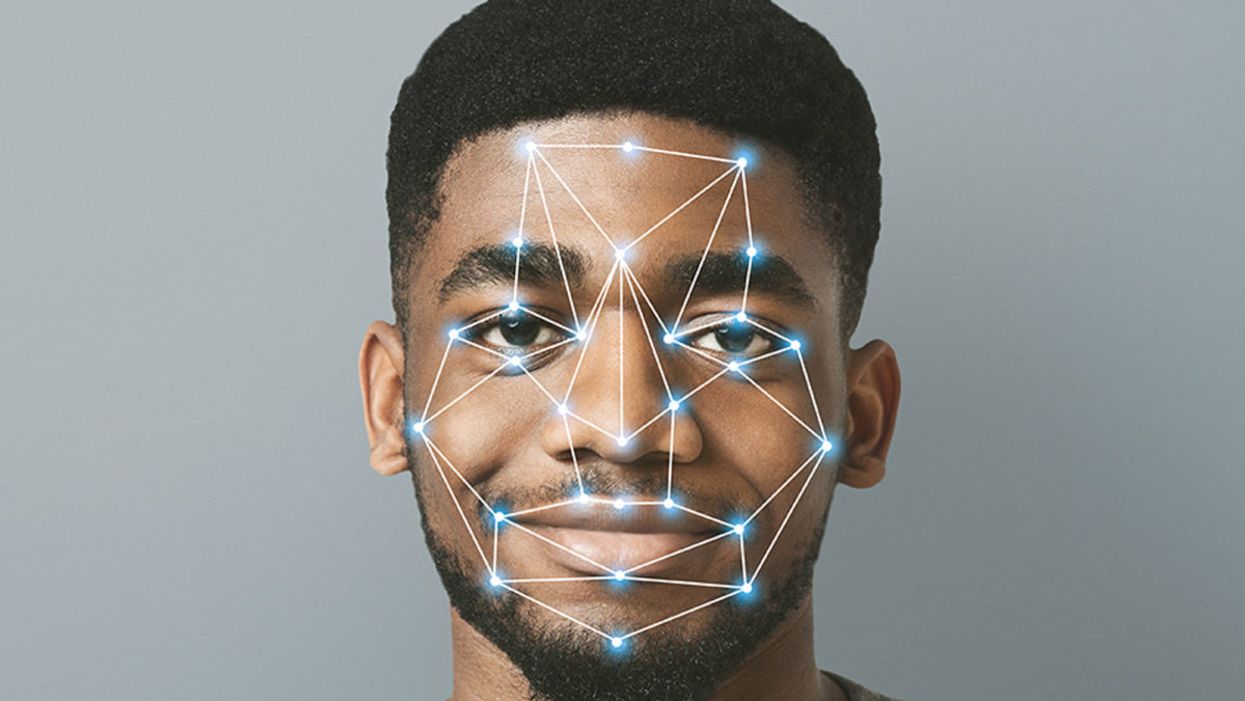

The use of face recognition technology is expanding exponentially right now.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

Opposing facial recognition technology has become an article of faith for civil libertarians. Many who supported the bans in cities like San Francisco and Oakland have declared the technology to be inherently racist and abusive.

I have spent my career as a criminal defense attorney and a civil libertarian, and I do not fear this technology. Indeed, I see it as positive so long as it is appropriately regulated and controlled.

We are living at the beginning of a biometric age, in which technology uses our physical or biological characteristics for a variety of products and services. That technology holds great promise as well as great risks. The greatest danger, however, would be to categorically oppose this technology and pretend that it will simply go away.

This age is driven as much by consumer demand as by government demand. Living in denial may be emotionally appealing, but it will only hasten the creation of a post-privacy world. If we do not address this emerging technology, our movements in public will increasingly result in instant recognition and even tracking. That is the kind of fishbowl society that strips away any expectation of privacy in our interactions and associations.

The biometrics field is expanding exponentially, largely because of the popularity of consumer products using facial recognition technology (FRT), from the iPhone's Face ID to shopping programs that recognize customers.

But the privacy community is losing this battle because it is relying on privacy rationales and doctrines forged in earlier eras of electronic surveillance. Just as generals are often accused of planning to fight the last war, civil libertarians sometimes cling to past models despite their decreasing relevance in the current world.

I see FRT as having positive implications that are worth pursuing. When properly used, biometrics can actually enhance privacy interests. They can even reduce racial profiling by cutting down on false arrests and on the warrantless "patdowns" allowed by the Supreme Court. Bans not only deny police a technology widely used by businesses, but also return police to the highly flawed default of "eyeballing" suspects. That approach has a considerably higher error rate than the top FRT programs.

A study in Australia showed that passport officers who had taken photographs of subjects in ideal conditions nonetheless had high error rates when identifying them shortly afterward, including a false acceptance rate of 14 percent. Currently, officers stop suspects based on their memory of a photograph seen days or weeks earlier. They are often wrong and stop a great number of suspects in the hope of finding a wanted felon. The best FRT programs achieve an astonishing accuracy rate, though real-world implementation has challenges that must be addressed.

One legitimate concern arose from early studies that showed higher recognition error rates for certain groups, particularly African American women. An MIT study that documented this error rate prompted major improvements in the algorithms. It also prompted training changes that greatly reduced the frequency of errors. The issue remains a concern, but there is nothing inherently racist in algorithms. They are sets of computer instructions that isolate and process data within the parameters and conditions set by their creators.

To be sure, there is room for improvement in some algorithms. Tests performed by the American Civil Liberties Union (ACLU) reportedly showed only an 80 percent accuracy rate when Amazon's "Rekognition" system compared mug shots to pictures of members of Congress. A more recent ACLU test reportedly showed the same 80 percent rate when it ran the same comparison against members of the California legislature.

However, different algorithms are available with differing levels of performance. Moreover, these products can be configured with different match-confidence thresholds. In fact, the top algorithms tested by the National Institute of Standards and Technology (NIST) achieved accuracy rates greater than 99 percent.

Assuming a top-performing algorithm is used, the result could be highly beneficial for civil liberties compared with the alternative of "eyeballing" suspects. Consider the manhunt after the Boston Marathon bombing, when police declared a "containment zone" and forced families into the street with their hands in the air.

The suspect, Dzhokhar Tsarnaev, moved around Boston and was ultimately found outside the "containment zone" once authorities lifted their near-martial-law measures. He was caught on some surveillance systems but not identified. FRT can help law enforcement avoid time-consuming area searches and the questionable practice of forcing people out of their homes to physically examine them.

If we are to avoid a post-privacy world, we will have to redefine what we are trying to protect and reconceive how we hope to protect it. In my view, the greatest threat of biometric technologies is to democratic values. Authoritarian nations like China have made huge investments in FRT precisely because they know that the threat of recognition in public deters citizens from associating or interacting with protesters or dissidents. Recognition changes conduct. That chilling effect is what we have to worry about most.

Conventional privacy doctrines do not offer much protection. The very concept of "public privacy" is treated as something of an oxymoron by courts. Public acts and associations are treated as lacking any reasonable expectation of privacy. In the same vein, the right to anonymity is not a strong avenue for protection. We are not living in an anonymous world anymore.

Consumers want products like FaceFind, which links their images with others across social media. They like "frictionless" transactions and authentications using faceprints. Despite the hyperbole in places like San Francisco, civil libertarians will not succeed in getting that cat to walk backwards.

The basis for biometric privacy protection should not be focused on anonymity, but rather obscurity. You will be increasingly subject to transparency-forcing technology, but we can legislatively mandate ways of obscuring that information. That is the objective of the Biometric Privacy Act that I have proposed in recent research. However, no such comprehensive legislation has passed through Congress.

We also need to recognize that FRT has many beneficial uses. Biometric guns could reduce accidents and criminal misuse. New forms of authentication using FRT and other biometric programs could reduce identity theft.

And, yes, FRT could help protect against unnecessary police stops or false arrests. Finally, and not insignificantly, this technology could help prevent serious crimes, such as terrorist attacks, and help capture dangerous felons. The ability to spot fraudulent entries at airports or to recognize a felon in flight has obvious benefits for all citizens.

We can live and thrive in a biometric era. However, we will need to bring together civil libertarians with business and government experts if we are going to control this technology rather than have it control us.

[Editor's Note: Read the opposite perspective here.]

The Case for an Outright Ban on Facial Recognition Technology

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company writing the rules that govern face surveillance technology, even though it is the largest company in the world profiting from its spread.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy, look at, or even think of buying, is recorded. All of it can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway, only faster: compare footage or photos against mug shots, driver's licenses, or other databases. And they're not wrong. But the speed and automation of artificial intelligence-powered surveillance fundamentally change its impact on our society. Being able to do something exponentially faster, with significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do. Those companies then give governments easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. Significant backlash has also emerged in the U.K., within the European Parliament, and in Sweden. Sweden recently banned the technology's use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not use facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or who are not perceived by a computer to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

[Editor's Note: Read the opposite perspective here.]