New tech for prison reform spreads to 11 states

The U.S. has the highest incarceration rate in the world, costing $182 billion per year, partly because its antiquated data systems often fail to identify people who should be released. A tech nonprofit is trying to change that.

A new nonprofit called Recidiviz is using data technology to reduce the size of the U.S. criminal justice system. The bi-coastal company (SF and NYC) is currently working with 11 states to improve their systems and, so far, has helped remove nearly 69,000 people from the system: people left floundering in jail or on parole when they should have been released.

“The root cause is fragmentation,” says Clementine Jacoby, 31, a software engineer who worked at Google before co-founding Recidiviz in 2019. In the 1970s and 80s, the U.S. built a series of disconnected data systems, and this patchwork is still being used by criminal justice authorities today. It requires parole officers to manually calculate release dates, leading to errors in many cases. “[They] have done everything they need to do to earn their release, but they're still stuck in the system,” Jacoby says.

Recidiviz has built a platform that connects the different databases, with the goal of identifying people who are already qualified for release but remain behind bars or on supervision. “Think of Recidiviz like Google Maps,” says Jacoby, who worked on Maps when she was at the tech giant. Google Maps takes in data from different sources (satellite images, street maps, local business data) and organizes it into one easy view. “Recidiviz does something similar with criminal justice data,” Jacoby explains, “making it easy to identify people eligible to come home or to move to less intensive levels of supervision.”

People like Jacoby’s uncle. His experience with incarceration is what inspired her passion for criminal justice reform in the first place.

The problems are vast

The U.S. has the highest incarceration rate in the world, with 2 million people behind bars according to the watchdog group Prison Policy Initiative, at a cost of $182 billion a year. The numbers could be a lot lower if not for an array of problems, including inaccurate sentencing calculations, flawed algorithms and parole violation laws.

Sentencing miscalculations

To determine eligibility for release, the current system requires corrections officers to check 21 different requirements spread across five different databases for each of the 90 to 100 people under their supervision. These manual calculations are time prohibitive, says Jacoby, and fall victim to human error.
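To make the scale concrete: 21 requirements for each of 100 people is 2,100 individual checks per officer. The sketch below shows, in miniature, what automating that lookup could look like once records from separate databases are joined on a shared person ID. It is an illustration only, not Recidiviz's actual code; the three toy databases, the field names and the three requirements are all invented for this example.

```python
from typing import Callable

# Toy stand-ins for separate databases, each keyed by a shared person ID.
# Every field name and value here is hypothetical.
supervision_db = {
    "p1": {"months_served": 14, "months_required": 12},
    "p2": {"months_served": 6, "months_required": 12},
}
fees_db = {"p1": {"fees_owed": 0}, "p2": {"fees_owed": 0}}
employment_db = {"p1": {"paystub_on_file": True}, "p2": {"paystub_on_file": True}}


def merged_record(person_id: str) -> dict:
    """Combine one person's rows from every database into a single record."""
    record: dict = {}
    for db in (supervision_db, fees_db, employment_db):
        record.update(db.get(person_id, {}))
    return record


# Each requirement is a named check on the merged record. Missing data
# counts as "not met" rather than being silently assumed to be fine.
REQUIREMENTS: dict[str, Callable[[dict], bool]] = {
    "served minimum term": lambda r: r.get("months_served", 0)
    >= r.get("months_required", float("inf")),
    "fees paid": lambda r: r.get("fees_owed", 1) == 0,
    "paystub on file": lambda r: r.get("paystub_on_file", False),
}


def unmet_requirements(person_id: str) -> list[str]:
    """Return the names of the requirements this person has not yet met."""
    record = merged_record(person_id)
    return [name for name, check in REQUIREMENTS.items() if not check(record)]


def eligible_people(person_ids: list[str]) -> list[str]:
    """Return the people who have met every requirement."""
    return [pid for pid in person_ids if not unmet_requirements(pid)]


print(eligible_people(["p1", "p2"]))
print(unmet_requirements("p2"))
```

Listing the unmet requirements, rather than returning a bare yes or no, mirrors the kind of case described in this article, where one missing item can hold up an otherwise complete file.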

In addition, Recidiviz found that policies aimed at helping to reduce the prison population don’t always work correctly. A key example is laws granting time off for good behavior, which allow inmates to earn one day off for every 30 days of good behavior. Some states' data systems are built to calculate time off as one day per month of good behavior, rather than per 30 days. Over the course of a decade-long sentence, Jacoby says these miscalculations can lead to a huge discrepancy between the calculated release date and the actual release date.

Algorithms

Commercial algorithm-based software systems for risk assessment continue to be widely used in the criminal justice system, even though a 2018 study published in Science Advances exposed their limitations. After the study went viral, it took three years for the Justice Department to issue a report on its own flawed algorithms used to reduce the federal prison population as part of the 2018 First Step Act. The program, it was determined, overestimated the risk of putting inmates of color into early-release programs.

Despite its name, Recidiviz does not build these types of algorithms for predicting recidivism, or whether someone will commit another crime after being released from prison. Rather, Jacoby says the company’s “descriptive analytics” approach is specifically intended to weed out incarceration inequalities and avoid algorithmic pitfalls.

Parole violation laws

Research shows that 350,000 people a year, about a quarter of prison admissions, are sent back not because they’ve committed another crime, but because they’ve broken a specific rule of their probation or parole. “Things that wouldn't send you or I to prison, but would send someone on parole,” such as crossing county lines or being in the presence of alcohol when they shouldn’t be, are inflating the prison population, says Jacoby.

It’s personal for the co-founder and CEO

“I grew up with an uncle who went into the prison system,” Jacoby says. At 19, he was sentenced to ten years in prison for a non-violent crime. A few months after being released from jail, he was sent back for a non-violent parole violation.

“For my family, the fact that one in four prison admissions are driven not by a crime but by someone who's broken a rule on probation and parole was really profound because that happened to my uncle,” Jacoby says. The experience led her to begin studying criminal justice in high school, then college. She continued her dive into how the criminal justice system works while at Google through her Passion Project, part of a program that allows employees to spend 20 percent of their time on pro-bono work. Two colleagues whose family members had also been stuck in the system joined her.

As part of the project, Jacoby interviewed hundreds of people involved in the criminal justice system. “Those on the right, those on the left, agreed that bad data was slowing down reform,” she says. Their research brought them to North Dakota, where they began to understand the root of the problem. The corrections department is making “huge, consequential decisions every day [without] … the data,” Jacoby says. In a new video by Recidiviz not yet released, Jacoby recounts her exchange with the state’s director of corrections, who told her, “‘It’s not that we have the data and we just don’t know how to make it public; we don’t have the information you think we have.’”

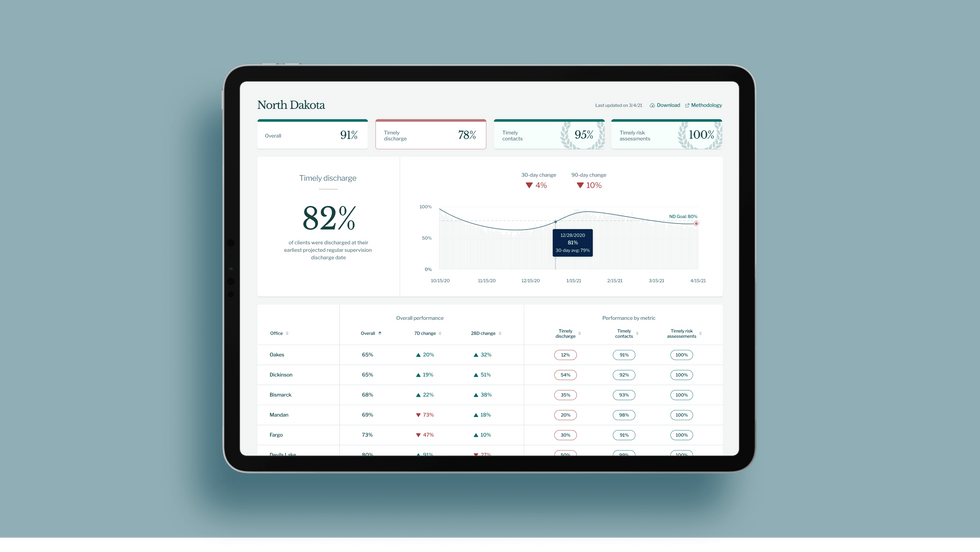

A mock-up (with fake data) of the types of dashboards and insights that Recidiviz provides to state governments. Credit: Recidiviz

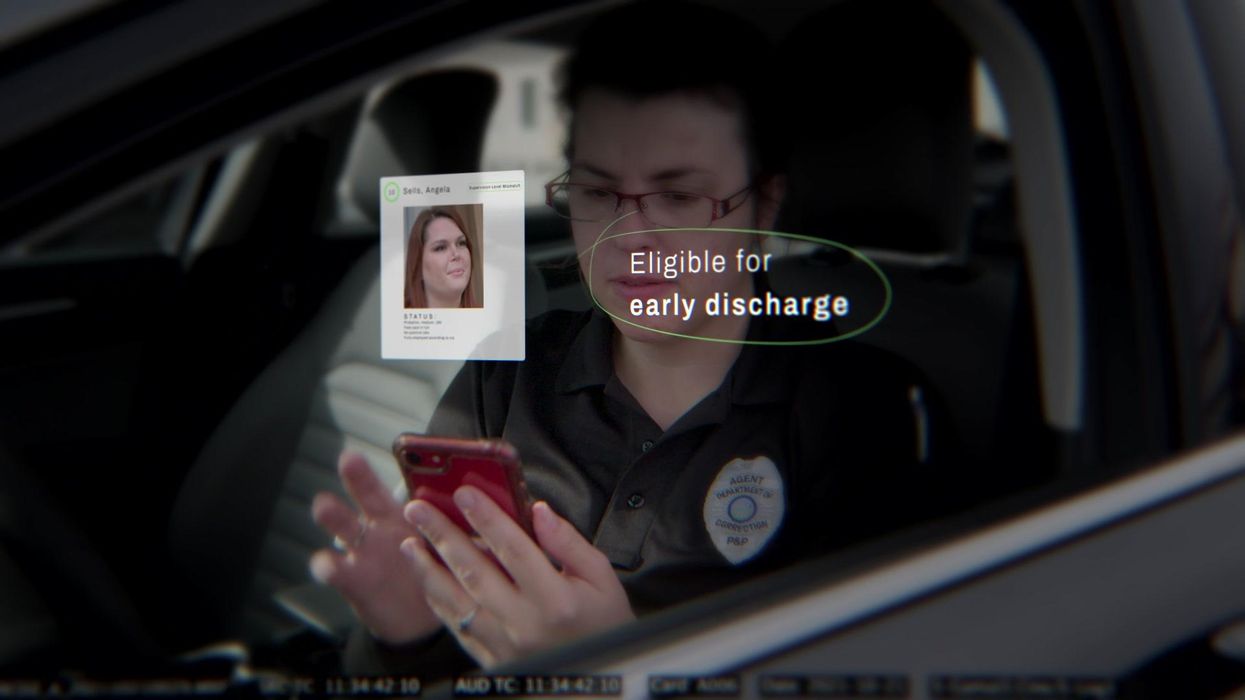

As a software engineer, Jacoby says the comment made no sense to her — until she witnessed it first-hand. “We spent a lot of time driving around in cars with corrections directors and parole officers watching them use these incredibly taxing, frankly terrible, old data systems,” Jacoby says.

As they waded through thousands of files, some computerized and some on paper, they unearthed the consequences of bad data: hundreds of people in prison well past their release date and thousands more whose release from parole was delayed because of minor paperwork issues. They found individuals stuck on parole because they hadn’t checked one last item off their eligibility list, such as providing their parole officer with a paystub. And even when parolees advocated for themselves, the archaic system made it difficult for their parole officers to confirm their eligibility, so they remained in the system. Jacoby and her team also unpacked specific policies that drive racial disparities, such as fines and fees.

The Solution

It’s more than a trivial technical challenge to bring the incomplete, fragmented data onto a 21st century data platform. It takes months for Recidiviz to sift through a state’s information systems to connect databases “with the goal of tracking a person all the way through their journey and find out what’s working for 18- to 25-year-old men, what’s working for new mothers,” explains Jacoby in the video.

TED Talk: How bad data traps people in the U.S. justice system

TED Fellow Clementine Jacoby's TED Talk went live on Jan. 13. It describes how we can fix bad data in the criminal justice system, "bringing thousands of people home, reducing costs and improving public safety along the way."

Clementine Jacoby • TED2022

Ojmarrh Mitchell, an associate professor in the School of Criminology and Criminal Justice at Arizona State University, who is not involved with the company, says what Recidiviz is doing is “remarkable.” His perspective goes beyond academic analysis. In his pre-academic years, Mitchell was a probation officer, working within the framework of the “well known, but invisible” information sharing issues that plague criminal justice departments. The flexibility of Recidiviz’s approach is what makes it especially innovative, he says. “They identify the specific gaps in each jurisdiction and tailor a solution for that jurisdiction.”

On the downside, the process used by Recidiviz is “a bit opaque,” Mitchell says, with few details available on how Recidiviz designs its tools and tracks outcomes. By sharing more information about how its actions lead to progress in a given jurisdiction, Recidiviz could help reformers in other places figure out which programs have the best potential to work well.

The eleven states in which Recidiviz is working include California, Colorado, Maine, Michigan, Missouri, Pennsylvania and Tennessee. A pilot program launched last year in Idaho could, if scaled nationally, reduce the number of people in the criminal justice system by a quarter of a million, Jacoby says. As part of the pilot, rather than relying on manual calculations, Recidiviz is equipping leaders and probation officers with actionable information that is a few clicks away in an app that Recidiviz built.

Mitchell is disappointed that there’s even the need for Recidiviz. “This is a problem that government agencies have a responsibility to address,” he says. “But they haven’t.” For one company to come along and fill such a large gap is “remarkable.”

A recent study in The Lancet Oncology showed that AI found 20 percent more cancers on mammogram screens than radiologists alone.

Since the early 2000s, AI systems have eliminated more than 1.7 million jobs, and that number will only increase as AI improves. Some research estimates that by 2025, AI will eliminate more than 85 million jobs.

But for all the talk about job security, AI is also proving to be a powerful tool in healthcare—specifically, cancer detection. One recently published study has shown that, remarkably, artificial intelligence was able to detect 20 percent more cancers in imaging scans than radiologists alone.

Published in The Lancet Oncology, the study analyzed the scans of 80,000 Swedish women with a moderate hereditary risk of breast cancer who had undergone a mammogram between April 2021 and July 2022. Half of these scans were read by AI and then by a radiologist, who double-checked the findings. The second group of scans was read by two radiologists without the help of AI. (Currently, the standard of care across Europe is to have two radiologists analyze a scan before diagnosing a patient with breast cancer.)

The study showed that the AI group detected cancer in 6 out of every 1,000 scans, while the radiologists detected cancer in 5 per 1,000 scans. In other words, AI found 20 percent more cancers than the highly-trained radiologists.
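The 20 percent figure is a relative increase, not an absolute one: the gap between the two groups is one additional cancer per 1,000 scans. The short sketch below works through that arithmetic using the rounded rates quoted above. The per-group count of 40,000 scans is an assumption based on the article's description of an even split of 80,000, so the resulting case counts are illustrative rather than the study's reported figures.

```python
def relative_increase(new_rate: float, baseline_rate: float) -> float:
    """Fractional increase of new_rate over baseline_rate."""
    return (new_rate - baseline_rate) / baseline_rate


# Rounded detection rates quoted in the article.
ai_rate = 6 / 1000           # AI-supported reading
radiologist_rate = 5 / 1000  # standard reading without AI

increase = relative_increase(ai_rate, radiologist_rate)
print(f"Relative increase: {increase:.0%}")

# Assumption: an even split of the 80,000 scans, i.e. 40,000 per group.
scans_per_group = 40_000
ai_cases = round(scans_per_group * ai_rate)
radiologist_cases = round(scans_per_group * radiologist_rate)
print(f"Approximate cancers detected: {ai_cases} vs. {radiologist_cases}")
```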

But even though the AI was better able to pinpoint cancer on an image, it doesn’t mean radiologists will soon be out of a job. Dr. Laura Heacock, a breast radiologist at NYU, said in an interview with CNN that radiologists do much more than simply screen mammograms, and that even well-trained technology can make errors. “These tools work best when paired with highly-trained radiologists who make the final call on your mammogram. Think of it as a tool like a stethoscope for a cardiologist.”

AI is still an emerging technology, but more and more doctors are using it to detect different cancers. For example, researchers at MIT have developed a program called MIRAI, which looks at patterns in patient mammograms across a series of scans and uses an algorithm to model a patient's risk of developing breast cancer over time. The program was "trained" with more than 200,000 breast imaging scans from Massachusetts General Hospital and has been tested on over 100,000 women in different hospitals across the world. According to MIT, MIRAI "has been shown to be more accurate in predicting the risk for developing breast cancer in the short term (over a 3-year period) compared to traditional tools." It has also been able to detect breast cancer up to five years before a patient receives a diagnosis.

The challenge for cancer-detecting AI tools now is not just accuracy. AI tools are also being challenged to perform consistently well across different ages, races, and breast density profiles, particularly given the increased risks that different women face. For example, Black women are 42 percent more likely than white women to die from breast cancer, despite having nearly the same rates of breast cancer as white women. Recently, an FDA-approved AI device for screening breast cancer has come under fire for wrongly detecting cancer in Black patients significantly more often than in white patients.

As AI technology improves, radiologists will be able to accurately scan a more diverse set of patients at a larger volume than ever before, potentially saving more lives than ever.

Here's how one doctor overcame extraordinary odds to help create the birth control pill

Dr. Percy Julian had so many personal and professional obstacles throughout his life, it’s amazing he was able to accomplish anything at all. But this hidden figure not only overcame these incredible obstacles, he also laid the foundation for the creation of the birth control pill.

Julian’s first obstacle was growing up in the Jim Crow-era South in the early part of the twentieth century, where racial segregation kept many African-Americans out of schools, libraries, parks, restaurants, and more. Despite limited opportunities and education, Julian was accepted to DePauw University in Indiana, where he majored in chemistry. But in college, Julian encountered another obstacle: he wasn’t allowed to stay in DePauw’s student housing because of segregation. Julian found lodging in an off-campus boarding house that refused to serve him meals. To pay for his room and food, Julian waited tables and fired furnaces while he studied chemistry full-time. Incredibly, he graduated in 1920 as valedictorian of his class.

After graduation, Julian landed a fellowship at Harvard University to study chemistry—but here, Julian ran into yet another obstacle. Harvard thought that white students would resent being taught by Julian, an African-American man, so they withdrew his teaching assistantship. Julian instead decided to complete his PhD at the University of Vienna in Austria. When he did, he became one of the first African Americans to ever receive a PhD in chemistry.

Julian received offers for professorships, fellowships, and jobs throughout the 1930s, due to his impressive qualifications, but these offers were almost always revoked when schools or potential employers found out Julian was black. In one instance, Julian was offered a job at the Institute of Paper Chemistry in Appleton, Wisconsin. But Appleton, like many cities in the United States at the time, was known as a “sundown town,” which meant that black people weren’t allowed to be there after dark. As a result, Julian lost the job.

During this time, Julian became an expert at synthesis, which is the process of turning one substance into another through a series of planned chemical reactions. Julian synthesized a plant compound called physostigmine, which would later become a treatment for an eye disease called glaucoma.

In 1936, Julian was finally able to land—and keep—a job at Glidden, and there he found a way to extract soybean protein. This was used to produce a fire-retardant foam used in fire extinguishers to smother oil and gasoline fires aboard ships and aircraft carriers, and it ended up saving the lives of thousands of soldiers during World War II.

At Glidden, Julian found a way to synthesize human sex hormones such as progesterone, estrogen, and testosterone, from plants. This was a hugely profitable discovery for his company—but it also meant that clinicians now had huge quantities of these hormones, making hormone therapy cheaper and easier to come by. His work also laid the foundation for the creation of hormonal birth control: Without the ability to synthesize these hormones, hormonal birth control would not exist.

Julian left Glidden in the 1950s and formed his own company, called Julian Laboratories, outside of Chicago, where he manufactured steroids and conducted his own research. The company turned profitable within a year, but even so Julian’s obstacles weren’t over. In 1950 and 1951, Julian’s home was firebombed and attacked with dynamite, with his family inside. Julian often had to sit out on the front porch of his home with a shotgun to protect his family from violence.

But despite years of racism and violence, Julian’s story has a happy ending. Julian’s family was eventually welcomed into the neighborhood and protected from future attacks (Julian’s daughter lives there to this day). Julian then became one of the country’s first black millionaires when he sold his company in the 1960s.

When Julian passed away at the age of 76, he had more than 130 chemical patents to his name and left behind a body of work that benefits people to this day.