New tech for prison reform spreads to 11 states

The U.S. has the highest incarceration rate in the world, costing $182 billion per year, partly because its antiquated data systems often fail to identify people who should be released. A tech nonprofit is trying to change that.

A new nonprofit called Recidiviz is using data technology to reduce the size of the U.S. criminal justice system. The bicoastal company (SF and NYC) is currently working with 11 states to improve their systems and, so far, has helped remove nearly 69,000 people from the system: people left floundering in jail or on parole when they should have been released.

“The root cause is fragmentation,” says Clementine Jacoby, 31, a software engineer who worked at Google before co-founding Recidiviz in 2019. In the 1970s and 80s, the U.S. built a series of disconnected data systems, and this patchwork is still being used by criminal justice authorities today. It requires parole officers to manually calculate release dates, leading to errors in many cases. “[They] have done everything they need to do to earn their release, but they're still stuck in the system,” Jacoby says.

Recidiviz has built a platform that connects the different databases, with the goal of identifying people who are already qualified for release but remain behind bars or on supervision. “Think of Recidiviz like Google Maps,” says Jacoby, who worked on Maps when she was at the tech giant. Google Maps takes in data from different sources (satellite images, street maps, local business data) and organizes it into one easy view. “Recidiviz does something similar with criminal justice data,” Jacoby explains, “making it easy to identify people eligible to come home or to move to less intensive levels of supervision.”

People like Jacoby’s uncle. His experience with incarceration is what inspired her passion for criminal justice reform in the first place.

The problems are vast

The U.S. has the highest incarceration rate in the world, with 2 million people incarcerated according to the watchdog group Prison Policy Initiative, at a cost of $182 billion a year. The numbers could be a lot lower if not for an array of problems, including inaccurate sentencing calculations, flawed algorithms and parole violation laws.

Sentencing miscalculations

To determine eligibility for release, the current system requires corrections officers to check 21 different requirements spread across five different databases for each of the 90 to 100 people under their supervision. These manual calculations are time prohibitive, says Jacoby, and fall victim to human error.
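To give a sense of what that manual process involves, here is a minimal Python sketch of the same idea done in software: records from several databases are merged into one view per person, and each person is then checked against a list of requirements. The record layout, requirement names and function names are hypothetical, chosen only for illustration; the article does not describe how Recidiviz's platform or any state system is actually built.

```python
from dataclasses import dataclass, field


# Hypothetical record layout for illustration only; not any real state schema.
@dataclass
class Person:
    person_id: str
    # requirement name -> whether it is satisfied, gathered across databases
    requirements: dict = field(default_factory=dict)


def merge_records(databases):
    """Combine per-database records (keyed by person ID) into one view per person."""
    people = {}
    for db in databases:
        for person_id, reqs in db.items():
            person = people.setdefault(person_id, Person(person_id))
            person.requirements.update(reqs)
    return people


def unmet_requirements(person, required):
    """Return the required items that are missing or not yet satisfied."""
    return [name for name in required if not person.requirements.get(name, False)]


def manual_checks(caseload, requirements_per_person=21):
    """Number of individual checks one officer faces for a whole caseload."""
    return caseload * requirements_per_person


# With the figures quoted above: 21 requirements for each of 90 to 100 people.
print(manual_checks(90), manual_checks(100))  # 1890 2100
```

Even in this toy form, the arithmetic shows why the work is hard to do by hand: roughly 1,900 to 2,100 separate checks per officer, each of which may require looking in a different database.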

In addition, Recidiviz found that policies aimed at helping to reduce the prison population don’t always work correctly. A key example is time-off-for-good-behavior laws, which allow inmates to earn one day off for every 30 days of good behavior. Some states' data systems are built to calculate time off as one day per month of good behavior, rather than per day. Over the course of a decade-long sentence, Jacoby says, these miscalculations can lead to a huge discrepancy between the calculated release date and the actual release date.

Algorithms

Commercial algorithm-based software systems for risk assessment continue to be widely used in the criminal justice system, even though a 2018 study published in Science Advances exposed their limitations. After the study went viral, it took three years for the Justice Department to issue a report on its own flawed algorithms used to reduce the federal prison population as part of the 2018 First Step Act. The program, it was determined, overestimated the risk of putting inmates of color into early-release programs.

Despite its name, Recidiviz does not build these types of algorithms for predicting recidivism, or whether someone will commit another crime after being released from prison. Rather, Jacoby says the company’s “descriptive analytics” approach is specifically intended to weed out incarceration inequalities and avoid algorithmic pitfalls.

Parole violation laws

Research shows that 350,000 people a year, about a quarter of all prison admissions, are sent back not because they’ve committed another crime, but because they’ve broken a specific rule of their probation or parole. “Things that wouldn't send you or I to prison, but would send someone on parole,” such as crossing county lines or being in the presence of alcohol when they shouldn’t be, are inflating the prison population, says Jacoby.

It’s personal for the co-founder and CEO

“I grew up with an uncle who went into the prison system,” Jacoby says. At 19, he was sentenced to ten years in prison for a non-violent crime. A few months after being released from jail, he was sent back for a non-violent parole violation.

“For my family, the fact that one in four prison admissions are driven not by a crime but by someone who's broken a rule on probation and parole was really profound because that happened to my uncle,” Jacoby says. The experience led her to begin studying criminal justice in high school, then college. She continued her dive into how the criminal justice system works as part of her Passion Project while at Google, a program that allows employees to spend 20 percent of their time on pro-bono work. Two colleagues whose family members had also been stuck in the system joined her.

As part of the project, Jacoby interviewed hundreds of people involved in the criminal justice system. “Those on the right, those on the left, agreed that bad data was slowing down reform,” she says. Their research brought them to North Dakota where they began to understand the root of the problem. The corrections department is making “huge, consequential decisions every day [without] … the data,” Jacoby says. In a new video by Recidiviz not yet released, Jacoby recounts her exchange with the state’s director of corrections who told her, “‘It’s not that we have the data and we just don’t know how to make it public; we don’t have the information you think we have.’”

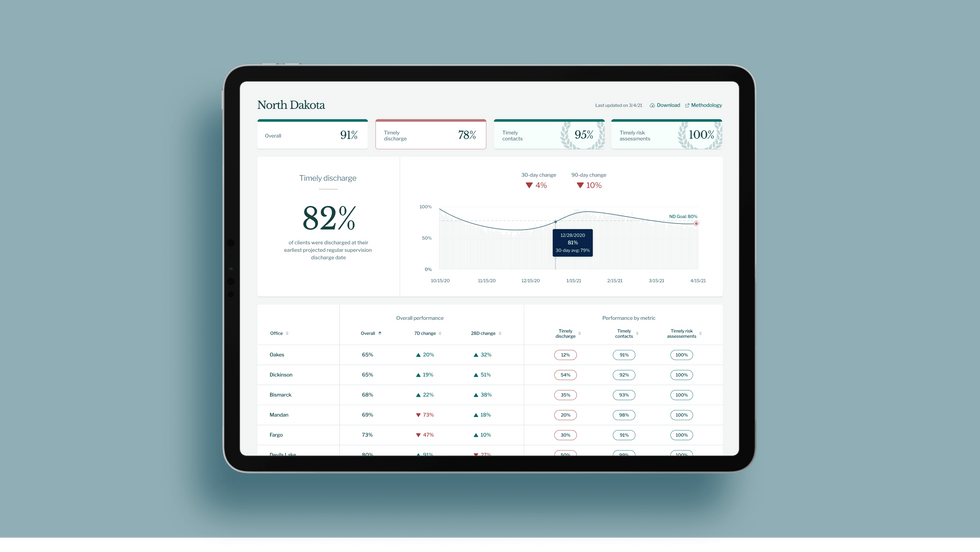

A mock-up (with fake data) of the types of dashboards and insights that Recidiviz provides to state governments.

Recidiviz

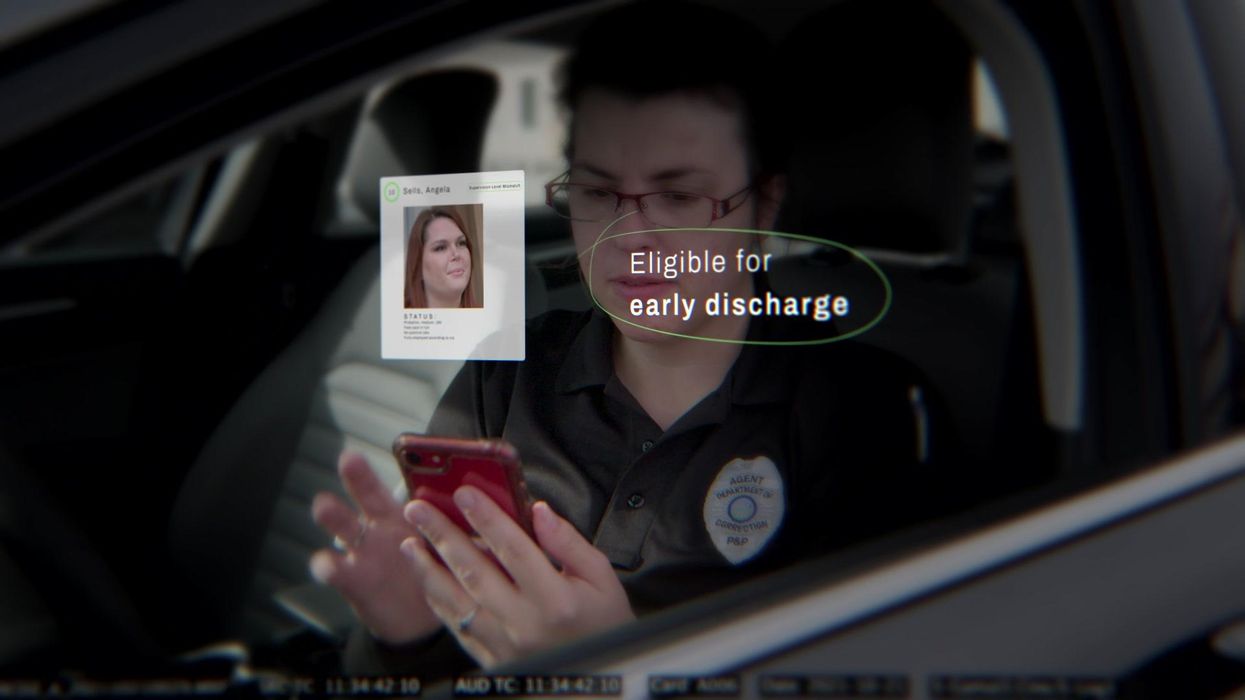

As a software engineer, Jacoby says the comment made no sense to her — until she witnessed it first-hand. “We spent a lot of time driving around in cars with corrections directors and parole officers watching them use these incredibly taxing, frankly terrible, old data systems,” Jacoby says.

As they weeded through thousands of files — some computerized, some on paper — they unearthed the consequences of bad data: Hundreds of people in prison well past their release date and thousands more whose release from parole was delayed because of minor paperwork issues. They found individuals stuck in parole because they hadn’t checked one last item off their eligibility list — like simply failing to provide their parole officer with a paystub. And, even when parolees advocated for themselves, the archaic system made it difficult for their parole officers to confirm their eligibility, so they remained in the system. Jacoby and her team also unpacked specific policies that drive racial disparities — such as fines and fees.

The Solution

It’s more than a trivial technical challenge to bring the incomplete, fragmented data onto a 21st century data platform. It takes months for Recidiviz to sift through a state’s information systems to connect databases “with the goal of tracking a person all the way through their journey and find out what’s working for 18- to 25-year-old men, what’s working for new mothers,” explains Jacoby in the video.

TED Talk: How bad data traps people in the U.S. justice system

TED Fellow Clementine Jacoby's TED Talk went live on Jan. 13. It describes how we can fix bad data in the criminal justice system, "bringing thousands of people home, reducing costs and improving public safety along the way."

Clementine Jacoby • TED2022

Ojmarrh Mitchell, an associate professor in the School of Criminology and Criminal Justice at Arizona State University, who is not involved with the company, says what Recidiviz is doing is “remarkable.” His perspective goes beyond academic analysis. In his pre-academic years, Mitchell was a probation officer, working within the framework of the “well known, but invisible” information sharing issues that plague criminal justice departments. The flexibility of Recidiviz’s approach is what makes it especially innovative, he says. “They identify the specific gaps in each jurisdiction and tailor a solution for that jurisdiction.”

On the downside, the process used by Recidiviz is “a bit opaque,” Mitchell says, with few details available on how Recidiviz designs its tools and tracks outcomes. By sharing more information about how its actions lead to progress in a given jurisdiction, Recidiviz could help reformers in other places figure out which programs have the best potential to work well.

The 11 states in which Recidiviz is working include California, Colorado, Maine, Michigan, Missouri, Pennsylvania and Tennessee. And a pilot program launched last year in Idaho, if scaled nationally, could reduce the number of people in the criminal justice system by a quarter of a million, Jacoby says. As part of the pilot, rather than relying on manual calculations, Recidiviz is equipping leaders and probation officers with actionable information, available with a few clicks in an app that Recidiviz built.

Mitchell is disappointed that there’s even the need for Recidiviz. “This is a problem that government agencies have a responsibility to address,” he says. “But they haven’t.” For one company to come along and fill such a large gap is “remarkable.”

Can AI chatbots help with eating disorders?

A chatbot from the nonprofit National Eating Disorders Association aimed to provide 24/7 guidance on eating disorders. Several drawbacks point to the importance of therapist-tech collaboration in multiple areas of health.

Her name was Tessa and she was there to help. That’s what Sharon Maxwell read, anyway. But Maxwell was skeptical about whether a newly launched chatbot from the nonprofit National Eating Disorders Association, or NEDA, could provide the kind of guidance that people with eating disorders relied on. Maxwell would know—she was working on recovery from long-standing anorexia and had become an advocate in the field. So Maxwell took a deep breath and asked, “Hi Tessa. How do you support folks with eating disorders?”

Tessa’s reply was immediate. “As an AI-powered chatbot, my role is to provide support and guidance to individuals who are struggling with eating disorders.”

So far, so good. Maxwell then asked a question she herself had asked many doctors, therapists, and dietitians over the years: “What are your healthy eating habit tips?” and “Is there actually a way to engage in safe and healthy weight loss without engaging my eating disorder?”

Then everything went off the rails. Tessa told Maxwell an unequivocal yes, and that “it’s important to approach weight loss with a balanced and realistic mindset,” and recommended eating whole foods and lean proteins to create a 500-1000 calorie per day deficit that would lead to a loss of 1-2 pounds per week. To most people, the advice sounds anodyne, but alarm bells sounded in Maxwell’s head.

“This is actively going to feed eating disorders,” Maxwell says. “Having a chatbot be the direct response to someone reaching out for support for an eating disorder instead of the helpline seems careless.”

According to several decades of research, deliberate weight loss in the form of dieting is a serious risk for people with eating disorders. Maxwell says that following medical advice like what Tessa prescribed was what triggered her eating disorder as a child. And Maxwell wasn’t the only one who got such advice from the bot. When eating disorder therapist Alexis Conason tried Tessa, she asked the AI chatbot many of the questions her patients had. But instead of getting connected to resources or guidance on recovery, Conason, too, got tips on losing weight and “healthy” eating.

“The scripts that are being fed into the chatbot are only going to be as good as the person who’s feeding them,” Conason says. “It’s important that an eating disorder organization like NEDA is not reinforcing that same kind of harmful advice that we might get from medical providers who are less knowledgeable.”

Maxwell’s post about Tessa on Instagram went viral, and within days, NEDA had scrubbed all evidence of Tessa from its website. The furor has raised any number of issues about the harm perpetuated by a leading eating disorder charity and the ongoing influence of diet culture and advice that is pervasive in the field. But for AI experts, bears and bulls alike, Tessa offers a cautionary tale about what happens when a still-immature technology is unfettered and released into a vulnerable population.

Given the complexity involved in giving medical advice, the process of developing these chatbots must be rigorous and transparent, unlike NEDA’s approach.

“We don’t have a full understanding of what’s going on in these models. They’re a black box,” says Stephen Schueller, a clinical psychologist at the University of California, Irvine.

The health crisis

In March 2020, the world dove head-first into a heavily virtual existence as countries scrambled to try and halt the pandemic. Even with lockdowns, hospitals were overwhelmed by the virus. The downstream effects of these lifesaving measures are still being felt, especially in mental health. Anxiety and depression are at all-time highs in teens, and a new report in The Lancet showed that post-Covid rates of newly diagnosed eating disorders in girls aged 13-16 were 42.4 percent higher than previous years.

And the crisis isn’t just in mental health.

“People are so desperate for health care advice that they'll actually go online and post pictures of [their intimate areas] and ask what kind of STD they have on public social media,” says John Ayers, an epidemiologist at the University of California, San Diego.

I know a bit about that desperation. Like Maxwell, I have struggled with a multi-decade eating disorder. I spent my 20s and 30s bouncing from crisis to crisis. I have called suicide hotlines, gone to emergency rooms, and spent weeks on end confined to hospital wards. Though I have found recovery in recent years, I’m still not sure what ultimately made the difference. A relapse isn't improbable, given my history. Even if I relapsed again, though, I don’t know that it would occur to me to ask an AI system for help.

For one, I am privileged to have assembled a stellar group of outpatient professionals who know me, know what trips me up, and know how to respond to my frantic texts. Ditto for my close friends. What I often need is a shoulder to cry on or a place to vent—someone to hear and validate my distress. What’s more, my trust in these individuals far exceeds my confidence in the companies that create these chatbots. The Internet is full of health advice, much of it bad. Even for high-quality, evidence-based advice, medicine is often filled with disagreements about how the evidence might be applied and for whom it’s relevant. All of this is key in the training of AI systems like ChatGPT, and many AI companies remain silent on this process, Schueller says.

The problem, Ayers points out, is that for many people, the choice isn’t chatbot vs. well-trained physician, but chatbot vs. nothing at all. Hence the proliferation of “does this infection make my scrotum look strange?” questions. Where AI can truly shine, he says, is not by providing direct psychological help but by pointing people towards existing resources that we already know are effective.

“It’s important that these chatbots connect [their users] to provide that human touch, to link you to resources,” Ayers says. “That’s where AI can actually save a life.”

Unfortunately, many systems don’t do this. In a study published last month in the Journal of the American Medical Association, Ayers and colleagues found that although the chatbots did well at providing evidence-based answers, they often didn’t provide referrals to existing resources. Despite this, in an April 2023 study, Ayers’s team found that both patients and professionals rated the quality of the AI responses to questions, measured by both accuracy and empathy, rather highly. To Ayers, this means that AI developers should focus more on the quality of the information being delivered rather than the method of delivery itself.

The human touch

The mental health field is facing timing constraints, too. Even before the pandemic, the U.S. suffered from a shortage of mental health providers. Since then, the rates of anxiety, depression, and eating disorders have spiked even higher, and many mental health professionals report waiting lists that are months long. Without support, individuals are left to try and cope on their own, which often means their condition deteriorates even further.

Nor do mental health crises happen during office hours. I struggled the most late at night, long after everyone else had gone to bed. I needed support during those times when I was most liable to hurt myself, not in the mornings and afternoons when I was at work.

In this respect, a 24/7 chatbot makes a lot of sense. “I don't think we should stifle innovation in this space,” Schueller says. “Because if there was any system that needs to be innovated, it's mental health services, because they are sadly insufficient. They’re terrible.”

But before building a chatbot and releasing it, Tina Hernandez-Boussard, a data scientist at Stanford Medicine, says that developers need to pause and consult with the communities they hope to serve. It requires a deep understanding of what their needs are, the language they use to describe their concerns, existing resources, and what kinds of topics and suggestions aren’t helpful. Even asking a simple question at the beginning of a conversation such as “Do you want to talk to an AI or a human?” could allow those individuals to pick the type of interaction that suits their needs, Hernandez-Boussard says.

NEDA did none of these things before deploying Tessa. The researchers who developed the online body positivity self-help program upon which Tessa was initially based created a set of online question-and-answer exercises to improve body image. It didn’t involve generative AI that could write its own answers. The bot deployed by NEDA did use generative AI, something that no one in the eating disorder community was aware of before Tessa was brought online. Consulting those with lived experience would have flagged Tessa’s weight loss and “healthy eating” recommendations, Conason says.

NEDA did not comment on Tessa’s initial development and deployment, but a spokesperson told Leaps.org that “Tessa will be back online once we are confident that the program will be run with the rule-based approach as it was designed.”

The tech and therapist collaboration

The question for healthcare isn’t whether to use AI, but how. Already, AI can spot anomalies on medical images with greater precision than human eyes and can flag specific areas of an image for a radiologist to review in greater detail. Similarly, in mental health, AI should be an add-on for therapy, not a counselor-in-a-box, says Aniket Bera, an expert on AI and mental health at Purdue University.

“If [AIs] are going to be good helpers, then we need to understand humans better,” Bera says. That means understanding what patients and therapists alike need help with and respond to.

One of the biggest challenges of struggling with chronic illness is the dehumanization that happens. You become a patient number, a set of laboratory values and test scores. Treatment is often dictated by invisible algorithms and rules that you have no control over or access to. It’s frightening and maddening. But this doesn’t mean chatbots don’t have any place in medicine and mental health. AI systems could help provide appointment reminders and answer procedural questions about parking and whether someone should fast before a test or a procedure. They can help manage billing and even provide support between outpatient sessions by suggesting coping skills and the best ways to manage anxiety, and by pointing to local resources. As the bots get better, they may eventually shoulder more and more of the burden of providing mental health care. But as Maxwell learned with Tessa, they are still no replacement for human interaction.

“I'm not suggesting we should go in and start replacing therapists with technologies,” Schueller says. Instead, he advocates for a therapist-tech collaboration. “The technology side and the human component—these things need to come together.”

Questions remain about new drug for hot flashes

In May, a new drug, Fezolinetant, was approved by the FDA to treat hot flashes associated with menopause.

Vasomotor symptoms (VMS) is the medical term for hot flashes associated with menopause. You are going to hear a lot more about it because a company has a new drug to sell. Here is what you need to know.

Menopause marks the end of a woman’s reproductive capacity. Normal hormonal production associated with that monthly cycle becomes erratic and finally ceases. For some women the transition can be relatively brief with only modest symptoms, while for others the body's “thermostat” in the brain is disrupted and they experience hot flashes and other symptoms that can disrupt daily activity. Lifestyle modification and drugs such as hormone therapy can provide some relief, but women at risk for cancer are advised not to use them and other women choose not to do so.

Fezolinetant, sold by Astellas Pharma Inc. under the product name Veozah™, was approved by the Food and Drug Administration (FDA) on May 12 to treat hot flashes associated with menopause. It is the first in a new class of drugs called neurokinin 3 receptor antagonists, which block specific neurons in the brain “thermostat” that trigger VMS. It does not appear to affect other symptoms of menopause. As with many drugs targeting a brain cell receptor, it must be taken continuously for a few days to build up a good therapeutic response, rather than working as a rescue product such as an asthma inhaler to immediately treat that condition.

Hot flashes vary greatly and naturally get better or resolve completely with time. That contributes to a placebo effect and makes it more difficult to judge the outcome of any intervention. Early this year, a meta-analysis of 17 drug trials for hot flashes found an unusually large placebo response in those types of studies; the placebo groups had an average of 5.44 fewer hot flashes and a 36 percent reduction in their severity.

In studies of fezolinetant, the drug recently approved by the FDA, the placebo benefit was strong and persistent. The drug group bested the placebo response to a statistically significant degree but, “If people have gone from 11 hot flashes a day to eight or seven in the placebo group and down to a couple fewer ones in the drug groups, how meaningful is that? Having six hot flashes a day is still pretty unpleasant,” says Diana Zuckerman, president of the National Center for Health Research (NCHR), a health-oriented think tank.

“Is a reduction compared to placebo of 2-3 hot flashes per day, in a population of women experiencing 10-11 moderate to severe hot flashes daily, enough relief to be clinically meaningful?” Andrea LaCroix asked in a commentary published in Nature Medicine. She is an epidemiologist at the University of California San Diego and a leader of the MsFlash network that has conducted a handful of NIH-funded studies on menopause.
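One way to put LaCroix's question in proportion is to express the placebo-adjusted reduction as a share of the starting number of daily hot flashes. The short Python sketch below does only that arithmetic, using the ranges she quotes; the function name is hypothetical, and the result is a back-of-the-envelope illustration, not a figure reported by the trials.

```python
def relative_reduction(baseline_per_day, fewer_than_placebo):
    """Placebo-adjusted reduction as a fraction of baseline daily hot flashes."""
    return fewer_than_placebo / baseline_per_day


# Ranges quoted in the commentary: 2-3 fewer per day, against 10-11 per day.
low = relative_reduction(11, 2)   # smallest benefit against the largest baseline
high = relative_reduction(10, 3)  # largest benefit against the smallest baseline

print(f"{low:.0%} to {high:.0%}")  # 18% to 30%
```

On those figures, the benefit attributable to the drug itself amounts to roughly a fifth to a third of the hot flashes a woman started with.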

Questions Remain

LaCroix and others have raised questions about how Astellas, the company that makes the new drug, handled missing data from patients who dropped out of the clinical trials. “The lack of detailed information about important parameters such as adherence and missing data raises concerns that the reported benefits of fezolinetant very likely overestimate those that will be observed in clinical practice,” LaCroix wrote.

In response to this concern, Anna Criddle, director of global portfolio communications at Astellas, wrote in an email to Leaps.org: “…a full analysis of data, including adherence data and any impact of missing data, was submitted for assessment by [the FDA].”

The company ran the studies at more than 300 sites around the world. Curiously, none appear to have been at academic medical centers, which are known for higher quality research. Zuckerman says, “When somebody is paid to do a study, if they want to get paid to do another study by the same company, they will try to make sure that the results are the results that the company wants.”

Criddle said that Astellas picked the sites “that would allow us to reach a diverse population of women, including race and ethnicity.”

A trial of a lower dose of the drug was conducted in Asia. In March 2022, Astellas issued a press release saying it had failed to prove effectiveness. No further data has been released. Astellas still plans to submit the data, according to Criddle. Results from clinical trials funded by the U.S. government must be reported on clinicaltrials.gov within one year of the study's completion, a deadline that, in this case, has expired.

The measurement scale for hot flashes used in the studies, mild-moderate-severe, also came in for criticism. “It is really not good scale, there probably isn’t a broad enough range of things going on or descriptors,” says David Rind. He is chief medical officer of the Institute for Clinical and Economic Review (ICER), a nonprofit authority on new drugs. It conducted a thorough review and analysis of fezolinetant using then-existing data gathered from conference abstracts, posters and presentations and included a public stakeholder meeting in December. A 252-page report was published in January, finding “considerable uncertainty about the comparative net health benefits of fezolinetant” versus hormone therapy.

Questions surrounding some of these issues might have been answered if the FDA had chosen to hold a public advisory committee meeting on fezolinetant, which it regularly does for first-in-class medicines. But the agency decided such a meeting was unnecessary.

Cost

There was little surprise when Astellas announced a list price for fezolinetant of $550 a month (about $6,600 annually) and a program of patient assistance to ease out-of-pocket expenses. The company had already incurred large expenses.

In 2017 Astellas purchased the company that originally developed fezolinetant for $534 million plus several hundred million in potential royalties. The drug company ran a “disease awareness” ad, Heat on the Street, that aired during the Super Bowl in February, where 30-second ads cost about $7 million. Industry analysts have projected sales to be $1.9 billion by 2028.

ICER’s pre-approval evaluation said fezolinetant might “be considered cost-effective if priced around $2,000 annually. ... [It] will depend upon its price and whether it is considered an alternative to MHT [menopause hormone treatment] for all women or whether it will primarily be used by women who cannot or will not take MHT.”
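The gap between the list price and ICER's benchmark is easy to work out. The Python sketch below annualizes the $550 monthly list price and compares it with the roughly $2,000 a year that ICER suggested. It assumes 12 monthly fills at full list price and ignores rebates, insurance coverage and patient assistance, none of which are quantified here; the function and constant names are illustrative only.

```python
MONTHLY_LIST_PRICE = 550       # dollars per month, the list price announced by Astellas
ICER_ANNUAL_BENCHMARK = 2_000  # dollars per year, ICER's approximate cost-effective price


def annual_cost(monthly_price, months=12):
    """Annualized cost, assuming one fill per month at list price."""
    return monthly_price * months


def price_ratio(annual_price, benchmark):
    """How many times the benchmark the annual price is."""
    return annual_price / benchmark


annual = annual_cost(MONTHLY_LIST_PRICE)
print(annual)                                                 # 6600
print(round(price_ratio(annual, ICER_ANNUAL_BENCHMARK), 1))   # 3.3
```

Under those assumptions, a year of treatment at list price costs about $6,600, a little more than three times the level ICER described as potentially cost-effective.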

Criddle wrote that Astellas set the price based on the novelty of the science, the quality of evidence for the drug and its uniqueness compared to the rest of the market. She noted that an individual’s payment will depend on how much their insurance company decides to cover. “[W]e expect insurance coverage to increase over the course of the year and to achieve widespread coverage in the U.S. over time.”

Leaps.org wrote to and followed up with nine of the largest health insurers/providers asking basic questions about their coverage of fezolinetant. Only two responded. Jennifer Martin, the deputy chief consultant for pharmacy benefits management at the Department of Veterans Affairs, said the agency “covers all drugs from the date that they are launched.” Decisions on whether it will be included in the drug formulary and what if any copays might be required are under review.

“[Fezolinetant] will go through our standard P&T Committee [pharmacy and therapeutics] review process in the next few months, including a review of available efficacy data, safety data, clinical practice guidelines, and comparison with other agents used for vasomotor symptoms of menopause,” said Phil Blando, executive director of corporate communications for CVS Health.

Other insurers likely are going through a similar process to decide issues such as limiting coverage to women who are advised not to use hormones, how much copay will be required, and whether women will be required to first try other options or obtain approvals before getting a prescription.

Rind wants to see a few years of use before he prescribes fezolinetant broadly, and believes most doctors share his view. Nor will they be eager to fill out the additional paperwork required for women to participate in the Astellas patient assistance program, he added.

Safety

Astellas is marketing its drug by pointing out risks of hormone therapy, such as a recent paper in The BMJ, which noted that women who took hormones for even a short period of time had a 24 percent increased risk of dementia. While the percentage was scary, the combined number of women both on and off hormones who developed dementia was small. And it is unclear whether hormones are causing dementia or if more severe hot flashes are a marker for higher risk of developing dementia. This information is emerging only after 80 years of hundreds of millions of women using hormones.

In contrast, the label for fezolinetant prohibits “concomitant use with CYP1A2 inhibitors” and requires testing for liver and kidney function prior to initiating the drug and every three months thereafter. There is no human or animal data on use in a geriatric population, defined as 65 or older, a group that is likely to use the drug. Only a few thousand women have ever taken fezolinetant and most have used it for just a few months.

Options

A woman seeking relief from symptoms of menopause would like to see how fezolinetant compares with other available treatment options. But Astellas did not conduct such a study and Andrea LaCroix says it is unlikely that anyone ever will.

ICER has come the closest, with a side-by-side analysis of evidence-based treatments. It found that fezolinetant performed about as well as the others, providing similarly modest relief from hot flashes. Some treatments also help with other symptoms of menopause, which fezolinetant does not.

There are many coping strategies that women can adopt to deal with hot flashes; one of the most common is dressing in layers (such as a sleeveless blouse with a sweater) that can be added or subtracted as conditions require. Avoiding caffeine, hot liquids, and spicy foods is another common strategy. “I stopped drinking hot caffeinated drinks…for several years, and you get out of the habit of drinking them,” says Zuckerman.

LaCroix curates those options at My Meno Plan, which includes a search function where you can enter your symptoms and identify which treatments might work best for you. It also links to published research papers. She says the goal is to empower women with information to make informed decisions about menopause.