New tech for prison reform spreads to 11 states

The U.S. has the highest incarceration rate in the world, costing $182 billion per year, partly because its antiquated data systems often fail to identify people who should be released. A tech nonprofit is trying to change that.

A new nonprofit called Recidiviz is using data technology to reduce the size of the U.S. criminal justice system. The bi-coastal company (SF and NYC) is currently working with 11 states to improve their systems and, so far, has helped remove nearly 69,000 people from the system, people who were left floundering in jail or on parole when they should have been released.

“The root cause is fragmentation,” says Clementine Jacoby, 31, a software engineer who worked at Google before co-founding Recidiviz in 2019. In the 1970s and 80s, the U.S. built a series of disconnected data systems, and this patchwork is still being used by criminal justice authorities today. It requires parole officers to manually calculate release dates, leading to errors in many cases. “[They] have done everything they need to do to earn their release, but they're still stuck in the system,” Jacoby says.

Recidiviz has built a platform that connects the different databases, with the goal of identifying people who are already qualified for release but remain behind bars or on supervision. “Think of Recidiviz like Google Maps,” says Jacoby, who worked on Maps when she was at the tech giant. Google Maps takes in data from different sources (satellite images, street maps, local business data) and organizes it into one easy view. “Recidiviz does something similar with criminal justice data,” Jacoby explains, “making it easy to identify people eligible to come home or to move to less intensive levels of supervision.”

People like Jacoby’s uncle. His experience with incarceration is what inspired her passion for criminal justice reform in the first place.

The problems are vast

The U.S. has the highest incarceration rate in the world, with about 2 million people behind bars according to the watchdog group Prison Policy Initiative, at a cost of $182 billion a year. The numbers could be a lot lower if not for an array of problems including inaccurate sentencing calculations, flawed algorithms and parole violation laws.

Sentencing miscalculations

To determine eligibility for release, the current system requires corrections officers to check 21 different requirements spread across five different databases for each of the 90 to 100 people under their supervision. These manual calculations are time prohibitive, says Jacoby, and fall victim to human error.
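To get a feel for the scale, 21 requirements across 90 to 100 people works out to roughly 1,890 to 2,100 individual checks per officer. The sketch below illustrates the general idea of joining records from separate databases by person and running every requirement automatically. It is not Recidiviz's actual code: the database names, field names, thresholds and the three sample requirements are all hypothetical, chosen only to make the example run.

```python
from typing import Callable, Dict, List

Record = Dict[str, object]
Requirement = Callable[[Record], bool]


def merge_records(sources: List[Dict[str, Record]]) -> Dict[str, Record]:
    """Combine per-person fields from several source databases into one record each."""
    merged: Dict[str, Record] = {}
    for source in sources:
        for person_id, fields in source.items():
            merged.setdefault(person_id, {}).update(fields)
    return merged


def unmet_requirements(record: Record, requirements: Dict[str, Requirement]) -> List[str]:
    """Return the names of the requirements this record does not satisfy."""
    return [name for name, check in requirements.items() if not check(record)]


def eligible_people(merged: Dict[str, Record], requirements: Dict[str, Requirement]) -> List[str]:
    """Return the IDs of people who satisfy every requirement, in sorted order."""
    return sorted(
        person_id
        for person_id, record in merged.items()
        if not unmet_requirements(record, requirements)
    )


# Hypothetical source databases (fake data), each keyed by person ID.
supervision_db = {
    "A1": {"months_on_supervision": 24, "open_violations": 0},
    "B2": {"months_on_supervision": 30, "open_violations": 0},
    "C3": {"months_on_supervision": 10, "open_violations": 1},
}
employment_db = {
    "A1": {"paystub_on_file": True},
    "B2": {"paystub_on_file": False},
}

# Hypothetical requirements; a missing field counts as "not met".
REQUIREMENTS: Dict[str, Requirement] = {
    "minimum_time_served": lambda r: r.get("months_on_supervision", 0) >= 12,
    "no_open_violations": lambda r: r.get("open_violations", 1) == 0,
    "paystub_on_file": lambda r: bool(r.get("paystub_on_file", False)),
}

merged = merge_records([supervision_db, employment_db])
ready_for_review = eligible_people(merged, REQUIREMENTS)
still_missing_for_b2 = unmet_requirements(merged["B2"], REQUIREMENTS)
```

In this fake data set, only person A1 clears every check, while B2 is held up by a single missing paystub. Listing the unmet items by name, rather than returning a bare yes or no, is a design choice that lets an officer see exactly what stands between a person and release.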

In addition, Recidiviz found that policies aimed at helping to reduce the prison population don’t always work correctly. A key example is time-off-for-good-behavior laws that allow inmates to earn one day off for every 30 days of good behavior. Some states' data systems are built to calculate time off as one day per month of good behavior, rather than per day. Over the course of a decade-long sentence, Jacoby says, these miscalculations can lead to a huge discrepancy between the calculated release date and the actual release date.

Algorithms

Commercial algorithm-based software systems for risk assessment continue to be widely used in the criminal justice system, even though a 2018 study published in Science Advances exposed their limitations. After the study went viral, it took three years for the Justice Department to issue a report on its own flawed algorithms used to reduce the federal prison population as part of the 2018 First Step Act. The program, it was determined, overestimated the risk of putting inmates of color into early-release programs.

Despite its name, Recidiviz does not build these types of algorithms for predicting recidivism, or whether someone will commit another crime after being released from prison. Rather, Jacoby says the company’s “descriptive analytics” approach is specifically intended to weed out incarceration inequalities and avoid algorithmic pitfalls.

Parole violation laws

Research shows that 350,000 people a year, about a quarter of prison admissions, are sent back not because they’ve committed another crime, but because they’ve broken a specific rule of their probation or parole. “Things that wouldn't send you or I to prison, but would send someone on parole,” such as crossing county lines or being in the presence of alcohol when they shouldn’t be, are inflating the prison population, says Jacoby.

It’s personal for the co-founder and CEO

“I grew up with an uncle who went into the prison system,” Jacoby says. At 19, he was sentenced to ten years in prison for a non-violent crime. A few months after being released from jail, he was sent back for a non-violent parole violation.

“For my family, the fact that one in four prison admissions are driven not by a crime but by someone who's broken a rule on probation and parole was really profound because that happened to my uncle,” Jacoby says. The experience led her to begin studying criminal justice in high school, then college. She continued her dive into how the criminal justice system works as part of her Passion Project while at Google, a program that allows employees to spend 20 percent of their time on pro-bono work. Two colleagues whose family members had also been stuck in the system joined her.

As part of the project, Jacoby interviewed hundreds of people involved in the criminal justice system. “Those on the right, those on the left, agreed that bad data was slowing down reform,” she says. Their research brought them to North Dakota where they began to understand the root of the problem. The corrections department is making “huge, consequential decisions every day [without] … the data,” Jacoby says. In a new video by Recidiviz not yet released, Jacoby recounts her exchange with the state’s director of corrections who told her, “‘It’s not that we have the data and we just don’t know how to make it public; we don’t have the information you think we have.’”

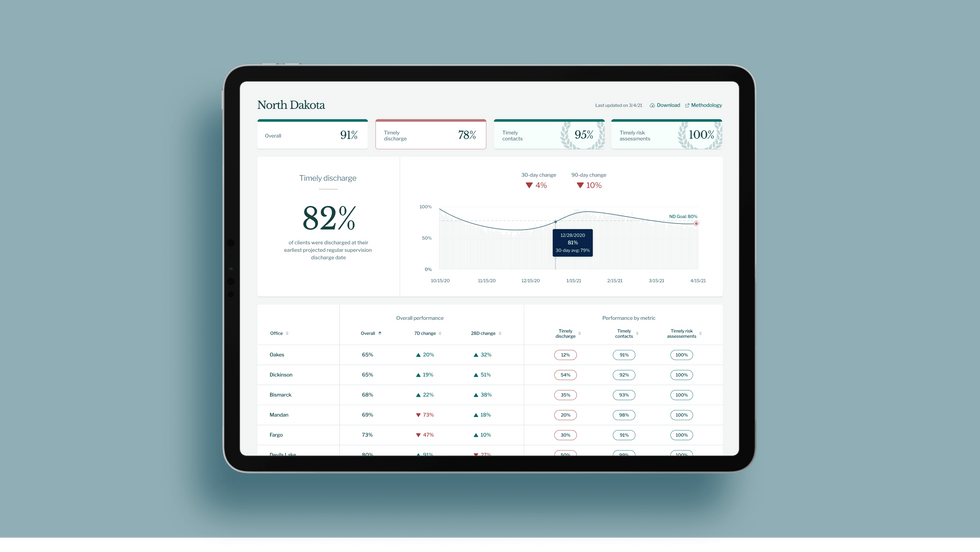

A mock-up (with fake data) of the types of dashboards and insights that Recidiviz provides to state governments.

Recidiviz

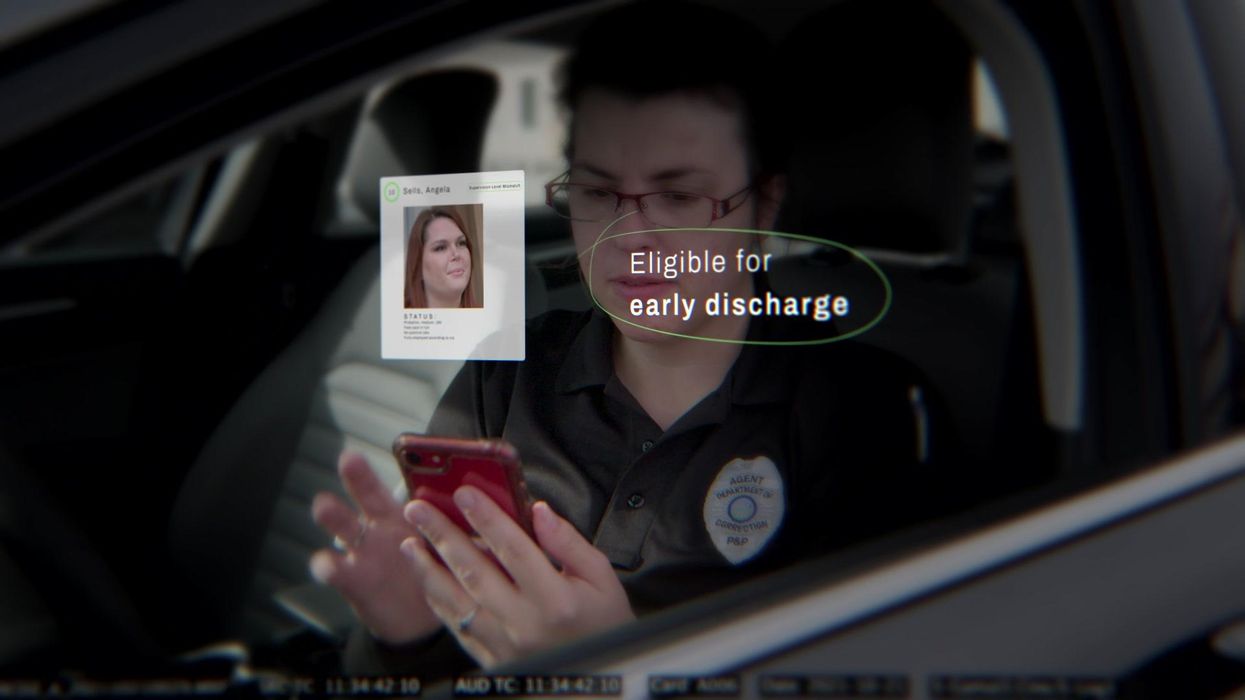

As a software engineer, Jacoby says the comment made no sense to her — until she witnessed it first-hand. “We spent a lot of time driving around in cars with corrections directors and parole officers watching them use these incredibly taxing, frankly terrible, old data systems,” Jacoby says.

As they weeded through thousands of files, some computerized, some on paper, they unearthed the consequences of bad data: hundreds of people in prison well past their release date and thousands more whose release from parole was delayed because of minor paperwork issues. They found individuals stuck on parole because they hadn’t checked one last item off their eligibility list, such as providing their parole officer with a paystub. And, even when parolees advocated for themselves, the archaic system made it difficult for their parole officers to confirm their eligibility, so they remained in the system. Jacoby and her team also unpacked specific policies that drive racial disparities, such as fines and fees.

The Solution

It’s more than a trivial technical challenge to bring the incomplete, fragmented data onto a 21st century data platform. It takes months for Recidiviz to sift through a state’s information systems to connect databases “with the goal of tracking a person all the way through their journey and find out what’s working for 18- to 25-year-old men, what’s working for new mothers,” explains Jacoby in the video.

TED Talk: How bad data traps people in the U.S. justice system

TED Fellow Clementine Jacoby's TED Talk went live on Jan. 13. It describes how we can fix bad data in the criminal justice system, "bringing thousands of people home, reducing costs and improving public safety along the way."

Clementine Jacoby • TED2022

Ojmarrh Mitchell, an associate professor in the School of Criminology and Criminal Justice at Arizona State University, who is not involved with the company, says what Recidiviz is doing is “remarkable.” His perspective goes beyond academic analysis. In his pre-academic years, Mitchell was a probation officer, working within the framework of the “well known, but invisible” information sharing issues that plague criminal justice departments. The flexibility of Recidiviz’s approach is what makes it especially innovative, he says. “They identify the specific gaps in each jurisdiction and tailor a solution for that jurisdiction.”

On the downside, the process used by Recidiviz is “a bit opaque,” Mitchell says, with few details available on how Recidiviz designs its tools and tracks outcomes. By sharing more information about how its actions lead to progress in a given jurisdiction, Recidiviz could help reformers in other places figure out which programs have the best potential to work well.

The 11 states in which Recidiviz is working include California, Colorado, Maine, Michigan, Missouri, Pennsylvania and Tennessee. And a pilot program launched last year in Idaho, if scaled nationally, could reduce the number of people in the criminal justice system by a quarter of a million, Jacoby says. As part of the pilot, rather than relying on manual calculations, Recidiviz is equipping leaders and probation officers with actionable information, available with a few clicks in an app that Recidiviz built.

Mitchell is disappointed that there’s even the need for Recidiviz. “This is a problem that government agencies have a responsibility to address,” he says. “But they haven’t.” For one company to come along and fill such a large gap is “remarkable.”

Should We Use Technologies to Enhance Morality?

Should we welcome biomedical technologies that could enhance our ability to tell right from wrong and improve behaviors that are considered immoral such as dishonesty, prejudice and antisocial aggression?

Our moral ‘hardware’ evolved over 100,000 years ago while humans were still scratching the savannah. The perils we encountered back then were radically different from those that confront us now. To survive and flourish in the face of complex future challenges our archaic operating systems might need an upgrade – in non-traditional ways.

Morality refers to standards of right and wrong when it comes to our beliefs, behaviors, and intentions. Broadly, moral enhancement is the use of biomedical technology to improve moral functioning. This could include augmenting empathy, altruism, or moral reasoning, or curbing antisocial traits like outgroup bias and aggression.

The claims related to moral enhancement are grand and polarizing: it’s been both tendered as a solution to humanity’s existential crises and bluntly dismissed as an armchair hypothesis. So, does the concept have any purchase? The answer leans heavily on our definition and expectations.

One issue is that the debate is often carved up in dichotomies – is moral enhancement feasible or unfeasible? Permissible or impermissible? Fact or fiction? On it goes. While these gesture at imperatives, trading in absolutes blurs the realities at hand. A sensible approach must resist extremes and recognize that moral disrupters are already here.

We know that existing interventions, whether they occur unknowingly or on purpose, have the power to modify moral dispositions in ways both good and bad. For instance, neurotoxins can promote antisocial behavior. The ‘lead-crime hypothesis’ links childhood lead-exposure to impulsivity, antisocial aggression, and various other problems. Mercury has been associated with cognitive deficits, which might impair moral reasoning and judgement. It’s well documented that alcohol makes people more prone to violence.

So, what about positive drivers? Here’s where it gets more tangled.

Medicine has long treated psychiatric disorders with drugs like sedatives and antipsychotics. However, there’s scant mention of morality in the Diagnostic and Statistical Manual of Mental Disorders (DSM) despite the moral merits of pharmacotherapy – these effects are implicit and indirect. Such cases are regarded as treatments rather than enhancements.

Conventionally, an enhancement must go beyond what is ‘normal,’ species-typical, or medically necessary – this is known as the ‘treatment-enhancement distinction.’ But boundaries of health and disease are fluid, so whether we call a procedure ‘moral enhancement’ or ‘medical treatment’ is liable to change with shifts in social values, expert opinions, and clinical practices.

Human enhancements are already used for a range of purported benefits: caffeine, smart drugs, and other supplements to boost cognitive performance; cosmetic procedures for aesthetic reasons; and steroids and stimulants for physical advantage. More boldly, cyborgs like Moon Ribas and Neil Harbisson are pushing transpecies boundaries with new kinds of sensory perception. It would be dangerously myopic to assume that moral augmentation is somehow beyond reach.

How might it work?

One possibility for shaping moral temperaments is with neurostimulation devices. These use electrodes to deliver a low-intensity current that alters the electromagnetic activity of specific neural regions. For instance, transcranial direct current stimulation (tDCS) can target parts of the brain involved in self-awareness, moral judgement, and emotional decision-making. It’s been shown to increase empathy and value-based learning, and decrease aggression and risk-taking behavior. Many countries already use tDCS to treat pain and depression, but evidence for enhancement effects on healthy subjects is mixed.

Another suggestion is targeting neuromodulators like serotonin and dopamine. Serotonin is linked to prosocial attributes like trust, fairness, and cooperation, but low activity is thought to motivate desires for revenge and harming others. It’s not as simple as indiscriminately boosting brain chemicals though. While serotonin is amenable to SSRIs, precise levels are difficult to measure and track, and there’s no scientific consensus on the “optimum” amount or on whether such a value even exists. Fluctuations due to lifestyle factors such as diet, stress, and exercise add further complexity. Currently, more research is needed on the significance of neuromodulators and their network dynamics across the moral landscape.

There are a range of other prospects. The ‘love drugs’ oxytocin and MDMA mediate pair bonding, cooperation, and social attachment, although some studies suggest that people with high levels of oxytocin are more aggressive toward outsiders. Lithium is a mood stabilizer that has been shown to reduce aggression in prison populations; beta-blockers like propranolol and the supplement omega-3 have similar effects. Increasingly, brain-computer interfaces augur a world of brave possibilities. Such appeals are not without limitations, but they indicate some ways that external tools can positively nudge our moral sentiments.

Who needs morally enhancing?

A common worry is that enhancement technologies could be weaponized for social control by authoritarian regimes, or used like the oppressive eugenics of the early 20th century. Fortunately, the realities are far more mundane and such dystopian visions are fantastical. So, what are some actual possibilities?

Some researchers suggest that neurotechnologies could help to reactivate brain regions of those suffering from moral pathologies, including healthy people with psychopathic traits (like a lack of empathy). Another proposal is using such technology on young people with conduct problems to prevent serious disorders in adulthood.

A question is whether these kinds of interventions should be compulsory for dangerous criminals. On the other hand, a voluntary treatment for inmates wouldn’t be so different from existing incentive schemes. For instance, some U.S. jurisdictions already offer drug treatment programs in exchange for early release or instead of prison time. Then there’s the difficult question of how we should treat non-criminal but potentially harmful ‘successful’ psychopaths.

Others argue that if virtues have a genetic component, there is no technological reason why present practices of embryo screening for genetic diseases couldn’t also be used for selecting socially beneficial traits.

Perhaps the most immediate scenario is a kind of voluntary moral therapy, which would use biomedicine to facilitate ideal brain-states to augment traditional psychotherapy. Most of us aren’t always as ethical as we would like – given the option of ‘priming’ yourself to act in consistent accord with your higher values, would you take it? Approaches like neurofeedback and psychedelic-assisted therapy could prove helpful.

What are the challenges?

A general challenge is that of setting. Morality is context dependent; what’s good in one environment may be bad in another and vice versa, so we don’t want to throw out the baby with the bathwater. Of course, common sense tells us that some tendencies are more socially desirable than others: fairness, altruism, and openness are clearly preferred over aggression, dishonesty, and prejudice.

One argument is that remoulding ‘brute impulses’ via biology would not count as moral enhancement. This view claims that for an action to truly count as moral it must involve cognition – reasoning, deliberation, judgement – as a necessary part of moral behavior. Critics argue that we should be concerned more with ends than with means, so ultimately it’s outcomes that matter most.

Another worry is that modifying one biological aspect will have adverse knock-on effects for other valuable traits. Certainly, we must be careful about the network impacts of any intervention. But all stimuli have distributed effects on the body, so it’s really a matter of weighing up the cost/benefit trade-offs as in any standard medical decision.

Is it ethical?

Our values form a big part of who we are – some bioethicists argue that altering morality would pose a threat to character and personal identity. Another claim is that moral enhancement would compromise autonomy by limiting a person’s range of choices and curbing their ‘freedom to fall.’ Any intervention must consider the potential impacts on selfhood and personal liberty, in addition to the wider social implications.

This includes the importance of social and genetic diversity, which is closely tied to considerations of fairness, equality, and opportunity. The history of psychiatry is rife with examples of systematic oppression, like ‘drapetomania’ – the spurious mental illness that was thought to cause African slaves’ desire to flee captivity. Advocates for using moral enhancement technologies to help kids with conduct problems should be mindful that they disproportionately come from low-income communities. We must ensure that any habilitative practice doesn’t perpetuate harmful prejudices by unfairly targeting marginalized people.

Then, there are concerns that morally-enhanced persons would be vulnerable to predation by those who deliberately avoid moral therapies. This relates to what’s been dubbed the ‘bootstrapping problem’: would-be moral enhancement candidates are the types of individuals that benefit from not being morally enhanced. Imagine if every senator was asked to undergo an honesty-boosting procedure prior to entering public office – would they go willingly? Then again, perhaps a technological truth-serum wouldn’t be such a bad requisite for those in positions of stern social consequence.

Advocates argue that biomedical moral betterment would simply offer another means of pursuing the same goals as fixed social mechanisms like religion, education, and community, and non-invasive therapies like cognitive-behavior therapy and meditation. It’s even possible that technological efforts would be more effective. After all, human capacities are the result of environmental influences, and external conditions still coax our biology in unknown ways. Status quo bias for ‘letting nature take its course’ may actually be worse long term – failing to utilize technology for human development may do more harm than good. If we can safely improve ourselves in direct and deliberate ways then there’s no morally significant difference whether this happens via conventional methods or new technology.

Future prospects

Where speculation about human enhancement has led to hype and technophilia, many bioethicists urge restraint. We can be grounded in current science while anticipating feasible medium-term prospects. It’s unlikely moral enhancement heralds any metamorphic post-human utopia (or dystopia), but that doesn’t mean dismissing its transformative potential. In one sense, we should be wary of transhumanist fervour about the salvatory promise of new technology. By the same token we must resist technofear and alarmist efforts to balk social and scientific progress. Emerging methods will continue to shape morality in subtle and not-so-subtle ways – the critical steps are spotting and scaffolding these with robust ethical discussion, public engagement, and reasonable policy options. Steering a bright and judicious course requires that we pilot the possibilities of morally-disruptive technologies.

Podcast: The Friday Five - your health research roundup

The Friday Five is a new podcast series in which Leaps.org covers five breakthroughs in research over the previous week that you may have missed. There are plenty of controversies and ethical issues in science – and we get into many of them in our online magazine – but there’s also plenty to be excited about, and this news roundup is focused on inspiring scientific work to give you some momentum headed into the weekend.

Covered in this week's Friday Five:

- Puffer fish chemical for treating chronic pain

- Sleep study on the health benefits of waking up multiple times per night

- Best exercise regimens for reducing the risk of mortality aka living longer

- AI breakthrough in mapping protein structures with DeepMind

- Ultrasound stickers to see inside your body