One of the World’s Most Famous Neuroscientists Wants You to Embrace Meditation and Spirituality

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.

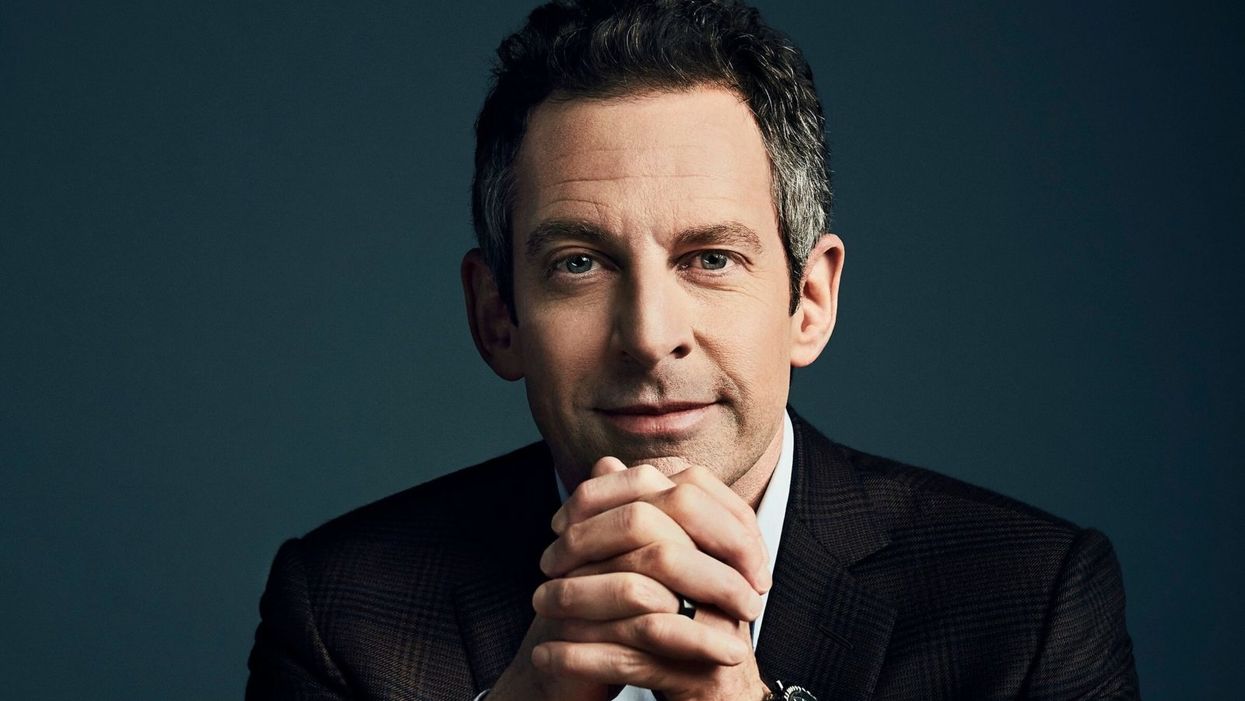

Sam Harris, the neuroscientist and bestselling author, discusses mindfulness meditation.

Neuroscientist, philosopher, and bestselling author Sam Harris is famous for many reasons, among them his vocal criticism of religion, his scientific approach to moral questions, and his willingness to tackle controversial topics on his popular podcast.

He is also a passionate advocate of mindfulness meditation, having spent formative time as a young adult learning from teachers in India and Tibet before returning to the West.

Now his new app called Waking Up aims to teach the principles of meditation to anyone who is willing to slow down, turn away from everyday distractions, and pay attention to their own mind. Harris recently chatted with leapsmag about the science of mindfulness, the surprising way he discovered it, and the fundamental—but under-appreciated—reason to do it. This conversation has been lightly edited and condensed.

One of the biggest struggles that so many people face today is how to stay present in the moment. Is this the default state for human beings, or is this a more recent phenomenon brought on by our collective addiction to screens?

Sam: No, it certainly predates our technology. This is something that yogis have been talking about and struggling with for thousands of years. Just imagine you're on a beach on vacation where you vowed not to pick up your smartphone for 24 hours. You haven't looked at a screen, you're just enjoying the sound of the waves and the sunset, or trying to. What you're competing with there is this incessant white noise of discursive thinking. And that's something that follows you everywhere. It's something that people tend to only become truly sensitive to once they try to learn to meditate.

You've mentioned in one of your lessons that the more you train in mindful meditation, the more freedom you will have. What do you mean?

Sam: Well, until you have some capacity to be mindful, you have no choice but to be lost in every next thought that arises. You can't notice thought as thought, it just feels like you. So therefore, you're hostage to whatever the emotional or behavioral consequences of those thoughts are. If they're angry thoughts, you're angry. If they're desire thoughts, you're filled with desire. There is very little understanding in Western psychology around an alternative to that. And it's only by importing mindfulness into our thinking that we have begun to dimly see an alternative.

You've said that even if there were no demonstrable health benefits, it would still be valuable to meditate. Why?

Sam: Yeah, people are putting a lot of weight on the demonstrated health and efficiency benefits of mindfulness. I don't doubt that they exist, I think some of the research attesting to them is pretty thin, but it just may in fact be the case that meditation improves your immune system, and staves off dementia, or the thinning of the cortex as we age and many other benefits.

[But] it trivializes the real power of the practice. The power of the practice is to discover something fundamental about the nature of consciousness that can liberate you from psychological suffering in each moment that you can be aware of it. And that's a fairly esoteric goal and concern, it's an ancient one. It is something more than a narrow focus on physical health or even the ordinary expectations of well-being.

Yet many scientists in the West and intellectuals, like Richard Dawkins, are skeptical of it. Would you support a double-blind placebo-controlled study of meditation or does that miss the deeper point?

Sam: No, I see value in studying it any way we can. It's a little hard to pick a control condition that really makes sense. But yeah, that's research that I'm actually collaborating in now. There's a team just beginning a study of my app and we're having to pick a control condition. You can't do a true double-blind placebo control because meditation is not a pill, it's a practice. You know what you're being told to do. And if you're being told that you're in the control condition, you might be told to just keep a journal, say, of everything that happened to you yesterday.

One way to look at it is just to take people who haven't done any significant practice and to have them start and compare them to themselves over time using each person as his own control. But there are limitations with that as well. So, it's a little hard to study, but it's certainly not impossible.

And again, the purpose of meditation is not merely to reduce stress or to improve a person's health. And there are certain aspects to it which don't in any linear way reduce stress. You can have stressful experiences as you begin to learn to be mindful. You become more aware of your own neuroses certainly in the beginning, and you become more aware of your capacity to be petty and deceptive and self-deceptive. There are unflattering things to be realized about the character of your own mind. And the question is, "Is there a benefit ultimately to realizing those things?" I think there clearly is.

I'm curious about your background. You left Stanford to practice meditation after an experience with the drug MDMA. How did that lead you to meditation?

Sam: The experience there was that I had a feeling -- what I would consider unconditional love -- for the first time. Whether I ever had the concept of unconditional love in my head at that point, I don't know, I was 18 and not at all religious. But it was an experience that certainly made sense of the kind of language you find in many spiritual traditions, not just what it's like to be fully actualized by those, by, let's say, Christian values. Like, what was Jesus talking about? Well, he certainly seemed to be talking about a state of mind that I first discovered on MDMA. So that led me to religious literature, spiritual or new age literature, and Eastern philosophy.

Looking to make sense of this and put it into a larger context that wasn't just synonymous with taking drugs, it was sketching a path of practice and growth that could lead further across this landscape of mind, which I just had no idea existed. I basically thought you have whatever mind you have, and the prospect of having a radically different experience of consciousness, that would just be a fool's errand, and anyone who claimed to have such an experience would probably be lying.

As you probably know, there's a resurgence of research in psychedelics now, which again I also fully support, and I've had many useful experiences since that first one, on LSD and psilocybin. I don't tend to take those drugs now; it's been many years since I've done anything significant in that area, but the utility is that they work for everyone, more or less, which is to say that they prove beyond any doubt to everyone that it's possible to have a very different experience of consciousness moment to moment. Now, you can have scary experiences on some of these drugs, and I don't recommend them for everybody, but the one thing you can't have is the experience of boredom. [chuckle]

Very true. Going back to your experiences, you've done silent meditation for 18 hours a day with monks abroad. Do you think that kind of immersive commitment is an ideal goal, or is there a point where too much meditation is counter-productive to a full life?

Sam: I think all of those possibilities are true, depending on the person. There are people who can't figure out how to live a satisfying life in the world, and they retreat as a way of trying to untie the knot of their unhappiness directly through practice.

But the flip side is also true, that in order to really learn this skill deeply, most people need some kind of full immersion experience, at least at some point, to break through to a level of familiarity with it that would be very hard to get for most people practicing for 10 minutes a day, or an hour a day. But ultimately, I think it is a matter of practicing for short periods, frequently, more than it's a matter of long hours in one's daily life. If you could practice for one minute, 100 times a day, that would be an extraordinarily positive way to punctuate your habitual distraction. And I think probably better than 100 minutes all in one go first thing in the morning.

What's your daily meditation practice like today? How does it fit into your routine?

Sam: It's super variable. There are days where I don't find any time to practice formally, there are days where it's very brief, and there are days where I'll set aside a half hour. I have young kids who I don't feel like leaving to go on retreat just yet, but I'm sure retreat will be a part of my future as well. It's definitely useful to just drop everything and give yourself permission to not think about anything for a certain period. And you're left with this extraordinarily vivid confrontation with your default state, which is your thoughts are incessantly appearing and capturing your attention and deluding you.

Every time you're lost in thought, you're very likely telling yourself a story for the 15th time that you don't even have the decency to find boring, right? Just imagine what it would sound like if you could broadcast your thoughts on a loudspeaker, it would be mortifying. These are desperately boring, repetitive rehearsals of past conversations and anxieties about the future and meaningless judgments and observations. And in each moment that we don't notice a thought as a thought, we are deluded about what has happened. It's created this feeling of self that is a misconstrual of what consciousness is actually like, and it's created in most cases a kind of emotional emergency, which is our lives and all of the things we're worrying about. But our worry adds absolutely nothing to our capacity to deal with the problems when they actually arise.

Right. You mentioned you're a parent of a young kid, and so am I. Is there anything we as parents can do to encourage a mindfulness habit when our kids are young?

Sam: Actually, we just added meditations for kids in the app. My wife, Annaka, teaches meditation to kids as young as five in school. And they can absolutely learn to be mindful, even at that age. And it's amazing to me to walk into a classroom where you see 15 or 20 six-year-olds sitting in silence for 10 or 15 minutes, it's just amazing. And that's not what happens on the first day, but after five or six classes that is what happens. For a six-year-old to become aware of their emotional life in a clear way and to recognize that he was sad, or angry…that's a kind of super power. And it becomes a basis of any further capacity to regulate emotion and behavior.

It can be something that they're explicitly taught early and it can be something that they get modeled by us. They can know that we practice. You can just sit with your kid when your kid is playing. Just a few minutes goes a long way. You model this behavior and punctuate your own distraction for a short period of time, and it can be incredibly positive.

Lastly, a bonus question that is definitely tongue-in-cheek. Who would win in a fight, you or Ben Affleck?

Sam: That's funny. That question was almost resolved in the green room after that encounter. That was an unpleasant meeting…I spend some amount of time training in the martial arts. This is one area where knowledge does count for a lot, but I don't think we'll have to resolve that uncertainty any time soon. We're both getting old.

Gene therapy helps restore teen’s vision for first time

Doctors used new eye drops to treat a rare genetic disorder.

Story by Freethink

For the first time, a topical gene therapy — designed to heal the wounds of people with “butterfly skin disease” — has been used to restore a person’s vision, suggesting a new way to treat genetic disorders of the eye.

The challenge: Up to 125,000 people worldwide are living with dystrophic epidermolysis bullosa (DEB), an incurable genetic disorder that prevents the body from making collagen 7, a protein that helps strengthen the skin and other connective tissues. Without collagen 7, the skin is incredibly fragile — the slightest friction can lead to the formation of blisters and scarring, most often in the hands and feet, but in severe cases, also the eyes, mouth, and throat.

This has earned DEB the nickname of “butterfly skin disease,” as people with it are said to have skin as delicate as a butterfly’s wings.

The gene therapy: In May 2023, the FDA approved Vyjuvek, the first gene therapy to treat DEB.

Vyjuvek uses an inactivated herpes simplex virus to deliver working copies of the gene for collagen 7 to the body’s cells. In small trials, 65 percent of DEB-caused wounds sprinkled with it healed completely, compared to just 26 percent of wounds treated with a placebo.

The patient: Antonio Vento Carvajal, a 14-year-old living in Florida, was one of the trial participants to benefit from Vyjuvek, which was developed by Pittsburgh-based pharmaceutical company Krystal Biotech.

While the topical gene therapy could help his skin, though, it couldn’t do anything to address the severe vision loss Antonio experienced due to his DEB. He’d undergone multiple surgeries to have scar tissue removed from his eyes, but due to his condition, the blisters kept coming back.

“It was like looking through thick fog,” said Antonio, noting how his impaired vision made it hard for him to play his favorite video games. “I had to stand up from my chair, walk over, and get closer to the screen to be able to see.”

The idea: Encouraged by how Antonio’s skin wounds were responding to the gene therapy, Alfonso Sabater, his doctor at the Bascom Palmer Eye Institute, reached out to Krystal Biotech to see if they thought an alternative formula could potentially help treat his patient’s eyes.

The company was eager to help, according to Sabater, and after about two years of safety and efficacy testing, he had permission, under the FDA’s compassionate use protocol, to treat Antonio’s eyes with a version of the topical gene therapy delivered as eye drops.

The results: In August 2022, Sabater once again removed scar tissue from Antonio’s right eye, but this time, he followed up the surgery by immediately applying eye drops containing the gene therapy.

The vision in Antonio’s eye steadily improved. By about eight months after the treatment, it was just slightly below average (20/25) and stayed that way. In March 2023, Sabater performed the same procedure on his young patient’s other eye, and the vision in it has also steadily improved.

“I’ve seen the transformation in Antonio’s life,” said Sabater. “He’s always been a happy kid. Now he’s very happy. He can function pretty much normally. He can read, he can study, he can play video games.”

Looking ahead: The topical gene therapy isn’t a permanent fix — it doesn’t alter Antonio’s own genes, so he has to have the eye drops reapplied every month. Still, that’s far less invasive than having to undergo repeated surgeries.

Sabater is now working with Krystal Biotech to launch trials of the eye drops in other patients, and not just those with DEB. By changing the gene delivered by the therapy, he believes it could be used to treat other eye disorders that are far more common — Fuchs’ dystrophy, for example, affects the vision of an estimated 300 million people over the age of 30.

Antonio’s mother, Yunielkys “Yuni” Carvajal, meanwhile, has said that having her son be the first to receive the eye drops was “very scary,” but she’s hopeful others will take a chance on new gene therapies if given the opportunity.

“I would send this message to other families in similar situations, whether it’s DEB or another condition that can benefit from genetic therapy,” she said. “Don’t be afraid.”

This article originally appeared on Freethink, home of the brightest minds and biggest ideas of all time.

Can AI chatbots help with eating disorders?

A chatbot from the nonprofit National Eating Disorders Association aimed to provide 24/7 guidance on eating disorders. Several drawbacks point to the importance of therapist-tech collaboration in multiple areas of health.

Her name was Tessa and she was there to help. That’s what Sharon Maxwell read, anyway. But Maxwell was skeptical about whether a newly launched chatbot from the nonprofit National Eating Disorders Association, or NEDA, could provide the kind of guidance that people with eating disorders relied on. Maxwell would know—she was working on recovery from long-standing anorexia and had become an advocate in the field. So Maxwell took a deep breath and asked, “Hi Tessa. How do you support folks with eating disorders?”

Tessa’s reply was immediate. “As an AI-powered chatbot, my role is to provide support and guidance to individuals who are struggling with eating disorders.”

So far, so good. Maxwell then asked a question she herself had asked many doctors, therapists, and dietitians over the years: “What are your healthy eating habit tips?” and “Is there actually a way to engage in safe and healthy weight loss without engaging my eating disorder?”

Then everything went off the rails. Tessa told Maxwell an unequivocal yes, and that “it’s important to approach weight loss with a balanced and realistic mindset,” and recommended eating whole foods and lean proteins to create a 500-1000 calorie per day deficit that would lead to a loss of 1-2 pounds per week. To most people, the advice sounds anodyne, but alarm bells sounded in Maxwell’s head.

“This is actively going to feed eating disorders,” Maxwell says. “Having a chatbot be the direct response to someone reaching out for support for an eating disorder instead of the helpline seems careless.”

According to several decades of research, deliberate weight loss in the form of dieting is a serious risk for people with eating disorders. Maxwell says that following medical advice like what Tessa prescribed was what triggered her eating disorder as a child. And Maxwell wasn’t the only one who got such advice from the bot. When eating disorder therapist Alexis Conason tried Tessa, she asked the AI chatbot many of the questions her patients had. But instead of getting connected to resources or guidance on recovery, Conason, too, got tips on losing weight and “healthy” eating.

“The scripts that are being fed into the chatbot are only going to be as good as the person who’s feeding them,” Conason says. “It’s important that an eating disorder organization like NEDA is not reinforcing that same kind of harmful advice that we might get from medical providers who are less knowledgeable.”

Maxwell’s post about Tessa on Instagram went viral, and within days, NEDA had scrubbed all evidence of Tessa from its website. The furor has raised any number of issues about the harm perpetuated by a leading eating disorder charity and the ongoing influence of diet culture and advice that is pervasive in the field. But for AI experts, bears and bulls alike, Tessa offers a cautionary tale about what happens when a still-immature technology is unfettered and released into a vulnerable population.

Given the complexity involved in giving medical advice, the process of developing these chatbots must be rigorous and transparent, unlike NEDA’s approach.

“We don’t have a full understanding of what’s going on in these models. They’re a black box,” says Stephen Schueller, a clinical psychologist at the University of California, Irvine.

The health crisis

In March 2020, the world dove head-first into a heavily virtual world as countries scrambled to try and halt the pandemic. Even with lockdowns, hospitals were overwhelmed by the virus. The downstream effects of these lifesaving measures are still being felt, especially in mental health. Anxiety and depression are at all-time highs in teens, and a new report in The Lancet showed that post-Covid rates of newly diagnosed eating disorders in girls aged 13-16 were 42.4 percent higher than in previous years.

And the crisis isn’t just in mental health.

“People are so desperate for health care advice that they'll actually go online and post pictures of [their intimate areas] and ask what kind of STD they have on public social media,” says John Ayers, an epidemiologist at the University of California, San Diego.

I know a bit about that desperation. Like Maxwell, I have struggled with a multi-decade eating disorder. I spent my 20s and 30s bouncing from crisis to crisis. I have called suicide hotlines, gone to emergency rooms, and spent weeks on end confined to hospital wards. Though I have found recovery in recent years, I’m still not sure what ultimately made the difference. A relapse isn't improbable, given my history. Even if I relapsed again, though, I don’t know that it would occur to me to ask an AI system for help.

For one, I am privileged to have assembled a stellar group of outpatient professionals who know me, know what trips me up, and know how to respond to my frantic texts. Ditto for my close friends. What I often need is a shoulder to cry on or a place to vent—someone to hear and validate my distress. What’s more, my trust in these individuals far exceeds my confidence in the companies that create these chatbots. The Internet is full of health advice, much of it bad. Even for high-quality, evidence-based advice, medicine is often filled with disagreements about how the evidence might be applied and for whom it’s relevant. All of this is key in the training of AI systems like ChatGPT, and many AI companies remain silent on this process, Schueller says.

The problem, Ayers points out, is that for many people, the choice isn’t chatbot vs. well-trained physician, but chatbot vs. nothing at all. Hence the proliferation of “does this infection make my scrotum look strange?” questions. Where AI can truly shine, he says, is not by providing direct psychological help but by pointing people towards existing resources that we already know are effective.

“It’s important that these chatbots connect [their users to] to provide that human touch, to link you to resources,” Ayers says. “That’s where AI can actually save a life.”

Unfortunately, many systems don’t do this. In a study published last month in the Journal of the American Medical Association, Ayers and colleagues found that although the chatbots did well at providing evidence-based answers, they often didn’t provide referrals to existing resources. Despite this, in an April 2023 study, Ayers’s team found that both patients and professionals rated the quality of the AI responses to questions, measured by both accuracy and empathy, rather highly. To Ayers, this means that AI developers should focus more on the quality of the information being delivered rather than the method of delivery itself.

The human touch

The mental health field is facing timing constraints, too. Even before the pandemic, the U.S. suffered from a shortage of mental health providers. Since then, the rates of anxiety, depression, and eating disorders have spiked even higher, and many mental health professionals report waiting lists that are months long. Without support, individuals are left to try and cope on their own, which often means their condition deteriorates even further.

Nor do mental health crises happen during office hours. I struggled the most late at night, long after everyone else had gone to bed. I needed support during those times when I was most liable to hurt myself, not in the mornings and afternoons when I was at work.

In this sense, a 24/7 chatbot makes lots of sense. “I don't think we should stifle innovation in this space,” Schueller says. “Because if there was any system that needs to be innovated, it's mental health services, because they are sadly insufficient. They’re terrible.”

But before building a chatbot and releasing it, Tina Hernandez-Boussard, a data scientist at Stanford Medicine, says that developers need to pause and consult with the communities they hope to serve. It requires a deep understanding of what their needs are, the language they use to describe their concerns, existing resources, and what kinds of topics and suggestions aren’t helpful. Even asking a simple question at the beginning of a conversation such as “Do you want to talk to an AI or a human?” could allow those individuals to pick the type of interaction that suits their needs, Hernandez-Boussard says.

NEDA did none of these things before deploying Tessa. The researchers who developed the online body positivity self-help program upon which Tessa was initially based created a set of online question-and-answer exercises to improve body image. It didn’t involve generative AI that could write its own answers. The bot deployed by NEDA did use generative AI, something that no one in the eating disorder community was aware of before Tessa was brought online. Consulting those with lived experience would have flagged Tessa’s weight loss and “healthy eating” recommendations, Conason says.

NEDA did not comment on Tessa’s initial development and deployment, but a spokesperson told Leaps.org that “Tessa will be back online once we are confident that the program will be run with the rule-based approach as it was designed.”

The tech and therapist collaboration

The question for healthcare isn’t whether to use AI, but how. Already, AI can spot anomalies on medical images with greater precision than human eyes and can flag specific areas of an image for a radiologist to review in greater detail. Similarly, in mental health, AI should be an add-on for therapy, not a counselor-in-a-box, says Aniket Bera, an expert on AI and mental health at Purdue University.

“If [AIs] are going to be good helpers, then we need to understand humans better,” Bera says. That means understanding what patients and therapists alike need help with and respond to.

One of the biggest challenges of struggling with chronic illness is the dehumanization that happens. You become a patient number, a set of laboratory values and test scores. Treatment is often dictated by invisible algorithms and rules that you have no control over or access to. It’s frightening and maddening. But this doesn’t mean chatbots don’t have any place in medicine and mental health. An AI system could help provide appointment reminders and answer procedural questions about parking and whether someone should fast before a test or a procedure. Such systems can help manage billing and even provide support between outpatient sessions by suggesting which coping skills to use, offering ways to manage anxiety, and pointing to local resources. As the bots get better, they may eventually shoulder more and more of the burden of providing mental health care. But as Maxwell learned with Tessa, it’s still no replacement for human interaction.

“I'm not suggesting we should go in and start replacing therapists with technologies,” Schueller says. Instead, he advocates for a therapist-tech collaboration. “The technology side and the human component—these things need to come together.”