Alzheimer’s prevention may be less about new drugs, more about income, zip code and education

(Left to right) Vickie Naylor, Bernadine Clay, and Donna Maxey read a memory prompt as they take part in the Sharing History through Active Reminiscence and Photo-Imagery (SHARP) study, September 20, 2017.

That your risk of Alzheimer’s disease depends on your salary, what you ate as a child, or the block where you live may seem implausible. But researchers are discovering that social determinants of health (SDOH) play an outsized role in Alzheimer’s disease and related dementias, possibly more than age, and new strategies are emerging for how to address these factors.

At the 2022 Alzheimer’s Association International Conference, a series of presentations offered evidence that a string of socioeconomic factors, such as employment status, social support networks, education and home ownership, significantly affected dementia risk, even when adjusting data for genetic risk. What’s more, memory declined more rapidly in people who earned lower wages and more slowly in people whose parents had higher socioeconomic status.

In 2020, a first-of-its-kind study in JAMA linked Alzheimer’s incidence to “neighborhood disadvantage,” which is based on SDOH indicators. Through autopsies, researchers analyzed brain tissue markers related to Alzheimer’s and found an association with these indicators. In 2022, Ryan Powell, the lead author of that study, published further findings that neighborhood disadvantage was connected with having more neurofibrillary tangles and amyloid plaques, the main pathological features of Alzheimer’s disease.

As of yet, little is known about the biological processes behind this, says Powell, director of data science at the Center for Health Disparities Research at the University of Wisconsin School of Medicine and Public Health. “We know the association but not the direct causal pathway.”

The corroborative findings keep coming. In a Nature study published a few months after Powell’s study, every social determinant investigated affected Alzheimer’s risk except for marital status. The links were strongest for income, education, and occupational status.

The potential for prevention is significant. One in three older adults dies with Alzheimer's or another dementia—more than breast and prostate cancers combined. Further, a 2020 report from the Lancet Commission determined that about 40 percent of dementia cases could theoretically be prevented or delayed by managing the risk factors that people can modify.

Take inactivity. Older adults who took 9,800 steps daily were half as likely to develop dementia over the next 7 years, in a 2022 JAMA study. Hearing loss, another risk factor that can be managed, accounts for about 9 percent of dementia cases.

Clinical trials on new Alzheimer’s medications get all the headlines but preventing dementia through policy and public health interventions should not be underestimated. Simply slowing the course of Alzheimer’s or delaying its onset by five years would cut the incidence in half, according to the Global Council on Brain Health.

Minorities Hit the Hardest

The World Health Organization defines SDOH as “conditions in which people are born, work, live, and age, and the wider set of forces and systems shaping the conditions of daily life.”

Anyone who exists on processed food, smokes cigarettes, or skimps on sleep has heightened risks for dementia. But minority groups get hit harder. Older Black Americans are twice as likely to have Alzheimer’s or another form of dementia as white Americans; older Hispanics are about one and a half times more likely.

This is due in part to higher rates of diabetes, obesity, and high blood pressure within these communities. These diseases are linked to Alzheimer’s, and SDOH factors multiply the risks. Black and Hispanic Americans earn less on average than white Americans. This means they are more likely to live in neighborhoods with limited access to healthy food, medical care, and good schools, and to suffer greater exposure to noise (which impairs hearing) and air pollution, both additional risk factors for dementia.

Plus, when Black people are diagnosed with dementia, their cognitive impairment and neuropsychiatric symptoms are more advanced than in white patients. Why? Some African Americans delay seeing a doctor because of perceived discrimination and a sense they will not be heard, says Carl V. Hill, chief diversity, equity, and inclusion officer at the Alzheimer’s Association.

Misinformation about dementia is another issue in Black communities. Many people assume that Alzheimer’s is simply genetic or age-related, without realizing that diet and physical activity can improve brain health, Hill says.

African Americans are severely underrepresented in clinical trials for Alzheimer’s, too. So, researchers miss the opportunity to learn more about health disparities. “It’s a bioethical issue,” Hill says. “The people most likely to have Alzheimer’s aren’t included in the trials.”

The Cure: Systemic Change

People think of lifestyle as a choice but there are limitations, says Muniza Anum Majoka, a geriatric psychiatrist and assistant professor of psychiatry at Yale University, who published an overview of SDOH factors that impact dementia. “For a lot of people, those choices [to improve brain health] are not available,” she says. If you don’t live in a safe neighborhood, for example, walking for exercise is not an option.

Hill wants to see the focus of prevention shift from individual behavior change to ensuring everyone has access to the same resources. Advice about healthy eating only goes so far if someone lives in a food desert. Systemic change also means increasing the number of minority physicians and recruiting minorities in clinical drug trials so studies will be relevant to these communities, Hill says.

Based on SDOH impact research, raising education levels has the most potential to prevent dementia. One theory is that highly educated people have a greater brain reserve that enables them to tolerate pathological changes in the brain, thus delaying dementia, says Majoka. Being curious, learning new things and problem-solving also contribute to brain health, she adds. Plus, having more education may be associated with higher socioeconomic status, more access to accurate information and healthier lifestyle choices.

New Strategies

The chasm between what researchers know about brain health and how the knowledge is being applied is huge. “There’s an explosion of interest in this area. We’re just in the first steps,” says Powell. He predicts that one day physicians will manage Alzheimer’s through precision medicine customized to the patient’s specific risk factors and needs.

Raina Croff, assistant professor of neurology at Oregon Health & Science University School of Medicine, created the SHARP (Sharing History through Active Reminiscence and Photo-imagery) walking program to forestall memory loss in African Americans with mild cognitive impairment or early dementia.

Participants and their caregivers walk in historically Black neighborhoods three times a week over six months. A smart tablet provides information about “Memory Markers” they pass, such as the route of a civil rights march. People celebrate their community and culture while “brain health is running in the background,” Croff says.

Photos and memory prompts engage participants in the SHARP program.

OHSU/Kristyna Wentz-Graff

The project began in 2015 as a pilot study in Croff’s hometown of Portland, Ore., expanded to Seattle, and will soon start in Oakland, Calif. “Walking is good for slowing [brain] decline,” she says. A post-study assessment of 40 participants in 2017 showed that half had higher cognitive scores after the program; 78 percent had lower blood pressure; and 44 percent lost weight. Those with mild cognitive impairment showed the most gains. The walkers also reported improved mood and energy along with increased involvement in other activities.

It’s never too late to reap the benefits of working your brain and being socially engaged, Majoka says.

In Milwaukee, the Wisconsin Alzheimer’s Institute launched The Amazing Grace Chorus® to stave off cognitive decline in seniors. People in early stages of Alzheimer’s practice and perform six concerts each year. The activity provides opportunities for social engagement, mental stimulation, and a support network. Among the benefits, 55 percent reported better communication at home and nearly half of participants said they got involved with more activities after participating in the chorus.

Private companies are offering intervention services to healthcare providers and insurers to manage SDOH, too. One such service, MyHello, makes calls to at-risk people to assess their needs—be it food, transportation or simply a friendly voice. Having a social support network is critical for seniors, says Majoka, noting there was a steep decline in cognitive function among isolated elders during Covid lockdowns.

About 1 in 9 Americans age 65 or older live with Alzheimer’s today. With a surge in people with the disease predicted, public health professionals have to think more broadly about resource targets and effective intervention points, Powell says.

Beyond breakthrough pills, that is. Like Dorothy in Kansas discovering happiness was always in her own backyard, we are beginning to learn that preventing Alzheimer’s is within our reach, if only we recognize it.

Real-Time Monitoring of Your Health Is the Future of Medicine

Implantable sensors and other surveillance technologies offer tremendous health benefits -- and ethical challenges.

The same way that it's harder to lose 100 pounds than it is to not gain 100 pounds, it's easier to stop a disease before it happens than to treat an illness once it's developed.

Bio-engineers working on the next generation of diagnostic tools say today's technologies, such as colonoscopies or mammograms, are reactive; that is, they often tell a person they are sick when it's too late to reverse course. Surveillance medicine, such as implanted sensors, will detect disease at its onset, in real time.

What Is Possible?

Ever since the Human Genome Project (which concluded in 2003 after mapping the DNA sequence of all 30,000 human genes), modern medicine has shifted to "personalized medicine," also called "precision health." Doctors in the 21st century can in some cases assess a person's risk for specific diseases from his or her DNA. The information enables women with a BRCA gene mutation, for example, to undergo more frequent screenings for breast cancer or to proactively choose to remove their breasts as a "just in case" measure.

But your DNA is not always enough to determine your risk of illness. Not all genetic mutations are harmful, for example, and people can get sick without a genetic cause, such as with an infection. Hence the need for a more "real-time" way to monitor health.

Aaron Morris, a postdoctoral researcher in the Department of Biomedical Engineering at the University of Michigan, wants doctors to be able to predict illness with pinpoint accuracy well before symptoms show up. Working in the lab of Dr. Lonnie Shea, the team is building "a tiny diagnostic lab" that can live under a person's skin and monitor for illness, 24/7. Currently being tested in mice, the Michigan team's porous biodegradable implant becomes part of the body as "cells move right in," says Morris, allowing engineered tissue to be biopsied and analyzed for diseases. The information collected by the sensors will enable doctors to predict disease flareups, such as for cancer relapses, so that therapies can begin well before a person comes out of remission. The technology will also measure the effectiveness of those therapies in real time.

In Morris' dream scenario "everyone will be implanted with a sensor" ("…the same way most people are vaccinated") and the sensor will alert people to go to the doctor if something is awry.

While it may be four or five decades before Morris' sensor becomes mainstream, "the age of surveillance medicine is here," says Jamie Metzl, a technology and healthcare futurist who penned Hacking Darwin: Genetic Engineering and the Future of Humanity. "It will get more effective and sophisticated and less obtrusive over time," says Metzl.

Already, Google compiles public health data about disease hotspots by amalgamating individual searches for medical symptoms; pill technology can digitally track when and how much medication a patient takes; and the Apple Watch heart app can predict with 85-percent accuracy if an individual using the wrist device has atrial fibrillation (AFib), a condition that can cause stroke, blood clots and heart failure, and goes undiagnosed in 700,000 people each year in the U.S.

"We'll never be able to predict everything," says Metzl. "But we will always be able to predict and prevent more and more; that is the future of healthcare and medicine."

Morris believes that within ten years there will be surveillance tools that can predict if an individual has contracted the flu well before symptoms develop.

At City College of New York, Ryan Williams, assistant professor of biomedical engineering, has built an implantable nano-sensor that works with a fluorescent wand to scope out if cancer cells are growing at the implant site. "Instead of having the ovary or breast removed, the patient could just have this [surveillance] device that can say 'hey we're monitoring for this' in real-time… [to] measure whether the cancer is maybe coming back, as opposed to having biopsy tests or undergoing treatments or invasive procedures."

Not all surveillance technologies that are being developed need to be implanted. At Case Western, Colin Drummond, PhD, MBA, a data scientist and assistant department chair of the Department of Biomedical Engineering, is building a "surroundable." He describes it as an Alexa-style surveillance system (he's named her Regina) that will "tell" the user, if a need arises for medication, how much to take and when.

Bioethical Red Flags

"Everyone should be extremely excited about our move toward what I call predictive and preventive health care and health," says Metzl. "We should also be worried about it. Because all of these technologies can be used well and they can [also] be abused." The concerns are many-layered:

Discriminatory practices

For years now, bioethicists have expressed concerns about employer-sponsored wellness programs that encourage fitness while also tracking employee health data. "Getting access to your health data can change the way your employer thinks about your employability," says Keisha Ray, assistant professor at the University of Texas Health Science Center at Houston (UTHealth). Such access can lead to discriminatory practices against employees who are less fit. "Surveillance medicine only heightens those risks," says Ray.

Who owns the data?

Surveillance medicine may help "democratize healthcare" which could be a good thing, says Anita Ho, an associate professor in bioethics at both the University of California, San Francisco and at the University of British Columbia. It would enable easier access by patients to their health data, delivered to smart phones, for example, rather than waiting for a call from the doctor. But, she also wonders who will own the data collected and if that owner has the right to share it or sell it. "A direct-to-consumer device is where the lines get a little blurry," says Ho. Currently, health data collected by Apple Watch is owned by Apple. "So we have to ask bigger ethical questions in terms of what consent should be required" by users.

Insurance coverage

"Consumers of these products deserve some sort of assurance that using a product that will predict future needs won't in any way jeopardize their ability to access care for those needs," says Hastings Center bioethicist Carolyn Neuhaus. She is urging lawmakers to begin tackling the policy issues created by surveillance medicine now, well ahead of the technology becoming mainstream, with protections not unlike GINA, the Genetic Information Nondiscrimination Act of 2008, a federal law designed to prevent discrimination in health insurance on the basis of genetic information.

And because not all Americans have insurance, Ho wants to know who is going to pay for this technology and how much it will cost.

Trusting our guts

Some bioethicists are concerned that surveillance technology will reduce individuals to their "risk profiles," leaving health care systems to perceive them as nothing more than a "bundle of health and security risks." Further, in our quest to predict and prevent ailments, Neuhaus wonders if an over-reliance on data could damage the ability of future generations to trust their gut and tune into their own bodies.

It "sounds kind of hippy-dippy and feel-goodie," she admits. But in our culture of medicine where efficiency is highly valued, there's "a tendency to not value and appreciate what one feels inside of their own body … [because] it's easier to look at data than to listen to people's really messy stories of how they 'felt weird' the other day. It takes a lot less time to look at a sheet, to read out what the sensor implanted inside your body or planted around your house says."

Ho, too, worries about lost narratives. "For surveillance medicine to actually work we have to think about how we educate clinicians about the utility of these devices and how to interpret the data in the broader context of patients' lives."

Over-diagnosing

While one of the goals of surveillance medicine is to cut down on doctor visits, Ho wonders if the technology will have the opposite effect. "People may be going to the doctor more for things that actually are benign and are really not of concern yet," says Ho. She is also concerned that surveillance tools could make healthcare almost "recreational" and underscores the importance of making sure that the goals of surveillance medicine are met before the technology is unleashed.

AI doesn't fix existing healthcare problems

"Knowing that you're going to have a fall or going to relapse or have a disease isn't all that helpful if you have no access to the follow-up care and you can't afford it and you can't afford the prescription medication that's going to ward off the onset," says Neuhaus. "It may still be worth knowing … but we can't fool ourselves into thinking that this technology is going to reshape medicine in America if we don't pay attention to … the infrastructure that we don't currently have."

Race-based medicine

How surveillance devices are tested before being approved for human use is a major concern for Ho. In recent years, alarms have been raised about the homogeneity of study participants: too white and too male. Ho wonders if the devices will be able to "accurately predict the disease progression for people whose data has not been used in developing the technology?" COVID-19 has killed Black people at a rate 2.5 times greater than white people, for example, and new, virtual clinical research is focused on recruiting more people of color.

The Biggest Question

"We can't just assume that any of these technologies are inherently technologies of liberation," says Metzl.

Especially because we haven't yet asked the $64,000 question: Would patients even want to know?

Jenny Ahlstrom is an IT professional who was diagnosed at 43 with multiple myeloma, a blood cancer that typically attacks people in their late 60s and 70s and for which there is no cure. She believes that most people won't want to know about their declining health in real time. People like to live "optimistically in denial most of the time. If they don't have a problem, they don't want to really think they have a problem until they have [it]," especially when there is no cure. "Psychologically? That would be hard to know."

Ahlstrom says there's also the issue of trust, something she experienced first-hand when she launched her non-profit, HealthTree, a crowdsourcing tool to help myeloma patients "find their genetic twin" and learn what therapies may or may not work. "People want to share their story, not their data," says Ahlstrom. "We have been so conditioned as a nation to believe that our medical data is so valuable."

Metzl acknowledges that adoption of new technologies will be uneven. But he also believes that "over time, it will be abundantly clear that it's much, much cheaper to predict and prevent disease than it is to treat disease once it's already emerged."

Beyond cost, the tremendous potential of these technologies to help us live healthier and longer lives is a game-changer, he says, as long as we find ways "to ultimately navigate this terrain and put systems in place ... to minimize any potential harms."

How Smallpox Was Wiped Off the Planet By a Vaccine and Global Cooperation

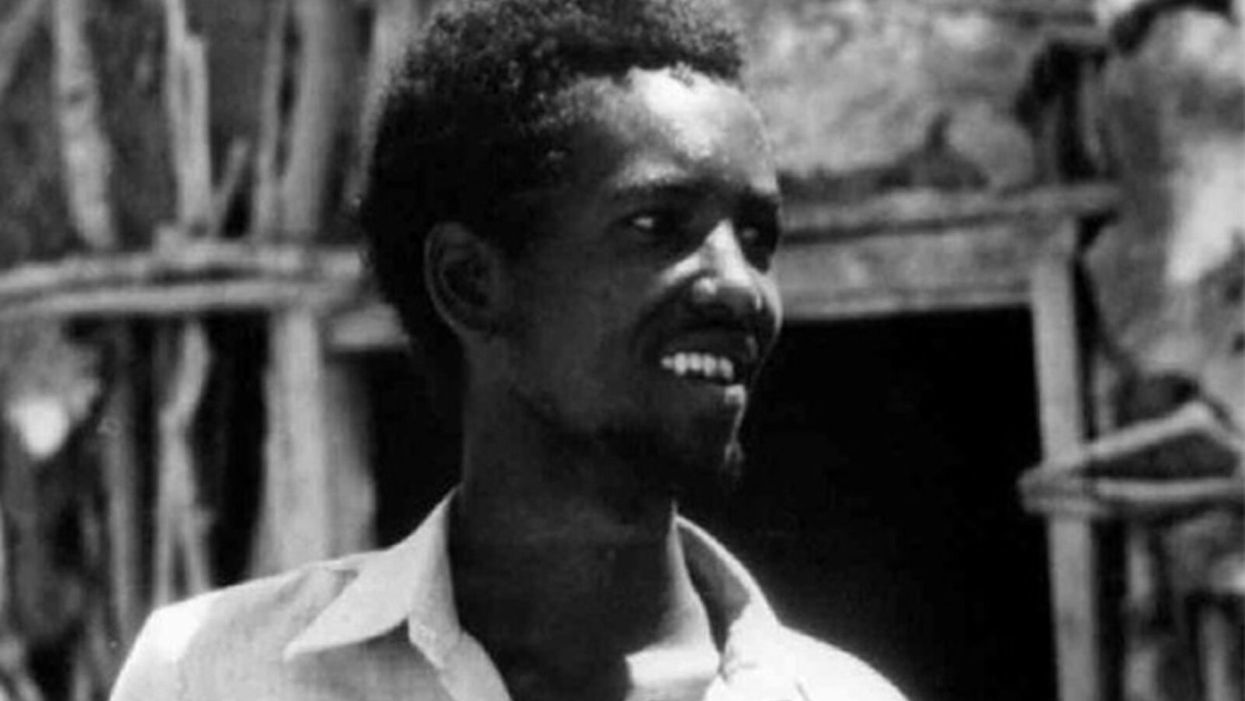

The world's last recorded case of endemic smallpox was in Ali Maow Maalin, of Merka, Somalia, in October 1977. He made a full recovery.

For 3,000 years, civilizations all over the world were brutalized by smallpox, an infectious and deadly disease characterized by fever and a rash of painful, oozing sores.

Smallpox was merciless, killing one third of the people it infected and leaving many survivors permanently pockmarked or blind. Although smallpox was more common during the 18th and 19th centuries, it remained a leading cause of death up until the early 1950s, infecting an estimated 50 million people annually.

A Primitive Cure

Sometime during the 10th century, Chinese physicians figured out that exposing people to a tiny bit of smallpox would sometimes result in a milder infection and immunity to the disease afterward (if the person survived). Desperate for a cure, people would huff powders made of smallpox scabs or insert smallpox pus into their skin, all in the hopes of getting immunity without having to get too sick. However, this method – called inoculation – didn't always work. People could still catch the full-blown disease, spread it to others, or even catch another infectious disease like syphilis in the process.

A Breakthrough Treatment

For centuries, inoculation – however imperfect – was the only protection the world had against smallpox. But in the late 18th century, an English physician named Edward Jenner created a more effective method. Jenner discovered that inoculating a person with cowpox – a much milder relative of the smallpox virus – would make that person immune to smallpox as well, but this time without the possibility of actually catching or transmitting smallpox. His breakthrough became the world's first vaccine against a contagious disease. Other researchers, like Louis Pasteur, would use these same principles to make vaccines for global killers like anthrax and rabies. Vaccination was considered a miracle, conferring all of the rewards of having gotten sick (immunity) without the risk of death or blindness.

Scaling the Cure

As vaccination became more widespread, the number of global smallpox deaths began to drop, particularly in Europe and the United States. But even as late as 1967, smallpox was still infecting anywhere from 10 to 15 million people a year in poorer parts of the globe. The World Health Assembly (a decision-making body of the World Health Organization) decided that year to launch the first coordinated effort to eradicate smallpox from the planet completely, aiming for 80 percent vaccine coverage in every country in which the disease was endemic – a total of 33 countries.

But officials knew that eradicating smallpox would be easier said than done. Doctors had to contend with wars, floods, and language barriers to make their campaign a success. The vaccination initiative in Bangladesh proved the most challenging, due to its population density and the prevalence of the disease, writes journalist Laurie Garrett in her book, The Coming Plague.

In one instance, French physician Daniel Tarantola on assignment in Bangladesh confronted a murderous gang that was thought to be spreading smallpox throughout the countryside during their crime sprees. Without police protection, Tarantola confronted the gang and "faced down guns" in order to immunize them, protecting the villagers from repeated outbreaks.

Because not enough vaccines existed to vaccinate everyone in a given country, doctors utilized a strategy called "ring vaccination," which meant locating individual outbreaks and vaccinating all known and possible contacts to stop an outbreak at its source. Fewer than 50 percent of the population in Nigeria received a vaccine, for example, but thanks to ring vaccination, smallpox was eradicated in that country nonetheless. Doctors worked tirelessly for the next eleven years to immunize as many people as possible.
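
For readers who like to see the logic spelled out, ring vaccination can be sketched in a few lines of Python. This is only a rough illustration, not the procedure the eradication teams followed: the village contact network, the names in it, the `vaccination_ring` function and the choice of two contact steps are all hypothetical.

```python
from collections import deque


def vaccination_ring(contacts, index_case, depth=2):
    """Return everyone within `depth` contact steps of the index case.

    `contacts` maps each person to the people they have been in contact with.
    The index case is excluded from the result. The default depth of 2
    (contacts, plus contacts of contacts) is an assumption for illustration.
    """
    seen = {index_case}
    ring = set()
    queue = deque([(index_case, 0)])
    while queue:
        person, dist = queue.popleft()
        if dist == depth:
            continue  # do not look beyond the outer edge of the ring
        for other in contacts.get(person, ()):
            if other not in seen:
                seen.add(other)
                ring.add(other)
                queue.append((other, dist + 1))
    return ring


# Hypothetical contact network around a single detected case.
contacts = {
    "case": ["sibling", "neighbor"],
    "sibling": ["case", "teacher"],
    "neighbor": ["case", "trader"],
    "teacher": ["sibling", "distant_villager"],
    "trader": ["neighbor"],
    "distant_villager": ["teacher"],
}

ring = vaccination_ring(contacts, "case")
print(sorted(ring))  # ['neighbor', 'sibling', 'teacher', 'trader']
```

In this toy network only four of the five other villagers are vaccinated; the distant villager, three steps away from the case, is left out. That is the trade-off the strategy accepts in order to stretch a limited vaccine supply.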

A Resounding Success

In November 1975, officials discovered a case of variola major, the more virulent strain of the smallpox virus, in a three-year-old Bangladeshi girl named Rahima Banu. Banu was forcibly quarantined in her family's home with armed guards until the risk of transmission had passed, while officials went door-to-door vaccinating everyone within a five-mile radius. Two years later, the last naturally acquired case of smallpox in human history, caused by the milder variola minor strain, was reported in Somalia. When no new community-acquired cases appeared after that, the World Health Organization declared smallpox officially eradicated on May 8, 1980.

Because of smallpox, we now know it's possible to completely eliminate a disease. But is it likely to happen again with other diseases, like COVID-19? Some scientists aren't so sure. As dangerous as smallpox was, it had a few characteristics that made eradication possibly easier than for other diseases. Smallpox, for instance, has no animal reservoir, meaning that it could not circulate in animals and resurge in a human population at a later date. Additionally, a person who had smallpox once was guaranteed immunity from the disease thereafter — which is not the case for COVID-19.

In The Coming Plague, Japanese physician Isao Arita, who led the WHO's Smallpox Eradication Unit, admitted to routinely defying orders from the WHO, mobilizing to parts of the world without official approval and sometimes even vaccinating people against their will. "If we hadn't broken every single WHO rule many times over, we would have never defeated smallpox," Arita said. "Never."

Still, thanks to the life-saving technology of vaccines – and the tireless efforts of doctors and scientists across the globe – a once-lethal disease is now a thing of the past.