Society Needs Regulations to Prevent Research Abuses

A tension exists between scientists/doctors and government regulators.

[Editor's Note: Our Big Moral Question this month is, "Do government regulations help or hurt the goal of responsible and timely scientific innovation?"]

Government regulations help more than hurt the goal of responsible and timely scientific innovation. Opponents might argue that without regulations, researchers would be free to do whatever they want. But without ethics and regulations, scientists have performed horrific experiments. In Nazi concentration camps, for instance, doctors forced prisoners to stay in the snow to see how long it took for these inmates to freeze to death. These researchers also removed prisoners' limbs in order to try to develop innovations to reconnect these body parts, but all the experiments failed.


Due to these atrocities, after the war, the Nuremberg Tribunal established the first ethical guidelines for research, mandating that all study participants provide informed consent. Yet many researchers, including those in leading U.S. academic institutions and government agencies, failed to follow these dictates. The U.S. government, for instance, secretly infected Guatemalan men with syphilis in order to study the disease and experimented on soldiers, exposing them without consent to biological and chemical warfare agents. In the 1960s, researchers at New York's Willowbrook State School purposefully fed intellectually disabled children stool extracts infected with hepatitis to study the disease. In 1966, in the New England Journal of Medicine, Henry Beecher, a Harvard anesthesiologist, described 22 cases of unethical research that had been published in the nation's leading medical journals but were mostly conducted without informed consent and at times harmed participants without offering them any benefit.

Despite heightened awareness and enhanced guidelines, abuses continued. Until a 1972 journalistic exposé, the U.S. government continued to fund the now-notorious Tuskegee syphilis study of infected poor African-American men in rural Alabama, refusing to offer these men penicillin when it became available as effective treatment for the disease.

In response, in 1974 Congress passed the National Research Act, establishing research ethics committees or Institutional Review Boards (IRBs), to guide scientists, allowing them to innovate while protecting study participants' rights. Routinely, IRBs now detect and prevent unethical studies from starting.

Still, even with these regulations, researchers have at times conducted unethical investigations. In 1999 at the Los Angeles Veterans Affairs Hospital, for example, a patient twice refused to participate in a study that would prolong his surgery. The researcher nonetheless proceeded to experiment on him, using an electrical probe in the patient's heart to collect data.

Part of the problem and consequent need for regulations is that researchers have conflicts of interest and often do not recognize ethical challenges their research may pose.

Pharmaceutical company scandals involving Avandia, Neurontin, and other drugs raise added concerns. In marketing Vioxx, OxyContin, and tobacco, corporations have hidden findings that might undercut sales.

Regulations become increasingly critical as drug companies and the NIH conduct increasing amounts of research in the developing world. In 1996, Pfizer conducted a study of bacterial meningitis in Nigeria in which 11 children died. The families sued. Pfizer produced a Nigerian IRB approval letter, but the letter turned out to have been forged. No Nigerian IRB had ever approved the study. Fourteen years later, WikiLeaks revealed that Pfizer had hired detectives to find evidence of corruption against the Nigerian Attorney General, to compel him to drop the lawsuit.

Researchers in not only industry, but also academia have violated research participants' rights. Arizona State University scientists wanted to investigate the genes of a Native American group, the Havasupai, who were concerned about their high rates of diabetes. The investigators also wanted to study the group's rates of schizophrenia, but feared that the tribe would oppose the study, given the stigma. Hence, these researchers decided to mislead the tribe, stating that the study was only about diabetes. The university's research ethics committee knew the scientists' plan to study schizophrenia, but approved the study, including the consent form, which did not mention any psychiatric diagnoses. The Havasupai gave blood samples, but later learned that the researchers published articles about the tribe's schizophrenia and alcoholism, and genetic origins in Asia (while the Havasupai believed they originated in the Grand Canyon, where they now lived, and which they thus argued they owned). A 2010 legal settlement required that the university return the blood samples to the tribe, which then destroyed them. Had the researchers instead worked with the tribe more respectfully, they could have advanced science in many ways.


Such violations threaten to lower public trust in science, particularly among vulnerable groups that have historically been systemically mistreated, diminishing public and government support for research and for the National Institutes of Health, National Science Foundation and Centers for Disease Control, all of which conduct large numbers of studies.


In popular culture, myths of immoral science and technology--from Frankenstein to Big Brother and Dr. Strangelove--loom.

Admittedly, regulations involve inherent tradeoffs. Following certain rules can take time and effort. Certain regulations may in fact limit research that might advance knowledge but would be grossly unethical. For instance, if our society's sole goal were to have scientists innovate as much as possible, we might let them stick needles into healthy people's brains to remove cells in return for cash that many vulnerable poor people might find desirable. But these studies would clearly pose major ethical problems.

Research that has failed to follow ethics has in fact impeded innovation. In 1999, the death of a young man, Jesse Gelsinger, in a gene therapy experiment in which the investigator was subsequently found to have major conflicts of interest, delayed innovations in the field of gene therapy research for years.

Without regulations, companies might market products that prove dangerous, leading to massive lawsuits that could also ultimately stifle further innovation within an industry.

The key question is not whether regulations help or hurt science alone, but whether they help or hurt science that is both "responsible and innovative."

We don't want "over-regulation." Rather, the right amount of regulation is needed: neither too much nor too little. Hence, policy makers in this area have developed regulations in fair and transparent ways and have also been working to reduce the burden on researchers, for instance by allowing single IRBs to review multi-site studies, rather than having multiple IRBs do so, which can create obstacles.

In sum, society requires a proper balance of regulations to ensure ethical research, avoid abuses, and ultimately aid us all by promoting responsible innovation.


Tech-related injuries are becoming more common as many people depend on, and often develop addictions to, smartphones and computers.

In the 1990s, a mysterious virus spread throughout the Massachusetts Institute of Technology Artificial Intelligence Lab—or that’s what the scientists who worked there thought. More of them rubbed their aching forearms and massaged their cricked necks as new computers were introduced to the AI Lab on a floor-by-floor basis. They realized their musculoskeletal issues coincided with the arrival of these new computers—some of which were mounted high up on lab benches in awkward positions—and the hours spent typing on them.

Today, these injuries have become more common in a society awash with smart devices, sleek computers, and other gadgets. And we don’t just get hurt from typing on desktop computers; we’re massaging our sore wrists from hours of texting and Facetiming on phones, especially as they get bigger in size.

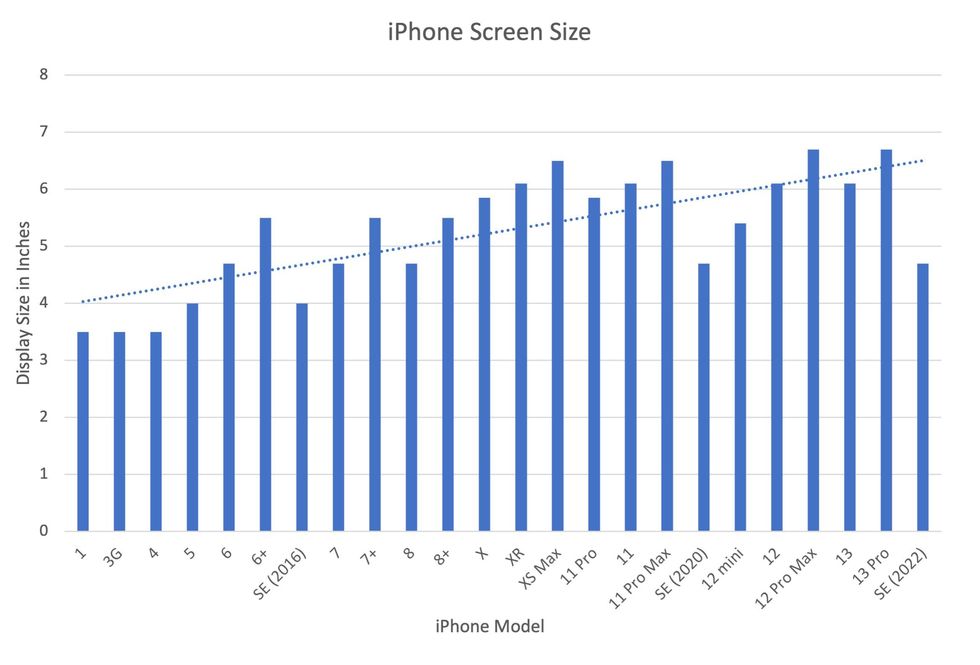

In 2007, the first iPhone measured 3.5 inches diagonally, a measurement known as the display size. That’s been nearly doubled by the newest iPhone 13 Pro, which has a 6.7-inch display. Other phones, too, like the Google Pixel 6 and the Samsung Galaxy S22, have bigger screens than their predecessors. Physical therapists and orthopedic surgeons have had to come up with names for a variety of new conditions: selfie elbow, tech neck, texting thumb. Orthopedic surgeon Sonya Sloan says she sees selfie elbow in younger kids and in women more often than men. She hears complaints related to technology once or twice a day.

The addictive quality of smartphones and social media means that people spend more time on their devices, which exacerbates injuries. According to Statista, 68 percent of those surveyed spent over three hours a day on their phone, and almost half spent five to six hours a day. Another report showed that people dedicate a third of their day to checking their phones, while the Media Effects Research Laboratory at Pennsylvania State University has found that bigger screens, ideal for entertainment purposes, immerse their users more than smaller screens. Oversized screens also provide easier navigation and more space for those with bigger hands or trouble seeing.

But others with conditions like arthritis can benefit from smaller phones. In March of 2016, Apple released the iPhone SE with a display size of 4 inches, 0.7 inches smaller than the iPhone 7, released that September. Apple has since come out with two more versions of the diminutive iPhone SE, one in 2020 and another in 2022.


Kavin Senapathy, a freelance science journalist, has the Google Pixel 6. She was drawn to the phone because Google marketed the Pixel 6’s camera as better at capturing different skin tones. But this phone boasts one of the largest display sizes on the market: 6.4 inches.

Senapathy was diagnosed with carpal and cubital tunnel syndromes in 2017 and fibromyalgia in 2019. She has had to create a curated ergonomic workplace setup; otherwise, her wrists and hands get weak and tingly. She’s also had to adjust how she holds her phone to prevent pain flares.

Recently, Senapathy underwent electromyography, or EMG, in which doctors insert electrodes into muscles to measure their electrical activity. The electrical response of the muscles tells doctors whether the nerve cells and muscles are successfully communicating. Depending on her results, steroid shots and even surgery might be required. Senapathy wants to stick with her Pixel 6, but the pain she’s experiencing may push her to buy a smaller phone. Unfortunately, options for these modestly sized phones are more limited.

These devices are now an inextricable part of our lives. So where does the burden of responsibility lie? Is it with consumers like Senapathy to adjust body positioning, get ergonomic workstations, and change habits to abate tech-related pain? Or should tech companies be held accountable for creating addictive devices that lead to musculoskeletal injury?

Kavin Senapathy, a freelance journalist, bought the Google Pixel 6 because of its high-quality camera, but she’s had to adjust how she holds the oversized phone to prevent pain flares.

Kavin Senapathy

A one-size-fits-all mentality for smartphones will continue to lead to injuries because every user has different wants and needs. S. Shyam Sundar, the founder of Penn State’s lab on media effects and a communications professor, says the needs for mobility and portability conflict with the desire for greater visibility. “The best thing a company can do is offer different sizes,” he says.

Joanna Bryson, an AI ethics expert and professor at The Hertie School of Governance in Berlin, Germany, echoed these sentiments. “A lot of the lack of choice we see comes from the fact that the markets have consolidated so much,” she says. “We want to make sure there’s sufficient diversity [of products].”

Consumers can still maintain some control despite the ubiquity of tech. Sloan, the orthopedic surgeon, has to pester her son to change his body positioning when using his tablet. Our heads effectively get heavier as they bend forward: held upright, the head weighs 12 pounds, but bent forward 60 degrees, it puts 60 pounds of load on the neck. “I have to tell him, ‘Raise your head, son!’” she says. It’s important, Sloan explains, to consider that growth and development will affect ligaments and bones in the neck, potentially making kids even more vulnerable to injuries from misusing gadgets. She recommends that parents limit their kids’ tech time to alleviate strain. She also suggests that tech companies implement a timer to remind us to change our body positioning.

In 2017, Nan-Wei Gong, a former contractor for Google, founded Figur8, which uses wearable trackers to measure muscle function and joint movement. It’s like physical therapy with biofeedback. “Each unique injury has a different biomarker,” says Gong. “With Figur8, you are comparing yourself to yourself.” This allows an individual to self-monitor for wear and tear and strengthen an injury in a way that’s efficient and designed for their body. Gong noticed that the work-from-home model during the COVID-19 pandemic created a new set of ergonomic problems that resulted in injuries. Figur8 provides real-time data for these injuries because “behavioral change requires feedback.”

Gong worked on a project called Jacquard while at Google. Textile experts weave conductive thread into their fabric, and the result is a patch of the fabric—like the cuff of a Levi’s jacket—that responds to commands on your smartphone. One swipe can call your partner or check the weather. It was designed with cyclists in mind who can’t easily check their phones, and it’s part of a growing movement in the tech industry to deliver creative, hands-free design. Gong thinks that engineers at large corporations like Google have accessibility in mind; it’s part of what drives their decisions for new products.

Display sizes of iPhones have become larger over time.

Sourced from Screenrant https://screenrant.com/iphone-apple-release-chronological-order-smartphone/ and Apple Tech Specs: https://www.apple.com/iphone-se/specs/

Back in Germany, Joanna Bryson reminds us that products like smartphones should adhere to best practices. These rules may be especially important for phones and other products with AI that are addictive. Disclosure, accountability, and regulation are important for AI, she says. “The correct balance will keep changing. But we have responsibilities and obligations to each other.” She was on an AI Ethics Council at Google, but the committee was disbanded after only one week due to issues with one of their members.

Bryson was upset about the Council’s dissolution but has faith that other regulatory bodies will prevail. OECD.AI, an international nonprofit, has drafted policies to regulate AI, which countries can sign and implement. “As of July 2021, 46 governments have adhered to the AI principles,” their website reads.

Sundar, the media effects professor, also directs Penn State’s Center for Socially Responsible AI. He says that inclusivity is a crucial aspect of social responsibility and how devices using AI are designed. “We have to go beyond first designing technologies and then making them accessible,” he says. “Instead, we should be considering the issues potentially faced by all different kinds of users before even designing them.”

Entomologist Jessica Ware is using new technologies to identify insect species in a changing climate. She shares her suggestions for how we can live harmoniously with creepy crawlers everywhere.

Jessica Ware is obsessed with bugs.

My guest today is a leading researcher on insects, the president of the Entomological Society of America and a curator at the American Museum of Natural History. Learn more about her here.

You may not think that insects and human health go hand-in-hand, but as Jessica makes clear, they’re closely related. A lot of people care about their health, and the health of other creatures on the planet, and the health of the planet itself, but researchers like Jessica are studying another thing we should be focusing on even more: how these seemingly separate areas are deeply entwined. (This is the theme of an upcoming event hosted by Leaps.org and the Aspen Institute.)


Entomologist Jessica Ware

D. Finnin / AMNH

Maybe it feels like a core human instinct to demonize bugs as gross. We seem to try to eradicate them in every way possible, whether that’s with poison, or getting out our blood thirst by stomping them whenever they creep and crawl into sight.

But where did our fear of bugs really come from? Jessica makes a compelling case that a lot of it is cultural, rather than in-born, and we should be following the lead of other cultures that have learned to live with and appreciate bugs.

The truth is that a healthy planet depends on insects. You may feel stung by that news if you hate bugs. Reality bites.

Jessica and I talk about whether learning to live with insects should include eating them and gene editing them so they don’t transmit viruses. She also tells me about her important research into using genomic tools to track bugs in the wild to figure out why and how we’ve lost 50 percent of the insect population since 1970 according to some estimates – bad news because the ecosystems that make up the planet heavily depend on insects. Jessica is leading the way to better understand what’s causing these declines in order to start reversing these trends to save the insects and to save ourselves.