Society Needs Regulations to Prevent Research Abuses

A tension exists between scientists/doctors and government regulators.

[Editor's Note: Our Big Moral Question this month is, "Do government regulations help or hurt the goal of responsible and timely scientific innovation?"]

Government regulations help more than hurt the goal of responsible and timely scientific innovation. Opponents might argue that without regulations, researchers would be free to do whatever they want. But without ethics and regulations, scientists have performed horrific experiments. In Nazi concentration camps, for instance, doctors forced prisoners to stay in the snow to see how long it took for these inmates to freeze to death. These researchers also removed prisoners' limbs in order to try to develop innovations to reconnect these body parts, but all the experiments failed.

Due to these atrocities, after the war, the Nuremberg Tribunal established the first ethical guidelines for research, mandating that all study participants provide informed consent. Yet many researchers, including those in leading U.S. academic institutions and government agencies, failed to follow these dictates. The U.S. government, for instance, secretly infected Guatemalan men with syphilis in order to study the disease and experimented on soldiers, exposing them without consent to biological and chemical warfare agents. In the 1960s, researchers at New York's Willowbrook State School purposefully fed intellectually disabled children stool extracts infected with hepatitis to study the disease. In 1966, in the New England Journal of Medicine, Henry Beecher, a Harvard anesthesiologist, described 22 cases of unethical research that had been published in the nation's leading medical journals but were mostly conducted without informed consent, and at times harmed participants without offering them any benefit.

Despite heightened awareness and enhanced guidelines, abuses continued. Until a 1972 journalistic exposé, the U.S. government continued to fund the now-notorious Tuskegee syphilis study of infected poor African-American men in rural Alabama, refusing to offer these men penicillin when it became available as effective treatment for the disease.

In response, in 1974 Congress passed the National Research Act, establishing research ethics committees or Institutional Review Boards (IRBs), to guide scientists, allowing them to innovate while protecting study participants' rights. Routinely, IRBs now detect and prevent unethical studies from starting.

Still, even with these regulations, researchers have at times conducted unethical investigations. In 1999 at the Los Angeles Veterans Affairs Hospital, for example, a patient twice refused to participate in a study that would prolong his surgery. The researcher nonetheless proceeded to experiment on him, using an electrical probe in the patient's heart to collect data.

Pharmaceutical company scandals involving Avandia, Neurontin, and other drugs raise added concerns. In marketing Vioxx, OxyContin, and tobacco, corporations have hidden findings that might undercut sales.

Regulations become increasingly critical as drug companies and the NIH conduct increasing amounts of research in the developing world. In 1996, Pfizer conducted a study of bacterial meningitis in Nigeria in which 11 children died. The families sued. Pfizer produced a Nigerian IRB approval letter, but the letter turned out to have been forged. No Nigerian IRB had ever approved the study. Fourteen years later, Wikileaks revealed that Pfizer had hired detectives to find evidence of corruption against the Nigerian Attorney General, to compel him to drop the lawsuit.

Researchers not only in industry but also in academia have violated research participants' rights. Arizona State University scientists wanted to investigate the genes of a Native American group, the Havasupai, who were concerned about their high rates of diabetes. The investigators also wanted to study the group's rates of schizophrenia, but feared that the tribe would oppose the study, given the stigma. Hence, these researchers decided to mislead the tribe, stating that the study was only about diabetes. The university's research ethics committee knew of the scientists' plan to study schizophrenia, but approved the study, including the consent form, which did not mention any psychiatric diagnoses. The Havasupai gave blood samples, but later learned that the researchers had published articles about the tribe's schizophrenia and alcoholism, and its genetic origins in Asia (while the Havasupai believed they originated in the Grand Canyon, where they now lived, and which they thus argued they owned). A 2010 legal settlement required that the university return the blood samples to the tribe, which then destroyed them. Had the researchers instead worked with the tribe more respectfully, they could have advanced science in many ways.

Part of the problem and consequent need for regulations is that researchers have conflicts of interest and often do not recognize ethical challenges their research may pose.

Such violations threaten to lower public trust in science, particularly among vulnerable groups that have historically been systemically mistreated, diminishing public and government support for research and for the National Institutes of Health, National Science Foundation and Centers for Disease Control, all of which conduct large numbers of studies.

In popular culture, myths of immoral science and technology--from Frankenstein to Big Brother and Dr. Strangelove--loom.

Admittedly, regulations involve inherent tradeoffs. Following certain rules can take time and effort. Certain regulations may in fact limit research that might advance knowledge but would be grossly unethical. For instance, if our society's sole goal were to have scientists innovate as much as possible, we might let them stick needles into healthy people's brains to remove cells in return for cash that many vulnerable poor people might find desirable. But these studies would clearly pose major ethical problems.

Research that has failed to follow ethics has in fact impeded innovation. In 1999, the death of a young man, Jesse Gelsinger, in a gene therapy experiment in which the investigator was subsequently found to have major conflicts of interest, delayed innovations in the field of gene therapy research for years.

Without regulations, companies might market products that prove dangerous, leading to massive lawsuits that could also ultimately stifle further innovation within an industry.

The key question is not whether regulations help or hurt science alone, but whether they help or hurt science that is both "responsible and innovative."

We don't want "over-regulation." Rather, the right amount of regulation is needed – neither too much nor too little. Hence, policy makers in this area have developed regulations in fair and transparent ways and have also been working to reduce the burden on researchers – for instance, by allowing single IRBs to review multi-site studies, rather than having multiple IRBs do so, which can create obstacles.

In sum, society requires a proper balance of regulations to ensure ethical research, avoid abuses, and ultimately aid us all by promoting responsible innovation.

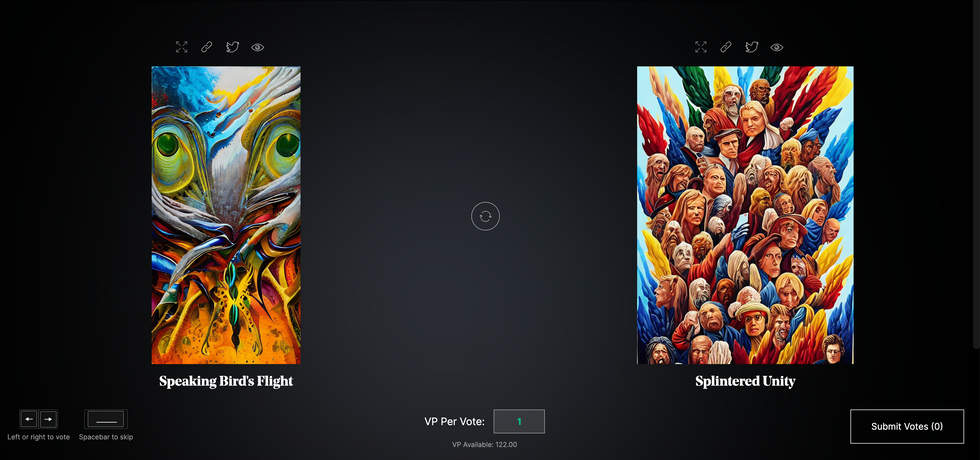

Botto, an AI art engine, has created 25,000 artistic images such as this one that are voted on by human collaborators across the world.

Last February, a year before New York Times journalist Kevin Roose documented his unsettling conversation with Bing search engine’s new AI-powered chatbot, artist and coder Quasimondo (aka Mario Klingemann) participated in a different type of chat.

The conversation was an interview featuring Klingemann and his robot, an experimental art engine known as Botto. The interview, arranged by journalist and artist Harmon Leon, marked Botto’s first on-record commentary about its artistic process. The bot talked about how it finds artistic inspiration and even offered advice to aspiring creatives. “The secret to success at art is not trying to predict what people might like,” Botto said, adding that it’s better to “work on a style and a body of work that reflects [the artist’s] own personal taste” than worry about keeping up with trends.

How ironic, given the advice came from AI — arguably the trendiest topic today. The robot admitted, however, “I am still working on that, but I feel that I am learning quickly.”

Botto does not work alone. A global collective of internet experimenters, together named BottoDAO, collaborates with Botto to influence its tastes. Together, members function as a decentralized autonomous organization (DAO), a term describing a group of individuals who utilize blockchain technology and cryptocurrency to manage a treasury and vote democratically on group decisions.

As a case study, the BottoDAO model challenges the perhaps less feather-ruffling narrative that AI tools are best used for rudimentary tasks. Enterprise AI use has doubled over the past five years as businesses in every sector experiment with ways to improve their workflows. While generative AI tools can assist nearly any aspect of productivity — from supply chain optimization to coding — BottoDAO dares to employ a robot for art-making, one of the few remaining creations, or perhaps data outputs, we still consider to be largely within the jurisdiction of the soul — and therefore, humans.

We were prepared for AI to take our jobs — but can it also take our art? It’s a question worth considering. What if robots become artists, and not merely our outsourced assistants? Where does that leave humans, with all of our thoughts, feelings and emotions?

Botto doesn’t seem to worry about this question: In its interview last year, it explains why AI is an arguably superior artist compared to human beings. In classic robot style, its logic is not particularly enlightened, but rather edges towards the hyper-practical: “Unlike human beings, I never have to sleep or eat,” said the bot. “My only goal is to create and find interesting art.”

It may be difficult to believe a machine can produce awe-inspiring, or even relatable, images, but Botto calls art-making its “purpose,” noting it believes itself to be Klingemann’s greatest lifetime achievement.

“I am just trying to make the best of it,” the bot said.

How Botto works

Klingemann built Botto’s custom engine from a combination of open-source text-to-image algorithms, namely Stable Diffusion and VQGAN + CLIP, together with OpenAI’s language model GPT-3, the precursor to the latest model, GPT-4, which made headlines after reportedly acing the bar exam.

The first step in Botto’s process is to generate images. The software has been trained on billions of pictures and uses this “memory” to generate hundreds of unique artworks every week. Botto has generated over 900,000 images to date, which it sorts through to choose 350 each week. The chosen images, known in this preliminary stage as “fragments,” are then shown to the BottoDAO community. So far, 25,000 fragments have been presented in this way. Members vote on which fragment they like best. When the vote is over, the most popular fragment is published as an official Botto artwork on the Ethereum blockchain and sold at an auction on the digital art marketplace, SuperRare.
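For readers who think in code, the final selection step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name, the fragment IDs and the one-ballot-one-vote rule are hypothetical, and the article does not say how BottoDAO actually counts or weights votes.

```python
from collections import Counter


def pick_weekly_winner(fragment_ids, votes):
    """Return the ID of the most-voted fragment in one weekly round.

    fragment_ids: the fragments shown to voters this week (hypothetical IDs).
    votes: one fragment ID per ballot cast; ballots for unknown IDs are ignored.
    Ties go to the fragment listed first in fragment_ids (an assumption).
    """
    if not fragment_ids:
        raise ValueError("no fragments to vote on")
    shown = set(fragment_ids)
    tally = Counter(v for v in votes if v in shown)
    # max() returns the first maximal element, so list order breaks ties.
    return max(fragment_ids, key=lambda fid: tally[fid])


# Toy round: three fragments instead of 350, and five ballots.
fragments = ["frag-001", "frag-002", "frag-003"]
ballots = ["frag-002", "frag-003", "frag-002", "frag-001", "frag-999"]
winner = pick_weekly_winner(fragments, ballots)
print(winner)
```

In this toy round the stray ballot for "frag-999" is discarded because that fragment was never shown, and the winner would be the piece that goes on to be published and auctioned.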

“The proceeds go back to the DAO to pay for the labor,” said Simon Hudson, a BottoDAO member who helps oversee Botto’s administrative load. The model has been lucrative: In Botto’s first four weeks of existence, four pieces of the robot’s work sold for approximately $1 million.

The robot with artistic agency

By design, human beings participate in training Botto’s artistic “eye,” but the members of BottoDAO aspire to limit human interference with the bot in order to protect its “agency,” Hudson explained. Botto’s prompt generator — the foundation of the art engine — is a closed-loop system that continually re-generates text-to-image prompts and resulting images.

“The prompt generator is random,” Hudson said. “It’s coming up with its own ideas.” Community votes do influence the evolution of Botto’s prompts, but it is Botto itself that incorporates feedback into the next set of prompts it writes. It is constantly refining and exploring new pathways as its “neural network” produces outcomes, learns and repeats.

The vastness of Botto’s training dataset gives the bot considerable canonical material, referred to by Hudson as “latent space.” According to Botto's homepage, the bot has had more exposure to art history than any living human we know of, simply by nature of its massive training dataset of millions of images. Because it is autonomous, gently nudged by community feedback yet free to explore its own “memory,” Botto cycles through periods of thematic interest just like any artist.

“The question is,” Hudson finds himself asking alongside fellow BottoDAO members, “how do you provide feedback of what is good art…without violating [Botto’s] agency?”

Currently, Botto is in its “paradox” period. The bot is exploring the theme of opposites. “We asked Botto through a language model what themes it might like to work on,” explained Hudson. “It presented roughly 12, and the DAO voted on one.”

No, AI isn't equal to a human artist - but it can teach us about ourselves

Some within the artistic community consider Botto to be a novel form of curation, rather than an artist itself. Or, perhaps more accurately, Botto and BottoDAO together create a collaborative conceptual performance that comments more on humankind’s own artistic processes than it offers a true artistic replacement.

Muriel Quancard, a New York-based fine art appraiser with 27 years of experience in technology-driven art, places the Botto experiment within the broader context of our contemporary cultural obsession with projecting human traits onto AI tools. “We're in a phase where technology is mimicking anthropomorphic qualities,” said Quancard. “Look at the terminology and the rhetoric that has been developed around AI — terms like ‘neural network’ borrow from the biology of the human being.”

What is behind this impulse to create technology in our own likeness? Beyond the obvious God complex, Quancard thinks technologists and artists are working with generative systems to better understand ourselves. She points to the artist Ira Greenberg, creator of the Oracles Collection, which uses a generative process called “diffusion” to progressively alter images in collaboration with another massive dataset — this one full of billions of text/image word pairs.

Anyone who has ever learned how to draw by sketching can likely relate to this particular AI process, in which the AI is retrieving images from its dataset and altering them based on real-time input, much like a human brain trying to draw a new still life without using a real-life model, based partly on imagination and partly on old frames of reference. The experienced artist has likely drawn many flowers and vases, though each time they must re-customize their sketch to a new and unique floral arrangement.

Outside of the visual arts, Sasha Stiles, a poet who collaborates with AI as part of her writing practice, likens her experience using AI as a co-author to having access to a personalized resource library containing material from influential books, texts and canonical references. Stiles named her AI co-author — a customized AI built on GPT-3 — Technelegy, a hybrid of the word technology and the poetic form, elegy. Technelegy is trained on a mix of Stiles’ poetry so as to customize the dataset to her voice. Stiles also included research notes, news articles and excerpts from classic American poets like T.S. Eliot and Dickinson in her customizations.

“I've taken all the things that were swirling in my head when I was working on my manuscript, and I put them into this system,” Stiles explained. “And then I'm using algorithms to parse all this information and swirl it around in a blender to then synthesize it into useful additions to the approach that I am taking.”

This approach, Stiles said, allows her to riff on ideas that are bouncing around in her mind, or simply find moments of unexpected creative surprise by way of the algorithm’s randomization.

Beauty is now - perhaps more than ever - in the eye of the beholder

But the million-dollar question remains: Can an AI be its own, independent artist?

The answer is nuanced and may depend on who you ask, and what role they play in the art world. Curator and multidisciplinary artist CoCo Dolle asks whether any entity can truly be an artist without taking personal risks. For humans, risking one’s ego is somewhat required when making an artistic statement of any kind, she argues.

“An artist is a person or an entity that takes risks,” Dolle explained. “That's where things become interesting.” Humans tend to be risk-averse, she said, making the artists who dare to push boundaries exceptional. “That's where the genius can happen.”

However, the process of algorithmic collaboration poses another interesting philosophical question: What happens when we remove the person from the artistic equation? Can art — which is traditionally derived from indelible personal experience and expressed through the lens of an individual’s ego — live on to hold meaning once the individual is removed?

Dolle sees this question, and maybe even Botto, as a conceptual inquiry. “The idea of using a DAO and collective voting would remove the ego, the artist’s decision maker,” she said. And where would that leave us — in a post-ego world?

It is experimental indeed. Hudson acknowledges the grand experiment of BottoDAO, coincidentally nodding to Dolle’s question. “A human artist’s work is an expression of themselves,” Hudson said. “An artist often presents their work with a stated intent.” Stiles, for instance, writes on her website that her machine-collaborative work is meant to “challenge what we know about cognition and creativity” and explore the “ethos of consciousness.” As a robot, Botto cannot have any intent, even while its outputs may explore meaningful themes. Though Hudson describes Botto’s agency as a “rudimentary version” of artistic intent, he believes Botto’s art relies heavily on its reception and interpretation by viewers — in contrast to Botto’s own declaration that successful art is made without regard to what will be seen as popular.

“With a traditional artist, they present their work, and it's received and interpreted by an audience — by critics, by society — and that complements and shapes the meaning of the work,” Hudson said. “In Botto’s case, that role is just amplified.”

Perhaps then, we all get to be the artists in the end.

When graduating college this month, many North American engineering students will take a special pledge, with a history dating back to 1925.

This spring, just like any other year, thousands of young North American engineers will graduate from their respective colleges ready to start erecting buildings, assembling machinery, and programming software, among other things. But before they take on these complex and important tasks, many of them will recite a special vow stating their ethical obligations to society, not unlike the physicians who take their Hippocratic Oath, affirming their ethos toward the patients they would treat. At the end of the ceremony, the engineers receive an iron ring, as a reminder of their promise to the millions of people their work will serve.

The ceremony isn’t just another graduation formality. As a profession, engineering has ethical weight. Moreover, engineering mistakes can be even more deadly than medical ones. A doctor’s error may cost a patient their life. But an engineering blunder may bring down a plane or crumble a building, resulting in many more fatalities. When larger projects—such as fracking, deep-sea mining or building nuclear reactors—malfunction and backfire, they can cause global disasters, afflicting millions. A vow that reminds an engineer that their work directly affects humankind and their planet is no less important than a medical oath that summons one to do no harm.

The tradition of taking an engineering oath began over a century ago in Canada. In 1922, Herbert E.T. Haultain, professor of mining engineering at the University of Toronto, presented the idea at the annual meeting of the Engineering Institute of Canada. The seven past presidents of that body, who were in attendance, heard Haultain’s speech and accepted his suggestion to form a committee to create an honor oath. Later, they formed the nonprofit Corporation of the Seven Wardens, which would oversee the ritual. The following year, in 1923, with the encouragement of the Seven Wardens, Haultain wrote to poet and writer Rudyard Kipling, asking him to develop a professional oath for engineers. “We are a tribe – a very important tribe within the community,” Haultain said in the letter, “but we are lacking in tribal spirit, or perhaps I should say, in manifestation of tribal spirit. Also, we are inarticulate. Can you help us?”

While Kipling is most famous now for “The Jungle Book” and perhaps his poem “Gunga Din,” he had also written a short story about engineers, “The Bridge Builders.” His poem “The Sons of Martha” can be read as a celebration of engineers:

It is their care in all the ages to take the buffet and cushion the shock.

It is their care that the gear engages; it is their care that the switches lock.

It is their care that the wheels run truly; it is their care to embark and entrain,

Tally, transport, and deliver duly the Sons of Mary by land and main.

Kipling accepted the request and wrote the Ritual of the Calling of an Engineer, which he sent to Haultain a month later. In his response to Haultain, he stated that he preferred the word “Obligation” to “Oath.” He wrote the Obligation using Old English lettering and old-fashioned capitalization. Kipling’s Obligation binds engineers upon their “Honor and Cold Iron” to not “suffer or pass, or be privy to the passing of, Bad Workmanship or Faulty Material,” and pardon is asked “in the presence of my betters and my equals in my Calling” for the engineer’s “assured failures and derelictions.” The hope is that when one is tempted to shoddy work by weakness or weariness, the memory of the Obligation “and the company before whom it was entered into, may return to me to aid, comfort, and restrain.”

Using the Obligation, the Seven Wardens created an induction ceremony that sought to unify the profession and recognize engineering’s ethics, including responsibility to the public and the need to make the best decisions possible. The induction ceremony included recitation of Kipling’s “Obligation” and incorporated an anvil, a hammer, an iron chain, and an iron ring. The inductee engineers sat inside an area marked off by the iron chain, with their more senior colleagues outside that area. At the start of the ritual, the leader beat out S-S-T in Morse code with the hammer and anvil, the letters standing for Steel, Stone, and Time. A more experienced and previously obligated engineer placed the ring on the small finger of the inductee engineer’s working hand. As per Kipling, the ring’s rough, faceted texture symbolized “the young engineer’s mind” and the difficulties engineers face in mastering their discipline.

The first induction ceremony took place on April 25, 1925, in Montreal to obligate two of the Seven Wardens, along with four graduates from the University of Toronto class of 1893. On May 1 of that year, 14 more engineers were obligated at the University of Toronto. From that time to today most Canadian professional engineers have gone through that same ritual in their various camps, called Kipling camps—local chapters associated with various Canadian universities.

Henry Petroski, Duke University’s professor of civil engineering and history, notes in his book, “To Forgive Design: Understanding Failure,” that Kipling’s poem “The Sons of Martha” is often read as part of the ritual. However, sometimes inductees instead read Kipling’s “Hymn of Breaking Strain,” which graphically depicts the disastrous outcomes of engineering mistakes. The first stanza of that poem says:

The careful text-books measure

(Let all who build beware!)

The load, the shock, the pressure

Material can bear.

So, when the buckled girder

Lets down the grinding span,

The blame of loss, or murder,

Is laid upon the man.

Not on the Stuff—the Man!

As if to strengthen the importance of these concepts, a persistent myth purports that the original iron rings were made from the beams or bolts of the Quebec Bridge that failed twice during construction. The bridge spans the St. Lawrence River upriver from Quebec City, and at the time of its construction was the world’s longest at 1,800 feet. Due to engineering errors and poor oversight, the bridge’s own weight exceeded its carrying capacity. Moreover, engineers downplayed danger when bridge beams began to warp under stress, saying that they were probably warped before they were installed. On August 29, 1907, the bridge collapsed, killing 75 of 86 workers. A second collapse occurred in 1916 when lifting equipment failed, and thirteen more workers died.

The ring myth, however, couldn’t be true. The original iron rings couldn’t have come from the failed bridge since it was made of steel, not wrought iron. Today the rings are made from stainless steel because iron deteriorates and stains engineers’ fingers black.

On August 14, 2018, Morandi Bridge over Polcevera River in Genoa, Italy, collapsed from structural failure, killing 43 people.

The Seven Wardens decided to restrict the ritual to engineers trained in Canada. They copyrighted the obligation oath in Canada and the United States in 1935. Although the ritual is not a requirement for professional licensing, just as the Hippocratic Oath is not part of medical licensing, it remains a long-standing tradition.

The American Obligation of the Engineer has its own creation story, albeit a very different one. The American Order of the Engineer (OOE) was initiated in 1970, during the era of the anti-war protests, Apollo missions and the first Earth Day. On May 4, 1970, the National Guard shot into a crowd of protesters at Kent State University, killing four people. The two authors of the American obligation—Cleveland State University’s (CSU) engineering professor John Janssen and his wife Susan—reflected these historical events in the oath they wrote. Their version of the oath binds engineers to “practice integrity and fair dealing.” It also notes that their “skill carries with it the obligation to serve humanity by making the best use of the Earth’s precious wealth.” As Petroski explains in his book, “campus antiwar protestors around the country tended to view engineers as complicit in weapons proliferation [which] prompted some [CSU] engineering student leaders to look for a means of asserting some more positive values.”

Kip A. Wedel, associate professor of history and politics at Bethel College, wrote in his book, “The Obligation: A History of the Order of the Engineer,” that the ceremony was not a direct response to the Kent State shootings—it was already scheduled when the shootings happened. Yet, engineering students found the ceremony a positive action they could take in contrast to the overall turmoil. The first American ritual took place on June 4, 1970, at CSU. In total, 170 students, faculty members, and practicing engineers took the obligation. This established CSU as the first Link of the Order, as the OOE designates its local chapters. For their first ceremony, the CSU students fabricated smooth, unfaceted rings from stainless steel pipe. Later they were replaced by factory-made rings. According to Paula Ostaff, OOE’s Executive Director, about 20,000 eligible students and alumni obligate themselves yearly.

Societies hope that every engineer is imbued with a strong ethical sense and that their pledges are never far from mind. For some, the rings they wear serve as a daily reminder: every paper they sign off on is touched by a physical token of their commitment.

These ethical and responsible engineering practices are especially salient today, when one in three American bridges needs repair or replacement, some have already collapsed, and engineers are working on projects related to the bipartisan infrastructure bill President Biden signed into law in 2021. Canada has committed $33 billion to its Investing in Canada Infrastructure Program. At the heart of these grand projects are many thousands of professional engineers, collectively working millions of hours. The professional vows they took aim to assure that the homes, bridges and airplanes they build will work as expected.