Society Needs Regulations to Prevent Research Abuses

A tension exists between scientists/doctors and government regulators.

[Editor's Note: Our Big Moral Question this month is, "Do government regulations help or hurt the goal of responsible and timely scientific innovation?"]

Government regulations help more than hurt the goal of responsible and timely scientific innovation. Opponents might argue that without regulations, researchers would be free to do whatever they want. But without ethics and regulations, scientists have performed horrific experiments. In Nazi concentration camps, for instance, doctors forced prisoners to stay in the snow to see how long it took for these inmates to freeze to death. These researchers also removed prisoners' limbs in order to try to develop innovations to reconnect these body parts, but all the experiments failed.

Due to these atrocities, after the war, the Nuremberg Tribunal established the first ethical guidelines for research, mandating that all study participants provide informed consent. Yet many researchers, including those in leading U.S. academic institutions and government agencies, failed to follow these dictates. The U.S. government, for instance, secretly infected Guatemalan men with syphilis in order to study the disease and experimented on soldiers, exposing them without consent to biological and chemical warfare agents. In the 1960s, researchers at New York's Willowbrook State School purposefully fed intellectually disabled children stool extracts infected with hepatitis to study the disease. In 1966, in the New England Journal of Medicine, Henry Beecher, a Harvard anesthesiologist, described 22 cases of unethical research that had been published in the nation's leading medical journals, but that were mostly conducted without informed consent and at times harmed participants without offering them any benefit.

Despite heightened awareness and enhanced guidelines, abuses continued. Until a 1972 journalistic exposé, the U.S. government continued to fund the now-notorious Tuskegee syphilis study of infected poor African-American men in rural Alabama, refusing to offer these men penicillin when it became available as effective treatment for the disease.

In response, in 1974 Congress passed the National Research Act, establishing research ethics committees or Institutional Review Boards (IRBs), to guide scientists, allowing them to innovate while protecting study participants' rights. Routinely, IRBs now detect and prevent unethical studies from starting.

Still, even with these regulations, researchers have at times conducted unethical investigations. In 1999 at the Los Angeles Veterans Affairs Hospital, for example, a patient twice refused to participate in a study that would prolong his surgery. The researcher nonetheless proceeded to experiment on him, using an electrical probe in the patient's heart to collect data.

Part of the problem and consequent need for regulations is that researchers have conflicts of interest and often do not recognize ethical challenges their research may pose.

Pharmaceutical company scandals involving Avandia, Neurontin, and other drugs raise added concerns. In marketing Vioxx, OxyContin, and tobacco, corporations have hidden findings that might undercut sales.

Regulations become ever more critical as drug companies and the NIH conduct increasing amounts of research in the developing world. In 1996, Pfizer conducted a study of bacterial meningitis in Nigeria in which 11 children died. The families sued. Pfizer produced a Nigerian IRB approval letter, but the letter turned out to have been forged. No Nigerian IRB had ever approved the study. Fourteen years later, WikiLeaks revealed that Pfizer had hired detectives to find evidence of corruption against the Nigerian Attorney General, to compel him to drop the lawsuit.

Researchers not only in industry but also in academia have violated research participants' rights. Arizona State University scientists wanted to investigate the genes of a Native American group, the Havasupai, who were concerned about their high rates of diabetes. The investigators also wanted to study the group's rates of schizophrenia, but feared that the tribe would oppose the study, given the stigma. Hence, these researchers decided to mislead the tribe, stating that the study was only about diabetes. The university's research ethics committee knew the scientists' plan to study schizophrenia, but approved the study, including the consent form, which did not mention any psychiatric diagnoses. The Havasupai gave blood samples, but later learned that the researchers published articles about the tribe's schizophrenia and alcoholism, and genetic origins in Asia (while the Havasupai believed they originated in the Grand Canyon, where they now lived, and which they thus argued they owned). A 2010 legal settlement required that the university return the blood samples to the tribe, which then destroyed them. Had the researchers instead worked with the tribe more respectfully, they could have advanced science in many ways.

Such violations threaten to lower public trust in science, particularly among vulnerable groups that have historically been systemically mistreated, diminishing public and government support for research and for the National Institutes of Health, National Science Foundation and Centers for Disease Control, all of which conduct large numbers of studies.

In popular culture, myths of immoral science and technology--from Frankenstein to Big Brother and Dr. Strangelove--loom.

Admittedly, regulations involve inherent tradeoffs. Following certain rules can take time and effort. Certain regulations may in fact limit research that might advance knowledge but would be grossly unethical. For instance, if our society's sole goal were to have scientists innovate as much as possible, we might let them stick needles into healthy people's brains to remove cells in return for cash that many vulnerable poor people might find desirable. But these studies would clearly pose major ethical problems.

Research that has failed to follow ethics has in fact impeded innovation. In 1999, the death of a young man, Jesse Gelsinger, in a gene therapy experiment in which the investigator was subsequently found to have major conflicts of interest, delayed innovations in the field of gene therapy research for years.

Without regulations, companies might market products that prove dangerous, leading to massive lawsuits that could also ultimately stifle further innovation within an industry.

The key question is not whether regulations help or hurt science alone, but whether they help or hurt science that is both "responsible and innovative."

We don't want "over-regulation." Rather, the right amount of regulation is needed – neither too much nor too little. Hence, policy makers in this area have developed regulations in fair and transparent ways and have also been working to reduce the burden on researchers – for instance, by allowing single IRBs to review multi-site studies, rather than having multiple IRBs do so, which can create obstacles.

In sum, society requires a proper balance of regulations to ensure ethical research, avoid abuses, and ultimately aid us all by promoting responsible innovation.

Dr. May Edward Chinn, Kizzmekia Corbett, PhD., and Alice Ball, among others, have been behind some of the most important scientific work of the last century.

If you look back on the last century of scientific achievements, you might notice that the scientists we celebrate are overwhelmingly white, while scientists of color take a backseat. Since the Nobel Prize was introduced in 1901, for example, no black scientists have landed this prestigious award.

The work of black women scientists has gone unrecognized in particular. Their work uncredited and often stolen, black women have nevertheless contributed to some of the most important advancements of the last 100 years, from the polio vaccine to GPS.

Here are five black women who have changed science forever.

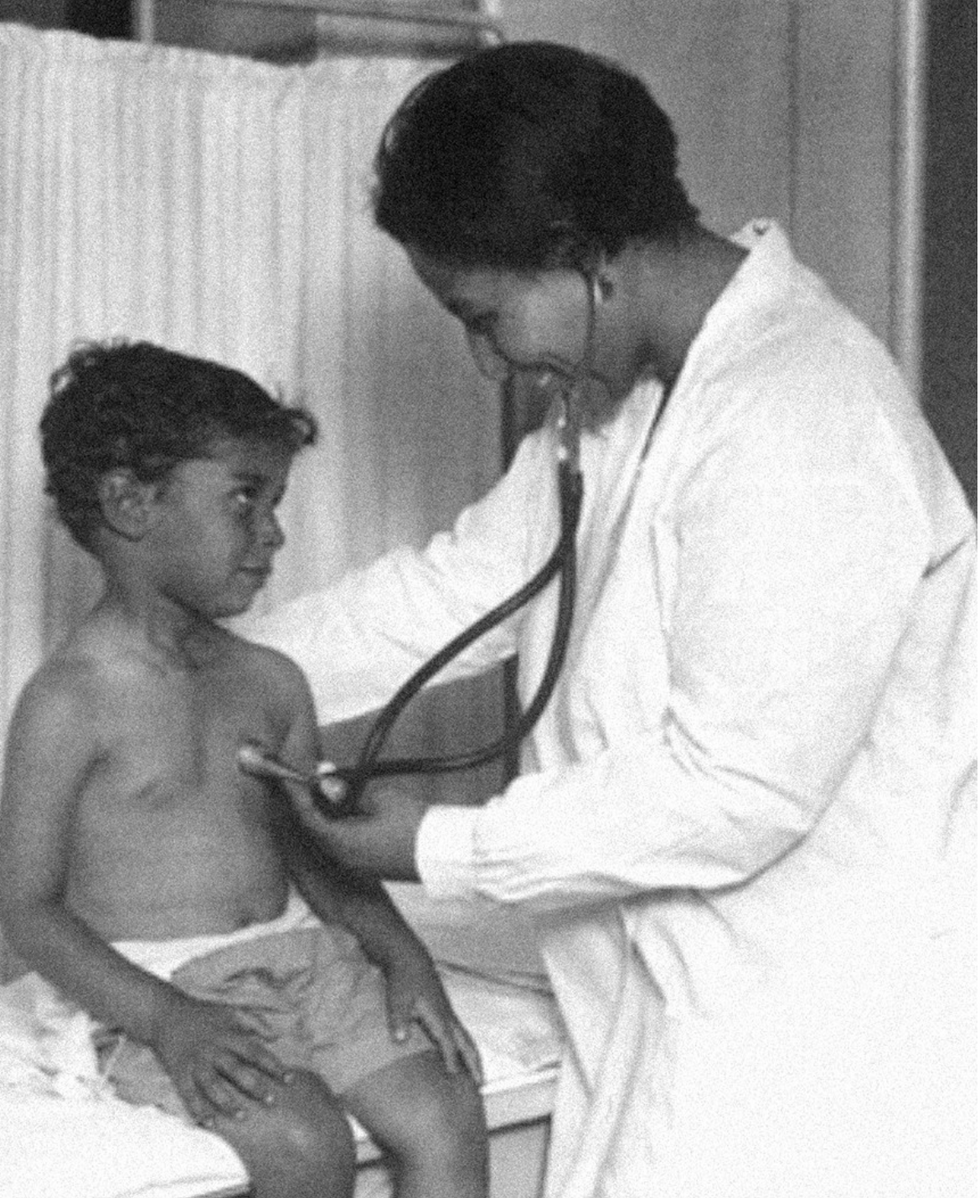

Dr. May Edward Chinn

Dr. May Edward Chinn practicing medicine in Harlem

George B. Davis, PhD.

Chinn was born to poor parents in New York City just before the start of the 20th century. Although she showed great promise as a pianist, playing with the legendary musician Paul Robeson throughout the 1920s, she decided to study medicine instead. Chinn, like other black doctors of the time, was barred from studying or practicing in New York hospitals. So Chinn formed a private practice and made house calls, sometimes operating in patients’ living rooms, using an ironing board as a makeshift operating table.

Chinn worked among the city’s poor, and in doing this, started to notice her patients had late-stage cancers that often had gone undetected or untreated for years. To learn more about cancer and its prevention, Chinn begged information off white doctors who were willing to share with her, and even accompanied her patients to other clinic appointments in the city, claiming to be the family physician. Chinn took this information and integrated it into her own practice, creating guidelines for early cancer detection that were revolutionary at the time—for instance, checking patient health histories, checking family histories, performing routine pap smears, and screening patients for cancer even before they showed symptoms. For years, Chinn was the only black female doctor working in Harlem, and she continued to work closely with the poor and advocate for early cancer screenings until she retired at age 81.

Alice Ball

Pictorial Press Ltd/Alamy

Alice Ball was a chemist best known for her groundbreaking work on the development of the “Ball Method,” the first successful treatment for those suffering from leprosy during the early 20th century.

In 1916, while she was a young chemistry instructor at the University of Hawaii, Ball studied the effects of Chaulmoogra oil in treating leprosy. This oil was a well-established therapy in Asian countries, but it had such a foul taste and led to such unpleasant side effects that many patients refused to take it.

So Ball developed a method to isolate and extract the active compounds from Chaulmoogra oil to create an injectable medicine. This marked a significant breakthrough in leprosy treatment and became the standard of care for several decades afterward.

Unfortunately, Ball died before she could publish her results, and credit for this discovery was given to another scientist. One of her colleagues, however, was able to properly credit her in a publication in 1922.

Henrietta Lacks

Jonathan Newton/The Washington Post/Getty

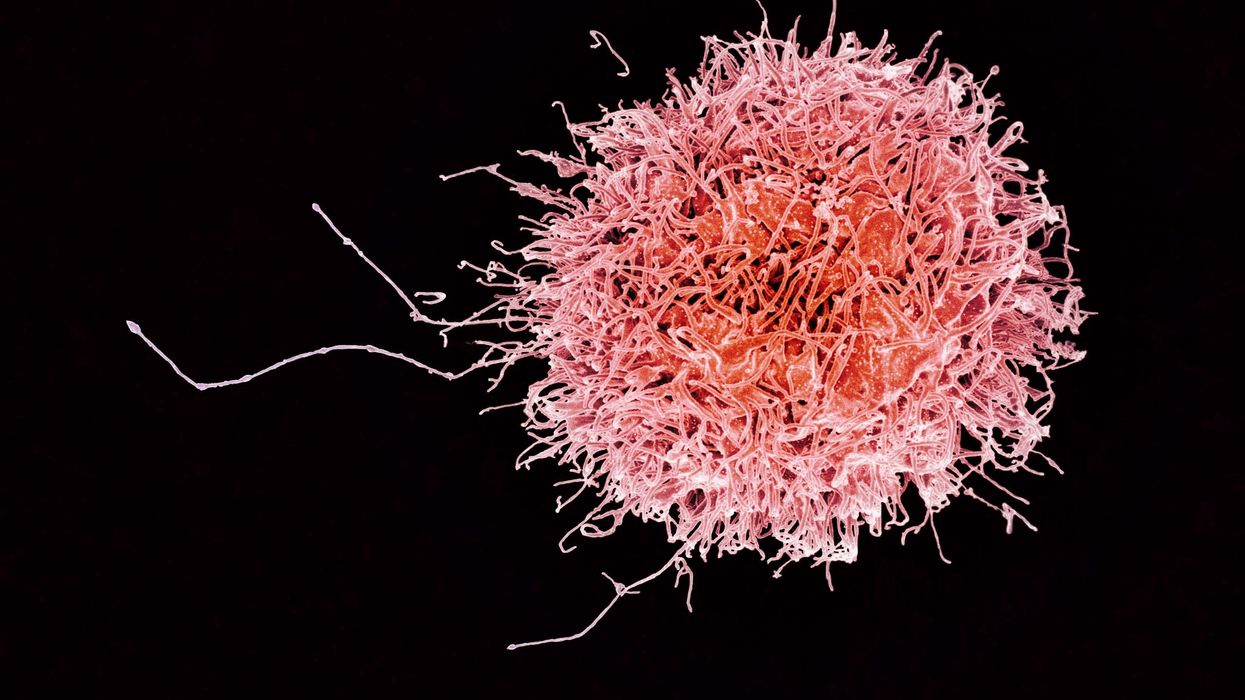

The person who arguably contributed the most to scientific research in the last century, surprisingly, wasn’t even a scientist. Henrietta Lacks was a tobacco farmer and mother of five children who lived in Maryland during the 1940s. In 1951, Lacks visited Johns Hopkins Hospital where doctors found a cancerous tumor on her cervix. Before treating the tumor, the doctor who examined Lacks clipped two small samples of tissue from Lacks’ cervix without her knowledge or consent—something unthinkable today thanks to informed consent practices, but commonplace back then.

As Lacks underwent treatment for her cancer, her tissue samples made their way to the desk of George Otto Gey, a cancer researcher at Johns Hopkins. He noticed that unlike the other cell cultures that came into his lab, Lacks’ cells grew and multiplied instead of dying out. Lacks’ cells were “immortal,” meaning that because of a genetic defect, they were able to reproduce indefinitely as long as certain conditions were kept stable inside the lab.

Gey started shipping Lacks’ cells to other researchers across the globe, and scientists were thrilled to have an unlimited amount of sturdy human cells with which to experiment. Long after Lacks died of cervical cancer in 1951, her cells continued to multiply and scientists continued to use them to develop cancer treatments, to learn more about HIV/AIDS, to pioneer fertility treatments like in vitro fertilization, and to develop the polio vaccine. To this day, Lacks’ cells have saved an estimated 10 million lives, and her family is beginning to get the compensation and recognition that Henrietta deserved.

Dr. Gladys West

Andre West

Gladys West was a mathematician who helped invent something nearly everyone uses today. West started her career in the 1950s at the Naval Surface Warfare Center Dahlgren Division in Virginia, and took data from satellites to create a mathematical model of the Earth’s shape and gravitational field. This important work would lay the groundwork for the technology that would later become the Global Positioning System, or GPS. West’s work was not widely recognized until she was honored by the US Air Force in 2018.

Dr. Kizzmekia "Kizzy" Corbett

TIME Magazine

At just 35 years old, Kizzmekia “Kizzy” Corbett has already made history. A viral immunologist by training, Corbett studied coronaviruses at the National Institutes of Health (NIH) and researched possible vaccines for coronaviruses such as SARS (Severe Acute Respiratory Syndrome) and MERS (Middle East Respiratory Syndrome).

At the start of the COVID pandemic, Corbett and her team at the NIH partnered with pharmaceutical giant Moderna to develop an mRNA-based vaccine against the virus. Corbett’s previous work with mRNA and coronaviruses was vital in developing the vaccine, which became one of the first to be authorized for emergency use in the United States. The vaccine, along with others, is responsible for saving an estimated 14 million lives.

On today’s episode of Making Sense of Science, I’m honored to be joined by Dr. Paul Song, a physician, oncologist, progressive activist and biotech chief medical officer. Through his company, NKGen Biotech, Dr. Song is leveraging the power of patients’ own immune systems by supercharging the body’s natural killer cells to make new treatments for Alzheimer’s and cancer.

Whereas other treatments for Alzheimer’s focus directly on reducing the build-up of proteins in the brain such as amyloid and tau in patients with mild cognitive impairment, NKGen is seeking to help patients that much of the rest of the medical community has written off as hopeless cases: those with late-stage Alzheimer’s. And in small studies, NKGen has shown remarkable results, even improvement in the symptoms of people with these very progressed forms of Alzheimer’s, above and beyond slowing down the disease.

In the realm of cancer, Dr. Song is similarly setting his sights on another group of patients for whom treatment options are few and far between: people with solid tumors. Whereas some gradual progress has been made in treating blood cancers such as certain leukemias in the past few decades, solid tumors have been even more of a challenge. But Dr. Song’s approach of using natural killer cells to treat solid tumors is promising. You may have heard of CAR-T, which uses genetic engineering to introduce cells into the body that have a particular function to help treat a disease. NKGen focuses on other means to enhance the 40-plus receptors of natural killer cells, making them more receptive and sensitive to picking out cancer cells.

Paul Y. Song, MD, is currently CEO and Vice Chairman of NKGen Biotech. Dr. Song’s last clinical role was Assistant Professor at the Samuel Oschin Cancer Center at Cedars-Sinai Medical Center.

Dr. Song served as the very first visiting fellow on healthcare policy in the California Department of Insurance in 2013. He is currently on the advisory board of the Pritzker School of Molecular Engineering at the University of Chicago and a board member of Mercy Corps, The Center for Health and Democracy, and Gideon’s Promise.

Dr. Song graduated with honors from the University of Chicago and received his MD from George Washington University. He completed his residency in radiation oncology at the University of Chicago where he served as Chief Resident and did a brachytherapy fellowship at the Institute Gustave Roussy in Villejuif, France. He was also awarded an ASTRO research fellowship in 1995 for his research in radiation inducible gene therapy.

With Dr. Song’s leadership, NKGen Biotech’s work on natural killer cells represents cutting-edge science leading to key findings and important pieces of the puzzle for treating two of humanity’s most intractable diseases.

Show links

- Paul Song LinkedIn

- NKGen Biotech on Twitter - @NKGenBiotech

- NKGen Website: https://nkgenbiotech.com/

- NKGen appoints Paul Song

- Patient Story: https://pix11.com/news/local-news/long-island/promising-new-treatment-for-advanced-alzheimers-patients/

- FDA Clearance: https://nkgenbiotech.com/nkgen-biotech-receives-ind-clearance-from-fda-for-snk02-allogeneic-natural-killer-cell-therapy-for-solid-tumors/

- Q3 earnings data: https://www.nasdaq.com/press-release/nkgen-biotech-inc.-reports-third-quarter-2023-financial-results-and-business