Trading syphilis for malaria: How doctors treated one deadly disease by infecting patients with another

In the 1920s, doctors induced high fevers in patients, a practice known as "fever therapy," to help them recover from syphilis, though the method raised serious ethical problems.

If you had lived one hundred years ago, syphilis – a bacterial infection spread by sexual contact – would likely have been one of your worst nightmares. Even though syphilis still exists, it can now be detected early and cured quickly with a course of antibiotics. Back then, however, before antibiotics and without an easy way to detect the disease, syphilis was very often a death sentence.

To understand how feared syphilis once was, it’s important to understand exactly what it does if it’s allowed to progress. The infection starts as a small, painless sore, or sometimes several, near the vagina, penis, anus, or mouth. The sores disappear around three to six weeks after the initial infection, but untreated, syphilis moves into a secondary stage, often presenting as a mild rash on various parts of the body (such as the palms of the hands) or through other minor symptoms. The disease progresses from there, often quietly and without noticeable symptoms, sometimes for decades before it reaches its final stages, where it can cause blindness, organ damage, and even dementia. Research indicates, in fact, that as much as 10 percent of psychiatric admissions in the early 20th century were due to dementia caused by syphilis of the nervous system, known as neurosyphilis.

Syphilis can affect children, too. Though it’s spread primarily through sexual contact, it can also be transmitted from mother to child during pregnancy or birth, causing lifelong disability.

The poet-physician Aldabert Bettman, who wrote fictionalized poems based on his experiences as a doctor in the 1930s, described the effect syphilis could have on an infant in his poem Daniel Healy:

I always got away clean

when I went out

With the boys.

The night before

I was married

I went out,—But was not so fortunate;

And I infected

My bride.

When little Daniel

Was born

His eyes discharged;

And I dared not tell

That because

I had seen too much

Little Daniel sees not at all

Given the horrors of untreated syphilis, it’s perhaps not surprising that people went to extremes to treat it. One of the earliest remedies, dating back to 15th-century Naples, was mercury: either rubbed on the skin where blisters appeared, or inhaled as a vapor. (Not surprisingly, many people who underwent this “treatment” died of mercury poisoning.)

Other early treatments included tinctures made from a flowering plant called guaiacum, as well as “sweat baths” meant to purge the body of syphilitic toxins. In 1910, an arsenic-based drug called Salvarsan hit the market and was hailed as a “magic bullet” for its ability to target and destroy the syphilis-causing bacterium without harming the patient. However, while Salvarsan was effective against early-stage syphilis, it was largely ineffective once the infection had progressed beyond the second stage. Tens of thousands of people each year continued to die of syphilis or were committed to psychiatric wards with neurosyphilis.

It was in one of these psychiatric units in the early 20th century that Dr. Julius Wagner-Jauregg got the idea for a potential cure.

Wagner-Jauregg was an Austrian-born physician trained in “experimental pathology” at the University of Vienna. He began his medical career conducting lab experiments on animals and then moved on to work at different psychiatric clinics in Vienna, despite having no training in psychiatry or neurology.

Wagner-Jauregg’s work was controversial, to say the least. At the time, medicine, and psychiatric medicine in particular, was held to nothing like the rigorous ethical standards that bind doctors, researchers, and other scientists today. Wagner-Jauregg would devise wild theories about the causes of his patients’ psychiatric ailments and then perform experimental procedures in an attempt to cure them. (As just one example, he sterilized his adolescent male patients, believing that “excessive masturbation” was the cause of their schizophrenia.)

But sometimes these wild theories paid off. In 1883, during his residency, Wagner-Jauregg noted that a female patient with mental illness who had contracted a skin infection and run a high fever experienced a sudden (and seemingly miraculous) remission of her psychotic symptoms once the fever had cleared. Wagner-Jauregg theorized that inducing a high fever in his patients with neurosyphilis could help them recover as well.

Eventually, Wagner-Jauregg was able to put his theory to the test. Around 1890, he got his hands on tuberculin, a preparation that the German microbiologist Robert Koch had introduced as a cure for tuberculosis. Tuberculin would later turn out to be completely ineffective against tuberculosis, often triggering severe immune reactions in patients, but for a short time Wagner-Jauregg had some success using it to help his dementia patients. Tuberculin produced a high fever, and after completing the treatment, Wagner-Jauregg reported that his patients’ dementia had been completely halted. The success was short-lived, however: Wagner-Jauregg eventually had to discontinue tuberculin as a treatment, as it came to be considered too toxic.

By 1917, Wagner-Jauregg’s theory about syphilis and fevers was gaining credibility, and one day a new opportunity presented itself when a wounded soldier, stricken with malaria and its accompanying fever, was accidentally admitted to his psychiatric unit.

What Wagner-Jauregg did next was ethically deplorable by any standard: before he allowed the soldier any quinine (the standard treatment for malaria at the time), he took a small sample of the soldier’s blood and inoculated three syphilis patients with it, rubbing the blood on their open syphilitic blisters.

It’s unclear how well the malaria treatment worked for those three specific patients, but Wagner-Jauregg’s records show that in the span of one year he inoculated a total of nine patients with malaria, for the sole purpose of inducing fevers, and six of them made a full recovery. The treatment was so successful, in fact, that one of his inoculated patients, an actor who had been unable to work because of his dementia, eventually found work again and returned to the stage. Two other patients, a military officer and a clerk, recovered from their once-terminal illnesses and returned to their former careers as well.

When his findings were published in 1918, Wagner-Jauregg’s so-called “fever therapy” swept the globe. The treatment was hailed as a breakthrough, but it still had risks: malaria itself had a mortality rate of about 15 percent at the time. Many people considered that a gamble worth taking compared with dying a painful, protracted death from syphilis.

Malaria had another advantage: much of the time it could be treated effectively with quinine, whereas other fever-causing illnesses were not so easily controlled. Inducing fever with malaria specifically therefore became the standard of care.

Tens of thousands of people with syphilitic dementia would go on to be treated with fever therapy until the early 1940s, when a combination of Salvarsan and penicillin caused syphilis infections to decline. Eventually, neurosyphilis became rare, and then nearly unheard of.

Despite his contributions to medicine, Wagner-Jauregg was most definitely not a person to idolize. He was an outspoken anti-Semite and proponent of eugenics, arguing that Jews were more prone to mental illness and that people who were mentally ill should be forcibly sterilized. (Wagner-Jauregg later became a Nazi sympathizer during Hitler’s rise to power even though, bizarrely, his first wife was Jewish.) Another problem was that fever therapy meant experimenting on many patients who, because of their cognitive impairment, could not give informed consent.

Lack of consent was also a fundamental problem with the syphilis study at Tuskegee, the appalling research that began just 14 years after Wagner-Jauregg published his “fever therapy” findings.

Still, despite his outrageous views, Wagner-Jauregg was awarded the Nobel Prize in Physiology or Medicine in 1927, and for all its egregious human rights abuses, the miraculous “fever therapy” was partly responsible for taming one of the deadliest plagues in human history.

Researchers are looking to engineer chocolate with less oil, which could reduce some of its health drawbacks.

Creamy milk with velvety texture. Dark with sprinkles of sea salt. Crunchy hazelnut-studded chunks. Chocolate is a treat that appeals to billions of people worldwide, of all ages. And it’s not only the taste but the feel of a chocolate morsel slowly melting in our mouths, its smoothness and slipperiness, that makes it so overwhelmingly satisfying. Why is it so enjoyable?

That’s what an interdisciplinary research team of chocolate lovers from the University of Leeds School of Food Science and Nutrition and School of Mechanical Engineering in the U.K. set out to study in 2021. They wanted to know, “What is making chocolate that desirable?” says Siavash Soltanahmadi, one of the lead authors of a new study about chocolate’s hedonistic qualities.

Besides addressing the researchers’ general curiosity, their answers might help chocolate manufacturers make the delicacy even more enjoyable and potentially healthier. After all, chocolate is a billion-dollar industry. Revenue from chocolate sales, whether milk or dark, is forecasted to grow 13 percent by 2027 in the U.K. In the U.S., chocolate and candy sales increased by 11 percent from 2020 to 2021, on track to reach $44.9 billion by 2026. Figuring out how chocolate affects the human palate could up the ante even more.

Building a 3D tongue

The team began by building a 3D tongue to analyze the physical process by which chocolate breaks down inside the mouth.

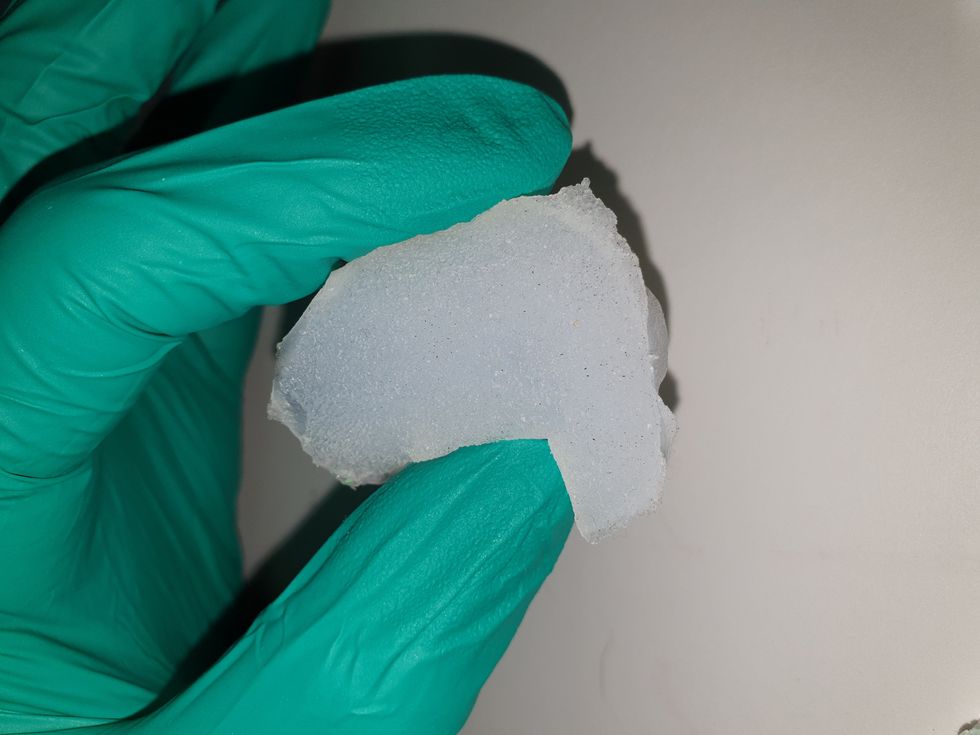

As part of the effort, reported earlier this year in the scientific journal ACS Applied Materials and Interfaces, the team studied a large variety of human tongues with the aim of building an “average” 3D model, says Soltanahmadi, a lubrication scientist. When it comes to edible substances, lubrication science looks at how food feels in the mouth and can help design foods that taste better, have a more satisfying texture, or offer health benefits.

Tongue impressions from human participants, studied using optical imaging, helped the team build a tongue with key characteristics. “Our tongue is not a smooth muscle, it’s got some texture, it has got some roughness,” Soltanahmadi says. From those images, the team came up with a digital design of an average tongue and, using 3D-printed molds, built a “mimic tongue.” They also used elastomers, such as silicone or polyurethane, to mimic the roughness, texture, and mechanical properties of a real tongue. “Wettability” was another key property of the 3D tongue, Soltanahmadi says, referring to how readily a liquid spreads over a surface: a hydrophilic surface attracts water, while a hydrophobic one, like an oily surface, repels it.

Notably, the resulting artificial 3D tongues looked nothing like the human version, but they were good mimics. The scientists also created “testing kits” that produced data on various physical parameters. One such parameter was viscosity, a measure of how thick or gooey a food or liquid is: honey is more viscous than water, for example. Another was friction, the subject of tribology, which captures how slippery something is: high-fat yogurt is more slippery than low-fat yogurt, and milk can be more slippery than water. The researchers then mixed chocolate with artificial saliva and spread it on the 3D tongue to measure its friction and viscosity. From there they were able to study what happens inside the mouth when we eat chocolate.
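For readers curious about the arithmetic behind those two measurements, here is a minimal Python sketch. It assumes the textbook definitions: the friction coefficient is the friction force divided by the normal load pressing two surfaces together (a lower value means a more slippery contact), and apparent viscosity is shear stress divided by shear rate. The function names and sample readings are hypothetical illustrations, not values or methods taken from the Leeds study.

```python
def friction_coefficient(friction_force_n: float, normal_load_n: float) -> float:
    """Coefficient of friction: tangential friction force (N) / normal load (N).

    Dimensionless; a lower value means a more slippery contact.
    """
    if normal_load_n <= 0:
        raise ValueError("normal load must be positive")
    return friction_force_n / normal_load_n


def apparent_viscosity(shear_stress_pa: float, shear_rate_per_s: float) -> float:
    """Apparent viscosity in Pa*s: shear stress (Pa) / shear rate (1/s)."""
    if shear_rate_per_s <= 0:
        raise ValueError("shear rate must be positive")
    return shear_stress_pa / shear_rate_per_s


if __name__ == "__main__":
    # Hypothetical readings for two samples sliding under the same 2 N load.
    for name, friction_n in [("sample A", 0.30), ("sample B", 0.10)]:
        mu = friction_coefficient(friction_n, 2.0)
        print(f"{name}: friction coefficient = {mu:.2f}")
    # Hypothetical rheometer reading: 5 Pa of shear stress at a shear rate of 100 per second.
    print(f"apparent viscosity = {apparent_viscosity(5.0, 100.0):.3f} Pa*s")
```

In this framing, “more slippery” simply means a lower friction coefficient under the same load, so sample B would be the slicker of the two hypothetical samples.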

The team focused on the stages of lubrication and the location of the fat in the chocolate, aspects of oral processing that have rarely been researched.

The artificial 3D-tongues look nothing like human tongues, but they function well enough to do the job.

Courtesy Anwesha Sarkar and University of Leeds

The oral processing of chocolate

We process food in our mouths in several stages, Soltanahmadi says. And there is variation in these stages depending on the type of food. So, the oral processing of a piece of meat would be different from, say, the processing of jelly or popcorn.

Chocolate in particular is eaten in different ways: some people chew it, while others let it melt and explore it with their tongues, Soltanahmadi explains. “Usually, from a consumer perspective, what we find is that if you have a luxury kind of a chocolate, then people tend to start with licking the chocolate rather than chewing it.” The researchers used a luxury brand of dark chocolate and focused on licking rather than chewing.

Understanding the make-up of the chocolate was also an important step in the study. “Chocolate is a composite material. So, it has cocoa butter, which is oil, it has some particles in it, which is cocoa solid, and it has sugars," Soltanahmadi says. "Dark chocolate has less oil, for example, and less sugar in it, most of the time."

The researchers determined that the oral processing of chocolate begins as soon as it enters a person’s mouth: it starts melting at body temperature, even before the tongue starts moving, Soltanahmadi says. Then lubrication begins. “[Saliva] mixes with the oily chocolate and it makes an emulsion.” An emulsion is a fluid with a watery (or aqueous) phase and an oily phase. As chocolate breaks down in the mouth, the solid piece turns into a smooth emulsion with a fatty film. “The oil from the chocolate becomes droplets in a continuous aqueous phase,” says Soltanahmadi. In other words, as solid cocoa particles and fat are released, the emulsion envelops the tongue and coats the palate, creating a smooth feeling of chocolate all over the mouth. That tactile sensation is part of the hedonistic appeal we crave, says Soltanahmadi.

Finding the sweet spot

After determining how chocolate is orally processed, the research team wanted to pinpoint the sweet spot in the breakdown of solid cocoa particles and fat as they are released into the mouth. They determined that the epicurean pleasure comes only from the chocolate’s outer layer of fat; the secondary fatty layers inside the chocolate don’t add to the sensation. It was this final discovery that suggested it might be possible to produce a healthier chocolate containing less oil, and therefore less fat, says Soltanahmadi.

Rongjia Tao, a physicist at Temple University in Philadelphia, thinks the Leeds study and the concept behind it are “very interesting.” Tao himself conducted a study in 2016 and found he could reduce the fat in milk chocolate by 20 percent. He believes that the Leeds researchers’ discovery that the first layer of fat matters more for taste than the layers beneath it can inform future chocolate manufacturing. “As a scientist I consider this significant and an important starting point,” he says.

Not everyone thinks it’s a good idea. Chef Michael Antonorsi, founder and owner of Chuao Chocolatier, one of the leading chocolate makers in the U.S., is among the skeptics. First, he says, “cacao fat is definitely a good fat.” Second, he’s not thrilled that science is trying to interfere with nature. “Every time we've tried to intervene and change nature, we get things out of balance,” says Antonorsi. “There’s a reason cacao is botanically known as food of the gods. The botanical name is the Theobroma cacao: Theobroma in ancient Greek, Theo is God and broma is food. So it's a food of the gods,” Antonorsi explains. He’s doubtful that a chocolate made only with a top layer of fat will produce the same epicurean satisfaction. “You're not going to achieve the same sensation because that surface fat is going to dissipate and there is no fat from behind coming to take over,” he says.

Without layers of fat, Antonorsi fears the deeply satisfying experiential part of savoring chocolate will be lost. The University of Leeds team, however, thinks that it may be possible to make chocolate healthier, when consumed in limited amounts, without sacrificing its taste. They believe the concept of less fatty but no less slick chocolate will resonate with at least some chocolate-makers and consumers, too.

Chocolate already contains some healthful compounds. Its cocoa particles have “loads of health benefits,” says Soltanahmadi. Dark chocolate usually has more cocoa than milk chocolate, and some experts recommend that dark chocolate contain at least 70 percent cocoa to offer any health benefit. Cocoa is rich in polyphenols, naturally occurring compounds also found in fruits and vegetables such as grapes, apples, and berries, and research has shown that consuming plant polyphenols can help protect against cancer, diabetes, and osteoporosis, as well as cardiovascular and neurodegenerative diseases.

“So keeping the healthy part of it and reducing the oily part of it, which is not healthy, but is giving you that indulgence of it … that was the final aim,” Soltanahmadi says. He adds that the team has been approached by individuals in the chocolate industry about their research. “Everyone wants to have a healthy chocolate, which at the same time tastes brilliant and gives you that self-indulging experience.”

Probiotic bacteria can be engineered to fight antibiotic-resistant superbugs by releasing chemicals that kill them.

In 1945, almost two decades after discovering penicillin, Alexander Fleming warned that as antibiotic use grew, the drugs might lose their effectiveness. He was prescient: the first case of penicillin resistance was reported two years later. Back then, not many people paid attention to Fleming’s warning. After all, the “golden era” of antibiotics had just begun. By the 1950s, three new antibiotics derived from soil bacteria (streptomycin, chloramphenicol, and tetracycline) could cure infectious diseases like tuberculosis, cholera, meningitis, and typhoid fever, among others.

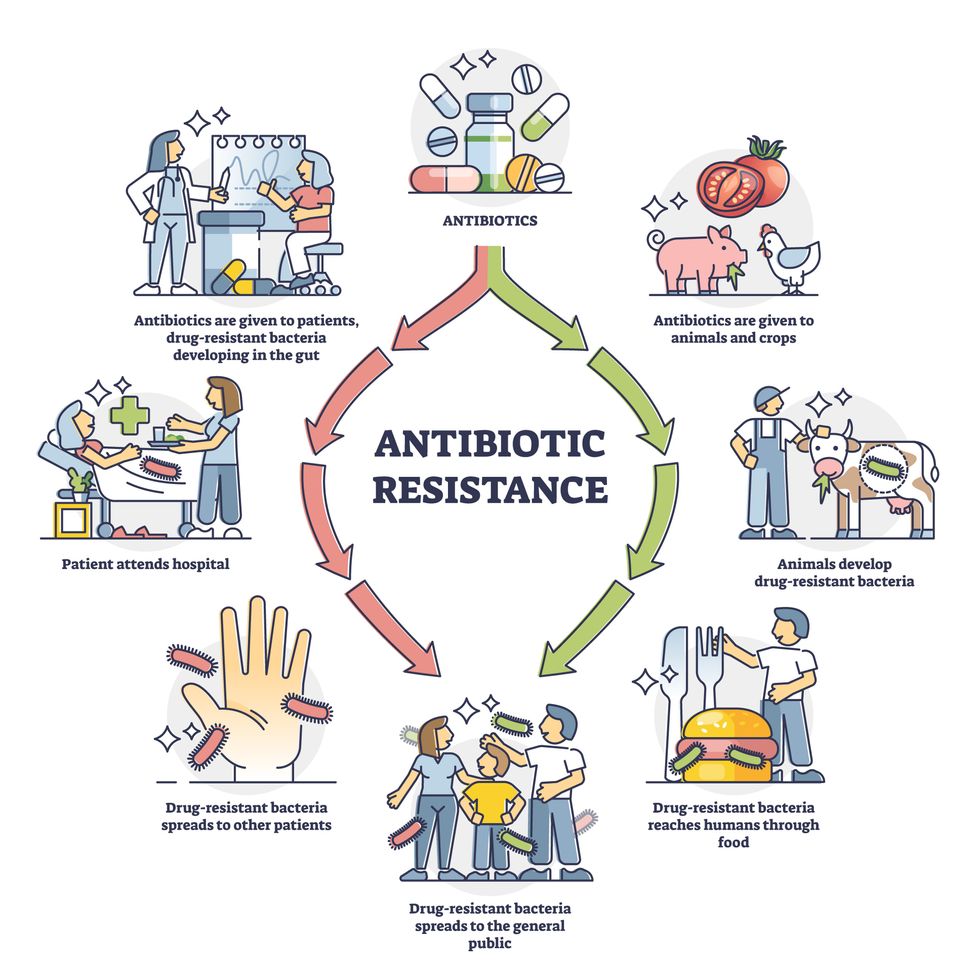

Today, these antibiotics and many of their successors developed through the 1980s are gradually losing their effectiveness. Extensive overuse and misuse of antibiotics has driven the rise of drug resistance. The livestock sector buys around 80 percent of all antibiotics sold in the U.S. every year. Farmers feed cows and chickens low doses of antibiotics to prevent infections and fatten up the animals, which eventually causes resistant bacterial strains to evolve. If manure from cattle is used on fields, the soil and vegetables can become contaminated with antibiotic-resistant bacteria. Another major factor is doctors overprescribing antibiotics to humans, particularly in low-income countries. Between 2000 and 2018, global rates of human antibiotic consumption shot up by 46 percent.

In recent years, researchers have been exploring a promising avenue: using synthetic biology to engineer bacteria that may work better than antibiotics. The need keeps growing: a Lancet study attributed an estimated 1.27 million deaths worldwide in 2019 to antibiotic resistance, more than HIV/AIDS or malaria. The western sub-Saharan Africa region had the highest death rate, at 27.3 deaths per 100,000 people.

To make matters worse, the pipeline of new remedies is drying up. Of the 18 biggest pharmaceutical companies, 15 had abandoned antibiotic development by 2013. According to the AMR Action Fund, venture capital has remained indifferent to biotech start-ups developing new antibiotics. In 2019, at least two antibiotic start-ups filed for bankruptcy. As of December 2020, there were 43 new antibiotics in clinical development, but because they are based on previously known molecules, scientists say they are inadequate for treating multidrug-resistant bacteria. Researchers warn that if nothing changes, antibiotic resistance could kill 10 million people annually by 2050.

The rise of synthetic biology

To avert this dire future, scientists have been working on alternative solutions using synthetic biology tools, in particular genetically modifying good bacteria to fight the bad ones.

Ever since life emerged on Earth around 3.8 billion years ago, bacteria have engaged in biological warfare, constantly evolving new ways to fight one another by synthesizing toxic proteins that kill the competition.

For example, Escherichia coli produces toxins called bacteriocins to kill other strains of E. coli that attempt to colonize the same habitat. Microbes like E. coli (which are not all pathogenic) are also naturally present in the human microbiome, which harbors up to 100 trillion symbiotic microbial cells. Most of them are beneficial organisms living in the gut in varying compositions.

The chemicals that these “good bacteria” produce do not pose any health risks to us, but can be toxic to other bacteria, particularly to human pathogens. For the last three decades, scientists have been manipulating bacteria’s biological warfare tactics to our collective advantage.

In the late 1990s, researchers drew inspiration from electrical and computer engineering, where digital circuits are built to control devices. In certain ways, every cell in a living organism works like a tiny computer. The cell receives messages in the form of biochemical molecules that bind to its surface, and those messages get processed inside the cell through a series of complex molecular interactions.

Synthetic biologists can harness these living cells’ information-processing abilities to construct genetic circuits that carry out specific instructions, for example, secreting a toxin that kills pathogenic bacteria. “Any synthetic genetic circuit is merely a piece of information that hangs around in the bacteria’s cytoplasm,” explains José Rubén Morones-Ramírez, a professor at the Autonomous University of Nuevo León, Mexico. Then the ribosome, which synthesizes proteins in the cell, processes that new information, making the compounds scientists want the bacteria to make. “The genetic circuit remains separated from the living cell’s DNA,” Morones-Ramírez explains. When the engineered bacterium replicates, the genetic circuit doesn’t become part of its genome.

In 2000, Boston-based researchers constructed an E. coli strain with a genetic switch that could toggle genes on and off. Later, they built safety checks into their bacteria. “To prevent unintentional or deleterious consequences, in 2009, we built a safety switch in the engineered bacteria’s genetic circuit that gets triggered after it gets exposed to a pathogen,” says James Collins, a professor of biological engineering at MIT and faculty member at Harvard University’s Wyss Institute. “After getting rid of the pathogen, the engineered bacteria is designed to switch off and leave the patient's body.”
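A genetic toggle switch can be pictured with a simple model that is widely used for this kind of circuit: two genes whose proteins repress each other, so that the pair settles into one of two stable states and stays there. Below is a minimal Python sketch of that dimensionless model. The parameter values (synthesis rates of 10, Hill exponents of 2) are illustrative assumptions, not measurements from the Boston team, and `simulate_toggle` and its `inactivate_u_until` pulse are hypothetical names; the pulse stands in for a chemical inducer that temporarily disables one repressor.

```python
def simulate_toggle(u, v, t_end=50.0, dt=0.01, alpha1=10.0, alpha2=10.0,
                    beta=2.0, gamma=2.0, inactivate_u_until=0.0):
    """Euler-integrate a two-repressor toggle switch (dimensionless units).

    du/dt = alpha1 / (1 + v**beta) - u    (v represses synthesis of u)
    dv/dt = alpha2 / (1 + u**gamma) - v   (u represses synthesis of v)

    While t < inactivate_u_until, u's repression of v is switched off,
    a crude stand-in for an inducer that inactivates repressor u.
    Returns the final (u, v).
    """
    t = 0.0
    while t < t_end:
        u_effective = 0.0 if t < inactivate_u_until else u
        du = alpha1 / (1.0 + v ** beta) - u
        dv = alpha2 / (1.0 + u_effective ** gamma) - v
        u += du * dt
        v += dv * dt
        t += dt
    return u, v


if __name__ == "__main__":
    # Start in the "u on, v off" state; with no pulse the switch stays put.
    print("no pulse:   u=%.2f v=%.2f" % simulate_toggle(9.9, 0.1))
    # A transient pulse flips it to "u off, v on", and it stays flipped
    # long after the pulse ends.
    print("with pulse: u=%.2f v=%.2f" % simulate_toggle(9.9, 0.1, inactivate_u_until=10.0))
```

Because the pulse lasts only until t = 10 while the run continues to t = 50, the second result shows the circuit holding its new state with no further input, which is what lets such a switch act like a one-bit memory.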

Overuse and misuse of antibiotics cause resistant strains to evolve

Adobe Stock

Seek and destroy

As the field of synthetic biology developed, scientists began using engineered bacteria to tackle superbugs. They first focused on Vibrio cholerae, which in the 19th and 20th centuries caused cholera pandemics in India, China, the Middle East, Europe, and the Americas. Like many other bacteria, V. cholerae cells communicate with one another via quorum sensing, a process in which the microorganisms release signaling molecules to convey messages to their brethren. Highly intelligent by bacterial standards, some multidrug-resistant V. cholerae strains can also “collaborate” with other intestinal bacterial species to gain an advantage and take hold of the gut. Untreated, cholera has a mortality rate of 25 to 50 percent, and outbreaks frequently occur in developing countries, especially during floods and droughts.
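Quorum sensing works because every cell releases a little signal, so the signal’s concentration tracks how crowded the population is; only when enough cells are present does it cross the threshold that switches on group behavior. Here is a minimal Python sketch of that logic, assuming logistic population growth and a signal that is produced in proportion to cell number and degrades at a constant rate. The function name and every parameter value are hypothetical illustrations, not measured properties of V. cholerae.

```python
def quorum_sensing(n0=1e3, growth_rate=0.5, capacity=1e9,
                   production=1e-9, decay=0.1, threshold=5.0,
                   dt=0.01, t_end=80.0):
    """Return (time, population) when the signal first reaches threshold, or None.

    Euler integration, arbitrary units:
      dN/dt = r * N * (1 - N / K)   logistic growth of the population
      dA/dt = p * N - d * A         signal made by every cell, then degraded
    """
    n, a, t = n0, 0.0, 0.0
    while t < t_end:
        dn = growth_rate * n * (1.0 - n / capacity)
        da = production * n - decay * a
        n += dn * dt
        a += da * dt
        t += dt
        if a >= threshold:
            return t, n  # quorum reached: signal high enough to switch genes on
    return None


if __name__ == "__main__":
    result = quorum_sensing()
    if result is None:
        print("signal never reached the threshold")
    else:
        t_q, n_q = result
        print(f"quorum reached at t={t_q:.1f} with {n_q:.2e} cells")
```

Lowering `capacity` so the population can never get dense enough (for example, `capacity=1e8`) keeps the signal permanently below the threshold, which is the sense in which a sparse population never “votes” to act.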

Sometimes, however, V. cholerae makes mistakes. In 2008, researchers at Cornell University observed that when quorum-sensing V. cholerae accidentally released high concentrations of a signaling molecule called CAI-1, it backfired: the pathogen couldn’t colonize the gut.

So the group, led by John March, professor of biological and environmental engineering, developed a novel strategy to combat V. cholerae. They genetically engineered E. coli to eavesdrop on V. cholerae’s communication networks and equipped it with the ability to release CAI-1 molecules, which interfered with the pathogen’s progress. Two years later, the Cornell team showed that V. cholerae-infected mice treated with the engineered E. coli had a 92 percent survival rate.

In 2011, Singapore-based scientists engineered E. coli to detect and destroy Pseudomonas aeruginosa, an often drug-resistant pathogen that causes pneumonia, urinary tract infections, and sepsis. Once the engineered E. coli detected its target through the pathogen’s quorum-sensing molecules, it released a peptide that eradicated 99 percent of P. aeruginosa cells in a test-tube experiment. The team outlined their work in a Molecular Systems Biology study.

“At the time, we knew that we were entering new, uncharted territory,” says Matthew Chang, an associate professor and synthetic biologist at the National University of Singapore and lead author of the study. “To date, we are still in the process of trying to understand how long these microbes stay in our bodies and how they might continue to evolve.”

More teams followed the same path. In a 2013 study, MIT researchers also genetically engineered E. coli to detect P. aeruginosa via the pathogen’s quorum-sensing molecules; the engineered bacteria then destroyed the pathogen by secreting a lab-made toxin.

Probiotics that fight

A year later, in 2014, a Nature study found that Ruminococcus obeum, a probiotic bacterium that occurs naturally in the human microbiome, interrupts and reduces V. cholerae’s colonization by detecting the pathogen’s quorum-sensing molecules. The natural accumulation of R. obeum in Bangladeshi adults helped them recover from cholera despite living in an area with frequent outbreaks.

The findings from 2008 to 2014 inspired Collins and his team to delve into how good bacteria present in foods like yogurt and kimchi can attack drug-resistant bacteria. In 2018, they developed an engineered-probiotic strategy, tweaking a bacterium commonly found in yogurt, Lactococcus lactis, to treat cholera.

More scientists followed with more experiments. So far, researchers have engineered various probiotic organisms to fight pathogenic bacteria like Staphylococcus aureus (a leading cause of skin, tissue, bone, joint, and blood infections) and Clostridium perfringens (which causes watery diarrhea) in test-tube and animal experiments. In 2020, Russian scientists engineered the yeast Pichia pastoris to produce an enzyme called lysostaphin that eradicated S. aureus in vitro. Another 2020 study from China used an engineered probiotic bacterium, Lactobacillus casei, as a vaccine to prevent C. perfringens infection in rabbits.

In a study last year, Morones-Ramírez’s group at the Autonomous University of Nuevo León engineered E. coli to detect quorum-sensing molecules from methicillin-resistant Staphylococcus aureus (MRSA), a notorious superbug. The E. coli then releases a bacteriocin that kills MRSA. “An antibiotic is just a molecule that is not intelligent,” says Morones-Ramírez. “On the other hand, engineered bacteria can be trained to target pathogens when they are at their most vulnerable metabolic stage in the human gut.”

Collins and Timothy Lu, an associate professor of biological engineering at MIT, found that engineered E. coli can help treat other conditions, such as phenylketonuria, a rare metabolic disorder that causes a build-up of the amino acid phenylalanine. Their start-up, Synlogic, aims to commercialize the technology and has completed a phase 2 clinical trial.

Circumventing the challenges

The bacteria-engineering technique is not without pitfalls. One major challenge is that beneficial gut bacteria produce their own quorum-sensing molecules, which can resemble those that pathogens secrete. If an engineered bacterium’s biosensor is not specific enough, it can be triggered by the wrong signals and prove ineffective.

Another concern is whether engineered bacteria might mutate after entering the gut. “As with any technology, there are risks where bad actors could have the capability to engineer a microbe to act quite nastily,” says Collins of MIT. But Collins and Morones-Ramírez both insist that the chances of the engineered bacteria mutating on their own are virtually non-existent. “It is extremely unlikely for the engineered bacteria to mutate,” Morones-Ramírez says. “Coaxing a living cell to do anything on command is immensely challenging. Usually, the greater risk is that the engineered bacteria entirely lose its functionality.”

However, the biggest challenge is bringing the curative bacteria to consumers. Pharmaceutical companies aren’t interested in antibiotics or their alternatives because they are less profitable than new medicines for non-infectious diseases. Unlike chronic conditions such as diabetes or cancer, which require long-term medication, infectious diseases are usually treated much more quickly. Running clinical trials is expensive, and antibiotic alternatives aren’t lucrative enough to justify the cost.

“Unfortunately, new medications for antibiotic resistant infections have been pushed to the bottom of the field,” says Lu of MIT. “It's not because the technology does not work. This is more of a market issue. Because clinical trials cost hundreds of millions of dollars, the only solution is that governments will need to fund them.” Lu stresses that societies must lobby to change how the modern healthcare industry works. “The whole world needs better treatments for antibiotic resistance.”