The Case for an Outright Ban on Facial Recognition Technology

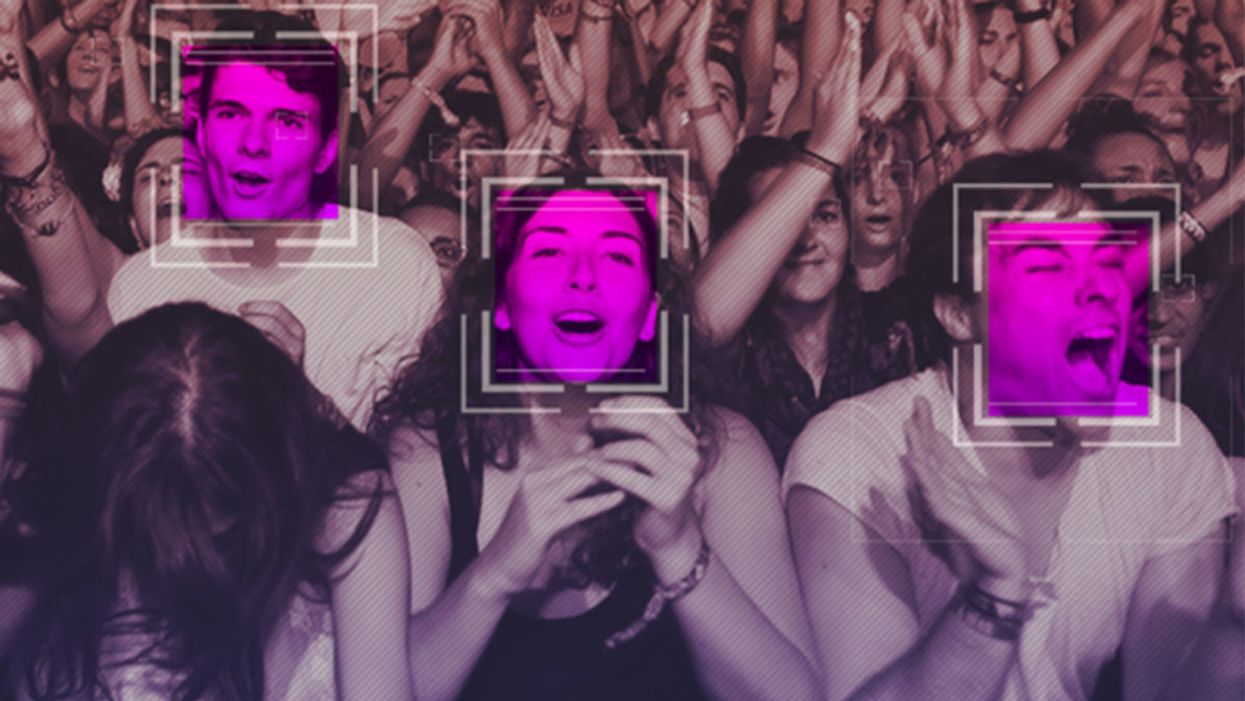

Some experts worry that facial recognition technology is a dangerous enough threat to our basic rights that it should be entirely banned from police and government use.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

In a surprise appearance at the tail end of Amazon's much-hyped annual product event last month, CEO Jeff Bezos casually told reporters that his company is writing its own facial recognition legislation.

It seems that when you're the wealthiest human alive, there's nothing strange about your company, the largest in the world profiting from the spread of face surveillance technology, writing the rules that govern it.

But if lawmakers and advocates fall into Silicon Valley's trap of "regulating" facial recognition and other forms of invasive biometric surveillance, that's exactly what will happen.

Industry-friendly regulations won't fix the dangers inherent in widespread use of face scanning software, whether it's deployed by governments or for commercial purposes. The use of this technology in public places and for surveillance purposes should be banned outright, and its use by private companies and individuals should be severely restricted. As artificial intelligence expert Luke Stark wrote, it's dangerous enough that it should be outlawed for "almost all practical purposes."

Like biological or nuclear weapons, facial recognition poses such a profound threat to the future of humanity and our basic rights that any potential benefits are far outweighed by the inevitable harms.

We live in cities and towns with an exponentially growing number of always-on cameras, installed in everything from cars to children's toys to Amazon's police-friendly doorbells. The use of computer algorithms to analyze massive databases of footage and photographs could render human privacy extinct. It's a world where nearly everything we do, everywhere we go, everyone we associate with, and everything we buy — or look at and even think of buying — is recorded and can be tracked and analyzed at a mass scale for unimaginably awful purposes.

Biometric tracking enables the automated and pervasive monitoring of an entire population. There's ample evidence that this type of dragnet mass data collection and analysis is not useful for public safety, but it's perfect for oppression and social control.

Law enforcement defenders of facial recognition often state that the technology simply lets them do what they would be doing anyway: compare footage or photos against mug shots, driver's licenses, or other databases, but faster. And they're not wrong. But the speed and automation enabled by artificial intelligence-powered surveillance fundamentally change the impact of that surveillance on our society. Being able to do something exponentially faster, and using significantly fewer human and financial resources, alters the nature of that thing. The Fourth Amendment becomes meaningless in a world where private companies record everything we do and provide governments with easy tools to request and analyze footage from a growing, privately owned panopticon.

Tech giants like Microsoft and Amazon insist that facial recognition will be a lucrative boon for humanity, as long as there are proper safeguards in place. This disingenuous call for regulation is straight out of the same lobbying playbook that telecom companies have used to attack net neutrality and Silicon Valley has used to scuttle meaningful data privacy legislation. Companies are calling for regulation because they want their corporate lawyers and lobbyists to help write the rules of the road, to ensure those rules are friendly to their business models. They're trying to skip the debate about what role, if any, technology this uniquely dangerous should play in a free and open society. They want to rush ahead to the discussion about how we roll it out.

Facial recognition is spreading very quickly. But backlash is growing too. Several cities have already banned government entities, including police and schools, from using biometric surveillance. Others have local ordinances in the works, and there's state legislation brewing in Michigan, Massachusetts, Utah, and California. Meanwhile, there is growing bipartisan agreement in the U.S. Congress to rein in government use of facial recognition. We've also seen significant backlash to facial recognition growing in the U.K., within the European Parliament, and in Sweden, which recently banned its use in schools following a fine under the General Data Protection Regulation (GDPR).

At least two frontrunners in the 2020 presidential campaign have backed a ban on law enforcement use of facial recognition. Many of the largest music festivals in the world responded to Fight for the Future's campaign and committed to not using facial recognition technology on music fans.

There has been widespread reporting on the fact that existing facial recognition algorithms exhibit systemic racial and gender bias, and are more likely to misidentify people with darker skin, or anyone a computer does not perceive to be a white man. Critics are right to highlight this algorithmic bias. Facial recognition is being used by law enforcement in cities like Detroit right now, and the racial bias baked into that software is doing harm. It's exacerbating existing forms of racial profiling and discrimination in everything from public housing to the criminal justice system.

But the companies that make facial recognition assure us this bias is a bug, not a feature, and that they can fix it. And they might be right. Face scanning algorithms for many purposes will improve over time. But facial recognition becoming more accurate doesn't make it less of a threat to human rights. This technology is dangerous when it's broken, but at a mass scale, it's even more dangerous when it works. And it will still disproportionately harm our society's most vulnerable members.

We need spaces that are free from government and societal intrusion in order to advance as a civilization. If technology makes it so that laws can be enforced 100 percent of the time, there is no room to test whether those laws are just. If the U.S. government had ubiquitous facial recognition surveillance 50 years ago when homosexuality was still criminalized, would the LGBTQ rights movement ever have formed? In a world where private spaces don't exist, would people have felt safe enough to leave the closet and gather, build community, and form a movement? Freedom from surveillance is necessary for deviation from social norms as well as to dissent from authority, without which societal progress halts.

Persistent monitoring and policing of our behavior breeds conformity, benefits tyrants, and enriches elites. Drawing a line in the sand around tech-enhanced surveillance is the fundamental fight of this generation. Lining up to get our faces scanned to participate in society doesn't just threaten our privacy, it threatens our humanity, and our ability to be ourselves.

When doctors couldn’t stop her daughter’s seizures, this mom earned a PhD and found a treatment herself.

Savannah Salazar (left) and her mother, Tracy Dixon-Salazar, who earned a PhD in neurobiology in the quest for a treatment for her daughter's seizure disorder.

Twenty-eight years ago, Tracy Dixon-Salazar woke to the sound of her daughter, two-year-old Savannah, in the midst of a medical emergency.

“I entered [Savannah’s room] to see her tiny little body jerking about violently in her bed,” Tracy said in an interview. “I thought she was choking.” When she and her husband frantically called 911, the paramedic told them it was likely that Savannah had had a seizure—a term neither Tracy nor her husband had ever heard before.

Over the next several years, Savannah’s seizures continued and worsened. By age five Savannah was having seizures dozens of times each day, and her parents noticed significant developmental delays. Savannah was unable to use the restroom and functioned more like a toddler than a five-year-old.

Doctors were mystified: Tracy and her husband had no family history of seizures, and there was no event, such as an injury or infection, that could have caused them. Doctors were also confused as to why Savannah’s seizures were happening so frequently despite her trying different seizure medications.

Doctors eventually diagnosed Savannah with Lennox-Gastaut syndrome, or LGS, an epilepsy disorder with no cure and a poor prognosis. People with LGS are often resistant to several kinds of anti-seizure medications, and often suffer from developmental delays and behavioral problems. People with LGS also have a higher chance of injury as well as a higher chance of sudden unexpected death in epilepsy (SUDEP) due to the frequent seizures. In about 70 percent of cases, LGS has an identifiable cause such as a brain injury or genetic syndrome. In about 30 percent of cases, however, the cause is unknown.

Watching her daughter struggle through repeated seizures was devastating to Tracy and the rest of the family.

“This disease, it comes into your life. It’s uninvited. It’s unannounced and it takes over every aspect of your daily life,” said Tracy in an interview with Today.com. “Plus it’s attacking the thing that is most precious to you—your kid.”

Desperate to find some answers, Tracy began combing the medical literature for information about epilepsy and LGS. She enrolled in college courses to better understand the papers she was reading.

“Ironically, I thought I needed to go to college to take English classes to understand these papers, but soon learned it wasn’t English classes I needed. It was science,” Tracy said. When she took her first college science course, Tracy says, she “fell in love with the subject.”

Tracy was now a caregiver to Savannah, who continued to have hundreds of seizures a month, as well as a full-time student, studying late into the night and while her kids were at school, using classwork as “an outlet for the pain.”

“I couldn’t help my daughter,” Tracy said. “Studying was something I could do.”

Twelve years later, Tracy had earned a PhD in neurobiology.

After her post-doctoral training, Tracy started working at a lab that explored the genetics of epilepsy. Savannah’s doctors hadn’t found a genetic cause for her seizures, so Tracy decided to sequence her genome again to check for other abnormalities—and what she found was life-changing.

Tracy discovered that Savannah had a calcium channel mutation, meaning that too much calcium was passing through Savannah’s neural pathways, leading to seizures. The information made sense to Tracy: Anti-seizure medications often leach calcium from a person’s bones. When doctors had prescribed Savannah calcium supplements in the past to counteract these effects, her seizures had gotten worse every time she took the supplements. Tracy took her discovery to Savannah’s doctor, who agreed to prescribe her a calcium blocker.

The change in Savannah was almost immediate.

Within two weeks, Savannah’s seizures had decreased by 95 percent. Once on a daily seven-drug regimen, she was soon weaned to just four, and then three. Amazingly, Tracy started to notice changes in Savannah’s personality and development, too.

“She just exploded in her personality and her talking and her walking and her potty training and oh my gosh she is just so sassy,” Tracy said in an interview.

Since starting the calcium blocker eleven years ago, Savannah has continued to make enormous strides. Though still unable to read or write, Savannah enjoys puzzles and social media. She’s “obsessed” with boys, says Tracy. And while Tracy suspects she’ll never be able to live independently, she and her daughter can now share more “normal” moments—something she never anticipated at the start of Savannah’s journey with LGS. While preparing for an event, Savannah helped Tracy get ready.

“We picked out a dress and it was the first time in our lives that we did something normal as a mother and a daughter,” she said. “It was pretty cool.”

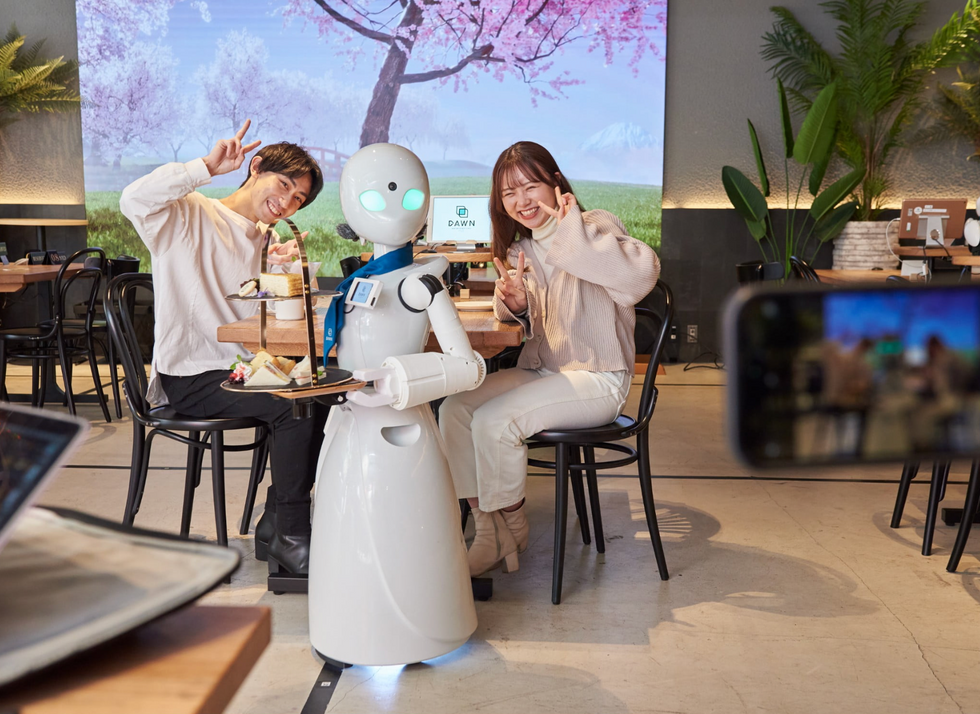

A robot server, controlled remotely by a disabled worker, delivers drinks to patrons at the DAWN cafe in Tokyo.

A sleek, four-foot-tall white robot glides across a cafe storefront in Tokyo’s Nihonbashi district, holding a two-tiered serving tray full of tea sandwiches and pastries. The cafe’s patrons smile and say thanks as they take the tray, but it’s not the robot they’re thanking. Instead, the patrons are talking to the person controlling the robot: a restaurant employee who operates the avatar from the comfort of their home.

It’s a typical scene at DAWN, short for Diverse Avatar Working Network, a cafe that launched in Tokyo six years ago as an experimental pop-up and became an overnight success. Today, the cafe is a permanent fixture in Nihonbashi, staffed by roughly 60 workers who control the robots remotely and communicate with customers via a built-in microphone.

More than just a creative idea, however, DAWN is being hailed as a life-changing opportunity. The workers who control the robots remotely (known as “pilots”) all have disabilities that limit their ability to move around freely and travel outside their homes. An estimated 16 percent of the global population lives with a significant disability, and according to the World Health Organization, these disabilities give rise to other problems, such as exclusion from education, unemployment, and poverty.

These are all problems that Kentaro Yoshifuji, founder and CEO of Ory Laboratory, which supplies the robot servers at DAWN, is looking to correct. Yoshifuji, who was bedridden for several years in high school due to an undisclosed health problem, launched the company to help enable people who are house-bound or bedridden to more fully participate in society, as well as end the loneliness, isolation, and feelings of worthlessness that can sometimes go hand-in-hand with being disabled.

“It’s heartbreaking to think that [people with disabilities] feel they are a burden to society, or that they fear their families suffer by caring for them,” said Yoshifuji in an interview in 2020. “We are dedicating ourselves to providing workable, technology-based solutions. That is our purpose.”

Shota Kuwahara, a DAWN employee with muscular dystrophy, agrees. "There are many difficulties in my daily life, but I believe my life has a purpose and is not being wasted," he says. "Being useful, able to help other people, even feeling needed by others, is so motivational."