To Make Science Engaging, We Need a Sesame Street for Adults

A new kind of television series could establish the baseline narratives for novel science like gene editing, quantum computing, or artificial intelligence.

This article is part of the magazine, "The Future of Science In America: The Election Issue," co-published by LeapsMag, the Aspen Institute Science & Society Program, and GOOD.

In the mid-1960s, a documentary producer in New York City wondered if the addictive jingles, clever visuals, slogans, and repetition of television ads—the ones that were captivating young children of the time—could be harnessed for good. Over the course of three months, she interviewed educators, psychologists, and artists, and the result was a bonanza of ideas.

Perhaps a new TV show could teach children letters and numbers in short animated sequences? Perhaps adults and children could read together with puppets providing comic relief and prompting interaction from the audience? And because it would be broadcast through a device already in almost every home, perhaps this show could reach across socioeconomic divides and close an early education gap?

Soon after Joan Ganz Cooney shared her landmark report, "The Potential Uses of Television in Preschool Education," in 1966, she was prototyping show ideas, attracting funding from The Carnegie Corporation, The Ford Foundation, and The Corporation for Public Broadcasting, and co-founding the Children's Television Workshop with psychologist Lloyd Morrisett. And then, on November 10, 1969, informal learning was transformed forever with the premiere of Sesame Street on public television.

For its first season, Sesame Street won three Emmy Awards and a Peabody Award. Its star, Big Bird, landed on the cover of Time Magazine, which called the show "TV's gift to children." Fifty years later, it's hard to imagine an approach to informal preschool learning that isn't Sesame Street.

And that approach can be boiled down to one word: Entertainment.

Despite decades of evidence from Sesame Street (one of the most studied television shows of all time) and more research from social science, psychology, and media communications, we haven't yet taken Ganz Cooney's concepts to heart in educating adults. Adults have news programs and documentaries and educational YouTube channels, but no Sesame Street. So why don't we make one? Here's how we can design a new kind of television to make science engaging and accessible for a public that is all too often intimidated by it.

We have to start from the realization that America is a nation of high-school graduates. By the end of high school, students have decided to abandon science because they think it's too difficult, and as a nation, we've made it acceptable for any one of us to say "I'm not good at science" and offload thinking to the ones who might be. So is it surprising that so many Americans believe in conspiracy theories: the 25% who believe the release of COVID-19 was planned, the one in ten who believe the Moon landing was a hoax, or the 30–40% who think the condensation trails of planes are actually nefarious chemtrails? If we're meeting people where they are, the aim can't be to get the audience from an A to an A+, but from an F to a D, and without judgment of where they are starting from.

There's also a natural compulsion for a well-meaning educator to fill a literacy gap with a barrage of information, but this is what I call "factsplaining," and we know it doesn't work. And worse, it can backfire. In one study from 2014, parents were provided with factual information about vaccine safety, and it was the group that was already the most averse to vaccines that uniquely became even more averse.

Why? Our social identities and cognitive biases are stubborn gatekeepers when it comes to processing new information. We filter ideas through pre-existing beliefs—our values, our religions, our political ideologies. Incongruent ideas are rejected. Congruent ideas, no matter how absurd, are allowed through. We hear what we want to hear, and then our brains justify the input by creating narratives that preserve our identities. Even when we have all the facts, we can use them to support any worldview.

But social science has revealed many mechanisms for hijacking these processes through narrative storytelling, and this can form the foundation of a new kind of educational television.

As media creators, we can reject factsplaining and instead construct entertaining narratives that disrupt cognitive processes. Two-decade-old research tells us that when people are immersed in entertaining fiction narratives, they loosen their defenses, opening a path for new information, editing attitudes, and inspiring new behavior. Where news about hot-button issues like climate change or vaccination might trigger resistance or a backfire effect, fiction can be crafted to be absorbing and, as a result, persuasive.

But the narratives can't be stuffed with information. They must be simplified. This may feel like the opposite of what an educator should be doing, but it is possible to reduce the complexity of information, without oversimplification, through "exemplification," a framing device that tells the stories of individuals in specific circumstances that can speak to the greater issue without needing to explain it all. It's a technique you've seen used in biopics. The Discovery Channel true-crime miniseries Manhunt: Unabomber does many things well from a science storytelling perspective, including exemplifying the virtues of the scientific method through a character who argues for a new field of science, forensic linguistics, to catch one of the most notorious domestic terrorists in U.S. history.

We must also appeal to the audience's curiosity. We know curiosity is such a strong driver of human behavior that it can even counteract the biases put up by one's political ideology around subjects like climate change. If we treat science information like a product, and we should, advertising research tells us we can maximize curiosity through a Goldilocks effect. If the information is too complex, your show might as well be a PowerPoint presentation. If it's too simple, it's Sesame Street. There's a sweet spot for creating intrigue about new information when there's a moderate cognitive gap.

The science of "identification" tells us that the more the main character is endearing to a viewer, the more likely the viewer will adopt the character's worldview and journey of change. This insight further provides incentives to craft characters reflective of our audiences. If we accept our biases for what they are, we can understand why the messenger becomes more important than the message, because, without an appropriate messenger, the message becomes faint and ineffective. And research confirms that the stereotype-busting doctor-skeptic Dana Scully of The X-Files, a popular science-fiction series, was an inspiration for a generation of women who pursued science careers.

With these directions, we can start making a new kind of television. But is television itself still the right delivery medium? Americans do spend six hours per day—a quarter of their lives—watching video. And even with the rise of social media and apps, science-themed television shows remain popular, with four out of five adults reporting that they watch shows about science at least sometimes. CBS's The Big Bang Theory was the most-watched show on television in the 2017–2018 season, and Cartoon Network's Rick & Morty is the most popular comedy series among millennials. And medical and forensic dramas continue to be broadcast staples. So yes, it's as true today as it was in the 1980s when George Gerbner, the "cultivation theory" researcher who studied the long-term impacts of television images, wrote, "a single episode on primetime television can reach more people than all science and technology promotional efforts put together."

We know from cultivation theory that media images can shape our views of scientists. Quick, picture a scientist! Was it an old, white man with wild hair in a lab coat? If most Americans don't encounter research science firsthand, it's media that dictates how we perceive science and scientists. Characters like Sheldon Cooper and Rick Sanchez become the model. But we can correct that by representing professionals more accurately on-screen and writing characters more like Dana Scully.

Could new television series establish the baseline narratives for novel science like gene editing, quantum computing, or artificial intelligence? Or could new series counter the misinfodemics surrounding COVID-19 and vaccines through more compelling, corrective narratives? Social science has given us a blueprint suggesting they could. Binge-watching a show like the surreal NBC sitcom The Good Place doesn't replace a Ph.D. in philosophy, but its use of humor plants the seed of continued interest in a new subject. The goal of persuasive entertainment isn't to replace formal education, but it can inspire, shift attitudes, increase confidence in the knowledge of complex issues, and otherwise prime viewers for continued learning.

An astronaut peers through a portal in outer space.

What if people could just survive on sunlight like plants?

The admittedly outlandish question occurred to me after reading about how climate change will exacerbate drought, flooding, and worldwide food shortages. Many of these problems could be eliminated if human photosynthesis were possible. Had anyone ever tried it?

I emailed Sidney Pierce, professor emeritus in the Department of Integrative Biology at the University of South Florida, who studies a type of sea slug, Elysia chlorotica, that eats photosynthetic algae, incorporating the algae's key cell structure into itself. It's still a mystery how exactly a slug can operate the part of the cell that converts sunlight into energy, which requires proteins made by genes to function, but the upshot is that the slugs can (and do) live on sunlight in between feedings.

Pierce says he gets questions about human photosynthesis a couple of times a year, but it almost certainly wouldn't be worth it to try to develop the process in a human. "A high-metabolic rate, large animal like a human could probably not survive on photosynthesis," he wrote to me in an email. "The main reason is a lack of surface area. They would either have to grow leaves or pull a trailer covered with them."

In short: Plants have already exploited the best tricks for subsisting on photosynthesis, and unless we want to look and act like plants, we won't have much success ourselves. Not that it stopped Pierce from trying to develop human photosynthesis technology anyway: "I even tried to sell it to the Navy back in the day," he told me. "Imagine photosynthetic SEALS."

It turns out, however, that while no one is actively trying to create photosynthetic humans, scientists are considering the ways humans might need to change to adapt to future environments, either here on the rapidly changing Earth or on another planet. Rice University biologist Scott Solomon has written an entire book, Future Humans, in which he explores the environmental pressures that are likely to influence human evolution from this point forward. On Earth, Solomon says, infectious disease will remain a major driver of change. As for Mars, the big two are lower gravity and radiation, the latter of which bombards the Martian surface constantly because the planet has no magnetosphere.

Although he considers this example "pretty out there," Solomon says one possible solution to Mars' radiation assault could leave humans not photosynthetic green, but orange, thanks to pigments called carotenoids that are responsible for the bright hues of pumpkins and carrots.

"Carotenoids protect against radiation," he says. "Usually only plants and microbes can produce carotenoids, but there's at least one kind of insect, a particular type of aphid, that somehow acquired the gene for making carotenoids from a fungus. We don't exactly know how that happened, but now they're orange... I view that as an example of, hey, maybe humans on Mars will evolve new kinds of pigmentation that will protect us from the radiation there."

We could wait for a genetic variation that produces orange humans to occur naturally, or with new gene editing techniques such as CRISPR-Cas9, we could just directly give astronauts genetic advantages such as carotenoid-producing skin. This may not be as far off as it sounds: Extreme space travel exists at an ethically unique spot that makes human experimentation much more palatable. If an astronaut already plans to subject herself to the enormous experiment of traveling to, and maybe living out her days on, a dangerous and faraway planet, don't we have an obligation to provide all the protection we can?

Probably the most vocal person trying to figure out what genetic protections might help astronauts is Cornell geneticist Chris Mason. His lab has outlined a 10-phase, 500-year plan for human survival, starting with the comparatively modest goal of establishing which human genes are not amenable to change and should be marked with a "Do not disturb" sign.

To be clear, Mason is not actually modifying human beings. Instead, his lab has studied genes in radiation-resistant bacteria, such as those of the genus Deinococcus. They've expressed a protein called Dsup, from tardigrades (tiny water bears that can survive in space), in human cells. They've looked into p53, a gene that is overexpressed in elephants and seems to protect them from cancer. They also developed a protocol to work on the NASA Twins Study comparing astronauts Scott Kelly, who spent a year aboard the International Space Station, and his brother Mark, who did not, to find out what effects space tends to have on genes in the first place.

In a talk he gave in December, Mason reported that 8.7 percent of Scott Kelly's genes (mostly those associated with immune function, DNA repair, and bone formation) did not return to normal after the astronaut had been home for six months. "Some of these space genes, we could engineer them, activate them, have them be hyperactive when you go to space," he said in that same talk. "When we think about having the hubris to go to a faraway planet... it seems like an almost impossible idea... but I really like people and I want us to survive for a long time, and this is the first step on the stairwell to survive out of the solar system."

There are others performing studies to figure out what capabilities we might bestow on the future-proof superhuman, but none of them are quite as extreme as photosynthesis (although all of them are useful). At Harvard, geneticist George Church wants to engineer cells to be resistant to viruses, such as HIV and those that cause the common cold. At Columbia, synthetic biologist Harris Wang is addressing self-sufficient humans more directly, trying to spur kidney cells to produce amino acids that are normally only available from diet.

But perhaps Future Humans author Scott Solomon has the most radical idea. I asked him a version of the classic What would be your superhero power? question: What does he see as the most important ability we could give our future selves through science?

"The empathy gene," he said. "The ability to put yourself in someone else's shoes and see the world as they see it. I think it would solve a lot of our problems."

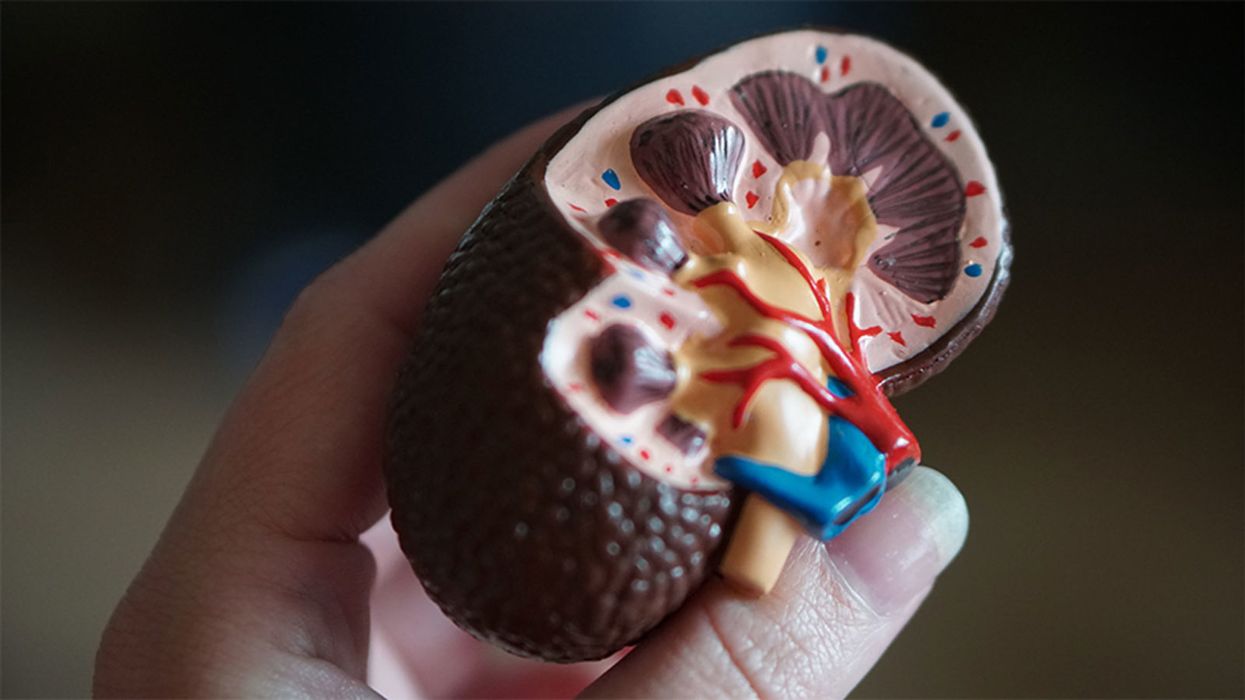

A model of a human kidney.

Science's dream of creating perfect custom organs on demand as soon as a patient needs one is still a long way off. But tiny versions are already serving as useful research tools and stepping stones toward full-fledged replacements.

The Lowdown

Australian researchers have grown hundreds of mini human kidneys in the past few years. Known as organoids, they function much like their full-grown counterparts, minus a few features due to a lack of blood supply.

Cultivated in a petri dish, these kidneys are still a shadow of their human counterparts. They grow no larger than one-sixth of an inch in diameter; fully developed organs are up to five inches in length. They contain no more than a few dozen nephrons, the kidney's individual blood-filtering units, whereas a fully grown kidney has about 1 million nephrons. And the dish variety lives for just a few weeks.

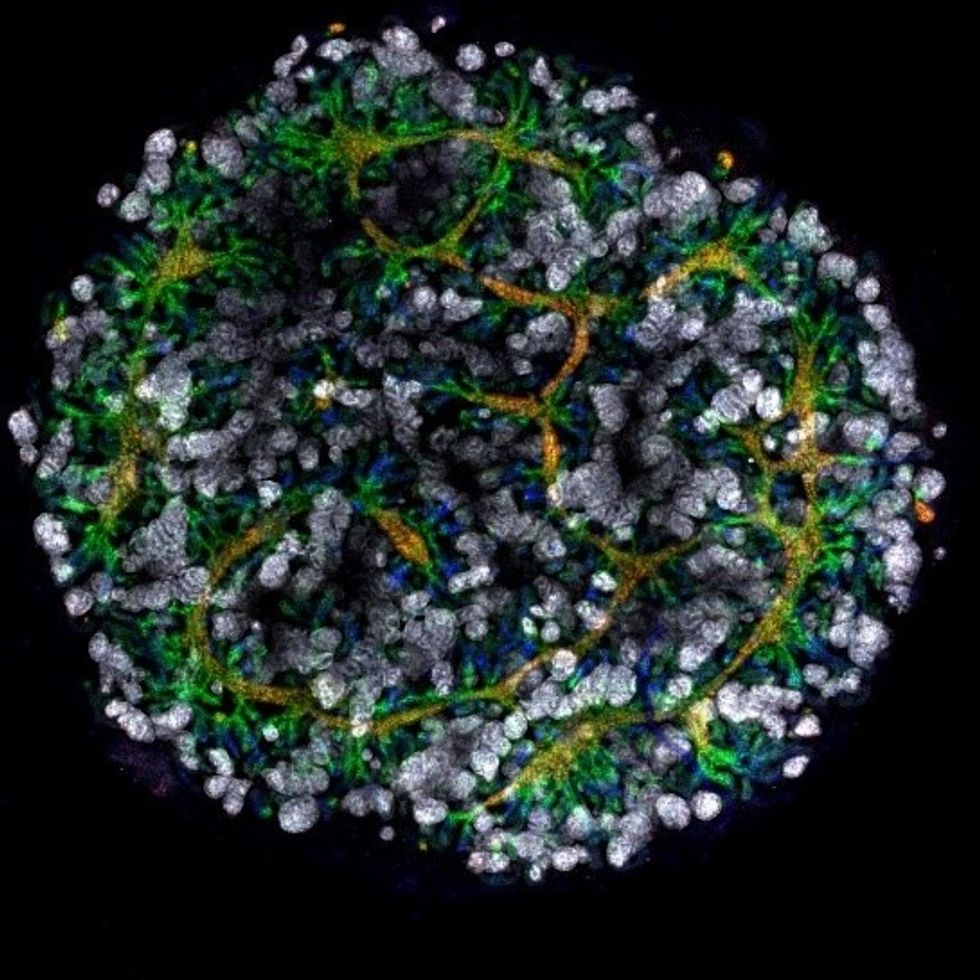

An organoid kidney created by the Murdoch Children's Research Institute in Melbourne, Australia.

Photo Credit: Shahnaz Khan.

But Melissa Little, head of the kidney research laboratory at the Murdoch Children's Research Institute in Melbourne, says these organoids are invaluable tools for research. Although renal failure is rare in children, more than half of those who suffer from such a disorder inherited it.

The mini kidneys enable scientists to better understand the progression of such disorders because they can be grown with a patient's specific genetic condition.

Mature stem cells can be extracted from a patient's blood sample and then reprogrammed to become like embryonic cells, able to turn into any type of cell in the body. It's akin to walking back the clock so that the cells regain unlimited potential for development. (The Japanese scientist who pioneered this technique was awarded the Nobel Prize in 2012.) These "induced pluripotent stem cells" can then be chemically coaxed to grow into mini kidneys that have the patient's genetic disorder.

"The (genetic) defects are quite clear in the organoids, and they can be monitored in the dish," Little says. To date, her research team has created organoids from 20 different stem cell lines.

Medication regimens can also be tested on the organoids, allowing specific tailoring for each patient. For now, such testing remains restricted to mice, but Little says it eventually will be done on human organoids so that the results can more accurately reflect how a given patient will respond to particular drugs.

Next Steps

Although these organoids cannot yet replace kidneys, Little says they may plug a huge gap in renal care by assisting in developing new treatments for chronic conditions. Currently, most patients with a serious kidney disorder see their options narrow to dialysis or organ transplantation. The former not only requires multiple sessions a week, but takes a huge toll on patient health.

Ten percent of older patients on dialysis die every year in the U.S. Aside from the physical trauma of organ transplantation, finding a suitable donor outside of a family member can be difficult.

Meanwhile, the ongoing creation of organoids is supplying Little and her colleagues with enough information to create larger and more functional organs in the future. According to Little, researchers in the Netherlands, for example, have found that implanting organoids in mice leads to vascular growth, a potential pathway toward creating bigger and better kidneys.

And while Little acknowledges that creating a fully formed custom organ is the ultimate goal, the mini organs are an important bridging step.

"This is just another great example of the potential of pluripotent stem cells, and I am just passionate to see it do some good."