What’s the Right Way to Regulate Gene-Edited Crops?


In the next few decades, humanity faces its biggest food crisis since the invention of the plow. The planet's population, currently 7.6 billion, is expected to reach 10 billion by 2050; to avoid mass famine, according to the World Resources Institute, we'll need to produce 70 percent more calories than we do today.


Meanwhile, climate change will bring intensifying assaults by heat, drought, storms, pests, and weeds, depressing farm yields around the globe. Epidemics of plant disease—already laying waste to wheat, citrus, bananas, coffee, and cacao in many regions—will spread ever further through the vectors of modern trade and transportation.

So here's a thought experiment: Imagine that a cheap, easy-to-use, and rapidly deployable technology could make crops more fertile and strengthen their resistance to these looming threats. Imagine that it could also render them more nutritious and tastier, with longer shelf lives and less vulnerability to damage in shipping—adding enhancements to human health and enjoyment, as well as reduced food waste, to the possible benefits.

Finally, imagine that crops bred with the aid of this tool might carry dangers. Some could contain unsuspected allergens or toxins. Others might disrupt ecosystems, affecting the behavior or very survival of other species, or infecting wild relatives with their altered DNA.

Now ask yourself: If such a technology existed, should policymakers encourage its adoption, or ban it due to the risks? And if you chose the former alternative, how should crops developed by this method be regulated?

In fact, this technology does exist, though its use remains mostly experimental. It's called gene editing, and in the past five years it has emerged as a potentially revolutionary force in many areas—among them, treating cancer and genetic disorders; growing transplantable human organs in pigs; controlling malaria-spreading mosquitoes; and, yes, transforming agriculture. Several versions are currently available, the newest and nimblest of which goes by the acronym CRISPR.

Gene editing is far simpler and more efficient than older methods used to produce genetically modified organisms (GMOs). Unlike those methods, moreover, it can be used in ways that leave no foreign genes in the target organism—an advantage that proponents argue should comfort anyone leery of consuming so-called "Frankenfoods." But debate persists over what precautions must be taken before these crops come to market.

Recently, two of the world's most powerful regulatory bodies offered very different answers to that question. The United States Department of Agriculture (USDA) declared in March 2018 that it "does not currently regulate, or have any plans to regulate" plants that are developed through most existing methods of gene editing. The Court of Justice of the European Union (ECJ), by contrast, ruled in July that such crops should be governed by the same stringent regulations as conventional GMOs.


Each announcement drew protests, for opposite reasons. Anti-GMO activists assailed the USDA's statement, arguing that all gene-edited crops should be tested and approved before marketing. "You don't know what those mutations or rearrangements might do in a plant," warned Michael Hansen, a senior scientist with the advocacy group Consumers Union. Biotech boosters griped that the ECJ's decision would stifle innovation and investment. "By any sensible standard, this judgment is illogical and absurd," wrote the British newspaper The Observer.

Yet some experts suggest that the broadly permissive American approach and the broadly restrictive EU policy are equally flawed. "What's behind these regulatory decisions is not science," says Jennifer Kuzma, co-director of the Genetic Engineering and Society Center at North Carolina State University, a former advisor to the World Economic Forum, who has researched and written extensively on governance issues in biotechnology. "It's politics, economics, and culture."

The U.S. Welcomes Gene-Edited Food

Humans have been modifying the genomes of plants and animals for 10,000 years, using selective breeding—a hit-or-miss method that can take decades or more to deliver rewards. In the mid-20th century, we learned to speed up the process by exposing organisms to radiation or mutagenic chemicals. But it wasn't until the 1980s that scientists began modifying plants by altering specific stretches of their DNA.

Today, about 90 percent of the corn, cotton and soybeans planted in the U.S. are GMOs; such crops cover nearly 4 million square miles (10 million square kilometers) of land in 29 countries. Most of these plants are transgenic, meaning they contain genes from an unrelated species—often as biologically alien as a virus or a fish. Their modifications are designed primarily to boost profit margins for mechanized agribusiness: allowing crops to withstand herbicides so that weeds can be controlled by mass spraying, for example, or to produce their own pesticides to lessen the need for chemical inputs.

In the early days, the majority of GM crops were created by extracting the gene for a desired trait from a donor organism, multiplying it, and attaching it to other snippets of DNA—usually from a microbe called an agrobacterium—that could help it infiltrate the cells of the target plant. Biotechnologists injected these particles into the target, hoping at least one would land in a place where it would perform its intended function; if not, they kept trying. The process was quicker than conventional breeding, but still complex, scattershot, and costly.

Because agrobacteria can cause plant tumors, Kuzma explains, policymakers in the U.S. decided to regulate GMO crops under an existing law, the Plant Pest Act of 1957, which addressed dangers like imported trees infested with invasive bugs. Every GMO containing the DNA of agrobacterium or another plant pest had to be tested to see whether it behaved like a pest, and undergo a lengthy approval process. By 2010, however, new methods had been developed for creating GMOs without agrobacteria; such plants could typically be marketed without pre-approval.

Soon after that, the first gene-edited crops began appearing. If old-school genetic engineering was a shotgun, techniques like TALEN and CRISPR were a scalpel—or the search-and-replace function on a computer program. With CRISPR/Cas9, for example, an enzyme that bacteria use to recognize and chop up hostile viruses is reprogrammed to find and snip out a desired bit of a plant or other organism's DNA. The enzyme can also be used to insert a substitute gene. If a DNA sequence is simply removed, or the new gene comes from a similar species, the changes in the target plant's genotype and phenotype (its general characteristics) may be no different from those that could be produced through selective breeding. If a foreign gene is added, the plant becomes a transgenic GMO.


This development, along with the emergence of non-agrobacterium GMOs, eventually prompted the USDA to propose a tiered regulatory system for all genetically engineered crops, beginning with an initial screening for potentially hazardous metabolites or ecological impacts. (The screening was intended, in part, to guard against the "off-target effects"—stray mutations—that occasionally appear in gene-edited organisms.) If no red flags appeared, the crop would be approved; otherwise, it would be subject to further review, and possible regulation.

The plan was unveiled in January 2017, during the last week of the Obama presidency. Then, under the Trump administration, it was shelved. Although the USDA continues to promise a new set of regulations, the only hint of what they might contain has been Secretary of Agriculture Sonny Perdue's statement last March that gene-edited plants would remain unregulated if they "could otherwise have been developed through traditional breeding techniques, as long as they are not plant pests or developed using plant pests."

Because transgenic plants could not be "developed through traditional breeding techniques," this statement could be taken to mean that gene editing in which foreign DNA is introduced might actually be regulated. But because the USDA regulates conventional transgenic GMOs only if they trigger the plant-pest stipulation, experts assume gene-edited crops will face similarly limited oversight.

Meanwhile, companies are already teeing up gene-edited products for the U.S. market. An herbicide-resistant oilseed rape, developed using a proprietary technique, has been available since 2016. A cooking oil made from TALEN-tweaked soybeans, designed to have a healthier fatty-acid profile, is slated for release within the next few months. A CRISPR-edited "waxy" corn, designed with a starch profile ideal for processed foods, should be ready by 2021.

In all likelihood, none of these products will have to be tested for safety.

In the E.U., Stricter Rules Apply

Now let's look at the European Union. Since the late 1990s, explains Gregory Jaffe, director of the Project on Biotechnology at the Center for Science in the Public Interest, the EU has had a "process-based trigger" for genetically engineered products: "If you use recombinant DNA, you are going to be regulated." All foods and animal feeds must be approved and labeled if they consist of or contain more than 0.9 percent GM ingredients. (In the U.S., "disclosure" of GM ingredients is mandatory, if someone asks, but labeling is not required.) The only GM crop that can be commercially grown in EU member nations is a type of insect-resistant corn, though some countries allow imports.

European scientists helped develop gene editing, and they—along with the continent's biotech entrepreneurs—have been busy developing applications for crops. But European farmers seem more divided over the technology than their American counterparts. The main French agricultural trades union, for example, supports research into non-transgenic gene editing and its exemption from GMO regulation. But it was the country's small-farmers' union, the Confédération Paysanne, along with several allied groups, that in 2015 submitted a complaint to the ECJ, asking that all plants produced via mutagenesis—including gene-editing—be regulated as GMOs.

At this point, it should be mentioned that in the past 30 years, large population studies have found no sign that consuming GM foods is harmful to human health. GMO critics can, however, point to evidence that herbicide-resistant crops have encouraged overuse of herbicides, giving rise to poison-proof "superweeds," polluting the environment with suspected carcinogens, and inadvertently killing beneficial plants. Those allegations were key to the French plaintiffs' argument that gene-edited crops might similarly do unexpected harm. (Disclosure: Leapsmag's parent company, Bayer, recently acquired Monsanto, a maker of herbicides and herbicide-resistant seeds. Also, Leaps by Bayer, an innovation initiative of Bayer and Leapsmag's direct founder, has funded a biotech startup called JoynBio that aims to reduce the amount of nitrogen fertilizer required to grow crops.)


In the end, the EU court found in the Confédération's favor on gene editing—though the court maintained the regulatory exemption for mutagenesis induced by chemicals or radiation, citing the "long safety record" of those methods.

The ruling was "scientifically nonsensical," fumes Rodolphe Barrangou, a French food scientist who pioneered CRISPR while working for DuPont in Wisconsin and is now a professor at NC State. "It's because of things like this that I'll never go back to Europe."

Nonetheless, the decision was consistent with longstanding EU policy on crops made with recombinant DNA. Given the difficulty and expense of getting such products through the continent's regulatory system, many other European researchers may wind up following Barrangou to America.

Getting to the Root of the Cultural Divide

What explains the divergence between the American and European approaches to GMOs—and, by extension, gene-edited crops? In part, Jennifer Kuzma speculates, it's that Europeans have a different attitude toward eating. "They're generally more tied to where their food comes from, where it's produced," she notes. They may also share a mistrust of government assurances on food safety, born of the region's Mad Cow scandals of the 1980s and '90s. In Catholic countries, consumers may have misgivings about tinkering with the machinery of life.

But the principal factor, Kuzma argues, is that European and American agriculture are structured differently. "GM's benefits have mostly been designed for large-scale industrial farming and commodity crops," she says. That kind of farming is dominant in the U.S., but not in Europe, leading to a different balance of political power. In the EU, there was less pressure on decisionmakers to approve GMOs or exempt gene-edited crops from regulation—and more pressure to adopt a GM-resistant stance.

Such dynamics may be operating in other regions as well. In China, for example, the government has long encouraged research in GMOs; a state-owned company recently acquired Syngenta, a Swiss-based multinational corporation that is a leading developer of GM and gene-edited crops. GM animal feed and cooking oil can be freely imported. Yet commercial cultivation of most GM plants remains forbidden, out of deference to popular suspicions of genetically altered food. "As a new item, society has debates and doubts on GMO techniques, which is normal," President Xi Jinping remarked in 2014. "We must be bold in studying it, [but] be cautious promoting it."

The proper balance between boldness and caution is still being worked out all over the world. Europe's process-based approach may prevent researchers from developing crops that, with a single DNA snip, could rescue millions from starvation. EU regulations will also make it harder for small entrepreneurs to challenge Big Ag with a technology that, as Barrangou puts it, "can be used affordably, quickly, scalably, by anyone, without even a graduate degree in genetics." America's product-based approach, conversely, may let crops with hidden genetic dangers escape detection. And by refusing to investigate such risks, regulators may wind up exacerbating consumers' doubts about GM and gene-edited products, rather than allaying them.

Perhaps the solution lies in combining both approaches, and adding some flexibility and nuance to the mix. "I don't believe in regulation by the product or the process," says CSPI's Jaffe. "I think you need both." Deleting a DNA base pair to silence a gene, for example, might be less risky than inserting a foreign gene into a plant—unless the deletion enables the production of an allergen, and the transgene comes from spinach.

Kuzma calls for the creation of "cooperative governance networks" to oversee crop genome editing, similar to bodies that already help develop and enforce industry standards in fisheries, electronics, industrial cleaning products, and (not incidentally) organic agriculture. Such a network could include farmers, scientists, advocacy groups, private companies, and governmental agencies. "Safety isn't an all-or-nothing concept," Kuzma says. "Science can tell you what some of the issues are in terms of risk and benefit, but it can't tell you what to regulate. That's a values-based decision."

By drawing together a wide range of stakeholders to make such decisions, she adds, "we're more likely to anticipate future consequences, and to develop a robust approach—one that not only seems more legitimate to people, but is actually just plain old better."

New elevators could lift up our access to space

A space elevator would be cheaper and cleaner than using rockets

Story by Big Think

When people first started exploring space in the 1960s, it cost upwards of $80,000 (adjusted for inflation) to put a single pound of payload into low-Earth orbit.

A major reason for this high cost was the need to build a new, expensive rocket for every launch. That really started to change when SpaceX began making cheap, reusable rockets, and today, the company is ferrying customer payloads to LEO at a price of just $1,300 per pound.

This is making space accessible to scientists, startups, and tourists who never could have afforded it previously, but the cheapest way to reach orbit might not be a rocket at all — it could be an elevator.

The space elevator

The seeds for a space elevator were first planted in 1895 by Russian scientist Konstantin Tsiolkovsky, who, after visiting the 1,000-foot (305 m) Eiffel Tower, published a paper theorizing about the construction of a structure 22,000 miles (35,400 km) high.

This would provide access to geostationary orbit, an altitude where objects appear to remain fixed above Earth’s surface, but Tsiolkovsky conceded that no material could support the weight of such a tower.
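The height Tsiolkovsky had in mind follows from orbital mechanics: a geostationary satellite must circle Earth exactly once per rotation of the planet. The sketch below is illustrative only and is not drawn from the article; the constants are standard published values, and the function name is a hypothetical chosen for this example.

```python
import math

# Standard physical constants (assumptions for this sketch, not from the article).
GM_EARTH = 3.986004418e14      # Earth's gravitational parameter, m^3/s^2
SIDEREAL_DAY_S = 86_164.0905   # one rotation of Earth relative to the stars, s
EARTH_EQ_RADIUS_M = 6_378_137  # equatorial radius, m
METERS_PER_MILE = 1_609.344


def geostationary_altitude_m() -> float:
    """Altitude above the equator where the orbital period equals one sidereal day."""
    # Kepler's third law for a circular orbit: T^2 = 4 * pi^2 * r^3 / GM
    orbital_radius = (GM_EARTH * SIDEREAL_DAY_S**2 / (4 * math.pi**2)) ** (1 / 3)
    return orbital_radius - EARTH_EQ_RADIUS_M


altitude_m = geostationary_altitude_m()
print(f"{altitude_m / 1000:,.0f} km, {altitude_m / METERS_PER_MILE:,.0f} miles")
```

The result lands close to 35,800 km, or a little over 22,000 miles, which is consistent with the rounded figure quoted above.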

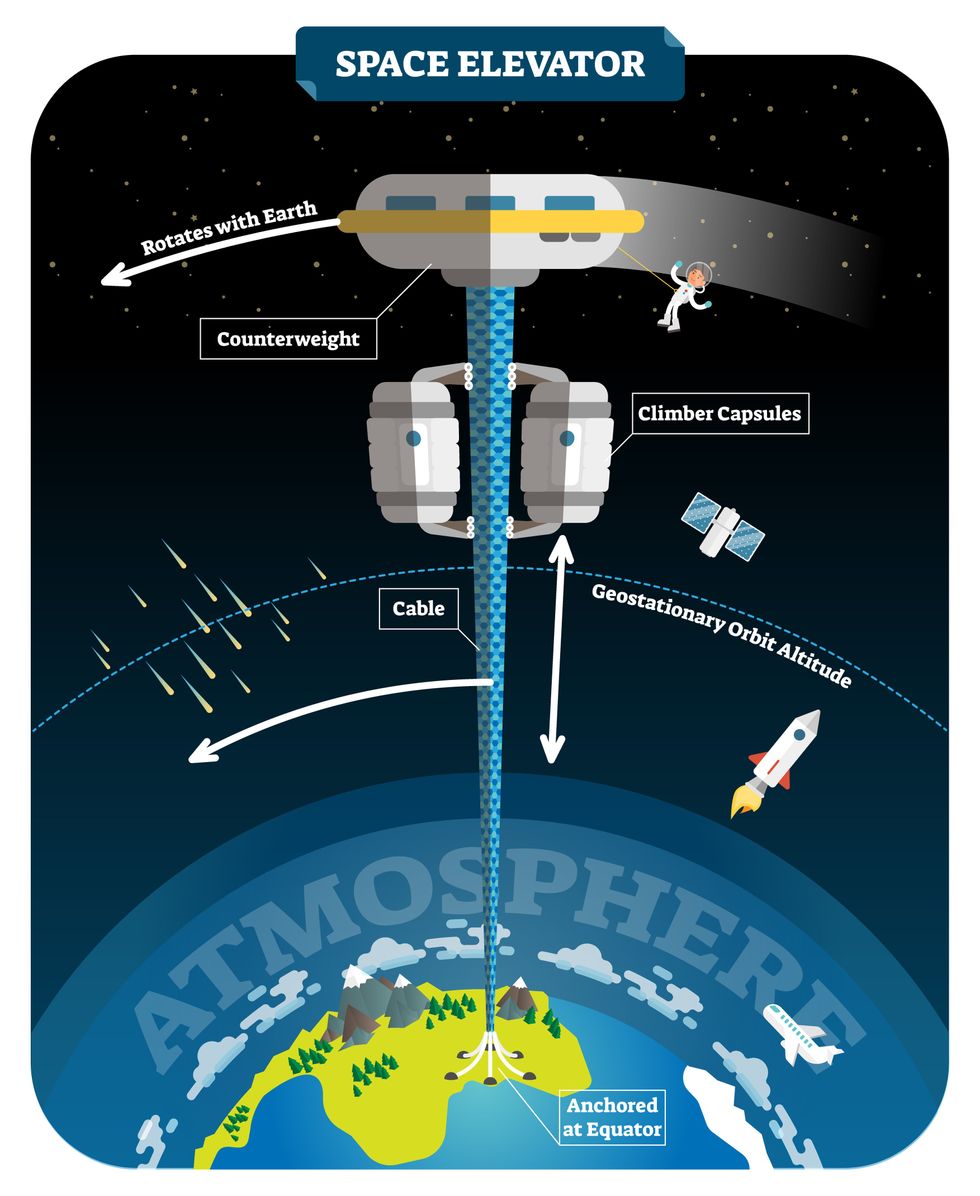


In 1959, soon after Sputnik, Russian engineer Yuri N. Artsutanov proposed a way around this issue: instead of building a space elevator from the ground up, start at the top. More specifically, he suggested placing a satellite in geostationary orbit and dropping a tether from it down to Earth’s equator. As the tether descended, the satellite would ascend. Once attached to Earth’s surface, the tether would be kept taut, thanks to a combination of gravitational and centrifugal forces.
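One way to see why such a tether would stay taut is to compare gravity with the centrifugal effect at different distances from Earth's center, in a frame that rotates with the planet. The following is a rough sketch under simplifying assumptions (a point-mass Earth, a massless test point on the equatorial plane); the function name is hypothetical and the constants are standard values rather than figures from the article.

```python
import math

GM_EARTH = 3.986004418e14             # Earth's gravitational parameter, m^3/s^2
SIDEREAL_DAY_S = 86_164.0905          # Earth's rotation period, s
OMEGA = 2 * math.pi / SIDEREAL_DAY_S  # Earth's rotation rate, rad/s


def net_outward_accel(radius_m: float) -> float:
    """Centrifugal minus gravitational acceleration in the co-rotating frame, m/s^2."""
    return OMEGA**2 * radius_m - GM_EARTH / radius_m**2


# Distances are measured from Earth's center; 42,164 km is the geostationary radius.
for radius_km in (6_378, 20_000, 42_164, 60_000, 100_000):
    accel = net_outward_accel(radius_km * 1000)
    print(f"{radius_km:>7,} km: {accel:+.3f} m/s^2")
```

Below the geostationary radius the net value is negative (the tether is pulled toward Earth), and above it the value is positive (the tether is pulled outward). Those opposing pulls on the lower and upper sections are what would hold the line in tension.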

We could then send electrically powered “climber” vehicles up and down the tether to deliver payloads to any Earth orbit. According to physicist Bradley Edwards, who researched the concept for NASA about 20 years ago, it’d cost $10 billion and take 15 years to build a space elevator, but once operational, the cost of sending a payload to any Earth orbit could be as low as $100 per pound.

“Once you reduce the cost to almost a Fed-Ex kind of level, it opens the doors to lots of people, lots of countries, and lots of companies to get involved in space,” Edwards told Space.com in 2005.

In addition to the economic advantages, a space elevator would also be cleaner than using rockets — there’d be no burning of fuel, no harmful greenhouse emissions — and the new transport system wouldn’t contribute to the problem of space junk to the same degree that expendable rockets do.

So, why don’t we have one yet?

Tether troubles

Edwards wrote in his report for NASA that all of the technology needed to build a space elevator already existed except the material needed to build the tether, which needs to be light but also strong enough to withstand all the huge forces acting upon it.

The good news, according to the report, was that the perfect material — ultra-strong, ultra-tiny “nanotubes” of carbon — would be available in just two years.

“[S]teel is not strong enough, neither is Kevlar, carbon fiber, spider silk, or any other material other than carbon nanotubes,” wrote Edwards. “Fortunately for us, carbon nanotube research is extremely hot right now, and it is progressing quickly to commercial production.”

Unfortunately, he misjudged how hard it would be to synthesize carbon nanotubes — to date, no one has been able to grow one longer than 21 inches (53 cm).

Further research into the material revealed that it tends to fray under extreme stress, too, meaning even if we could manufacture carbon nanotubes at the lengths needed, they’d be at risk of snapping, not only destroying the space elevator, but threatening lives on Earth.

Looking ahead

Carbon nanotubes might have been the early frontrunner as the tether material for space elevators, but there are other options, including graphene, an essentially two-dimensional form of carbon that is already easier to scale up than nanotubes (though still not easy).

Contrary to Edwards’ report, Johns Hopkins University researchers Sean Sun and Dan Popescu say Kevlar fibers could work — we would just need to constantly repair the tether, the same way the human body constantly repairs its tendons.

“Using sensors and artificially intelligent software, it would be possible to model the whole tether mathematically so as to predict when, where, and how the fibers would break,” the researchers wrote in Aeon in 2018.

“When they did, speedy robotic climbers patrolling up and down the tether would replace them, adjusting the rate of maintenance and repair as needed — mimicking the sensitivity of biological processes,” they continued.

Astronomers from the University of Cambridge and Columbia University also think Kevlar could work for a space elevator — if we built it from the moon, rather than Earth.

They call their concept the Spaceline, and the idea is that a tether attached to the moon’s surface could extend toward Earth’s geostationary orbit, held taut by the pull of our planet’s gravity. We could then use rockets to deliver payloads — and potentially people — to solar-powered climber robots positioned at the end of this tether, which would be more than 200,000 miles long. The bots could then travel up the line to the moon’s surface.

This wouldn’t eliminate the need for rockets to get into Earth’s orbit, but it would be a cheaper way to get to the moon. The forces acting on a lunar space elevator wouldn’t be as strong as those acting on one extending from Earth’s surface, either, according to the researchers, opening up more options for tether materials.

“[T]he necessary strength of the material is much lower than an Earth-based elevator — and thus it could be built from fibers that are already mass-produced … and relatively affordable,” they wrote in a paper shared on the preprint server arXiv.


Some Chinese researchers, meanwhile, aren’t giving up on the idea of using carbon nanotubes for a space elevator — in 2018, a team from Tsinghua University revealed that they’d developed nanotubes that they say are strong enough for a tether.

The researchers are still working on the issue of scaling up production, but in 2021, state-owned news outlet Xinhua released a video depicting an in-development concept, called “Sky Ladder,” that would consist of space elevators above Earth and the moon.

After riding up the Earth-based space elevator, a capsule would fly to a space station attached to the tether of the moon-based one. If the project could be pulled off — a huge if — China predicts Sky Ladder could cut the cost of sending people and goods to the moon by 96 percent.

The bottom line

In the 120 years since Tsiolkovsky looked at the Eiffel Tower and thought way bigger, tremendous progress has been made developing materials with the properties needed for a space elevator. At this point, it seems likely we could one day have a material that can be manufactured at the scale needed for a tether — but by the time that happens, the need for a space elevator may have evaporated.

Several aerospace companies are making progress with their own reusable rockets, and as those join the market with SpaceX, competition could cause launch prices to fall further.

California startup SpinLaunch, meanwhile, is developing a massive centrifuge to fling payloads into space, where much smaller rockets can propel them into orbit. If the company succeeds (another one of those big ifs), it says the system would slash the amount of fuel needed to reach orbit by 70 percent.

Even if SpinLaunch doesn’t get off the ground, several groups are developing environmentally friendly rocket fuels that produce far fewer (or no) harmful emissions. More work is needed to efficiently scale up their production, but overcoming that hurdle will likely be far easier than building a 22,000-mile (35,400-km) elevator to space.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

New tech aims to make the ocean healthier for marine life

An overabundance of dissolved carbon dioxide poses a threat to marine life. A new system detects elevated levels of the greenhouse gas and mitigates them on the spot.

A defunct drydock basin arched by a rusting 19th-century steel bridge seems an incongruous place to conduct state-of-the-art climate science. But this placid and protected sliver of water connecting Brooklyn’s Navy Yard to the East River was just right for Garrett Boudinot to float a small dock topped with water carbon-sensing gear. And while his system right now looks like a trio of plastic boxes wired up together, it aims to mitigate the growing ocean acidification problem, caused by an overabundance of dissolved carbon dioxide.

Boudinot, a biogeochemist and founder of a carbon-management startup called Vycarb, is honing his method for measuring CO2 levels in water, as well as (at least temporarily) correcting their negative effects. It’s a challenge that’s been occupying numerous climate scientists as the ocean heats up, and as states like New York recognize that reducing emissions won’t be enough to reach their climate goals; they’ll have to figure out how to remove carbon, too.

To date, though, methods for measuring CO2 in water at scale have been either intensely expensive, requiring fancy sensors that pump CO2 through membranes; or prohibitively complicated, involving a series of lab-based analyses. And that’s led to a bottleneck in efforts to remove carbon as well.

But recently, Boudinot cracked part of the code for measurement and mitigation, at least on a small scale. While the rest of the industry sorts out larger intricacies like getting ocean carbon markets up and running and driving carbon removal at billion-ton scale in centralized infrastructure, his decentralized method could have important, more immediate implications.

Specifically, for shellfish hatcheries, which grow seafood for human consumption and for coastal restoration projects. Some of these incubators for oysters and clams and scallops are already feeling the negative effects of excess carbon in water, and Vycarb’s tech could improve outcomes for the larval- and juvenile-stage mollusks they’re raising. “We’re learning from these folks about what their needs are, so that we’re developing our system as a solution that’s relevant,” Boudinot says.


Ocean waters naturally absorb CO2 gas from the atmosphere. When CO2 accumulates faster than nature can dissipate it, it reacts with H2O molecules, forming carbonic acid, H2CO3, which makes the water column more acidic. On the West Coast, acidification occurs when deep, carbon dioxide-rich waters upwell onto the coast. This can wreak havoc on developing shellfish, inhibiting their shells from growing and leading to mass die-offs; this happened, disastrously, at Pacific Northwest oyster hatcheries in 2007.

This type of acidification will eventually come for the East Coast, too, says Ryan Wallace, assistant professor and graduate director of environmental studies and sciences at Long Island’s Adelphi University, who studies acidification. But at the moment, East Coast acidification has other sources: agricultural runoff, usually in the form of nitrogen, and human and animal waste entering coastal areas. These excess nutrient loads cause algae to grow, which isn’t a problem in and of itself, Wallace says; but when algae die, they’re consumed by bacteria, whose respiration in turn bumps up CO2 levels in water.

“Unfortunately, this is occurring at the bottom [of the water column], where shellfish organisms live and grow,” Wallace says. Acidification on the East Coast is minutely localized, occurring closest to where nutrients are being released, as well as seasonally; at least one local shellfish farm, on Fishers Island in the Long Island Sound, has contended with its effects.


Besides CO2, ocean water contains two other forms of dissolved carbon — carbonate (CO3²⁻) and bicarbonate (HCO3⁻) — at all times, at differing levels. At low pH (acidic), CO2 prevails; at medium pH, HCO3⁻ is the dominant form; at higher pH, CO3²⁻ dominates. Boudinot’s invention is the first real-time measurement for all three, he says. From the dock at the Navy Yard, his pilot system uses carefully calibrated but low-cost sensors to gauge the water’s pH and its corresponding levels of CO2. When it detects elevated levels of the greenhouse gas, the system mitigates it on the spot. It does this by adding a bicarbonate powder that’s a byproduct of agricultural limestone mining in nearby Pennsylvania. Because the bicarbonate powder is alkaline, it increases the water pH and reduces the acidity. “We drive a chemical reaction to increase the pH to convert greenhouse gas- and acid-causing CO2 into bicarbonate, which is HCO3,” Boudinot says. “And HCO3 is what shellfish and fish and lots of marine life prefers over CO2.”
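The link between pH and the balance of the three carbon forms can be illustrated with a standard equilibrium calculation. This is a minimal sketch, not a description of how Vycarb's sensors or software work: the two dissociation constants are approximate textbook-style values assumed here for seawater near 25 °C, and the function name is hypothetical.

```python
# Approximate dissociation constants for seawater near 25 °C and salinity 35.
# These values are assumptions for illustration, not figures from the article.
PK1 = 5.86  # dissolved CO2 + H2O <-> H+ + bicarbonate
PK2 = 8.92  # bicarbonate <-> H+ + carbonate


def carbon_fractions(ph: float) -> dict[str, float]:
    """Return the share of dissolved inorganic carbon held in each form at a given pH."""
    h = 10.0 ** -ph
    k1 = 10.0 ** -PK1
    k2 = 10.0 ** -PK2
    denom = h * h + k1 * h + k1 * k2
    return {
        "CO2": h * h / denom,
        "bicarbonate": k1 * h / denom,
        "carbonate": k1 * k2 / denom,
    }


for ph in (5.0, 7.0, 8.1, 10.0):
    shares = carbon_fractions(ph)
    dominant = max(shares, key=shares.get)
    print(ph, {name: round(value, 3) for name, value in shares.items()}, "dominant:", dominant)
```

With these assumed constants, dissolved CO2 dominates at pH 5, bicarbonate at pH 7 and around typical seawater pH, and carbonate at pH 10, which matches the low, medium, and high ranges described above. It also shows why raising the pH of acidified water shifts carbon out of the CO2 form.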

This de-acidifying “buffering” is something shellfish operations already do to water, usually by adding soda ash (Na2CO3), which is also alkaline. Some hatcheries add soda ash constantly, just in case; some wait till acidification causes significant problems. Generally, for an overly busy shellfish farmer to detect acidification takes time and effort. “We’re out there daily, taking a look at the pH and figuring out how much we need to dose it,” explains John “Barley” Dunne, director of the East Hampton Shellfish Hatchery on Long Island. “If this is an automatic system…that would be much less labor intensive — one less thing to monitor when we have so many other things we need to monitor.”

Across the Sound at the hatchery he runs, Dunne annually produces 30 million hard clams, 6 million oysters, and “if we’re lucky, some years we get a million bay scallops,” he says. These mollusks are destined for restoration projects around the town of East Hampton, where they’ll create habitat, filter water, and protect the coastline from sea level rise and storm surge. So far, Dunne’s hatchery has largely escaped the ill effects of acidification, although his bay scallops are having a finicky year and he’s checking to see if acidification might be part of the problem. But “I think it's important to have these solutions ready-at-hand for when the time comes,” he says. That’s why he’s hosting a second, 70-liter Vycarb pilot starting this summer on a dock adjacent to his East Hampton operation; it will amp up to a 50,000-liter system in a few months.


Boudinot hopes this new pilot will act as a proof of concept for hatcheries up and down the East Coast. The area from Maine to Nova Scotia is experiencing the worst of Atlantic acidification, due in part to increased Arctic meltwater combining with Gulf of St. Lawrence freshwater; that decreases saturation of calcium carbonate, making the water more acidic. Boudinot says his system should work to adjust low pH regardless of the cause or locale. The East Hampton system will eventually test and buffer-as-necessary the water that Dunne pumps from the Sound into 100-gallon land-based tanks where larvae grow for two weeks before being transferred to an in-Sound nursery to plump up.

Dunne says this could have positive effects — not only on his hatchery but on wild shellfish populations, too, reducing at least one stressor their larvae experience (others include increasing water temperatures and decreased oxygen levels). “If it can buffer water over a large area, absolutely this will [benefit] natural spawns,” he says.

No one believes the Vycarb model — even if it proves capable of functioning at much greater scale — is the sole solution to acidification in the ocean. Wallace says new water treatment plants in New York City, which reduce nitrogen released into coastal waters, are an important part of the equation. And “certainly, some green infrastructure would help,” says Boudinot, like restoring coastal and tidal wetlands to help filter nutrient runoff.

In the meantime, Boudinot continues to collect data in advance of amping up his own operations. Still unknown is the effect of releasing huge amounts of alkalinity into the ocean. Boudinot says a pH of 9 or higher can be too harsh for marine life, plus it can also trigger a release of CO2 from the water back into the atmosphere. For a third pilot, on Governors Island in New York Harbor, Vycarb will install yet another system from which Boudinot’s team will frequently sample to analyze some of those and other impacts. “Let's really make sure that we know what the results are,” he says. “Let's have data to show, because in this carbon world, things behave very differently out in the real world versus on paper.”