With Lab-Grown Chicken Nuggets, Dumplings, and Burgers, Futuristic Foods Aim to Seem Familiar

Shiok Meats' lab-grown "shrimp" dumplings, alongside a photo of the company's CTO, Ka Yi Ling, in a lab.

Sandhya Sriram is at the forefront of the expanding lab-grown meat industry in more ways than one.

She's the CEO and co-founder of one of fewer than 30 companies that are even in this game in the first place. Her Singapore-based company, Shiok Meats, is the only one to pop up in Southeast Asia. And it's the only company in the world that's attempting to grow crustaceans in a lab, starting with shrimp. This spring, the company debuted a prototype of its shrimp and completed a seed funding round of $4.6 million.

Yet despite all of these wins, Sriram's own mother won't try the company's shrimp. She's a staunch, lifelong vegetarian, adhering to a strict definition of what that means.

"[Lab-grown meat] is kind of a brave new world for a lot of people, and food isn't something people like being brave about. It's really a rather hard-wired thing," says Kate Krueger, the research director at New Harvest, a non-profit accelerator for cellular agriculture (the umbrella field that studies how to grow animal products in the lab, including meat, dairy, and eggs).

It's so hard-wired, in fact, that trends in food inform our species' origin story. In 2017, a group of paleoanthropologists caused an upset when they unearthed fossils in present-day Morocco showing that our earliest human ancestors lived much further north and 100,000 years earlier than expected -- the remains date back 300,000 years. But the excavation not only included bones and tools, it also painted a clear picture of the prevailing menu at the time: The oldest humans were apparently chomping on tons of gazelle, as well as wildebeest and zebra when they could find them, plus the occasional seasonal ostrich egg.

These were people with a diet shaped by available resources, but also by the ability to cook in the first place. In his book Catching Fire: How Cooking Made Us Human, Harvard primatologist Richard Wrangham writes that the very thing that allowed for the evolution of Homo sapiens was the ability to transform raw ingredients into edible nutrients through cooking.

Today, our behavior and feelings around food are the product of local climate, crops, animal populations, and tools, but also religion, tradition, and superstition. So what happens when you add science to the mix? Turns out, we still trend toward the familiar. The innovations in lab-grown meat that are picking up the most steam are foods like burgers, not meat chips, and salmon, not salmon-cod-tilapia hybrids. It's not for lack of imagination, it's because the industry's practitioners know that a lifetime of food memories is a hard thing to contend with. So far, the nascent lab-grown meat industry is not so much disrupting as being shaped by the oldest culture we have.

Not a single piece of lab-grown meat is commercially available to consumers yet, and already so much ink has been spilled debating if it's really meat, if it's kosher, if it's vegetarian, if it's ethical, if it's sustainable. But whether or not the industry succeeds and sticks around is almost moot -- watching these conversations and innovations unfold serves as a mirror reflecting back who we are, what concerns us, and what we aspire to.

The More Things Change, the More They Stay the Same

The building blocks for making lab-grown meat right now are remarkably similar, no matter what type of animal protein a company is aiming to produce.

First, a small biopsy, about the size of a sesame seed, is taken from a single animal. Then, the muscle cells are isolated and added to a nutrient-dense culture in a bioreactor -- the same tool used to make beer -- where the cells can multiply, grow, and form muscle tissue. This tissue can then be mixed with additives like nutrients, seasonings, binders, and sometimes colors to form a food product. Whether a company is attempting to make chicken, fish, beef, shrimp, or any other animal protein in a lab, the basic steps remain similar. Cells from various animals do behave differently, though, and each company has its own proprietary techniques and tools. Some, for example, use fetal calf serum as their cell culture, while others, aiming for a more vegan approach, eschew it.

According to Mark Post, who made the first lab-grown hamburger at Maastricht University in the Netherlands in 2013, the cells of just one cow can give way to 175 million four-ounce burgers. By today's available burger-making methods, you'd need to slaughter 440,000 cows for the same result. The projected difference in the purely material efficiency between the two systems is staggering. The environmental impact is hard to predict, though. Some companies claim that their lab-grown meat requires 99 percent less land and 96 percent less water than traditional farming methods -- and that rearing fewer cows, specifically, would reduce methane emissions -- but the energy cost of running a lab-grown-meat production facility at an industrial scale, especially as compared to small-scale, pasture-raised farming, could be problematic. It's difficult to truly measure any of this in a burgeoning industry.

At this point, growing something like an intact shrimp tail or a marbled steak in a lab is still a Holy Grail. It would require reproducing the complex musculo-skeletal and vascular structure of meat, not just the cellular basis, and no one's successfully done it yet. Until then, many companies working on lab-grown meat are perfecting mince. Each new company's demo of a prototype food feels distinctly regional, though: At the Disruption in Food and Sustainability Summit in March, Shiok (which is pronounced "shook," and is Singaporean slang for "very tasty and delicious") first shared a prototype of its shrimp as an ingredient in siu-mai, a dumpling of Chinese origin and a fixture at dim sum. JUST, a company based in the U.S., produced a demo chicken nugget.

As Jean Anthelme Brillat-Savarin, the French founder of the gastronomic essay, whose The Physiology of Taste appeared in 1825, famously said, "Show me what you eat, and I'll tell you who you are."

For many of these companies, the baseline animal protein they are trying to innovate also feels tied to place and culture: When meat comes from a bioreactor, not a farm, the world's largest exporter of seafood could be a landlocked region, and beef could be "reared" in a bayou, yet the handful of lab-grown fish companies, like Finless Foods and BlueNalu, hug the American coasts; VOW, based in Australia, started making lab-grown kangaroo meat in August; and of course the world's first lab-grown shrimp is in Singapore.

"In the U.S., shrimps are either seen in shrimp cocktail, shrimp sushi, and so on, but [in Singapore] we have everything from shrimp paste to shrimp oil," Sriram says. "It's used in noodles and rice, as flavoring in cup noodles, and in biscuits and crackers as well. It's seen in every form, shape, and size. It just made sense for us to go after a protein that was widely used."

It's tempting to assume that innovating on pillars of cultural significance might be easier if the focus were on a whole new kind of food to begin with, not your popular dim sum items or fast food offerings. But it's proving to be quite the opposite.

"That could have been one direction where [researchers] just said, 'Look, it's really hard to reproduce raw ground beef. Why don't we just make something completely new, like meat chips?'" says Mike Lee, co-founder and co-CEO of Alpha Food Labs, which works on food innovation more broadly. "While that strategy's interesting, I think we've got so many new things to explain to people that I don't know if you want to also explain this new format of food that you've never, ever seen before."

We've seen this same cautious approach to change before in other ways that relate to cooking. Perhaps the most obvious example is the kitchen range. As Bee Wilson writes in her book Consider the Fork: A History of How We Cook and Eat, in the 1880s, convincing ardent coal-range users to switch to newfangled gas was a hard sell. To win them over, inventor William Sugg designed a range that used gas, but aesthetically looked like the coal ones already in fashion at the time -- and which in some visual ways harkened even further back to the days of open-hearth cooking. Over time, gas range designs moved further away from those of the past, but the initial jump was only made possible through familiarity. There's a cleverness to meeting people where they are.

"New gadgets feel safest when they remind us of other objects that we already know," writes Wilson. "It is far harder to accept a technology that is entirely new."

Maybe someday we won't want anything other than meat chips, but not today.

Measuring Success

A 2018 Gallup poll shows that in the U.S., rates of true vegetarianism and veganism have been stagnant for as long as they've been measured. When the poll began in 1999, six percent of Americans were vegetarian, a number that remained steady until 2012, when the number dropped one point. As of 2018, it remained at five percent.

In 2012, when Gallup first measured the percentage of vegans, the rate was two percent. By 2018 it had gone up just one point, to three percent. Increasing awareness of animal welfare, health, and environmental concerns doesn't seem to be incentive enough to convince Americans, en masse, to completely slam the door on a food culture characterized in many ways by its emphasis on traditional meat consumption.

Wilson writes that "experimenting with new foods has always been a dangerous business. In the wild, trying out some tempting new berries might lead to death. A lingering sense of this danger may make us risk-averse in the kitchen."

That might be one psychologically deep-seated reason that Americans are so resistant to ditching meat altogether. But a middle ground is emerging with a rise in flexitarianism, which aims to reduce reliance on traditional animal products. "Americans are eager to include alternatives to animal products in their diets, but are not willing to give up animal products completely," the same 2018 Gallup poll reported. This may represent the best opportunity for lab-grown meat to wedge itself into the culture.

Predicting a population's willingness to try a lab-grown version of its favorite protein is proving hard to do quantitatively, however, because lab-grown meat is still science fiction to a regular consumer. Measuring popular opinion of something that doesn't really exist yet is a dubious pastime.

In 2015, University of Wisconsin School of Public Health researchers Linnea Laestadius and Mark Caldwell conducted a study using online comments on articles about lab-grown meat to suss out public response to the food. The results showed a mostly negative attitude, but that was only two years into a field that is six years old today. Already public opinion may have shifted.

Shiok Meats' Sriram and her co-founder Ka Yi Ling have used online surveys to get a sense of the landscape, but they also take a more direct approach sometimes. Every time they give a public talk about their company and their shrimp, they poll their audience before and after the talk, using the question, "How many of you are willing to try, and pay, to eat lab-grown meat?"

They consistently find that the percentage of people willing to try goes up from 50 to 90 percent after hearing their talk, which includes information about the downsides of traditional shrimp farming (for one thing, many shrimp are raised in sewage, and peeled and deveined by slaves) and a bit of information about how lab-grown animal protein is being made now. I saw this pan out myself when Ling spoke at a New Harvest conference in Cambridge, Massachusetts in July.

"A lot of consumers get over the ick factor when you tell them that most of the food that you're eating right now has entered the lab at some point," Sriram says. "We're not going to grow our meat in the lab always. It's in the lab right now, because we're in R&D. Once we go into manufacturing ... it's going to be a food manufacturing facility, where a lot of food comes from."

The downside of the University of Wisconsin's and Shiok Meats' approaches to capturing public opinion is that they each look at self-selecting groups: Online commenters are often fueled by a need to complain, and it's likely that anyone attending a talk by the co-founders of a lab-grown meat company already has some level of open-mindedness.

So Sriram says that she and Ling are also using another method to assess the landscape, and it's somewhere in the middle. They've been watching public responses to the closest available product to lab-grown meat that's on the market: Impossible Burger. As a 100 percent plant-based burger, it's not quite the same, but this bleedable, searable patty is still very much the product of science and laboratory work. Its remarkable similarity to beef is courtesy of yeast that have been genetically engineered to contain DNA from soy plant roots, which produce a heme-containing protein called soy leghemoglobin as they multiply. This plant-derived heme protein can look and act like the heme proteins found in animal muscle.

So far, the sciencey underpinnings of the burger don't seem to be turning people off. In just four years, it's already found its place within other American food icons. It's readily available everywhere from nationwide Burger Kings to Boston's Warren Tavern, which has been in operation since 1780, is one of the oldest pubs in America, and is even named after the man who sent Paul Revere on his midnight ride. Some people have already grown so attached to the Impossible Burger that they will actually walk out of a restaurant that's out of stock. Demand for the burger is outpacing production.

"Even though [Impossible] doesn't consider their product cellular agriculture, it's part of a spectrum of innovation," Krueger says. "There are novel proteins that you're not going to find in your average food, and there's some cool tech there. So to me, that does show a lot of willingness on people's part to think about trying something new."

The message for those working on animal-based lab-grown meat is clear: People will accept innovation on their favorite food if it tastes good enough and evokes the same emotional connection as the real deal.

"How people talk about lab-grown meat now, it's still a conversation about science, not about culture and emotion," Lee says. But he's confident that the conversation will start to shift in that direction if the companies doing this work can nail the flavor memory, above all.

And then, proving how much power flavor holds over us, we quickly derail into a conversation about Doritos, which he calls "maniacally delicious." The chips carry no health value whatsoever and are a native product of food engineering and manufacturing -- just watch how hard it is for Bon Appetit associate food editor Claire Saffitz to try and recreate them in the magazine's test kitchen -- yet devotees remain unfazed and crunch on.

"It's funny because it shows you that people don't ask questions about how [some foods] are made, so why are they asking so many questions about how lab-grown meat is made?" Lee asks.

For all the hype around Impossible Burger, there are still controversies and hand-wringing around lab-grown meat. Some people are grossed out by the idea, some people are confused, and if you're the U.S. Cattlemen's Association (USCA), you're territorial. Last year, the group sent a petition to the USDA to "exclude products not derived directly from animals raised and slaughtered from the definition of 'beef' and 'meat.'"

"I have this working hypothesis that if you look at the nation in 50-year spurts, we revolve back and forth between artisanal, all-natural food that's unadulterated and pure, and food that's empowered by science," Lee says. "Maybe we've only had one lap around the track on that, but I think we are probably three or four big food safety scares away from everyone, especially younger generations, embracing lab-grown meat as like, 'Science is good; nature is dirty, and can kill you.'"

Food culture goes beyond just the ingredients we know and love — it's also about how we interact with them, produce them, and expect them to taste and feel when we bite down. We accept a margin of difference among a fast food burger, a backyard burger from the grill, and a gourmet burger. Maybe someday we'll accept the difference between a burger created by killing a cow and a burger created by biopsying one.

Looking to the Future

Every time we engage with food, "we are enacting a ritual that binds us to the place we live and to those in our family, both living and dead," Wilson writes in Consider the Fork. "Such things are not easily shrugged off. Every time a new cooking technology has been introduced, however useful … it has been greeted in some quarters with hostility and protestations that the old ways were better and safer."

This is why it might be hard for a vegetarian mother to try her daughter's lab-grown shrimp, no matter how ethically it was produced or how awe-inspiring the invention is. Yet food cultures can and do change. "They're not these static things," says Benjamin Wurgaft, a historian whose book Meat Planet: Artificial Flesh and the Future of Food comes out this month. "The real tension seems to be between slow change and fast change."

In fact, the very definition of the word "meat" has never exclusively meant what the USCA wants it to mean. Before the 12th century, when it first appeared in Old English as "mete," it wasn't very specific at all and could be used to describe anything from "nourishment," to "food item," to "fodder," to "sustenance." By the 13th century it had been narrowed down to mean "flesh of warm-blooded animals killed and used as food." And yet the British mincemeat pie lives on as a sweet Christmas treat full of -- to the surprise of many non-Brits -- spiced, dried fruit. Since 1901, we've also used this word with ease as a general term for anything that's substantive -- as in, "the meat of the matter." There is room for yet more definitions to pile on.

"The conversation [about lab-grown meat] has changed remarkably in the last six years," Wurgaft says. "It has become a conversation about whether or not specific companies will bring a product to market, and that's a really different conversation than asking, 'Should we produce meat in the lab?'"

As part of the field research for his book, Wurgaft visited the Rijksmuseum Boerhaave, a Dutch museum that specializes in the history of science and medicine. It was 2015, and he was there to see an exhibit on the future of food. Just two years earlier, Mark Post had made that first lab-grown hamburger about a two-and-a-half-hour drive south of the museum. When Wurgaft arrived, he found the novel invention, which Post had donated to the museum, already preserved and served up on a dinner plate, the whole outfit protected by plexiglass.

"They put this in the exhibit as if it were already part of the historical records, which to a historian looked really weird," Wurgaft says. "It looked like somebody taking the most recent supercomputer and putting it in a museum exhibit saying, 'This is the supercomputer that changed everything,' as if you were already 100 years in the future, looking back."

It seemed to symbolize an effort to codify a lab-grown hamburger as a matter of Dutch pride, perhaps someday occupying a place in people's hearts right next to the stroopwafel.

Lee likes to imagine that part of the legacy of lab-grown meat, if it succeeds, will be to inspire entirely new fads in cooking -- a step beyond ones like the crab-filled avocado of the 1960s or the pesto of the 1980s in the U.S.

"[Lab-grown meat] is inherently going to be a different quality than anything we've done with an animal," he says. "Look at every cut [of meat] on the sphere today -- each requires a slightly different cooking method to optimize the flavor of that cut. Who's to say that we couldn't get a whole school of how to cook with lab-grown meat?"

At this point, most of us have no way of trying lab-grown meat. It remains exclusively available through sometimes gimmicky demos reserved for investors and the media. But Wurgaft says the stories we tell about this innovation, the articles we write, the films we make, and yes, even the museum exhibits we curate, all hold as much cultural significance as the product itself might someday.

The Friday Five: How to exercise for cancer prevention

How to exercise for cancer prevention. Plus, a device that brings relief to back pain, ingredients for reducing Alzheimer's risk, the world's oldest disease could make you young again, and more.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five:

- How to exercise for cancer prevention

- A device that brings relief to back pain

- Ingredients for reducing Alzheimer's risk

- Is the world's oldest disease the fountain of youth?

- Scared of crossing bridges? Your phone can help

New approach to brain health is sparking memories

This fall, Robert Reinhart of Boston University published a study finding that electrical stimulation can boost memory - and Reinhart was surprised to discover the effects lasted a full month.

What if a few painless electrical zaps to your brain could help you recall names, perform better on Wordle or even ward off dementia?

This is where neuroscientists are going in efforts to stave off age-related memory loss as well as Alzheimer’s disease. Medications have shown limited effectiveness in reversing or managing loss of brain function so far. But new studies suggest that firing up an aging neural network with electrical or magnetic current might keep brains spry as we age.

Welcome to non-invasive brain stimulation (NIBS). No surgery or anesthesia is required. One day, a jolt in the morning with your own battery-operated kit could replace your wake-up coffee.

Scientists believe brain circuits tend to uncouple as we age. Since brain neurons communicate by exchanging electrical impulses with each other, the breakdown of these links and associations could be what causes the “senior moment”—when you can’t remember the name of the movie you just watched.

In 2019, Boston University researchers led by Robert Reinhart, director of the Cognitive and Clinical Neuroscience Laboratory, showed that memory loss in healthy older adults is likely caused by these disconnected brain networks. When Reinhart and his team stimulated two key areas of the brain with mild electrical current, they were able to bring the brains of older adult subjects back into sync — enough so that their ability to remember small differences between two images matched that of much younger subjects for at least 50 minutes after the testing stopped.

Reinhart wowed the neuroscience community once again this fall. His newer study in Nature Neuroscience involved 150 healthy participants, ages 65 to 88, who were able to recall more words on a given list after 20-minute sessions of low-intensity electrical stimulation on four consecutive days. This amounted to a 50 to 65 percent boost in their recall.

Even Reinhart was surprised to discover the enhanced performance of his subjects lasted a full month when they were tested again later. Those who benefited most were the participants who were the most forgetful at the start.

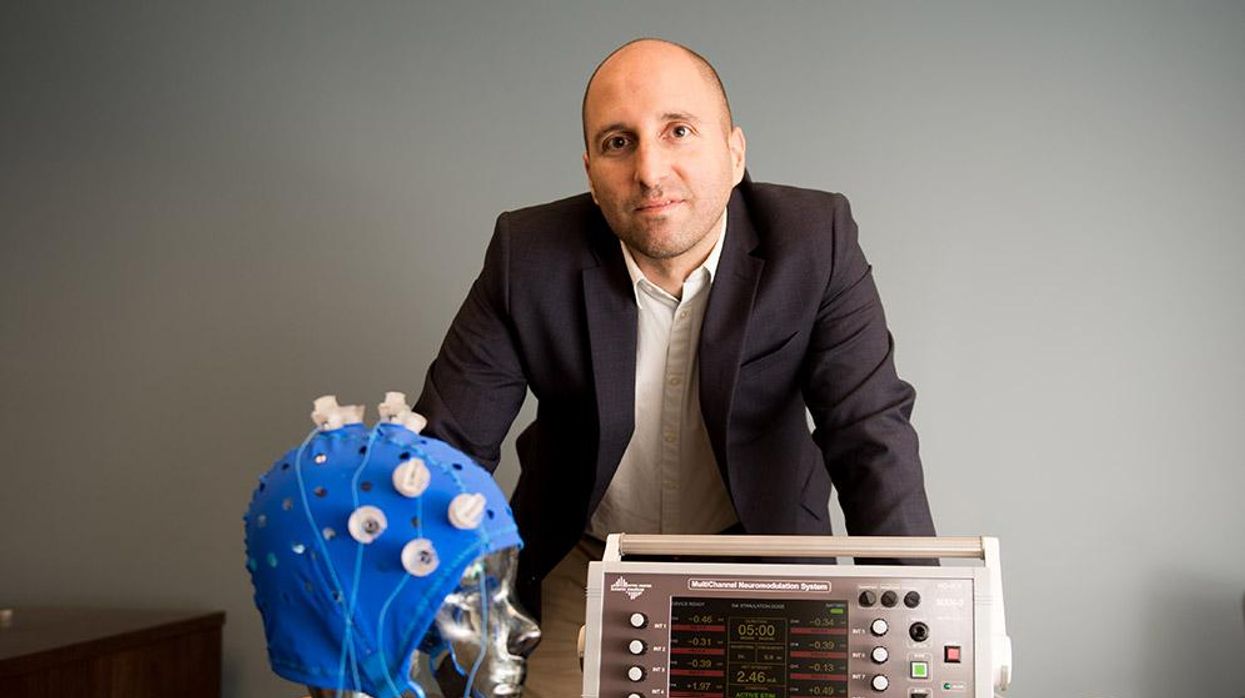

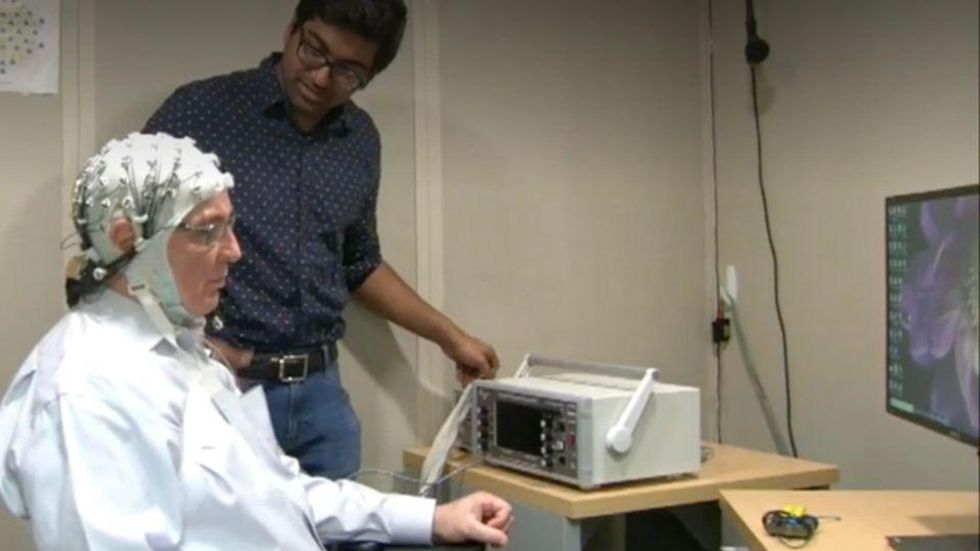

An older person participates in Robert Reinhart's research on brain stimulation.

Robert Reinhart

Reinhart’s subjects only suffered normal age-related memory deficits, but NIBS has great potential to help people with cognitive impairment and dementia, too, says Krista Lanctôt, the Bernick Chair of Geriatric Psychopharmacology at Sunnybrook Health Sciences Center in Toronto. Plus, “it is remarkably safe,” she says.

Lanctôt was the senior author on a meta-analysis of brain stimulation studies published last year on people with mild cognitive impairment or later stages of Alzheimer’s disease. The review concluded that magnetic stimulation to the brain significantly improved the research participants’ neuropsychiatric symptoms, such as apathy and depression. The stimulation also enhanced global cognition, which includes memory, attention, executive function and more.

This is the frontier of neuroscience.

The two main forms of NIBS – and many questions surrounding them

There are two types of NIBS. They differ based on whether electrical or magnetic stimulation is used to create the electric field, the type of device that delivers the electrical current and the strength of the current.

Transcranial Electrical Stimulation (tES) is an umbrella term for a group of techniques using low-wattage electrical currents to manipulate activity in the brain. The current is delivered to the scalp or forehead via electrodes attached to a nylon elastic cap or rubber headband.

Variations include how the current is delivered—in an alternating pattern or in a constant, direct mode, for instance. Tweaking frequency, potency or target brain area can produce different effects as well. Reinhart’s 2022 study demonstrated that low or high frequencies and alternating currents were uniquely tied to either short-term or long-term memory improvements.

Sessions may be 20 minutes per day over the course of several days or two weeks. “[The subject] may feel a tingling, warming, poking or itching sensation,” says Reinhart, which typically goes away within a minute.

The other main approach to NIBS is Transcranial Magnetic Stimulation (TMS). It involves the use of an electromagnetic coil that is held or placed against the forehead or scalp to activate nerve cells in the brain through short pulses. The stimulation is stronger than tES but similar to a magnetic resonance imaging (MRI) scan.

The subject may feel a slight knocking or tapping on the head during a 20-to-60-minute session. Scalp discomfort and headaches are reported by some; in very rare cases, a seizure can occur.

No head-to-head trials have been conducted yet to compare the effectiveness of electrical and magnetic stimulation, notes Lanctôt, who is also a professor of psychiatry and pharmacology at the University of Toronto. Although TMS was approved by the FDA in 2008 to treat major depression, both techniques are considered experimental for the purpose of cognitive enhancement.

“One attractive feature of tES is that it’s inexpensive—one-fifth the price of magnetic stimulation,” Reinhart notes.

Don’t confuse either of these procedures with the horrors of electroconvulsive therapy (ECT) in the 1950s and ’60s. ECT is a more powerful, riskier procedure used only as a last resort in treating severe mental illness today.

Clinical studies on NIBS remain scarce. Standardized parameters and measures for testing have not been developed. The high heterogeneity among the many existing small NIBS studies makes it difficult to draw general conclusions. Few of the studies have been replicated and inconsistencies abound.

Scientists are still lacking so much fundamental knowledge about the brain and how it works, says Reinhart. “We don’t know how information is represented in the brain or how it’s carried forward in time. It’s more complex than physics.”

Lanctôt’s meta-analysis showed improvements in global cognition from delivering the magnetic form of the stimulation to people with Alzheimer’s, and this finding was replicated in an analysis in the Journal of Prevention of Alzheimer’s Disease this fall. Neither meta-analysis found clear evidence that applying the electrical currents was helpful for Alzheimer’s subjects, but Lanctôt suggests this might be merely because the sample size for tES was smaller compared to the groups that received TMS.

At the same time, London neuroscientist Marco Sandrini, senior lecturer in psychology at the University of Roehampton, critically reviewed a series of studies on the effects of tES on episodic memory. Often declining with age, episodic memory relates to recalling a person’s own experiences from the past. Sandrini’s review concluded that delivering tES to the prefrontal or temporoparietal cortices of the brain might enhance episodic memory in older adults with Alzheimer’s disease and amnestic mild cognitive impairment (the predementia phase of Alzheimer’s when people start to have symptoms).

Researchers readily tick off studies needed to explore, clarify and validate existing NIBS data. What is the optimal stimulus session frequency, spacing and duration? How intense should the stimulus be and where should it be targeted for what effect? How might genetics or degree of brain impairment affect responsiveness? Would adjunct medication or cognitive training boost positive results? Could administering the stimulus while someone sleeps expedite memory consolidation?

While Sandrini’s review reported improvements induced by tES in the recall or recognition of words and images, there is no evidence it will translate into improvements in daily activities. This is another question that will require more research and testing, Sandrini notes.

Where the science is headed

Learning how to apply precision medicine to NIBS is the next focus in advancing this technology, says Shankar Tumati, a post-doctoral fellow working with Lanctôt.

There is great variability in each person’s brain anatomy—the thickness of the skull, the brain’s unique folds, the amount of cerebrospinal fluid. All of these structural differences impact how electrical or magnetic stimulation is distributed in the brain and ultimately the effects.

Using MRI or another brain scan along with computational modeling of the current flow, a clinician could create a treatment that is customized to each person’s brain, from where to put the electrodes to determining the exact dose and duration of stimulation needed to achieve lasting results, Sandrini says.

Above all, most neuroscientists say that large-scale research studies over long periods of time are necessary to confirm the safety and durability of this therapy for the purpose of boosting memory. Short of that, there can be no FDA approval or medical regulation for this clinical use.

Lanctôt urges people to seek out clinical NIBS trials in their area if they want to see the science advance. “That is how we’ll find the answers,” she says, predicting it will be 5 to 10 years to develop each additional clinical application of NIBS. Ultimately, she predicts that reining in Alzheimer’s disease and mild cognitive impairment will require a multi-pronged approach that includes lifestyle and medications, too.

Sandrini believes that scientific efforts should focus on preventing or delaying Alzheimer’s. “We need to start intervention earlier—as soon as people start to complain about forgetting things,” he says. “Changes in the brain start 10 years before [there is a problem]. Once Alzheimer’s develops, it is too late.”