Would You Want to Know a Decade Early If You Were Getting Alzheimer's?

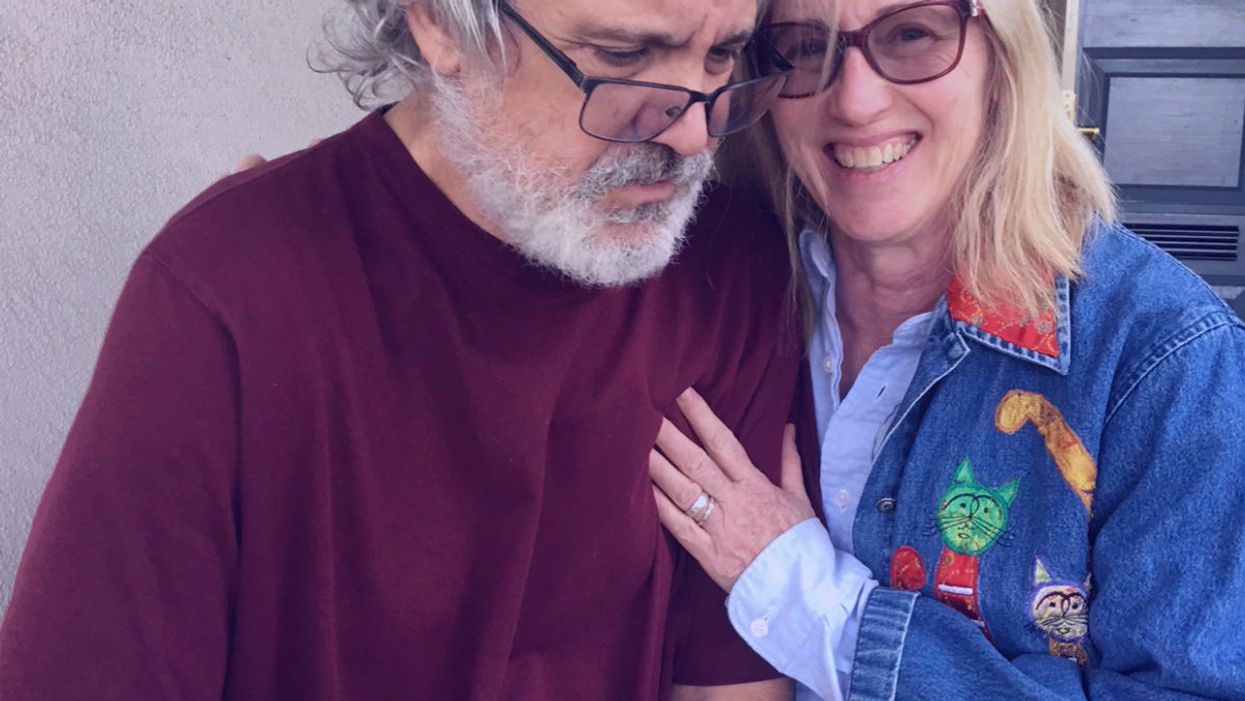

The author pictured with her husband Dallas, who has Alzheimer's.

Editor's Note: A team of researchers in Italy recently used artificial intelligence and machine learning to diagnose Alzheimer's disease on a brain scan an entire decade before symptoms show up in the patient. While some people argue that early detection is critical, others believe the knowledge would do more harm than good. LeapsMag invited contributors with opposite opinions to share their perspectives.

I first realized something was wrong with my dad when I came home for Thanksgiving 20 years ago.

I hadn't seen my family for more than a year after moving from New York to California. My father was meticulous, a multi-shower-a-day man, a regular Beau Brummell. He was never officially diagnosed with dementia, but it was easy to figure out after he stopped leaving the house, stopped reading, stopped being himself. My mother knew, but she never sought help. After his illness showed itself, I asked her if she had considered a nursing home. "Never," she told me. "I can take care of him." And she did.

She gave herself a break once to visit me, and it was the first time she traveled separately from him since they eloped at seventeen. My brother watched my father, and it was not smooth. Dad was angry, hallucinating, and demanding his gun, which had been disposed of long ago. While Mom was visiting me in California, we played some board games. One demanded honest answers. The card read, What are you most afraid of? "Dementia," she said.

My father never saw this coming, none of us did.

Dementia ran on my mother's side. Her mother, my Nana, was senile, the popular diagnosis for older folks back then. My grandfather tried his hardest to take care of her, but she kept escaping their tidy 6th floor apartment to run away. My mother would go over every day to take care of them, but once my grandfather became ill, she took her mother into our apartment. She had two small children, Nana, and her husband in a two-bedroom flat. Nana talked to people under plates, wore tissues on her head, and tried to escape. We were on the first floor, so she could run into traffic if all eyes weren't on her. Soon, it was too much, even for my Wonder Woman mom. Nana was placed in a nursing home and died soon after.

My mother dropped dead on a NYC sidewalk two years after my father started to deteriorate. She was probably going to the store to buy milk and cigarettes. A kind stranger called 911, and a cop came to my parents' door soon after to tell my dad the news. My father cried for death, raged and ranted, then calmed down enough to come back as the dad we remembered for the week of mourning. He even ordered a Manhattan at dinner. His death came exactly a week and an hour after my mother's. He died of a broken heart. My husband cried with all his body after we left the cemetery, weeping, "Poor Buck. Poor Buck." I had never seen him cry before.

Now, 18 years later, I sit here with my husband, 59 years old, as he suffers from the same hideous disease.

He is talking to someone I can't see, even laughing with him. He holds a Ph.D. in literature, taught college, had a single-digit handicap golf game, and ate well. We never saw this coming. One day he went to type and jumbled letters came on the screen. He would show up late or early for his classes, wondering what was wrong with the students. He started running red lights. He was graciously counseled to retire, and he did, at 55. His doctor told him it was depression. The second opinion agreed. He was told to do nothing for a year, and he did. He played golf a bit, then one day he couldn't speak or think clearly. I came home from work to find him roaming the neighborhood, eyes ablaze, muttering to himself. I went on family leave. Many tests later we got the working diagnosis, but it meant nothing to him. He never reacted to the words Primary Progressive Aphasia or dementia. I was glad. If he had been lucid, I knew what he would talk about doing. He told me after my dad's death that he did not want that life for himself.

I worry I may get it, too. It almost seems inescapable. Dementia has no cure, and the treatments for the symptoms are hit and miss. I thought about getting the full flight of predictive tests, but I know myself, and I scare myself into bracing for the worst. Others scare me, too, when I read their online statements about ending their lives if they learn they have it: I told my children to take me to a state where assisted suicide is legal; it's easy to overdose; I don't want to be a burden on my children. These are caregivers on social media forums. They live with the terror, eyes wide open. We have no children, but who would I burden? My sisters? My brother? Do I stay or do I go? This disease invites pandemonium. Assisted murder-suicides with caregiver spouses of those with dementia don't merit headlines, but their stories are on the sidebars. No thanks. I work on God's timeline.


A diagnosis today would paralyze me and create melancholy for all who know me. I would second guess everything, I would read everything, I would cry, I would hardly live. I would be tempted to pick up that first drink after 20 plus years sober. I would even think about ending my life. It would be difficult not to consider. As a high school English teacher, I talk about suicide when I teach Hamlet. I tell the students suicide is a permanent solution to a temporary problem. Dementia isn't temporary. There are no survivors – yet.

I often think about what my relatives would have done with an advance diagnosis. My grandmother was a classic worrier. She would have been beyond distraught. My father might have found that gun. My husband would have taken the right number of pills.

An advance diagnosis would paralyze me.

I appreciate the arguments for early diagnosis. Some people are made of sterner stuff. They have the mindset I lack. I admire so many who are contributing to the current conversation about dementia and are active advocates for a cure. They have found a purpose in their fate.

I don't need a test to get my ducks in a row. Loving those with dementia has prompted me to be prepared. I have a different type of bucket list: reset my priorities, slow down, be present, educate others, and make my legal plans. If and when it happens, there will be time for toast and tea and a walk along the shore. There will be time to plan for the inevitable and unenviable end. I am morbid enough to know I will recognize the purple elephant in the room. I don't want the shock and awe now. I can wait. My sisters agree. We will keep our elbows out.

Editor's Note: Consider the other side of the argument here.

Giving robots self-awareness as they move through space - and maybe even providing them with gene-like methods for storing rules of behavior - could be important steps toward creating more intelligent machines.

One day in the recent past, scientists at Columbia University’s Creative Machines Lab set up a robotic arm inside a circle of five streaming video cameras and let the robot watch itself move, turn and twist. For about three hours the robot did exactly that: it looked at itself this way and that, like toddlers exploring themselves in a room full of mirrors. By the time the robot stopped, its internal neural network had finished learning the relationship between the robot’s motor actions and the volume it occupied in its environment. In other words, the robot built a spatial self-awareness, just like humans do. “We trained its deep neural network to understand how it moved in space,” says Boyuan Chen, one of the scientists who worked on it.

For decades robots have been doing helpful tasks that are too hard, too dangerous, or physically impossible for humans to carry out themselves. Robots are ultimately superior to humans in complex calculations, following rules to a tee and repeating the same steps perfectly. But even the biggest successes for human-robot collaborations—those in manufacturing and automotive industries—still require separating the two for safety reasons. Hardwired for a limited set of tasks, industrial robots don't have the intelligence to know where their robo-parts are in space, how fast they’re moving and when they can endanger a human.

Over the past decade or so, humans have begun to expect more from robots. Engineers have been building smarter versions that can avoid obstacles, follow voice commands, respond to human speech and make simple decisions. Some of them proved invaluable in many natural and man-made disasters like earthquakes, forest fires, nuclear accidents and chemical spills. These disaster recovery robots helped clean up dangerous chemicals, looked for survivors in crumbled buildings, and ventured into radioactive areas to assess damage.

Now roboticists are going a step further, training their creations to do even better: understand their own image in space and interact with humans like humans do. Today, there are already robot-teachers like KeeKo, robot-pets like Moffin, robot-babysitters like iPal, and robotic companions for the elderly like Pepper.

But even these reasonably intelligent creations still have huge limitations, some scientists think. “There are niche applications for the current generations of robots,” says professor Anthony Zador at Cold Spring Harbor Laboratory—but they are not “generalists” who can do varied tasks all on their own, as they mostly lack the abilities to improvise, make decisions based on a multitude of facts or emotions, and adjust to rapidly changing circumstances. “We don’t have general purpose robots that can interact with the world. We’re ages away from that.”

Robotic spatial self-awareness – the achievement by the team at Columbia – is an important step toward creating more intelligent machines. Hod Lipson, professor of mechanical engineering who runs the Columbia lab, says that future robots will need this ability to assist humans better. Knowing how you look and where in space your parts are decreases the need for human oversight. It also helps the robot to detect and compensate for damage and keep up with its own wear-and-tear. And it allows robots to realize when something is wrong with them or their parts. “We want our robots to learn and continue to grow their minds and bodies on their own,” Chen says. That’s what Zador wants too, and on a much grander level. “I want a robot who can drive my car, take my dog for a walk and have a conversation with me.”

Columbia scientists have trained a robot to become aware of its own "body," so it can map the right path to touch a ball without running into an obstacle, in this case a square.

Jane Nisselson and Yinuo Qin/ Columbia Engineering

Today’s technological advances are making some of these leaps of progress possible. One of them is so-called deep learning, a method that trains artificial intelligence systems to learn and use information similar to how humans do it. Described as a machine learning method based on neural network architectures with multiple layers of processing units, deep learning has been used to successfully teach machines to recognize images, understand speech and even write text.

Trained by Google, one of these language machine learning geniuses, BERT, can finish sentences. Another one called GPT-3, designed by San Francisco-based company OpenAI, can write little stories. Yet both of them still make funny mistakes in their linguistic exercises that even a child wouldn’t. According to a paper published by Stanford’s Center for Research on Foundation Models, BERT seems to not understand the word “not.” When asked to fill in the word after “A robin is a __” it correctly answers “bird.” But try inserting the word “not” into that sentence (“A robin is not a __”) and BERT still completes it the same way. Similarly, in one of its stories, GPT-3 wrote that if you mix a spoonful of grape juice into your cranberry juice and drink the concoction, you die. It seems that robots, and artificial intelligence systems in general, are still missing some rudimentary facts of life that humans and animals grasp naturally and effortlessly.


It's not exactly the robots’ fault. Compared to humans, and all other organisms that have been around for thousands or millions of years, robots are very new. They are missing out on eons of evolutionary data-building. Animals and humans are born with the ability to do certain things because they are pre-wired in them. Flies know how to fly, fish know how to swim, cats know how to meow, and babies know how to cry. Yet flies don’t really learn to fly, fish don’t learn to swim, cats don’t learn to meow, and babies don’t learn to cry; they are born able to execute such behaviors because they’re preprogrammed to do so. All that happens thanks to the millions of years of evolution wired into their respective genomes, which give rise to the brain’s neural networks responsible for these behaviors. Robots are the newbies, missing out on that trove of information, Zador argues.

A neuroscience professor who studies how brain circuitry generates various behaviors, Zador has a different approach to developing the robotic mind. Until their creators figure out a way to imbue the bots with that information, robots will remain quite limited in their abilities. Each model will only be able to do certain things it was programmed to do, but it will never go above and beyond its original code. So Zador argues that we have to start giving robots a genome.

How does one do that? Zador has an idea. We can’t really equip machines with real biological nucleotide-based genes, but we can mimic the neuronal blueprint those genes create. Genomes lay out rules for brain development. Specifically, the genome encodes blueprints for wiring up our nervous system—the details of which neurons are connected, the strength of those connections and other specs that will later hold the information learned throughout life. “Our genomes serve as blueprints for building our nervous system and these blueprints give rise to a human brain, which contains about 100 billion neurons,” Zador says.

If you think about what a genome is, he explains, it is essentially a very compact and compressed form of information storage. Conceptually, genomes are similar to CliffsNotes and other study guides. When students read these short summaries, they know about what happened in a book, without actually reading that book. And that’s how we should be designing the next generation of robots if we ever want them to act like humans, Zador says. “We should give them a set of behavioral CliffsNotes, which they can then unwrap into brain-like structures.” Robots that have such brain-like structures will acquire a set of basic rules to generate basic behaviors and use them to learn more complex ones.

Currently Zador is in the process of developing algorithms that function like simple rules that generate such behaviors. “My algorithms would write these CliffsNotes, outlining how to solve a particular problem,” he explains. “And then, the neural networks will use these CliffsNotes to figure out which ones are useful and use them in their behaviors.” That’s how all living beings operate. They use the pre-programmed info from their genetics to adapt to their changing environments and learn what’s necessary to survive and thrive in these settings.

For example, a robot’s neural network could draw from CliffsNotes with “genetic” instructions for how to be aware of its own body or learn to adjust its movements. And other, different sets of CliffsNotes may imbue it with the basics of physical safety or the fundamentals of speech.
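One way to picture the CliffsNotes idea is a toy sketch in Python. It is an illustration only and not Zador's algorithm: the rule names (`reach`, `base_strength`, `seed`), the wiring rule itself, and the network size of 100 are all assumptions invented for this example. The point is simply that a handful of stored values can unwrap into a much larger wiring table.

```python
import random


def unwrap_genome(genome, n_neurons):
    """Expand a tiny rule set into a full wiring table of connection strengths."""
    rng = random.Random(genome["seed"])  # fixed seed: same genome, same wiring
    wiring = {}
    for src in range(n_neurons):
        for dst in range(n_neurons):
            distance = abs(src - dst)
            # Hypothetical rule: connect only nearby neurons, never a neuron to itself.
            if 0 < distance <= genome["reach"]:
                # Hypothetical rule: closer neighbours get stronger connections,
                # with a little random variation around the base value.
                strength = genome["base_strength"] / distance
                wiring[(src, dst)] = strength * rng.uniform(0.9, 1.1)
    return wiring


# Three stored values stand in for the "genome" (all names are made up).
genome = {"reach": 2, "base_strength": 1.0, "seed": 0}
wiring = unwrap_genome(genome, n_neurons=100)
print(len(genome), "rule values unwrap into", len(wiring), "connections")
```

In this sketch the genome never lists individual connections; it stores only the rules for generating them, which is the sense in which it works as a compressed blueprint.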

At the moment, Zador is working on algorithms that are trying to mimic neuronal blueprints for very simple organisms, such as the roundworm C. elegans, which has only 302 neurons and about 7,000 synapses compared to the billions of neurons we have. That’s how evolution worked, too, expanding brains from simple creatures to more complex ones to Homo sapiens. But if it took millions of years to arrive at modern humans, how long would it take scientists to forge a robot with human intelligence? That’s a billion-dollar question. Yet Zador is optimistic. “My hypothesis is that if you can build simple organisms that can interact with the world, then the higher level functions will not be nearly as challenging as they currently are.”

Lina Zeldovich has written about science, medicine and technology for Popular Science, Smithsonian, National Geographic, Scientific American, Reader’s Digest, the New York Times and other major national and international publications. A Columbia J-School alumna, she has won several awards for her stories, including the ASJA Crisis Coverage Award for Covid reporting, and has been a contributing editor at Nautilus Magazine. In 2021, Zeldovich released her first book, The Other Dark Matter, published by the University of Chicago Press, about the science and business of turning waste into wealth and health. You can find her on http://linazeldovich.com/ and @linazeldovich.

Podcast: Wellness chatbots and meditation pods with Deepak Chopra

Leaps.org talked with Deepak Chopra about new technologies he's developing for mental health with Jonathan Marcoschamer, CEO of OpenSeed, and others.

Over the last few decades, perhaps no one has impacted healthy lifestyles more than Deepak Chopra. While several of his theories and recommendations have been criticized by prominent members of the scientific community, he has helped bring meditation, yoga and other practices for well-being into the mainstream in ways that benefit the health of vast numbers of people every day. His work has led many to accept new ways of thinking about alternative medicine, the power of mind over body, and the malleability of the aging process.

His impact is such that it's been observed our culture no longer recognizes him as a human being but as a pervasive symbol of new-agey personal health and spiritual growth. Last week, I had a chance to confirm that Chopra is, in fact, a human being – and deserving of his icon status – when I talked with him for the Leaps.org podcast. He relayed ideas that were wise and ancient, yet highly relevant to our world today, with the fluidity and ease of someone discussing the weather. Showing no signs of slowing down at age 76, he described his prolific work, including the publication of two books in the past year and a range of technologies he’s developing, including a meditation app, meditation pods for the workplace, and a chatbot for mental health called Piwi.

Take a listen and get inspired to do some meditation and deep thinking on the future of health. As Chopra told me, “If you don’t have time to meditate once per day, you probably need to meditate twice per day.”

Highlights:

2:10: Chopra talks about meditation broadly and meditation pods, including the ones made by OpenSeed for meditation in the workplace.

6:10: The drawbacks of quick fixes like drugs for mental health.

10:30: The benefits of group meditation versus individual meditation.

14:35: What is a "metahuman" and how to become one.

19:40: The difference between the conditioned mind and the mind that's infinitely creative.

22:48: How Chopra's views of free will differ from the views of many neuroscientists.

28:04: Thinking, Fast and Slow, and the role of intuition.

31:20: Athletic and creative geniuses.

32:43: The nature of fundamental truth.

34:00: Meditation for kids.

37:12: NeverAlone.Love and how AI chatbots can support mental health.

42:30: Extending lifespan, gene editing and lifestyle.

46:05: Chopra's mentor in living a long good life (and my mentor).

47:45: The power of yoga.

Links:

- OpenSeed meditation pods for people to meditate at work (Chopra is an advisor to OpenSeed).

- Chopra's book from 2021, Metahuman: Unleash Your Infinite Potential

- Chopra's book from 2022, Abundance: The Inner Path to Wealth

- NeverAlone.Love, Chopra's collaboration of businesses, policy makers, mental health professionals and others to raise awareness about mental health, advance scientific research and "create a global technology platform to democratize access to resources."

- The Piwi chatbot for mental health

- The Chopra Meditation & Well-Being App for people of all ages

- Only 1.6 percent of U.S. children meditate, according to the National Center for Complementary and Integrative Health