Your Digital Avatar May One Day Get Sick Before You Do

Artificial intelligence is everywhere, just not in the way you think it is.

"There's the perception of AI in the glossy magazines," says Anders Kofod-Petersen, a professor of Artificial Intelligence at the Norwegian University of Science and Technology. "That's the sci-fi version. It resembles the small guy in the movie AI. It might be benevolent or it might be evil, but it's generally intelligent and conscious."

"And this is, of course, as far from the truth as you can possibly get."

What Exactly Is Artificial Intelligence, Anyway?

Let's start with how you got to this piece. You likely came to it through social media (your Facebook account or Twitter feed) or perhaps a Google search. AI influences all of those things, with machine learning helping to run the algorithms that decide what you see, when, and where. AI isn't the little humanoid figure; it's the system that controls the figure.

"AI is being confused with robotics," Eleonore Pauwels, Director of the Anticipatory Intelligence Lab with the Science and Technology Innovation Program at the Wilson Center, says. "What AI is right now is a data optimization system, a very powerful data optimization system."

The revolution in recent years hasn't come from the method scientists and other researchers use. The general ideas and philosophies have been around since the late 1960s. Instead, the big change has been the dramatic increase in computing power, primarily due to the development of neural networks. These networks, loosely modeled on the human brain, are interconnected computing units that have the ability to "learn." An AI, for example, can be taught to spot a picture of a cat by looking at hundreds of thousands of pictures that have been labeled "cat" and "learning" what a cat looks like. Or an AI can beat a human at Go, an achievement that just five years ago Kofod-Petersen thought wouldn't be accomplished for decades.

Medicine is the field where this expertise in perception tasks might have the most influence. It's already having an impact as iPhones use AI to detect cancer, Apple watches alert the wearer to a heart problem, AI spots tuberculosis and the spread of breast cancer with a higher accuracy than human doctors, and more. Every few months, another study demonstrates more possibility. (The New Yorker published an article about medicine and AI last year, so you know it's a serious topic.)

But this is only the beginning. "I personally think genomics and precision medicine is where AI is going to be the biggest game-changer," Pauwels says. "It's going to completely change how we think about health, our genomes, and how we think about our relationship between our genotype and phenotype."

The Fundamental Breakthrough That Must Be Solved

To get there, however, researchers will need to make another breakthrough, and there's debate about how long that will take. Kofod-Petersen explains: "If we want to move from this narrow intelligence to this broader intelligence, that's a very difficult problem. It basically boils down to that we haven't got a clue about what intelligence actually is. We don't know what intelligence means in a biological sense. We think we might recognize it but we're not completely sure. There isn't a working definition. We kind of agree with the biologists that learning is an aspect of it. It's very difficult to argue that something is intelligent if it can't learn, and these algorithms are getting pretty good at learning stuff. What they are not good at is learning how to learn. They can learn specific tasks but we haven't approached how to teach them to learn to learn."

In other words, current AI is very, very good at identifying that a picture of a cat is, in fact, a cat – and getting better at doing so at an incredibly rapid pace – but the system only knows what a "cat" is because that's what a programmer told it a furry thing with whiskers and two pointy ears is called. If the programmer instead decided to label the training images as "dogs," the AI wouldn't say "no, that's a cat." Instead, it would simply call a furry thing with whiskers and two pointy ears a dog. AI systems lack the explicit inference that humans do effortlessly, almost without thinking.
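The label-swapping point above can be made concrete with a toy example. The sketch below is not how any production image classifier works; it is a minimal nearest-centroid classifier in Python, and the two numeric features (a whisker score and an ear-pointiness score) and every data value are invented purely for illustration.

```python
from statistics import mean

def train(examples):
    """Compute one centroid (mean feature vector) per label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }

def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

def with_label(rows, label):
    """Attach the same human-chosen label to every feature row."""
    return [(row, label) for row in rows]

# Hypothetical features: (whisker score, ear pointiness), each from 0 to 1.
furry_and_pointy = [(0.9, 0.8), (0.8, 0.9), (0.95, 0.85)]
feathered = [(0.0, 0.1), (0.1, 0.0), (0.05, 0.05)]

# Same pictures, different labels: only the programmer's word changes.
model_a = train(with_label(furry_and_pointy, "cat") + with_label(feathered, "bird"))
model_b = train(with_label(furry_and_pointy, "dog") + with_label(feathered, "bird"))

new_animal = (0.85, 0.9)
print(predict(model_a, new_animal))  # cat
print(predict(model_b, new_animal))  # dog
```

Both models see identical measurements; the second one calls the whiskered animal a dog only because that is the word attached to its training examples.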

Pauwels believes that the next step is for AI to transition from supervised to unsupervised learning. The latter means that the AI isn't answering questions that a programmer asks it ("Is this a cat?"). Instead, it's almost like it's looking at the data it has, coming up with its own questions and hypotheses, and answering them or putting them to the test. Combining this ability with the frankly insane processing power of the computer system could result in game-changing discoveries.

One company in China plans to develop a way to create a digital avatar of an individual person, then simulate that person's health and medical information into the future. In the not-too-distant future, a doctor could run diagnostics on a digital avatar, watching which medical conditions presented themselves – cancer or a heart condition or anything, really – and help the real-life version prevent those conditions from beginning or treat them before they became a life-threatening issue.

That, obviously, would be an incredibly powerful technology, and it's just one of the many possibilities that unsupervised AI presents. It's also terrifying in the potential for misuse. Even the term "unsupervised AI" brings to mind a dystopian landscape where AI takes over and enslaves humanity. (Pick your favorite movie. There are dozens.) This is a concern, something for developers, programmers, and scientists to consider as they build the systems of the future.

The Ethical Problem That Deserves More Attention

But the more immediate concern about AI is much more mundane. We think of AI as an unbiased system. That's incorrect. Algorithms, after all, are designed by someone or a team, and those people have explicit or implicit biases. Intentionally, or more likely not, they introduce these biases into the very code that forms the basis for the AI. Current systems have a bias against people of color. Facebook tried to rectify the situation and failed. These are two small examples of a larger, potentially systemic problem.

It's vital and necessary for the people developing AI today to be aware of these issues. And, yes, avoid sending us to the brink of a James Cameron movie. But AI is too powerful a tool to ignore. Today, it's identifying cats and on the verge of detecting cancer. In not too many tomorrows, it will be at the forefront of medical innovation. If we are careful, aware, and smart, it will help simulate results, create designer drugs, and revolutionize individualized medicine. "AI is the only way to get there," Pauwels says.

Although Jonas Salk has gone down in history for helping rid the world (almost) of polio, his revolutionary vaccine wouldn't have been possible without the world’s largest clinical trial – and the bravery of thousands of kids.

Exactly 67 years ago, in 1955, a group of scientists and reporters gathered at the University of Michigan and waited with bated breath for Dr. Thomas Francis Jr., director of the school’s Poliomyelitis Vaccine Evaluation Center, to approach the podium. The group had gathered to hear the news that seemingly everyone in the country had been anticipating for the past two years – whether the vaccine for poliomyelitis, developed by Francis’s former student Jonas Salk, was effective in preventing the disease.

Polio, at that point, had become a household name. As the highly contagious virus swept through the United States, cities closed their schools, movie theaters, swimming pools, and even churches to stop the spread. For most, polio presented as a mild illness, and was usually completely asymptomatic – but for an unlucky few, the virus took hold of the central nervous system and caused permanent paralysis of muscles in the legs, arms, and even people’s diaphragms, rendering the person unable to walk and breathe. It wasn’t uncommon to hear reports of people – mostly children – who fell sick with a flu-like virus and then, just days later, were relegated to spend the rest of their lives in an iron lung.

For two years, researchers had been testing a vaccine that would hopefully be able to stop the spread of the virus and prevent the 45,000 infections each year that were keeping the nation in a chokehold. At the podium, Francis greeted the crowd and then proceeded to change the course of human history: The vaccine, he reported, was “safe, effective, and potent.” Widespread vaccination could begin in just a few weeks. The nightmare was over.

The road to success

Jonas Salk, a medical researcher and virologist who developed the vaccine with his own research team, would rightfully go down in history as the man who eradicated polio. (Today, wild poliovirus circulates in just two countries, Afghanistan and Pakistan – with only 140 cases reported in 2020.) But many people today forget that the widespread vaccination campaign that effectively ended wild polio across the globe would have never been possible without the human clinical trials that preceded it.

The road to human clinical trials – and the resulting vaccine – was a long one. In 1938, President Franklin Delano Roosevelt launched the National Foundation for Infantile Paralysis in order to raise funding for research and development of a polio vaccine. (Today, we know this organization as the March of Dimes.) A polio survivor himself, Roosevelt elevated awareness and prevention into the national spotlight, even more so than it had been previously. Raising funds for a safe and effective polio vaccine became a cornerstone of his presidency – and the funds raked in by his foundation went primarily to Salk to fund his research.

The Trials Begin

Salk’s vaccine, which included an inactivated (killed) polio virus, was promising – but now the researchers needed test subjects to make global vaccination a possibility. Because the aim of the vaccine was to prevent paralytic polio, researchers decided that they had to test the vaccine in the population that was most vulnerable to paralysis – young children. And, because the rate of paralysis was so low even among children, the team required many children to collect enough data. Francis, who led the trial to evaluate Salk’s vaccine, began the process of recruiting more than one million school-aged children between the ages of six and nine in 272 counties that had the highest incidence of the disease. The participants were nicknamed the “Polio Pioneers.”

Double-blind, placebo-based trials were considered the “gold standard” of epidemiological research back in Francis’s day – and they remain the best approach we have today. These rigorous scientific studies are designed with two participant groups in mind. One group, called the test group, receives the experimental treatment (such as a vaccine); the other group, called the control, receives an inactive treatment known as a placebo. The researchers then compare the effects of the active treatment against the effects of the placebo, and every researcher is “blinded” as to which participants receive what treatment. That way, the results aren’t tainted by any possible biases.
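As a rough illustration of the design just described, here is a minimal Python sketch of blinded allocation. It is not the procedure used in the 1954 field trial: the function names, the vial-code format, and the participant IDs are all hypothetical, and the case data is made up.

```python
import random

def assign_blinded(participant_ids, seed=0):
    """Randomly split participants into two arms and hide the split.

    Returns (codes, key):
      codes maps participant -> opaque vial code, which is all that
            field researchers and families would see;
      key   maps participant -> "vaccine" or "placebo", held back
            until the trial is unblinded.
    """
    rng = random.Random(seed)
    shuffled = list(participant_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    key = {
        pid: ("vaccine" if position < half else "placebo")
        for position, pid in enumerate(shuffled)
    }
    # Vial codes follow participant order, not arm, so they reveal nothing.
    codes = {pid: f"VIAL-{n:04d}" for n, pid in enumerate(sorted(key))}
    return codes, key

def attack_rates(key, cases):
    """After unblinding, compare the share of each arm that fell ill."""
    totals = {"vaccine": 0, "placebo": 0}
    sick = {"vaccine": 0, "placebo": 0}
    for pid, arm in key.items():
        totals[arm] += 1
        if pid in cases:
            sick[arm] += 1
    return {arm: sick[arm] / totals[arm] for arm in totals}

codes, key = assign_blinded(range(1000), seed=42)
made_up_cases = {3, 250, 777}  # invented participant IDs, not trial data
print(attack_rates(key, made_up_cases))
```

Keeping `key` separate from `codes` is the whole point of blinding: anyone recording symptoms works only from the vial codes, so their expectations cannot influence which arm looks better.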

But the study was controversial in that only some of the individual field trials at the county and state levels had a placebo group. Researchers described this as a “calculated risk,” meaning that while there were risks involved in giving the vaccine to a large number of children, the bigger risk was the potential paralysis or death that could come with being infected by polio. In all, just 200,000 children across the US received a placebo treatment, while an additional 725,000 children acted as observational controls – in other words, researchers monitored them for signs of infection, but did not give them any treatment.

As with the COVID-19 vaccine, skepticism and misinformation around the polio vaccine abounded. But even more pervasive than the skepticism was fear. President Roosevelt, who had made many public and televised appearances in a wheelchair, served as a perpetual reminder of the consequences of polio, as an infection at age 39 had rendered him permanently unable to walk. The consequences of polio had arguably never been more visible, and parents signed up their children in droves to participate in the study and offer them protection.

The Polio Pioneer Legacy

In a little less than a year, roughly half a million children received a dose of Salk’s polio vaccine. While plenty of children were hesitant to get the shot, many former participants still remember the fear surrounding the disease. One former participant, a Polio Pioneer named Debbie LaCrosse, writes of her experience: “There was no discussion, no listing of pros and cons. No amount of concern over possible side effects or other unknowns associated with a new vaccine could compare to the terrifying threat of polio.” For their participation, each kid received a certificate – and sometimes a pin – with the words “Polio Pioneer” emblazoned across the front.

When Francis announced the results of the trial on April 12, 1955, people did more than just breathe a sigh of relief – they openly celebrated, ringing church bells and flooding into the streets to embrace. Salk, who had become the face of the vaccine at that point, was instantly hailed as a national hero – and teachers around the country had their students write him ‘thank you’ notes for his years of diligent work.

But while Salk went on to win national acclaim – even accepting the Presidential Medal of Freedom for his work on the polio vaccine in 1977 – his success was due in no small part to the children (and their parents) who took a risk in order to advance medical science. And that risk paid off: By the early 1960s, the yearly cases of polio in the United States had gone down to just 910. Where before the vaccine polio had caused around 15,000 cases of paralysis each year, only ten cases of paralysis were recorded in the entire country throughout the 1970s. And in 1979, the virus that once shuttered entire towns was declared officially eradicated in this country. Thanks to the efforts of these brave pioneers, the nation – along with the majority of the world – remains free of polio even today.

Why you should (virtually) care

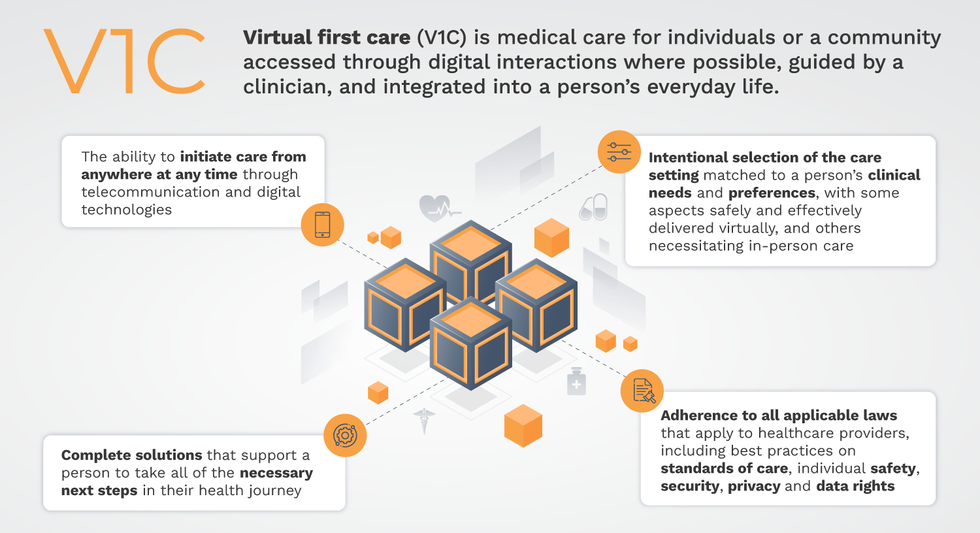

Virtual-first care, or V1C, could increase the quality of healthcare and make it more patient-centric by letting patients combine in-person visits with virtual options such as video for seeing their care providers.

As the pandemic turns endemic, healthcare providers have been eagerly urging patients to return to their offices to enjoy the benefits of in-person care.

But wait.

The last two years have forced all sorts of organizations to be nimble, adaptable and creative in how they work, and this includes healthcare providers’ efforts to maintain continuity of care under the most challenging of conditions. So before we go back to “business as usual,” don’t we owe it to those providers and ourselves to admit that business as usual did not work for most of the people the industry exists to help? If we’re going to embrace yet another period of change – periods that don’t happen often in our complex industry – shouldn’t we first stop and ask ourselves what we’re trying to achieve?

Certainly, COVID has shown that telehealth can be an invaluable tool, particularly for patients in rural and underserved communities that lack access to specialty care. It’s also become clear that many – though not all – healthcare encounters can be effectively conducted from afar. That said, the telehealth tactics that filled the gap during the pandemic were largely stitched-together substitutes for existing visit-based workflows: with offices closed, patients scheduled video visits for help managing the side effects of their blood pressure medications or to see their endocrinologist for a quarterly check-in. Anyone whose children slogged through the last year or two of remote learning can tell you that simply virtualizing existing processes doesn’t necessarily improve the experience or the outcomes!

But what if our approach to post-pandemic healthcare came from a patient-driven perspective? We have a fleeting opportunity to advance a care model centered on convenient and equitable access that first prioritizes good outcomes, then selects approaches to care – and locations – tailored to each patient. Using the example of education, imagine how effective it would be if each student, regardless of their school district and aptitude, received such individualized attention.

That’s the idea behind virtual-first care (V1C), a new care model centered on convenient, customized, high-quality care that integrates a full suite of services tailored directly to patients’ clinical needs and preferences. This package includes asynchronous communication such as texting; video and other live virtual modes; and in-person options.

V1C goes beyond what you might think of as standard “telehealth” by using evidence-based protocols and tools that include traditional and digital therapeutics and testing, personalized care plans, dynamic patient monitoring, and team-based approaches to care. This could include spit kits mailed for laboratory tests and complementing clinical care with health coaching. V1C also replaces some in-person exams with ongoing monitoring, using sensors for more ‘whole person’ care.

Established V1C healthcare providers such as Omada, Headspace, and Heartbeat Health, as well as emerging market entrants like Oshi, Visana, and Wellinks, work with a variety of patients who have complicated long-term conditions such as diabetes, heart failure, gastrointestinal illness, endometriosis, and COPD. V1C is comprehensive in ways that are lacking in digital health and its other predecessors: it has the potential to integrate multiple data streams, incorporate more frequent touches and check-ins over time, and manage a much wider range of chronic health conditions, improving lives and reducing disease burden now and in the future.

With pandemic-driven interest in virtual care running high, significant energy and resources are already flowing fast toward V1C. Some of the world’s largest innovators jumped into V1C early on: Verily, Alphabet’s life sciences company, launched Onduo in 2016 to disrupt the diabetes healthcare market, and is now well positioned to scale its solutions. Major insurers like Aetna and United now offer virtual-first plans to members, responding as organizations expand virtual options for employees. Amidst all this momentum, we have the opportunity to rethink the goals of healthcare innovation, but that means bringing together key stakeholders to demonstrate that sustainable V1C can redefine healthcare.

That was the immediate impetus for IMPACT, a consortium of V1C companies, investors, payers and patients founded last year to ensure access to high-quality, evidence-based V1C. Developed by our team at the Digital Medicine Society (DiMe) in collaboration with the American Telemedicine Association (ATA), IMPACT has begun to explore key issues that include giving patients more integrated experiences when accessing both virtual and brick-and-mortar care.

V1C is not, nor should it be, virtual-only care. In this new era of hybrid healthcare, success will be defined by how well providers help patients navigate the transitions. How do we smoothly hand a patient off from an onsite primary care physician to, say, a virtual cardiologist? How do we get information from a brick-and-mortar clinic to a digital portal? How do we manage dataflow while still staying HIPAA compliant? There are many complex regulatory implications for these new models, as well as an evolving landscape in terms of privacy, security and interoperability. It will be no small task for groups like IMPACT to determine the best path forward.

None of these factors matters unless the industry can recruit and retain clinicians. Our field is facing an unprecedented workforce crisis. Traditional healthcare is making clinicians miserable, and COVID has only accelerated the trend of overworked, disenchanted healthcare workers leaving in droves. Clinicians want more interactions with patients, and fewer with computer screens – call it “More face time, less FaceTime.” No new model will succeed unless the industry can more efficiently deploy its talent – arguably its most scarce and precious resource. V1C can help alleviate the increasing burden and frustration borne by individual physicians in today’s status quo.

In healthcare, new technological approaches inevitably provoke no shortage of skepticism. Past lessons from Silicon Valley-driven fixes have led to understandable cynicism. But V1C is a different breed of animal. By building healthcare around the patient, not the clinic, V1C can make healthcare work better for patients, payers and providers. We’re at a fork in the road: we can revert to a broken sick-care system, or dig in and do the hard work of figuring out how this future-forward healthcare system gets financed, organized and executed. As a field, we must find the courage and summon the energy to embrace this moment, and make it a moment of change.