Your Digital Avatar May One Day Get Sick Before You Do

Artificial neurons in a concept of artificial intelligence.

Artificial intelligence is everywhere, just not in the way you think it is.

"There's the perception of AI in the glossy magazines," says Anders Kofod-Petersen, a professor of Artificial Intelligence at the Norwegian University of Science and Technology. "That's the sci-fi version. It resembles the small guy in the movie AI. It might be benevolent or it might be evil, but it's generally intelligent and conscious."

"And this is, of course, as far from the truth as you can possibly get."

What Exactly Is Artificial Intelligence, Anyway?

Let's start with how you got to this piece. You likely came to it through social media: your Facebook account, your Twitter feed, or perhaps a Google search. AI influences all of those things, with machine learning helping to run the algorithms that decide what you see, when, and where. AI isn't the little humanoid figure; it's the system that controls the figure.

"AI is being confused with robotics," Eleonore Pauwels, Director of the Anticipatory Intelligence Lab with the Science and Technology Innovation Program at the Wilson Center, says. "What AI is right now is a data optimization system, a very powerful data optimization system."

The revolution in recent years hasn't come from the method scientists and other researchers use. The general ideas and philosophies have been around since the late 1960s. Instead, the big change has been the dramatic increase in computing power, primarily due to the development of neural networks. These networks, loosely designed after the human brain, are interconnected computers that have the ability to "learn." An AI, for example, can be taught to spot a picture of a cat by looking at hundreds of thousands of pictures that have been labeled "cat" and "learning" what a cat looks like. Or an AI can beat a human at Go, an achievement that just five years ago Kofod-Petersen thought wouldn't be accomplished for decades.

Medicine is the field where this expertise in perception tasks might have the most influence. It's already having an impact: iPhones use AI to detect cancer, Apple Watches alert the wearer to a heart problem, AI spots tuberculosis and the spread of breast cancer with higher accuracy than human doctors, and more. Every few months, another study demonstrates more possibilities. (The New Yorker published an article about medicine and AI last year, so you know it's a serious topic.)

But this is only the beginning. "I personally think genomics and precision medicine is where AI is going to be the biggest game-changer," Pauwels says. "It's going to completely change how we think about health, our genomes, and how we think about our relationship between our genotype and phenotype."

The Fundamental Breakthrough That Must Be Solved

To get there, however, researchers will need to make another breakthrough, and there's debate about how long that will take. Kofod-Petersen explains: "If we want to move from this narrow intelligence to this broader intelligence, that's a very difficult problem. It basically boils down to that we haven't got a clue about what intelligence actually is. We don't know what intelligence means in a biological sense. We think we might recognize it but we're not completely sure. There isn't a working definition. We kind of agree with the biologists that learning is an aspect of it. It's very difficult to argue that something is intelligent if it can't learn, and these algorithms are getting pretty good at learning stuff. What they are not good at is learning how to learn. They can learn specific tasks but we haven't approached how to teach them to learn to learn."

In other words, current AI is very, very good at identifying that a picture of a cat is, in fact, a cat – and getting better at doing so at an incredibly rapid pace – but the system only knows what a "cat" is because that's what a programmer told it a furry thing with whiskers and two pointy ears is called. If the programmer instead decided to label the training images as "dogs," the AI wouldn't say "no, that's a cat." Instead, it would simply call a furry thing with whiskers and two pointy ears a dog. AI systems cannot make the explicit inferences that humans make effortlessly, almost without thinking.
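
The point about labels can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the three numeric features, the sample values, the "dolphin" contrast class, and the choice of a nearest-centroid classifier, which is far simpler than the neural networks discussed above. It only shows that a supervised learner repeats whatever name the training labels used.

```python
from collections import defaultdict


def train(examples):
    """Average the feature vectors seen under each label (nearest-centroid)."""
    sums = {}
    counts = defaultdict(int)
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }


def predict(centroids, features):
    """Return the label whose average example is closest to `features`."""
    def sq_dist(label):
        return sum((a - b) ** 2 for a, b in zip(centroids[label], features))
    return min(centroids, key=sq_dist)


# Hypothetical features: (has_whiskers, ear_pointiness, body_length_m)
furry_pointy = [(1.0, 0.9, 0.45), (1.0, 0.8, 0.50), (1.0, 1.0, 0.40)]
long_finned = [(0.0, 0.0, 1.8), (0.0, 0.1, 2.1), (0.0, 0.0, 1.5)]


def build_model(name_for_furry):
    """Train on the same data, changing only the name given to one group."""
    examples = [(f, name_for_furry) for f in furry_pointy]
    examples += [(f, "dolphin") for f in long_finned]
    return train(examples)


new_animal = (1.0, 0.85, 0.48)  # a furry thing with whiskers and pointy ears
cat_model = build_model("cat")
dog_model = build_model("dog")

print(predict(cat_model, new_animal))  # cat
print(predict(dog_model, new_animal))  # dog
```

The two models see identical measurements. Only the label differs, and the prediction follows the label.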

Pauwels believes that the next step is for AI to transition from supervised to unsupervised learning. The latter means that the AI isn't answering questions that a programmer asks it ("Is this a cat?"). Instead, it's almost like it's looking at the data it has, coming up with its own questions and hypotheses, and answering them or putting them to the test. Combining this ability with the frankly insane processing power of the computer system could result in game-changing discoveries.
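
As a rough illustration of learning without labels, the sketch below groups data points using k-means clustering. This is one basic unsupervised technique chosen for illustration, not the kind of system Pauwels describes, and the sample points and the choice of two groups are assumptions. No one tells the program what the groups are; it finds them from the data alone.

```python
def kmeans(points, k, iterations=10):
    """Group points into k clusters without using any labels."""
    # Deterministic start for this sketch: use the first k points as centers.
    centroids = [points[i] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Assignment step: attach every point to its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(
                range(k),
                key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centroids[i])),
            )
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its assigned points.
        centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster))
            if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical unlabeled measurements; nothing says which group is which.
points = [(1.0, 1.1), (5.0, 5.2), (0.9, 1.0), (5.1, 4.9), (1.2, 0.8), (4.8, 5.0)]

centroids, clusters = kmeans(points, k=2)
for center, members in zip(centroids, clusters):
    print(center, members)
```

The program separates the points into two groups of three. Deciding what those groups mean is still left to a person.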

One company in China plans to develop a way to create a digital avatar of an individual person, then simulate that person's health and medical information into the future. In the not-too-distant future, a doctor could run diagnostics on a digital avatar, watching which medical conditions present themselves – cancer or a heart condition or anything, really – and help the real-life version prevent those conditions from beginning or treat them before they become life-threatening.

That, obviously, would be an incredibly powerful technology, and it's just one of the many possibilities that unsupervised AI presents. It's also terrifying in the potential for misuse. Even the term "unsupervised AI" brings to mind a dystopian landscape where AI takes over and enslaves humanity. (Pick your favorite movie. There are dozens.) This is a concern, something for developers, programmers, and scientists to consider as they build the systems of the future.

The Ethical Problem That Deserves More Attention

But the more immediate concern about AI is much more mundane. We think of AI as an unbiased system. That's incorrect. Algorithms, after all, are designed by someone or a team, and those people have explicit or implicit biases. Intentionally, or more likely not, they introduce these biases into the very code that forms the basis for the AI. Current systems have a bias against people of color. Facebook tried to rectify the situation and failed. These are two small examples of a larger, potentially systemic problem.

It's vital for the people developing AI today to be aware of these issues. And, yes, to avoid sending us to the brink of a James Cameron movie. But AI is too powerful a tool to ignore. Today, it's identifying cats and on the verge of detecting cancer. In not too many tomorrows, it will be on the forefront of medical innovation. If we are careful, aware, and smart, it will help simulate results, create designer drugs, and revolutionize individualized medicine. "AI is the only way to get there," Pauwels says.

No, the New COVID Vaccine Is Not "Morally Compromised"

As the proportion of vaccinated elderly people increases, family reunions become possible again -- but not if people reject the vaccines on religious grounds.

The approval of the Johnson & Johnson COVID-19 vaccine has been heralded as a major advance. A single-dose vaccine that is highly efficacious at removing the ability of the virus to cause severe disease, hospitalization, and death (even in the face of variants) is nothing less than pathbreaking. Anyone who is offered this vaccine should take it. However, one group advises its adherents to preferentially request the Moderna or Pfizer vaccines instead in the quest for morally "irreproachable" vaccines.

Is this group concerned about lower numerical efficacy in clinical trials? No, it seems that they have deemed the J&J vaccine "morally compromised." The group is the U.S. Conference of Catholic Bishops, and if something is "morally compromised," it is surely not the vaccine. (Notably, Pope Francis has not taken such a stance.)

At issue is a cell line used to manufacture the vaccine. Specifically, a cell line used to grow the adenovirus vector used in the vaccine. The purpose of the vector is to carry a genetic snippet of the coronavirus spike protein into the body, like a Trojan Horse ferrying in an enemy combatant, in order to safely trigger an immune response without any chance of causing COVID-19 itself.

The cell line of the vector, known as PER.C6, was derived from a fetus that was aborted in 1985. This cell line is prolific in biotechnology, as are other fetal-derived cell lines such as HEK-293 (human embryonic kidney), used in the manufacture of the AstraZeneca COVID-19 vaccine. Indeed, fetal cell lines are used in the manufacture of critical vaccines directed against pathogens such as hepatitis A, rubella, rabies, chickenpox, and shingles and were used to test the Moderna and Pfizer COVID-19 vaccines (which, accordingly, the U.S. Conference of Bishops deems to only raise moral "concerns").

As such, fetal cell lines from abortions are a common and critical component of biotechnology that we all rely on to improve our health. Such cell lines have been used to help find treatments for cancer, Ebola, and many other diseases.

Dr. Andrea Gambotto, a vaccine scientist at the University of Pittsburgh School of Medicine, explained to Science magazine last year why fetal cells are so important to vaccine development: "Cultured [nonhuman] animal cells can produce the same proteins, but they would be decorated with different sugar molecules, which—in the case of vaccines—runs the risk of failing to evoke a robust and specific immune response." Thus, the fetal cells' human origins are key to their effectiveness.

So why the opposition to this life-saving technology, especially in the midst of the deadliest pandemic in over a century? How could such a technology be "morally compromised" when morality, as I understand it, is a code of values to guide human life on Earth with the purpose of enhancing well-being?

By any measure, the J&J vaccine accomplishes that, since human life, not embryonic or fetal life, is the standard of value. An embryo or fetus in the earlier stages of development, while harboring the potential to grow into a human being, is not the moral equivalent of a person. Thus, creating life-saving medical technology using cells that would have otherwise been destroyed is not in conflict with a proper moral code. To me, it is nihilistic to oppose these vaccines on the grounds cited by the U.S. Conference of Bishops.

Reason, the rational faculty, is the human means of knowledge. It is what one should wield when approaching a scientific or health issue. Appeals from clerics, devoid of any need to tether their principles to this world, should not have any bearing on one's medical decision-making.

In the Dark Ages, the Catholic Church opposed all forms of scientific inquiry, even castigating science and curiosity as the "lust of the eyes": One early Middle Ages church father reveled in his rejection of reality and evidence, proudly declaring, "I believe because it is absurd." This organization, which tyrannized scientists such as Galileo and murdered the Italian cosmologist Bruno, today has shown itself to still harbor anti-science sentiments in its ranks.

It is my hope that the country's 50 million Catholics do not heed the U.S. Conference of Bishops' potentially deadly advice and instead obtain whichever vaccine is available to them as soon as possible. When judged using the correct standard of value, vaccines using fetal cell lines in their development are an unequivocal good -- while those who attempt to undermine them deserve a different category altogether.

Dr. Adalja is focused on emerging infectious disease, pandemic preparedness, and biosecurity. He has served on US government panels tasked with developing guidelines for the treatment of plague, botulism, and anthrax in mass casualty settings and the system of care for infectious disease emergencies, and as an external advisor to the New York City Health and Hospital Emergency Management Highly Infectious Disease training program, as well as on a FEMA working group on nuclear disaster recovery. Dr. Adalja is an Associate Editor of the journal Health Security. He was a coeditor of the volume Global Catastrophic Biological Risks, a contributing author for the Handbook of Bioterrorism and Disaster Medicine, the Emergency Medicine CorePendium, Clinical Microbiology Made Ridiculously Simple, UpToDate's section on biological terrorism, and a NATO volume on bioterrorism. He has also published in such journals as the New England Journal of Medicine, the Journal of Infectious Diseases, Clinical Infectious Diseases, Emerging Infectious Diseases, and the Annals of Emergency Medicine. He is a board-certified physician in internal medicine, emergency medicine, infectious diseases, and critical care medicine. Follow him on Twitter: @AmeshAA

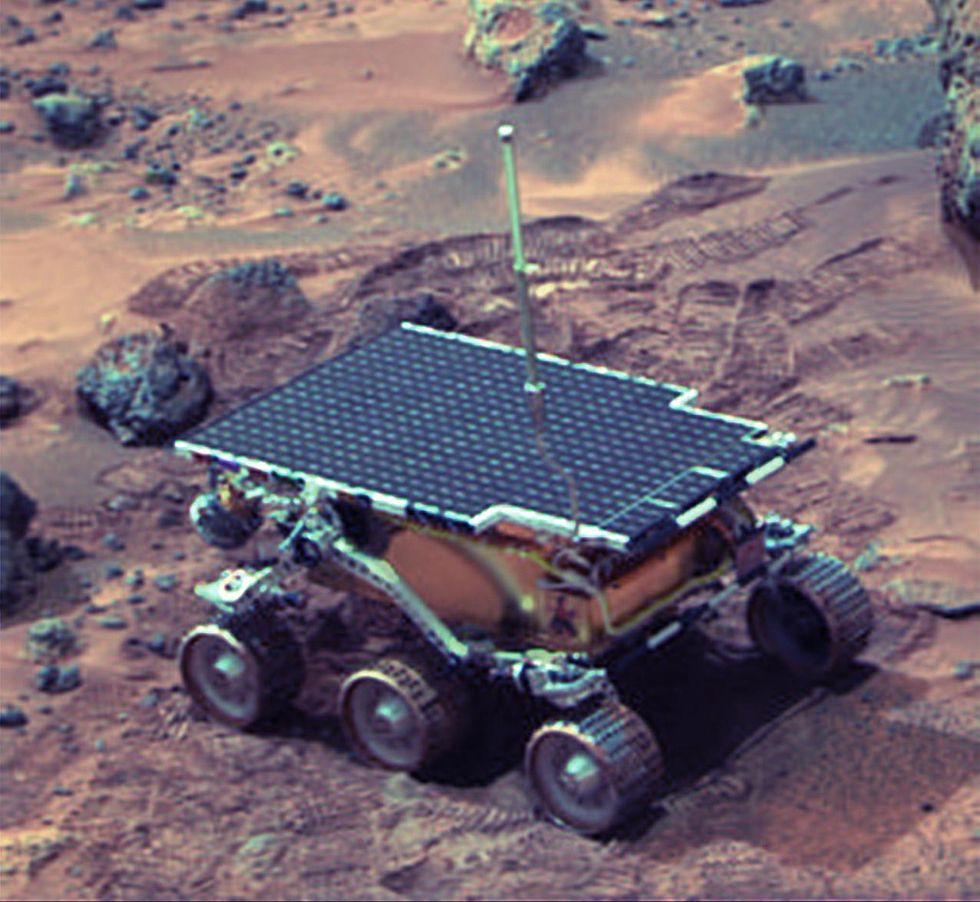

Donna Shirley pictured at her home in Tulsa, with a model of Sojourner, the Mars rover she was in charge of.

When NASA's Perseverance rover landed successfully on Mars on February 18, 2021, calling it "one giant leap for mankind" – as Neil Armstrong said when he set foot on the moon in 1969 – would have been inaccurate. This year actually marked the fifth time the U.S. space agency has put a remote-controlled robotic exploration vehicle on the Red Planet. And it was a female engineer named Donna Shirley who broke new ground for women in science as the manager of both the Mars Exploration Program and the 30-person team that built Sojourner, which became the first rover to land on Mars on July 4, 1997.

For Shirley, the Mars Pathfinder mission was the climax of her 32-year career at NASA's Jet Propulsion Laboratory (JPL) in Pasadena, California. The Oklahoma-born scientist, who earned her Master's degree in aerospace engineering from the University of Southern California, saw her profile skyrocket with media appearances from CNN to the New York Times, and her autobiography Managing Martians came out in 1998. Now 79 and living in a Tulsa retirement community, she still embraces her status as a female pioneer.

"Periodically, I'll hear somebody say they got into the space program because of me, and that makes me feel really good," Shirley told Leaps.org. "I look at the mission control area, and there are a lot of women in there. I'm quite pleased I was able to break the glass ceiling."

Her $25-million, 25-pound microrover – powered by solar energy and designed to get rock samples and test soil chemistry for evidence of life – was named after Sojourner Truth, a 19th-century Black abolitionist and women's rights activist. Unlike Mars Pathfinder, Shirley didn't have to travel more than 131 million miles to reach her goal, but her path to scientific fame as a woman sometimes resembled an asteroid field.

The Sojourner rover on Mars in 1997. NASA/JPL

As a high-IQ tomboy growing up in Wynnewood, Oklahoma (pop. 2,300), Shirley yearned to escape. She decided to become an engineer at age 10 and took flying lessons at 15. Her extraterrestrial aspirations were fueled by Ray Bradbury's The Martian Chronicles and Arthur C. Clarke's The Sands of Mars. Yet when she entered the University of Oklahoma (OU) in 1958, her freshman academic advisor initially told her: "Girls can't be engineers." She ignored him.

Years later, Shirley would combat such archaic thinking, succeeding at JPL with her creative, collaborative management style. "If you look at the literature, you'll find that teams that are either led by or heavily involved with women do better than strictly male teams," she noted.

However, her career trajectory stalled at OU. Burned out by her course load and distracted by a broken engagement to marry a fellow student, she switched her major to professional writing. After graduation, she applied her aeronautical background as a McDonnell Aircraft technical writer, but her boss, she says, harassed her and she faced gender-based hostility from male co-workers.

Returning to OU, Shirley finished her engineering degree and became a JPL aerodynamicist in 1966 after answering an ad in the St. Louis Post-Dispatch. At first, she was the only woman among the research center's 2,000-odd engineers. She wore many hats, from designing planetary atmospheric entry vehicles to picking the launch date of November 4, 1973, for Mariner 10's mission to Venus and Mercury.

By the mid-1980s, she was managing teams that focused on robotics and Mars, delivering creative solutions when NASA budget cuts loomed. In 1989, the same year the Sojourner microrover concept was born, President George H.W. Bush announced his Space Exploration Initiative, including plans for a human mission to Mars by 2019.

That target, of course, wasn't attained, despite huge advances in technology and our understanding of the Martian environment. Today, Shirley believes humans could land on Mars by 2030. She became the founding director of the Science Fiction Museum and Hall of Fame in Seattle in 2004 after leaving NASA, and to this day, she enjoys checking out pop culture portrayals of Mars landings – even if they're not always accurate.

After the novel The Martian, which was later adapted into the hit film starring Matt Damon, was published in 2011, Shirley phoned author Andy Weir: "You've got a major mistake in here. It says there's a storm that tries to blow the rocket over. But actually, the Mars atmosphere is so thin, it would never blow a rocket over!"

Fearlessly speaking her mind and seeking the stars helped Donna Shirley make history. However, a 2019 Washington Post story noted: "Women make up only about a third of NASA's workforce. They comprise just 28 percent of senior executive leadership positions and are only 16 percent of senior scientific employees." Whether it's traveling to Mars or trending toward gender equality, we've still got a long way to go.