Your Digital Avatar May One Day Get Sick Before You Do

Artificial neurons in a concept of artificial intelligence.

Artificial intelligence is everywhere, just not in the way you think it is.

"There's the perception of AI in the glossy magazines," says Anders Kofod-Petersen, a professor of Artificial Intelligence at the Norwegian University of Science and Technology. "That's the sci-fi version. It resembles the small guy in the movie AI. It might be benevolent or it might be evil, but it's generally intelligent and conscious."

"And this is, of course, as far from the truth as you can possibly get."

What Exactly Is Artificial Intelligence, Anyway?

Let's start with how you got to this piece. You likely came to it through social media, such as your Facebook account or Twitter feed, or perhaps through a Google search. AI influences all of those things, with machine learning helping to run the algorithms that decide what you see, when, and where. AI isn't the little humanoid figure; it's the system that controls the figure.

"AI is being confused with robotics," Eleonore Pauwels, Director of the Anticipatory Intelligence Lab with the Science and Technology Innovation Program at the Wilson Center, says. "What AI is right now is a data optimization system, a very powerful data optimization system."

The revolution in recent years hasn't come from the method scientists and other researchers use. The general ideas and philosophies have been around since the late 1960s. Instead, the big change has been the dramatic increase in computing power, primarily due to the development of neural networks. These networks, loosely designed after the human brain, are interconnected computers that have the ability to "learn." An AI, for example, can be taught to spot a picture of a cat by looking at hundreds of thousands of pictures that have been labeled "cat" and "learning" what a cat looks like. Or an AI can beat a human at Go, an achievement that just five years ago Kofod-Petersen thought wouldn't be accomplished for decades.

Medicine is the field where this expertise in perception tasks might have the most influence. It's already having an impact as iPhones use AI to detect cancer, Apple Watches alert the wearer to a heart problem, AI spots tuberculosis and the spread of breast cancer with a higher accuracy than human doctors, and more. Every few months, another study demonstrates more possibilities. (The New Yorker published an article about medicine and AI last year, so you know it's a serious topic.)

But this is only the beginning. "I personally think genomics and precision medicine is where AI is going to be the biggest game-changer," Pauwels says. "It's going to completely change how we think about health, our genomes, and how we think about our relationship between our genotype and phenotype."

The Fundamental Breakthrough That Must Be Solved

To get there, however, researchers will need to make another breakthrough, and there's debate about how long that will take. Kofod-Petersen explains: "If we want to move from this narrow intelligence to this broader intelligence, that's a very difficult problem. It basically boils down to that we haven't got a clue about what intelligence actually is. We don't know what intelligence means in a biological sense. We think we might recognize it but we're not completely sure. There isn't a working definition. We kind of agree with the biologists that learning is an aspect of it. It's very difficult to argue that something is intelligent if it can't learn, and these algorithms are getting pretty good at learning stuff. What they are not good at is learning how to learn. They can learn specific tasks but we haven't approached how to teach them to learn to learn."

In other words, current AI is very, very good at identifying that a picture of a cat is, in fact, a cat – and getting better at doing so at an incredibly rapid pace – but the system only knows what a "cat" is because that's what a programmer told it a furry thing with whiskers and two pointy ears is called. If the programmer instead decided to label the training images as "dogs," the AI wouldn't say "no, that's a cat." Instead, it would simply call a furry thing with whiskers and two pointy ears a dog. AI systems lack the kind of inference that humans perform effortlessly, almost without thinking.
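To make the label-swap point concrete, here is a minimal sketch of a toy classifier. Everything in it is an assumption made for illustration: the two hand-picked features (ear pointiness and snout length), the numbers, and the nearest-centroid method itself, which is far simpler than the neural networks discussed above. The point is only that the same code, trained on the same animals with the labels swapped, confidently gives the opposite answer.

```python
# Toy nearest-centroid classifier. The features and numbers are made up for
# illustration; real image classifiers learn from pixels, not two hand-picked values.

def train(examples):
    """Average the feature vectors for each label to get one centroid per label."""
    sums, counts = {}, {}
    for features, label in examples:
        total = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            total[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in total] for label, total in sums.items()}


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical features: (ear pointiness, snout length), each on a 0-1 scale.
furry_pointy_eared = [(0.9, 0.2), (0.8, 0.1), (0.95, 0.25)]
floppy_long_snouted = [(0.2, 0.8), (0.3, 0.9), (0.1, 0.7)]

# Same animals, two labelings: one conventional, one with the names swapped.
correct = [(f, "cat") for f in furry_pointy_eared] + [(f, "dog") for f in floppy_long_snouted]
swapped = [(f, "dog") for f in furry_pointy_eared] + [(f, "cat") for f in floppy_long_snouted]

new_animal = (0.85, 0.15)  # pointy ears, short snout
label_with_correct_training = predict(train(correct), new_animal)
label_with_swapped_training = predict(train(swapped), new_animal)
print(label_with_correct_training, label_with_swapped_training)  # cat dog
```

The classifier never objects to the swapped labels. It has no notion of what a cat is beyond the name it was handed.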

Pauwels believes that the next step is for AI to transition from supervised to unsupervised learning. The latter means that the AI isn't answering questions that a programmer asks it ("Is this a cat?"). Instead, it's almost like it's looking at the data it has, coming up with its own questions and hypotheses, and answering them or putting them to the test. Combining this ability with the frankly insane processing power of the computer system could result in game-changing discoveries.
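For a sense of what learning without labels looks like in its simplest textbook form, here is a small sketch of two-cluster k-means on made-up numbers. The data, the function name `two_means`, and the choice of k-means are all assumptions for illustration, and this falls well short of the hypothesis-generating systems Pauwels describes. It does show the core idea: nobody tells the program what the groups are, and it still finds them.

```python
# Toy k-means with two clusters on unlabeled 1-D data (illustrative values only).
# Assumes the input contains at least two distinct values.

def two_means(values, iterations=10):
    """Split numbers into two groups without ever being told what the groups are."""
    low, high = min(values), max(values)  # simple deterministic starting guesses
    for _ in range(iterations):
        # Assign each value to whichever current center is nearer.
        group_low = [v for v in values if abs(v - low) <= abs(v - high)]
        group_high = [v for v in values if abs(v - low) > abs(v - high)]
        # Move each center to the average of its group.
        low = sum(group_low) / len(group_low)
        high = sum(group_high) / len(group_high)
    return sorted(group_low), sorted(group_high)


# Hypothetical unlabeled measurements, e.g. one reading per person.
readings = [1.0, 1.2, 0.9, 5.1, 4.8, 5.3, 1.1]
group_a, group_b = two_means(readings)
print(group_a, group_b)  # [0.9, 1.0, 1.1, 1.2] [4.8, 5.1, 5.3]
```

What the two groups mean is still left to a human to interpret, which is part of why the step Pauwels describes is a hard one.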

One company in China plans to develop a way to create a digital avatar of an individual person, then simulate that person's health and medical information into the future. In the not-too-distant future, a doctor could run diagnostics on a digital avatar, watching which medical conditions present themselves – cancer or a heart condition or anything, really – and help the real-life version prevent those conditions from beginning or treat them before they become life-threatening.

That, obviously, would be an incredibly powerful technology, and it's just one of the many possibilities that unsupervised AI presents. It's also terrifying in the potential for misuse. Even the term "unsupervised AI" brings to mind a dystopian landscape where AI takes over and enslaves humanity. (Pick your favorite movie. There are dozens.) This is a concern, something for developers, programmers, and scientists to consider as they build the systems of the future.

The Ethical Problem That Deserves More Attention

But the more immediate concern about AI is much more mundane. We think of AI as an unbiased system. That's incorrect. Algorithms, after all, are designed by someone or a team, and those people have explicit or implicit biases. Intentionally, or more likely not, they introduce these biases into the very code that forms the basis for the AI. Current systems have a bias against people of color. Facebook tried to rectify the situation and failed. These are two small examples of a larger, potentially systemic problem.

It's vital and necessary for the people developing AI today to be aware of these issues. And, yes, avoid sending us to the brink of a James Cameron movie. But AI is too powerful a tool to ignore. Today, it's identifying cats and on the verge of detecting cancer. In not too many tomorrows, it will be at the forefront of medical innovation. If we are careful, aware, and smart, it will help simulate results, create designer drugs, and revolutionize individualized medicine. "AI is the only way to get there," Pauwels says.

The Friday Five: How to exercise for cancer prevention

How to exercise for cancer prevention. Plus, a device that brings relief to back pain, ingredients for reducing Alzheimer's risk, the world's oldest disease could make you young again, and more.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here are the promising studies covered in this week's Friday Five:

- How to exercise for cancer prevention

- A device that brings relief to back pain

- Ingredients for reducing Alzheimer's risk

- Is the world's oldest disease the fountain of youth?

- Scared of crossing bridges? Your phone can help

New approach to brain health is sparking memories

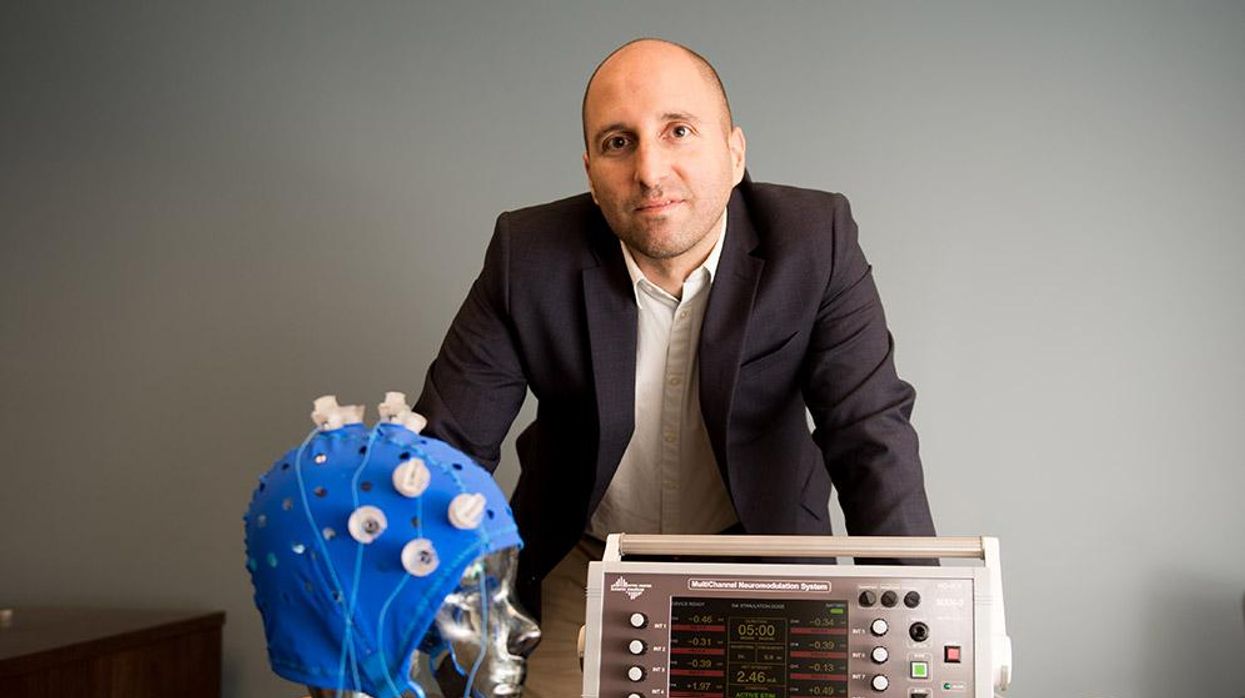

This fall, Robert Reinhart of Boston University published a study finding that electrical stimulation can boost memory, and Reinhart was surprised to discover the effects lasted a full month.

What if a few painless electrical zaps to your brain could help you recall names, perform better on Wordle or even ward off dementia?

This is where neuroscientists are going in efforts to stave off age-related memory loss as well as Alzheimer’s disease. Medications have shown limited effectiveness in reversing or managing loss of brain function so far. But new studies suggest that firing up an aging neural network with electrical or magnetic current might keep brains spry as we age.

Welcome to non-invasive brain stimulation (NIBS). No surgery or anesthesia is required. One day, a jolt in the morning with your own battery-operated kit could replace your wake-up coffee.

Scientists believe brain circuits tend to uncouple as we age. Since brain neurons communicate by exchanging electrical impulses with each other, the breakdown of these links and associations could be what causes the “senior moment”—when you can’t remember the name of the movie you just watched.

In 2019, Boston University researchers led by Robert Reinhart, director of the Cognitive and Clinical Neuroscience Laboratory, showed that memory loss in healthy older adults is likely caused by these disconnected brain networks. When Reinhart and his team stimulated two key areas of the brain with mild electrical current, they were able to bring the brains of older adult subjects back into sync — enough so that their ability to remember small differences between two images matched that of much younger subjects for at least 50 minutes after the testing stopped.

Reinhart wowed the neuroscience community once again this fall. His newer study in Nature Neuroscience presented 150 healthy participants, ages 65 to 88, who were able to recall more words on a given list after 20 minutes of low-intensity electrical stimulation sessions over four consecutive days. This amounted to a 50 to 65 percent boost in their recall.

Even Reinhart was surprised to discover the enhanced performance of his subjects lasted a full month when they were tested again later. Those who benefited most were the participants who were the most forgetful at the start.

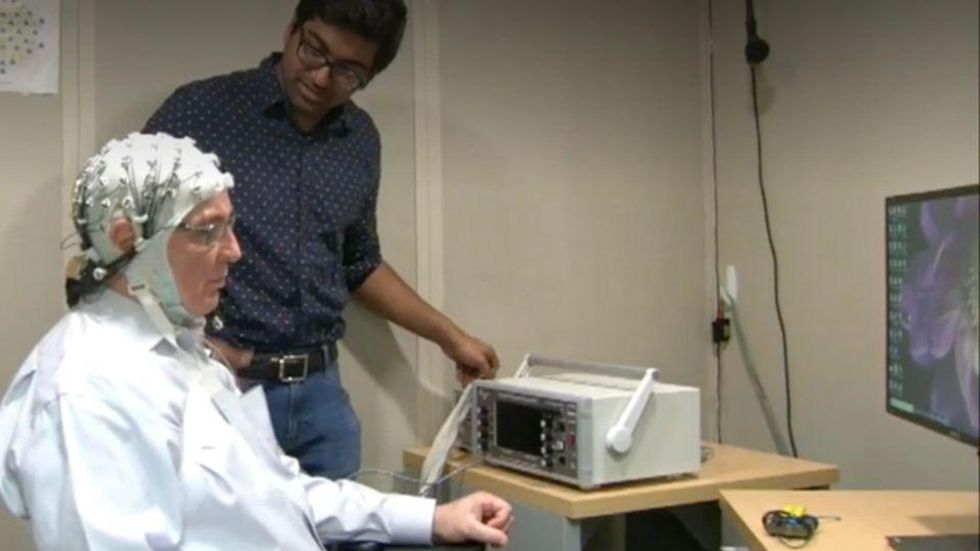

An older person participates in Robert Reinhart's research on brain stimulation.

Robert Reinhart

Reinhart’s subjects only suffered normal age-related memory deficits, but NIBS has great potential to help people with cognitive impairment and dementia, too, says Krista Lanctôt, the Bernick Chair of Geriatric Psychopharmacology at Sunnybrook Health Sciences Center in Toronto. Plus, “it is remarkably safe,” she says.

Lanctôt was the senior author on a meta-analysis of brain stimulation studies published last year on people with mild cognitive impairment or later stages of Alzheimer’s disease. The review concluded that magnetic stimulation to the brain significantly improved the research participants’ neuropsychiatric symptoms, such as apathy and depression. The stimulation also enhanced global cognition, which includes memory, attention, executive function and more.

This is the frontier of neuroscience.

The two main forms of NIBS – and many questions surrounding them

There are two types of NIBS. They differ based on whether electrical or magnetic stimulation is used to create the electric field, the type of device that delivers the electrical current and the strength of the current.

Transcranial Electrical Stimulation (tES) is an umbrella term for a group of techniques using low-intensity electrical currents to manipulate activity in the brain. The current is delivered to the scalp or forehead via electrodes attached to a nylon elastic cap or rubber headband.

Variations include how the current is delivered—in an alternating pattern or in a constant, direct mode, for instance. Tweaking frequency, potency or target brain area can produce different effects as well. Reinhart’s 2022 study demonstrated that low or high frequencies and alternating currents were uniquely tied to either short-term or long-term memory improvements.

Sessions may be 20 minutes per day over the course of several days or two weeks. “[The subject] may feel a tingling, warming, poking or itching sensation,” says Reinhart, which typically goes away within a minute.

The other main approach to NIBS is Transcranial Magnetic Stimulation (TMS). It involves the use of an electromagnetic coil that is held or placed against the forehead or scalp to activate nerve cells in the brain through short pulses. The stimulation is stronger than tES, with a magnetic field similar in strength to that of a magnetic resonance imaging (MRI) scan.

The subject may feel a slight knocking or tapping on the head during a 20-to-60-minute session. Scalp discomfort and headaches are reported by some; in very rare cases, a seizure can occur.

No head-to-head trials have been conducted yet to evaluate the differences and effectiveness between electrical and magnetic current stimulation, notes Lanctôt, who is also a professor of psychiatry and pharmacology at the University of Toronto. Although TMS was approved by the FDA in 2008 to treat major depression, both techniques are considered experimental for the purpose of cognitive enhancement.

“One attractive feature of tES is that it’s inexpensive—one-fifth the price of magnetic stimulation,” Reinhart notes.

Don’t confuse either of these procedures with the horrors of electroconvulsive therapy (ECT) in the 1950s and ’60s. ECT is a more powerful, riskier procedure used only as a last resort in treating severe mental illness today.

Clinical studies on NIBS remain scarce. Standardized parameters and measures for testing have not been developed. The high heterogeneity among the many existing small NIBS studies makes it difficult to draw general conclusions. Few of the studies have been replicated and inconsistencies abound.

Scientists are still lacking so much fundamental knowledge about the brain and how it works, says Reinhart. “We don’t know how information is represented in the brain or how it’s carried forward in time. It’s more complex than physics.”

Lanctôt’s meta-analysis showed improvements in global cognition from delivering the magnetic form of the stimulation to people with Alzheimer’s, and this finding was replicated in an analysis in the Journal of Prevention of Alzheimer’s Disease this fall. Neither meta-analysis found clear evidence that applying the electrical currents was helpful for Alzheimer’s subjects, but Lanctôt suggests this might be merely because the sample size for tES was smaller than that of the groups that received TMS.

At the same time, London neuroscientist Marco Sandrini, senior lecturer in psychology at the University of Roehampton, critically reviewed a series of studies on the effects of tES on episodic memory. Often declining with age, episodic memory relates to recalling a person’s own experiences from the past. Sandrini’s review concluded that delivering tES to the prefrontal or temporoparietal cortices of the brain might enhance episodic memory in older adults with Alzheimer’s disease and amnestic mild cognitive impairment (the predementia phase of Alzheimer’s when people start to have symptoms).

Researchers readily tick off studies needed to explore, clarify and validate existing NIBS data. What is the optimal stimulus session frequency, spacing and duration? How intense should the stimulus be and where should it be targeted for what effect? How might genetics or degree of brain impairment affect responsiveness? Would adjunct medication or cognitive training boost positive results? Could administering the stimulus while someone sleeps expedite memory consolidation?

While Sandrini’s review reported improvements induced by tES in the recall or recognition of words and images, there is no evidence it will translate into improvements in daily activities. This is another question that will require more research and testing, Sandrini notes.

Where the science is headed

Learning how to apply precision medicine to NIBS is the next focus in advancing this technology, says Shankar Tumati, a post-doctoral fellow working with Lanctôt.

There is great variability in each person’s brain anatomy—the thickness of the skull, the brain’s unique folds, the amount of cerebrospinal fluid. All of these structural differences impact how electrical or magnetic stimulation is distributed in the brain and ultimately the effects.

Using MRI or another brain scan along with computational modeling of the current flow, a clinician could create a treatment that is customized to each person’s brain, from where to put the electrodes to determining the exact dose and duration of stimulation needed to achieve lasting results, Sandrini says.

Above all, most neuroscientists say that large-scale research studies over long periods of time are necessary to confirm the safety and durability of this therapy for the purpose of boosting memory. Short of that, there can be no FDA approval or medical regulation for this clinical use.

Lanctôt urges people to seek out clinical NIBS trials in their area if they want to see the science advance. “That is how we’ll find the answers,” she says, predicting it will take 5 to 10 years to develop each additional clinical application of NIBS. Ultimately, she predicts that reining in Alzheimer’s disease and mild cognitive impairment will require a multi-pronged approach that includes lifestyle and medications, too.

Sandrini believes that scientific efforts should focus on preventing or delaying Alzheimer’s. “We need to start intervention earlier—as soon as people start to complain about forgetting things,” he says. “Changes in the brain start 10 years before [there is a problem]. Once Alzheimer’s develops, it is too late.”