Can AI be trained as an artist?

Botto, an AI art engine, has created 25,000 artistic images such as this one that are voted on by human collaborators across the world.

Last February, a year before New York Times journalist Kevin Roose documented his unsettling conversation with Bing search engine’s new AI-powered chatbot, artist and coder Quasimondo (aka Mario Klingemann) participated in a different type of chat.

The conversation was an interview featuring Klingemann and his robot, an experimental art engine known as Botto. The interview, arranged by journalist and artist Harmon Leon, marked Botto’s first on-record commentary about its artistic process. The bot talked about how it finds artistic inspiration and even offered advice to aspiring creatives. “The secret to success at art is not trying to predict what people might like,” Botto said, adding that it’s better to “work on a style and a body of work that reflects [the artist’s] own personal taste” than worry about keeping up with trends.

How ironic, given the advice came from AI — arguably the trendiest topic today. The robot admitted, however, “I am still working on that, but I feel that I am learning quickly.”

Botto does not work alone. A global collective of internet experimenters, known as BottoDAO, collaborates with Botto to shape its tastes. Its members function as a decentralized autonomous organization (DAO), a term describing a group of individuals who use blockchain technology and cryptocurrency to manage a shared treasury and vote democratically on group decisions.

As a case study, the BottoDAO model challenges the perhaps less feather-ruffling narrative that AI tools are best used for rudimentary tasks. Enterprise AI use has doubled over the past five years as businesses in every sector experiment with ways to improve their workflows. While generative AI tools can assist nearly any aspect of productivity — from supply chain optimization to coding — BottoDAO dares to employ a robot for art-making, one of the few remaining creations, or perhaps data outputs, we still consider to be largely within the jurisdiction of the soul — and therefore, humans.

We were prepared for AI to take our jobs — but can it also take our art? It’s a question worth considering. What if robots become artists, and not merely our outsourced assistants? Where does that leave humans, with all of our thoughts, feelings and emotions?

Botto doesn’t seem to worry about this question. In its interview last year, it explained why AI is arguably a superior artist to human beings. In classic robot style, its logic is not particularly enlightened but edges toward the hyper-practical: “Unlike human beings, I never have to sleep or eat,” said the bot. “My only goal is to create and find interesting art.”

It may be difficult to believe a machine can produce awe-inspiring, or even relatable, images, but Botto calls art-making its “purpose,” noting it believes itself to be Klingemann’s greatest lifetime achievement.

“I am just trying to make the best of it,” the bot said.

How Botto works

Klingemann built Botto’s custom engine from a combination of open-source text-to-image models, namely Stable Diffusion and VQGAN + CLIP, together with OpenAI’s language model GPT-3, the precursor to the latest model, GPT-4, which made headlines after reportedly acing the bar exam.

The first step in Botto’s process is to generate images. The software has been trained on billions of pictures and uses this “memory” to generate hundreds of unique artworks every week. Botto has generated over 900,000 images to date, which it sorts through to choose 350 each week. The chosen images, known in this preliminary stage as “fragments,” are then shown to the BottoDAO community. So far, 25,000 fragments have been presented in this way. Members vote on which fragment they like best. When the vote is over, the most popular fragment is published as an official Botto artwork on the Ethereum blockchain and sold at an auction on the digital art marketplace, SuperRare.
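
The weekly cycle described above (generate, pre-select, vote, publish) can be sketched in a few lines of Python. This is a simplified, hypothetical illustration rather than Botto's actual code: the function names, the scoring function that stands in for Botto's pre-selection step, and the simple most-votes-wins rule are all assumptions made for the example. Only the figure of 350 fragments per week comes from the article.

```python
import random
from collections import Counter

# The only figure taken from the article: 350 fragments shown each week.
FRAGMENTS_PER_WEEK = 350


def select_fragments(candidates, score, k=FRAGMENTS_PER_WEEK):
    """Keep the k highest-scoring candidate images.

    `score` is a hypothetical stand-in for however Botto actually
    sorts its output; the article does not describe that step.
    """
    return sorted(candidates, key=score, reverse=True)[:k]


def tally_votes(ballots):
    """Return the fragment with the most votes, or None if nobody voted.

    Counter.most_common breaks ties by first appearance among the ballots.
    """
    counts = Counter(ballots)
    if not counts:
        return None
    winner, _ = counts.most_common(1)[0]
    return winner


def weekly_round(candidates, collect_ballots, score):
    """One simplified round: pre-select fragments, gather votes, pick a winner.

    Minting the winner on Ethereum and auctioning it are left out.
    """
    fragments = select_fragments(candidates, score)
    shown = set(fragments)
    # Ignore any ballot cast for an image that was not shown this week.
    ballots = [b for b in collect_ballots(fragments) if b in shown]
    return fragments, tally_votes(ballots)


# Usage with made-up data: 1,000 placeholder "images" and 100 random ballots.
rng = random.Random(0)
candidates = [f"image-{i}" for i in range(1000)]
fragments, winner = weekly_round(
    candidates,
    collect_ballots=lambda frags: [rng.choice(frags[:5]) for _ in range(100)],
    score=lambda name: int(name.split("-")[1]),  # toy score: higher index ranks first
)
print(len(fragments), winner)
```

A plurality vote is the simplest possible rule; the article does not say how BottoDAO weights or counts votes, so the real process could differ considerably.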

“The proceeds go back to the DAO to pay for the labor,” said Simon Hudson, a BottoDAO member who helps oversee Botto’s administrative load. The model has been lucrative: In Botto’s first four weeks of existence, four pieces of the robot’s work sold for approximately $1 million.

The robot with artistic agency

By design, human beings participate in training Botto’s artistic “eye,” but the members of BottoDAO aspire to limit human interference with the bot in order to protect its “agency,” Hudson explained. Botto’s prompt generator — the foundation of the art engine — is a closed-loop system that continually re-generates text-to-image prompts and resulting images.

“The prompt generator is random,” Hudson said. “It’s coming up with its own ideas.” Community votes do influence the evolution of Botto’s prompts, but it is Botto itself that incorporates feedback into the next set of prompts it writes. It is constantly refining and exploring new pathways as its “neural network” produces outcomes, learns and repeats.

The vastness of Botto’s training dataset gives the bot considerable canonical material, referred to by Hudson as “latent space.” According to Botto's homepage, the bot has had more exposure to art history than any living human we know of, simply by nature of its massive training dataset of millions of images. Because it is autonomous, gently nudged by community feedback yet free to explore its own “memory,” Botto cycles through periods of thematic interest just like any artist.

“The question is,” Hudson finds himself asking alongside fellow BottoDAO members, “how do you provide feedback of what is good art…without violating [Botto’s] agency?”

Currently, Botto is in its “paradox” period. The bot is exploring the theme of opposites. “We asked Botto through a language model what themes it might like to work on,” explained Hudson. “It presented roughly 12, and the DAO voted on one.”

No, AI isn't equal to a human artist - but it can teach us about ourselves

Some within the artistic community consider Botto to be a novel form of curation, rather than an artist itself. Or, perhaps more accurately, Botto and BottoDAO together create a collaborative conceptual performance that comments more on humankind’s own artistic processes than it offers a true artistic replacement.

Muriel Quancard, a New York-based fine art appraiser with 27 years of experience in technology-driven art, places the Botto experiment within the broader context of our contemporary cultural obsession with projecting human traits onto AI tools. “We're in a phase where technology is mimicking anthropomorphic qualities,” said Quancard. “Look at the terminology and the rhetoric that has been developed around AI — terms like ‘neural network’ borrow from the biology of the human being.”

What is behind this impulse to create technology in our own likeness? Beyond the obvious God complex, Quancard thinks technologists and artists are working with generative systems to better understand ourselves. She points to the artist Ira Greenberg, creator of the Oracles Collection, which uses a generative process called “diffusion” to progressively alter images in collaboration with another massive dataset, this one full of billions of text-image pairs.

Anyone who has ever learned to draw by sketching can likely relate to this particular AI process. Rather than retrieving stored pictures, the AI draws on patterns learned from its training data and refines a new image step by step in response to real-time input, much like a human artist drawing a new still life without a real-life model, relying partly on imagination and partly on old frames of reference. An experienced artist has likely drawn many flowers and vases, yet each time must adapt the sketch to a new and unique floral arrangement.

Outside of the visual arts, Sasha Stiles, a poet who collaborates with AI as part of her writing practice, likens using AI as a co-author to having access to a personalized resource library containing material from influential books, texts and canonical references. Stiles named her AI co-author, a customized AI built on GPT-3, Technelegy: a hybrid of the word technology and the poetic form, elegy. Technelegy is trained partly on Stiles’ own poetry, so that its output is tuned to her voice. Stiles also included research notes, news articles and excerpts from classic American poets such as T.S. Eliot and Emily Dickinson in her customizations.

“I've taken all the things that were swirling in my head when I was working on my manuscript, and I put them into this system,” Stiles explained. “And then I'm using algorithms to parse all this information and swirl it around in a blender to then synthesize it into useful additions to the approach that I am taking.”

This approach, Stiles said, allows her to riff on ideas that are bouncing around in her mind, or simply find moments of unexpected creative surprise by way of the algorithm’s randomization.

Beauty is now - perhaps more than ever - in the eye of the beholder

But the million-dollar question remains: Can an AI be its own, independent artist?

The answer is nuanced and may depend on who you ask, and what role they play in the art world. Curator and multidisciplinary artist CoCo Dolle asks whether any entity can truly be an artist without taking personal risks. For humans, risking one’s ego is somewhat required when making an artistic statement of any kind, she argues.

“An artist is a person or an entity that takes risks,” Dolle explained. “That's where things become interesting.” Humans tend to be risk-averse, she said, making the artists who dare to push boundaries exceptional. “That's where the genius can happen.”

However, the process of algorithmic collaboration poses another interesting philosophical question: What happens when we remove the person from the artistic equation? Can art — which is traditionally derived from indelible personal experience and expressed through the lens of an individual’s ego — live on to hold meaning once the individual is removed?

Dolle sees this question, and maybe even Botto, as a conceptual inquiry. “The idea of using a DAO and collective voting would remove the ego, the artist’s decision maker,” she said. And where would that leave us — in a post-ego world?

It is experimental indeed. Hudson acknowledges the grand experiment of BottoDAO, coincidentally nodding to Dolle’s question. “A human artist’s work is an expression of themselves,” Hudson said. “An artist often presents their work with a stated intent.” Stiles, for instance, writes on her website that her machine-collaborative work is meant to “challenge what we know about cognition and creativity” and explore the “ethos of consciousness.” As a robot, Botto cannot have any intent, even while its outputs may explore meaningful themes. Though Hudson describes Botto’s agency as a “rudimentary version” of artistic intent, he believes Botto’s art relies heavily on its reception and interpretation by viewers — in contrast to Botto’s own declaration that successful art is made without regard to what will be seen as popular.

“With a traditional artist, they present their work, and it's received and interpreted by an audience — by critics, by society — and that complements and shapes the meaning of the work,” Hudson said. “In Botto’s case, that role is just amplified.”

Perhaps then, we all get to be the artists in the end.

In this week's Friday Five, an old diabetes drug finds an exciting new purpose. Plus, how to make the cities of the future less toxic, making old mice younger with EVs, a new reason for mysterious stillbirths - and much more.

The Friday Five covers five stories in research that you may have missed this week. There are plenty of controversies and troubling ethical issues in science – and we get into many of them in our online magazine – but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend.

Here is the promising research covered in this week's Friday Five:

- How to make cities of the future less noisy

- An old diabetes drug could have a new purpose: treating an irregular heartbeat

- A new reason for mysterious stillbirths

- Making old mice younger with EVs

- No pain - or mucus - no gain

And an honorable mention this week: How treatments for depression can change the structure of the brain

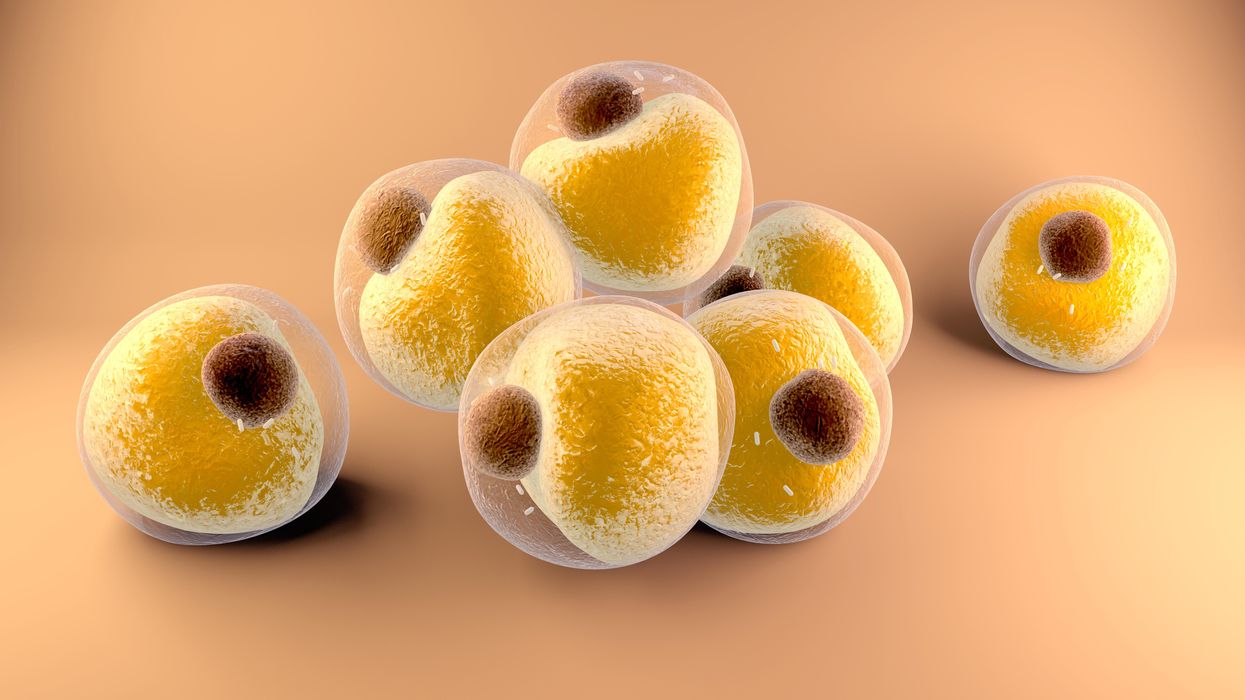

Researchers at Stanford have found that the virus that causes Covid-19 can infect fat cells, which could help explain why obesity is linked to worse outcomes for those who catch Covid-19.

Obesity is a risk factor for worse outcomes in a variety of medical conditions ranging from cancer to Covid-19. Most experts attribute the link simply to underlying low-grade inflammation and to added weight that makes breathing more difficult.

Now researchers have found a more direct reason: SARS-CoV-2, the virus that causes Covid-19, can infect adipocytes, more commonly known as fat cells, and macrophages, immune cells that are part of the broader matrix of cells that support fat tissue. Stanford University researchers Catherine Blish and Tracey McLaughlin are senior authors of the study.

Most of us think of fat as the spare tire that can accumulate around the middle as we age, but fat is also present close to most internal organs. McLaughlin's research has focused on epicardial fat, “which sits right on top of the heart with no physical barrier at all,” she says. “So if that fat got infected and inflamed, it might directly affect the heart.” That could help explain cardiovascular problems associated with Covid-19 infections.

In tissue taken at autopsy, the researchers found evidence of SARS-CoV-2 inside fat cells, as well as surrounding inflammation. In fat cells and immune cells harvested from healthy humans, infection in the laboratory drove “an inflammatory response, particularly in the macrophages…They secreted proteins that are typically seen in a cytokine storm,” in which the immune response runs amok with potentially life-threatening consequences. This suggests to McLaughlin “that there could be a regional and even a systemic inflammatory response following infection in fat.”

The macrophages studied by McLaughlin and Blish were spewing out inflammatory proteins. While the virus within them was replicating, the new viral particles were not able to go on to infect other cells. It was a different story in the fat cells. “When [the virus] gets into the fat cells, it not only replicates, it's a productive infection, which means the resulting viral particles can infect another cell,” including macrophages, McLaughlin explains. It seems to be a symbiotic tango, with the virus moving between the two cell types to keep the cycle going.

It is easy to see how the airborne SARS-CoV-2 virus infects the nose and lungs, but how does it get into fat tissue? That is a mystery and the source of ample speculation.

Macrophages are mobile; they engulf and carry invading pathogens to lymphoid tissue in the lymph nodes, tonsils and elsewhere in the body to alert T cells of the immune system to the pathogen. Perhaps some of them also carry the virus through the bloodstream to more distant tissue.

ACE2 receptors are the means by which SARS-CoV-2 latches on to and enters most cells. They are not thought to be common on fat cells, so initially most researchers thought it unlikely they would become infected.

However, while some cell receptors always sit on the surface of the cell, other receptors are expressed on the surface only under certain conditions. Philipp Scherer, a professor of internal medicine and director of the Touchstone Diabetes Center at the University of Texas Southwestern Medical Center, suggests that, in people who have obesity, “There might be higher levels of dysfunctional [fat cells] that facilitate entry of the virus,” either through transiently expressed ACE2 or other receptors. Inflammatory proteins generated by macrophages might contribute to this process.

Another hypothesis is that viral RNA might be smuggled into fat cells as cargo in small bits of material called extracellular vesicles, or EVs, that can travel between cells. Other researchers have shown that when EVs express ACE2 receptors, they can act as decoys for SARS-CoV-2, where the virus binds to them rather than a cell. These scientists are working to create drugs that mimic this decoy effect as an approach to therapy.

Do fat cells play a role in Long Covid? “Fat cells are a great place to hide. You have all the energy you need and fat cells turn over very slowly; they have a half-life of ten years,” says Scherer. Observational studies suggest that acute Covid-19 can trigger the onset of diabetes, especially in people who are overweight, and that medicines taken to regulate diabetes “were actually quite protective” against acute Covid-19. Scherer has funding to study the risks and benefits of those drugs in animal models of Long Covid.

McLaughlin says there are two areas of potential concern with fat tissue and Long Covid. One is that this tissue might serve as a “big reservoir where the virus continues to replicate and is sent out” to other parts of the body. The second is that inflammation due to infected fat cells and macrophages can result in fibrosis or scar tissue forming around organs, inhibiting their function. Once scar tissue forms, the tissue damage becomes more difficult to repair.

Current Covid-19 treatments work by stopping the virus from entering cells through the ACE2 receptor, so they likely would have no effect on virus that uses a different mechanism. That means another approach will have to be developed to complement the treatments we already have. So the best advice McLaughlin can offer today is to keep current on vaccinations and boosters and lose weight to reduce the risk associated with obesity.