Massive benefits of AI come with environmental and human costs. Can AI itself be part of the solution?

Generative AI has a large carbon footprint and other drawbacks. But AI can help mitigate its own harms—by plowing through mountains of data on extreme weather and human displacement.

The recent explosion of generative artificial intelligence tools like ChatGPT and Dall-E has enabled anyone with internet access to harness AI’s power for enhanced productivity, creativity, and problem-solving. With their ever-improving capabilities and expanding user base, these tools have proved useful across disciplines, from the creative to the scientific.

But beneath the technological wonders of human-like conversation and creative expression lies a dirty secret: an alarming environmental and human cost. AI has an immense carbon footprint. Systems like ChatGPT take months to train in high-powered data centers, which demand huge amounts of electricity, much of it still generated with fossil fuels, as well as water for cooling. “One of the reasons why OpenAI needs investments [to the tune of] $10 billion from Microsoft is because they need to pay for all of that computation,” says Kentaro Toyama, a computer scientist at the University of Michigan. There’s also an ecological toll from mining the rare minerals required for hardware and infrastructure. This environmental exploitation pollutes land, triggers natural disasters and causes large-scale human displacement. Finally, for the data labeling needed to train and correct AI algorithms, the Big Data industry employs cheap, exploited labor, often from the Global South.

Generative AI tools are based on large language models (LLMs), the most well-known being the various versions of GPT. LLMs can perform natural language processing tasks, including translating, summarizing and answering questions. They are built on artificial neural networks, the foundation of the machine-learning approach known as deep learning. Inspired by the human brain, neural networks are made of millions of artificial neurons. “The basic principles of neural networks were known even in the 1950s and 1960s,” Toyama says, “but it’s only now, with the tremendous amount of compute power that we have, as well as huge amounts of data, that it’s become possible to train generative AI models.”

In recent months, much attention has gone to the transformative benefits of these technologies. But it’s important to consider that these remarkable advances may come at a price.

AI’s carbon footprint

In their latest annual report, 2023 Landscape: Confronting Tech Power, the AI Now Institute, an independent policy research entity focusing on the concentration of power in the tech industry, says: “The constant push for scale in artificial intelligence has led Big Tech firms to develop hugely energy-intensive computational models that optimize for ‘accuracy’—through increasingly large datasets and computationally intensive model training—over more efficient and sustainable alternatives.”

Though there aren’t any official figures about the power consumption or emissions from data centers, experts estimate that they use one percent of global electricity, more than entire countries consume. In 2019, Emma Strubell, then a graduate researcher at the University of Massachusetts Amherst, estimated that training a single LLM resulted in over 280,000 kg of CO2 emissions, the equivalent of driving almost 1.2 million km in a gas-powered car. A couple of years later, David Patterson, a computer scientist at the University of California, Berkeley, and colleagues estimated GPT-3’s carbon footprint at over 550,000 kg of CO2. In 2022, the tech company Hugging Face estimated the carbon footprint of its own language model, BLOOM, at 25,000 kg of CO2 emissions. (BLOOM’s footprint is lower because Hugging Face uses renewable energy, but it doubled when other life-cycle processes like hardware manufacturing and use were added.)
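To put these figures on a common scale, the driving comparison can be turned into a rough conversion factor. The sketch below is only back-of-the-envelope arithmetic on the numbers quoted above: it treats "over 280,000 kg" and "almost 1.2 million km" as exact values, and the function and constant names are made up for illustration, not taken from any of the cited studies.

```python
# Back-of-the-envelope arithmetic on the estimates quoted in the article.
# All inputs are rounded figures from the text, treated as exact here.

STRUBELL_LLM_KG = 280_000   # "over 280,000 kg" of CO2 for one LLM training run
DRIVING_KM = 1_200_000      # "almost 1.2 million km" in a gas-powered car
GPT3_KG = 550_000           # "over 550,000 kg" of CO2 for GPT-3
BLOOM_KG = 25_000           # Hugging Face's estimate for BLOOM


def implied_kg_per_km(total_kg: float, distance_km: float) -> float:
    """Emission factor implied by an 'X kg equals Y km of driving' comparison."""
    return total_kg / distance_km


def equivalent_km(total_kg: float, kg_per_km: float) -> float:
    """Driving distance that would emit the same mass of CO2."""
    return total_kg / kg_per_km


# The article's comparison implies roughly 0.23 kg of CO2 per km driven.
factor = implied_kg_per_km(STRUBELL_LLM_KG, DRIVING_KM)

if __name__ == "__main__":
    print(f"implied factor: {factor:.3f} kg CO2 per km")
    for name, kg in [("GPT-3", GPT3_KG), ("BLOOM", BLOOM_KG)]:
        print(f"{name}: about {equivalent_km(kg, factor):,.0f} km of driving")
```

Under those assumptions, GPT-3's estimated footprint corresponds to roughly twice the driving distance of the 2019 estimate, and BLOOM's to less than a tenth of it.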

Luckily, despite the growing size and numbers of data centers, their increasing energy demands and emissions have not kept pace proportionately—thanks to renewable energy sources and energy-efficient hardware.

But emissions don’t tell the full story.

AI’s hidden human cost

“If historical colonialism annexed territories, their resources, and the bodies that worked on them, data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” So write Nick Couldry and Ulises Mejias, authors of the book The Costs of Connection.

Technologies we use daily inexorably gather our data. “Human experience, potentially every layer and aspect of it, is becoming the target of profitable extraction,” Couldry and Mejias say. This feeds data capitalism, the economic model built on the extraction and commodification of data. While we are being dispossessed of our data, Big Tech commodifies it for its own benefit. This results in a consolidation of power structures that reinforce existing race, gender, class and other inequalities.

“The political economy around tech and tech companies, and the development in advances in AI contribute to massive displacement and pollution, and significantly changes the built environment,” says technologist and activist Yeshi Milner, who founded Data For Black Lives (D4BL) to create measurable change in Black people’s lives using data. The energy requirements, hardware manufacture and the cheap human labor behind AI systems disproportionately affect marginalized communities.

AI’s recent explosive growth spiked the demand for manual, behind-the-scenes tasks, creating an industry described by Mary Gray and Siddharth Suri as “ghost work” in their book of the same name. This invisible human workforce behind the “magic” of AI is overworked and underpaid, and very often based in the Global South. For example, workers in Kenya who made less than $2 an hour were behind the mechanism that trained ChatGPT to properly talk about violence, hate speech and sexual abuse. And, according to an article in Analytics India Magazine, in some cases these workers may not have been paid at all, a case of wage theft. An exposé by the Washington Post describes “digital sweatshops” in the Philippines, where thousands of workers experience low wages, delays in payment, and wage theft by Remotasks, a platform owned by Scale AI, a $7 billion American startup. Rights groups and labor researchers have flagged Scale AI as one company that flouts basic labor standards for workers abroad.

It is possible to draw a parallel with chattel slavery—the most significant economic event that continues to shape the modern world—to see the business structures that allow for the massive exploitation of people, Milner says. Back then, people got chocolate, sugar, cotton; today, they get generative AI tools. “What’s invisible through distance—because [tech companies] also control what we see—is the massive exploitation,” Milner says.

“At Data for Black Lives, we are less concerned with whether AI will become human…[W]e’re more concerned with the growing power of AI to decide who’s human and who’s not,” Milner says. As a decision-making force, AI becomes a “justifying factor for policies, practices, rules that not just reinforce, but are currently turning the clock back generations years on people’s civil and human rights.”

Nuria Oliver, a computer scientist, and co-founder and vice-president of the European Laboratory of Learning and Intelligent Systems (ELLIS), says that instead of focusing on the hypothetical existential risks of today’s AI, we should talk about its real, tangible risks.

“Because AI is a transverse discipline that you can apply to any field [from education, journalism, medicine, to transportation and energy], it has a transformative power…and an exponential impact,” she says.

AI's accountability

“At the core of what we were arguing about data capitalism [is] a call to action to abolish Big Data,” says Milner. “Not to abolish data itself, but the power structures that concentrate [its] power in the hands of very few actors.”

A comprehensive AI Act currently being negotiated in the European Parliament aims to rein Big Tech in. It plans to introduce a rating of AI tools based on the harms caused to humans, while being as technology-neutral as possible. The act sets standards for safe, transparent, traceable, non-discriminatory, and environmentally friendly AI systems, overseen by people, not automation. The regulations also ask for transparency about the content used to train generative AIs, particularly copyrighted data, and for disclosure when content is AI-generated. “This European regulation is setting the example for other regions and countries in the world,” Oliver says. But, she adds, such transparency is hard to achieve.

Google, for example, recently updated its privacy policy to say that anything on the public internet will be used as training data. “Obviously, technology companies have to respond to their economic interests, so their decisions are not necessarily going to be the best for society and for the environment,” Oliver says. “And that’s why we need strong research institutions and civil society institutions to push for actions.” ELLIS also advocates for data centers to be built in locations where the energy can be produced sustainably.

Ironically, AI plays an important role in mitigating its own harms—by plowing through mountains of data about weather changes, extreme weather events and human displacement. “The only way to make sense of this data is using machine learning methods,” Oliver says.

Milner believes that the best way to expose AI-caused systemic inequalities is through people's stories. “In these last five years, so much of our work [at D4BL] has been creating new datasets, new data tools, bringing the data to life. To show the harms but also to continue to reclaim it as a tool for social change and for political change.” This change, she adds, will depend on whose hands it is in.

FDA, researchers work to make clinical trials more diverse

The U.S. population is becoming more diverse, but clinical trials don't reflect that, experts say. Some are focusing on recruiting minorities to participate in research.

Nestled in a predominately Hispanic neighborhood, a new mural outside Guadalupe Centers Middle School in Kansas City, Missouri imparts a powerful message: “Clinical Research Needs Representation.” The colorful portraits painted above those words feature four cancer survivors of different racial and ethnic backgrounds. Two individuals identify as Hispanic, one as African American and another as Native American.

One of the patients depicted in the mural is Kim Jones, a 51-year-old African American breast cancer survivor since 2012. She advocated for an African American friend who participated in several clinical trials for ovarian cancer. Her friend was diagnosed in an advanced stage at age 26 but lived nine more years, thanks to the trials testing new therapeutics. “They are definitely giving people a longer, extended life and a better quality of life,” said Jones, who owns a nail salon. And that’s the message the mural aims to send to the community: Clinical trials need diverse participants.

While racial and ethnic minority groups represent almost half of the U.S. population, the lack of diversity in clinical trials poses serious challenges. Limited awareness and access impede equitable representation, which is necessary to prove the safety and effectiveness of medical interventions across different groups.

A Yale University study on clinical trial diversity published last year in BMJ Medicine found that while 81 percent of trials testing the new cancer drugs approved by the U.S. Food and Drug Administration between 2012 and 2017 included women, only 23 percent included older adults and 5 percent fairly included racial and ethnic minorities. “It’s both a public health and social justice issue,” said Jennifer E. Miller, an associate professor of medicine at Yale School of Medicine. “We need to know how medicines and vaccines work for all clinically distinct groups, not just healthy young White males.” A recent JAMA Oncology editorial stresses the need for legislation that would require diversity action plans for certain types of trials.

But change is on the horizon. Last April, the FDA issued a new draft guidance encouraging industry to find ways to revamp recruitment into clinical trials. The announcement, which expanded on previous efforts, called for including more participants from underrepresented racial and ethnic segments of the population.

“The U.S. population has become increasingly diverse, and ensuring meaningful representation of racial and ethnic minorities in clinical trials for regulated medical products is fundamental to public health,” FDA commissioner Robert M. Califf, a physician, said in a statement. “Going forward, achieving greater diversity will be a key focus throughout the FDA to facilitate the development of better treatments and better ways to fight diseases that often disproportionately impact diverse communities. This guidance also further demonstrates how we support the Administration’s Cancer Moonshot goal of addressing inequities in cancer care, helping to ensure that every community in America has access to cutting-edge cancer diagnostics, therapeutics and clinical trials.”

Lola Fashoyin-Aje, associate director for Science and Policy to Address Disparities in the Oncology Center of Excellence at the FDA, said that the agency “has long held the view that clinical trial participants should reflect the clinical and demographic characteristics of the patients who will ultimately receive the drug once approved.” However, “numerous studies over many decades” have measured the extent of underrepresentation. One FDA analysis found that the proportion of White patients enrolled in U.S. clinical trials (88 percent) is much higher than their share of the country’s population. Meanwhile, the enrollment of African American and Native Hawaiian/American Indian and Alaskan Native patients is below their share of the national population.

The FDA’s guidance is accelerating researchers’ efforts to be more inclusive of diverse groups in clinical trials, said Joyce Sackey, a clinical professor of medicine and associate dean at Stanford School of Medicine. Underrepresentation is “a huge issue,” she noted. Sackey is focusing on this in her role as the inaugural chief equity, diversity and inclusion officer at Stanford Medicine, which encompasses the medical school and two hospitals.

Until the early 1990s, Sackey pointed out, clinical trials were based on research that mainly included men, as investigators were concerned that women could become pregnant, which would affect the results. This has led to some unfortunate consequences, such as indications and dosages for drugs that cause more side effects in women due to biological differences. “We’ve made some progress in including women, but we have a long way to go in including people of different ethnic and racial groups,” she said.

A new mural outside Guadalupe Centers Middle School in Kansas City, Missouri, advocates for increasing diversity in clinical trials. Kim Jones, a 51-year-old African American breast cancer survivor, is second from the left.

Artwork by Vania Soto. Photo by Megan Peters.

Among racial and ethnic minorities, distrust of clinical trials is deeply rooted in a history of medical racism. A prime example is the Tuskegee Study, a syphilis research experiment that started in 1932 and spanned 40 years, involving hundreds of Black men with low incomes without their informed consent. They were lured with inducements of free meals, health care and burial stipends to participate in the study undertaken by the U.S. Public Health Service and the Tuskegee Institute in Alabama.

By 1947, scientists had figured out that they could provide penicillin to help patients with syphilis, but leaders of the Tuskegee research failed to offer penicillin to their participants throughout the rest of the study, which lasted until 1972.

Opeyemi Olabisi, an assistant professor of medicine at Duke University Medical Center, aims to increase the participation of African Americans in clinical research. As a nephrologist and researcher, he is the principal investigator of a clinical trial focusing on the high rate of kidney disease fueled by two genetic variants of the apolipoprotein L1 (APOL1) gene in people of recent African ancestry. Individuals of this background are four times more likely to develop kidney failure than European Americans, with these two variants accounting for much of the excess risk, Olabisi noted.

The trial is part of an initiative, CARE and JUSTICE for APOL1-Mediated Kidney Disease, through which Olabisi hopes to diversify study participants. “We seek ways to engage African Americans by meeting folks in the community, providing accessible information and addressing structural hindrances that prevent them from participating in clinical trials,” Olabisi said. The researchers go to churches and community organizations to enroll people who do not visit academic medical centers, which typically lead clinical trials. Since last fall, the initiative has screened more than 250 African Americans in North Carolina for the genetic variants, he said.

Other key efforts are underway. “Breaking down barriers, including addressing access, awareness, discrimination and racism, and workforce diversity, are pivotal to increasing clinical trial participation in racial and ethnic minority groups,” said Joshua J. Joseph, assistant professor of medicine at the Ohio State University Wexner Medical Center. Along with the university’s colleges of medicine and nursing, researchers at the medical center partnered with the African American Male Wellness Agency, Genentech and Pfizer to host webinars soliciting solutions from almost 450 community members, civic representatives, health care providers, government organizations and biotechnology professionals in 25 states and five countries.

Their findings, published in February in the journal PLOS One, suggested that including incentives or compensation as part of the research budget at the institutional level may help resolve some issues that hinder racial and ethnic minorities from participating in clinical trials. Compared to other groups, more Blacks and Hispanics have jobs in service, production and transportation, the authors note. It can be difficult to get paid leave in these sectors, so employees often can’t join clinical trials during regular business hours. If more leaders of trials offer money for participating, that could make a difference.

Christopher Corsico, senior vice president of development at GSK, formerly GlaxoSmithKline, said the pharmaceutical company conducted a 17-year retrospective study on U.S. clinical trial diversity. “We are using epidemiology and patients most impacted by a particular disease as the foundation for all our enrollment guidance, including study diversity plans,” Corsico said. “We are also sharing our results and ideas across the pharmaceutical industry.”

Judy Sewards, vice president and head of clinical trial experience at Pfizer’s headquarters in New York, said the company has committed to achieving racially and ethnically diverse participation at or above U.S. census or disease prevalence levels (as appropriate) in all trials. “Today, barriers to clinical trial participation persist,” Sewards said. She noted that these obstacles include geographic access, language and other communications issues, limited awareness of research options, cost and lack of trust. “Addressing these challenges takes a village. All stakeholders must come together and work collaboratively to increase diversity in clinical trials.”

It takes a village indeed. Hope Krebill, executive director of the Masonic Cancer Alliance, the outreach network of the University of Kansas Cancer Center in Kansas City, which commissioned the mural, understood that well. So her team actively worked with their metaphorical “village.” “We partnered with the community to understand their concerns, knowledge and attitudes toward clinical trials and research,” said Krebill. “With that information, we created a clinical trials video and a social media campaign, and finally, the mural to encourage people to consider clinical trials as an option for care.”

Besides its encouraging imagery, the mural will also be informational. It will include a QR code that viewers can scan to find relevant clinical trials in their location, said Vania Soto, a Mexican artist who completed the rendition in late February. “I’m so honored to paint people that are survivors and are living proof that clinical trials worked for them,” she said.

Jones, the cancer survivor depicted in the mural, hopes the image will prompt people to feel more open to partaking in clinical trials. “Hopefully, it will encourage people to inquire about what they can do — how they can participate,” she said.

Send in the Robots: A Look into the Future of Firefighting

Drones are just one of several new technologies that are rising to the challenge of more frequent wildfires.

April in Paris stood still. Flames engulfed the beloved Notre Dame Cathedral as the world watched, horrified, in 2019. The worst looked inevitable when firefighters were forced to retreat from the out-of-control fire.

But the Paris Fire Brigade had an ace up their sleeve: Colossus, a firefighting robot. The seemingly indestructible tank-like machine ripped through the blaze with its motorized water cannon. It was able to put out flames in places that would have been deadly for firefighters.

Firefighting is entering a new era, driven by necessity. Conventional methods of managing fires have been no match for the fiercer, more expansive fires being triggered by climate change, urban sprawl, and susceptible wooded areas.

Robots have been a game-changer. Inspired by Paris, the Los Angeles Fire Department (LAFD) was the first in the U.S. to deploy a firefighting robot in 2021, the Thermite Robotics System 3 – RS3, for short.

RS3 is a 3,500-pound turbine on a crawler—the size of a Smart car—with a 36.8 horsepower engine that can go for 20 hours without refueling. It can plow through hazardous terrain, move cars from its path, and pull an 8,000-pound object from a fire.

All that while spurting 2,500 gallons of water per minute with a rear exhaust fan clearing the smoke. At a recent trade show, RS3 was billed as equivalent to 10 firefighters. The Los Angeles Times referred to it as “a droid on steroids.”

Robots such as the Thermite RS3 can plow through hazardous terrain and pull an 8,000-pound object from a fire.

Los Angeles Fire Department

The advantage of the robot is obvious. Operated remotely from a distance, it greatly reduces an emergency responder’s exposure to danger, says Wade White, assistant chief of the LAFD. The robot can be sent into airplane fires, nuclear reactors, hazardous areas with carcinogens (think East Palestine, Ohio), or buildings where a roof collapse is imminent.

Advances for firefighters are taking many other forms as well. Fibers have been developed that make the firefighter’s coat lighter and more protective against carcinogens. New wearable devices track firefighters’ biometrics in real time so commanders can monitor their heat stress and exertion levels. A sensor patch in development takes readings every four seconds to detect dangerous gases such as methane and carbon dioxide. A sonic fire extinguisher is also being explored; it uses low-frequency sound waves to push oxygen away from the flames without the use of unhealthy chemical compounds.

The demand for this technology is only increasing, especially with the recent rise in wildfires. In 2021, fires were responsible for 3,800 deaths and 14,700 injuries of civilians in this country. Last year, 68,988 wildfires burned down 7.6 million acres. Whether the next generation of firefighting can address these new challenges could depend on special cameras, robots of the aerial variety, AI and smart systems.

Fighting fire with cameras

Another key innovation for firefighters is a thermal imaging camera (TIC) that improves visibility through smoke. “At a fire, you might not see your hand in front of your face,” says White. “Using the TIC screen, you can find the door to get out safely or see a victim in the corner.” Since these cameras were introduced in the 1990s, the price has come down enough (from $10,000 or more to about $700) that every LAFD firefighter on duty has been carrying one since 2019, says White.

TICs are about the size of a cell phone. The camera can sense movement and body heat so it is ideal as a search tool for people trapped in buildings. If a firefighter has not moved in 30 seconds, the motion detector picks that up, too, and broadcasts a distress signal and directional information to others.
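The stillness alarm described above amounts to a simple timeout check. The sketch below illustrates that logic only; it is not the firmware of any actual camera. The 30-second limit comes from the text, while the class name, method names and the use of plain timestamps in seconds are hypothetical.

```python
from dataclasses import dataclass

# From the article: an alarm is raised after 30 seconds without movement.
STILLNESS_LIMIT_S = 30.0


@dataclass
class MotionMonitor:
    """Hypothetical stillness timer; timestamps are seconds on any shared clock."""

    limit_s: float = STILLNESS_LIMIT_S
    last_motion_s: float = 0.0

    def record_motion(self, now_s: float) -> None:
        """Call whenever the motion detector registers movement."""
        self.last_motion_s = now_s

    def should_alarm(self, now_s: float) -> bool:
        """True once the wearer has been still for at least the limit."""
        return now_s - self.last_motion_s >= self.limit_s


if __name__ == "__main__":
    monitor = MotionMonitor()
    monitor.record_motion(10.0)
    for t in (25.0, 39.9, 40.0):
        print(t, "ALARM" if monitor.should_alarm(t) else "ok")
```

A real device would pair this check with the radio broadcast and directional information the article mentions; those parts are left out here because the text gives no detail on how they work.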

To enable firefighters to operate the camera hands-free, the newest TICs can attach inside a helmet. The firefighter sees the images inside their mask.

TICs also can be mounted on drones to get a bird’s-eye, 360 degree view of a disaster or scout for hot spots through the smoke. In addition, the camera can take photos to aid arson investigations or help determine the cause of a fire.

More help from above

Firefighters prefer the term “unmanned aerial systems” (UAS) to drones to differentiate them from military use.

A UAS carrying a camera can provide aerial scene monitoring and topography maps to help fire captains deploy resources more efficiently. At night, floodlights from the drone can illuminate the landscape for firefighters. They can drop off payloads of blankets, parachutes, life preservers or radio devices for stranded people to communicate, too. And like the robot, the UAS reduces risks for ground crews and helicopter pilots by limiting their contact with toxic fumes, hazardous chemicals, and explosive materials.

“The nice thing about drones is that they perform multiple missions at once,” says Sean Triplett, team lead of fire and aviation management, tools and technology at the Forest Service.

The UAS is especially helpful during wildfires because it can track fires, get ahead of wind currents and warn firefighters of wind shifts in real time. The U.S. Forest Service also uses long-endurance, solar-powered drones that can fly for up to 30 days at a time to detect early signs of wildfire. Wildfires are no longer seasonal in California; they are a year-round threat, notes Thanh Nguyen, fire captain at the Orange County Fire Authority.

In March, Nguyen’s crew deployed a drone to scope out a huge landslide following torrential rains in San Clemente, CA. Emergency responders used photos and videos from the drone to survey the evacuated area, enabling them to stay clear of ground on the hillside that was still sliding.

Improvements in drone batteries are enabling them to fly for longer with heavier payloads. Experts predict we’ll see swarms of drones dropping water and fire retardant on burning buildings and forests in the near future.

AI to the rescue

The biggest peril for a firefighter is often what they don’t see coming. Flashovers are a leading cause of firefighter deaths, for example. They occur when flammable materials in an enclosed area ignite almost instantaneously. Or dangerous backdrafts can happen when a firefighter opens a window or door; the air rushing in can ignite a fire without warning.

The Fire Fighting Technology Group at the National Institute of Standards and Technology (NIST) is developing tools and systems to predict these potentially lethal events with computer models and artificial intelligence.

Partnering with other institutions, NIST researchers developed the Flashover Prediction Neural Network (FlashNet) after looking at common house layouts and running sets of scenarios through a machine-learning model. In the lab, FlashNet was able to predict a flashover 30 seconds before it happened with 92.1% success. When ready for release, the technology will be bundled with sensors that are already installed in buildings, says Anthony Putorti, leader of the NIST group.

The NIST team also examined data from hundreds of backdrafts as a basis for a machine-learning model to predict them. In testing chambers the model predicted them correctly 70.8% of the time; accuracy increased to 82.4% when measures of backdrafts were taken in more positions at different heights in the chambers. Developers are working on how to integrate the AI into a small handheld device that can probe the air of a room through cracks around a door or through a created opening, Putorti says. This way, the air can be analyzed with the device to alert firefighters of any significant backdraft risk.

Early wildfire detection technologies based on AI are in the works, too. The Forest Service predicts the acreage burned each year during wildfires will more than triple in the next 80 years. By gathering information on historic fires, weather patterns, and topography, says White, AI can help firefighters manage wildfires before they grow out of control and create effective evacuation plans based on population data and fire patterns.

The future is connectivity

We are in our infancy with “smart firefighting,” says Casey Grant, executive director emeritus of the Fire Protection Research Foundation. Grant foresees a new era of cyber-physical systems for firefighters—a massive integration of wireless networks, advanced sensors, 3D simulations, and cloud services. To enhance teamwork, the system will connect all branches of emergency responders—fire, emergency medical services, law enforcement.

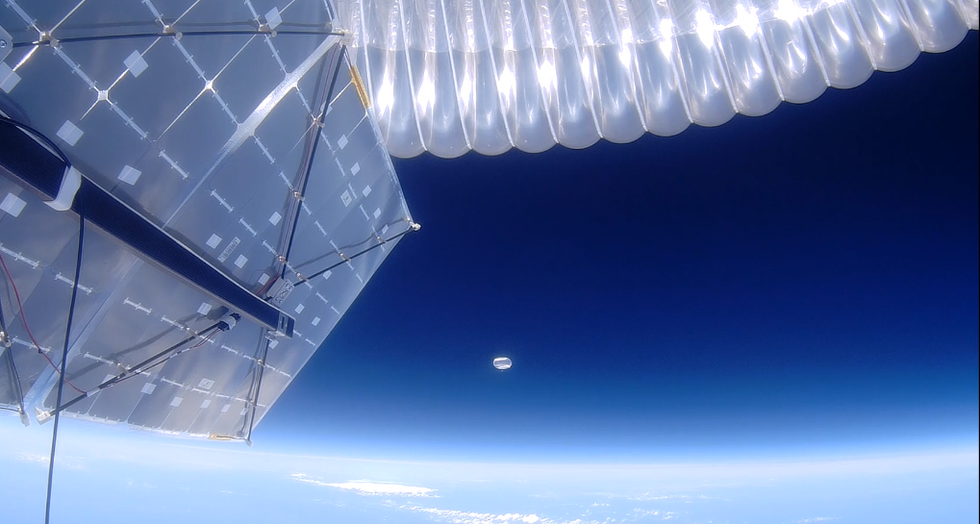

FirstNet (First Responder Network Authority) now provides a nationwide high-speed broadband network with 5G capabilities for first responders through a terrestrial cell network. For battling wildfires, however, the Forest Service needed an alternative because crews don’t always have access to a power source. In 2022, the agency contracted with Aerostar for a high-altitude balloon (60,000 feet up) that can extend cell phone power and LTE. “It puts a bubble of connectivity over the fire to hook in the internet,” Triplett explains.

A high altitude balloon, 60,000 feet high, can extend cell phone power and LTE, putting a "bubble" of internet connectivity over fires.

Courtesy of USDA Forest Service

Advances in harvesting, processing and delivering data will improve safety and decision-making for firefighters, Grant sums up. Smart systems may eventually calculate fire flow paths and make recommendations about the best ways to navigate specific fire conditions. NIST’s plan to combine FlashNet with sensors is one example.

The biggest challenge is developing firefighting technology that can work across multiple channels (federal, state, local and tribal systems, as well as fire, police and other emergency services) in any location, says Triplett. “When there’s a wildfire, there are no political boundaries,” he says. “All hands are on deck.”