Massive benefits of AI come with environmental and human costs. Can AI itself be part of the solution?

Generative AI has a large carbon footprint and other drawbacks. But AI can help mitigate its own harms—by plowing through mountains of data on extreme weather and human displacement.

The recent explosion of generative artificial intelligence tools like ChatGPT and Dall-E enabled anyone with internet access to harness AI’s power for enhanced productivity, creativity, and problem-solving. With their ever-improving capabilities and expanding user base, these tools proved useful across disciplines, from the creative to the scientific.

But beneath the technological wonders of human-like conversation and creative expression lies a dirty secret: an alarming environmental and human cost. AI has an immense carbon footprint. Systems like ChatGPT take months to train in high-powered data centers, which demand huge amounts of electricity, much of which is still generated with fossil fuels, as well as water for cooling. “One of the reasons why OpenAI needs investments [to the tune of] $10 billion from Microsoft is because they need to pay for all of that computation,” says Kentaro Toyama, a computer scientist at the University of Michigan. There’s also an ecological toll from mining rare minerals required for hardware and infrastructure. This environmental exploitation pollutes land, triggers natural disasters and causes large-scale human displacement. Finally, for data labeling needed to train and correct AI algorithms, the Big Data industry employs cheap and exploitative labor, often from the Global South.

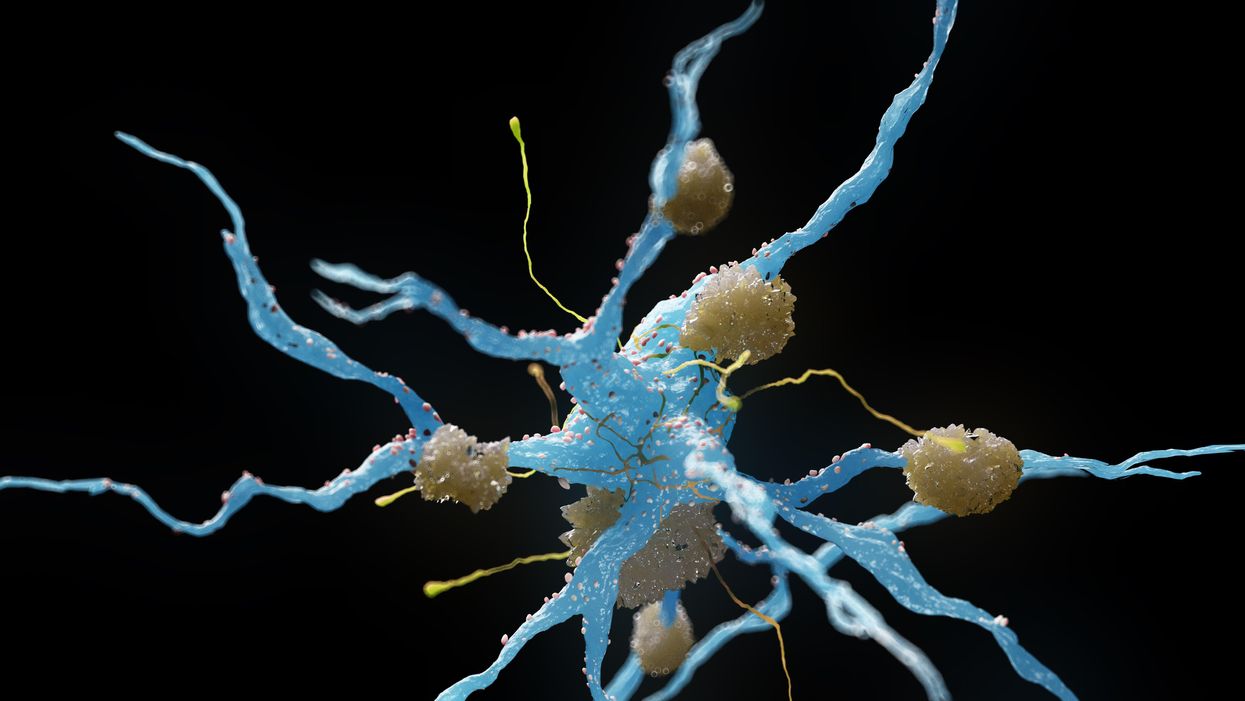

Generative AI tools are based on large language models (LLMs), with the most well-known being the various versions of GPT. LLMs can perform natural language processing, including translating, summarizing and answering questions. They rely on artificial neural networks, a machine learning approach known as deep learning. Inspired by the human brain, neural networks are made of millions of artificial neurons. “The basic principles of neural networks were known even in the 1950s and 1960s,” Toyama says, “but it’s only now, with the tremendous amount of compute power that we have, as well as huge amounts of data, that it’s become possible to train generative AI models.”

In recent months, much attention has gone to the transformative benefits of these technologies. But it’s important to consider that these remarkable advances may come at a price.

AI’s carbon footprint

In their latest annual report, 2023 Landscape: Confronting Tech Power, the AI Now Institute, an independent policy research entity focusing on the concentration of power in the tech industry, says: “The constant push for scale in artificial intelligence has led Big Tech firms to develop hugely energy-intensive computational models that optimize for ‘accuracy’—through increasingly large datasets and computationally intensive model training—over more efficient and sustainable alternatives.”

Though there aren’t any official figures about the power consumption or emissions from data centers, experts estimate that they use one percent of global electricity, more than entire countries do. In 2019, Emma Strubell, then a graduate researcher at the University of Massachusetts Amherst, estimated that training a single LLM resulted in over 280,000 kg of CO2 emissions, the equivalent of driving almost 1.2 million km in a gas-powered car. A couple of years later, David Patterson, a computer scientist from the University of California Berkeley, and colleagues estimated GPT-3’s carbon footprint at over 550,000 kg of CO2. In 2022, the tech company Hugging Face estimated the carbon footprint of its own language model, BLOOM, at 25,000 kg of CO2 emissions. (BLOOM’s footprint is lower because Hugging Face uses renewable energy, but it doubled when other life-cycle processes like hardware manufacturing and use were added.)
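To put these figures on a common scale, here is a minimal Python sketch that converts each estimate into equivalent driving distance. It assumes only the numbers quoted above, rounded: the per-kilometer rate is the one implied by the 280,000 kg to 1.2 million km comparison, not an independently sourced emissions factor, and the names `ESTIMATES_KG`, `KG_PER_KM` and `driving_km` are illustrative.

```python
# Figures quoted in the article, in kg of CO2 (rounded; the article says "over" or "almost").
ESTIMATES_KG = {
    "Single LLM (Strubell, 2019)": 280_000,
    "GPT-3 (Patterson and colleagues)": 550_000,
    "BLOOM (Hugging Face, 2022)": 25_000,
    # The article says BLOOM's footprint doubled once life-cycle processes were added.
    "BLOOM with life-cycle processes": 2 * 25_000,
}

# Assumption: the rate implied by the article's own comparison
# (280,000 kg is roughly 1.2 million km in a gas-powered car).
KG_PER_KM = 280_000 / 1_200_000


def driving_km(kg_co2: float) -> float:
    """Convert an emissions figure into equivalent km of gas-powered driving."""
    return kg_co2 / KG_PER_KM


if __name__ == "__main__":
    for name, kg in ESTIMATES_KG.items():
        print(f"{name}: {kg:,} kg CO2 is roughly {driving_km(kg):,.0f} km of driving")
```

By this rough yardstick, the GPT-3 estimate corresponds to about twice the driving distance of the 2019 estimate, while BLOOM's stays near a tenth of it even after doubling.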

Luckily, despite the growing size and numbers of data centers, their increasing energy demands and emissions have not kept pace proportionately—thanks to renewable energy sources and energy-efficient hardware.

But emissions don’t tell the full story.

AI’s hidden human cost

“If historical colonialism annexed territories, their resources, and the bodies that worked on them, data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” So write Nick Couldry and Ulises Mejias, authors of the book The Costs of Connection.

Technologies we use daily inexorably gather our data. “Human experience, potentially every layer and aspect of it, is becoming the target of profitable extraction,” Couldry and Mejias say. This feeds data capitalism, the economic model built on the extraction and commodification of data. While we are being dispossessed of our data, Big Tech commodifies it for its own benefit. This results in a consolidation of power structures that reinforce existing race, gender, class and other inequalities.

“The political economy around tech and tech companies, and the development in advances in AI contribute to massive displacement and pollution, and significantly changes the built environment,” says technologist and activist Yeshi Milner, who founded Data For Black Lives (D4BL) to create measurable change in Black people’s lives using data. The energy requirements, hardware manufacture and the cheap human labor behind AI systems disproportionately affect marginalized communities.

AI’s recent explosive growth spiked the demand for manual, behind-the-scenes tasks, creating an industry described by Mary Gray and Siddharth Suri as “ghost work” in their book of the same name. This invisible human workforce, which lies behind the “magic” of AI, is overworked and underpaid, and very often based in the Global South. For example, workers in Kenya who made less than $2 an hour were behind the mechanism that trained ChatGPT to properly talk about violence, hate speech and sexual abuse. And, according to an article in Analytics India Magazine, in some cases these workers may not have been paid at all, a case of wage theft. An exposé by the Washington Post describes “digital sweatshops” in the Philippines, where thousands of workers experience low wages, delays in payment, and wage theft by Remotasks, a platform owned by Scale AI, a $7 billion American startup. Rights groups and labor researchers have flagged Scale AI as one company that flouts basic labor standards for workers abroad.

It is possible to draw a parallel with chattel slavery—the most significant economic event that continues to shape the modern world—to see the business structures that allow for the massive exploitation of people, Milner says. Back then, people got chocolate, sugar, cotton; today, they get generative AI tools. “What’s invisible through distance—because [tech companies] also control what we see—is the massive exploitation,” Milner says.

“At Data for Black Lives, we are less concerned with whether AI will become human…[W]e’re more concerned with the growing power of AI to decide who’s human and who’s not,” Milner says. As a decision-making force, AI becomes a “justifying factor for policies, practices, rules that not just reinforce, but are currently turning the clock back generations years on people’s civil and human rights.”

Nuria Oliver, a computer scientist, and co-founder and vice-president of the European Laboratory of Learning and Intelligent Systems (ELLIS), says that instead of focusing on the hypothetical existential risks of today’s AI, we should talk about its real, tangible risks.

“Because AI is a transverse discipline that you can apply to any field [from education, journalism, medicine, to transportation and energy], it has a transformative power…and an exponential impact,” she says.

AI's accountability

“At the core of what we were arguing about data capitalism [is] a call to action to abolish Big Data,” says Milner. “Not to abolish data itself, but the power structures that concentrate [its] power in the hands of very few actors.”

A comprehensive AI Act currently being negotiated in the European Parliament aims to rein Big Tech in. It plans to introduce a rating of AI tools based on the harms caused to humans, while being as technology-neutral as possible. The act sets standards for safe, transparent, traceable, non-discriminatory, and environmentally friendly AI systems, overseen by people, not automation. The regulations also ask for transparency about the content used to train generative AIs, particularly copyrighted data, and for disclosure when content is AI-generated. “This European regulation is setting the example for other regions and countries in the world,” Oliver says. But, she adds, such transparency is hard to achieve.

Google, for example, recently updated its privacy policy to say that anything on the public internet will be used as training data. “Obviously, technology companies have to respond to their economic interests, so their decisions are not necessarily going to be the best for society and for the environment,” Oliver says. “And that’s why we need strong research institutions and civil society institutions to push for actions.” ELLIS also advocates for data centers to be built in locations where the energy can be produced sustainably.

Ironically, AI plays an important role in mitigating its own harms—by plowing through mountains of data about weather changes, extreme weather events and human displacement. “The only way to make sense of this data is using machine learning methods,” Oliver says.

Milner believes that the best way to expose AI-caused systemic inequalities is through people's stories. “In these last five years, so much of our work [at D4BL] has been creating new datasets, new data tools, bringing the data to life. To show the harms but also to continue to reclaim it as a tool for social change and for political change.” This change, she adds, will depend on whose hands it is in.

Patients voice hope and relief as FDA gives third-ever drug approval for ALS

On Sept. 29, the FDA approved Relyvrio, a new drug for ALS, even though a study of 137 ALS patients did not result in “substantial evidence” that Relyvrio was effective.

At age 52, Glen Rouse suffered from arm weakness and a lot of muscle twitches. “I first thought something was wrong when I could not throw a 50-pound bag of dog food over the tailgate of my truck, something I used to do effortlessly,” said the 54-year-old resident of Anderson, California, about three hours north of San Francisco.

In August, Rouse retired as a forester for a private timber company, a job he had held for 31 years. The impetus: amyotrophic lateral sclerosis, or ALS, a progressive neuromuscular disease that is commonly known as Lou Gehrig’s disease, named after the New York Yankees’ first baseman who succumbed to it less than a month shy of his 38th birthday in 1941. ALS eventually robs an individual of the ability to talk, walk, chew, swallow and breathe.

Rouse is now dependent on ventilation through a nasal mask and uses a powerchair to get around. “I can no longer walk or use my arms very well,” he said. “I can still move my wrists and fingers. I can also transfer from my chair to the toilet if I have two of my friends help me.”

It’s “shocking” that modern medicine has very little to offer to people with this devastating condition, Rouse said. But there is hope on the horizon. Yesterday, the U.S. Food and Drug Administration approved Relyvrio, a drug made up of two parts, sodium phenylbutyrate and taurursodiol, to treat patients with ALS.

“This approval provides another important treatment option for ALS, a life-threatening disease that currently has no cure,” said Billy Dunn, director of the Office of Neuroscience in the FDA’s Center for Drug Evaluation and Research, in a statement. “The FDA remains committed to facilitating the development of additional ALS treatments.”

Until this point, the FDA had approved only two other medications, Rilutek (riluzole) in 1995 and Radicava (edaravone) in 2017, to extend life in patients with ALS, which typically kills within two to five years after diagnosis. That’s why earlier this week, Rouse was optimistic about the FDA’s likely approval of a controversial new drug for ALS.

“The whole ALS community is extremely excited about it,” he said the day before Relyvrio’s expected approval. “We are very hopeful. We’re on pins and needles.”

A study of 137 ALS patients did not result in “substantial evidence” that Relyvrio was effective, the agency’s Peripheral and Central Nervous System Drugs Advisory Committee concluded in March. However, after some persuasion from FDA officials, patients and their families, the committee met again and decided to recommend approving the drug.

In January 2019, following an ALS diagnosis at age 58 in October the previous year, Jeff Sarnacki, of Chester, Maryland, was accepted into a trial for Relyvrio. “Because of the trial, we did experience hope and a greater sense of help than had we not had that opportunity,” said Juliet Taylor, his wife and caregiver. They both believed the drug “worked for him in giving him more time.”

In June 2019, Sarnacki chose an open-label extension, offered to patients by drug researchers after a study ends, and took the active drug until he died peacefully at home under hospice care in May 2020, five days after his 60th birthday. A retired agent with the federal Bureau of Alcohol, Tobacco, Firearms and Explosives who later worked as a security consultant, Sarnacki lived about 19 months after diagnosis, which is shorter than the typical prognosis.

His symptoms began with leg cramps in fall 2017 and foot drop in early 2018. A feeding tube was placed in 2019, as it became necessary early in his illness, Taylor said. He also took Radicava and Riluzole, the two previously approved drugs, for his ALS. “We were both incredulous that, so many years after Lou Gehrig’s own diagnosis, there were so few treatments available,” she said.

The dearth of successful treatments for ALS is “certainly not for lack of trying,” said Karen Raley Steffens, a registered nurse and ALS support services coordinator at the Les Turner ALS Foundation in Skokie, Ill. “There are thousands of researchers and scientists all over the world working tirelessly to try to develop treatments for ALS.”

Unfortunately, she added, research takes time and exorbitant amounts of funding, while bureaucratic challenges persist. The rare disease also manifests and progresses in many different ways, so many treatments are needed.

As of 2017, the Centers for Disease Control and Prevention estimated that more than 31,000 people in the U.S. live with ALS, and an average of 5,000 people are newly diagnosed every year. It is slightly more common in men than women. Most people are diagnosed between the ages of 55 and 75.

Most cases of ALS are sporadic, meaning that doctors don’t know the cause. There is about a one-year interval between symptom onset and an ALS diagnosis for most patients, so many motor neurons are lost by the time individuals can enroll in a clinical trial, said Richard Bedlack, professor of neurology and director of the Duke ALS Clinic in Durham, North Carolina.

Bedlack found the new drug, Relyvrio, to be “very promising,” which is why he testified to the FDA in favor of approval. (He’s a consultant and disease state speaker for multiple companies including Amylyx, manufacturer of Relyvrio.)

The “drug has different mechanisms of action than the currently approved treatments,” Bedlack said. He added that, when Relyvrio is taken in addition to Riluzole, it appears to slow functional decline by an additional 25 percent and extend life by another 6 to 10 months. “It is not a cure, but it is definitely a step forward.”

T. Scott Diesing, a neurohospitalist and director of general neurology at the University of Nebraska Medical Center in Omaha, said he hopes the drug is “as good as people anticipated it should be, because there are not too many options for these patients.”

“FDA went out on a limb in approving Relyvrio based on limited results from a small trial while a larger study remains in progress,” said Florian P. Thomas, co-director of the ALS Center at Hackensack University Medical Center and Hackensack Meridian School of Medicine in New Jersey. “While it is definitely promising, clearly, the last word on this drug has not been spoken.”

ALS is 100 percent fatal, with some patients dying as soon as a year after diagnosis. A few have lasted as long as 15 years, but those are the exceptions, Diesing said.

“If this drug can provide even months of additional life, or would maintain quality of life, that’s a big deal,” he noted, adding that “the patients are saying, ‘I know it’s not proven conclusively, but what do we have to lose?’ So, they would like to try it while additional studies are ongoing.” The drug has already been conditionally approved in Canada.

As his disease progresses, Rouse hopes to get a speech-to-text voice-generating computer that he can control with his eyes. So far, his voice is holding up, but he knows the day will come when ALS will steal that and much more from him. He works at I AM ALS, a patient-led community, and six of his friends have already died of the disease.

“Every time I lose a friend to ALS, I grieve and am sad but I resolve myself to keep working harder for them, myself and others,” Rouse said. “People living with ALS find great purpose in life advocating and trying to make a difference.”

Friday Five Podcast: New drug may slow the rate of Alzheimer's disease

On September 27, pharmaceutical companies Biogen and Eisai announced that their drug, lecanemab, can slow the rate of Alzheimer's disease, according to a clinical trial. Today's Friday Five episode covers this story and other health research over the month of September.

The Friday Five covers important stories in health and science research that you may have missed - usually over the previous week, but today's episode is a lookback on important studies over the month of September.

Most recently, on September 27, pharmaceutical companies Biogen and Eisai announced that a clinical trial showed their drug, lecanemab, can slow the rate of Alzheimer's disease. There are plenty of controversies and troubling ethical issues in science, and we get into many of them in our online magazine, but this news roundup focuses on scientific creativity and progress to give you a therapeutic dose of inspiration headed into the weekend and the new month.

This Friday Five episode covers the following studies published and announced over the past month:

- A new drug is shown to slow the rate of Alzheimer's disease

- The need for speed if you want to reduce your risk of dementia

- How to refreeze the north and south poles

- Ancient wisdom about Neti pots could pay off for Covid

- Two women, one man and a baby