Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to be reckoned with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

Meet the Psychologist Using Psychedelics to Treat Racial Trauma

Monnica Williams was stuck. The veteran psychologist wanted to conduct a study using psychedelics, but her university told her they didn't have the expertise to evaluate it via an institutional review board, which is responsible for providing ethical and regulatory oversight for research that involves human participants. Instead, they directed her to a hospital, whose reviewers turned it down, calling research on a banned substance unethical.

"I said, 'We're not using illegal psilocybin, we're going through Health Canada,'" Williams said. Psilocybin was banned in Canada in 1974, but can now be obtained with an exemption from Health Canada, the federal government's health policy department. After learning this, the hospital review board told Williams they couldn't review her proposal because she's not affiliated with the hospital, after all.

It's all part of balancing bureaucracy with research goals for Williams, a leading expert on racial trauma and psychedelic medicine, as well as obsessive compulsive disorder (OCD), at the University of Ottawa. She's exploring the use of hallucinogenic substances like MDMA and psilocybin — commonly known as ecstasy and magic mushrooms, respectively — to help people of color address the psychological impacts of systemic racism. A prolific researcher, Williams also works as an expert witness, offering clinical evaluations for racial trauma cases.

Scientists have long known that psychedelics produce an altered state of consciousness and openness to new perspectives. For people with mental health conditions who haven't benefited from traditional therapy, psychedelics may be able to help them discover what's causing their pain or trauma, including racial trauma—the mental and emotional injury spurred by racial bias.

"Using psychedelics can not only bring these pain points to the surface for healing, but can reduce the anxiety or response to these memories and allow them to speak openly about them without the pain they bring," Williams says. Her research harnesses the potential of psychedelics to increase neuroplasticity, which includes the brain's ability to build new pathways.

But she says therapists of color aren't automatically equipped to treat racial trauma. First, she notes, people of color are "vastly underrepresented in the mental health workforce." This is doubly true in psychedelic-assisted psychotherapy, in which a person is guided through a psychedelic session by a therapist or team of therapists, then processes the experience in subsequent therapy sessions.

"On top of that, the therapists of color are getting the same training that the white therapists are getting, so it's not even really guaranteed that they're going to be any better at helping a person that may have racial trauma emerging as part of their experience," she says.

In her own training to become a clinical psychologist at the University of Virginia, Williams says she was taught "how to be a great psychologist for white people." Yet even people of color, she argues, need specialized training to work with marginalized groups, particularly when it comes to MDMA, psilocybin and other psychedelics. Because these drugs can lower natural psychological defense mechanisms, Williams says, it's important for providers to be specially trained.

"People of color are dealing with racism all the time, in large and small ways, and even dealing with racism in healthcare, even dealing with racism in therapy. So [they] generally develop a lot of defenses and coping strategies to ward off racism so that they can function," she says. This is particularly true with psychedelic-assisted psychotherapy: "One possibility is that you're going to be stripped of your defenses, you're going to be vulnerable. And so you have to work with a therapist who is going to understand that and not enact more racism in their work with you."

Williams has struggled to find funding and institutional approval for research involving psychedelics, or funding for investigations into racial trauma or the impacts of conditions like OCD and post-traumatic stress disorder (PTSD) in people of color. With the bulk of her work focusing on OCD, she hoped to focus on people of color, but found there was little funding for that type of research. In 2020, that started to change as structural racism garnered more media attention.

After the killing of George Floyd, a 46-year-old Black man, by a white police officer in May 2020, Williams was flooded with media requests. "Usually, when something like that happens, I get contacted a lot for a couple of weeks, and it dies off. But after George Floyd, it just never did."

Monnica Williams, clinical psychologist at the University of Ottawa

Williams was no stranger to the questions that soon blazed across headlines: How can we mitigate microaggressions? How do race and ethnicity impact mental health? What terms should we use to discuss racial issues? What constitutes an ally, and why aren't there more of them? Why aren't there more people of color in academia, and so many other fields?

Now, she's hoping that the increased attention on racial justice will mean more acceptance for the kind of research she's doing.

In fact, Williams herself has used psychedelics in order to gain a better understanding of how to use them to treat racial trauma. In a study published in January, she and two other Black female psychotherapists took MDMA in a supervised setting, guided by a team of mental health practitioners who helped them process issues that came up as the session progressed. Williams, who was also the study's lead author, found that participants' experiences centered on processing and finding release from racial identities and, in one case, on simply feeling wholly human without the burden of racial identity for the first time.

The purpose of the study was twofold: to understand how Black women react to psychedelics and to provide safe, firsthand, psychedelic experiences to Black mental health practitioners. One of the other study participants has since gone on to offer psychedelic-assisted psychotherapy to her own patients.

Psychedelic research, and psilocybin in particular, has become a hot topic of late, particularly after Oregon became the first state to legalize it for therapeutic use last November. A survey-based, observational study with 313 participants, published in 2020, paved the way for Williams' more recent MDMA experiments by describing improvements in depression, anxiety and racial trauma among people of color who had used LSD, psilocybin or MDMA in a non-research setting.

Williams and her team included only respondents who reported a moderate to strong psychoactive effect of past psychedelic consumption and believed these experiences provided "relief from the challenging effects of ethnic discrimination." Participants reported a memorable psychedelic experience as well as its acute and lasting effects, completing assessments of psychological insight, mystical experience and emotional challenges experienced during the psychedelic experience, then describing their mental health (including depression, anxiety and trauma symptoms) before and after that experience.

Still, Williams says addressing racism is much more complex than treating racial trauma. "One of the questions I get asked a lot is, 'How can Black people cope with racism?' And I don't really like that question," she says. "I think it's important and I don't mind answering it, but I think the more important question is, how can we end racism? What can Black people do to stop racism that's happening to them and what can we do as a society to stop racism? And people aren't really asking this question."

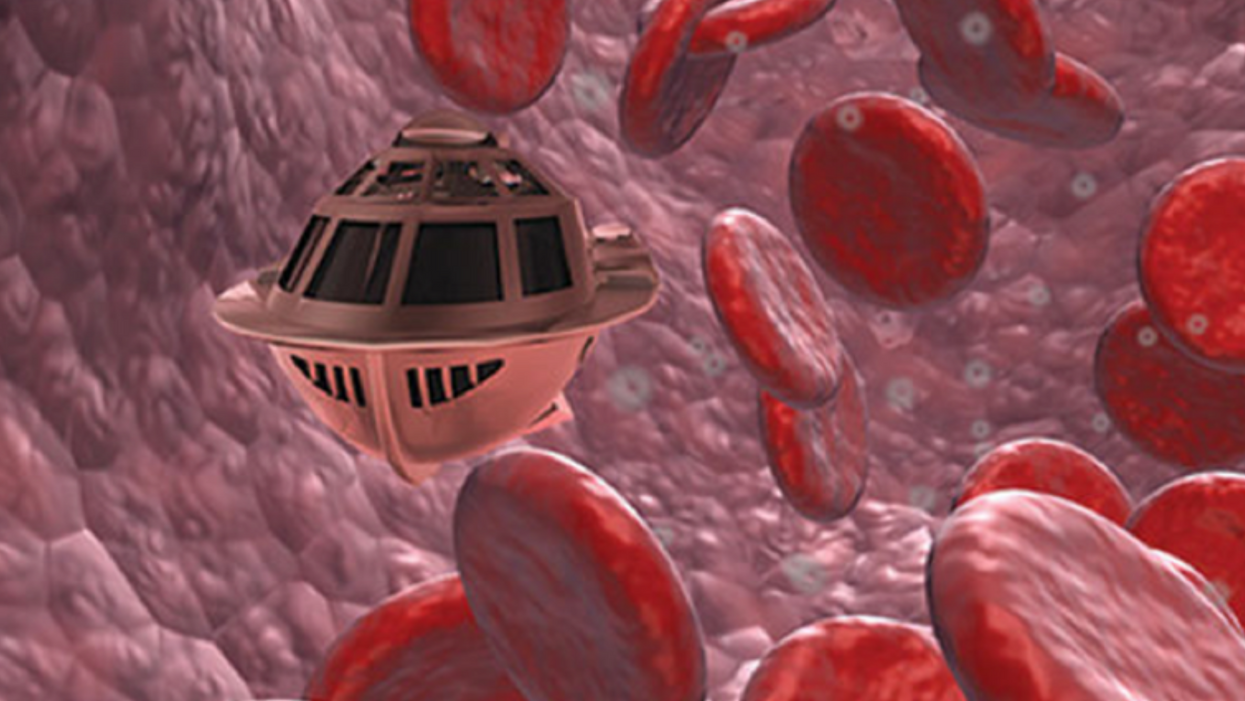

Tiny, Injectable Robots Could Be the Future of Brain Treatments

A movie still from the 1966 film "Fantastic Voyage"

In the 1966 movie "Fantastic Voyage," actress Raquel Welch and her submarine were shrunk to the size of a cell in order to eliminate a blood clot in a scientist's brain. Now, 55 years later, the scenario is becoming closer to reality.

California-based startup Bionaut Labs has developed a nanobot about the size of a grain of rice that's designed to transport medication to the exact location in the body where it's needed. If you think about it, the conventional way to deliver medicine makes little sense: A painkiller affects the entire body instead of just the arm that's hurting, and chemotherapy is flushed through all the veins instead of precisely targeting the tumor.

"Chemotherapy is delivered systemically," Bionaut founder and CEO Michael Shpigelmacher says. "Often only a small percentage arrives at the location where it is actually needed."

But what if it were possible to send a tiny robot through the body to attack a tumor or deliver a drug at exactly the right location?

Several startups and academic institutes worldwide are working to develop such a solution but Bionaut Labs seems the furthest along in advancing its invention. "You can think of the Bionaut as a tiny screw that moves through the veins as if steered by an invisible screwdriver until it arrives at the tumor," Shpigelmacher explains. Via Zoom, he shares the screen of an X-ray machine in his Culver City lab to demonstrate how the half-transparent, yellowish device winds its way along the spine in the body. The nanobot contains a tiny but powerful magnet. The "invisible screwdriver" is an external magnetic field that rotates that magnet inside the device and gets it to move and change directions.

The current model has a diameter of less than a millimeter. Shpigelmacher's engineers could build the miniature vehicle even smaller, but the current size has the advantage of being big enough to see with the naked eye. It can also deliver more medicine than a tinier version. In the Zoom demonstration, the microrobot is injected into the spine, not unlike an epidural, and pulled along the spine by an external magnet until the Bionaut reaches the brainstem. Depending on which organ it needs to reach, it could be inserted elsewhere, for instance through a catheter.

Imagine moving a screw through a steak with a magnet — that's essentially how the device works. But of course, the Bionaut is considerably different from an ordinary screw: "At the right location, we give a magnetic signal, and it unloads its medicine package," Shpigelmacher says.

To start, Bionaut Labs wants to use its device to treat Parkinson's disease and brain stem gliomas, a type of cancer that largely affects children and teenagers. About 300 to 400 young people a year are diagnosed with this type of tumor. Radiation and brain surgery risk damaging sensitive brain tissue, and chemotherapy often doesn't work. Most children with these tumors live less than 18 months. A nanobot delivering targeted chemotherapy could be a gamechanger. "These patients really don't have any other hope," Shpigelmacher says.

Of course, the main challenge of developing such a device is guaranteeing that it's safe. Because tissue is so sensitive, any mistake could risk disastrous results. Over the past four years, Bionaut has tested its technology in dozens of healthy sheep and pigs with no major adverse effects. Sheep make a good stand-in for humans because their brains and spines are similar to ours.

The Bionaut device is about the size of a grain of rice.

Bionaut Labs

"As the Bionaut moves through brain tissue, it creates a transient track that heals within a few weeks," Shpigelmacher says. The company is hoping to be the first to test a nanobot in humans. That could happen as early as 2023, Shpigelmacher says.

Once the technique has been perfected, further applications could include addressing other kinds of brain disorders that are considered incurable now, such as Alzheimer's or Huntington's disease. "Microrobots could serve as a bridgehead, opening the gateway to the brain and facilitating precise access of deep brain structure – either to deliver medication, take cell samples or stimulate specific brain regions," Shpigelmacher says.

Robot-assisted hybrid surgery with artificial intelligence is already used in state-of-the-art surgery centers, and many medical experts believe that nanorobotics will be the instrument of the future. In 2016, three scientists were awarded the Nobel Prize in Chemistry for their development of "the world's smallest machines," nano "elevators" and minuscule motors. Since then, the scientific experiments have progressed to the point where applicable devices are moving closer to actually being implemented.

Bionaut's technology was initially developed by a research team led by Peer Fischer, head of the independent Micro Nano and Molecular Systems Lab at the Max Planck Institute for Intelligent Systems in Stuttgart, Germany. Fischer is considered a pioneer in the research of nano systems, which he began at Harvard University more than a decade ago. He and his team are advising Bionaut Labs and have licensed their technology to the company.

"The hope is that we can develop a vehicle to transport medication deep into the body," says Max Planck scientist Tian Qiu, who leads the cooperation with Bionaut Labs. He agrees with Shpigelmacher that the Bionaut's size is perfect for transporting medication loads and is researching potential applications for even smaller nanorobots, especially in the eye, where the tissue is extremely sensitive. "Nanorobots can sneak through very fine tissue without causing damage."

In "Fantastic Voyage," Raquel Welch's adventures inside the body of a dissident scientist let her swim through his veins into his brain, but her shrunken miniature submarine is attacked by antibodies; she has to flee through the nerves into the scientist's eye where she escapes into freedom on a tear drop. In reality, the exit in the lab is much more mundane. The Bionaut simply leaves the body through the same port where it entered. But apart from the dramatization, the "Fantastic Voyage" was almost prophetic, or, as Shpigelmacher says, "Science fiction becomes science reality."