Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to reckon with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

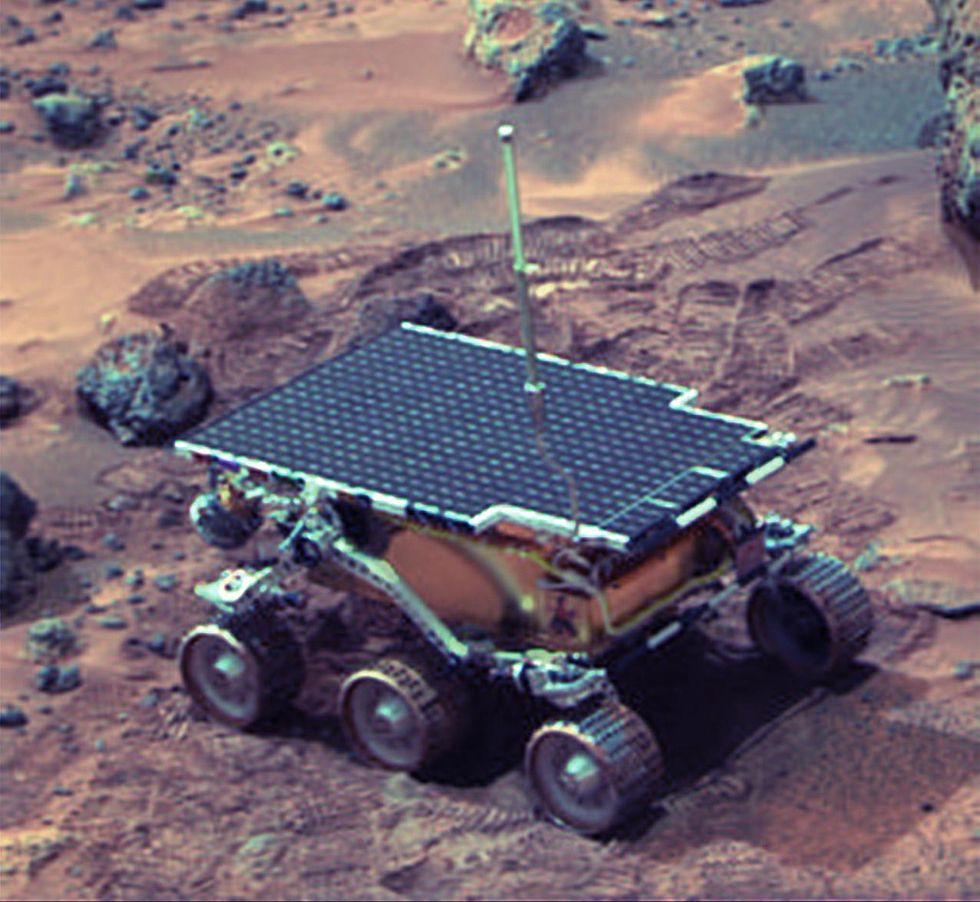

Donna Shirley pictured at her home in Tulsa, with a model of the Sojourner rover she was in charge of that explored Mars.

When NASA's Perseverance rover landed successfully on Mars on February 18, 2021, calling it "one giant leap for mankind" – as Neil Armstrong said when he set foot on the moon in 1969 – would have been inaccurate. This year actually marked the fifth time the U.S. space agency has put a remote-controlled robotic exploration vehicle on the Red Planet. And it was a female engineer named Donna Shirley who broke new ground for women in science as the manager of both the Mars Exploration Program and the 30-person team that built Sojourner, the first rover to land on Mars on July 4, 1997.

For Shirley, the Mars Pathfinder mission was the climax of her 32-year career at NASA's Jet Propulsion Laboratory (JPL) in Pasadena, California. The Oklahoma-born scientist, who earned her Master's degree in aerospace engineering from the University of Southern California, saw her profile skyrocket with media appearances from CNN to the New York Times, and her autobiography Managing Martians came out in 1998. Now 79 and living in a Tulsa retirement community, she still embraces her status as a female pioneer.

"Periodically, I'll hear somebody say they got into the space program because of me, and that makes me feel really good," Shirley told Leaps.org. "I look at the mission control area, and there are a lot of women in there. I'm quite pleased I was able to break the glass ceiling."

Her $25-million, 25-pound microrover – powered by solar energy and designed to get rock samples and test soil chemistry for evidence of life – was named after Sojourner Truth, a 19th-century Black abolitionist and women's rights activist. Unlike Mars Pathfinder, Shirley didn't have to travel more than 131 million miles to reach her goal, but her path to scientific fame as a woman sometimes resembled an asteroid field.

The Sojourner Rover in 1997 on Mars. NASA/JPL

As a high-IQ tomboy growing up in Wynnewood, Oklahoma (pop. 2,300), Shirley yearned to escape. She decided to become an engineer at age 10 and took flying lessons at 15. Her extraterrestrial aspirations were fueled by Ray Bradbury's The Martian Chronicles and Arthur C. Clarke's The Sands of Mars. Yet when she entered the University of Oklahoma (OU) in 1958, her freshman academic advisor initially told her: "Girls can't be engineers." She ignored him.

Years later, Shirley would combat such archaic thinking, succeeding at JPL with her creative, collaborative management style. "If you look at the literature, you'll find that teams that are either led by or heavily involved with women do better than strictly male teams," she noted.

However, her career trajectory stalled at OU. Burned out by her course load and distracted by a broken engagement to marry a fellow student, she switched her major to professional writing. After graduation, she applied her aeronautical background as a McDonnell Aircraft technical writer, but her boss, she says, harassed her and she faced gender-based hostility from male co-workers.

Returning to OU, Shirley finished off her engineering degree and became a JPL aerodynamicist in 1966 after answering an ad in the St. Louis Post-Dispatch. At first, she was the only female engineer among the research center's 2,000-odd engineers. She wore many hats, from designing planetary atmospheric entry vehicles to picking the launch date of November 4, 1973 for Mariner 10's mission to Venus and Mercury.

By the mid-1980s, she was managing teams that focused on robotics and Mars, delivering creative solutions when NASA budget cuts loomed. In 1989, the same year the Sojourner microrover concept was born, President George H.W. Bush announced his Space Exploration Initiative, including plans for a human mission to Mars by 2019.

That target, of course, wasn't attained, despite huge advances in technology and our understanding of the Martian environment. Today, Shirley believes humans could land on Mars by 2030. She became the founding director of the Science Fiction Museum and Hall of Fame in Seattle in 2004 after leaving NASA, and to this day, she enjoys checking out pop culture portrayals of Mars landings – even if they're not always accurate.

After the novel The Martian, later adapted into the hit film starring Matt Damon, was published in 2011, Shirley phoned author Andy Weir: "You've got a major mistake in here. It says there's a storm that tries to blow the rocket over. But actually, the Mars atmosphere is so thin, it would never blow a rocket over!"

Fearlessly speaking her mind and seeking the stars helped Donna Shirley make history. However, a 2019 Washington Post story noted: "Women make up only about a third of NASA's workforce. They comprise just 28 percent of senior executive leadership positions and are only 16 percent of senior scientific employees." Whether it's traveling to Mars or trending toward gender equality, we've still got a long way to go.

Announcing March Event: "COVID Vaccines and the Return to Life: Part 1"

Leading medical and scientific experts will discuss the latest developments around the COVID-19 vaccines at our March 11th event.

EVENT INFORMATION

DATE:

Thursday, March 11th, 2021 at 12:30pm - 1:45pm EST

On the one-year anniversary of the global declaration of the pandemic, this virtual event will convene leading scientific and medical experts to discuss the most pressing questions around the COVID-19 vaccines. Planned topics include the effect of the new circulating variants on the vaccines, what we know so far about transmission dynamics post-vaccination, how individuals can behave post-vaccination, the myths of "good" and "bad" vaccines as more alternatives come on board, and more. A public Q&A will follow the expert discussion.

CONTACT:

kira@goodinc.com

LOCATION:

Zoom webinar

SPEAKERS:

Dr. Paul Offit speaking at Communicating Vaccine Science.

commons.wikimedia.org

Dr. Paul Offit, M.D., is the director of the Vaccine Education Center and an attending physician in infectious diseases at the Children's Hospital of Philadelphia. He is a co-inventor of the rotavirus vaccine for infants, and he has lent his expertise to the advisory committees that review data on new vaccines for the CDC and FDA.

Dr. Monica Gandhi

UCSF Health

Dr. Monica Gandhi, M.D., MPH, is Professor of Medicine and Associate Division Chief (Clinical Operations/Education) of the Division of HIV, Infectious Diseases, and Global Medicine at UCSF/San Francisco General Hospital.

Dr. Onyema Ogbuagu, MBBCh, FACP, FIDSA

Yale Medicine

Dr. Onyema Ogbuagu, MBBCh, is an infectious disease physician at Yale Medicine who treats COVID-19 patients and leads Yale's clinical studies around COVID-19. He ran Yale's trial of the Pfizer/BioNTech vaccine.

Dr. Eric Topol

Dr. Topol's Twitter

Dr. Eric Topol, M.D., is a cardiologist, scientist, professor of molecular medicine, and the director and founder of Scripps Research Translational Institute. He has led clinical trials in over 40 countries with over 200,000 patients and pioneered the development of many routinely used medications.

This event is the first of a four-part series co-hosted by LeapsMag, the Aspen Institute Science & Society Program, and the Sabin–Aspen Vaccine Science & Policy Group, with generous support from the Gordon and Betty Moore Foundation and the Howard Hughes Medical Institute.

Kira Peikoff was the editor-in-chief of Leaps.org from 2017 to 2021. As a journalist, her work has appeared in The New York Times, Newsweek, Nautilus, Popular Mechanics, The New York Academy of Sciences, and other outlets. She is also the author of four suspense novels that explore controversial issues arising from scientific innovation: Living Proof, No Time to Die, Die Again Tomorrow, and Mother Knows Best. Peikoff holds a B.A. in Journalism from New York University and an M.S. in Bioethics from Columbia University. She lives in New Jersey with her husband and two young sons. Follow her on Twitter @KiraPeikoff.