Don’t fear AI, fear power-hungry humans

Story by Big Think

We live in strange times, when the technology we depend on the most is also that which we fear the most. We celebrate cutting-edge achievements even as we recoil in fear at how they could be used to hurt us. From genetic engineering and AI to nuclear technology and nanobots, the list of awe-inspiring, fast-developing technologies is long.

However, this fear of the machine is not as new as it may seem. Technology has a longstanding alliance with power and the state. The dark side of human history can be told as a series of wars whose victors are often those with the most advanced technology. (There are exceptions, of course.) Science, and its technological offspring, follows the money.

This fear of the machine seems to be misplaced. The machine has no intent: only its maker does. The fear of the machine is, in essence, the fear we have of each other — of what we are capable of doing to one another.

How AI changes things

Sure, you would reply, but AI changes everything. With artificial intelligence, the machine itself will develop some sort of autonomy, however ill-defined. It will have a will of its own. And this will, if it reflects anything that seems human, will not be benevolent. With AI, the claim goes, the machine will somehow know what it must do to get rid of us. It will threaten us as a species.

Well, this fear is also not new. Mary Shelley wrote Frankenstein in 1818 to warn us of what science could do if it served the wrong calling. In the case of her novel, Dr. Frankenstein’s call was to win the battle against death — to reverse the course of nature. Granted, any cure of an illness interferes with the normal workings of nature, yet we are justly proud of having developed cures for our ailments, prolonging life and increasing its quality. Science can achieve nothing more noble. What messes things up is when the pursuit of good is confused with that of power. In this distorted scale, the more powerful the better. The ultimate goal is to be as powerful as gods — masters of time, of life and death.

Back to AI, there is no doubt the technology will help us tremendously. We will have better medical diagnostics, better traffic control, better bridge designs, and better pedagogical animations to teach in the classroom and virtually. But we will also have better winnings in the stock market, better war strategies, and better soldiers and remote ways of killing. This grants real power to those who control the best technologies. It increases the take of the winners of wars — those fought with weapons, and those fought with money.

A story as old as civilization

The question is how to move forward. This is where things get interesting and complicated. We hear over and over again that there is an urgent need for safeguards, for controls and legislation to deal with the AI revolution. Great. But if these machines are essentially functioning in a semi-black box of self-teaching neural nets, how exactly are we going to make safeguards that are sure to remain effective? How are we to ensure that the AI, with its unlimited ability to gather data, will not come up with new ways to bypass our safeguards, the same way that people break into safes?

The second question is that of global control. As I wrote before, overseeing new technology is complex. Should countries create a World Mind Organization that controls the technologies that develop AI? If so, how do we organize this planet-wide governing board? Who should be a part of its governing structure? What mechanisms will ensure that governments and private companies do not secretly break the rules, especially when to do so would put the most advanced weapons in the hands of the rule breakers? They will need those, after all, if other actors break the rules as well.

As before, the countries with the best scientists and engineers will have a great advantage. A new international détente will emerge in the mold of the nuclear détente of the Cold War. Again, we will fear destructive technology falling into the wrong hands. This can happen easily. AI machines will not need to be built at an industrial scale, as nuclear capabilities were, and AI-based terrorism will be a force to reckon with.

So here we are, afraid of our own technology all over again.

What is missing from this picture? It continues to illustrate the same destructive pattern of greed and power that has defined so much of our civilization. The failure it shows is moral, and only we can change it. We define civilization by the accumulation of wealth, and this worldview is killing us. The project of civilization we invented has become self-cannibalizing. As long as we do not see this, and we keep on following the same route we have trodden for the past 10,000 years, it will be very hard to legislate the technology to come and to ensure such legislation is followed. Unless, of course, AI helps us become better humans, perhaps by teaching us how stupid we have been for so long. This sounds far-fetched, given who this AI will be serving. But one can always hope.

This article originally appeared on Big Think, home of the brightest minds and biggest ideas of all time.

At the “Apple Store of Doctor’s Offices,” Preventive Care Is High Tech. Is it Worth $150 a Month?

A patient getting examined at health startup Forward.

What if going to the doctor's office could be … nice?

If you didn't have to wait for your appointment, but were ushered right in; if your medical data was all collated and easily searchable on an iPhone app; if a remote scribe took notes while you spoke with your doctor so you could make eye contact with them; if your doctor didn't seem horribly rushed.

Would that change the way you thought about your health? Would you go to the doctor to get help staying healthy, rather than just to stop being sick? And would that, in the long run, be much better for you?

Those are the animating questions for Forward, a healthcare startup devoted to preventive care. Led by founder Adrian Aoun, formerly of Google and Sidewalk Labs, Forward opened its first office in San Francisco in 2016 and has since expanded to Los Angeles, Orange County, New York, and Washington, D.C., with a San Diego location opening soon.

It's been described as the "Apple Store of doctor's offices," which in some ways is a reaction to Forward's vibe: Patients have described the offices as having blonde wood, minimalist design, sparkling water on tap — and lots of high-tech gadgets, like the full-body scanner that replaces the standard scale and stethoscope.

The interior of a Forward office.

(Courtesy Forward)

The more crucial difference, though, is its model of care. Forward doesn't take insurance. Instead, patients, or "members," pay a flat $149 per month, along the lines of a subscription service like Netflix or a gym membership. That fee covers visits, messaging with medical staff through the Forward app, the use of a wearable (like a Fitbit or a sleep tracker) if the physician recommends it, plus any bloodwork or diagnostic tests run in the on-site labs. (The company declined to disclose how many people have signed up for memberships.)

Predictability is Forward's other significant, distinguishing feature: No surprise co-pays, or extra charges showing up on a billing statement months later. Everything is wrapped up in the $149 membership fee, unless the physician recommends visiting an outside specialist.

That caveat isn't a small one. It's important to note that Forward is in no way meant to replace standard health insurance. The service is strictly focused on preventive care, so it wouldn't be much use in case of an emergency; it's meant to help people, as far as is possible, avoid that emergency at all.

Ani Okkasian's family recently went through such an emergency. Her 62-year-old father, an active and seemingly healthy man living with diabetes, had been feeling unwell for a while, but struggled to receive constructive follow-up or tests from his doctor. It finally emerged that his liver was severely damaged, and he suffered a stroke — the risk of which can be elevated by liver disease. He seemed to deteriorate completely within mere weeks, Okkasian said, and in January he passed away.

"He was someone who'd go to the doctor regularly and listen to what they said and follow it," Okkasian said. "I shouldn't have had to bury my father at 62. I still believe to my core that his death could have been avoided if the primary care was adequate."

Okkasian began researching, looking for a better alternative, and discovered Forward. Founder Aoun lost his grandfather to a heart attack; his brother's heart attack at age 31 was the impetus to start Forward.

"I could tell that that was the genesis," Okkasian said. "Having just lost someone, and having had to deal with different aspects of the healthcare industry — how complicated and convoluted that all is — I could tell that the people who designed [Forward] had lost someone to the legacy system; it was so streamlined and so much clearer."

So Who Is Forward For?

The Affordable Care Act mandates that evidence-based preventive care must be covered by insurers without any cost to the patient. Today, 30 million Americans are still living without health insurance; but for most of the population, cost shouldn't prevent access to standard, preventive care, says Benjamin Sommers, a physician and professor at the Harvard T.H. Chan School of Public Health who has studied the effect of the ACA on preventive care access.

For Okkasian and her family, it wasn't a lack of access to primary care that was at issue; it was the quality of that primary care. In 2019, that's probably true for a lot of people.

"How come all other industries have been disturbed except the medical industry?" Okkasian asked. "It's disturbing the most people. We're so advanced in so many ways, but when it comes to the healthcare system, we're not prioritizing the wellness of a person."

Is Forward the answer? Well, probably not for everyone. Its offices are only in a handful of cities, and there are limits to how scalable it would be; it's unavoidable that the $149 per month charge restricts access for a lot of people. Those who have insurance through their employer might have a flexible spending account (FSA) that would cover some or all of the membership fee, and Forward has said that 15 percent of its early members came from underserved communities and were offered free plans; but for many others, that's just an unworkable extra cost.

Sommers also sounded a dubious note about a maximalist attitude toward data collection.

"Even though some patients may think that 'more is always better' — more testing, more screening, etc. — this isn't true," he said. "Some types of cancer screening, ovarian cancer screening for instance, are actually harmful or of no benefit, because studies have shown that they don't improve survival or health outcomes, but can lead to unnecessary testing, pain, false positives, anxiety, and other side effects.

"I'm generally skeptical of efforts to charge people more to get 'extra testing' that isn't currently supported by the medical evidence," he added.

But relatively healthy people who want to take a more active approach to their health — or people who have frequent testing needs, like those using the HIV-prevention drug PrEP, and want to avoid co-pays — might benefit from the on-demand, low-friction experience that Forward offers.

"It's really great for people who are in good health, looking to make it better," Okkasian said. "Your experience is simplified to a point where you feel empowered, not scared."

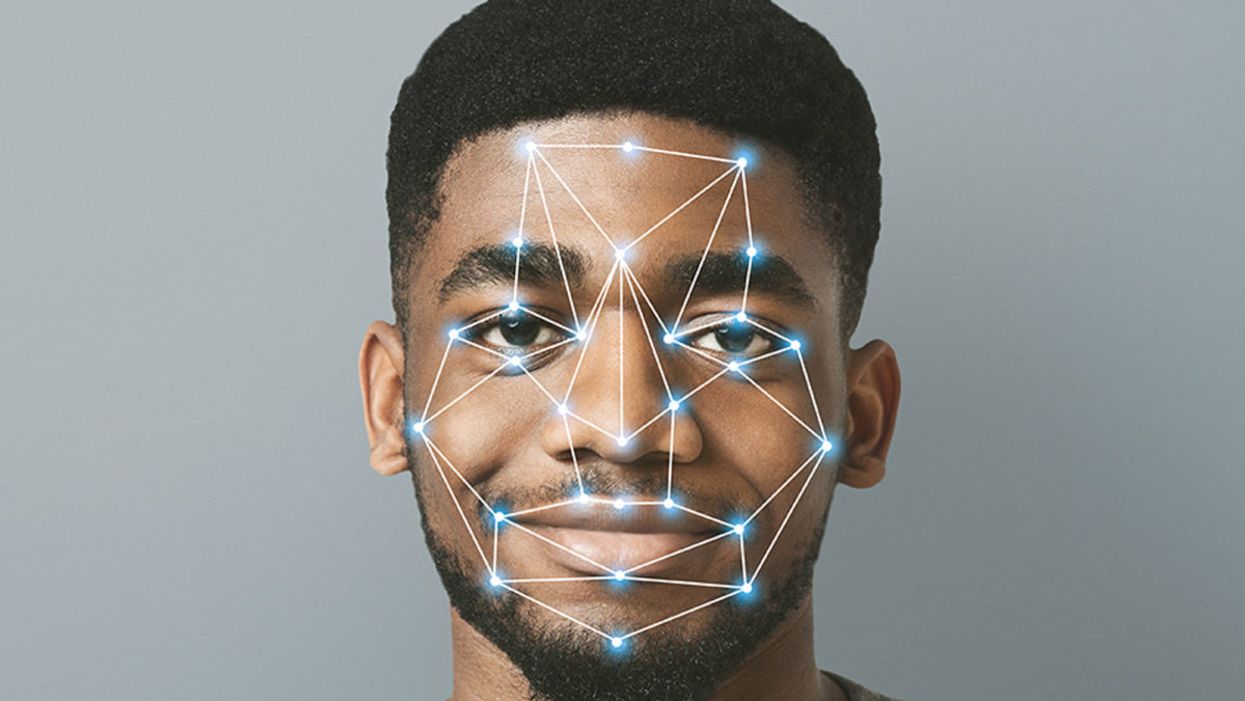

Facial Recognition Can Reduce Racial Profiling and False Arrests

The use of face recognition technology is expanding exponentially right now.

[Editor's Note: This essay is in response to our current Big Question, which we posed to experts with different perspectives: "Do you think the use of facial recognition technology by the police or government should be banned? If so, why? If not, what limits, if any, should be placed on its use?"]

Opposing facial recognition technology has become an article of faith for civil libertarians. Many who supported the bans in cities like San Francisco and Oakland have declared the technology to be inherently racist and abusive.

I have spent my career as a criminal defense attorney and a civil libertarian -- and I do not fear it. Indeed, I see it as positive so long as it is appropriately regulated and controlled.

We are living in the beginning of a biometric age, where technology uses our physical or biological characteristics for a variety of products and services. It holds great promises as well as great risks. The greatest danger, however, would be to categorically oppose this technology and pretend that it will simply go away.

This is an age driven as much by consumer demand as by government demand. Living in denial may be emotionally appealing, but it will only hasten the creation of a post-privacy world. If we do not address this emerging technology, movements in public will increasingly result in instant recognition and even tracking. It is the type of fish-bowl society that strips away any expectation of privacy in our interactions and associations.

The biometrics field is expanding exponentially, largely due to the popularity of consumer products using facial recognition technology (FRT) -- from the iPhone program to shopping programs that recognize customers.

But the privacy community is losing this battle because it is using the privacy rationales and doctrines forged in the earlier electronic surveillance periods. Just as generals are often accused of planning to fight the last war, civil libertarians can sometimes cling to past models despite their decreasing relevance in the current world.

I see FRT as having positive implications that are worth pursuing. When properly used, biometrics can actually enhance privacy interests and even reduce racial profiling by reducing false arrests and the warrantless "patdowns" allowed by the Supreme Court. Bans not only deny police a technology widely used by businesses, but return police to the highly flawed default of "eye balling" suspects -- a system with a considerably higher error rate than top FRT programs.

A study in Australia showed that passport officers who had taken photographs of subjects in ideal conditions nonetheless experienced high error rates when identifying them shortly afterward, including a 14 percent false acceptance rate. Currently, officers stop suspects based on their memory of a photograph seen days or weeks earlier. They are often wrong and stop a great number of suspects in the hopes of finding a wanted felon. The best FRT programs achieve an astonishing accuracy rate, though real-world implementation has challenges that must be addressed.

One legitimate concern raised in early studies was the higher error rate in recognizing certain groups, particularly African American women. An MIT study that found this error rate prompted major improvements in the algorithms, as well as training changes, to greatly reduce the frequency of errors. The issue remains a concern, but there is nothing inherently racist in algorithms. They are sets of computer instructions that isolate and process data according to the parameters and conditions set by their creators.

To be sure, there is room for improvement in some algorithms. Tests performed by the American Civil Liberties Union (ACLU) reportedly showed only an 80 percent accuracy rate in comparing mug shots to pictures of members of Congress when using Amazon's "Rekognition" system. A more recent ACLU test showed the same 80 percent rate in the same comparison against members of the California legislature.

However, different algorithms are available with differing levels of performance. Moreover, these products can be set with a lower discrimination level. The fact is that the top algorithms tested by the National Institute of Standards and Technology showed accuracy rates greater than 99 percent.

Assuming a top-performing algorithm is used, the result could be highly beneficial for civil liberties as opposed to the alternative of "eye balling" suspects. Consider the Boston Marathon bombing, where police declared a "containment zone" and forced families into the street with their hands in the air.

The suspect, Dzhokhar Tsarnaev, moved around Boston and was ultimately found outside the "containment zone" once authorities abandoned near martial law. He was caught on some surveillance systems but not identified. FRT can help law enforcement avoid time-consuming area searches and the questionable practice of forcing people out of their homes to physically examine them.

If we are to avoid a post-privacy world, we will have to redefine what we are trying to protect and reconceive how we hope to protect it. In my view, the greatest threat of biometric technologies is to democratic values. Authoritarian nations like China have made huge investments into FRT precisely because they know that the threat of recognition in public deters citizens from associating or interacting with protesters or dissidents. Recognition changes conduct. That chilling effect is what we have to worry about the most.

Conventional privacy doctrines do not offer much protection. The very concept of "public privacy" is treated as something of an oxymoron by courts. Public acts and associations are treated as lacking any reasonable expectation of privacy. In the same vein, the right to anonymity is not a strong avenue for protection. We are not living in an anonymous world anymore.

Consumers want products like FaceFind, which link their images with others across social media. They like "frictionless" transactions and authentications using faceprints. Despite the hyperbole in places like San Francisco, civil libertarians will not succeed in getting that cat to walk backwards.

The basis for biometric privacy protection should not be focused on anonymity, but rather obscurity. You will be increasingly subject to transparency-forcing technology, but we can legislatively mandate ways of obscuring that information. That is the objective of the Biometric Privacy Act that I have proposed in recent research. However, no such comprehensive legislation has passed through Congress.

We also need to recognize that FRT has many beneficial uses. Biometric guns can reduce accidents and criminal use. New authentications using FRT and other biometric programs could reduce identity theft.

And, yes, FRT could help protect against unnecessary police stops or false arrests. Finally, and not insignificantly, this technology could stop serious crimes, from preventing terrorist attacks to capturing dangerous felons. The ability to spot fraudulent entries at airports or recognize a felon in flight has obvious benefits for all citizens.

We can live and thrive in a biometric era. However, we will need to bring together civil libertarians with business and government experts if we are going to control this technology rather than have it control us.

[Editor's Note: Read the opposite perspective here.]