Beyond Henrietta Lacks: How the Law Has Denied Every American Ownership Rights to Their Own Cells

A 2017 portrait of Henrietta Lacks.

The common perception is that Henrietta Lacks was a victim of poverty and racism when in 1951 doctors took samples of her cervical cancer without her knowledge or permission and turned them into the world's first immortalized cell line, which they called HeLa. The cell line became a workhorse of biomedical research and facilitated the creation of medical treatments and cures worth untold billions of dollars. Neither Lacks nor her family ever received a penny of those riches.

But racism and poverty are not to blame for Lacks' exploitation; the reality is even worse. In fact, all patients, then and now, regardless of social or economic status, have absolutely no right to cells that are taken from their bodies. Some have called this biological slavery.

How We Got Here

The case that established this legal precedent is Moore v. Regents of the University of California.

John Moore was diagnosed with hairy-cell leukemia in 1976 and his spleen was removed as part of standard treatment at the UCLA Medical Center. On initial examination his physician, David W. Golde, had discovered some unusual qualities to Moore's cells and made plans prior to the surgery to have the tissue saved for research rather than discarded as waste. That research began almost immediately.

Even after Moore moved to Seattle, Golde kept bringing him back to Los Angeles to collect additional samples of blood and tissue, saying it was part of his treatment. When Moore asked if the work could be done in Seattle, he was told no. Golde's charade even went so far as claiming to have found a low-income subsidy that would pay for Moore's flights and put him up in a ritzy hotel, when in fact Golde was paying for those out of his own pocket.

Moore became suspicious when he was asked to sign new consent forms giving up all rights to his biological samples, and he hired an attorney to look into the matter. It turned out that Golde had been lying to his patient all along; he had been collecting samples unnecessary to Moore's treatment and had turned them into a cell line that he and UCLA had patented and from which they had already collected millions of dollars in compensation. The market for the cell lines was estimated at $3 billion by 1990.

Moore felt he had been taken advantage of and filed suit to claim a share of the money that had been made off of his body. "On both sides of the case, legal experts and cultural observers cautioned that ownership of a human body was the first step on the slippery slope to 'bioslavery,'" wrote Priscilla Wald, a professor at Duke University whose career has focused on issues of medicine and culture. "Moore could be viewed as asking to commodify his own body part or be seen as the victim of the theft of his most private and inalienable information."

The case bounced around different levels of the court system with conflicting verdicts for nearly six years until the California Supreme Court ruled on July 9, 1990, that Moore had no legal rights to cells and tissue once they were removed from his body.

The court made a utilitarian argument that the cells had no value until scientists manipulated them in the lab. It also reasoned that it would be too burdensome for researchers to track individual donations and subsequent cell lines to ensure that they had been ethically gathered and used. Doing so would impinge on the free sharing of materials between scientists, slow research, and harm the public good that arose from such research.

"In effect, what Moore is asking us to do is impose a tort duty on scientists to investigate the consensual pedigree of each human cell sample used in research," the majority wrote. In other words, researchers don't need to ask any questions about the materials they are using.

One member of the court did not see it that way. In his dissent, Stanley Mosk raised the specter of slavery that "arises wherever scientists or industrialists claim, as defendants have here, the right to appropriate and exploit a patient's tissue for their sole economic benefit—the right, in other words, to freely mine or harvest valuable physical properties of the patient's body. … This is particularly true when, as here, the parties are not in equal bargaining positions."

Mosk also cited the appeals court decision that the majority overturned: "If this science has become for profit, then we fail to see any justification for excluding the patient from participation in those profits."

But the majority bought the arguments that Golde, UCLA, and the nascent biotechnology industry in California had made in amici briefs filed throughout the legal proceedings. The road was now cleared for them to develop products worth billions without having to worry about or share with the persons who provided the raw materials upon which their research was based.

Critical Views

Biomedical research requires a continuous and ever-growing supply of human materials for the foundation of its ongoing work. If an increasing number of patients come to feel as John Moore did, that the system is ripping them off, then they become much less likely to consent to use of their materials in future research.

Some legal and ethical scholars say that donors should be able to limit the types of research allowed for their tissues and that researchers should be monitored to ensure compliance with those agreements. For example, today it is commonplace for companies to certify that their clothing is not made by child labor, that their coffee is grown under fair trade conditions, and that food labeled kosher is properly handled. Should we ask any less of our pharmaceuticals than that the donors whose cells made such products possible have been treated honestly and fairly, and share in the financial bounty that comes from such drugs?

Protection of individual rights is a hallmark of the American legal system, says Lisa Ikemoto, a law professor at the University of California Davis. "Putting the needs of a generalized public over the interests of a few often rests on devaluation of the humanity of the few," she writes in a reimagined version of the Moore decision that upholds Moore's property claims to his excised cells. The commentary is in a chapter of a forthcoming book in the Feminist Judgment series, where authors may only use legal precedent in effect at the time of the original decision.

"Why is the law willing to confer property rights upon some while denying the same rights to others?" asks Radhika Rao, a professor at the University of California, Hastings College of the Law. "The researchers who invest intellectual capital and the companies and universities that invest financial capital are permitted to reap profits from human research, so why not those who provide the human capital in the form of their own bodies?" It might be seen as a kind of sweat equity in which cash-strapped patients make a valuable in-kind contribution to the enterprise.

The Moore court also made a big deal about inhibiting the free exchange of samples between scientists. Such free exchange has become much less common in the more than three decades since the decision was handed down. Ironically, this decision, as well as other laws and regulations, has since strengthened the power of patents in biomedicine and by doing so has increased secrecy and limited sharing.

"Although the research community theoretically endorses the sharing of research, in reality sharing is commonly compromised by the aggressive pursuit and defense of patents and by the use of licensing fees that hinder collaboration and development," Robert D. Truog, a Harvard Medical School ethicist, and colleagues wrote in 2012 in the journal Science. "We believe that measures are required to ensure that patients not bear all of the altruistic burden of promoting medical research."

Additionally, the increased complexity of research and the need for exacting standardization of materials has given rise to an industry that supplies certified chemical reagents, cell lines, and whole animals bred to have specific genetic traits to meet research needs. This has been more efficient for research and has helped to ensure that results from one lab can be reproduced in another.

The Court's rationale of fostering collaboration and free exchange of materials between researchers also has been undercut by the changing structure of that research. Big pharma has shrunk the size of its own research labs and over the last decade has worked out cooperative agreements with major research universities where the companies contribute to the research budget and in return have first dibs on any findings (and sometimes a share of patent rights) that come out of those university labs. It has had a chilling effect on the exchange of materials between universities.

Perhaps tracking cell line donors and use restrictions on those donations might have been burdensome to researchers when Moore was being litigated. Some labs probably still kept their cell line records on 3x5 index cards, computers were primarily expensive room-size behemoths with limited capacity, the internet barely existed, and there was no cloud storage.

But that was the dawn of a new technological age and standards have changed. Now cell lines are kept in state-of-the-art sub-zero storage units, tagged with the source, type of tissue, date gathered, and often other information. Adding a few more data fields and contacting the donor if and when appropriate does not seem likely to disrupt the research process, as the court asserted it would.
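To illustrate how small that change could be, here is a minimal Python sketch of a cell line record. The class name, the two added fields, and the sample values are all hypothetical and chosen only for illustration; they do not describe any real lab's system.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CellLineRecord:
    # Fields the article says labs already tag their samples with.
    source: str
    tissue_type: str
    date_gathered: date
    # Hypothetical additions: how to reach the donor, and which
    # kinds of research the donor has agreed to.
    donor_contact: str = ""
    permitted_uses: set[str] = field(default_factory=set)

    def allows(self, research_use: str) -> bool:
        """Return True if the donor consented to this kind of research."""
        return research_use in self.permitted_uses


# Made-up sample record, for illustration only.
record = CellLineRecord(
    source="Donor 0001",
    tissue_type="spleen",
    date_gathered=date(2020, 1, 15),
    donor_contact="donor0001@example.org",
    permitted_uses={"cancer research"},
)

print(record.allows("cancer research"))
print(record.allows("commercial licensing"))
```

A lab checking a proposed project against a record like this would need only a single lookup before deciding whether to contact the donor.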

Forging the Future

"U.S. universities are awarded almost 3,000 patents each year. They earn more than $2 billion each year from patent royalties. Sharing a modest portion of these profits is a novel method for creating a greater sense of fairness in research relationships that we think is worth exploring," wrote Mark Yarborough, a bioethicist at the University of California Davis Medical School, and colleagues. That was penned nearly a decade ago and those numbers have only grown.

The Michigan BioTrust for Health might serve as a useful model in tackling some of these issues. Dried blood spots have been collected from all newborns for half a century to be tested for certain genetic diseases, but controversy arose when the huge archive of dried spots was used for other research projects. As a result, the state created a nonprofit organization to in essence become a biobank and manage access to these spots only for specific purposes, and also to share any revenue that might arise from that research.

"If there can be no property in a whole living person, does it stand to reason that there can be no property in any part of a living person? If there were, can it be said that this could equate to some sort of 'biological slavery'?" Irish ethicist Asim A. Sheikh wrote several years ago. "Any amount of effort spent pondering the issue of 'ownership' in human biological materials with existing law leaves more questions than answers."

Perhaps the biggest question will arise when -- not if but when -- it becomes possible to clone a human being. Would a human clone be a legal person or the property of those who created it? Current legal precedent points to it being the latter.

Today, October 4, is the 70th anniversary of Henrietta Lacks' death from cancer. Over those decades her immortalized cells have helped make possible miraculous advances in medicine and have had a role in generating billions of dollars in profits. Surviving family members have spoken many times about seeking a share of those profits in the name of social justice; they intend to file lawsuits today. Such cases will succeed or fail on their own merits. But regardless of their specific outcomes, one can hope that they spark a larger public discussion of the role of patients in the biomedical research enterprise and lead to establishing a legal and financial claim for their contributions toward the next generation of biomedical research.

Real-Time Monitoring of Your Health Is the Future of Medicine

Implantable sensors and other surveillance technologies offer tremendous health benefits -- and ethical challenges.

The same way that it's harder to lose 100 pounds than it is to not gain 100 pounds, it's easier to stop a disease before it happens than to treat an illness once it's developed.

Bio-engineers working on the next generation of diagnostic tools say today's technologies, such as colonoscopies or mammograms, are reactive; that is, they often tell a person they are sick when it's too late to reverse course. Surveillance medicine, such as implanted sensors, will detect disease at its onset, in real time.

What Is Possible?

Ever since the Human Genome Project, which concluded in 2003 after mapping the DNA sequence of the human genome and its roughly 20,000 to 25,000 genes, modern medicine has shifted to "personalized medicine," also called "precision health." Twenty-first-century doctors can in some cases assess a person's risk for specific diseases from his or her DNA. The information enables women with a BRCA gene mutation, for example, to undergo more frequent screenings for breast cancer or to proactively choose to remove their breasts as a "just in case" measure.

But your DNA is not always enough to determine your risk of illness. Not all genetic mutations are harmful, for example, and people can get sick without a genetic cause, such as with an infection. Hence the need for a more "real-time" way to monitor health.

Aaron Morris, a postdoctoral researcher in the Department of Biomedical Engineering at the University of Michigan, wants doctors to be able to predict illness with pinpoint accuracy well before symptoms show up. Working in the lab of Dr. Lonnie Shea, the team is building "a tiny diagnostic lab" that can live under a person's skin and monitor for illness, 24/7. Currently being tested in mice, the Michigan team's porous biodegradable implant becomes part of the body as "cells move right in," says Morris, allowing engineered tissue to be biopsied and analyzed for diseases. The information collected by the sensors will enable doctors to predict disease flareups, such as for cancer relapses, so that therapies can begin well before a person comes out of remission. The technology will also measure the effectiveness of those therapies in real time.

In Morris' dream scenario "everyone will be implanted with a sensor" ("…the same way most people are vaccinated") and the sensor will alert people to go to the doctor if something is awry.

While it may be four or five decades before Morris' sensor becomes mainstream, "the age of surveillance medicine is here," says Jamie Metzl, a technology and healthcare futurist who penned Hacking Darwin: Genetic Engineering and the Future of Humanity. "It will get more effective and sophisticated and less obtrusive over time," says Metzl.

Already, Google compiles public health data about disease hotspots by amalgamating individual searches for medical symptoms; pill technology can digitally track when and how much medication a patient takes; and the Apple Watch heart app can predict with 85-percent accuracy if an individual using the wrist device has atrial fibrillation (AFib), a condition that can cause stroke, blood clots and heart failure, and that goes undiagnosed in 700,000 people each year in the U.S.

"We'll never be able to predict everything," says Metzl. "But we will always be able to predict and prevent more and more; that is the future of healthcare and medicine."

Morris believes that within ten years there will be surveillance tools that can predict if an individual has contracted the flu well before symptoms develop.

At City College of New York, Ryan Williams, assistant professor of biomedical engineering, has built an implantable nano-sensor that works with a fluorescent wand to scope out if cancer cells are growing at the implant site. "Instead of having the ovary or breast removed, the patient could just have this [surveillance] device that can say 'hey we're monitoring for this' in real-time… [to] measure whether the cancer is maybe coming back, as opposed to having biopsy tests or undergoing treatments or invasive procedures."

Not all surveillance technologies that are being developed need to be implanted. At Case Western, Colin Drummond, PhD, MBA, a data scientist and assistant department chair of the Department of Biomedical Engineering, is building a "surroundable." He describes it as an Alexa-style surveillance system (he's named her Regina) that will "tell" the user, if a need arises for medication, how much to take and when.

Bioethical Red Flags

"Everyone should be extremely excited about our move toward what I call predictive and preventive health care and health," says Metzl. "We should also be worried about it. Because all of these technologies can be used well and they can [also] be abused." The concerns are many-layered:

Discriminatory practices

For years now, bioethicists have expressed concerns about employer-sponsored wellness programs that encourage fitness while also tracking employee health data. "Getting access to your health data can change the way your employer thinks about your employability," says Keisha Ray, assistant professor at the University of Texas Health Science Center at Houston (UTHealth). Such access can lead to discriminatory practices against employees who are less fit. "Surveillance medicine only heightens those risks," says Ray.

Who owns the data?

Surveillance medicine may help "democratize healthcare," which could be a good thing, says Anita Ho, an associate professor in bioethics at both the University of California, San Francisco and the University of British Columbia. It would enable easier access by patients to their health data, delivered to smartphones, for example, rather than waiting for a call from the doctor. But she also wonders who will own the data collected and if that owner has the right to share it or sell it. "A direct-to-consumer device is where the lines get a little blurry," says Ho. Currently, health data collected by Apple Watch is owned by Apple. "So we have to ask bigger ethical questions in terms of what consent should be required" by users.

Insurance coverage

"Consumers of these products deserve some sort of assurance that using a product that will predict future needs won't in any way jeopardize their ability to access care for those needs," says Hastings Center bioethicist Carolyn Neuhaus. She is urging lawmakers to begin tackling the policy issues created by surveillance medicine now, well ahead of the technology becoming mainstream, with legislation along the lines of GINA, the Genetic Information Nondiscrimination Act of 2008, a federal law designed to prevent discrimination in health insurance on the basis of genetic information.

And because not all Americans have insurance, Ho wants to know who is going to pay for this technology and how much it will cost.

Trusting our guts

Some bioethicists are concerned that surveillance technology will reduce individuals to their "risk profiles," leaving health care systems to perceive them as nothing more than a "bundle of health and security risks." And further, in our quest to predict and prevent ailments, Neuhaus wonders if an over-reliance on data could damage the ability of future generations to trust their gut and tune into their own bodies.

It "sounds kind of hippy-dippy and feel-goodie," she admits. But in our culture of medicine where efficiency is highly valued, there's "a tendency to not value and appreciate what one feels inside of their own body … [because] it's easier to look at data than to listen to people's really messy stories of how they 'felt weird' the other day. It takes a lot less time to look at a sheet, to read out what the sensor implanted inside your body or planted around your house says."

Ho, too, worries about lost narratives. "For surveillance medicine to actually work we have to think about how we educate clinicians about the utility of these devices and how to interpret the data in the broader context of patients' lives."

Over-diagnosing

While one of the goals of surveillance medicine is to cut down on doctor visits, Ho wonders if the technology will have the opposite effect. "People may be going to the doctor more for things that actually are benign and are really not of concern yet," says Ho. She is also concerned that surveillance tools could make healthcare almost "recreational" and underscores the importance of making sure that the goals of surveillance medicine are met before the technology is unleashed.

AI doesn't fix existing healthcare problems

"Knowing that you're going to have a fall or going to relapse or have a disease isn't all that helpful if you have no access to the follow-up care and you can't afford it and you can't afford the prescription medication that's going to ward off the onset," says Neuhaus. "It may still be worth knowing … but we can't fool ourselves into thinking that this technology is going to reshape medicine in America if we don't pay attention to … the infrastructure that we don't currently have."

Race-based medicine

How surveillance devices are tested before being approved for human use is a major concern for Ho. In recent years, alerts have been raised about the homogeneity of study group participants, who tend to be too white and too male. Ho wonders if the devices will be able to "accurately predict the disease progression for people whose data has not been used in developing the technology?" COVID-19 has killed Black people at a rate 2.5 times greater than white people, for example, and new, virtual clinical research is focused on recruiting more people of color.

The Biggest Question

"We can't just assume that any of these technologies are inherently technologies of liberation," says Metzl.

Especially because we haven't yet asked the $64,000 question: Would patients even want to know?

Jenny Ahlstrom is an IT professional who was diagnosed at 43 with multiple myeloma, a blood cancer that typically attacks people in their late 60s and 70s and for which there is no cure. She believes that most people won't want to know about their declining health in real time. People like to live "optimistically in denial most of the time. If they don't have a problem, they don't want to really think they have a problem until they have [it]," especially when there is no cure. "Psychologically? That would be hard to know."

Ahlstrom says there's also the issue of trust, something she experienced first-hand when she launched her non-profit, HealthTree, a crowdsourcing tool to help myeloma patients "find their genetic twin" and learn what therapies may or may not work. "People want to share their story, not their data," says Ahlstrom. "We have been so conditioned as a nation to believe that our medical data is so valuable."

Metzl acknowledges that adoption of new technologies will be uneven. But he also believes that "over time, it will be abundantly clear that it's much, much cheaper to predict and prevent disease than it is to treat disease once it's already emerged."

Beyond cost, the tremendous potential of these technologies to help us live healthier and longer lives is a game-changer, he says, as long as we find ways "to ultimately navigate this terrain and put systems in place ... to minimize any potential harms."

How Smallpox Was Wiped Off the Planet By a Vaccine and Global Cooperation

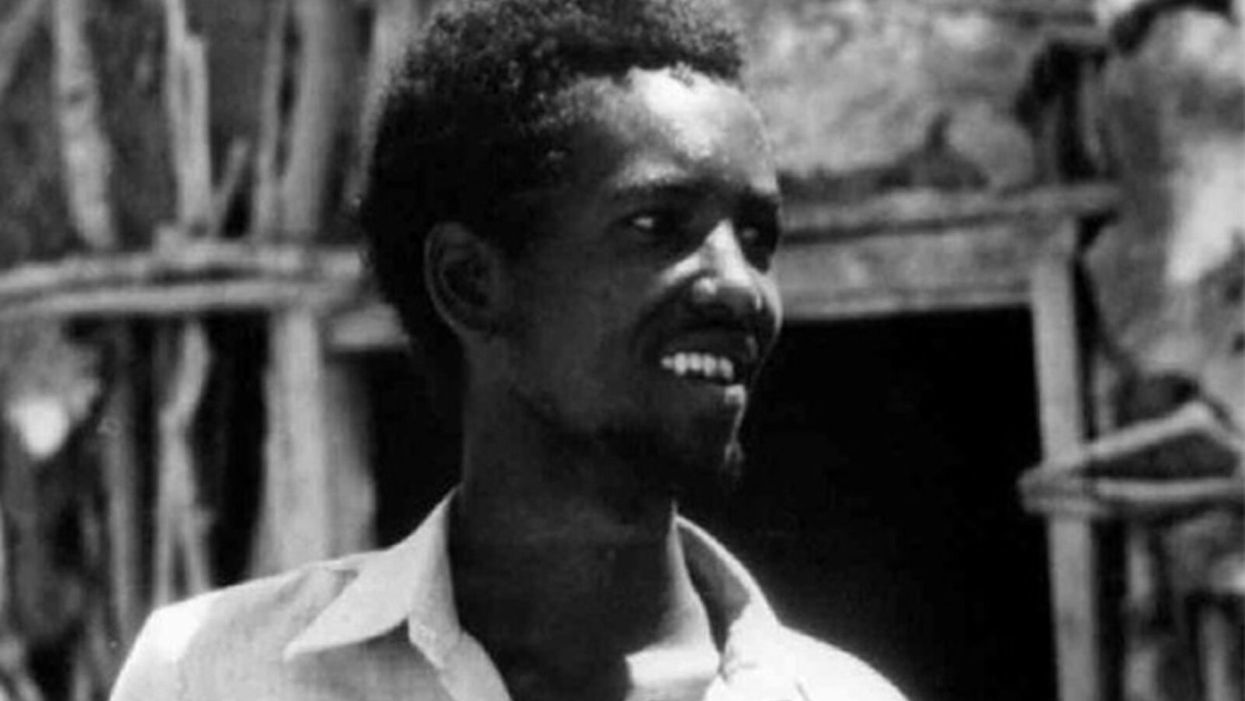

The world's last recorded case of endemic smallpox was in Ali Maow Maalin, of Merka, Somalia, in October 1977. He made a full recovery.

For 3000 years, civilizations all over the world were brutalized by smallpox, an infectious and deadly virus characterized by fever and a rash of painful, oozing sores.

Smallpox was merciless, killing roughly one third of the people it infected and leaving many survivors permanently pockmarked or blind. Although smallpox was more common during the 18th and 19th centuries, it remained a major killer as late as the early 1950s, when an estimated 50 million cases occurred each year.

A Primitive Cure

Sometime during the 10th century, Chinese physicians figured out that exposing people to a tiny bit of smallpox would sometimes result in a milder infection and immunity to the disease afterward (if the person survived). Desperate for a cure, people would huff powders made of smallpox scabs or insert smallpox pus into their skin, all in the hopes of getting immunity without having to get too sick. However, this method – called inoculation – didn't always work. People could still catch the full-blown disease, spread it to others, or even catch another infectious disease like syphilis in the process.

A Breakthrough Treatment

For centuries, inoculation – however imperfect – was the only protection the world had against smallpox. But in the late 18th century, an English physician named Edward Jenner created a more effective method. Jenner discovered that inoculating a person with cowpox – a much milder relative of the smallpox virus – would make that person immune to smallpox as well, but this time without the possibility of actually catching or transmitting smallpox. His breakthrough became the world's first vaccine against a contagious disease. Other researchers, like Louis Pasteur, would use these same principles to make vaccines for global killers like anthrax and rabies. Vaccination was considered a miracle, conferring all of the rewards of having gotten sick (immunity) without the risk of death or blindness.

Scaling the Cure

As vaccination became more widespread, the number of global smallpox deaths began to drop, particularly in Europe and the United States. But even as late as 1967, smallpox was still infecting anywhere from 10 to 15 million people a year in poorer parts of the globe. The World Health Assembly (a decision-making body of the World Health Organization) decided that year to launch the first coordinated effort to eradicate smallpox from the planet completely, aiming for 80 percent vaccine coverage in every country in which the disease was endemic – a total of 33 countries.

But officials knew that eradicating smallpox would be easier said than done. Doctors had to contend with wars, floods, and language barriers to make their campaign a success. The vaccination initiative in Bangladesh proved the most challenging, due to its population density and the prevalence of the disease, writes journalist Laurie Garrett in her book, The Coming Plague.

In one instance, French physician Daniel Tarantola on assignment in Bangladesh confronted a murderous gang that was thought to be spreading smallpox throughout the countryside during their crime sprees. Without police protection, Tarantola confronted the gang and "faced down guns" in order to immunize them, protecting the villagers from repeated outbreaks.

Because not enough vaccines existed to vaccinate everyone in a given country, doctors utilized a strategy called "ring vaccination," which meant locating individual outbreaks and vaccinating all known and possible contacts to stop an outbreak at its source. Fewer than 50 percent of the population in Nigeria received a vaccine, for example, but thanks to ring vaccination, smallpox was eradicated in that country nonetheless. Doctors worked tirelessly for the next eleven years to immunize as many people as possible.
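The logic of ring vaccination can be sketched in a few lines of Python. This is a simplified illustration, not a description of how field teams actually worked: the contact map, the names in it, and the choice of two "rings" (contacts, then contacts of contacts) are all assumptions made for the example.

```python
from collections import deque


def ring_vaccination_targets(contacts, cases, rings=2):
    """Return everyone within `rings` contact steps of a known case.

    `contacts` maps each person to the people they were in contact with.
    """
    targets = set()
    seen = set(cases)
    queue = deque((case, 0) for case in cases)
    while queue:
        person, depth = queue.popleft()
        if depth == rings:
            continue  # outside the ring; do not expand further
        for neighbor in contacts.get(person, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                targets.add(neighbor)
                queue.append((neighbor, depth + 1))
    return targets


# Hypothetical village: one known case and a chain of contacts.
contacts = {
    "case": ["a", "b"],
    "a": ["case", "c"],
    "b": ["case"],
    "c": ["a", "d"],
    "d": ["c", "e"],
    "e": ["d"],
}

targets = ring_vaccination_targets(contacts, ["case"])
print(sorted(targets))
```

In this toy example only three of the five uninfected people fall inside the ring, which is the point of the strategy: a limited vaccine supply goes to the people most likely to carry the outbreak forward.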

A Resounding Success

In November 1975, officials discovered a case of variola major, the more virulent form of the smallpox virus, in a three-year-old Bangladeshi girl named Rahima Banu. Banu was forcibly quarantined in her family's home with armed guards until the risk of transmission had passed, while officials went door-to-door vaccinating everyone within a five-mile radius. Two years later, the last naturally occurring case of smallpox in human history, caused by the milder variola minor, was reported in Somalia. When no new community-acquired cases appeared after that, the World Health Organization declared smallpox officially eradicated on May 8, 1980.

Because of smallpox, we now know it's possible to completely eliminate a disease. But is it likely to happen again with other diseases, like COVID-19? Some scientists aren't so sure. As dangerous as smallpox was, it had a few characteristics that made eradication possibly easier than for other diseases. Smallpox, for instance, has no animal reservoir, meaning that it could not circulate in animals and resurge in a human population at a later date. Additionally, a person who had smallpox once was guaranteed immunity from the disease thereafter — which is not the case for COVID-19.

In The Coming Plague, Japanese physician Isao Arita, who led the WHO's Smallpox Eradication Unit, admitted to routinely defying orders from the WHO, mobilizing to parts of the world without official approval and sometimes even vaccinating people against their will. "If we hadn't broken every single WHO rule many times over, we would have never defeated smallpox," Arita said. "Never."

Still, thanks to the life-saving technology of vaccines – and the tireless efforts of doctors and scientists across the globe – a once-lethal disease is now a thing of the past.