A blood test may catch colorectal cancer before it's too late

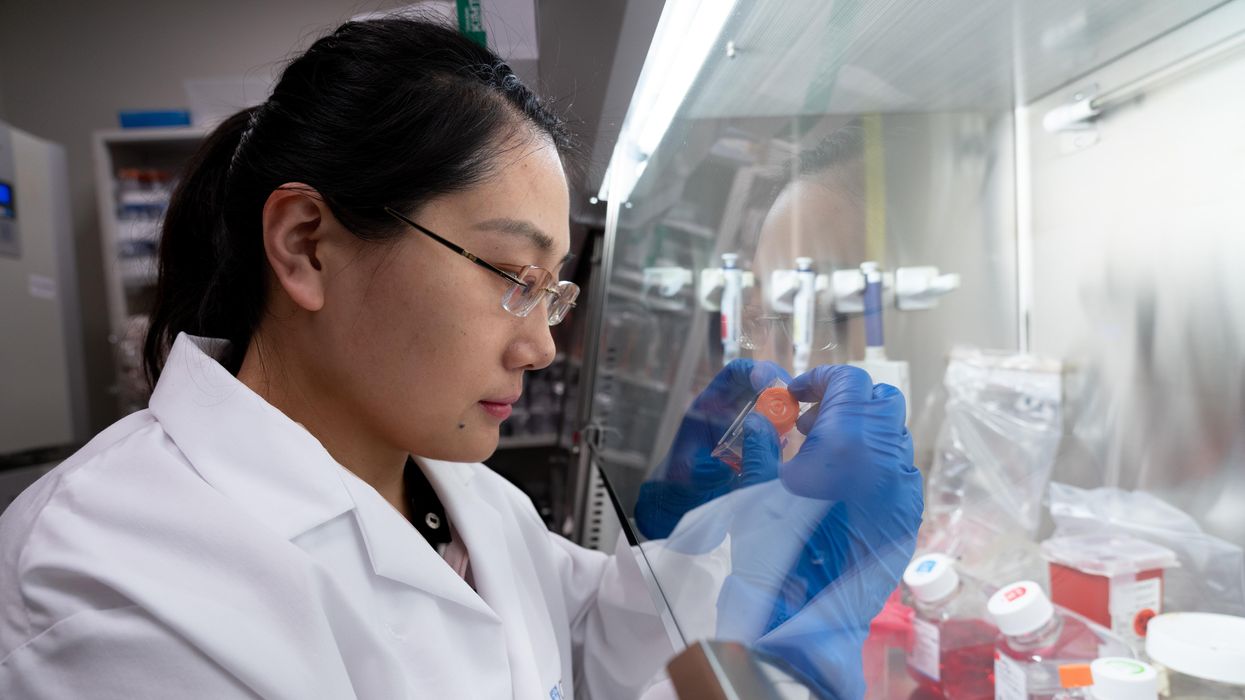

A scientist works on a blood test in the Ajay Goel Lab, one of many labs that are developing blood tests to screen for different types of cancer.

Soon it may be possible to find different types of cancer earlier than ever through a simple blood test.

Among the many blood tests in development, researchers announced in July that they have developed one that may screen for early-onset colorectal cancer. The new potential screening tool, detailed in a study in the journal Gastroenterology, represents a major step in noninvasively and inexpensively detecting nonhereditary colorectal cancer at an earlier and more treatable stage.

In recent years, this type of cancer has been on the upswing in adults under age 50 and in those without a family history. In 2021, the American Cancer Society's revised guidelines began recommending that colorectal cancer screenings with colonoscopy begin at age 45. But that still wouldn’t catch many early-onset cases among people in their 20s and 30s, says Ajay Goel, professor and chair of molecular diagnostics and experimental therapeutics at City of Hope, a Los Angeles-based nonprofit cancer research and treatment center that developed the new blood test.

“These people will mostly be missed because they will never be screened for it,” Goel says. Overall, colorectal cancer is the fourth most common malignancy, according to the U.S. Centers for Disease Control and Prevention.

Some estimates indicate that between one-fourth and one-third of all newly diagnosed colorectal cancers are early-onset. These patients generally present with more aggressive and advanced disease at diagnosis compared to late-onset colorectal cancer detected in people 50 years or older.

To develop his test, Goel examined publicly available datasets and figured out that changes in novel microRNAs, or miRNAs, which regulate the expression of genes, occurred in people with early-onset colorectal cancer. He confirmed these biomarkers by looking for them in the blood of 149 patients who had the early-onset form of the disease. In particular, Goel and his team of researchers were able to pick out four miRNAs that serve as a telltale sign of this cancer when they’re found in combination with each other.

The blood test is being validated by following another group of patients with early-onset colorectal cancer. “We have filed for intellectual property on this invention and are currently seeking biotech/pharma partners to license and commercialize this invention,” Goel says.

He’s far from the only one working on this. Dozens of companies are in the process of developing blood tests to screen for different types of malignancies, says Timothy Rebbeck, a professor of cancer prevention at the Harvard T.H. Chan School of Public Health and the Dana-Farber Cancer Institute. But, he adds, “It’s still very early, and the technology still needs a lot of work before it will revolutionize early detection.”

The accuracy of the early detection blood tests for cancer isn’t yet where researchers would like it to be. To use these tests widely in people without cancer, a very high degree of precision is needed, says David VanderWeele, interim director of the OncoSET Molecular Tumor Board at Northwestern University’s Lurie Cancer Center in Chicago.

Otherwise, “you’re going to cause a lot of anxiety unnecessarily if people have false-positive tests,” VanderWeele says. So far, “these tests are better at finding cancer when there’s a higher burden of cancer present,” although the goal is to detect cancer at the earliest stages. Even so, “we are making progress,” he adds.

While early detection is known to improve outcomes, most cancers are detected too late, often after they metastasize and people develop symptoms. Only five cancer types have recommended standard screenings, none of which involve blood tests—breast, cervical, colorectal, lung (smokers considered at risk) and prostate cancers, says Trish Rowland, vice president of corporate communications at GRAIL, a biotechnology company in Menlo Park, Calif., which developed a multi-cancer early detection blood test.

These recommended screenings check for individual cancers rather than looking for any form of cancer someone may have. The devil lies in the fact that cancers without widespread screening recommendations represent the vast majority of cancer diagnoses and most cancer deaths.

GRAIL’s Galleri multi-cancer early detection test is designed to find more cancers at earlier stages by analyzing DNA shed into the bloodstream by cells—with as few false positives as possible, she says. The test is currently available by prescription only for those with an elevated risk of cancer. Consumers can request it from their healthcare or telemedicine provider. “Galleri can detect a shared cancer signal across more than 50 types of cancers through a simple blood draw,” Rowland says, adding that it can be integrated into annual health checks and routine blood work.

Cancer patients—even those with early and curable disease—often have tumor cells circulating in their blood. “These tumor cells act as a biomarker and can be used for cancer detection and diagnosis,” says Andrew Wang, a radiation oncologist and professor at the University of Texas Southwestern Medical Center in Dallas. “Our research goal is to be able to detect these tumor cells to help with cancer management.” Collaborating with Seungpyo Hong, the Milton J. Henrichs Chair and Professor at the University of Wisconsin-Madison School of Pharmacy, “we have developed a highly sensitive assay to capture these circulating tumor cells.”

Even if the quality of a blood test is superior, finding cancer early doesn’t always mean it’s absolutely best to treat it. For example, prostate cancer treatment’s potential side effects—the inability to control urine or have sex—may be worse than living with a slow-growing tumor that is unlikely to be fatal. “[The test] needs to tell me, am I going to die of that cancer? And, if I intervene, will I live longer?” says John Marshall, chief of hematology and oncology at Medstar Georgetown University Hospital in Washington, D.C.

A blood test developed at the University of Texas MD Anderson Cancer Center in Houston helps predict who may benefit from lung cancer screening when it is combined with a risk model based on an individual’s smoking history, according to a study published in January in the Journal of Clinical Oncology. The personalized lung cancer risk assessment was more sensitive and specific than the 2021 and 2013 U.S. Preventive Services Task Force criteria.

The study involved participants from the Prostate, Lung, Colorectal, and Ovarian Cancer Screening Trial with a smoking history of at least 10 pack-years, the equivalent of smoking 20 cigarettes (one pack) per day for ten years. If implemented, the blood test plus model would have found 9.2 percent more lung cancer cases for screening and decreased referral to screening among non-cases by 13.7 percent compared to the 2021 task force criteria, according to Oncology Times.
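
For readers unfamiliar with the unit, a pack-year is packs smoked per day multiplied by years of smoking. The short Python sketch below illustrates that arithmetic only. The function names and the 20-cigarette pack size are assumptions made for this illustration, and nothing here reproduces the MD Anderson risk model or blood test.

```python
# Illustrative sketch of the pack-year arithmetic; not part of the study's risk model.
CIGARETTES_PER_PACK = 20  # assumption: a standard pack holds 20 cigarettes


def pack_years(cigarettes_per_day: float, years_smoked: float) -> float:
    """Return smoking exposure in pack-years (packs per day times years smoked)."""
    if cigarettes_per_day < 0 or years_smoked < 0:
        raise ValueError("inputs must be non-negative")
    return (cigarettes_per_day / CIGARETTES_PER_PACK) * years_smoked


def meets_minimum_history(cigarettes_per_day: float, years_smoked: float,
                          threshold: float = 10.0) -> bool:
    """Check a smoking history against a pack-year minimum (10 in the study)."""
    return pack_years(cigarettes_per_day, years_smoked) >= threshold


if __name__ == "__main__":
    print(pack_years(20, 10))             # one pack a day for ten years
    print(pack_years(10, 20))             # half a pack a day for twenty years
    print(meets_minimum_history(10, 15))  # 7.5 pack-years, below the minimum
```

Different smoking patterns can reach the same figure: half a pack a day for twenty years counts the same as a pack a day for ten.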

The conventional type of screening for lung cancer is an annual low-dose CT scan, but only a small percentage of people who are eligible will actually get these scans, says Sam Hanash, professor of clinical cancer prevention and director of MD Anderson’s Center for Global Cancer Early Detection. Such screening is not readily available in most countries.

In methodically searching for blood-based biomarkers for lung cancer screening, MD Anderson researchers developed a simple test consisting of four proteins. These proteins circulating in the blood were at high levels in individuals who had lung cancer or later developed it, Hanash says.

“The interest in blood tests for cancer early detection has skyrocketed in the past few years,” he notes, “due in part to advances in technology and a better understanding of cancer causation, cancer drivers and molecular changes that occur with cancer development.”

However, at the present time, none of the blood tests being considered eliminate the need for screening of eligible subjects using established methods, such as colonoscopy for colorectal cancer. Yet, Hanash says, “they have the potential to complement these modalities.”

Researchers probe extreme gene therapy for severe alcoholism

When all traditional therapeutic approaches fail for alcohol use disorder, a radical gene therapy might be something to try in the future.

Story by Freethink

A single shot — a gene therapy injected into the brain — dramatically reduced alcohol consumption in monkeys that previously drank heavily. If the therapy is safe and effective in people, it might one day be a permanent treatment for alcoholism for people with no other options.

The challenge: Alcohol use disorder (AUD) means a person has trouble controlling their alcohol consumption, even when it is negatively affecting their life, job, or health.

In the U.S., more than 10 percent of people over the age of 12 are estimated to have AUD, and while medications, counseling, or sheer willpower can help some stop drinking, staying sober can be a huge struggle — an estimated 40-60 percent of people relapse at least once.

According to the CDC, more than 140,000 Americans are dying each year from alcohol-related causes, and the rate of deaths has been rising for years, especially during the pandemic.

The idea: For occasional drinkers, alcohol causes the brain to release more dopamine, a chemical that makes you feel good. Chronic alcohol use, however, causes the brain to produce, and process, less dopamine, and this persistent dopamine deficit has been linked to alcohol relapse.

There is currently no way to reverse the changes in the brain brought about by AUD, but a team of U.S. researchers suspected that an in-development gene therapy for Parkinson’s disease might work as a dopamine-replenishing treatment for alcoholism, too.

To find out, they tested it in heavy-drinking monkeys, and the animals’ alcohol consumption dropped by more than 90 percent over the course of a year.

How it works: The treatment centers on the protein GDNF (“glial cell line-derived neurotrophic factor”), which supports the survival of certain neurons, including ones linked to dopamine.

For the new study, a harmless virus was used to deliver the gene that codes for GDNF into the brains of four monkeys that, when they had the option, drank heavily — the amount of ethanol-infused water they consumed would be equivalent to a person having nine drinks per day.

“We targeted the cell bodies that produce dopamine with this gene to increase dopamine synthesis, thereby replenishing or restoring what chronic drinking has taken away,” said co-lead researcher Kathleen Grant.

To serve as controls, another four heavy-drinking monkeys underwent the same procedure, but with a saline solution delivered instead of the gene therapy.

The results: All of the monkeys had their access to alcohol removed for two months following the surgery. When it was then reintroduced for four weeks, the treated monkeys consumed 50 percent less than the control group.

The researchers then took the alcohol away for another four weeks, before giving it back for four. They repeated this cycle for a year, and by the end of it, the treated monkeys’ consumption had fallen by more than 90 percent compared to the controls.

“Drinking went down to almost zero,” said Grant. “For months on end, these animals would choose to drink water and just avoid drinking alcohol altogether. They decreased their drinking to the point that it was so low we didn’t record a blood-alcohol level.”

When the researchers examined the monkeys’ brains at the end of the study, they were able to confirm that dopamine levels had been replenished in the treated animals, but remained low in the controls.

Looking ahead: Dopamine is involved in a lot more than addiction, so more research is needed to not only see if the results translate to people but whether the gene therapy leads to any unwanted changes to mood or behavior.

Because the therapy requires invasive brain surgery and is likely irreversible, it’s unlikely to ever become a common treatment for alcoholism — but it could one day be the only thing standing between people with severe AUD and death.

“[The treatment] would be most appropriate for people who have already shown that all our normal therapeutic approaches do not work for them,” said Grant. “They are likely to create severe harm or kill themselves or others due to their drinking.”

This article originally appeared on Freethink, home of the brightest minds and biggest ideas of all time.

Massive benefits of AI come with environmental and human costs. Can AI itself be part of the solution?

Generative AI has a large carbon footprint and other drawbacks. But AI can help mitigate its own harms—by plowing through mountains of data on extreme weather and human displacement.

The recent explosion of generative artificial intelligence tools like ChatGPT and Dall-E enabled anyone with internet access to harness AI’s power for enhanced productivity, creativity, and problem-solving. With their ever-improving capabilities and expanding user base, these tools proved useful across disciplines, from the creative to the scientific.

But beneath the technological wonders of human-like conversation and creative expression lies a dirty secret: an alarming environmental and human cost. AI has an immense carbon footprint. Systems like ChatGPT take months to train in high-powered data centers, which demand huge amounts of electricity, much of which is still generated with fossil fuels, as well as water for cooling. “One of the reasons why OpenAI needs investments [to the tune of] $10 billion from Microsoft is because they need to pay for all of that computation,” says Kentaro Toyama, a computer scientist at the University of Michigan. There’s also an ecological toll from mining rare minerals required for hardware and infrastructure. This environmental exploitation pollutes land, triggers natural disasters and causes large-scale human displacement. Finally, for data labeling needed to train and correct AI algorithms, the Big Data industry employs cheap and exploitative labor, often from the Global South.

Generative AI tools are based on large language models (LLMs), the most well-known being the various versions of GPT. LLMs can perform natural language processing tasks, including translating, summarizing and answering questions. They rely on artificial neural networks, an approach known as deep learning, which is a branch of machine learning. Inspired by the human brain, neural networks are made of millions of artificial neurons. “The basic principles of neural networks were known even in the 1950s and 1960s,” Toyama says, “but it’s only now, with the tremendous amount of compute power that we have, as well as huge amounts of data, that it’s become possible to train generative AI models.”

In recent months, much attention has gone to the transformative benefits of these technologies. But it’s important to consider that these remarkable advances may come at a price.

AI’s carbon footprint

In their latest annual report, 2023 Landscape: Confronting Tech Power, the AI Now Institute, an independent policy research entity focusing on the concentration of power in the tech industry, says: “The constant push for scale in artificial intelligence has led Big Tech firms to develop hugely energy-intensive computational models that optimize for ‘accuracy’—through increasingly large datasets and computationally intensive model training—over more efficient and sustainable alternatives.”

Though there aren’t any official figures about the power consumption or emissions from data centers, experts estimate that they use one percent of global electricity, more than entire countries. In 2019, Emma Strubell, then a graduate researcher at the University of Massachusetts Amherst, estimated that training a single LLM resulted in over 280,000 kg of CO2 emissions, the equivalent of driving almost 1.2 million km in a gas-powered car. A couple of years later, David Patterson, a computer scientist from the University of California Berkeley, and colleagues estimated GPT-3’s carbon footprint at over 550,000 kg of CO2. In 2022, the tech company Hugging Face estimated the carbon footprint of its own language model, BLOOM, at 25,000 kg of CO2 emissions. (BLOOM’s footprint is lower because Hugging Face uses renewable energy, but it doubled when other life-cycle processes like hardware manufacturing and use were added.)
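
The driving comparison implies a per-kilometer emission factor, which makes the other estimates easier to picture. The Python sketch below is a rough back-of-the-envelope illustration: it derives that factor from the rounded figures quoted for the 2019 estimate and applies it to the GPT-3 and BLOOM numbers. Treating one factor as valid for all three is an assumption of this sketch, not a claim made by the researchers, and the function names are invented for the illustration.

```python
# Back-of-the-envelope sketch using only the rounded figures quoted in the article.
STRUBELL_LLM_KG = 280_000      # "over 280,000 kg" of CO2 for training one LLM
STRUBELL_EQUIV_KM = 1_200_000  # "almost 1.2 million km" in a gas-powered car


def implied_kg_per_km(kg_co2: float = STRUBELL_LLM_KG,
                      km: float = STRUBELL_EQUIV_KM) -> float:
    """Emission factor (kg of CO2 per km) implied by the quoted comparison."""
    return kg_co2 / km


def equivalent_km(kg_co2: float, kg_per_km: float) -> float:
    """Convert a CO2 mass into an equivalent driving distance."""
    if kg_per_km <= 0:
        raise ValueError("emission factor must be positive")
    return kg_co2 / kg_per_km


if __name__ == "__main__":
    factor = implied_kg_per_km()
    print(f"Implied factor: {factor:.3f} kg CO2 per km")
    # Assumption: the same factor is reused for the other quoted estimates.
    for name, kg in [("GPT-3", 550_000), ("BLOOM", 25_000)]:
        print(f"{name}: roughly {equivalent_km(kg, factor):,.0f} km of driving")
```

Because the inputs are rounded ("over" and "almost"), the outputs are order-of-magnitude guides rather than precise equivalents.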

Luckily, despite the growing size and numbers of data centers, their increasing energy demands and emissions have not kept pace proportionately—thanks to renewable energy sources and energy-efficient hardware.

But emissions don’t tell the full story.

AI’s hidden human cost

“If historical colonialism annexed territories, their resources, and the bodies that worked on them, data colonialism’s power grab is both simpler and deeper: the capture and control of human life itself through appropriating the data that can be extracted from it for profit.” So write Nick Couldry and Ulises Mejias, authors of the book The Costs of Connection.

Technologies we use daily inexorably gather our data. “Human experience, potentially every layer and aspect of it, is becoming the target of profitable extraction,” Couldry and Mejias say. This feeds data capitalism, the economic model built on the extraction and commodification of data. While we are being dispossessed of our data, Big Tech commodifies it for its own benefit. This results in consolidation of power structures that reinforce existing race, gender, class and other inequalities.

“The political economy around tech and tech companies, and the development in advances in AI contribute to massive displacement and pollution, and significantly changes the built environment,” says technologist and activist Yeshi Milner, who founded Data For Black Lives (D4BL) to create measurable change in Black people’s lives using data. The energy requirements, hardware manufacture and the cheap human labor behind AI systems disproportionately affect marginalized communities.

AI’s recent explosive growth spiked the demand for manual, behind-the-scenes tasks, creating an industry described by Mary Gray and Siddharth Suri as “ghost work” in their book of the same name. This invisible human workforce that lies behind the “magic” of AI is overworked and underpaid, and very often based in the Global South. For example, workers in Kenya who made less than $2 an hour were behind the mechanism that trained ChatGPT to properly talk about violence, hate speech and sexual abuse. And, according to an article in Analytics India Magazine, in some cases these workers may not have been paid at all, a case of wage theft. An exposé by the Washington Post describes “digital sweatshops” in the Philippines, where thousands of workers experience low wages, delays in payment, and wage theft by Remotasks, a platform owned by Scale AI, a $7 billion American startup. Rights groups and labor researchers have flagged Scale AI as one company that flouts basic labor standards for workers abroad.

It is possible to draw a parallel with chattel slavery—the most significant economic event that continues to shape the modern world—to see the business structures that allow for the massive exploitation of people, Milner says. Back then, people got chocolate, sugar, cotton; today, they get generative AI tools. “What’s invisible through distance—because [tech companies] also control what we see—is the massive exploitation,” Milner says.

“At Data for Black Lives, we are less concerned with whether AI will become human…[W]e’re more concerned with the growing power of AI to decide who’s human and who’s not,” Milner says. As a decision-making force, AI becomes a “justifying factor for policies, practices, rules that not just reinforce, but are currently turning the clock back generations years on people’s civil and human rights.”

Nuria Oliver, a computer scientist, and co-founder and vice-president of the European Laboratory of Learning and Intelligent Systems (ELLIS), says that instead of focusing on the hypothetical existential risks of today’s AI, we should talk about its real, tangible risks.

“Because AI is a transverse discipline that you can apply to any field [from education, journalism, medicine, to transportation and energy], it has a transformative power…and an exponential impact,” she says.

AI's accountability

“At the core of what we were arguing about data capitalism [is] a call to action to abolish Big Data,” says Milner. “Not to abolish data itself, but the power structures that concentrate [its] power in the hands of very few actors.”

A comprehensive AI Act currently being negotiated in the European Parliament aims to rein Big Tech in. It plans to introduce a rating of AI tools based on the harms caused to humans, while being as technology-neutral as possible. The act sets standards for safe, transparent, traceable, non-discriminatory, and environmentally friendly AI systems, overseen by people, not automation. The regulations also ask for transparency about the content used to train generative AIs, particularly copyrighted data, and for disclosure when content is AI-generated. “This European regulation is setting the example for other regions and countries in the world,” Oliver says. But, she adds, such transparency is hard to achieve.

Google, for example, recently updated its privacy policy to say that anything on the public internet will be used as training data. “Obviously, technology companies have to respond to their economic interests, so their decisions are not necessarily going to be the best for society and for the environment,” Oliver says. “And that’s why we need strong research institutions and civil society institutions to push for actions.” ELLIS also advocates for data centers to be built in locations where the energy can be produced sustainably.

Ironically, AI plays an important role in mitigating its own harms—by plowing through mountains of data about weather changes, extreme weather events and human displacement. “The only way to make sense of this data is using machine learning methods,” Oliver says.

Milner believes that the best way to expose AI-caused systemic inequalities is through people's stories. “In these last five years, so much of our work [at D4BL] has been creating new datasets, new data tools, bringing the data to life. To show the harms but also to continue to reclaim it as a tool for social change and for political change.” This change, she adds, will depend on whose hands it is in.